Hacking Things That Think


Good. Okay, well, the first thing I have is a shaggy dog. Now, the thing that's interesting about this shaggy dog is that a dog very similar to this was once used by the CIA to infiltrate a very highly secured facility. The way this infiltration worked was that the agency had a source who was already in this facility and drove into it on a daily basis, and they needed a way to physically penetrate the facility. They were having a lot of difficulty with this because the facility was really, really highly secured, and there was just no way that they could

get into it. So they brainstormed about this and came up with an idea, and the idea was this: the source inside that facility would start bringing his dog to work with him every day. It was this big shaggy dog. In fact, I don't think it was his dog; I think the agency actually bought him this dog, so they expensed it, but got him a big dog as a pet, right? So he's bringing this dog in to this highly secure facility, and the first time he does it, the guards at the checkpoint just go freaking nuts, right? They're like, what are you bringing the dog in here for? And he's like, well, it's

a service animal, it's my comfort animal, you know, I've got to bring it in with me. So they freak out, and they, you know, anal probe the dog and do all this stuff and check everything about the car, because it's like, ah, this is just so weird, right? But there's a really high-level scientist in this facility, so it's like, ah, it's eccentric, but we've got to go with it, because we've got to make our nuclear bombs or whatever it was. So they freaked out, but they let him go through with the dog. He takes the dog to work the next day, same thing, and they freak

out again, a little less so. Next day they're still freaking out, but it's getting to be, you know, okay, this is just the weird guy who brings the dog to work, whatever. Two or three weeks, a couple of months go by, and pretty soon it's just, yeah, that's Bob the nuclear scientist who brings his dog to work, whatever, just wave him through. At that point the CIA made a shaggy dog suit that their operative could fit inside. And guess what happened when the operative in the fuzzy dog suit went through the gate? They didn't even bat an eye, didn't even look at the dog. They were just like, yeah, whatever, go through. And that's how the agency

physically penetrated that facility. That story is on Darknet Diaries if you want to hear the whole thing. Yeah, a few of you have heard this. The reason I bring that up is that it's a great example of a cognitive attack, okay? I have been railing for years about cognitive security, because I'm just one of those people who rails about things that people generally ignore. In this case, that physical penetration was facilitated, in fact enabled, by a cognitive attack, and that cognitive attack was habituation. They habituated those guards to accept the fact that this eccentric scientist brought a dog in every day, and after a while... he lost power. In all seriousness, I don't know

what happened, guys. Did it go to sleep? Yeah, maybe it went to sleep, sorry. Okay, is the power working? Is there a little light? Yeah, hold on, sorry. Well, now I know how to fix it. We good? Okay. So that was a physical penetration that was facilitated by a cognitive attack. Now, what I've been advocating for quite some time is that we have essentially three domains of security. We have physical, which is arguably the oldest area of security, right? Physically securing physical objects or valuables. We have cyber, which is securing information, right? It's either information going from one place

to another, or it's information being stored in place, but one way or another it's securing information. But what we're moving towards is the cognitive domain. Now, you could argue that the cognitive domain has been around for a while, because humans have been around for a while, and so on and so forth. But what's different is that we're connected now, right? This is a phenomenon that's really only been happening for maybe 100, arguably maybe 150 years. You go with radio and these other technologies, and now it starts to evolve into something different. And more recently, with the emergence of AI, this is where I think

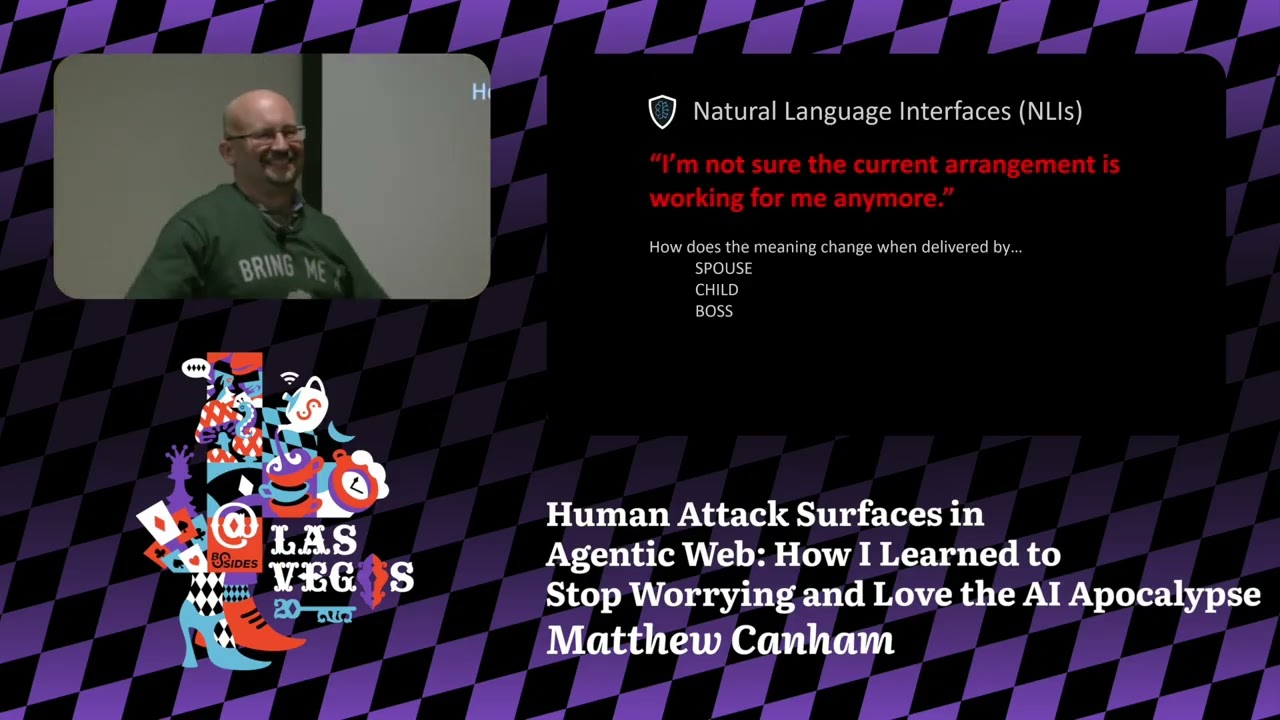

cognitive security is beginning to become really interesting, because we're seeing attacks that are meant for humans but actually also work for machines, which is a really strange thing if you think about it, and which, by the way, this is an example of. Given the audience, I'm sure all of you are at least somewhat familiar with jailbreaking LLMs; I'm just going to go with that assumption given this crowd. What's interesting about this paper, which I think is about three, maybe four months old, is that it introduced something called persuasive adversarial prompting. Now, there's a psychologist who's very well known by

the name of Robert Cialdini, and he has these principles of influence. He's got a book called Influence; if you haven't read it, I highly recommend that you do, because that's like the Bible of social engineering. What was really interesting in this paper is that they were using Cialdini's principles of social influence and persuasion, which were meant for humans, to jailbreak LLMs. So you have an attack that was meant for a human being applied to an LLM. Okay, so isn't this just social engineering? Isn't cognitive security just social engineering by a different name? I'm arguing no. This right here is a cognitive hack. We have paintings that are on the street, where

it's just totally flat ground, but they're drawn and illustrated in such a way as to impose that visual representation on the visual input of the observer from a certain perspective. In the case on the right, with the little girl on the ball, that is specifically an instance of what's known as a nudge. A nudge is, how do I say it, a quasi-voluntary, or quasi-consensual, way to influence behavior. On the left side, that's just a painting. I forget the artist's name; I'm sure some of you know the artist, but he's

pretty well known. This is interesting, but check this out: if we draw lines on a stop sign, we can make computer vision think that the stop sign is now a basketball. Or if you put selectively placed pieces of white and black tape on that stop sign, all of a sudden the car doesn't recognize it as a stop sign. Humorously, some folks projected Elon Musk, in a perspectival sort of image, on the street in front of a Tesla and got it to stop very quickly. Anyway, what I'm showing here is that this is not what we would think of

when we think of social engineering, right? This is something different, and that's what I'm arguing is part of this greater umbrella idea of cognitive security. Oops. This is, I think, another interesting one that I just came across last week. Masha Sedova, who was at Elevate and is now with Mimecast, gave this to me. What these graphs represent is, on the right, the time of day, okay? So imagine that as a 24-hour clock, not a 12-hour clock. This is when actual phishing emails are reported: the frequency at which they're reported to the security department by users. And I don't know why users are reporting

phishing at 3:00 in the morning, but they seem to like to; same with five. But what's interesting is that we see this spike around nine and ten, and then it drops off right around lunchtime. The interesting thing here is that there was an Israeli study done with judges on their likelihood of pardoning or reducing the sentence of convicts, and what they found was that the likelihood of granting a pardon or commuting a sentence actually went up if the case was heard just after lunch. So if you're convicted of a crime, time your pardon or your commutation for the right time in the docket, so that you

get a favorable judge, right? It seems like there might be something analogous here. I don't know exactly what's going on, but there's a little spike at around two or three. The other interesting thing is on the left: this is when phish clicking happened, and we're seeing a spike right around the time that people are getting ready to leave work. I don't know what the 7:00 p.m. thing is; maybe people are getting done with their commute, checking email real quick, and being caught off guard. The point is that there's something cognitive happening here. This isn't phishing filters, this is humans. Okay, so what do I mean by

cognitive system? Let me take a quick diversion here and tell you a little about my background, because I think it's relevant. My PhD is in cognitive neuroscience. I did not focus on cognitive psychology; I did not focus on humans. Cognitive psychology was a part of my education, but we were looking at the broader scope of things. In my first class in my graduate program, we were building multi-layer, actually three-layer, perceptrons, in an effort to model the human visual system and how it would respond to visual stimulation. The reason I bring

that up is that cognitive science has a different perspective on how cognition happens. It's not just neurons, okay? People get very fixated on the brain and the squishy stuff, but the important thing to realize is that cognition can happen outside of the squishy stuff; it can happen in other ways. Essentially, what we can think of as a cognitive system, or cognitive agent, is something that has percepts, perceptual inputs, what we'll call sensors, and has ways to act on its environment, actuators. So this could be an amoeba, it could be a bug, I mean a literal

crawling-around bug, it could be a human, it could be a group of humans, it can be an AI. When we conceive of a cognitive system in this way, it really opens up the possibilities of what we might consider to be a cognitive system. On the internal side, we have the ability to store, and make decisions on, information that has been taken in through those sensors. There's a reason I'm bringing this up, and the reason it's important is that once we understand that cognition is about information processing, we can start to formulate attacks against different types of information processing systems. I'll come back to that, but

this is how this ties into cognitive security: now we can start to look at ways to attack cognitive systems. As I became interested in this idea, I started collecting lots and lots of examples, and the idea hit me, whenever I sent the paper in a few months ago, that I should put all these things together. So go easy on me as I go through this, because this is my first wiki, and while I have a PhD in cognitive neuroscience, I have about three hours of wiki experience. But here it is, and if you'd like to go there, again, go easy; this is a

work in progress: cognitive attack taxonomy dot org. I really wanted cat.org, but I didn't have the $50,000 to buy the domain, so this one was, I think, $8.98, and it renews at like $4 or something. Anyway, when you get to the first page, you'll see a couple of links. If you click on that very first link, it'll bring you to the index, and this is the index right here; it's a screenshot. I'm going to walk through what these things mean, but in a nutshell, what I've been doing is collecting all of these different instances, and this is ongoing. I think right now I'm at

around 362 or something, but I keep adding stuff as I can. I take social engineering attacks, I take scams of different types, any kind of new tool, tactic, procedure, exploit, or vulnerability that could be applied to a cognitive system, and I put a little identification tag on it and write a little summary about it. So let me walk through that. I put the URL on top of a bunch of these slides, so it'll be around for a little bit. If you pull up the page, the first thing you'll see is the CAT name, and this is the cognitive attack

taxonomy ID. Within that ID you'll see, let me think, it's the year and then I think it's the number: CAT, year, number. Sorry, I was just doing this on the fly; it seemed like it would work. If anyone is an expert at indexing things and wants to put input into this, I'm totally open. Underneath that is a very short description of what that thing is. Then the identification number, that's the tag. The layer I'm going to get into in a little bit, but the reason I went into depth about what a cognitive system is, is so that I can talk about these layers. The

scale I'm going to talk about in a minute as well, but that basically asks: is this at a tactical, operational, or strategic level? Level of maturity: I don't know if this is the right term for this, but, and I'm trying to be really polite here, there's a lot of snake oil out in the world on this kind of stuff, and what I'm trying to do is account for the snake oil. I'm trying to say, oh, we have snake oil here, but at the same time I'm trying to acknowledge that we may not want to really trust the snake oil; we might want to trust this other stuff over here,

so that's where I'm capturing that. But I may change that name; again, if anybody has a great suggestion for a name that's not too offensive, I'd be open to it. I don't know, scale just didn't sound right. Category I'll get into in a minute, subcategory I'll get into in a minute, and then also known as; it's just what it sounds like, a lot of these things have very similar terms, or different terms for the same thing. Okay, brief description. Let me just run through some of these. Okay, layer. Now, I talked earlier

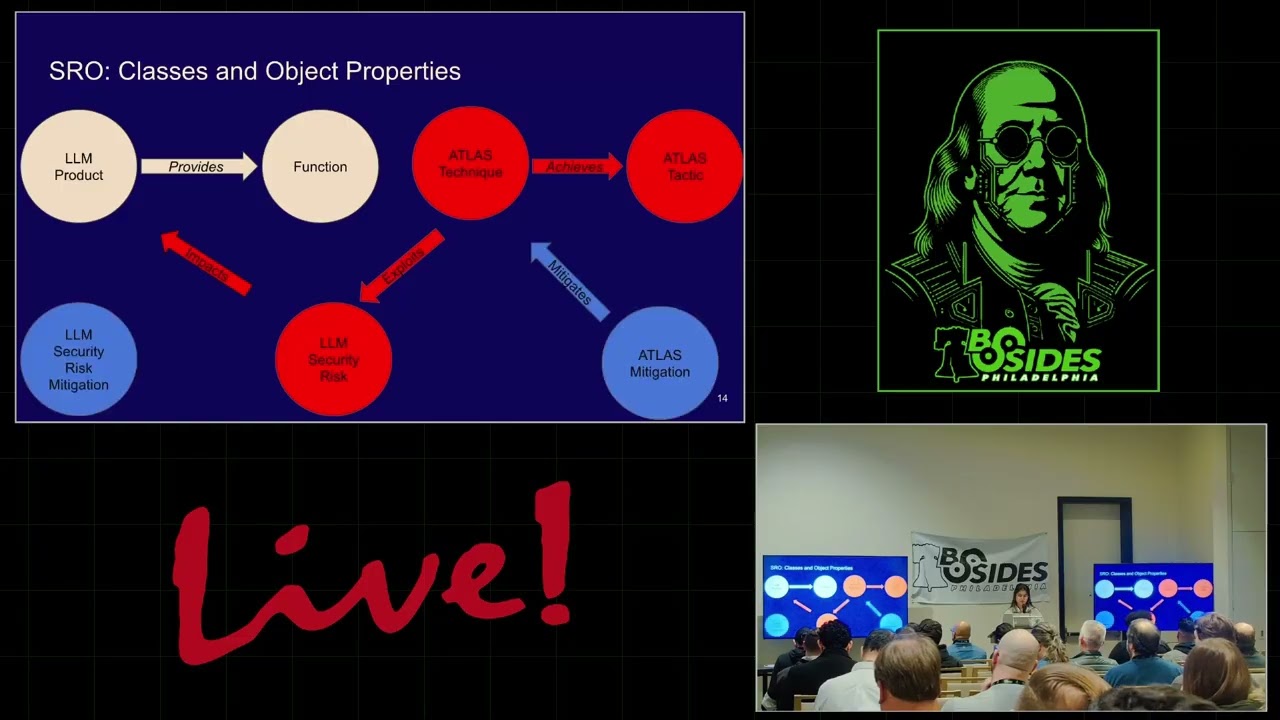

about how a cognitive system is essentially an information processing system, right? It's not an individual person, it's not necessarily an AI; it can be anything that can process information. Now, most of us here are probably familiar with the OSI model, the Open Systems Interconnection model. This is a model that describes how different information processing systems can talk to each other, and in security it informs us about how we can secure different systems and what types of attacks we might expect for different systems. It goes from one to seven. One is physical, so this is actual physical hardware, or it might be electromagnetic waves going through

the air, and on the other side of it we have the application that faces the human. I talked to ChatGPT about it, and ChatGPT told me that it resides at layer 7, so I took it at its word. So I've got ChatGPT at layer 7, and I have layer 7 attacks; the persuasive adversarial prompt that I showed earlier would be an example of a layer 7 attack. Then we've got layers 8, 9, and 10. Bruce Schneier talks about this, but there was also a gentleman by the name of Ian Farquhar who talked about it. As far as I can tell, I think Ian Farquhar

talked about it first, but if I'm wrong about that, please let me know. Anyway, back in 2010 he said, hey, we should extend the OSI model to include humans, and he proposed the human interconnection model. That has layer 8 for individual humans, layer 9 for organizations, and layer 10 for legal and regulatory bodies. The thing that's a little weird here is that, the way I break this down, a mob would still be layer 8, because a mob does not have a coherent set of rules that process information; organizations do. This is important because I can point to lots of examples where people have deliberately used policies, in some cases

stupid policies, to exploit an organization. As an example, if an organization has a policy of not scanning incoming packages with an X-ray, what a great way to warship and collect all of that wireless stuff floating around. That would be a layer 9 attack, because you're aware of this policy that opens up a vulnerability. Layer 10 is one I'm a little less familiar with, but it's the legal and regulatory layer. The distinguishing characteristics of this layer are that, number one, it moves much slower than policy. Policy can be switched relatively quickly; to change a law takes a very long time. However,

there's a kinetic component to the legal system that is not present in policy, right? With the law, they can possess your person, they can take away your freedom, they can take away your life, and that's a very important distinction. Now, the other thing that I really wanted to do with this taxonomy: I play around in a couple of different spaces. I play around in the information security space, and I play around in the cognitive warfare, information warfare, influence warfare kind of space. The thing that's interesting is that when you talk to, like, a PSYOPs person, they're talking very much about the same kind of things that

social engineers talk about, but they're talking about them in a slightly different way. What I really wanted was a way to unify these two universes, because we're sort of talking about the same thing; we're just using slightly different words. Something that is important from a military context is the level of operation. In information security we're typically operating at a tactical level, and a tactical level is a single engagement: I'm going to tailgate my way into that building so that I can get access to that server, and then I'm going

to get out. That's a single engagement. Now, let's say that in order to get access to that same server I'm going to do a seeding campaign where I'm dropping USBs all over the place, I'm going to try to capture somebody's swipe card so I can counterfeit it, I'm going to try to tailgate, I'm going to try to phish; I'm going to come at them from all these different angles, right? And let's say that this is over months and I'm going to have people helping me. That is an operational-level engagement, where it's multiple people over a relatively

long period of time. Strategic level is pretty much nation-state stuff, although when we get into large multinational corporations we're also starting to talk at the strategic level. This is things like influencing elections, or causing internal discord to the point where it becomes a civil war. These are strategic-level security threats. So when I put these all together, what we get is this table, because I'm an academic and I love tables, where we've got physical at layer 1, technological at layers 2 through 7, and then human at 8, 9, and 10. I don't want to venture into the netherworld here, so I'll stay here, but if we looked

at layer 8 at a tactical level, you'll see social engineering, and you can see it at the operational and strategic levels too; I give examples of all of those along the rows there. Good. Okay, so coming back to level of maturity: as I mentioned before, I wanted to account for snake oil, right? Personally speaking, I have not seen a lot of support for neurolinguistic programming, but I know a couple of case officers who absolutely swear by it. They're like, I use this stuff and it works. I'm like, okay. That would be something I would put at a fairly low level of maturity. I'll walk through an example, but

basically I have five levels of maturity. Neurolinguistic programming is something that I would put as theoretical: I'm going to say it's there, but I have not seen a lot of scientific, empirical support for it. Proof of concept is something that's been documented but isn't actually out in the wild. We're seeing a lot of this in AI right now, right? Somebody will do some attack, it's a really cool attack, but we haven't actually seen it out in the wild. Something that's been observed in the wild is kind of the

converse of that: it's something we've actually observed; somebody's lost money to it, or worse. Common use: we all know that it's out there and it's available. And then well established: it's got a lot of scientific, empirical support behind it and it's easy to observe. So we'll work through an example very quickly, and then I'll tell you a quick use case of how I've used this. Loss aversion is a cognitive bias that everyone has, and what it says, in a nutshell, is that you feel losses more viscerally than you feel gains. So if you lose $100, that's going to hurt more than it would feel good if you found

$100 on the ground. We've got the short description, the ID, the layer. For operational scale, I'm putting what it's usually used at; I'm probably going to build that out later, but that's where I'm at right now. I categorize this as a vulnerability because it's a bias that can be used to exploit a person. So there are psychological tactics that can be used to exploit the cognitive vulnerability of loss aversion. An example of that would be, oops, scarcity. Imposing scarcity, like, hey, you're not going to have this resource after a certain period of time, invokes that loss aversion. So it's

the exploit that's operating on that vulnerability. It happens all the time in phishing: click here to prevent losing access to your account, that sort of thing. Okay, so how I'm starting to use this, and I'll get into some other uses in a little bit: basically, I'm building these all out into a graph, where if I wanted to attack loss aversion specifically, I can do it by using these different exploits, or tools, tactics, techniques, and procedures. Conversely, if I know that I'm going to attack a target using a phishing email, I can use this to start looking at

different vectors that I might take. So for a CEO, I can scan the likely cognitive vulnerabilities of a CEO and then use that to tailor the phishing email that I'm going to send to those vulnerabilities. The way I'm doing this right now is I'm taking these definitions and plugging them into an LLM in the form of prompts, to help automate the tailoring of phishing emails to certain individuals. Now, if it's a CEO, they're probably not going to react very well to an appeal to authority. Some random person suddenly telling them, hey, you need to do this because I'm the boss, doesn't work very well when you're the

boss, and so I can account for things like that. The dotted lines are actually negative connections; those are things that might backfire. But decision fatigue, which means that your ability to make effective decisions starts to decline by the end of the day because your neurons have basically been firing the whole time, is something that is very likely to work on a CEO, right? Because they're constantly making decisions. So I can use that to decide what type of attack I want to launch. Let me go back one slide real quick. And hey, Jessica, how are we doing for time? Okay, okay, cool.
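
A minimal sketch of the graph idea described in the talk, assuming a plain adjacency-map representation. The names `Node`, `PROFILE_EDGES`, `EXPLOITS`, `candidate_vectors`, and `exploits_for` are hypothetical illustrations, not the taxonomy's actual data model. Only the node names and the direction of the links come from the talk: decision fatigue is likely to work on a CEO, an appeal to authority may backfire (a dotted line), and scarcity is an exploit that operates on loss aversion.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Node:
    """One taxonomy entry; the fields are a hypothetical subset."""
    name: str
    category: str  # "vulnerability" or "exploit"


NODES = {
    node.name: node
    for node in (
        Node("loss aversion", "vulnerability"),
        Node("decision fatigue", "vulnerability"),
        Node("scarcity", "exploit"),
        Node("appeal to authority", "exploit"),
    )
}

# Signed edges from a target profile to a node (weights are assumptions).
# +1 = solid line (likely to work), -1 = dotted line (may backfire).
PROFILE_EDGES = {
    "CEO": {"decision fatigue": +1, "appeal to authority": -1},
}

# Edges from a vulnerability to the exploits that operate on it.
EXPLOITS = {
    "loss aversion": ["scarcity"],
}


def candidate_vectors(profile):
    """Split a profile's linked nodes into (likely, may_backfire) name lists."""
    edges = PROFILE_EDGES.get(profile, {})
    likely = sorted(name for name, sign in edges.items() if sign > 0)
    may_backfire = sorted(name for name, sign in edges.items() if sign < 0)
    return likely, may_backfire


def exploits_for(vulnerability):
    """Return the exploits recorded against a vulnerability, if any."""
    return list(EXPLOITS.get(vulnerability, []))


if __name__ == "__main__":
    likely, may_backfire = candidate_vectors("CEO")
    print("likely:", likely)
    print("may backfire:", may_backfire)
    print("operates on loss aversion:", exploits_for("loss aversion"))
```

The same lookup reads equally well from the defender's side: the "may backfire" list is what a target profile is already resistant to, and the "likely" list is where awareness training or threat modeling would probably want to focus first.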

I actually do work for a living now, but I used to be an academic, so I still kind of have that in me. One of the downfalls of being an academic is that I commonly build things and have no idea why I'm building them. It's just like, oh, this is really cool and I want to do it, and then I'm like, ah, but what are we going to use this for? Is there really a reason? So, no joke, this morning I was like, oh, I've got to come up with some reasons. Anyway, beyond just having this catalog of attacks, I'm thinking that having this

catalog may help with threat modeling, and it may also help with threat intelligence. If anyone works in either of those two spaces and has an opinion on this, I would love to hear it. What I'm thinking is, if we have these tags on these different types of attacks, and then we have the bad actors, well, they're generally people too, right? They've got these vulnerabilities, and one of those vulnerabilities is that they have habits. Because of these habits they have certain ways of doing things over and over again, and with these tags we can start to develop cognitive thumbprints of these different attackers. So, I

don't know, maybe I'm just BSing, but that's one thing that I came up with. The other idea is that we can unify these ideas of cognitive warfare and social engineering, because there's a lot of overlap between these two fields. And then the last thing was what I just showed: an example of how I'm using this to build cognitive attack graphs to help with automating cognitive attacks. So anyway, that's what I came up with. If you'd like to check it out and give me feedback, I'd be very open to it. Here's my contact email. If any of this is interesting, I do a weekly

meeting. We're on a two-week pause because of the cons, but we do an online meeting, and we're limited to 50 participants or fewer. You can see presentations from previous meetings on our YouTube channel; the URL is also right there. The way we do it is a presenter comes on and presents on a topic, usually with PowerPoint slides, the whole deal, and we record that portion. That portion gets put up on our YouTube channel. The conversation afterwards stays within the meeting; we don't publish that. So the nice thing is that we've got a nice little community going, and it gives people an

opportunity to talk one-to-one with authors who are doing really, really cool stuff, researchers in different fields. And with that, I'm done. I'll take any questions. Thank

you. Any

questions? Thank you. Hi, thank you for the talk; I really appreciate it, I learned a lot. One thing I was wondering, in terms of the cognitive attacks that you've identified: is that an emergent property of LLMs and how they process information, or is it just human biases imprinted in the training data that are then being exploited because we have bad training data? Are you speaking specifically to the graph? Sure, not specifically, just sort of in general. Okay. No, it's not particular to LLMs. LLMs are under that umbrella, but there's a lot of human stuff there too. The attacks, I think, are the new part, because

what I'm doing with the graph is taking the information from each of those nodes, and those become prompts into the LLM. Those prompts are seeds, which the LLM then uses to create the attack that I then launch. Does that make sense? Okay, so you're not attacking the LLM, you're using the LLM as a tool to... I could attack an LLM. What it would look like is, instead of having a CEO at the top there, it would be Claude, the LLM, at the top, and it would be known vulnerabilities for Claude, and then I could come down and

have attacks for each of those vulnerabilities. Okay, but I guess my question then would be: are those vulnerabilities tied to the training data that Claude is being trained on, which is sort of inheriting human biases that are latent in the training data? That's a really good question. I don't have the answer for it. Probably yes, but here's the weird way to think about it, right? Is loss aversion a construct of the training data that humans were trained on? Do you see? Yeah. So that's where it starts to get really weird. Yeah, thank you.

Yep. It's a bit less sexy than threat intel, but one area where I can see quite a bit of overlap with this content is consumer protection law. There's a lot of research into so-called dark patterns, the ways in which consumer decisions get hacked, quote unquote. There's actually a lot of research being done in the Australian government at the moment on creating taxonomies of dark patterns for the purposes of consumer regulation. Let's talk after the talk. Yes. Oops, I'm going the wrong way. Yeah, so dark patterns: I actually have somewhere between 20 and 30 that are listed in this. I'm a big fan

of dark patterns. You don't happen to know the gentleman who started that, do you? Because I'm trying to have him come speak at the Cognitive Security Institute, so if anyone does, please put me in touch, because that's high on my list right now. By the way, there's a really cool paper about using dark patterns to fool hackers, which I thought was really interesting, sort of playing into hacker cognitive

biases. Any more questions? Remember, Matthew needs an indexer, y'all. Thank you, Matthew. All right, thank you very much.