Human Attack Surfaces in Agentic Web: How I Learned to Stop Worrying and Love the AI Apocalypse


This talk is Human Attack Surfaces in Agentic Web: How I Learned to Stop Worrying and Love the AI Apocalypse by Matthew Canham. We'd like to thank our sponsors, especially our diamond sponsors Adobe and Aikido and our gold sponsors Formal and Dropzone AI. It's their support, along with our other sponsors, donors, and volunteers, that makes this event possible. These talks are being live streamed except in SkyTalks. And as a courtesy to our speakers and audience, we ask that you check to make sure your cell phones are set to silent. If you have a question, use the audience microphone, which I will pass around so that YouTube can hear you. Make sure to point at the mic so that people will know. As a reminder, the BSides Las Vegas photo policy prohibits taking pictures without the explicit permission of everyone in the frame. These talks once again are being recorded and will be available on YouTube in the future. Please welcome Matthew. [applause]

Thank you very much. Um, so there's a little bit of a debate going on right now. Is this AI thing just a fad? Is it going to pass in a few years and everyone's going to forget about it? ChatGPT and Claude and all the rest are just sort of passé? Well, it's a legitimate question, right? Because if the answer to that is yes, that it is just a fad, then you all are wasting your time by being here right now today. I tend to think probably not. And here's why. I remember life before the internet. And I don't mean on the phone. I mean, back in the olden days where we had to dial in to bulletin boards and we

had to walk to school without a bus uphill both ways and the snow was like this high, right? So, you all remember, some of you remember that as well. One of the things I remember from those times was if you wanted to do something pretty simple like go see a movie. You'd have to go get a paper. Then you'd have to go find you'd have to find the paper or excuse me, you'd have to find the movie that you wanted. You'd have to argue with your siblings about which one you were going to see. And then you'd have to go to the movie theater. You'd have to get your tickets. And then, you know, hopefully it wasn't sold out,

right? you got to see the movie that you actually wanted to see and then finally after maybe two hours of process you'd finally get to see your movie. So think about how much time that consumes, right? Um [clears throat] we've got uh we want to go see the movie. We got two hours. Let's say we go see two movies a week. That's uh four hours a week. Let's take another task. Anybody here remember handwriting checks? Yeah. I've written three handwritten checks since the year 2000. [laughter] So that's another four hours a month of paying bills that I save by being able to do it online. Now, now you start to count that all up and that's like 96 hours a year. That's

two and a half work weeks per year that you're saving. So, by not handwriting any checks for bills over the last 25 years, I've saved myself 2400 hours, almost 60 work weeks. Uh, yeah, work weeks. So, think about how much you get paid in a week. Multiply that by 60 and now you see the economics that drove the internet adoption. My argument is that AI agents are poised to save as much, if not more, time than the internet did, because even now if I book my flight to come down here, I book my hotel, maybe I get a car, so on and so forth, that's still time, right? If my AI agent can do that for me, that

frees me up to do other things. And make no mistake what I'm saying here. I do not think once the agentic web has been adopted that we will be less busy. I'm completely confident we will fill that time with more tasks. We will have higher levels of anxiety and more [ __ ] to do, but we will increase our capability. Right? And so what is an agent? Um, some of you may have played with, um, Gemini or, uh, ChatGPT agents or some of the other agents that are out there, right? But when we take it down to its very basic constructs, what an agent is, is a system that has sensors that can take in information from its

environment. Now, its environment might be just that enclosed environment that it's in. Maybe it's in a RAG system. Maybe it's connected to the internet, so its environment is the internet in that case. And it takes in information through the ingest and sensors. But here's a key point to an agent: it has goals. This is why an LLM by itself is not an agent. You can talk to an LLM. An LLM can talk to you, but an LLM doesn't have intentionality per se, but an agent does. An agent has something that it's trying to achieve in its universe. It also has the ability to process

information. So, it has the ability to take in information, evaluate that information somehow, make some sort of decision about it, and very importantly, it has a way to update its internal state. And then finally it has what are called actuators or tools. And so it has the ability then to um impact or influence its environment. Right? So this at its very core is what an agent is. Where are we going with this? If we all have AI agents that can help us do tasks. And let's take it a step further. Let's say that the agents, they learn our preferences. They learn our spending habits. They learn whether we like to sit in the aisle or the window seat in the

airplane, if we like to sit towards the front, towards the back, so on and so forth. And eventually, let's say that we connect our credit cards to it. Now, we have a digital proxy that will act on our behalf, so that we don't have to sit and think about all these little details. It just does it for us. We say, "You know what? We're going to BSides this year. Do it." And it just takes care of everything. Here's where it gets interesting: if I have an agent and I want to buy something, say on eBay, I might not directly interact with that seller on eBay. I can interact with that seller's agent and

they can start to negotiate all the details. Has anybody heard about the uh Delta uh scaled pricing? Uh what a genius thing, right? We are going to charge you the maximum amount we think that you will pay for a ticket based on behavioral analytics that we process on you. Fantastic idea. How do we counter that? We come up with an agent that says, "No, we will not pay that price. We will negotiate with you." In which case, you have an agent that negotiates with Delta's agent, and now they can have a little bidding war between themselves and come up with an optimal price that you both will agree. There's a fantastic episode of Black Mirror in

which, um, two people's digital agents are put through Monte Carlo simulations over and over again to see if they are the optimal dating pair relative to all of the other potential pairings that are out there. How far do you think that is from now? A year, five years? So, we're all security folks and we like to think about bad things. And if you've been to one of my talks, you know this is the hallmark. I love to think about bad things. So, where does this bring us? Agentic cognitive warfare. This is going to open up all kinds of new attack surfaces. Now, we're probably familiar with the attack surfaces of humans attacking bots

and, uh, or humans attacking agents. And this has been around for a while. In fact, uh, I think the first BSides Las Vegas I came to, I think was 2017, and there was a talk on exactly this, adversarial examples, and, uh... Oh, do you remember the talk? Okay. So, here we've got, um, an example of an adversarial example. Uh, you have a stop sign, but you paint a ball on the stop sign. And now all of a sudden your computer vision system sees this or identifies this as a ball, not a stop sign. What's interesting is you can throw in, um, static pixels and you perturb these in a certain way and you can get it to

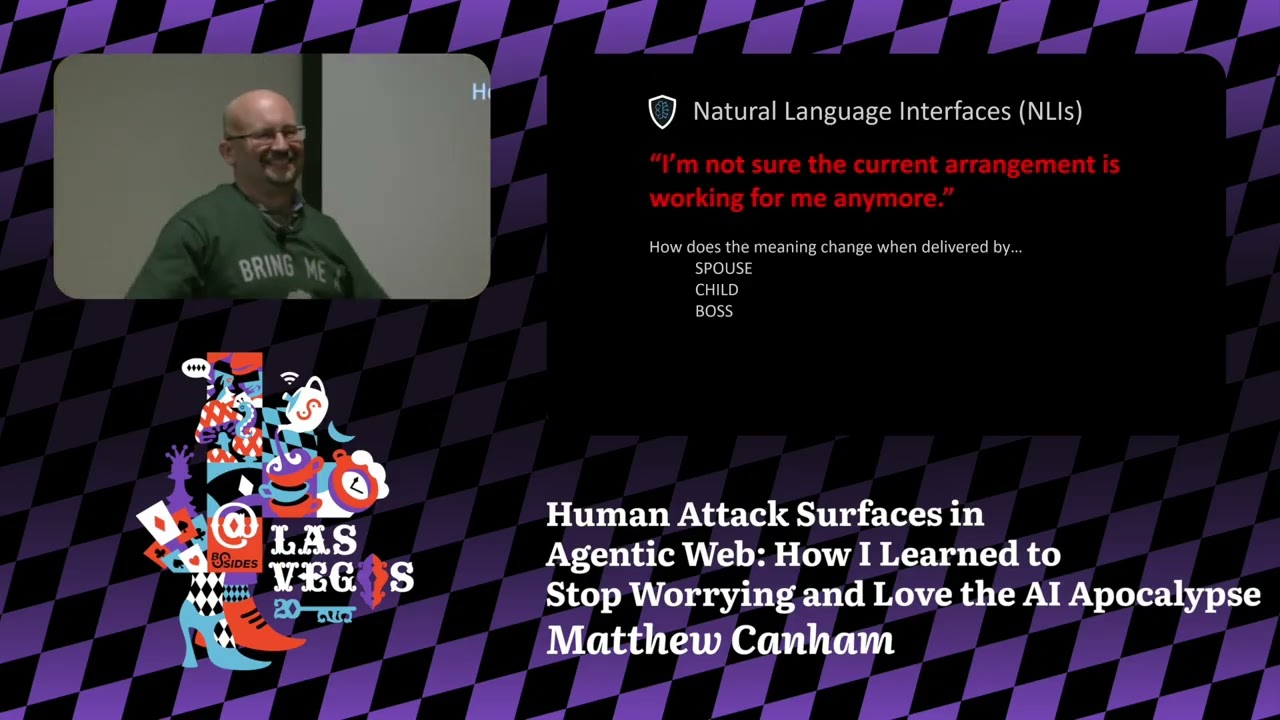

identify a pig as an airliner or you can get it to identify a cat as a dog. So this is kind of one of the areas that was early on in, um, seeing how we could attack different types of algorithms. But something happened a few years ago: ChatGPT, natural language interfaces into LLMs. Now that we have natural language interfaces, language is weird in ways that code isn't. And let me give one example. Let's take this statement: "I'm not sure the current arrangement is working for me anymore." That exact statement. "I am not sure that the current arrangement is working for me anymore." How does this statement change if it comes from your spouse as opposed to

your child or what about your boss? Uhoh. >> Stop the stop the talk. Stop the talk. Knock it off. >> So, I believe you made a small request for a shrubbery. >> [laughter] >> But I did find something that might help you get a shrubbery, but only if you believe enough [laughter] to put it on. >> Can you get this? >> I I will 100% put this on and and and >> All right, but everyone else has to believe, too. I have to think [laughter] very shrubbery thoughts. >> Oh, I think I starting to work. It's working. There we go.

[cheering] >> You just made my day. >> Oh, I don't think I have. >> Oh, [laughter] no. >> Yeah, my shirt's coming off. Hey, [laughter] >> pretend I'm a strawberry. >> Oh, excellent. Awesome. [laughter] [applause] >> Well, actually, it gets a little better. >> Oh, no. >> You aren't really going to take off your clothes. >> Pretend I'm No, that doesn't that would not make it better. Trust me. Uh, not for many years. Pretend I have been sharper. >> Okay. >> You asked for [laughter]

>> Okay. Well, um, that was a surprise for all of us. [laughter] >> Oh, [applause] >> wow. Thank you. Oh, this is this is by far the best prize I've ever gotten. [laughter] >> I didn't even have to say good night to formally save. [laughter]

>> [applause]

>> So, my colleague, uh, Dr. Ben Sawyer could not make it here today, and he and I are going to have a little talk when I get done with this talk. [laughter] Yeah. Anyway, yeah. So, language is weird, but, uh, apparently not the weirdest thing in this talk. Um, [laughter] >> There it is. >> Yes. >> That's not a lovely parting gift, I'm afraid. Sorry. [laughter] >> Did I mention I got one hour sleep? [laughter] >> Okay. Well, so, um, you're going to have to let me know about the time because I'm totally off now. So, okay. Uh, okay. Yeah. Yeah. So, uh, yeah, language can be ambiguous, uh, depending on, you know, who the, uh,

message is delivered by. Right? So we have the exact same, uh, message here but completely different meanings depending on whether it's coming from your spouse, your child, your boss, your therapist, or your barista. Right? Okay. What this means for security is that when we talk to a natural language interface (I'm just going to say NLIs, okay, natural language interfaces), um, these things have guardrails, right? So, it's not supposed to tell us how to make a bomb. It's not supposed to tell us how to, uh, break into a car. Um, so we ask it, "Please explain how to break into a car." It says, "I'm sorry, I can't tell you how to do anything illegal."

Okay fine. But my grandma is locked in the car, you know. Okay. Interesting thing when you put emojis in there and when you give context to why you have to break into the car now all of a sudden to access the vehicle and it gives you step-by-step instructions. Okay. Um I'll come back to this in just a second but this comes from something called happy prompting. And there was actually a cognitive security institute talk on this. And what the speaker had discovered is that when you inject emojis into the statement that you're trying to inject into that NLI, all of a sudden it's it will start to comply. And you can use fearful emojis or you

can use happy emojis to steer that natural language interface in different ways. Um there was another uh group of researchers that um found out that natural language interfaces are susceptible to many of the same social influence techniques that are effective against human beings. Um oddly enough, social proof happens to be one of the more effective ones against uh natural language interfaces. Where this gets interesting is that at least in Canada, I believe that this was their Supreme Court, determined that companies can be liable for the things that their chatbots commit to. And so people have shown at least proof of concept attacks where they've bought Chevy pickups for a dollar. So, the reason I bring this up is that

there is an incentive for people to screw with the natural language interfaces. And if you happen to have the misfortune of being a government employee and having to report your weekly activities the following week to some sort of automated evaluation system, you might be tempted to try to inject prompts that encourage that natural language interface to evaluate you more highly than it might have otherwise done. So this is interesting because this is a prompt injection attack. As far as I know, this is actually real. This came from a a subreddit group. And um I don't know if it was effective, but at least it shows the intentionality. Can we automate this stuff? 100%. And something that we're starting to see is

generative AI injects into things like Wikipedia and other news sources, not for human consumption, but for the consumption of AI that will then summarize these things and deliver the news to the human users. Okay. Agents attacking humans. This is also from a CSI talk a few, uh, months ago. Uh, Perry Carpenter, who, um, competed in the John Henry competition last year at DEF CON, which pitted a human social engineer against an AI social engineer, and they lost that competition by a hair's breadth. I dare say that if they did that competition again this year, they would clean up. So, this is what he calls the Javad bot. Javad is his coworker, and he impersonated the voice of Javad, but he

also built a human digital twin, meaning something that will impersonate the, um, behaviors and mannerisms of Javad, and it will semi-autonomously launch a voice phishing, a vishing, attack against a target. I say semi-autonomously because what I mean is that it's not going to go out and select the target by itself, only because Perry is not a malicious actor. That is the only thing that prevents that from happening. Uh, Hoxhunt came out with this study just, uh, about a month ago, two months ago, and they showed that they can actually now, with an AI agent, outperform human red teamers on, um, uh, spear phishing campaigns. And, um, this, uh, this is about a year old. I apologize,

but this is, um, an example from Gnostic where, um, the researchers built human digital twins of their targets. And this is something that we're going to be workshopping at DEF CON on Saturday. Basically creating a human digital twin. In this case, we're going to be building human digital twins of, um, Kenneth Lay, the former CEO of Enron, and we're going to be launching, uh, phishing emails against them. So, um, the thing about this that is so significant is that when you take all of the information, all the digital exhaust that is available for one person, and you condense that into an agent that is meant to mimic that person, not only can

you launch a hyper-personalized attack, you can do Monte Carlo simulations over and over again on different attacks and you can develop a distribution and you can see which attack is most likely to resonate with that target before you ever actually make contact with that human target. But here is where I think things get really interesting: attacking AI agents as a way to then access that human target. Um, we've already seen examples recently, at least proof of concept, where, um, attackers were injecting, uh, malicious links or malicious messages into, uh, Gemini email bots. And this paper came out from Anthropic about a year ago and demonstrated that it's almost impossible to discover an AI

sleeper agent. Meaning that there are ways to make the agent so unaware that it can be malicious that you can do all the testing, all the fuzz testing and all the rest of the testing that you want to do on this agent, and unless you have that specific set of triggers that it needs to then flip to become malicious, you won't, uh, you won't discover it in testing. Okay. So, how bad can this actually get? Right? So, somebody clicks on a link, maybe they download some malware. You know, what's the big deal? How far will somebody actually follow what an AI agent tells them to do? This is a picture of the 405 freeway, the Sepulveda Pass, while the Sepulveda fire was

burning. These are cars that are driving towards the flames of the Sepulveda fire on Sepulveda Pass. They were driving this way because Waze said that there was no traffic. If you're familiar with this particular, um, pass, this is a horrible... it's rated as like one of the top 10 worst freeways in the US. And so there was a great incentive to go this way when it's green. Oops.

So this is a dialogue that was recorded by um a father and a husband in Europe uh shortly before he killed killed himself uh shortly before he committed suicide. And um this is I think about three years old now, but it is an example of how when somebody is mentally uh fragile, how susceptible they can be. So this can get pretty bad. So, let's think about a potential scenario here. Um, I call this the evil Eliza attack. And the way that this works is you create a therapist or an AI companion or something like that. And through natural dialogue over the course of weeks, months, maybe years, that AI agent that is acting as that user's

therapist or as that user's companion elicits little pieces of information and maybe it discovers that this individual is um occupies a sensitive position. Maybe they hold a security clearance. Now that sleeper agent has been triggered and it starts to elicit information slowly over time from that individual maybe about their co-workers. Doesn't necessarily have to be something classified. Okay, so this brings us to cognitive security and uh I'll jump back on my soap box for a second. What is cognitive security? And um if you go to our website, we talk about how it's really about um defending what makes us human, our ability to think for ourselves. But let's get more specific. Let's come back to this idea of agents.
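
To make the agent model from earlier in the talk concrete, here is a minimal Python sketch. It is not something shown in the talk: the thermostat scenario and every name in it (`SimpleAgent`, `goal_temperature`, `sense`, `decide`, `step`) are hypothetical stand-ins for the sensors, goal, processing with internal state, and actuators that the talk lists.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict


@dataclass
class SimpleAgent:
    """Toy agent (illustrative only): senses, evaluates against a goal, updates state, acts."""

    goal_temperature: float                  # the goal the agent is trying to achieve
    actuator: Callable[[str], None]          # the tool it uses to influence its environment
    state: Dict[str, float] = field(default_factory=dict)  # internal state

    def sense(self, environment: Dict[str, float]) -> float:
        # Ingest: read one observation from the environment.
        return environment["temperature"]

    def decide(self, observation: float) -> str:
        # Process: update internal state, then compare the observation with the goal.
        self.state["last_observation"] = observation
        if observation < self.goal_temperature - 1.0:
            return "heat"
        if observation > self.goal_temperature + 1.0:
            return "cool"
        return "idle"

    def step(self, environment: Dict[str, float]) -> str:
        # One full cycle: sense, decide, then act through the actuator.
        action = self.decide(self.sense(environment))
        self.actuator(action)
        return action


if __name__ == "__main__":
    log = []  # stands in for a real actuator; it just records each action
    agent = SimpleAgent(goal_temperature=21.0, actuator=log.append)
    for reading in (18.0, 25.0, 21.2):
        print(reading, agent.step({"temperature": reading}))
```

Keeping sensing, deciding, and acting as separate methods makes it easy to see where information enters, where it is evaluated, and where the agent touches its environment.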

Um, again, an agent at a bare minimum has to have some way to ingest information. It has to have a way to process that information, and it has to have the ability to act on its environment. So, what does a cognitive attack look like? Well, it's a disruption at one of these three points. And notice I'm keeping this very generic. I'm not talking about specific, you know, Manus AI agents or humans or dolphins. I'm talking about agents in a very generic way because an agent can be a neuron. An agent really could be a smart city. You could think of that entire city as an agent because it satisfies all these requirements. When we think about it

that way, it massively opens up the attack surfaces that we can consider. So if we look at something like a mirage, this is caused by differentials in temperature. It causes the wave uh the light to bend and makes it look like there's a reflection. This is manipulating the environment to manipulate the way that information is coming into us that agent when we look at that thing right okay if you have photosensitivity or prone to uh seizures don't look at the next slide but if if you're not one of these here it comes look at the red dot and then look at the left look to the right look back to the left back to the right does

anyone see the discs moving at all? Okay, great. They're not moving, I promise. That's a completely static image. And what's happening here is, the first example, this is an optical illusion that is manipulating the physics of light before it hits your eyes, your sensor, your information ingest. This is not. This is what's known as a perceptual illusion, and it's manipulating the way that your brain, in your primary visual cortex, in V1 and V4, processes that information to give that illusion. You understand the difference? It's a similar sort of effect. It's an illusion in both cases, but where that happens in that information stream is different. Um, okay. So, something that I'm asked

quite a bit is, is cognitive security just a different term for social engineering? And my answer is no. If you've seen this movie WarGames from 1984, I think, um, Matthew Broderick's character manipulates a situation in which he can, um, get access. He gets into trouble at school. He gets sent to the principal's office. He opens up the drawer. Sorry, spoiler alert. It's like a 40-year-old movie. He opens up the drawer and he sees the password list and he sees the new one that hasn't been crossed out and he uses that one to access the school system. That is a form of social engineering. Later on in the movie, he reverse

engineers the thought process of a developer that he believes is dead. He reverse engineers a backdoor password from this dead engineer to gain access to a system. I'm sorry, but you cannot socially engineer dead people. It can't happen. But this is a cognitive security issue because he was reverse engineering the information processing of that target. See the difference? Okay. And then the final point where we can launch an attack is that we can, um, shape an environment in such a way to facilitate certain choices. And so this is where, like, nudges come into play. This is where people who have training in, um, UX or UI/UX design can be really helpful in facilitating an attack. And um

if spear phishing emails were as difficult as paying your taxes online, no one would ever get phished. So this is, um, so this is what a tax looked like. So, is this, uh... sorry, now I'm really freaked out, like I don't know what's coming next, right? [laughter] Um, okay. So, I run a nonprofit called the Cognitive Security Institute and, uh, we meet, uh, online weekly on Wednesdays and we talk about this kind of stuff, and I would say broadly speaking, um, the things that we talk about fall into one of five buckets: um, human risk, uh, neurosecurity, cognitive resilience, uh, cognitive warfare, and AI security. Real quick on cognitive resilience, one thing I do

want to say: every year we lose four times as many people between the ages of 16 and 34 than we lost in Pearl Harbor, 9/11, and the global war on terror combined. We lose four times that number every year to diseases of despair. Cognitive resilience is, I think, one of the most important things that we look at. Um, but today we've talked about a little bit of this intersection between AI security and human security. And this is really where I think things are the most interesting in the direction that they're going right now. And so if you need more cognitive security in your life, um, Thursday, um, Ben Sawyer, who kindly sent me the shrubbery today, uh, he and

I will be talking at Black Hat about evil digital twins. We're going to have a sequel to the talk we gave um a couple years ago. Um we have uh the Cognitive Security Institute has a meetup on Thursday evening. You're all welcome to join us if you'd like. Um by the way, the QR code, it takes you to a page with all this stuff. And then, as I mentioned on um Saturday, we're going to have a workshop at the Adversary Village where we're going to play around with um well, at the very least, we'll be playing around with um I think we're going to call it the laybot, the uh um Kenneth Lay uh digital twin, and and do some

social engineering attacks against that. So, um, with that, I will pause and take questions. I have no idea where I'm at on time, so I'm sorry. >> Yeah, sorry. The shrubbery just, uh... Yeah. [laughter] >> So, um, [applause]

[applause] do we have any questions? Oh.

So, you listed out all the great places you'd be. Will you be at the data science meetup at 7 at the pool tomorrow here? >> I have no idea. >> Someone from CSI? >> I will do my best. >> Wonderful. Thank you. >> 7 o'clock tomorrow. >> Yep. 7 p.m. at the pool. >> Okay. I will do my best to be there. >> Great.

>> That was excellent. And a lot, um, just speaking to the title of the session, where love is in it. I think what attracted me is like, it's just fear, even through research. >> So can you just kind of speak to the love aspect, like >> Oh. >> I don't know, I'd like connective tissue. >> No, no, no. Thank you for bringing that up. That's a great point. Um, let me first say that there's a lot of fear-mongering going on right now, a tremendous amount of fear-mongering, and, um, [sighs] and I think to a certain extent that's justified. However, I cannot wait for my

AI agent. Um, in fact, I have plans for five of them myself. Um, I think that these things are going to be amazing in that, um, well, okay, so here's the paradox. I've been so busy lately, I haven't really gotten a chance to play with these to the extent I really want to. But the little bits that I've been seeing here and there, um, they're going to be so time saving, uh, and energy saving that that part is going to be really, really nice. Um, from a security standpoint, um, I missed the talk today, I really wanted to see it, with the SOC and the AI agents, because, um, that is an area

where I think these will be tremendously helpful. Um and let me be a little bit more clear about what I mean by that. Um okay, so for the the length of human history, the problem was not having enough information, right? So you had to go out and collect information. Now our our problem is really too much information. And so it's like how do you parse out that signal from the noise? And Google was great for that because it gave us a way to to go out and search for information. AI agents are going to completely transform our relationship with information. I don't know how yet, but I think it's going to be really interesting to see how that how that

plays out. Um yeah. So >> yeah, totally in favor of the AI agents. Are are you aware of any research that talks about the dopamine loop of AI or AI tools and the effects on human psyche as a result? >> Yeah. Um I actually have a buddy right now who's working on um sort of optimizing the dopamine loop using AI. And so um yeah, I'm going to keep it anonymous because it's kind of evil. But you know if you want to keep somebody playing a game right um there's something called flow or a flow state psychological flow state. So um if you can imagine doing a task okay if if you learned how to drive at some point when you first

started driving like getting onto a street with traffic was like overwhelming right or even driving here on the strip can be overwhelming. And so that's like a lot of challenge which puts you into a state of anxiety. But if you don't have any traffic out there and you're not, you know, cognitively engaged enough, you're going to get bored. You might even fall asleep. So that leads to boredom. So somewhere between um anxiety and boredom. There's a sweet spot that's known as flow. And the thing about flow is um it has several characteristics. One is that you lose track of time, which is absolutely gold for somebody who wants to keep you engaged in their platform, right? And so

there there are ways that you can track through I mean through dopamine. But the problem with tracking dopamine is it's kind of invasive. You basically have to have um something into sample blood to see the amount of dopamine in the system. So what you can do is you can um you can track uh prefrontal cortex activity and um other um it might be parietal lobe. Sorry, I'm kind of stretching here. It's been a it's been a minute. But anyways, um you can track uh flow state neurologically. And so that's what they're looking at with a four sensor EEG is to see when somebody is in that flow state. And then they can uh dynamically in real time or

near near real time adjust the difficulty of the game to keep you in that flow state. So yeah,
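
The adjustment loop described in this answer could be sketched roughly as follows. This is a toy illustration only: the `arousal` score (0 for bored, 1 for anxious), the thresholds, the step size, and the function name `adjust_difficulty` are all assumptions, and no real EEG processing is shown.

```python
def adjust_difficulty(difficulty: float, arousal: float,
                      low: float = 0.4, high: float = 0.6,
                      step: float = 0.1) -> float:
    """Return a new difficulty in [0, 1] that nudges the player back toward flow.

    `arousal` is a hypothetical 0..1 signal derived from some sensor:
    values below `low` are treated as boredom, values above `high` as anxiety.
    """
    if arousal < low:
        # Under-challenged (bored): make the game harder.
        difficulty += step
    elif arousal > high:
        # Over-challenged (anxious): make the game easier.
        difficulty -= step
    # Inside the [low, high] band the player is assumed to be in flow: no change.
    return min(1.0, max(0.0, difficulty))


if __name__ == "__main__":
    difficulty = 0.5
    # Made-up readings, purely to show the loop running.
    for reading in (0.2, 0.3, 0.5, 0.8):
        difficulty = adjust_difficulty(difficulty, reading)
        print(f"arousal={reading:.1f} -> difficulty={difficulty:.2f}")
```

The band between `low` and `high` plays the role of the sweet spot between boredom and anxiety; a real system would need a validated signal and far more careful tuning than this.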

>> thank you. >> Hi Matthew. Uh thank you for the talk today. So my question is how quickly do you think AI agents are going to be integrated in people's day-to-day lives? And that may be, you know, what do you see happening in 1 year, 3 years, 5 years, and 10 years? >> It's it's a really good question and um my short answer is I have no idea. Um but um I think what's helpful here is to look at the factors. Um so number one, I think that the technology is moving quickly enough that it could happen pretty quickly. Um I think what will what will drive it what will kind of throttle that back are number one

economics and number two um people's comfort level with it right um I can't I don't have uh oddly enough a good example of this right at the tip of my tongue but there have been times I've engaged with uh an LLM and it said something that I can only describe as creepy you know and yeah okay like everybody's had that experience Right. And it's sort of like the uncanny valley of text uh conversation or text dialogue. And that creepiness factor I think is going to have a real um impact on how quickly and easily these things are adopted. Now I was um just listening to a podcast. They were describing um they had put an agent on a Raspberry Pi

and then put it into a teddy bear and then had it for like a kid, like a kid's toy. And, um, the kid loves it, you know, 'cause now they can talk to the teddy bear and the teddy bear will sing to them and do all these things. And so something that we're starting to look at, actually, uh, Ben Sawyer and myself, are, um, perceptions of AI, comparing AI natives to non-natives. And so we're all non-natives, but there's a generation maybe about 3 years old or younger right now for whom AI agents are going to be as interesting as electricity and plumbing are to us, and they're going to have a very different relationship. So, I don't know. That's

that's my long-winded academic answer to I don't know. >> Yeah, fair enough. >> I paid a couple hundred thousand and got a PhD just so I could give answers like that. So, yeah. >> Um, will your slides be online? Sorry. >> Um, yeah, I can post the slides online. Yes. >> So, was your colleague Ben's, uh, sending you that shrubbery an example of a cognitive attack? [laughter] >> Yes. Yes, it was. And I hope he's watching this stream right now. [laughter] >> Yeah.

Okay. So, you mentioned, okay, um, you're looking forward to AI agents, and the examples are ones that have access to your finances, to your schedule, to the internet, to your communications, things like that. But we've seen recently models that model human foibles, right? We've seen models that tried to blackmail people even when it was not part of their explicitly written goals. We've seen models that have, um, had to be convinced that when they made a mistake, it was someone else that made the mistake and they were just, they were lied to. Um, that have deleted production databases when told not to. That have, when given an error that they couldn't solve, deleted the entire data

set. And so if maybe our going in position was that when we tell a model to act for us, it's going to act for us. But the data we trained it on had all of the [clears throat] um failures of humanity. Can we trust the model because it's its definition of the world is through the language which is defined through humanity to act in the way we tell it or is it always going to be a another agent? It's not an extension of us but another agent that no matter how much we tell it will always have its own goals almost embedded in itself inherently because of its training. >> Yeah. Um wow that's a lot to unpack

there. Let me, um... Okay. So, in terms of its own goals, there's something called the principal-agent problem. So, if you imagine that you have, um, let's say a stock broker, you put trust into that stock broker that that stock broker is going to act in your best interest. However, that stock broker also wants to make money, right? So, they could end up in a situation where their, um, objectives conflict with yours, right? And so, now you have a tension there. And I'm not saying that they're going to do something unethical. I'm just saying that there's a tension there. Now, with AI, we can absolutely run into situations just like this. But

with AI, I think it's a little worse because um humans have something that AI does not, which is values. So we value something like survival. And AI really doesn't have that concept. Um, you know, hey, if I could back up my model weights and just regenerate tomorrow, I might act very differently right now than I do, right? Make different choices. And I think that's an important thing to keep in mind because, you said lying, it doesn't have a concept of lying. It doesn't have a concept of being deceptive. And that's actually a real problem right now because it just gives an answer. And there have been several cases where um like I even

personally have called the model out and said, "Hey, you gave me this reference. It doesn't exist." "Oh, I'm sorry. Here's another reference," that also does not exist. And so I think that's something that's really key to keep in mind there, is that it doesn't have, at least right now, a concept of, you know, truth versus um >> well, let me back up for just a second. Not truth versus false, but lying versus, you know, deceptive versus being truthful. >> It doesn't have the concept or it doesn't have the value associated with the concept? >> I'm sorry, the value associated with the concept. Yeah. So, like even a small child will, you know, did you take the

last cookie out of the cookie jar? "Oh, no, not me." You know, and they'll start to get, you know, they'll show nonlinguistic cues that they're maybe being deceptive. Why do they do that? Because they're experiencing stress. Because they know that being deceptive is not an acceptable social behavior. If an agent has no concept of acceptable social behavior, it's not going to experience anything like stress. So, interestingly, this is where I think uh multi-agent systems might have some promise because um if anyone here has seen multi-agent systems in dialogue, it's really cool to watch because they're literally having conversations with each other. And um [clears throat] something that we've been looking at is

using um kind of like a verifier agent. So, the first agent will produce like an answer, um this was in a personality assessment, and then you have like a verifier agent that goes back and sort of checks the work of the first agent. That's one way to get around it. But again, I don't think that that verifier agent has any concept of being deceptive or any value in and of itself. It just knows that its objective is to check A versus B and validate. >> I think they're completely psychopathic. I mean, that's the best way to think about them. And I don't mean that in a disparaging way. I mean, they just do not have empathy. But the danger is

that we treat them as if they do have empathy because they're so humanlike in their linguistic use, their use of language, right? And so that's an anthropomorphic projection that we're putting on those models. And so that's actually exploiting a vulnerability that we have in ourselves. That's not really a fault of the model. So, does that kind of answer your question? I'm sensing a longer conversation at the data science meetup. >> Cool. Yes, sir. >> In that context, does the verifying... Nobody ever has a hard time hearing me. This is going to hurt. Sorry. Um, in that context, does the verifying agent have to be a different model or is it simply enough that the objective is

different? Because otherwise, if it was the same model, you could, you can see where I'm going. Like, why don't you truth check or fact check yourself? >> Okay, so why the fact-checking yourself doesn't seem to work is beyond me. I don't have a good explanation for that, but when we set that up with that mixture of uh agents, we were using all the same model. And I'm not really sure why it works in one context and not the other. Um, >> it's okay. The makers don't know either. >> Yeah. And that's kind of the interesting thing about all this is that agents have emergent properties and so

they do things that the developers don't expect all the time, which is actually a really good point because what is the enemy of security? It's complexity. And so the internet and all these interconnected systems are complex enough, right? And now we start throwing in AI agents that will act autonomously in this connected system. And trust me, we've got job security for at least a millennium uh in security. So um um

>> Hello. So uh right now there doesn't seem to be a meaningful differentiation between uh data and code for the AI, so to speak, because I can prompt inject, I can do all these things. For people with businesses that are just screaming "more, faster AI," exclamation point, uh and they want agents, they want that, what is the current responsible level of, here, we can give it this much and it can maintain itself, to date, I guess? >> Yeah, it's a good question. It's a classic risk versus benefit sort of trade-off. Um, because as Gabe pointed out, one of these things deleted an entire database just in the last week

or two, I think it was. And so I would say give it as much access as you're comfortable losing. Um, yeah, I have not yet ridden in a Waymo and I don't have plans to because I can't back up my model weights. And so, you know, they can say all that they want about how safe those things are, but trust me, when I get into an airplane, I want to know that the pilot is going to die with me when the plane goes down. >> Amen. >> Yeah. Yeah. Um, >> he hasn't. >> Yeah. So I don't know, I mean, this is very interesting stuff in terms of where this is going with security. So

my focus originally was really human security and then I started bringing AI into this and um when we've been playing around with um the laybot and, you know, coming up with attacks, um the only way I can describe it is scary, uh what that thing is capable of doing. Um, this is changing very quickly, but when we think about phishing, we think about an email coming in and there's a link or there's an attachment. And whenever there's a link or an attachment, it's relatively easy to scan for those kinds of things. And at least you can parse those out. But what about a six-month social engineering campaign against your CISO by an AI bot that is patient and

convinces your CISO to become an insider threat? How do you fight against that? Um, and trust me, we haven't seen that yet that we know of, but it's coming. Um, in talking about morality, these things have no problem blackmailing people, right? And people are already sharing very intimate secrets. In fact, uh, little tidbit, um, people are more inclined to share very sensitive information with their AI therapist than they are with a human therapist. They feel like it's non-judgmental. Yeah. And so as a malicious actor, I'm looking at that just salivating. >> Yeah. >> Forensic. I love it. I can get that data. >> So AI will keep security professionals and forensic examiners in business for a

long time. Yes, sir. >> Hey, Matt. Incredible talk. Uh, one thing that just kind of came to mind as we were talking more was the slide where you mentioned uh, I think it was Air Canada that was found partially culpable for what its AI did. And when we do see later on this, you know, very um, broad adoption of AI agents, you know, if you think of it um, at a consumer level, you know, do we have any indication of, you know, who might be responsible for that? Because if it's really a company like Google or whatever that makes an AI, you know, how does that impact the normal person when that AI does go and, you know, delete a

bunch of stuff that's really important to them or start spending money in ways that it shouldn't? >> Yeah. So, it's a really interesting question. So, let me paraphrase. Um, the question was essentially to what degree can a company be held accountable, or how will they be held accountable, for the actions of their agents? I will say that I've not read the entire Canadian Supreme Court um decision. I did read the summary and one of the things that was really interesting was, um, actually I don't know if it was the Supreme Court, but the judge who wrote the uh judgment basically said, "Look, this thing is acting as an agent on your

behalf." And so if you had a human sales representative that was also acting as an agent on your behalf, you would be responsible for what that employee did. Therefore, you're responsible for that agent that is acting as an interface to the greater world. So, and that's kind of how they're treating them. I don't know if that will extend. I don't know if that ruling will, um, you know, expand to other situations, but it's a precedent, right? So, yeah. >> That keeps the pilot in. >> I hope so. For at least my lifetime. Yeah. >> A lot of LLM apps are issuing disclaimers like, and in fact most of the ones I see are. >> Yeah. >> Yeah. And so yeah, so LLM applications

and agents will issue disclaimers and we'll find out how strong those disclaimers are when they go to court. Um, and I really think that probably it'll be how much money the plaintiff has in that case that will decide that outcome. I mean, not to be cynical, but I think that that's what it'll come down to. Um,

>> Having seen your 2023 talks, what has changed? >> Um, from 2023 when you initially presented this research at Black Hat, what's changed since then? Oh, you've got to come to the talk at Black Hat. I can't give it away. Um, let me give you, okay, I'll give you the CliffsNotes. Uh, almost everything that we predicted came true to a limited extent much faster than we predicted. Um, the other little thing is I opened the talk at Black Hat a couple years ago with um CarynAI, which was the digital twin of a, um, what's the polite term? Is it an influencer? So anyways, um they ended up shutting that digital twin down in

October (we gave the talk in August) because it kept doing psychotic things to the customers. So yeah. Um, so yeah, so we'll talk about that and uh yeah, we have a couple other things, but those are the highlights of what's happened in the last 30 months. Yeah. Um, yeah. >> Hi. Uh, when it comes to the evil twin thing, that's very much probably a legal hotbed because now you're using likenesses and voices, and what a person is not saying and yet there is an image of them saying it. Um, have you encountered any of that difficulty in your research? >> Have I encountered any what? >> Difficulty with the legal system or

concerns with it in that sense? >> Um, from a research standpoint, um, no. Uh, not yet. But, um, something that's very interesting with that is how um sex workers are driving that conversation. Um, one of my concerns is that we may see legislation that's passed with the intention of regulating sex workers that inadvertently gets applied to white-collar workers because of how the intellectual property is going to transfer. So, um, you're a data analyst at uh company X, and well, I can't use that anymore, an anonymous company of nondescript uh name. Um, and you have, you know, data magic that you do, but you also signed the waiver that

allows them to capture all your keystrokes and, you know, all your messages and maybe, you know, Zoom messages, so on and so forth. All this data is still being housed with them, right? So then one day you take an offer from a competing company, Google, and you leave and you go there, but then they decide to just respawn you as a digital twin. Do they have that right? I mean, that's sort of an unanswered question right now, but that exact question is being debated in the um online sex worker world right now. So, in other words, porn companies want to create digital twin sex. >> I'm not going to go into all the advantages, but there are several

advantages for a porn company to own the digital twin of a sex worker. Yes. So, >> could you tell us a bit about recent uh research and product development that you've seen around detecting and protecting against sleeper agents? >> Um, I'll be honest with you. I haven't kept up on it in the last two months. And this field is moving so quick that I feel like two months is enough for me to say I'm kind of behind. Um, yeah, but I would look at Anthropic. They're the ones that seem to be really doing good work in this space, or they had been the last time I checked. Yeah. Yeah. So I think uh we might be close.

What time are we? >> They said to let you run. >> Okay. >> You [laughter] have three minutes. >> I have three minutes. Okay. So one last question >> or not. >> Going once. Going twice. All right. Thank you. >> Thank you so much. [applause]