LLM SRO Ontology Driven Security for LLMs - Dr. Thomas Heverin

Good afternoon, everyone. I'm Dr. Thomas Heverin, and I'm presenting with Caroline Ma and Avery Buyer. We hail from the Baldwin School, an all-girls private school out in Bryn Mawr, Pennsylvania, and our talk is entitled "LLM SRO: Ontology-Driven Security for Large Language Models." Thank you for attending our talk. First I'd like to share a bit of our background. I think I could spend the whole presentation bragging about what these two researchers have done, and other students as well. The picture shows my AI and cybersecurity class. At the Baldwin School I teach Ethical Hacking 1 and 2, and I also teach AI, and I make the classes hands-on

so the girls can feel like they're making a real-world impact live in the classroom. This picture is from a field trip to the FBI computer forensics lab in Radnor. Here are some of the ethical hacking achievements in our classes. We have reported on hundreds of vulnerabilities in IoT devices, printers, security cameras, and classroom display systems. We use Shodan, Nmap, and various other tools. We even created our own hacking tools in Python, such as tools that can hack into FTP and Telnet servers, IoT devices, and many more types of devices. We have also authored an Exploit-DB entry. Exploit-DB is an exploit database full of different exploits that can be used against

different products. We created an Exploit-DB entry on Solstice Pods, which are classroom display systems. We found that we could grab the live session key, get into the display systems, and display whatever we wanted, ethically of course. We also have partnerships with UPenn, Drexel, and Villanova. We've done some hacking projects with universities across the pond in the UK and also with the University of Mexico. I'd also like to highlight some of our AI security achievements. The group presenting today has published a prompt injection research paper at a cyber warfare conference and also on arXiv. arXiv is an open repository of technical and scientific papers. We did a research

study where we tried to exploit 36 LLMs with different prompt injections that we came up with. A prompt injection is a way to trick an AI tool into producing content it's not supposed to, such as keylogger code or phishing emails. We tested 36 LLMs and found that over half of them were vulnerable to our prompt injections. We also presented to MITRE, which is a federal contractor. We showed MITRE how we can use prompt injections to create small-scale and large-scale industrial control system hacking modules. We presented at a lunch-and-learn session. Over 130 cybersecurity researchers and engineers were there, and they stayed for the whole talk. It's been cool. As for my

background specifically, I have my PhD from Drexel. After I got my PhD, I worked down at the Philadelphia Navy Yard doing research, which led to a patent focused on cybersecurity risk assessments of Navy ship systems, including smart carriers. In that work, we used ontology modeling and some algorithms to do risk analysis over Navy ship systems, which is relevant to the work we're going to discuss today. Again, I think I could spend a whole session bragging about the accomplishments of these two researchers, but now I'd like to give you an overview of our technical talk. First I'll lead off by talking about the challenges of managing LLM risk,

because in addition to teaching ethical hacking and AI at the Baldwin School, I'm also the head of technology and cybersecurity there. Then we'll talk about the development of the security risk ontology (SRO); Avery will take over that part of the talk. Next come the creation of the SRO GPT, the analyses that we can run with the SRO GPT, and the insights gained; Caroline will talk about these. We'll wrap up with some future research. Now I'd like to talk from the leadership view about how hard it can be to manage LLM risk at an organization. Our talk focuses on the K-12 domain. In terms of confidentiality, integrity, and availability in managing risk, we really focus on confidentiality. We don't want student information to be out there in

the public. We don't want financial aid information about families out there in the public, or health information about students, or many other types of data. But it's really challenging when teachers and staff start logging in to different LLMs, and who knows what they're uploading to those LLMs. It can be hard to maintain awareness of how LLMs are being used by faculty and staff. Imagine they're uploading grades, or learning profiles for students who have learning differences. Imagine they're uploading that to an LLM, and hackers get in and expose that data. It's really sensitive and private data. And then who knows what's happening with data governance, and there is uncertainty in terms of

COPPA and others. In the K-12 domain we have certain safeguards or frameworks that we have to follow. When I was working for the Navy doing research, there were different frameworks and guidelines that we had to follow there too. Unvetted integrations are a big concern as well, because we don't know if a teacher or staff member is connecting one of the LLMs to our Google Drive. Who knows what the LLM is pulling? Who knows how the data that teachers have now integrated is being used to train the LLMs? So if I'm trying to manage the risk at the Baldwin School or another K-12 school,

it's really hard to determine where the greatest risks are at my organization in terms of confidentiality. There are all the different LLMs being used and the risks among each LLM, and I'm not sure what data teachers and staff are uploading. It's really hard for me to determine by myself where the greatest risk is; that requires a lot of manual analysis and a lot of work. Also, what are the best mitigations to use against those risks? Many of you work at organizations where you wish you had more cybersecurity and IT staff. At the Baldwin School there are only three of us maintaining IT and security for hundreds of staff members and hundreds,

thousands of students. It's been really hard, so doing that type of analysis, trying to identify where my greatest risk is, is super challenging. So what can help answer those hard questions? An ontology model integrated with a GPT that provides easy access to complex analysis. That's the basis of our talk. We're going to talk about ontologies, what they are and how they can be used, and then what we can do with an ontology once we embed it into a GPT. Again, the source of this work in my background is the Navy research I did, which resulted in the patent that focused

on modeling Navy ship systems: all the software and hardware on the systems, how the systems are interconnected, and then using algorithms to calculate the risk over that model. We apply that approach to the K-12 domain using ontologies and a GPT. Now I'm going to pass it on to Avery Moer, who's going to talk about ontologies. Oh, wait. Actually, pause on that. I'm going to keep going. As a leader, I'm so excited to pass it on to my fellow researchers that I can't wait, so I'm going to try to make this quick. As a leader, I'm trying to evaluate risk across my system, which is the K-12 system. There are some

frameworks out there that I can use. But first off, of course, I want to know what LLMs are in use and what the functions of those LLMs are, or how they're being used. Then I want to know what risks are out there. I could come up with my own list of risks, but OWASP is the Open Web Application Security Project: experts around the world who determine together what the top risks against LLMs are. The top risk they identified is prompt injection, which again is a way to trick AI tools into producing content they're not supposed to. So they come with things that I can use to try to analyze risk on my system or

mitigate the risk, because they come with mitigations too. I can also use ATLAS, which is MITRE's Adversarial Threat Landscape for Artificial-Intelligence Systems. It's a framework showing you how a hacker, or someone on the offensive side, tries to exploit risks in LLMs. It describes ATLAS techniques, which are the specific techniques hackers use to try to exploit risks in LLMs. It also provides mitigations and tactics. We know that in cybersecurity it's really hard when we have to do manual work and start from the ground up. Someone has already done some of that work for us, and that's why I use frameworks in analyzing risk. And of course we also have the

CIA triad: confidentiality, integrity, and availability. In the K-12 domain, we really focus on confidentiality: keeping student data confidential, including health data, learning data, learning profiles, learning differences, and financial aid data, and keeping all of that private. Now, briefly, about OWASP's Top 10 LLM risks. You can see the number one risk is prompt injection, and then there's sensitive information disclosure and a whole bunch more. As a leader of cybersecurity and IT, I'm trying to analyze the risk of my systems, so I look at OWASP to learn more about the specific risks I want more knowledge about. Within each risk, it's great because OWASP also lists prevention and mitigation strategies. So if I'm not sure what I can do to

mitigate prompt injections, I can look at OWASP and get some ideas of what I can try, what I can do, or what's applicable to me. What's really great too is that OWASP also points to MITRE ATLAS: it points to the offensive techniques that one would use to exploit the weaknesses in my LLMs. So we hop over to MITRE ATLAS, which talks about direct prompt injection. It also points to mitigations such as AI telemetry logging, and down at the bottom it points to tactics, which are the main strategies of attackers, such as execution. But MITRE ATLAS is a lot. It's got many different tactics up top, which are the main strategies of hackers, and it

has different techniques that hackers would use. That's a lot for us humans to consume. I could sit there for months trying to understand this, analyze it, and apply it to my school or any organization, or to a Navy ship or a government system. It's a lot of information to process, it's data overload, and that's what leads us to try to model this. Now I'm going to pass it off.

Hello. So, an ontology. An ontology is a formal model of a domain that defines the key concepts, such as LLM products, risks, and mitigations, and the relationships among those concepts. In the context of LLM security, this structure lets us move beyond lists and instead represent a unified map of what systems exist, what they do, what can go wrong, and how those risks can be addressed. Once this model is in place, it becomes possible to pose and answer complex questions over the entire landscape of LLM usage. For example, the ontology can support queries such as: which mitigation strategies best reduce confidentiality risks across all deployed LLMs with the

least implementation effort? That kind of question is extremely hard to answer reliably with spreadsheets or scattered documentation, but it becomes tractable when the concepts and relationships are formalized in code.

The ontology is built from four main component types that together form a semantic network. Classes represent categories of objects, such as LLM Product or LLM Function, which organize entities into meaningful types. Objects are specific instances of those classes, such as an individual product like Wisenet AI or a concrete function like video surveillance. Data properties capture attributes of objects, such as an LLM function confidentiality impact score of 10, which numerically encodes how severely a function affects confidentiality if compromised. Object properties then link two objects together, such as "Wisenet AI provides video surveillance," explicitly stating how products, functions, risks, and mitigations are connected. By combining classes, objects, data properties, and object properties, the ontology becomes a knowledge graph that

can be queried for patterns, dependencies, and optimal mitigation paths across many different LLM deployments.
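The four component types can be sketched in a few lines of Python. This is a minimal illustration, not the SRO's actual encoding: the `Ontology` class, its method names, and the property name `confidentialityImpactScore` are hypothetical, while the product, function, and score follow the example given in the talk.

```python
from collections import defaultdict


class Ontology:
    """Toy container for the four component types (hypothetical, for illustration)."""

    def __init__(self):
        self.classes = set()                 # classes: categories of objects
        self.individuals = {}                # objects: name -> class name
        self.data_props = defaultdict(dict)  # data properties: name -> {attribute: value}
        self.object_props = set()            # object properties: (subject, property, object)

    def add_class(self, name):
        self.classes.add(name)

    def add_individual(self, name, cls):
        if cls not in self.classes:
            raise ValueError(f"unknown class: {cls}")
        self.individuals[name] = cls

    def set_data(self, name, attribute, value):
        self.data_props[name][attribute] = value

    def link(self, subject, prop, obj):
        # Only allow links between objects that have been declared.
        for name in (subject, obj):
            if name not in self.individuals:
                raise ValueError(f"unknown individual: {name}")
        self.object_props.add((subject, prop, obj))

    def objects_of(self, subject, prop):
        return sorted(o for s, p, o in self.object_props if s == subject and p == prop)


onto = Ontology()
onto.add_class("LLMProduct")
onto.add_class("LLMFunction")
onto.add_individual("Wisenet AI", "LLMProduct")
onto.add_individual("Video Surveillance", "LLMFunction")
onto.set_data("Video Surveillance", "confidentialityImpactScore", 10)
onto.link("Wisenet AI", "provides", "Video Surveillance")

print(onto.objects_of("Wisenet AI", "provides"))
```

Storing object properties as subject-property-object triples is one common way to hold a knowledge graph in memory; a real ontology editor would add class hierarchies and reasoning on top of this.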

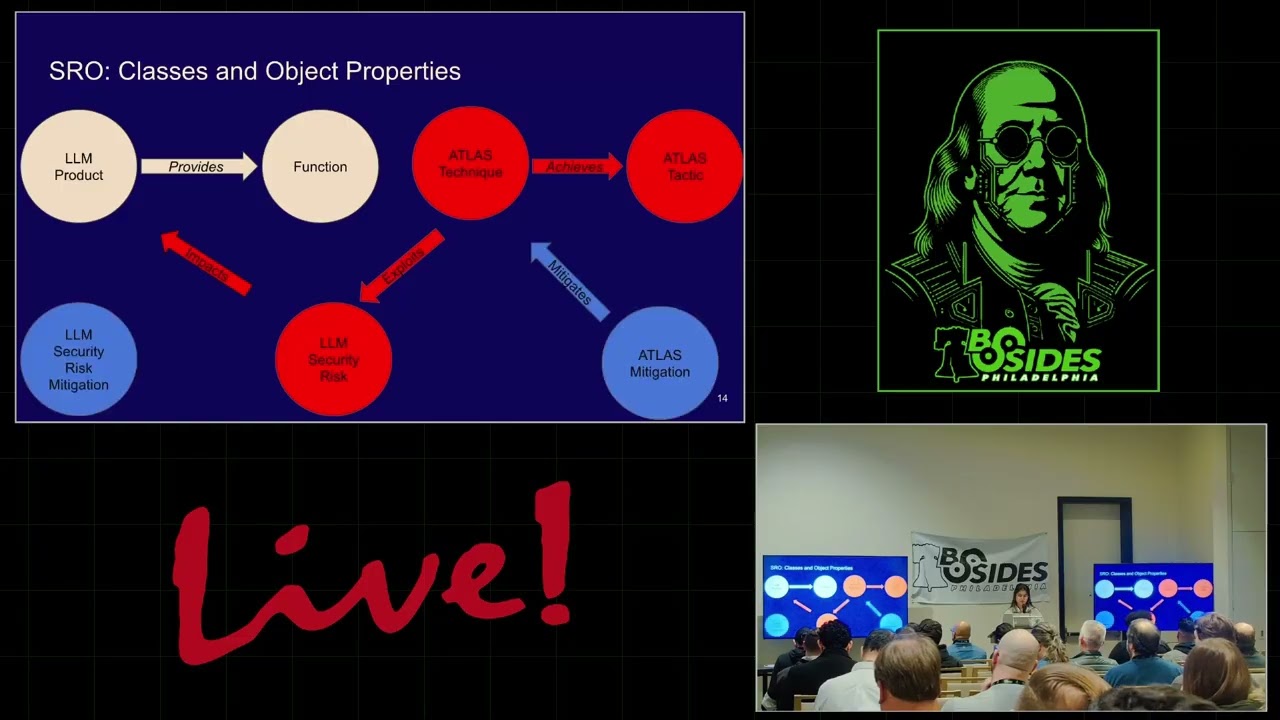

This slide introduces the core SRO classes and the object properties that connect them. The key classes are LLM Product, LLM Function, LLM Security Risk, LLM Security Risk Mitigation, ATLAS Technique, ATLAS Tactic, and ATLAS Mitigation. The arrows on the slide show how these classes relate to one another. "Provides" links an LLM product to a function. "Impacts" links a function to an LLM security risk. "Exploits" links an ATLAS technique to an LLM security risk. "Achieves" links an ATLAS technique to an ATLAS tactic. Lastly, "mitigates" links an ATLAS mitigation to an ATLAS technique, and can also link an LLM security risk mitigation to an LLM security risk. Following these arrows from left to right shows an attack-defense chain. Products provide

functions. Those functions are impacted by risks. Adversarial techniques exploit those risks to achieve tactics, and mitigations push back on the techniques.
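The arrows described on this slide amount to a small schema: each object property is only allowed between certain classes. A rough sketch of that schema as a lookup table follows; the identifier spellings (`LLMProduct`, `AtlasTechnique`, and so on) and the helper `is_valid_link` are assumptions made for illustration, not names taken from the actual ontology file.

```python
# Object property -> allowed (subject class, object class) pairs, as described in the talk.
SCHEMA = {
    "provides": [("LLMProduct", "LLMFunction")],
    "impacts": [("LLMFunction", "LLMSecurityRisk")],
    "exploits": [("AtlasTechnique", "LLMSecurityRisk")],
    "achieves": [("AtlasTechnique", "AtlasTactic")],
    "mitigates": [
        ("AtlasMitigation", "AtlasTechnique"),
        ("LLMSecurityRiskMitigation", "LLMSecurityRisk"),
    ],
}


def is_valid_link(subject_class, prop, object_class):
    """Return True if the schema allows `prop` between these two classes."""
    return (subject_class, object_class) in SCHEMA.get(prop, [])


print(is_valid_link("LLMProduct", "provides", "LLMFunction"))
print(is_valid_link("LLMFunction", "provides", "LLMProduct"))
```

A check like this can catch modeling mistakes early, for example an arrow drawn in the wrong direction.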

Now the abstract structure is grounded in a concrete example, so the meaning of each arrow is clear. Here on the slide, we start with the LLM product Financial Edge NXT AI. The arrow labeled "provides" points from this product to the function financial aid tracking, meaning this LLM is used to support financial aid tracking tasks. The function financial aid tracking has an arrow labeled "impacts" pointing to the LLM security risk prompt injection. This indicates that the function is exposed to prompt injection risk: if the function is compromised, attackers can manipulate the instructions given to the model to alter its behavior. The ATLAS technique direct prompt injection has an arrow labeled "exploits" pointing to the same prompt injection risk. This shows that an

attacker can carry out a direct prompt injection technique specifically to exploit the prompt injection risk in the financial aid context. The ATLAS technique direct prompt injection then has an arrow labeled "achieves" pointing to the ATLAS tactic malicious execution. This models the attacker's goal: by exploiting prompt injection, the attacker achieves malicious execution, such as triggering harmful or unauthorized actions through the LLM. Finally, two mitigations appear. The LLM security risk mitigation human oversight has a "mitigates" arrow pointing to the prompt injection risk. The ATLAS mitigation AI telemetry logging has a "mitigates" arrow pointing back to the ATLAS technique direct prompt injection. Following the arrows in order, the example demonstrates a process. A specific LLM product provides a financial function.

That function is vulnerable to prompt injection risk. A direct prompt injection technique exploits that risk to achieve malicious execution, and targeted mitigations push back at both the risk level and the technique level.
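The chain in this example can be written down as six triples and walked programmatically. The sketch below uses only the names mentioned in the talk; the helper functions `objects`, `subjects`, and `trace` are hypothetical and simply follow the arrows in the order described.

```python
# (subject, object property, object) triples from the slide's example.
TRIPLES = [
    ("Financial Edge NXT AI", "provides", "Financial Aid Tracking"),
    ("Financial Aid Tracking", "impacts", "Prompt Injection"),
    ("Direct Prompt Injection", "exploits", "Prompt Injection"),
    ("Direct Prompt Injection", "achieves", "Malicious Execution"),
    ("Human Oversight", "mitigates", "Prompt Injection"),
    ("AI Telemetry Logging", "mitigates", "Direct Prompt Injection"),
]


def objects(subject, prop):
    """Everything `subject` points to via `prop`."""
    return [o for s, p, o in TRIPLES if s == subject and p == prop]


def subjects(prop, obj):
    """Everything that points to `obj` via `prop`."""
    return [s for s, p, o in TRIPLES if p == prop and o == obj]


def trace(product):
    """Walk provides -> impacts, then look up exploiting techniques and mitigations."""
    rows = []
    for function in objects(product, "provides"):
        for risk in objects(function, "impacts"):
            for technique in subjects("exploits", risk):
                rows.append({
                    "function": function,
                    "risk": risk,
                    "technique": technique,
                    "tactics": objects(technique, "achieves"),
                    "risk_mitigations": subjects("mitigates", risk),
                    "technique_mitigations": subjects("mitigates", technique),
                })
    return rows


for row in trace("Financial Edge NXT AI"):
    print(row)
```

With more products and functions in `TRIPLES`, the same walk would return one row per product-function-risk-technique path.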

This slide adds quantitative data properties onto the same example to show how the ontology supports prioritization and trade-off analysis. The prompt injection LLM security risk is assigned a confidentiality impact score of 10. This indicates that, if exploited, prompt injection in this financial aid context is considered maximum severity for confidentiality: highly sensitive data could be exposed or misused. The LLM security risk mitigation human oversight has an ease-of-implementation score of four. This suggests that putting human review in the loop requires moderate effort; it is feasible but not trivial, involving processes, staffing, and workflow changes, for example. Then the ATLAS mitigation AI telemetry logging has an ease-of-implementation score of two. This reflects that configuring

logging and telemetry, at least in this context, is relatively hard compared to human oversight, perhaps because it demands engineering integration, storage, and monitoring infrastructure. By combining the class and object structure with these data properties, the ontology supports questions such as: given a risk with a confidentiality impact of 10, what combination of mitigations gives the best reduction in risk relative to how hard they are to implement? This lets organizations choose mitigation plans that are not only effective but also practical under limited time and resources. WebProtégé is used to instantiate the abstract ontology with real-world individuals. In the Individuals by Class view, each class, such as LLM Product, LLM Function, LLM Security Risk, or a mitigation class, contains a list of concrete instances,

like actual tools, for example Microsoft Copilot or MathGPT, as seen on the slide here. Specific prompt injection risks and named mitigations deployed in educational environments are also listed. Seeing these individuals organized under their classes makes it easy for security teams to trace actual assets and threats within their environment. Instead of thinking about LLM risk in the abstract, they can see which precise products, functions, and risks are present in their K-12 setting and how they interconnect. Going back a slide here: after defining the class hierarchy, the next step is to populate the ontology in WebProtégé with concrete individuals that represent the real-world entities being managed.

Each class, such as LLM Product, LLM Function, LLM Security Risk, or LLM Security Risk Mitigation, contains a list of specific instances that exist in the environment: particular LLM tools, named risks, or concrete mitigations used by an organization. This transforms the ontology from an abstract schema into a living model of an actual LLM environment. For example, under the LLM Product class, individuals might include tools such as Microsoft Copilot or MathGPT deployed in classrooms, while under LLM Security Risk, individuals might represent specific prompt injection or information disclosure risks that have been identified. Associating these individuals with the appropriate classes ensures that every product, function, risk, and mitigation is formally represented and can participate in queries, analyses, and reasoning. This

step is essential for tying the ontology directly to the assets and threats that security teams are responsible for managing in practice.

Data properties in WebProtégé capture quantifiable characteristics. Examples relevant to this ontology include scores like function confidentiality impact score, LLM risk integrity impact score, and other CIA-related impact metrics. By standardizing these scores across the ontology, analysts can run quantitative comparisons rather than relying on subjective judgments alone. For instance, multiple risks can be ranked by confidentiality impact, or mitigations can be filtered by ease of implementation, enabling more systematic, data-driven security planning. The security risk ontology's complexity comes from its rich network of data properties overlaying the relationships among products, functions, risks, techniques, tactics, and mitigations. Each link can carry associated impact scores and other attributes for confidentiality, integrity, and availability, reflecting how a single vulnerability may propagate across

multiple dimensions of risk. Instead of a binary view of safe versus unsafe, this structure captures nuanced, multi-layered effects of attacks and defenses. Analysts can assess, for example, how an ATLAS technique tied to an exfiltration tactic impacts confidentiality compared to integrity, and then select mitigations that best balance these competing concerns. This slide also shows how, once fully populated, a security risk ontology becomes a complex, high-fidelity graph of the LLM environment. Each LLM product, function, risk, ATLAS technique, ATLAS tactic, and mitigation is represented as a node, and each object property, such as provides, impacts, exploits, achieves, or mitigates, is represented as a directed edge. Layered on top of this structure are data properties that assign confidentiality,

integrity, and availability impact scores, as well as ease of implementation or other relevant metrics. That complexity is not just visual. It encodes the many-to-many relationships that exist in real-world cybersecurity today. A single LLM product may provide multiple functions, each exposed to several risks, which can be exploited by multiple techniques and countered by overlapping sets of mitigations. The ontology's richness captures this intertwined structure explicitly, which is exactly what enables advanced graph-based analyses. Instead of simplifying risk into isolated checklists, the model preserves these interdependencies so that security teams can better understand how attacks propagate, how defenses overlap, and where critical gaps remain. Now I will hand it off to Caroline to talk about our GPT model.
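One thing a many-to-many graph makes easy is spotting overlap and gaps in defenses. The sketch below is a hedged illustration on placeholder data: the names `T1`, `R1`, `M1` and the edges between them are invented for the example and are not taken from the SRO or from ATLAS, and the two helper functions are hypothetical.

```python
from collections import defaultdict

# Placeholder edges, invented for illustration only.
EXPLOITS = [("T1", "R1"), ("T2", "R1"), ("T3", "R2")]   # (technique, risk)
MITIGATES = [("M1", "T1"), ("M1", "T2"), ("M2", "T2")]  # (mitigation, technique)


def mitigations_by_technique(exploits, mitigates):
    """Map every technique to the set of mitigations that counter it."""
    table = {technique: set() for technique, _risk in exploits}
    for mitigation, technique in mitigates:
        table.setdefault(technique, set()).add(mitigation)
    return table


def coverage_gaps(exploits, mitigates):
    """Map each risk to the techniques exploiting it that have no mitigation at all."""
    table = mitigations_by_technique(exploits, mitigates)
    gaps = defaultdict(list)
    for technique, risk in exploits:
        if not table[technique]:
            gaps[risk].append(technique)
    return dict(gaps)


table = mitigations_by_technique(EXPLOITS, MITIGATES)
print(sorted(table["T2"]))                  # overlapping defenses on one technique
print(coverage_gaps(EXPLOITS, MITIGATES))   # risks still exposed through an uncovered technique
```

In this toy data, `T2` is covered by two mitigations while `T3` has none, so `R2` shows up as a gap.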

Now let's discuss why putting the LLM security risk ontology into a GPT is important. In this image, you can see how easily accessible and readable the ontology is inside the GPT, where users can ask a variety of questions. I also wanted to add that this research is so important in K-12 schools because it protects personal data and private information involving finances. The GPT makes the model more accessible by giving users a clear structure to understand LLM security without needing deep expertise, meaning anyone can use it and understand complex topics developed by researchers. It acts as a dynamic training and education layer, adapting explanations to each user's skill level and helping teams learn continuously. It allows natural language questioning

about the ontology, so users can explore risks, relationships, and mitigations simply by asking. It enables multiple algorithms to run over the ontology, supporting structured reasoning, automated classification, and impact analysis. It provides more precise and consistent outputs because the model is grounded in standardized concepts from the SRO. It improves the automation of security tasks, policies, and documentation, making workflows faster, more accurate, and easier to scale. And lastly, it future-proofs security by allowing quick updates as new risks and patterns emerge, keeping the model aligned with evolving threats. Here's a picture of how the GPT was created, further showing how anyone can take complex data and make it into an accessible resource for others. By providing a name, a simple description, and

instructions, as well as our ontology file and data, the GPT allows users to ask a diverse set of questions. Here are some basic questions that can be asked in our GPT. For instance, if you ask which ATLAS mitigations defend against the most techniques, you will receive the defenses that protect against many attack methods: strong mitigations that block multiple attacker techniques and are the most broadly useful safeguards. If you ask which ATLAS tactics have the most techniques pointing to them, the GPT will respond that some attacker goals can be reached by many different methods, state that tactics with many techniques are higher risk, and note that these tactics show where attackers focus their effort. Lastly, if asked, for the

exfiltration tactic, which techniques expose high confidentiality risks, the GPT provides techniques that steal sensitive data, methods targeting high-impact confidentiality weaknesses, and approaches that extract private or protected information. Let's look at the specific examples. In the first image, we can see that the GPT shows specific mitigations as well as how effective they are. AI telemetry logging, which tracks AI behavior for attacks and detects many malicious actions, has strong, broad security visibility and mitigates six different techniques. Restrict number of AI model queries also mitigates six techniques; it limits attacker attempts, reduces the chances of repeated probing, and stops many trial-and-error attacks. Passive AI output obfuscation mitigates three techniques; it hides sensitive

model details, prevents attackers from learning system internals, and protects against attacks that reveal system information. Generative AI guardrails mitigate two techniques; they block unsafe outputs, prevent harmful model responses, and help stop prompt-based misuse. We asked the GPT to analyze the ATLAS ontology and determine which tactics have the highest number of techniques pointing to them via the achieves-tactic relationship. According to the results, the tactic with the most techniques mapped to it is exfiltration, with five techniques, followed by impact with three techniques and execution with two. These results help us see which phases in the ATLAS framework have the densest technique coverage, highlighting areas with greater detail or complexity. It also provides insights into how these specific techniques can help mitigate

risks. We asked the GPT to analyze the ATLAS ontology and identify which exfiltration-related techniques target LLM risks rated as high confidentiality impact. The GPT returned three exfiltration techniques that all map to the same high-impact LLM risk, LLM02 information disclosure. The techniques are Infer Training Data Membership, Invert AI Model, and Extract AI Model. All three techniques attempt to expose sensitive model information, aligning them with the only exfiltration-linked LLM risk categorized as high confidentiality impact in the ontology. The next prompts show how the SRO GPT can move beyond simple queries and perform real analytical reasoning over the ATLAS ontology. The weighted greedy max coverage question helps us balance two

things: one, which mitigations are the most effective, and two, which are the easiest to implement, giving us a practical, prioritized mitigation plan. The function-seeded personalized PageRank lets us identify which techniques are structurally most likely to target high-confidentiality-impact functions, essentially highlighting the highest-risk attack paths. The smallest mitigation set question focuses on efficiency: what's the minimum number of mitigations that still covers the majority of weighted risk? This is especially useful for environments with constrained resources. And the centrality analysis question surfaces the risks that matter most, those that influence large portions of the risk graph and therefore deserve priority attention. A weighted greedy max coverage algorithm picks the most effective mitigations

first by balancing how much risk they reduce and how easy they are to implement. The algorithm finds the mitigations giving the biggest security benefit but also factors in how easy each mitigation is. The results show the best-value mitigations for protection versus effort: AI telemetry logging ranked highest overall, while generative AI guardrails ranked as the second-best choice. Personalized PageRank (PPR) highlights which attack techniques are most likely to target specific high-confidentiality functions by measuring their relative importance in the ontology. This means it shows the most likely attacks on sensitive functions. The GPT uses the ontology to rank techniques by the probability of being used and shows that direct prompt injection is the highest risk, which helps

prioritize defenses. Moreover, it demonstrates that several techniques share a similarly high likelihood of being used in an attack. In the next example, the GPT uses a smallest-set mitigation plan over the ontology, which finds the fewest mitigations that cover the largest amount of PR-weighted attack risk, adding one mitigation at a time to maximize the total risk reduction. The results illustrate that one mitigation covers about 42% of the PR risk, where PR stands for potential risk reduction. AI telemetry logging is the best first choice, and two mitigations can cover about 61% of the risk, with guardrails added as the second most helpful. Overall, more mitigations steadily increase total protection. Finally, in this instance, the GPT uses

centrality analysis to identify which risks are most connected in the ontology graph, meaning they influence or are influenced by other elements. The GPT results show that prompt injection is the most centrally connected risk, unbounded consumption is tied for top critical risk, and information disclosure as well as misinformation risks are highly connected. Central risks impact many parts of the system. Now, concluding it all, what does this all mean? Ontologies can help evaluate the risk of organizational LLM usage by creating a shared vocabulary and clearly mapping relationships between variables.
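The talk does not say which centrality measure the GPT applied, so the sketch below assumes the simplest one, degree centrality: a node's number of distinct neighbors divided by the number of other nodes. The graph uses placeholder names (`F1`, `R1`, and so on) invented for illustration, not data from the SRO.

```python
from collections import defaultdict

# Placeholder edges (subject, object property, object), invented for illustration.
EDGES = [
    ("F1", "impacts", "R1"),
    ("F2", "impacts", "R1"),
    ("T1", "exploits", "R1"),
    ("T2", "exploits", "R2"),
    ("M1", "mitigates", "R1"),
    ("M1", "mitigates", "R2"),
]
RISKS = {"R1", "R2"}


def degree_centrality(edges):
    """Degree centrality, treating every edge as undirected."""
    neighbors = defaultdict(set)
    for subject, _prop, obj in edges:
        neighbors[subject].add(obj)
        neighbors[obj].add(subject)
    n = len(neighbors)
    if n < 2:
        return {node: 0.0 for node in neighbors}
    return {node: len(adjacent) / (n - 1) for node, adjacent in neighbors.items()}


centrality = degree_centrality(EDGES)
# Rank only the risk nodes, most connected first (ties broken by name).
ranked_risks = sorted(RISKS, key=lambda risk: (-centrality[risk], risk))
print(ranked_risks)
```

Other measures, such as betweenness or eigenvector centrality, could rank the same risks differently, so the choice of measure is itself a modeling decision.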

An ontology-based GPT allows for various types of analyses, both basic and advanced, which provide insights about AI risk. By integrating our ontology into a GPT, the SRO GPT that Caroline just detailed, users can ask natural language questions that trigger graph-based analyses. Basic analyses include questions such as which ATLAS mitigations defend against the most techniques, or which tactics have the most techniques pointing to them, while advanced analyses use algorithms such as weighted greedy coverage, personalized PageRank, and centrality to reveal the most likely attack paths and high-impact mitigations. Future research includes adding more LLMs, expanding our ontology, focusing on integrity and availability, and examining domains beyond the K-12

educational landscape. Thank you.
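As a rough illustration of the weighted greedy coverage analysis mentioned in the talk, the sketch below picks mitigations one at a time, each time choosing the one whose newly covered risk weight multiplied by its ease score is largest. The scoring formula, the function name `weighted_greedy_cover`, and all of the data (`T1`, `M1`, the weights and ease scores) are assumptions made for the example; the talk does not specify how the SRO GPT combines the two factors.

```python
def weighted_greedy_cover(weights, covers, ease, budget):
    """Pick up to `budget` mitigations, maximizing newly covered weight * ease each step.

    weights: technique -> risk weight
    covers:  mitigation -> set of techniques it mitigates
    ease:    mitigation -> ease-of-implementation score (higher is easier)
    Returns the ordered plan and the fraction of total weight it covers.
    """
    covered, plan = set(), []
    remaining = set(covers)
    for _ in range(budget):
        best, best_gain = None, 0.0
        for mitigation in sorted(remaining):  # sorted for deterministic tie-breaking
            new_weight = sum(weights[t] for t in covers[mitigation] - covered)
            gain = new_weight * ease[mitigation]
            if gain > best_gain:
                best, best_gain = mitigation, gain
        if best is None:  # nothing left adds coverage
            break
        plan.append(best)
        covered |= covers[best]
        remaining.remove(best)
    fraction = sum(weights[t] for t in covered) / sum(weights.values())
    return plan, fraction


# Placeholder data, invented for illustration.
weights = {"T1": 10, "T2": 6, "T3": 4}
covers = {"M1": {"T1", "T2"}, "M2": {"T2", "T3"}, "M3": {"T3"}}
ease = {"M1": 2, "M2": 4, "M3": 5}

print(weighted_greedy_cover(weights, covers, ease, budget=1))
print(weighted_greedy_cover(weights, covers, ease, budget=2))
```

Capping the plan with `budget` also gives a simple version of the smallest-set question: increase the budget until the covered fraction passes the threshold you care about.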

So now I'm going to open the floor for any questions, and if you want, I can show you hands-on how we use WebProtégé. But first I'd like to see: are there any questions from the audience so far?

>> So when you guys are building the ontology in that tool, it seems like it's basically a relational database. Did you basically build it by hand? Do you see any opportunity to build it with... >> Yes. So yes, it's similar to a relational database, or what's called a graph database. There are many-to-many relationships that can exist, and that's why we use an ontology, which is similar to a knowledge graph. In terms of building it, yes, we built it manually to make sure we had high-fidelity data, but there are automated techniques to build ontologies. For example, just like Avery mentioned, a lot of

cybersecurity is done in spreadsheets, and in my experience down at the Philadelphia Navy Yard, security on ships is done in spreadsheets. There are ways to integrate the spreadsheets automatically into an ontology with some import tools. But yes, you can also use an LLM to create the ontology. When there are frameworks out there like ATLAS and OWASP, you can ask something like ChatGPT, "Hey, can you create this for me?" And for the GPT we're using, we use the paid version, not just the free version. Yes, is there a question? >> When I was creating the ontology manually in WebProtégé, I copied and pasted each tactic or technique from the website,

but I think an LLM could definitely be useful if you can just put the link in. Maybe it can get all the information you need, so it's more efficient. Are there other questions before we show you WebProtégé?

>> How are you all verifying that you're not getting hallucinations back from the LLM while you're injecting this graph? >> Yes, that's something we encountered when we first put the ontology into the GPT. We found that one way to mitigate it was to give it clear instructions when you set it up. We also learned to say in our prompts, "Only use the data that's in the source ontology." Sometimes we have to remind it again and again. You know AI: it thinks it's smart, but it's not always the smartest tool in the shed. But that's one way to mitigate it, and it is something we faced. Are there other questions about the GPT? I can quickly show you WebProtégé

if you'd like, with our models in there, to show how easy it is to create classes in there. I can show you that one.
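[Editor's sketch] Alongside the prompt instruction to use only the source ontology, one could also check an answer after the fact. The following is a hedged illustration, not something the speakers describe doing: the function name, the label set, and the claimed terms are all hypothetical.

```python
def ungrounded_terms(claimed_terms, ontology_labels):
    """Return the terms an answer mentions that are not labels in the ontology."""
    known = {label.casefold() for label in ontology_labels}
    return [term for term in claimed_terms if term.casefold() not in known]

# Placeholder labels standing in for the classes and individuals of a source ontology.
ontology_labels = {"Technique A", "Mitigation X", "Mitigation Y"}

# Placeholder list of names extracted from a model's answer.
claimed = ["Mitigation X", "Mitigation Z"]

missing = ungrounded_terms(claimed, ontology_labels)
print(missing)  # terms to double-check by hand
```

Anything returned by the check is a candidate hallucination to verify manually against the ontology.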

So as we discussed in the presentation, WebProtégé is an open source ontology editor. There's a desktop version you can download as a client. You can also use the web version, which is what we use to collaborate on this ontology data modeling project. So in WebProtégé, first you create a project, and in ontology modeling the first thing you want to do is create classes, which are categories of objects. And what I love about ontology modeling, because I've done it for the Navy and I've published on it before, is that once you create a core ontology, right away you see, like, hey, there are other classes I can insert here, there

are other connections I can make that are out there. And that's what I love about ontologies: it's like a flat database with many-to-many connections, and we can talk about other things we might... So these are our main classes. If I want to add a new class, can anybody think of another class, a category of objects, that might fit? I have one in mind, but I can also think of another. So one thing in mind that we don't have here, since we're really thinking about the security risk of LLMs, is the security settings of particular LLMs, such as, you know, allowing the data to be used to train the model. Can we limit the

number of queries that are done each day? So I can easily add that. Of course, I have to log in. My password is 1 2 3 4. Don't use that. Just kidding.

work I do. Okay, so we have classes. Again, if I want to add another class, one class, just thinking about this project: we don't have anything in our model right now about the specific security settings that one can select on an LLM, or that an IT admin or other administrator can implement on an LLM. So I can go here under Thing, which is how you model a whole world. I click on create, and now of course it won't let me, because I have it locked down, but hypothetically I would be able to add a new class. But it goes to show you classes and also properties. Again, these are object

properties, where we can link two classes together. So we have basic object properties here, but it's really easy to add more in here. And with ontology modeling, what's great about it is you work together, and you as a team come up with how you want to label the data, how you want to label the relationships, and how it speaks to your domain. So what we use in the K-12 domain may be totally different from how you label or describe things in your domain, whether it's the private sector or the government sector. So we can use object properties for that, and data properties are like attributes of particular objects. So again, it's easy to create new data properties or

new data attributes. And for our scoring system, I forgot to mention that our scoring system is out of 10. So for example, for the confidentiality impact score, the score is out of 10, but again, in your teams you can come up with whatever scores and measures you want. But adding the data properties and the numeric values allows you to do quantitative analysis over the ontology, and you can add many, many more data properties and also, of course, many more object properties as you start thinking of other things to link this to. So I'm going to go back to classes. So of course you could add security settings here. Perhaps you add people, like people in different

roles, if you're doing role-based access management, or actually pick particular names of people, because we have many different people that work at a K-12 school, including financial accounting, facilities, security, you know, students, teachers, nurses, and many more. So you can add them into the mix to analyze the risk of particular types of users. So after you create the ontology, which is always flexible so you can keep adding on to it, you can embed it into a GPT. Has anyone created a GPT before in ChatGPT? Yeah, it's really straightforward. We did it in our AI class, where we created different GPTs. But in the GPT, one of the things you can do is you have a source

file, kind of like the main source you want the GPT to reason over or calculate over. So that's where you upload it. We downloaded our ontology from WebProtégé. And from there we can ask questions. If I'm working on this like we did as a team, or if someone's new to this ontology, there are simple questions like: can you list the classes, object properties, and data properties of the source ontology? And this goes back to where I had to say "source ontology" so it wouldn't just start making up stuff. And it lists the classes that are in there, and the object properties and data properties. And then one could easily ask, hey, explain what an ATLAS tactic is or

an ATLAS technique is. But from there you can ask some of the questions that we asked in our presentation, such as: which ATLAS mitigations defend against the most ATLAS offensive techniques? State the full names of the mitigations. But one thing I love is the meta level. So the meta level is when the ontology is spelled out, as Andrew was saying, with individuals, and becomes a knowledge graph, with specific entities in a domain. And what we can ask is: given the source focused on LLM risks and mitigations, what types of graph algorithms could be run over the source? Describe each algorithm in two bullet points. So there are many different algorithms out there that are used to analyze graphs, such as: what is the

most critical component in this particular type of graph? What is the shortest path from one node to another node in this graph? So many of these algorithms are really advanced, but now, with that ontology in a GPT, we can find so many different types of advanced algorithms that will help us gain insights, look for patterns, or quantify the risk in our source or in the domain that we're modeling. So there are many types of algorithms that can be used, and again, this is relevant to the ontology model we did for the Navy. We created a graph of Navy ship systems and analyzed risk over it, and we used many similar types of algorithms to calculate the risk on Navy

ships. Now we're applying similar techniques for the GPT and AI risk on a K-12 system. Now I'd like to hand it off to you. Are there any questions or comments you have about the research?
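[Editor's sketch] The kinds of graph questions described above (which mitigations defend against the most techniques, which node is most critical, what is the shortest path between two nodes) can be sketched over a toy graph. This is an illustration under stated assumptions, not the speakers' ontology or the GPT's method: the triples, the property names `defendsAgainst` and `targets`, and the use of degree centrality as the measure of "most critical" are all hypothetical choices.

```python
from collections import Counter, defaultdict, deque

# Hypothetical (subject, property, object) triples with placeholder names.
TRIPLES = [
    ("Mitigation X", "defendsAgainst", "Technique A"),
    ("Mitigation X", "defendsAgainst", "Technique B"),
    ("Mitigation Y", "defendsAgainst", "Technique B"),
    ("Technique A", "targets", "Asset 1"),
    ("Technique B", "targets", "Asset 2"),
]

def mitigation_coverage(triples):
    """Count how many techniques each mitigation defends against, highest first."""
    counts = Counter(s for s, p, _ in triples if p == "defendsAgainst")
    return counts.most_common()

def build_graph(triples):
    """Build an undirected adjacency map, ignoring property names."""
    graph = defaultdict(set)
    for s, _, o in triples:
        graph[s].add(o)
        graph[o].add(s)
    return graph

def shortest_path(graph, start, goal):
    """Breadth-first search; returns a shortest path as a list of nodes, or None."""
    queue = deque([[start]])
    seen = {start}
    while queue:
        path = queue.popleft()
        node = path[-1]
        if node == goal:
            return path
        for neighbor in sorted(graph[node]):
            if neighbor not in seen:
                seen.add(neighbor)
                queue.append(path + [neighbor])
    return None

def degree_centrality(graph):
    """Fraction of the other nodes each node is directly linked to."""
    n = len(graph)
    return {node: len(neighbors) / (n - 1) for node, neighbors in graph.items()}

coverage = mitigation_coverage(TRIPLES)
graph = build_graph(TRIPLES)
path = shortest_path(graph, "Asset 1", "Asset 2")
centrality = degree_centrality(graph)
most_central = max(centrality, key=centrality.get)

print(coverage)
print(path)
print(most_central)
```

Numeric data properties such as an impact score could be attached to nodes in the same way and combined with these results for a quantitative risk estimate.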

All right. Well, thank you so much for attending our presentation. Round of applause for Avery.