From Pipelines To Problems: My Early DevOps Lessons In Security Culture - Prevail Uzodinma

Hello everyone, my name is Prevail Uzodinma, and today I want to share my story of how a simple student DevOps project turned into my biggest security wake-up call. I call it my "DevSecOops" moment, because that is exactly what it was. Now, a little about me. I'm a software engineer, but I'm also doing my master's in software engineering at the University of Greater Manchester, and I'm building my career in DevOps and cloud engineering. Now, I love automation. You know, the idea of building things that run themselves. But I learned the hard way that automation without security is the grand recipe for automated chaos. Now, I have a question for everyone in the room. You, my wonderful audience,

maybe you're an engineer or maybe you're not, but you've written something code-related. Have you ever had that moment where you've written this piece of code and it just refused to run, and after three days of debugging and crashing out in front of your laptop, it finally runs and you go, "Nobody touch anything, cuz now that it's working, I just want it to stay as it is"? Now, that is exactly what happened to me during one of my master's modules, called DevOps. At the end of the module we were required to deliver a project that proved our understanding of continuous integration and continuous deployment. So basically, it didn't matter what we

built. If the lecturer did not see automation in action, no chance. You were not passing that module. What he wanted was that every time we made a push to the codebase on GitHub, it would automatically trigger a build, a test, and a deploy without any manual intervention. So this was our setup. We built a product called Expense Pal. It was a simple web-based expense tracker for students. For our front end, we used React. For the back end, we used Node.js and Express. But for our CI/CD, we decided to use Jenkins instead of GitHub Actions because we wanted a bit more control over the deployment process. We also planned a separation of concerns by running two

different pipelines, one for the back end and one for the front end, so it would be easy to identify where issues came from. And then for the infrastructure, we went with AWS EC2. Now, the application wasn't the main focus, you know, it was the deployment. So we just hurried through the development so that we could give more time to bringing the automation to life. We did this as a team, but I was responsible for the back end and the CI/CD part of things. Now, this is where it gets interesting. Are you ready? After seven long days of failed builds, as you can see from my snapshot, the reds and the grays, seven long days of failed builds, broken

stages, SSH issues, permission issues, after seven long days of questioning all of my life's choices, ladies and gentlemen, my pipeline finally ran. I had a green. And at this point, this was my "nobody touch anything" moment, cuz we have something to deliver. We have something for submission. It's working. Just leave it alone. Victory, right? Well, not quite. A few days after submission, I was reviewing our repository and I noticed something that made my heart freeze. Our AWS credentials were sitting pretty in our Jenkinsfile, pushed to GitHub for the whole world to see. And to top that off, we had Jenkins running with admin-level permissions on AWS. So I

basically handed the keys to my kingdom to all of the internet to do as they please. So after reviewing this and discussing it with my lecturer, I realized that even though this was a student project, in the real world it would have been a major disaster that would trigger immediate incident response. I'm talking alerts firing, dashboards turning red, engineers running everywhere. But the question is why? Why was this such a big deal? And I'll tell you. You see, AWS access keys are not just passwords. They're actually master keys. Anyone who has an admin-level key like ours can create, modify, delete, and drain every AWS resource in your account. Now, attackers don't just stumble on these keys by

accident. They actually have bots that scan GitHub 24/7, and keys can be exploited within minutes of being pushed to GitHub. For companies, this kind of exposure can mean millions in unexpected charges and damages. And I'll give you an example. In 2016, Uber accidentally uploaded their AWS credentials to their private GitHub repo. Hackers got hold of them, exploited their system, and stole the personal data of 57 million users and drivers. Uber paid $100,000 in ransom to have the data deleted. However, they ended up paying $148 million in a settlement for failing to disclose that breach. So you see, this is a real-world implication of committing your AWS access keys on

GitHub. And that's the tricky thing about Git history, and we'll get to that. You see, even if you delete the file, say, "Oops, I committed the key and I deleted the file," Git actually keeps a record of everything in its history. So once your credentials enter the repo, even for a second, Git keeps them forever unless the history is scrubbed. Attackers know this, and so they scan even your old Git commits. So the question now would be, how were we able to fix this? Because saying, "Oh, I made the commit, I deleted it," is not a fix. So what is the fix? How do we deal with this kind of issue? And you see, this has been a top security issue

for the past five years in industry. So how did we fix it? Now, remember, back to my story. It was a student project, quite all right, but we decided to mimic a real-world incident for learning purposes. How would we clean up in the real world? So first, we removed all our hard-coded secrets. We deleted them and moved them to environment variables so that they're safe and out of sight. And then we replaced the overly permissive admin access that Jenkins had with IAM roles that followed the principle of least privilege, so that it only has access to what it requires and nothing more. And then we rotated all the

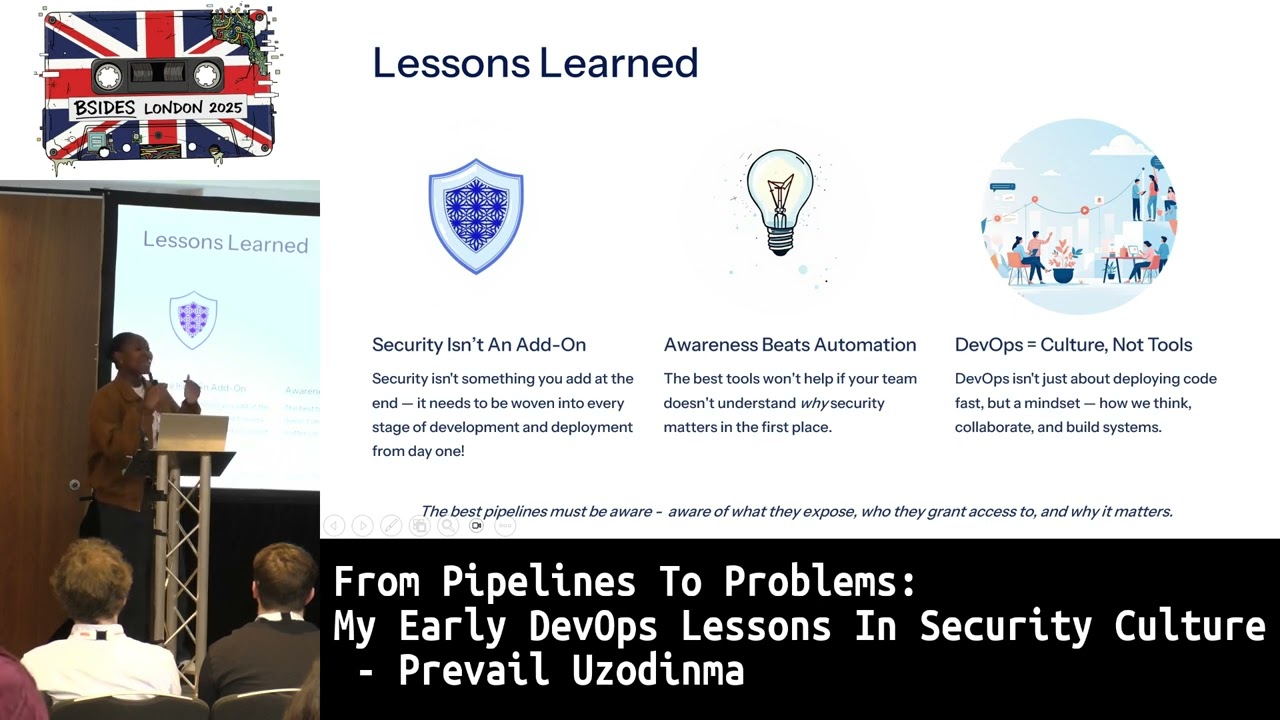

exposed keys. Meaning we discarded the old ones and created new ones, so that even if anyone got hold of the leaked keys from our old Git commit history, they would be useless, cuz you can't access anything with them anymore. And then finally, to make sure that this mistake does not repeat itself, we integrated a secret scanning tool called GIS so that we can detect any secrets before they are even committed. Now, this experience taught me three very important lessons that changed my perspective on DevOps and security. First off, I learned that security is not an add-on. It's not something you sprinkle on at the end. It's actually supposed to be baked into every

decision from the moment you start designing the system. Security comes before code is written. Security comes before the pipelines are designed. Security should start before the infrastructure is set up. Secondly, I learned that awareness beats automation. It doesn't matter if you have the best tools, the Docker, the Kubernetes, the Terraform, the pipelines. If your team doesn't understand why security matters in the first place, these tools can't help you. Before my mistake, I had AWS, I had Jenkins, I had the pipelines, I had all of the fancy automation. We even had peer reviews before merging, but we were reviewing code. We were not reviewing for risks

because we didn't know it was something to look out for, or what to look out for. And that is why awareness is important, because it helps you understand why. These tools can't protect you if you're not aware of what you're protecting yourself against. And then finally, I learned that DevOps is about culture. It's not about the tools. It's not about the deployments and the pipelines. It's a way of doing things. And when I say way of doing things, I mean it's how teams think. It's how they collaborate. It's how they approach solving problems together. You see, I started this project, you know, we started the module, we're doing Expense Pal. We're doing an expense

tracker for students. But in the end, the project built me, by turning me into a more security-conscious software and cloud engineer. Now I'm passing the baton to you. Before I wrap up, I want to leave you with a few practical things that you can do starting today, whether you're a student, a junior engineer, or you've been in the industry for a long, long time. First off, scan your repositories. And not just the current ones, the old ones too, cuz we've learned that secrets can hide in your old commits. And you know, the things that we forget could be the most dangerous ones. Secondly, review your IAM permissions. If you have admin access

lying around, review it and replace it with IAM roles that follow the principle of least privilege. And then audit your Git history. You see, we have tools like TruffleHog and git-secrets for a reason. They can help you spot those secrets that you won't detect manually. And then finally, automate the security checks. How about integrating secret scanning into your CI/CD pipelines, so you're not just relying on memory or luck? If you take the time to do these things, future you will be grateful that you did. And you know why? It's because security is not an action inspired by fear. On the contrary, security is not

fear. Security is care. It's care for the systems that we build, care for the people that use these systems, and care for the businesses that rely on us to build these systems. My name is Prevail. I want to say a big thank you to BSides for the opportunity to have my first talk. A big thank you to my mentor Jim for the counsel and guidance to this very point. And to my brother, who's the one that made me aware of the opportunity in the first place and encouraged me to share my story. And to you, my wonderful audience, thank you for listening. I hope my mistakes help you avoid yours. Thank you. [applause]
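
[Editor's sketch, not part of the talk] Two of the fixes described in the talk, reading credentials from environment variables and scanning text for leaked keys, could look roughly like the Python below. This is a minimal illustration under stated assumptions, not the team's actual setup: the function names `find_access_key_ids` and `load_aws_credentials` are hypothetical, the pattern only covers the common `AKIA`/`ASIA` access key ID shape, and a dedicated scanner such as TruffleHog or git-secrets would check far more.

```python
import os
import re

# Assumption: AWS access key IDs are 20 characters, here a 4-letter prefix
# (AKIA for long-term keys, ASIA for temporary ones) plus 16 uppercase
# letters or digits. Other prefixes exist and are not covered.
ACCESS_KEY_ID = re.compile(r"\b(?:AKIA|ASIA)[A-Z0-9]{16}\b")


def find_access_key_ids(text):
    """Return (line_number, match) pairs for strings shaped like an access key ID."""
    hits = []
    for number, line in enumerate(text.splitlines(), start=1):
        for match in ACCESS_KEY_ID.finditer(line):
            hits.append((number, match.group(0)))
    return hits


def load_aws_credentials(env=os.environ):
    """Read credentials from the environment instead of a committed file."""
    key_id = env.get("AWS_ACCESS_KEY_ID")
    secret = env.get("AWS_SECRET_ACCESS_KEY")
    if not key_id or not secret:
        raise RuntimeError("AWS credentials are not set in the environment")
    return key_id, secret


if __name__ == "__main__":
    # AKIAIOSFODNN7EXAMPLE is the placeholder key ID used in AWS documentation.
    sample = "environment {\n  AWS_ACCESS_KEY_ID = 'AKIAIOSFODNN7EXAMPLE'\n}"
    for number, key in find_access_key_ids(sample):
        print(f"line {number}: possible access key ID {key}")
```

To cover old commits as well as current files, the same function could be run over the text produced by `git log -p --all`. The check also only finds key IDs that match this one pattern, so it complements rotating exposed keys and does not replace it.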

>> That was an absolutely outstanding first talk. Well done. Really well done. Do you want to take Q&A or >> Yes, please. If anyone has questions, I'm going to take them. >> I can't see the whole room because of the pillar in the way. Is someone down there? Two seconds.

Hello. All right. Um, I was curious if your lecturer spotted the credentials in the repo. >> Well, he didn't. I did. And then I took it up with him in class during our review week, and then he told me about the dangers of doing that. Exactly. [laughter] So yeah, because at the beginning of the module, he made it clear that security wasn't exactly a prerequisite. He just wanted to be sure that we understood the concept of continuous integration and continuous deployment. But it was during the review week, you know, when the adrenaline of submission was over and you're now calm, that you realize, oh my goodness. Yeah. So thank you so much for that question.

>> Anybody else? [snorts] Oh, >> Thank you for the wonderful talk. >> Okay. >> So, um, I want to ask: in what parts of the CI/CD pipeline do you think security should be most implemented or taken note of? >> Well, thank you so much for that question. So, in my experience, I would say security should start from the design, you know, when your team is gathered together designing how you want to move things from the build to the test to the deployment. So security should start even while development is happening, before deployment comes in. That's in my experience, and I know that there are DevSecOps practices that align with

security by design, so it should come in when you're designing. Yeah. And then, if you could, integrate security peer reviews, so that during the development stage, when there are peer reviews before merging, there can be security peer reviews. I think that's a thing as well, security peer reviews, where you are reviewing to check for loopholes and issues before the code is even merged into the codebase. Thank you. Thank you very much. >> Okay, thank