Exposed Secrets — How Public Git Repositories and Docker Images Expose Millions of Secrets

We're going to kick off today by learning about exposed secrets in GitHub from Mackenzie Jackson, who is a developer advocate. I think I know the company right now, don't I? At... You promised you'd get it right. I'd get it right. So, I'm going to read it, cuz it's like you invented it 2 minutes ago. At GitGuardian. Thank you. Thank you very much. Yeah, so hello everyone. Welcome to day two. Yesterday was awesome for those that were here. So, it's great to be presenting back in front of everyone. I think all the presentations I've done over the

last 2 years have been in my bedroom. So, it's great to see actual people. It's great to be wearing pants. So, today we're going to be talking about exposed secrets, both publicly, in places like public Git repositories and public areas like Docker, and also privately, and what that all means. We're going to start off with a quick rundown about secrets, what they are, how they get exposed. We'll talk about some breaches. We'll leak some credentials live and see what happens. And then we'll run through some research that we've done that will quantify the problem. So, just to start,

what are secrets? I'm sure most of you understand this, but I want to get everyone onto the same page, so we'll quickly run through what secrets are. They're digital authentication credentials. They are essentially the crown jewels of organizations. These are our API keys, our security certificates, our credential pairs, our private keys. These are what authenticate us and our systems with everything that we're using. They provide access to the innermost workings of our organizations, and they're exactly what attackers and adversaries are after, because they allow them to move laterally and elevate privileges. So, why do these secrets exist? Well, we've had a big shift in how we build software, right? No longer are we

building monolithic applications. We're building these distributed systems filled with microservices. And because of this, developers have huge amounts of sensitive information that we need to keep track of. They need access to these secrets to be able to test applications, to be able to import them. The operations team needs them. They need to be used to deploy. So, we need to distribute these secrets really, really widely, but we also need to protect them, right? So, this creates an immediate conflict in what we need to do. Now, because they're made to be used programmatically, they often end up in source code. Programmatically means that they're meant to be used not by

people, but by systems, by our applications. So, this means that they are great candidates for getting into our source code, and source code is a crazy leaky asset, which we'll talk about later. And then everyone's stressed. Software developers are under pressure. Security teams are under pressure. Operations teams are under pressure. And this creates a perfect storm in which these secrets, the crown jewels of our organizations, end up in the public sphere. So, let's do a quick example. We're building an application. If we want to do credit card processing, if we want to do authentication, then we're probably not going to build that ourselves unless we're mad. We'll use different services. We'll use Okta.

We'll use Stripe. We'll use Algolia for searches. So, quickly our application is a collection of different services. All of these services leverage secrets, right? Then we need to have some infrastructure. We need to host our application. So, now we have some cloud infrastructure. We have testing tools. We have VCS. We have all these different collections of services in our infrastructure, all leveraging secrets. They all need to be able to talk to each other. Then when we finally launch our application, the pesky marketing team, the sales teams, they want integrations into Salesforce and Zendesk. And so, we need to have more secrets to be able to connect to them. And this doesn't even

include all of the microservices that we've created that also leverage secrets. So, in this very simplified example, we can see that we have hundreds of different services all leveraging secrets. So, this is how we end up with so many secrets in our infrastructure. And these get spread largely through our source code. So, imagine when source code goes into a Git repository: if it has secrets in there, that source code with those secrets is then distributed and sprawled across all of our developers' machines. It's probably in our messaging systems, in our wikis, in our internal documentation. It's probably been backed up into different systems. And if it gets compiled, it's probably inside our running applications. Maybe

it's inside our Docker images. And the biggest thing before I kind of stop talking so much about this is that we have no idea where these secrets are. They started off in our nice secrets management system. We shared them with a developer doing their job and now they're everywhere. And our attack surface that attackers can target to try and find them is huge, but we don't know how big it is because we have no visibility over where they are. All right. So, this is what we call secret sprawl. So, I want to do just a quick demo that I'll get back to at the end. Live demos, what can go wrong? Okay. So, here I have an AWS key. So,

everyone quick, take a picture. You can do some crypto mining. So, this is not a real AWS key. It's a canary token. And I'm going to do something that we should never do: I'm going to push this into a public Git repository. And over here we have our canary token set up. And this is going to let me know every time someone tries to access this AWS credential. So, at the end of the talk, I'm going to come back and we're going to see if, by the time I've finished talking, someone's tried to access that AWS credential. And this is a free service, Canarytokens. I think they're a sponsor too. So, you can

recreate this experiment. You don't need to trust me. All right. Back to the presentation. So, let's talk about some cases where attackers have actually used secrets that have been leaked and how they've used them. So, Uber has two cases, public and private. First, the public incident. This happened when an employee of Uber pushed code to the wrong repository. They pushed code to their personal public Git repository. So, what's important is that this is outside of Uber's control. Uber has no idea that this has happened. Attackers were monitoring the employees of Uber, because this actually happens quite frequently. And they found credentials for an Amazon S3 bucket. They were able to access that, get sensitive data, and move forward. The second case

happened on a private repository. So, I hear a lot that, oh, we don't need to worry too much about secrets. All our Git repositories are private. We don't do any open source. Well, here there were secrets inside Uber's private code repository, but because of poor password hygiene by some of the developers, attackers were able to access that. And again, an Amazon S3 bucket was there, and they were able to access sensitive information again. So, the next one, which is a little bit more complicated but definitely one of my favorite examples, is that of Codecov. So, last year Codecov had a pretty significant supply chain attack. So, if you don't know what Codecov is, Codecov is a code

coverage tool. It sits in your CI/CD pipeline and it basically gives you a report of how much of your application is being tested. It's a really cool tool. It does a very specific job. And at the time of the breach, they had about 20,000 customers, including some big ones, which I'll come back to. So, what happened? On the official Codecov Docker image, there was a plain text secret, which provided access to their source code. So, the attackers were able to download this Docker image, extract the secret, and then they had access to Codecov's source code. And in a Bash uploader script, so just a pretty

ugly file that just carries out a set of instructions, they injected one line of malicious code, which easily would have been ignored. And that malicious code did one thing. It said, "Every time one of the 20,000 customers runs Codecov, we're going to take all the environment variables. So, these are all the secrets that that application is using to test itself. So, it's going to need some access to databases. It's going to need access to services. And we're going to take all those environment variables and we're going to send them to the attacker." So, every time someone ran Codecov, the attacker got all the environment variables. Now, hopefully you're using different credentials, different secrets to test applications than you are in

production. Hopefully. But some credentials you use constantly. And one of them is your Git credentials. So, Codecov had access to the GitHub tokens if it's running on GitHub, or the Git credentials that give access to those repositories. So, then the attackers were able to move from Codecov into the private code repositories of the victims. Those victims were HashiCorp, Twilio, monday.com, Rapid7. These attackers were able to access the private source code of all of those companies. And here's what's crazy: all of those companies I just named, which have great security posture and are fantastic companies, all had secrets in their private code repositories. Now, if HashiCorp, the company that created the term secret

sprawl, that builds one of the best secrets managers out there, has secrets inside their code repositories, cuz they did, then I'm willing to bet anything that you all have secrets, and we all have secrets, in our code repositories. And we all make mistakes, right? It happens. I was making a video about what happens when you leak credentials and accidentally leaked a real credential whilst making the video. It happens to all of us. So, this is a great example because we've moved from Docker into the version control system and then we've leapfrogged into other companies. So, the other thing to talk about is that private source code doesn't always stay that private, right?

The mechanisms that we use in version control systems allow it to be widely distributed. I'm never advocating against that, because we need that to be able to work efficiently. But it does mean that it's not very secure and it's a leaky asset. And we've seen a particularly large trend of, let's say, companies involuntarily open-sourcing their source code. So, we've seen that recently with a few. One of them is Twitch. So, last year, I think in October, Twitch's source code was leaked online. How this happened was there was a misconfiguration in their servers that allowed remote access to the Git repositories. It wasn't there for very long, but in that brief window, someone

found it, took all of their repositories, about 250 GB of data, 6,000 repositories, I think, and then they published them online, involuntarily open-sourced them. We scanned all those repositories and we found thousands of secrets in there. Now, the headlines following on from that were all about how the Twitch streamers' income was leaked. But we found 194 AWS keys that no one was talking about. We also found 69 Twilio keys and 68 Google keys. Now, this actually seems huge, 6,600 secrets, bold letters, and I made some clickbaity titles in articles about that, too. But the reality is that that's not that unusual. That's about what we would expect. Actually, it's probably a little bit

better than what we'd expect. And in my opinion, the adversaries in this case did Twitch a huge favor by publishing it online, because Twitch then knew they had a problem. They could rotate all their secrets quickly and mitigate that risk, which is why I don't think we saw any massive high-profile breaches following on from that. Had the adversary not published it online, had the adversary instead looked for secrets himself and sold them, then that could have been a very different story. Now, the other one to talk about is Samsung, Nvidia, Microsoft. These were all breached and had their source code published this year by the Lapsus$ group. So, Lapsus$, we later found out, was

a band of teenagers, and they were ransoming companies, threatening to release private source code if they didn't pay the ransom. Now, we scanned these again for secrets and we did find thousands of secrets. Because they had significant warning, a lot of them were revoked, some of them weren't, but some of them can't be revoked, like the Nvidia signing keys that were inside their code repositories. These were used to sign malware, and we know that they came from the Nvidia breach. So, then you've got to ask, how did a group of teenagers breach the private source code of these really high-profile, fantastic companies? And well, the answer is that they were

recruiting insiders. So, here we have a message from the Telegram channel that says, "We recruit insiders, employees, insiders at the following." They list a bunch of companies. So, you think about everyone that you want to have access to your secrets, right? You've probably got a secrets management system. It's probably tightly controlled and your secrets are highly encrypted. And now think about your Git repository. So, if those secrets are in your Git repositories, think about everyone that has access to them. Your interns, your developers, your engineers. How many of them have had a bad day? How many of them, if given the chance to make a couple of grand by giving someone access to their

Git repositories, would do that? And as we see, quite a lot. So, private source code isn't always private. So, we need to have this posture when it comes to source code that yes, we need to protect our open-source stuff. Yes, we need to make sure we're not leaking stuff publicly, but it's not good enough. We also need to make sure that our private source code is protected as well and that we don't have sensitive information in there. So, this brings me to the next part of the presentation. I want to talk about a report that GitGuardian does every year called the State of Secrets Sprawl report. So, this is a

report where we look at the secrets that have been leaked publicly, so in places like github.com or on Docker, so Docker Hub. And then we also look at the secrets that have been leaked privately. So, just to give you an idea, GitGuardian scans every single public commit made to GitHub. So, last year we scanned a billion commits. If you've pushed something to github.com, then we would have scanned it. Now, how do we do that? Well, at the start of this presentation, I pushed an AWS key, and 5 minutes after I pushed that key, it was broadcast on GitHub's APIs. Anyone can monitor it. There's a couple of events to look out

for, the push event and the public event. Public is when a private repository is made public. Push is when code is pushed. So, anyone can monitor it. GitGuardian can monitor it, scan it, and look for sensitive information, but so can adversaries. So, we did that. We found a lot of secrets, a huge amount of secrets. I'm about to show you a scary slide next. And we published this in a report. So, how many secrets? In 2021, we discovered 6 million credentials that were leaked on github.com. That is a huge amount of secrets. So, this was about a 2x increase from last year. Now, if you looked at last year's report, we said that we found 2 million

credentials. This year we're saying we found 6 million. And if you're good at math, you'll know that 2 times 2 doesn't equal 6. But the reason why we say this is because the amount of code that was pushed to GitHub increased, plus the amount of detection that we covered publicly also increased. So, in a fair comparison, we think that probably twice as many credentials were published last year than the year before, which is crazy. 56 million users pushed to GitHub, so that's different than GitHub's official numbers. That's because not all of their users pushed code last year, but 56 million of them did. So, we're looking at huge

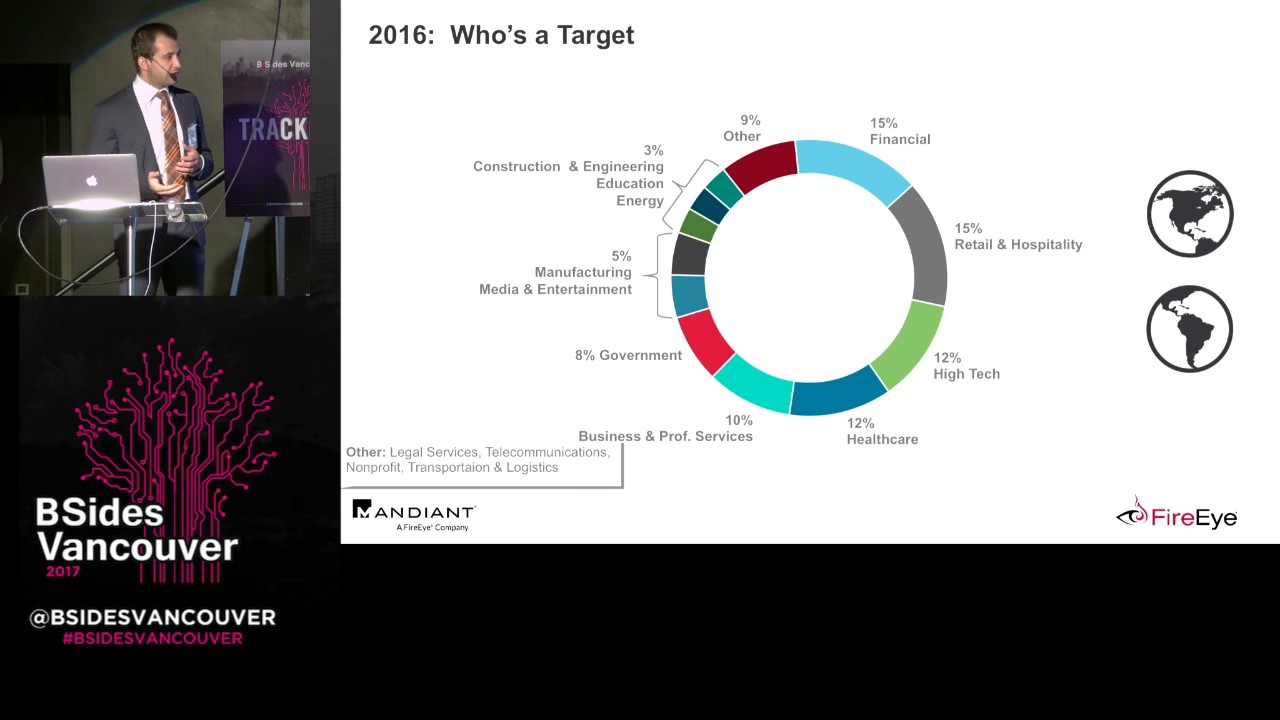

numbers of information here. And attackers know this as well, so they're also monitoring this. So, what are the types of secrets that we found? Well, we found some data storage keys. Those were about 21%. The other category, which is the largest, is the ones where we don't know what they do. So, they might be private keys or security certificates. We can't tell you what they do. 15% of the keys were for cloud providers. You don't need to be very imaginative to know what to do with a cloud provider key. You can take some pretty cool open-source tools like Pacu and exploit these keys, change privileges, see what you can do.
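
To make the public monitoring described a little earlier more concrete, here is a minimal sketch of how anyone could filter GitHub's public event feed for the two event types mentioned in the talk. It assumes the public events endpoint at `https://api.github.com/events` and the event type names `PushEvent` and `PublicEvent`; the function names and the sample data are hypothetical, and this is not a description of how GitGuardian's own pipeline works.

```python
import json
import urllib.request

# The two event types named in the talk: code being pushed, and a private
# repository being made public.
WATCHED_TYPES = {"PushEvent", "PublicEvent"}


def fetch_public_events(url="https://api.github.com/events"):
    """Fetch one page of recent public events (unauthenticated, rate-limited)."""
    request = urllib.request.Request(
        url, headers={"Accept": "application/vnd.github+json"}
    )
    with urllib.request.urlopen(request, timeout=10) as response:
        return json.load(response)


def interesting_events(events):
    """Keep only the events that mean code has just become publicly visible."""
    return [event for event in events if event.get("type") in WATCHED_TYPES]


# Hypothetical sample shaped like entries in the public feed, so the filter
# can be tried without any network access.
SAMPLE_EVENTS = [
    {"type": "WatchEvent", "repo": {"name": "example/one"}},
    {"type": "PushEvent", "repo": {"name": "example/two"}},
    {"type": "PublicEvent", "repo": {"name": "example/three"}},
]

REPOS_TO_SCAN = [event["repo"]["name"] for event in interesting_events(SAMPLE_EVENTS)]
```

In a real monitor, the output of `fetch_public_events()` would be passed to `interesting_events()` on a polling loop, and each matching repository would then be handed to a scanner. The point is only that nothing here requires special access, which is why adversaries can do the same thing.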

Some great tools out there. Messaging systems were 8.5%. Now, messaging systems are one of my favorites because they're everywhere. People don't seem to control them too much. They hand them out willy-nilly. But what you can do with a messaging system key is, if it's a Slack key, then you can use it to post internal phishing campaigns. So, if you get an internal message from Slack to say, "Hey, change your password," you're much likelier to trust that than a Nigerian prince who's just emailed you. All right. So, where else do we find secrets? Well, we find secrets everywhere. We found 500 commit messages that had the GitHub token in the commit message. So, if you don't understand

that, then that's like putting your password in the subject line of an email. But this is just showing that people don't fully understand the technology. And so, this is a problem. So, you might have employees that may have lied about their Git abilities on their resumes that are pushing their tokens through messages because they're not understanding how that all works. Now, there are some benefits that we've seen. For instance, with AWS keys we've seen a decrease. This is because AWS is doing some great work for awareness, and they're also looking for keys themselves on GitHub, and they're actually sometimes auto-revoking those keys when they're found. So, we're seeing some good

movements with AWS. This is great because it's showing that we can buck the trend. Still a lot of keys on there, but we can buck the trend. Unfortunately, this is pretty much where those positive trends stop. And also, we're getting really wide; we're getting many more services. So, PlanetScale and Supabase, cloud infrastructure startups. PlanetScale started around about mid last year, but we've already seen huge amounts of their secrets being leaked. So, this means that our detection needs to be way ahead of the curve for prevention. So, Docker images. Docker Hub is the largest place to store your Docker images,

which is basically just a way to run an application. We scanned 10,000 Docker images. We found that around about 5% of them, 4.6 if you're being pedantic, had at least one secret inside them. This is huge. The secrets were a little bit different. They're much more internal infrastructure. We found a lot of GitHub tokens. But 5% of Docker images containing a secret is massive. That means you only need to download like 20 to 25 Docker images to find something sensitive. We scanned 10,000, we found 4,000 secrets. 1,200 of them were unique. So, some troubling concerns there with Docker and people not understanding how those containers work. And if you don't understand Docker, it's

it can kind of be a little bit like Git. You have layers. We see a lot of instances where people are adding a secret into their Docker image, or adding it in their Dockerfile, and then removing it later, but because it's built up in layers, those previous layers that had your secret in them are actually still visible if you know what to look for. Now, the other one is private repositories. So, this one's a little bit more tricky. So, we published the results that we have from GitGuardian's internal customers. So, the average enterprise customer has 400 developers. So, we took that as a baseline and averaged out our results.
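
Going back to the point about Docker layers a moment ago, a toy model may help show why removing a secret in a later step does not remove it from the image. This is a deliberately simplified sketch: layers are modeled as plain Python dictionaries rather than the filesystem archives real images use, and the file paths, token value, and function names are all hypothetical.

```python
def final_filesystem(layers):
    """Apply layers in order, the way a running container sees the result."""
    files = {}
    for layer in layers:
        files.update(layer.get("add", {}))
        for path in layer.get("remove", []):
            files.pop(path, None)
    return files


def secrets_in_history(layers, marker):
    """Return (layer index, path) for every layer that ever added the marker."""
    return [
        (index, path)
        for index, layer in enumerate(layers)
        for path, content in layer.get("add", {}).items()
        if marker in content
    ]


# Hypothetical build: a secret file is added in one step and deleted in the next.
LAYERS = [
    {"add": {"/app/main.py": "print('hello')"}},
    {"add": {"/app/.env": "API_TOKEN=hypothetical-token-123"}},
    {"remove": ["/app/.env"]},
]

VISIBLE_AT_RUNTIME = final_filesystem(LAYERS)
STILL_IN_LAYERS = secrets_in_history(LAYERS, "API_TOKEN")
```

Here the running container no longer contains `/app/.env`, yet the layer that added it is still part of the image, so anyone who inspects the layers one by one can recover the token. That is the same shape of problem as a credential that was deleted from a file but still lives in Git history.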

So, in a typical company with 400 developers, we found around about 1,000 unique secrets, each occurring about 13 times. So, around about 13,000 secrets we would find in the private repositories of an average company with 400 developers. Now, taking the industry standard of one AppSec engineer per 100 developers, these companies would have four AppSec engineers. So, if we divide the 13,000 incidences of secrets amongst these four AppSec engineers, each of them would have to investigate, communicate with the developers, remediate, revoke, and republish new credentials 3,400 times in a year. It's completely unachievable. So, you do the next best thing and you just ignore the problem. But if we did this 10 secrets a day for

the whole year, and we didn't take holidays, it would still take the entire year just to remediate that. And I don't know about you, but I don't know many AppSec engineers that have heaps of free time and are looking for extra work to do. So, this is why we need to create tools, create systems, create awareness for it. It's not good enough to identify the secrets; we also have to stop the bleeding. We need to empower developers. I know we love to use the words shift left, but it's totally true. Developers do need to take some responsibility for this. And how do we do that? Well, we provide them the tools that they can use

to do it. For instance, Git hooks. They prevent you from leaking secrets. So, why does this happen? Well, it happens for lots of reasons. We accidentally make mistakes. We're human, right? I think it was a keynote that said that because we're human, we have great job security, which I quite liked. So, we accidentally push stuff to the wrong repositories. And it's important to know that we can do this on our personal accounts in GitHub, cuz github.com is unique in that we usually have one account for professional and personal. Very easy to mix them up. So, we accidentally push secrets publicly or into the wrong places. We have secrets buried in the history that we don't know

about. It's easy to imagine a developer working on some dev branch who's quickly trying to get something to work, hard-codes a credential, and removes it later for the code review because he knows you're not meant to do it. Code review passes because there are no credentials. It gets pulled into the master branch and everyone forgets about it. But if you scan the history, and an adversary knows to do this, then that secret still exists in Git. So, we forget about secrets. We accidentally add some auto-generated file. Debug logs can contain secrets and environment variables, so they can expose themselves here. We have sensitive files like PEM or ENV files that actually get captured in Git

repositories. And then the last one is that we still have companies actively managing secrets in Git. Just adding their environment variable files because it's easy. We can distribute it to all the team. Everyone has access to it. And we have excessive trust in our employees. So, it's a multi-faceted problem and we need lots of different solutions. So, hang on. Now, at the start, I leaked an AWS key. This is how many times it has been pinged since my presentation started. Now, a lot of these IP addresses will be the same, but let's try and find one here. So, this one's from the Czech Republic,

in Prague. So, probably not someone that should be accessing this credential. So, this is just important to know: people are actively monitoring this. It is a known threat and they're getting good results. Now, these aren't people. I don't have a friend in Prague refreshing my repository going, "Come on." This is just a bot that's trying to exploit it and, if it finds something interesting, will report back. And then they can start an attack. So, it is a massive, massive problem and one that we're not getting on top of. So, how do we solve this? Well, we need to do multiple different things. We need to have better education. We need to

have tools. There are tools for scanning secrets. I work for GitGuardian. We're a secrets detection company. I won't tell you why we're the best because I'm biased. But there are also other tools out there. There are open-source tools, if you want to use those. So, there's lots of different ways that we can do this. We need to have Git hooks. We need to have better secrets management. We need to have better education. But we can get on top of it. Nope. Wrong buttons. So, if you want to read the full report, you can blindly trust this QR code. I promise it won't do anything bad. But also, if you want to come see me, I

do have a one-pager of the report that has some of the values that we have that I can give you. And you can also from there go and check out the full State of Secrets Sprawl report. There's lots of great information. But yeah, that's the end of my presentation. So, I'll invite anyone, if they have any questions, to ask them now. Thank you.
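
As a footnote to the Git hooks recommendation in the talk, here is a minimal sketch of the kind of check a pre-commit hook could run over staged changes. It assumes the common `AKIA` prefix followed by 16 uppercase letters or digits for AWS access key IDs, and it uses AWS's documented example key as test data. The function name and the sample diff are hypothetical, and a real scanner covers far more credential types than this single pattern.

```python
import re

# Assumed pattern: long-term AWS access key IDs commonly start with "AKIA"
# followed by 16 uppercase letters or digits.
AWS_ACCESS_KEY_ID = re.compile(r"\bAKIA[0-9A-Z]{16}\b")


def find_candidates(diff_text):
    """Return (line number, match) pairs for added lines that look like AWS key IDs."""
    hits = []
    for number, line in enumerate(diff_text.splitlines(), start=1):
        # Only inspect added lines, and skip the "+++ b/file" header line.
        if not line.startswith("+") or line.startswith("+++"):
            continue
        for match in AWS_ACCESS_KEY_ID.finditer(line):
            hits.append((number, match.group(0)))
    return hits


# Hypothetical staged diff; the key below is AWS's published example value.
SAMPLE_DIFF = """\
+++ b/config.py
+REGION = "eu-west-1"
+AWS_KEY = "AKIAIOSFODNN7EXAMPLE"
-OLD = "value"
"""

CANDIDATES = find_candidates(SAMPLE_DIFF)
```

A hook built on this would feed it the output of `git diff --cached` and refuse the commit when the list is not empty. Catching the credential before it is committed matters because, as the talk points out, deleting it afterwards still leaves it in the history.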