Inside the Open-Source Kill Chain: How LLMs Helped Catch Lazarus and Stop a Crypto Backdoor

Show original YouTube description

Show transcript [en]

Okay. Hello everyone. Um, glad you are here. Uh, let's continue with the next talk. The next talk is uh is called inside the open source killchain. How LMS help catch Lazarus and stop a crypto backdor presented by McKenzie Jock Jackson. Uh, I would like to remind you just a few things. Um the first one is uh I want to thank to our sponsors especially the diamond sponsors Adobe and Iikido and our gold sponsors formal and drop zone AI. Uh it's together with the volunteers and the donors this event can can happen. And uh also second reminder is the to silent your cell phones. Third reminder is to not uh take photos when everyone uh is in the frame. And uh

that's it for me. Let's let's kick this off. And McKenzie, your floor is yours. >> All right. Thanks everyone. Great to great to great to be here and uh presenter at my favorite conference of the year uh here. Uh and I don't say that about every conference, I promise. Uh but if you want to know a little bit about me, I'm from Arteoa or New Zealand. Uh I'm the co-founder of a health tech company called Compago, which is headquartered in Australia, but I haven't been involved in that for quite some time. I live now in the Netherlands, and I work for a company called Aikido Security in Belgian, just to keep everyone guessing as to where

I'm going to be in the world. Uh you can find me anywhere on social media at the handle AdvocateMac. Uh I'm also the host of the Disclosure Podcast. Uh my mom says it's her favorite podcast and you all should listen to it. Uh so I'm going to go ahead and break the talk up into three parts today. Uh the first part I just kind of want to explain the problems around our open-source supply chain um because it's it's really important to the next part. Uh then we're going to talk about two different areas. One is going to be discovering undisclosed vulnerabilities. So using LLMs to find vulnerabilities inside open source packages that haven't been reported that don't have a CVE

number to them. And then the third part, we're going to be talking about discovering malware uh using LLM. And I have some great examples of the types of malware uh that we've found. I'll try and get through the first part as quickly as I can uh because I know we all want to dig into kind of the discoveries and juicy malware, but it's important just to build and paint a picture as to why why we started doing this uh and what the kind of core problems to it are. All right. So you may know this, you may not know this, but somewhere between 70 to 90% of all of the code that lives inside your application that makes

youration run comes from open-source projects or free and open source projects. Now this is great because it means that we can do things really quickly. It means that we can keep building on the past, stand on the shoulders of these awesome open source kind of maintainers, the giants of the of the world. But it also comes with a risk because we don't have a control over 70 to 90% or limited control over 70 to 90% of all the code in our application. That means that if an attacker is able to poison that, they can poison our application or if that code has a vulnerability in it, then it can come into our application and we

have limited control over that. So that kind of comes from the the scary part. So this has been a big challenge and obviously when it comes to attacking an open source component we're not just attacking one application we're potentially attacking thousands or millions. So the resources that can be thrown in behind this is astronomically larger than trying to attack a single application. So we just kind of visualize this for a moment. We have our application and then our application has open source dependencies. Now we know what these dependencies are. We've selected them. We've vetted them. We know who maintains them. maybe uh and we're happy. But these dependencies also have dependencies. And maybe we know

what these are, maybe we don't. And they also have dependencies. And I'll stop there because the icon started getting too small. But we can really go about 30 layers deep into this. We can add a little bit more complexity onto this because now our applications operate with a lot of third party services. Maybe we're using Octa for authentication, Algolia for search, Stripe for credit card processing, and all of these. Now, right now, this looks very manageable. I have my open-source stuff over here. I have my third party stuff over here. And it's all structured nicely. I can understand this. I could visualize it. I This is kind of a conceptual uh idea. I actually mapped

out a realistic real world scenario of what this actually looks like and it's this uh and that is because open source projects are dependent on each other. Third party services are dependent on open source. So actually understanding what you're vulnerable to is really really difficult. And that's why it's so important that we actually not only know what's in our supply chain, but we know if there's malware and if there's vulnerabilities inside them. If we take a random kind of icon here, this one here, let's just give it a random madeup name. I'm going to call it log for J. Uh, and what can happen is if we have a vulnerability inside downstream, then it can travel up

upstream and obviously in fact infect our application. If you're new to security, if you came into security after uh December 2022, uh then you may not know log forj, but it was a terrible day uh that we all dealing with and it's essentially was a transitive dependency, a logging application in Java uh that really made the entire internet vulnerable. Uh so and people really didn't understand what they were what they even if they were vulnerable to this. Now, it's not a security conference until someone shares this meme. I'm sure everyone's seen it before, but it's just so good that I can't not share it again. But what I love about this is that this meme became

so popular that it sparked a a new term in security called the Nebraska problem. And the Nebraska problem is basically saying that we have all of of our infrastructure that's being held up by open source maintainers. And this is so accurate, this meme, that we can actually even give names to to kind of what these projects are. things like UA parser log forj we talked about executis all of these are kind of being maintained by individuals um and if they collapse and the whole thing falls under them and the these are three examples which either collapsed or came extremely close to collapsing in the case of x utilus so I just want to kind of give an example of one it's just

a good example it's a little bit old now but it's still extremely relevant just to explain the problem so UA parser is a simple open source project uh that does something simple but really important and you could see why you'd use it. It parses information about the operating systems, the device that your users or or people are use are looking at your application through. You could see why I want to know if they're operating on an Android or iOS mobile device versus a desktop. So you could see but you could also see why if there's an open source tool you would use this and not try and write that logic yourself. This is an extremely popular project. It had 10

million weekly downloads. It had 56 versions. It had 1,9 had thousands of dependents. So, this would pass any smell check. And Meta was using it. Other companies were using it. This is this is a a very solid project and it would pass anyone's smell check. You wouldn't be able to tell or predict that this was going to become compromised. And spoiler alert, it became compromised. Uh so we saw this get posted in a Russian hacking forum which basically saying that he's selling an account on npm. Uh there's no two-factor authentication. So you can kind of take over it, change the password, lock the uh lock the person out and bidding started at $10,000 and it was ended up

being sold for $20,000. So someone bought this account and then what did they do? They installed nasty malware inside of it. So they installed a credential stealer and a crypto miner. That meant if you were using UA parser and you upgraded to this version, bear in mind that it had 10 million weekly downloads, that that meant that your application was now mining crypto for an attacker and stealing your users credentials and sending them to the attacker. So this is a pretty significant worst case scenario for it. And even though it was discovered because the account was taken uh was taken over, npm had to be involved in recovering that. It took several days and lots of people uh got stung by this.

So, how do we know how do we know if we have malware inside our application or not? Well, we kind of rely on a pretty uh I don't want to say outdated because it's kind of the best that we've got, but it's it's it's a flawed system, and that is that we have something called CVE numbers, and these are published in a database. Malware and vulnerabilities are published. So, here we have that the UA parser crypto mining backd dooror is in here. We know what version it is. It has a severity score and it has a number assigned to it. So this is how we know if there is issues or not. We can take

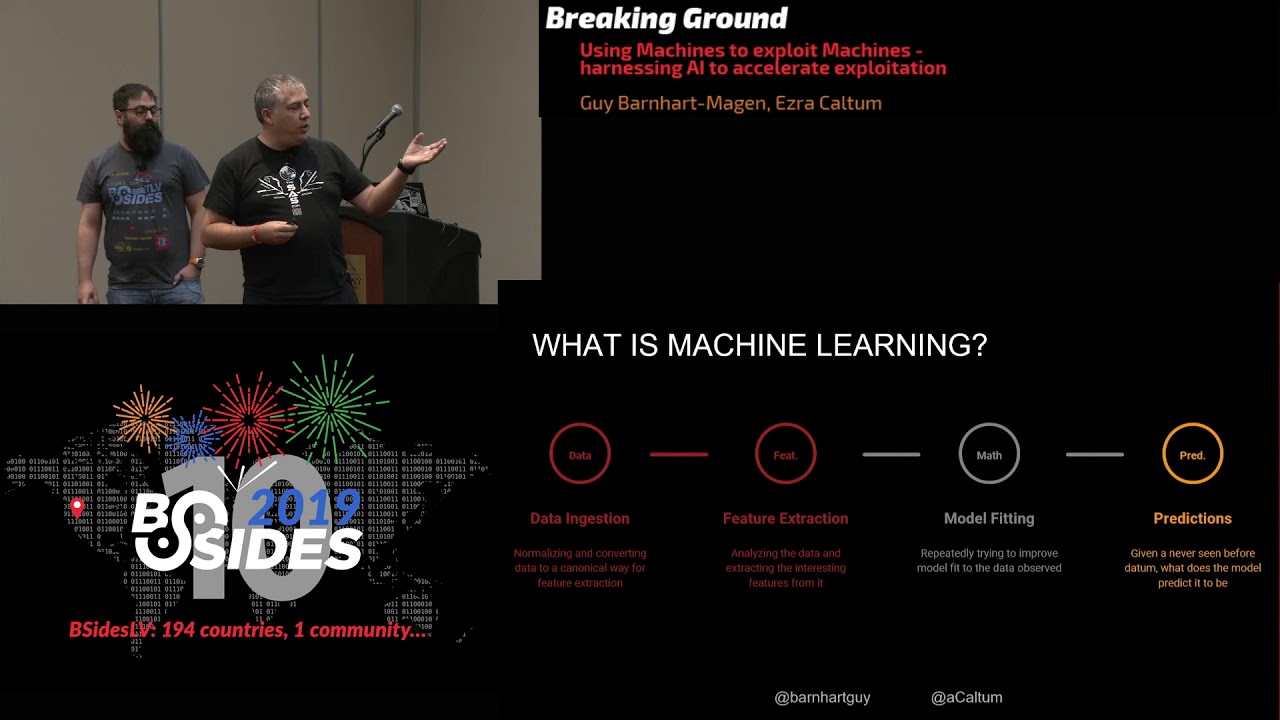

another one uh this or this this one for example is log forj the vulnerability that here we know that in this version of log forj there uh there is a vulnerability we can check if we're using that version and we move on. So, and this is typically how it works. And we don't manually do this. We use tools called SCA tools, software composition analysis, and they basically work quite simply. Someone finds a vulnerability, maybe it's the maintainers, maybe it's someone else, maybe it's a researcher that found some malware. They then report it to one of these big databases. There's many. There's the NVD, the National Vulnerability Database, the MITER CVE database, the GitHub Advisory Database.

It gets published in all of these places. Then we introduce something called an SCA tool, software composition analysis. It looks at our application, maybe in something like our package lock.json files or requirements.txt depending on what language you're in. It looks at all of the projects that we're using, the versions. Maybe it creates something like an sbomb and then it checks this against the databases that we have. So this is kind of like the best that we've got. Unfortunately, this doesn't help us a whole lot. It does help and we should definitely be using these tools but there's a big problem with it and that is that all of this relies on discovery and reporting and I don't know if anyone here has gone

through the process of publishing a CVE report it's kind of painful so especially if you have kind of gone through the effort of discovering something if you're a maintainer and now you have to go through this kind of process to report something prove it give it a score all of this kind of fun stuff. There's a bit of a delay that happens on it. So, this is kind of the best that we've got, but you can definitely see that it's a pretty flawed system. So, what happens if the vulnerabilities were never disclosed? So, all of this relies particularly when we're talking about vulnerabilities and not necessarily malware, but if we're talking about vulnerabilities, so an

open- source application has a critical vulnerability in it like log forj did and someone discovered it and they decided not to report it. And there is a term for this. The official term is called silent patching. I'm trying to coin the phrase shadow patching just simply because I think it sounds cooler. Uh and I'm trying to get get attention to it. But shadow patching is basically when a maintainer discovers a vulnerability inside their their open source project. They fix it and they never tell anyone about it. Now this is a big problem because a lot of people don't necessarily always patch super quickly, right? They take some time uh forward. However, if there is a critical

vulnerability in your open source, then you're probably going to patch a whole lot faster. If you don't know about that, then maybe you won't patch. Everyone's out there kind of without without knowing about it. It creates a serious problem. So, this is a little bit of an issue and we decided that we would try and come up with a solution for this. So traditionally how larger companies have tried to deal with this is that they have essentially thrown in large research teams which is really which is really great and important and these researchers will look at popular packages use various methods and try to uh report uh vulnerabilities and they typically will hide these in their own

little kind of secret little databases that they can sell to other people uh for it. At Aikido, we aren't big enough to have a gigantic research team to do this. So, we decided that we wanted to try and apply some logic and and use some of the new tools that we have in LLMs to try and identify these undisclosed vulnerabilities and and bring them to the surface. So, why are vulnerabilities not disclosed? Why will why would maintainers choose to kind of not bring this to the public? There's lots of reasons. There's the fear of kind of it being exploited before updates are applied. Semivid uh reputation concerns. No one wants a CVE next to your next to your project uh

from it. Maybe you have the lack of resources to actually make that make that report go through it. Um maybe you believe that the issues aren't that big. You know, it's just a little SQL injection. Uh [snorts] who cares? Maybe your dog ate the CVE report that you're doing it. They're all kind of valid reasons as each other, but unfortunately so much of our e ecosystem works on the reporting of vulnerabilities that when they aren't reported, it's a serious problem. And what we discovered is that they're actually not reported a whole lot. Uh which is kind of scary. The last kind of thing to talk about it is that there's a giant bottleneck at the moment and there's a struggle for

funding in these areas that we won't get into. uh but at the moment it also takes a long time for when a CVE report is actually made that it actually becomes public and everyone knows about it. So we're dealing with kind of complex complexities on on multiple angles. So that's why we decided to create Aikido Intel. Uh Intel's kind of an open source uh an open-source vulnerability database. Uh we publish everything that we find. It's totally free. Uh there's RSS feeds, there's GitHubs, everyone can contribute to it. And essentially what Intel does is it looks for vulnerabilities inside open source open source supply chains that have not been reported yet that don't have a CVE

number for it. So our initial concept was pretty simple uh and uh at least we thought that. So we were going to use LLMs to look through public change logs of open source projects. We were then going to identify if any changes related to security have been made. We're then going to check that against the databases to see if it's been reported and then if not a security researcher would then have a final look and give it a a vulnerability scoring using a standard scoring process. Uh we released this, we started monitoring around about 5 million projects in their change logs. We quickly found out that it wasn't such a simple challenge to do. uh we

discovered that change logs don't have any kind of standardized formatting to it. Languages can be very ambiguous. Uh and I have some examples of that. So change logs aren't always on GitHub. They're on various different websites, various different places. Uh and verifying the results was very very timeconuming. So we had to build an NLM that was very certain uh of what it did. So we kind of have a look here are just three change logs in different places in different formats. very difficult to build systems to be able to detect this. Um, and the final one is a lot of people ask why why do we need LLMs for this? Couldn't we have built a rule set uh and

done standard kind of grepping or something through these change logs to find it? So, the reason why we had to use an LLM as we quickly discovered is that quite often uh language is very very ambiguous or purposefully misleading uh in it. So maybe there's something uh ex explicit like escape selected text to avoid cross-sight scripting exploit. Okay, seems like that's probably something security rated. Uh it kind of gets work. But then when we go down to increase default work factor for PB uh PBR F2 to 1 billion trillion thingies iterations, that doesn't mean a whole lot to me. I can barely even read it. So but the YLM was able to figure out that

this is actually relating to something to do with security. All right. So this is kind of uh the different levels and and why an LLM was actually so uh important during during this step and why we couldn't use standard detection forms for it. So what's the actual format uh that we did? Um I made a really weird uh design decision to go like up and down and uh that so it's very difficult to follow but you can um but essentially we scraped 5 million most popular open source packages. Uh we we kind of take that we know where their chains logs are. Uh that's kind of the the the first area then to scrape that

information. The first area that we use an LLM is we actually use an LLM to standardize everything into a standard format for us. Then we use a second LLM that's been trained just to identify vulnerabilities inside these change logs. We verify and cross-check these and then we finally um classify classify that with a human with a human engineer. Uh, another question that people kind of often have is, uh, what LLM model did we use? At the start, we tried to be really cool and we invested some money into training our custom AI model to go for and do this. Uh, during that time, this was kind of a years ago, but a new version of GPT came out and when we

benchmarked it against our custom very cool model, which did perform better than the previous version of chat DPT, I mean GPT, GPT actually performed much better uh, in the new version. So we decided that to abandon our concept of trying to build out our own LLM models and we started to use the frontier models because we get free upgrades all the time. And uh just as a kind of out of interest if you if you're wondering about the computational cost of this and how much we actually pay when we started doing this at the very start of 2024 our costs for modelers has gone down by 10 times. So like the the the cost of

actually using these is is getting so much smaller uh as well. This is because the models improve but also they this getting cheaper and cheaper for us to use. So the costs are kind of uh and what we can do is getting kind of uh a lot more exciting. All right results uh I can kind of stop waffling about the problem now. So what did we discover? So last year we did this renders for a whole whole year. We discovered 550 undisclosed vulnerabilities. Now, that may not seem like a lot, but when we're talking about uh that these are all very popular open source packages that this is actually quite significant. 61 of them were critical uh which meant not

only were they kind of high severity vulnerability, but they were also very exploitable and 113 of them were high severity and obviously we had some some lower ones uh as well. This year we're finding a whole lot more simply because our our models and everything has been refined. So, we're kind of just over halfway through the year and we've almost caught up to last year's one. Uh, we've found 48 critical vulnerabilities and 51 high severity ones. So, a whole lot of uh a whole lot of uh critical kind of vulnerabilities inside them. None of these were discovered at the point when we discovered them. Uh, now a lot of question can you get is are all

of these never reported? Well, our methodology or our hypothesis at the start was that most of these would end up being reported and only a very few of them wouldn't be reported because we just figured that the major barrier to entry was actually just the time that it took to go through the process. But that wasn't the case. 67% of these are actually never disclosed and never get a CVE number to them. So that's a huge amount of vulnerabilities that are actually going out there. So if we kind of extrapolate this into other areas where potentially there's no mention of it in the change log, so they've completely removed it uh or it's hidden in other ways, we're probably looking at

a huge amount of vulnerabilities that are never disclosed out there in our open source uh supply chain. Uh and and what was also scary is that the other hypothesis I had was that I could understand why a low severity vulnerability isn't really reported by the maintainers. I I get that. I have a lot of sympathy for open source maintainers. They're doing a very difficult job, often for free. But when I saw that 56 of the critical vulnerabilities were never reported, I lose a little bit of sympathy. Uh to be honest, a little bit of a fun fact, the shortest time that we actually saw a CVE being created was one day and the longest time that we saw was 9 months.

Uh so and that 9 month one was because someone else found it and reported it uh not the maintainers themselves. So let's have a look at some of the interesting findings that we had. So Axius, Axius is a promisebased HTTP client. It has 56 million weekly downloads. Uh they had a high severity prototype pollution vulnerability inside them. This was discovered over a year ago by us and there's still no CVE for this. Uh it has never been reported. So this is a uh on this version there is uh uh a vulnerability. Really fun fact here. We give everything uh a number kind of like a CVE number but it's like an Aikido number. A little bit of vanity there.

You'll notice this has the the the one the ID at the end one 01. This was actually the very first one that we ever discovered. So when we discovered this, you could imagine like how much excitement there was in the room or it was the first one that we actually kind of manually reviewed to look through. So this was uh yeah, this was uh pretty exciting to have. Um here we have another one. This one is Craft CMS. Uh this one has 3 million uh installs. It was discovered nine months ago and has a critical path traversal uh vulnerability. This one here isn't so crazy. There's lots of other ones. But why I decided to include this one here

is because these are all the vulnerabilities from that same project. None of which well one has a CVE assigned to it. So all of these were were never disclosed and we have a bunch of uh criticals in there as well. So this is kind of a project that's obviously has no uh processes in place to actually report vulnerabilities uh going forward. Here we have another one uh which had a des serialization of untrusted data. This one was discovered three months ago. What's interesting about this is if you know about the SharePoint vulnerability recently. This is a very similar or the same kind of uh vulnerability that was inside that 86 million uh installs uh a PHP project and

again of course no CVE on this one either uh at the moment at least after three months. Uh so there's some of the kind of more interesting things that we have. Last one here, uh, Maria DB, an improper certification validation. Again, discovered three months ago. Uh, a little bit less popular, but this one is, uh, still used in a whole bunch of different projects as well. So, question everyone may be asking is, all right, if we're so great, how come we don't uh kind of report these CVE ourselves? Uh, we disclose as much as we can. We report it to the maintainers. Uh, we're also trying to register as a CNA, but this is less than fun. A CNA is

an organization that is able to report these CVs. Once we get there, we will be able to report uh these vulnerabilities as well. And just as a reminder, all of this is public. We have an RSS feed. If anyone wants to use our database and their products and their tools and their and their work, you absolutely can. You don't need to ask for permission. It's all public. All right. Now, moving on to some really uh kind of fun juicy things that we have out there. Uh talking about some malware detection. So this was kind of the the same kind of concept of we don't have a big enough uh resource team to be able to do malware discovery. So

we came up with a kind of a concept similar to use LLM. This time uh we tried to figure out what was the like would it be feasible would it be worthwhile scanning all of this with LLM and LLM didn't perform better actually performed worse than traditional scanners but then the problem is that the traditional scanners generate huge amounts of false positives for us to look through and we didn't have enough resources to look at it so that's where we actually implemented an LLM to ingest all of that data from the traditional scanners and then make a determination of how likely it is that it contains malware if it had a high determination, then it goes to uh a human. And usually

it's pretty either like yes or no. There's not a whole lot the LLM can't figure out. And we give it a whole bunch of indicators. So the biggest challenges that we have was with false positives. So npm gets 30,000 package versions on it a day. Um so that's if we if we had something like a 1% false positive rate, we would just be drowning. So, we needed to tune our LLM and also our detectors to make sure that not only did we not miss anything, but we really weren't kind of creating uh kind of too much too much noise in in our pipeline. So, how we kind of did this, we scraped all of the information

from the major uh package managers. So, npm, pipey, maven, ruby gems. We then scan that kind of with traditional tools I put there. So, we use open grip uh an open- source uh static code analysis analysis. We also use some other tools in there as well. And we also use other indicators of malware. So for example, maybe we're there's the use of a val in there. Uh there's calling external external uh domains. There's obfiscated code. There's binaries inside them. But it also could be things like versions not matching on GitHub and npm. So typically what will happen is someone will get access to they'll be able to compromise an npm account but not the GitHub account. So then they uh create a

whole bunch of versions and all of a sudden we have a mismatch of GitHub and npm. that can be a good indicator. Uh if there's spelling mistakes and typos, uh that indicates that it might be something like a typo squat. So there's lots of indicators that we use that aren't just uh coming from the traditional code itself. As I said, we use LLMs to validate that. Looks at all the indicators, determines how likely it is, and then every step we have a human to make the final decision uh decision from that as well. So what did we actually detect? Well, this is just in June. We detected 4,000 package versions. I've got versions there in bold because it's important to

know that um uh when someone does compromise a package or they're delivering a malware, often what they'll do is they'll publish a bunch of versions to give you the illusion that you're kind of falling behind in your updating uh there. The average time that we took to detect it was 5 minutes and the average time that it took us to report the malware and publicize it was an hour. So, it's a really efficient system that we actually managed to do. And what we've discovered is it's very rare that the LLM actually gets it wrong and gives us a package that it thinks is malware that isn't malware. And often when that does happen, it's usually

because it's very obvious or explicitly stated that this is like a research project or something along those lines. We will typically still report it because it still could be dangerous. But that's kind of like the only cases that we typically get where we're getting kind of uh not complete information. And in total, we've found uh over 20,000 uh malicious packages in npm, pi, and other places as well. The vast majority come from npm. We have a fair bit from pi and then very few from the other ecosystems uh as as well. NPM is an absolute cesspool at the moment. I'm not there's no shade on npm. is just kind of where a lot of the malware is being

delivered uh to it. So this is kind of some uh this is a screenshot from today. So this is like some that we've found just today some malicious packages. So I don't know you can check if these are in your system but again all of this information is public. Uh it's all open source as well. You can all check out everything uh that you want to do. You can include this in your own products as well. All right. So I wanted to kind of go through some of the more fun things that we have. I'm not going to go through 20,000 examples, but I am going to go through some of the most fun examples of

malware that they discovered. This one here is really, really stupid, but it's also really, really great. Uh, and this one came very close to actually tricking us at the time. So, this here is uh a package called React HTML to PDF. It was kind of typo squatting another package. We got an alert that this was malware. And then this is what we were confronted with in the file that LLM told us there was malware in. And we looked at this and there was like only very few lines of code and none of that looks anything like malware to us. So we were all very confused from it. It took us a long time. Uh maybe some of you have noticed

there's a small indication on here that there is malware present from us and that is the scroll bar and what the attackers. This was Lazarus, uh, the North Korean hacking group, and they had just used white spaces to push the malicious code off the screen. [snorts] Uh, I'm embarrassed to say that it almost got us. And this was the actual code, and here it was calling a very suspicious looking external uh domain to to give it to give us malware. This one was especially interesting because you'll see here that it's using Axius. Ironically, this is the same project that we discovered a vulnerability in earlier on in the presentation. But anyway, it's using Axis to call uh and

to get get information from this external domain, but they haven't actually included Axius in here. And what we discovered, we found this within 5 minutes of it happening. And whilst we're still confused analyzing it, we noticed a new package version had come up and they were debugging. They were adding in like different like console log checkers and things like that to try and figure out why their why their malware wasn't working. Um, little did they know that we had figured out that it was because they forgot to include Axis as a dependency. U, but eventually they did figure that out and and add it in. So, this one was kind of stupid, kind of fun. Uh, when you actually look

at the payload itself, uh, it's a big it's a big payload. It does everything that you would expect a payload from Lazarus would do. This include it steals your browser's caches, tries to steal any passwords and session tokens. It searches for crypto assets. So just what I have here uh these kind of ids down here. They're the ids for uh browser extension crypto wallets. So it's kind of finding does anyone using this browser have these extensions installed? And if so, can I steal your wallet keys? It tries to steal keychain information. And then finally, of course, like any good malware writer would do, it installs a back door onto your infrastructure or machine that you're running it on as well. So lots of

fun uh there. So, that was kind of the stupidest one. This is the one that had the biggest potential impact, one that we're really proud to have caught. Uh, this time here, it's from uh Ripple. So, or XRP. So, if you don't know, XRP is a cryptocurrency. It's the sixth largest cryptocurrency in the world. Um, and it's maintained. The Ripple Foundation uh manages this project. It's the official Ripple SDK. It's not a typo squad. the official Ripple SDK. Uh now what's kind of important to know is that this SDK is used by all of the major major kind of cryptocurrency exchanges. This is a tool that helps you communicate with the Ripple Ledger, right? It's not really something that

you want to write yourself. So every major cryptocurrency exchange will be using this package uh as well. So whilst it doesn't have billions of uh of downloads, it does have 133,000 weekly downloads. And I will say all of these will be probably pretty interesting to to deal with. So what actually happened happened with Ripple? We noticed this here uh in the bottom of the code. We also noticed that the GitHub account had a different version history all of a sudden. And so what this was doing is it created a function called check validity of seed. And that called an external domain from us. And what this is is a backdoor into uh ripple itself. Um and this here as it

was just setting up the function and then we could see that it was calling this function in various different places uh in in the code itself. Why this is so scary uh and has such a big potential impact is because what this did is it stole all of your wallet keys. But what that means is that if let's use the example of a cryptocurrency exchange. if they were using this package and you made any transaction as a user to the Ripple ledger, it would not steal your Ripple. It would steal all of your cryptocurrency because it had access to the wallet. And so if we kind of have a look at that, this is the domain that it

was uh being used. It was registered by uh Spaceship Incorporated. Um uh from there, it was registered a few months before the attack actually happened. Uh, and there's lots of malware in the actual payload when you actually deliver it. Uh, public service announcement. Don't try and get these uh, payloads. It's it's a little bit risky and if you do, make sure you do it in a sandbox. But it was doing lots of things and you can kind of see it's calling this check validity of seed and then it's trying to send information like private keys and stuff like that and stealing those wallets. So there's a lot more information that we have and going through more detail of the malware

on a on a site, but this is kind of essentially the gist, the main gist of the whole thing. Uh, and now we have my favorite example. It's hard to call malware beautiful, but this here was just a truly spectacular, very beautiful example of malware that uh yeah, it it would be very interesting to meet the the person that that wrote this. So this one here was in a package called OS info checker ES6. Very fun name. Uh the code's a bit small, but if we blow this up, essentially what is happening is it has this this string here called decode. And what's going through it is down in this >> [snorts] >> uh in this area here, it's decoding

something from B 64. That's always a little bit of an indication to us uh and it is part of our indication that when something's being decoded from B 64 then uh it's a little bit weird. In this case, it was only decoding one character, just one character from it. So that in and of itself was especially weird. And so we decided to have a little bit of a closer look at this. Uh, and that's when it got really interesting because when we actually opened this up in something that can view uh, asset characters uh, uh, or sorry, Unicode characters, we actually discovered that this one thing here that was visible had a whole bunch of strings

that were invisible behind it. What these are are PUA Unicode characters. These are characters that can't be seen by text editors, only be seen by something that can view Unicode characters. And you can define what these are in your project. So what was going on is they were defining what these characters are and then this here was a base 64 string that was being de decoded. Now this is impressive but it gets so much weirder and funkier after this. So obviously once we discovered this we decided to decode this base 64 string that wasn't visible and that took us to this well it took us to a domain. We got the we got the payload from the

domain. Inside that payload, there was another B 64 string. So, we're getting very convoluted now. This B 64 string when it was decoded gave us access to a Google calendar link. When we went to that Google calendar link, there was a really weird title. This is another base 64 string inside the title of the Google calendar invite that gave us our final boss payload domain. And then once we went to this domain, we finally got access to the actual malware that they were trying to write. But this was just such a beautiful example of the most heavily obfiscated crazy malware that we've ever that we've ever seen. We really thought we were going crazy at

multiple steps trying to analyze this, but eventually we did persevere and we did get to the end result. The bad news for attackers is is that this trick will no longer work uh because we're very prepared to try and find these invisible characters now. But it was a fantastic example that if it wasn't for the LLM system, I don't think that we would have discovered this because our LLM was very confident that this was malware even when we were very confident that there wasn't any malware there. But luckily, it did. Its confidence allowed us to persevere and have a look through. So, a good indication that the that the the using the LLMs in that way is a really

use uh useful use case. Uh so this coming to the end if you want to find out some more on the Aikido blog we have lots of fun uh things where we go into detail about all the malware that we find and write it up. Uh the latest one is about glue stack. Glue stack was a uh uh was a tool that got compromised. If you're wondering uh how npm accounts often get compromised. There's actually been a massive fishing attack going on that's used the domain NPN, not npmjs.com. And they've been sending out fishing campaigns and they managed to compromise a whole bunch of thread actors. That was reported on. Then the same thread actor

bought the domain pip instead of pi and did the same thing for pi and compromised a whole bunch of pi pi pi applications. So that's how it happens. We have fun stories like the the malware dating guide which goes through all the different types of malware that we discover out there in the wild and what they're kind of used for and who we suspect uh are behind them plus a whole bunch of other ones going through it. So if you're interested, the Aikido blog has lots of fun content uh that we like to keep up on. Uh the final thing too is if you're wondering, you know, what can you do to protect yourself against this malware?

Well, we built a really cool fun open-source project. It's quite simple. It's called Aikido safe chain or just safe chain. And what it does is it acts as a wrapper around your uh package managers like npm. So when you make the call mpm install, what it first does is check to make sure that you're not installing any known malware. And if you are installing any known malware, it will block it. So it's very very simple. You don't need to go outside of your current workflow. Just in the future when you do npm install or another install uh then it will actually block it if there's no one malware in our database uh from it. So, a really cool

fun project that we just just released uh here. And that's all I had for you today. So, I hope you enjoyed the presentation. I'll be hanging around and happy to ask any questions.

>> Okay, I'll go first. Couple of questions. uh first is have you seen the same approach that you're using with LLMs used to triage static analysis output which suffers from a similar signal to noise ratio issue? >> Yeah, absolutely. Uh, so, uh, I always hate talking about product stuff, but in in our in Aikido product, we do static analyzing and we have a triage function that's based off the same training, the same LLM that we have that does that. And that basically looks at all of your code holistically and tries to identify is this a real like vulnerability or not and upgrades it based. So, we have seen that, but I also know that we're not the

only ones using that approach in terms of vendors out there as well. So I think that this is actually a really good use case for LLMs at the moment because where we're not at is using LLM to actually detect vulnerabilities in that LLM aren't good enough to be able to do that. But what they are good enough is taking additional context and then making decisions to elevate something. But yeah, so yes. Yes, absolutely. And I think that this is a really good use case for lots of companies to use LLMs for. >> Yeah, I'm kind of uh looking more to build something, right? And so the the idea would be that I have a pretty

substantial security champions organization already. Yeah. [clears throat] >> Uh which is made up of engineers who are triaging output. >> Got it. >> Uh that's related to the modules they work on. Right. So it's basically >> um there will be some output and they'll take a look at that. But you know they're we've trained them up pretty well but they're engineers. They're not security experts. And so what I'd really like is to have something in the middle between the raw output from the tool and the engineers who's looking at it to say, "Hey, these are things that look a little bit weird. You may want to pay extra attention here." >> Yeah. So >> that's a fantastic use case for it and

it's one that like we we're really impressed at how the LM systems working for us. >> So when you've looked at different models, is Open AI the best one? Do you think Anthropics better? Are there any LLMs that are better or worse? Yeah. So, we use uh GBT4 and we also use uh Claude as well and like kind of different ways. Uh we find that they're they're the best. Uh there isn't a huge amount of deviation between the models that we've tested for it. The only thing I'd recommend is don't build one yourself, [laughter] >> but uh too late. >> Or maybe he was we couldn't. Yeah. Yeah. But that that was kind of one of the

things that we we discovered is that the the output of that of those were were really good. I think as the models kind of continue to progress, they're getting further and further apart in different ways. I think that will be interesting to uh to analyze and have a look at what better >> and you really think it's worth it to pay open AI just because they have better training data >> for us? For you it was for us. Yeah. Yeah. Yeah. For us it was but also like we didn't have a gigantic budget behind this you know like and that and this isn't part of our core I think more importantly this this is this part like

the intel part this is not our core offering of our product this is not how we're making money from it this is all fun research so that you know we can come present and talk about interesting things so therefore the argument for us to build when we looked at when we looked at the various is kind of lower but that that argument could be changed if this became like a really core part of how I ate food. Uh, >> and the tool that you release that's totally free. >> Yeah. Yeah. Yeah. Everything's everything uh totally open source >> and it's not scoped only to GitHub, but it'll work with GitLab as well. >> Yeah. >> Okay. Because it's it's just in front of

like npm and the other tools, right? >> Yeah. Yeah. Yeah. For sure. >> Okay. Awesome. Thank you. >> I just got one question. Um, in terms of your guys's communication with the maintainers, right? Yep. Like I remember in the first part of the um the presentation you were like uh the amount of you describing the amount of uh critical >> Yeah. >> Yeah. All the different uh [clears throat] percentages >> are what's your guys' communication process with them like in terms of letting them know versus taking the impetence to the impetus to actually disclose it your guy yourselves. um is this or is this going to happen once you guys get um that certification you were discussing?

>> So the communication will get a whole lot better once we get the certification. Right now we're just shooting out emails to the maintainers that we know of to say, "Hey, we've found that there isn't a CVE attached to this and we're letting you know that we're publishing it in our database. So therefore, it will be like public." >> Are they responsive to that? >> Sometimes. Usually not. >> Yeah. Usually like we get a very we get a very low response rate and that varies between being really thankful uh telling us that they've got a CVE or getting really pissed off um uh to it. So like we it there is a we can understand we

can understand uh like why why maintainers will get annoyed. We're not trying to do that, but we just believe that uh if we have been able to discover this vulnerability on a fairly low sophistication of a product of a of a tool that we've we've released, then thread actors who are looking to compromise tools who are looking to exploit things will be able to do the same approach. So we believe that it's even though it's not in a database, we believe it's still public because it's on their change log. So that's yeah. So like with it like within that workflow like if they are aware do you post po do you post it post remediation like just to let people know

this did exist at one point or is that like >> yeah so when it's in the change log it generally means that the problem's been fixed. So there like so the vulnerability doesn't actually exist in the latest version of it. So, but it's so but it's just about the fact that the the people using that won't often don't necessarily always upgrade if there's no like vulnerabilities in there, right? Why risk breaking my application if everything's working fine and maybe also in the change log that you got like some small performance improvement or some I don't know something else, you know, then you're like, oh, I don't really care about that. I'm not going to upgrade. If there's a critical

vulnerability, you probably will, you know. So, that that's that's what it is. But when when we communicate like the problem has been fixed and we're not publicizing a zero day that's that like that that has no fix available to it. What we're publicizing is that a previous version has has something and that you need to be aware of it. >> All right. Good. Thank you McKenzie. I appreciate that. That's

>> Hello. Hello. Thanks. Very nice talk. I just want to ask a small question. is it this project to trace very large number of the repository using a so how much for the M cost and token for every day >> yeah it actually it it costs less than you think um I don't know if I can tell the number but it's we we we we're spending several thousand a month on our on our LLM costs um but this has gone down from tens of thousands so like it's gone down significantly I I recently just to give you like a a data point to look at I recently did some research where I gave prompts to

generate code to various different models and in total I gave around about 12,000 prompts like to these models to generate code snippets back when I proposed this research project a year ago it was the cost that we calculated was going to be in the tens of thousands. When I did it uh this year it cost me 420 bucks to do like all of those prompts. So like the computational area is is coming down so much in this. Um I'm not sure how that's possible, but it is like I like I'm sure that none of these companies are making money off of off off the it but yeah uh it's it's getting less and less at the at the

moment. Yeah. But it's still is it is in the thousands. Yep. All right. If there's uh no more questions. Thanks everyone. Uh and enjoy the enjoy the party tonight.