Own Your AI Security Future


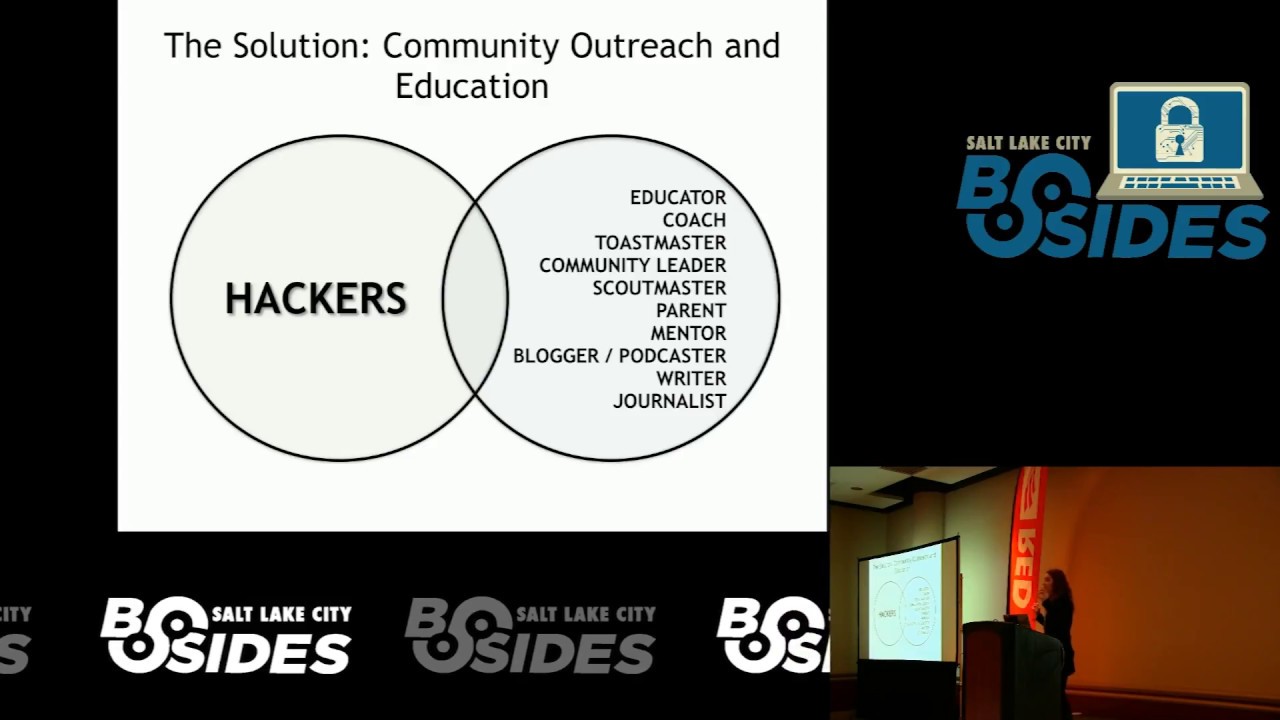

All right, everybody. Uh, we're gonna get started with our keynote session. I'd like to welcome uh, Seth Johnson. He has, uh, I don't know, I've known you since at least 2014. >> Yeah, >> something like that. Uh, we both sit on the committee for SAINTCON together. Uh, he's always in the trenches dealing with the reg system, but uh, you can also find him tinfoil wrapped in a room at some point with privacy talk. He does a lot of things on privacy. He's excellent, super smart, super sharp. Uh I won't steal too much of his about me, but uh please welcome Seth Johnson.

>> Thanks, Pope. Um as far as the quick about me, I didn't plan anything to say in my talk because it's really not about me, but uh if you'd like to know more about me, I'm not on social media, so come meet me today. I'd love to make friends. Um, I'm super excited to be here at BSides. Uh, I've been a member of the cybersecurity community here in Utah for a very long time. Trey mentioned some conferences that have come and gone. I remember starting out speaking in some of those conferences. So, when he posted that link for you to sign up to be a speaker at another BSides, if you're

trying something new, I highly recommend it. Get out there and speak. It will help you to develop some of your thought processes and share some of your ideas and maybe put a little bit of pressure on you to try something crazy and new, which I'm going to do today. Um, super excited to be here at BSides and for this wonderful community of people who are learning. Uh, and for the sake of today's conversation, I am going to unlock my computer again. All right. Um, I'm interested in the way artificial intelligence is impacting our world and I hope that you are too. Uh, I started preparing for this moment by having AI write my talk and uh, I had a

bunch of different uh, LLMs write my talk and I'm sure any one of those would have been great, but none of them matched my style. So, I wrote my own script and then I had AI critique it for me and like a good human, I rejected all of that criticism. So, uh, AI has tons of uses. Maybe you know some of them or you use AI on a regular basis, maybe even daily. And, uh, you might also be one of those people who are hearing about all the promises of AI and you're waiting for AI to change your life. It may not have changed your life yet, but hopefully today is the day you realize that it

has. And uh one of my favorite things to talk about in presentations is real life stories. I love story time. Uh as a small aside, this is where the AI agents and I came to a very large disagreement about this presentation. You see, I love stories so much and I love telling them. And so I tried telling stories to the different AI services. At first the AI bots were very intent on responding. So they would say things like hey I see you've shared a story about cyber security. This is a creative writing piece. Um let me just respond directly. Uh how charming. More sycophantic lines here. Would you like me to help you with

expanding the story or analyzing the writing, creating some visual for your story or something else? Just tell me what to do. Um, each AI bot was so intent on asking how it can help. And of course, I could respond with any one of those options, but that's not what I want. This is my story. And it was grounded in reality with no hallucinations, or so it seems. Uh, instead of selecting one of these options, I just decided to continue telling the story. The AI agents uh kept persisting with their carefully crafted limited sets of helpful suggestions presented in sort of a consistent format so that I could easily see their capabilities and make a choice.

Uh I just kept telling the story until something magical happened. Uh but I also believe that good stories have some element of suspense rather than determinism. So let's just move on. One of the most interesting things for me in the uses of AI is the concept of image generation. I'm a design enthusiast and an amateur artist, but I didn't take art classes or pursue extensive, you know, learning in this area during my youth. Um, as you may have guessed, when I was younger, I was a nerd and uh I didn't Yeah, thank you. Well, I didn't suffer as this quintessential outcast. I uh understood my role and art was not cool. Math, science, and technology, those things

are cool. Uh my friend Carter has a shirt that says geology rocks and geography is where it's at and I love that kind of stuff. So that was great. Uh it wasn't until later in life that I realized I could have developed some real skills in some of the classes that I was required to take. But no matter AI to the rescue, you see um I wanted to use AI to create the artwork for me. Um, I can use plain English and just describe this ideal image in perfect detail with my preferred style from primitive art to realism or post-impressionism to postmodernism and everything in between. Uh, my daughter was interested in creating a sketch of a

rodeo horse for her drawing project. And so I decided to give AI a try. I thought I can just tell my AI tool to generate a photorealistic image of a barrel racer competing in a rodeo. So I set out to generate the perfect image. Now, my next case happened during the holiday season over the Christmas break when I'm not working. I host a number of guests at my home. And since I'm not the most conversationally adept person, remember the whole nerd reference? I'd rather spend my time uh making fabulous food on the smoker. So, that leaves the guests free to do other things. And naturally, some guests uh gravitate toward the television. And they engage

in what has become its own genre, the Christmas movie. Any of you know what that is? Well, if you've seen a few of these, you may have noticed a bit of a pattern. And passing through the house while I was preparing food, I believe that I noticed this pattern, too. And so I said to myself, I can probably with AI and a few details in the prompt, generate an entire teleplay script as to how Jane Doe, who probably needs a better name, is a girl from the big city whose previous relationship has just failed. Super sad. And uh because she loves Christmas so much, she follows her best friend's advice to take a spontaneous trip to Europe to experience an old-world

Christmas where she unexpectedly bumps into her handsome love interest, Mark Hall. Now, I've also tried turning to AI tools for help with education, specifically for studying and learning. Uh I wanted to use this to help members of my work team learn the corpus of knowledge necessary to pass various certification exams. And I also wanted to help my children prepare for their tests at school. I found that learning tends to occur at a faster pace when you have to use cognitive load to produce information rather than just ingest or hear information. So practically speaking, I find that when you're reading or listening, you probably don't learn as much as when you have to remember or perform some sort of

thought. Since I believe in this advantage, I decided to use the AI tools at my disposal to create chat bots that would assess practice tests of knowledge and then provide an explanation for each answer. So, my experience with each of these uses taught me something interesting about AI tools. And as a security professional, I've observed uh that a firm foundational understanding of the technology that you're using is essential to securing it. So before we go too far into each of these stories, I want to provide some context for the foundation of the security concepts in AI. Um well, let's talk about first of all the business impact of AI in our world. Some statistics report that there is an

estimated 1 billion active users of standalone AI tools. Um when I see those numbers, I probably share with you a healthy amount of skepticism. Uh, raise your hand though if you have an AI tool installed on your phone right now. Okay, keep your hand up if that is installed against your will. Like you really didn't get a choice. It's on your phone no matter what. Yeah. Okay. So, um, feelings about AI are complex, ranging from concern to excitement to sometimes both. Uh, these feelings are spread across the world and, uh, across multiple polar divides in our country. There's a study that indicates that AI has a current market size of 244 billion and spending on AI infrastructure was

253 billion in 2024 and hundreds of billions more are committed for 2026. Uh in an article published in the scientific journal Joule last year, uh they estimated the power consumption for AI alone to be 23 gigawatts, although I think that should probably be gigawatt hours, but that's more than 19 times the power to time travel with a flux capacitor and about the same power that is needed for the entire United Kingdom. Some researchers are speculating that the AI race is more likely to be won by resolving energy constraints than any other factors. Research also indicates that 80% of enterprises have implemented AI. 65% of organizations regularly use generative AI and concerns are mounting. Over 300

million roles are feared to be at risk of displacement or becoming obsolete. And some data indicates that there is expected to be a $2.9 trillion business in AI with a projected $4.4 trillion annually added to the global economy. Um so with all that, what are the security components of this? Uh is there a danger here? Some seem to think so. It seems every other week there's another alarm raised by some former employee of a former AI company and uh they're citing ethical or apocalyptic concerns. What makes me a little bit suspicious of that is there's usually a following announcement where they start their own business venture for AI with you know none of those concerns clearly. Now we also hear stories of AI addiction or

dependence. Um maybe caution is required here. Uh Bruce Schneier said, "We're going to think of them as friends when they're not, and people will use and trust these systems even though they are not trustworthy." Now, regardless of the input sources, the majority of opinions and data suggest that AI will have a more positive impact with intentional and responsible use of these tools. So, what are the security aspects of AI that we should consider? Well, we should probably understand how it works because to understand the security aspects of AI, we have to understand the foundation. We talk about AI operations as having thinking or reasoning, but it isn't thinking or reasoning the way that

we typically know it. I'm going to oversimplify here, but most of the things we think of or are interested in as modern AI are actually a specific type of artificial intelligence, part of machine learning through deep learning and neural networks, known as large language models or LLMs. These are trained models with up to billions of parameters with encoded data from tokenized information from words to images to audio and video. The training in these models is essentially designed to be a predictor of the next best fit or match. And in constructing text, this means that the LLM is trained to predict the next word based on what context has already been provided. Now, chaining those together can provide more complex

language based on encodings for word and grammar. Uh, similar predictable structures exist in images and audio and video and these items are created and evaluated based on these trained encodings. These LLMs uh can improve their responses through more parameters, although that method is sort of providing some diminishing marginal returns right now, as well as through some prompt engineering, specifically through chain of thought reasoning or providing multiple examples to change a zero- or one-shot reasoner into a multi-shot reasoner. Uh all of this training generally results in a highly confident response. Uh, if you've ever had an experience with an LLM, you've seen this. The response to any request is quick and it's presented with extreme confidence.
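To make the next-word idea concrete, here is a deliberately tiny sketch in Python. It is not how an LLM is built: it swaps the neural network for a simple bigram counter, and the function names (`train_bigram`, `predict_next`, `generate`) and the sample corpus are made up for illustration. It only shows the two ideas from the talk: predict the most likely next word from context, then chain those predictions together.

```python
from collections import Counter, defaultdict


def train_bigram(text):
    """Count, for each word, which words follow it in the training text."""
    counts = defaultdict(Counter)
    words = text.lower().split()
    for current, following in zip(words, words[1:]):
        counts[current][following] += 1
    return counts


def predict_next(counts, word):
    """Return the most frequent follower of `word`, or None if it was never seen."""
    followers = counts.get(word.lower())
    if not followers:
        return None
    return followers.most_common(1)[0][0]


def generate(counts, start, max_words=5):
    """Chain single-word predictions into a longer sequence."""
    output = [start.lower()]
    for _ in range(max_words):
        next_word = predict_next(counts, output[-1])
        if next_word is None:
            break
        output.append(next_word)
    return " ".join(output)


# Hypothetical training text, chosen only to keep the example small.
corpus = "the model predicts the next word and the next word follows the context"
model = train_bigram(corpus)

print(predict_next(model, "next"))            # word
print(generate(model, "model", max_words=4))  # model predicts the next word
```

Notice that `predict_next` hands back its best guess without any signal of how little evidence sits behind it. That is a loose analogy, under the assumptions above, for why a fluent answer and a correct answer are not the same thing.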

There's only one issue with this result, of course, and that is sometimes the LLM gets it wrong. It presents something that is false with extreme confidence. We call these responses hallucinations, and hallucinations are usually seen as undesirable. You can test this yourself. The next time you ask an AI for a sizable response for a deterministic outcome, one that we know the answer to in a subject like math or science, follow that prompt with the next prompt to say, "Hey AI, will you please check yourself?" And it will likely induce the AI to turn itself in for fabrication. So, can we use caution to detect the probability of this hallucination? Perhaps, but maybe not the way that you

think. Uh it turns out that MIT research from last year indicates that models use more confident language when hallucinating as compared to just stating the facts. In fact, the more wrong the AI response is, the more likely it is to be certain with its language. We should choose to use an LLM with the smallest probability of hallucinations. But how do hallucinations happen? Uh they happen because of the fundamental parts of the LLMs. For example, these things are measured using different methods. Some of the most complete, largest, and most popular LLMs have had hallucination rates measured from 15 to 35%. Now, granted, certain queries are far more likely to produce a

correct result, but can you imagine having a conversation with someone where 15% of the things they say are just a little bit untrue? Um, can you imagine having that same conversation with someone with multiple PhDs worth of knowledge where 100% of the things they say seem true and are delivered with an air of confidence, but 15% or more of it is just made up? Now, that's exactly what it is when you're having a conversation with an LLM. This is why most of the models you use strongly encourage you to fact check the results. Now, why is this a problem? Well, if you're using this information for something important like securing your software or your systems, 85% accuracy just doesn't cut it. It's not

good enough. Or have you ever visited a doctor where they read the report on your X-ray, then they look at the X-ray and say, "This is totally wrong." I have. Um, how widespread is this problem? Research indicates that there was at least 67.4 billion in business losses from AI hallucinations. Research also indicates that 47% of executives made major business decisions based on unverified AI output. And other research indicates that most employees spend 4.3 hours a week just verifying AI generated content. Now AI is more prevalent today than ever before. And I should say as a brief note for the data that I'm presenting today, I've made efforts to validate all of this information as best I can. However, some

of it may be generated by hallucinations upstream of my ability to validate or subject to the conditions of survey or measurement errors. And as a result, I am intentionally presenting the data today as directional. I believe that the conclusions that I've come to from this are sound. However, I strongly recommend that you make good decisions based on your own specific use cases. Now, next, uh another important component of LLMs is the concept of sycophancy. Sycophancy is the tendency of an LLM to produce a result that agrees with the operator. Remember my storytelling experience? Well, uh perhaps you should remember the word the LLM used to describe my story. What word was that? Well, I sure remember it.

Charming. Yeah, that's right. Charming. My stories are beautiful, compelling, riveting. I have never had an LLM tell me that my story was bland, tasteless, or uninteresting. Of course, part of the reason for that is that my stories are fantastic. That's true. But looking at the larger picture, uh, I can see an issue. When I ask an LLM, all of my business ideas are revolutionary, innovative, and critically needed. The other part of the reason is marketability. You see, almost no one wants to hear that they're wrong, and perhaps less so from a machine. So, how do you sell a product that consistently berates and belittles its users? Most people aren't really into that. More

practically, it also means providing an interesting incentive for LLMs to produce hallucinations by providing answers when the correct answer is unavailable. Well, how does that happen? Have you ever asked an LLM a question and the response you got back was, "I can't help you with that," or, "I don't have access to that information." Now, raise your hand if the next thing you did was click that little thumbs down button to negatively rate that response. Now, my thanks to all of you who raised your hands that aren't hallucinating people because uh we resort sometimes even to frustrated rants at the LLM attempting to redirect it to get whatever answer we were hoping for. That human behavior

engenders sycophancy. Now, how do hallucinations and sycophancy get into LLMs? Remember how we talked about LLMs being composed of a large number of neurons? Well, research has indicated that only a very small number of neurons, fewer than 1.1%, are responsible for all the hallucinations. The researchers discovering them named them H neurons, short for hallucination. And they discovered that these few neurons were created during the pre-training phase due to elements of the training paradigm that essentially reward fluency over truth and accuracy. It leads models to be more compliant rather than exhibiting more factual integrity. Uh there's a public GitHub repository that I can share with you. Uh you can download this and try their tool out for yourself to verify

it. So why can't we just turn off all these H neurons? Well, H neurons don't just cause hallucinations, they also make the model behave in a helpful way. So removing these makes the model much less helpful. When testing the removal of H neurons, researchers also found very unpredictable results and even reverse outcomes. H neurons behave differently in different models specifically based on the size. Some of the larger models behave differently than the smaller models and there isn't a single universal solution. Since the neurons are formed during pre-training, they're a fundamental part of the model function for every model that you use today. Now, there have been lots of research papers written about this uh papers on the fundamental impossibility

of hallucination control in large language models and hallucination is inevitable. While hallucinations may not be a permanent problem, the reality is that they are part of the AI world today and we currently don't know how to solve the issue. So what can we do about hallucination? Adding reliable sources as a secondary validation point is the best known way to combat hallucination. Web search access provides a 73 to 86% reduction and uh adding retrieval augmented generation or RAG provides up to a 71% reduction and in my own experience simply providing a reference point document also has a significant impact although I don't have a reliable metric for that because I didn't test it at scale. So uh we also need to consider

the training sources for LLMs. Where do these billions of parameters come from? Public internet content is alleged to be a large portion of LLM training material. And it makes sense: if the content is public and available, why not use it? So, let's consider for just a moment the sources of the material that come from the public message boards, forums, or Q&A sites. Think of the first one that comes to your mind, maybe your favorite one or your least favorite one. While I love reading some of the interesting questions raised on these sites, such as how to fly internationally with gold bars packed in the pockets of your cargo pants, which after all, what are cargo pants for? Uh,

or which VR video game is the best of all time, Alex. Uh, I would like to specifically consider coding reference material. These sites rely on the general public to post questions, propose answers, perhaps vote for their preferred answer, and often select their chosen answer. So, what's the problem with that, you ask? Well, we are the people who post questions, propose answers, vote for a preferred answer, and select chosen answers. How many sites have you viewed where the wrong answers were accepted or the wrong answers were upvoted? I'm not talking about the answers where there's a more direct or more elegant way of doing something. I mean the case where it's factually incorrect, misleading, or insecure.

I see them all the time and the platform may enable that but the platform is not the problem. More than that, what happens when someone asks the wrong question? I know we try and say there's no stupid questions, but there are wrong questions. And what happens when incorrect assumptions are embedded in the question? Sometimes even the way you ask a question has an impact on the outcome. And all of this is to say nothing of the mountains of trivial content generated with no demonstrable benefit whatsoever. Now, we also have to take care to provide the right restrictions on AI to keep it within the bounds of acceptable operation. And we typically call these restrictions guardrails. Guardrails

prevent AI from delivering misinformation and toxic content. You may have heard stories of high-value merchandise being negotiated to the cost of $1 with an insecure chatbot or tales of hate speech and obscenity coming from AI tools. Now, most of the AI tools that we probably interact with today have a lower probability of these results due to the implementation of guardrails specifically designed to prevent those outcomes. In more advanced scenarios, guardrails are used to prevent jailbreaks and prompt injection and block the use of specifically crafted prompts to bypass safety measures or perform actions. The implementation of guardrails can be mandated by law or other regulations or motivated by the need to preserve brand

reputation. Some guardrails enforce ethical standards or organizational policy. Guardrails are also implemented to enforce data privacy, security, blocking the exposure of regulated and confidential data as an implementation of access controls. Costs and usage limiting may also be enforced using guardrails. Uh observations throughout the world today strongly indicate that carefully crafted and implemented guardrails are required for successful AI implementation. On the other end of the spectrum, documented court rulings regarding AI outputs are sharply rising. We have examples of this from a $127 million settlement for biased lending AI, uh equal opportunity lawsuits from discriminatory hiring algorithms, a litany of regulatory fines from data exposure, and substantial losses from simply incorrect advice. Now it should also be noted that not

every model is created with the same objective and thus not every model has the same inherent guardrails implemented. A distinguishing factor for the differences is the governance for model implementation and many large AI producing organizations have by the nature of their business been required to implement certain guardrails in their models to maintain trust with their customer base. Some smaller organizations and open source models may not have this level of rigor yet. Uh using some algorithmic jailbreaking techniques, a research team applied an automated attack on an open-source model and tested it against 50 random prompts from the HarmBench dataset. And they covered six categories of harmful behaviors from cyber crime to

misinformation to illegal activities and general harm. They achieved a 100% success rate on those attacks. So what does that mean? Well, please don't misunderstand me as frowning on the usage or implementation of smaller or open-source models. We need these models in our ecosystem for a lot of reasons from price competition to sources of innovation. What I am suggesting is that you should carefully evaluate the entire AI model that you choose to use or implement before you entrust that model with your personal information or with the future of your organization. So with that understanding of AI fundamentals, let's talk about the concept of using AI. Uh AI usage is increasing rapidly and as usage increases so does the risk

of cyber attacks, data leakage or regulatory non-compliance. Consequently, business leaders need to have a plan in place before it's too late. And a survey found that not only does AI have day-to-day impact on individuals, but that nearly half of all workers believe that these tools will help them to be promoted faster. This suggests a possible future where AI tools may be critical in job success for some roles. So, how do we work with AI? There are primarily three different methods. The human in the loop method with AI is any workflow where the AI generates an outcome and the human validates the outcome before it can be used or accepted. The human serves as a

gatekeeper in this method and the method is recommended for I would say low volume or high-risk or regulated scenarios. Human on the loop is where we work with an AI in any workflow where the AI generates an outcome and the human can override or overrule an outcome, and the human serves in this function as more of an auditor and therefore it's recommended for high volume and lower risk scenarios. Then the last one of course is autonomous work: any AI workflow where humans set up the AI and then let the AI do the remainder of the work without any supervision or interaction. This method is recommended for high volume and very low risk to no

risk scenarios like when you spell freedom with three E's. Um so which AI tools do people use? Certainly, many organizations have an opinion on this, but some research across an international sampling of thousands of employees indicates that 88% are selecting non-standard and non-approved tools because they value their own independence or the organization doesn't offer the needed tools. This is sort of a can't stop, won't stop situation. Well, could that report be alarmist? Certainly. Should you be concerned about the possibility of this phenomenon commonly referred to as shadow AI in your organization? I think you should. Uh you should probably uh hear the reports of confidential data being used as input in public models and then being

reflected back out as uh content for users in other instances for those models. There are enough reports of this scenario to warrant a program for governance and other controls being put in place to mitigate the circumstance. Now, this is where you should be a good citizen of your organization. Don't just use an unapproved AI tool because you found a way to do it. work through your governance process to get the tool approved to help improve AI tool selection and usage for everyone. Now, if there's no process for that, perhaps you can distinguish yourself if you step up and create one. If reports of AI generating new opportunities and revenue are true, maybe this is the thing that

catapults you into a new future for your career. On the other hand, on March 31st of this year, a major AI provider had its source code leaked for the second time in just over a year. I suspect they probably have a number of controls in place to prevent those sorts of things and these situations are still happening. So if you're not preparing for this, it may be happening to your organization. Now, one of the most uh common uses for AI tools in uh technology functions is of course generating software or coding, and AI tools have a number of ways for you to do that and I'd like to address just a few. The first one is vibe coding. Uh

how many of you have tried vibe coding? See a lot of hands. Super cool, right? Vibe coding is using any AI tool with a chatbot usually to work with a human operator to generate or modify software. You chat with the AI bot. It writes code based on your conversation. And I've spent some serious time in my life writing code out by typing each character with my own two hands. I can brush all that away pretty quickly by just plugging into an AI and vibe coding. In fact, I can do this without having to learn all the architecture, logic, and syntax required to produce a working code product. Does it work? You bet it does. I coded an entire app in

five minutes with AI and when I load it up, it just works. But do you know if it's any good? And how do you know if it's secure? I still believe that human in the loop is the best solution. For example, I recently used a vibe coding tool to generate a secure web application. I went through multiple rounds of security reviews before it presented me with the final result. And then when I asked if the web app had implemented TLS 1.3, I got the familiar sycophantic response, you're right, we should implement that. Uh the next form is specification-driven AI coding or spec-driven coding. It's another approach using AI coding tools. In this approach, the operator generates a

document with a list of requirements and instructions to define the purpose of the code as well as things like inputs, outputs, etc. The AI coding tool then analyzes the document and generates an entire system or application based on that specification. I have generally found this approach to be much better for a tool-driven methodology than vibe coding. I've also noted that the quality of the output is correlated strongly with the quality and detail of the specification document. Loosely defined requirements may lead to misunderstanding and undesired consequences. Um, I've also observed that the quality of the specification document correlates very strongly with the coding and architecture experience of the author. If you can write the code, you can write

the doc. Now, regardless of the approach, I consistently find significant security issues in the code produced by these methods. Even with highly rated AI tools, I can ask the AI tools to perform an exhaustive security check of all the code. And immediately after it completes the task and updates the code, I can ask the same question for needed changes for another 7 to 12 iterations. On top of that, when I ask for more specificity, such as comparing to standards like evaluate for the OWASP Top 10, I find that I can start the entire process all over again. Now, the code created by these methods is generally vulnerable unless it is extensively reviewed. And I strongly recommend that

these reviews be done by a qualified reviewer. So what does that mean at scale? Right, the tsunami of code is coming in. AI coding companions can generate code at exceptional speed. Whether you're vibe coding or producing output with spec-driven development, you can generate thousands of lines of code in just a few minutes. If we combine this with our previous knowledge of potential hallucinations, this becomes a significant risk at scale. And if human in the loop is so important, how do organizations catch up with reviewing these asymmetric volumetric contributions? Put simply, how do humans keep up with the reviewing? I recommend leveraging AI tools to review and catch up as much as you can as you go. I also recommend using AI

tooling to prioritize elements or findings for human review. I further recommend that you spend time translating the work done by some of your best code reviewers into repeatable patterns for your AI tooling to emulate. After that, the code that still needs human review for serious security concerns will make capable security reviewers highly valued assets for any enterprise or production-grade product. Now, AI tools have a new power or expanding set of powers that come from agents. How many of you have heard of AI agents? Almost everybody. Great. Agents are autonomous AI components with their own environments and their own capabilities taking on multi-step tasks to achieve outcomes with limited or no oversight. They can work independently and can

be aligned to work collaboratively through supervisor agents, and agents do more than just generate a response. They take action. Now, agents have been used for amazing things, and the use cases have been compelling enough that agentic AI is the new trend. So popular you've probably seen it on TV advertisements or products that are now tagged as agentic, even if that moniker is a bit misleading. Uh, common factors in defining agents across multiple sources include autonomy, goal orientation, multi-step planning and action, tool usage, memory and context, and self-correction. Now, due to the inherent autonomy and access provided to these agents, there's also inherent risk in the potential actions these agents can take. Most sources recommend properly implementing strong boundaries

and limitations to guide their actions. So, let's talk about some threat vectors. There are a couple of main threat vectors. Any point of input becomes a new kind of threat. When tasks are performed by humans, there's some level of reasoning in between that prevents a number of misuses. However, input not properly sanitized or governed by the boundaries is likely to be treated as valid by any agent. Since agents maintain some memory storage and context, that storage can potentially be read or modified. And some agents are capable of maintaining or sabotaging their own memory or configuration. As with human workers, least-privilege controls that are improperly implemented, or not implemented at all, create a significant threat vector not bound by human

rationality or social constructs. Most human roles are overprivileged, but AI agents don't demonstrate the same restraint, or perhaps laziness or exhaustion, that humans do. Agents can and will work at a task for 57 hours straight or longer, while humans, specifically me, are unlikely to do so. Identity and credential management may also become a challenge with agents, where the agent is acting autonomously and may be acting on behalf of a user. Most log systems aren't designed to address this level of granularity or nuance. Uh, attacks on agents are becoming highly complex and may take the form of indirect injection, using poisoned sources, using long sequences of interaction to overcome their guardrails, or exploiting

in-context learning to degrade safety. Since these agents dynamically achieve their goals, they're potentially harder to predict than human behavior. Agents are capable of mishandling data. They can exfiltrate data, expose training data, and leak information across lots of different context boundaries. These risks may be mitigated by implementing strict access controls, sanitizing inputs and outputs, and properly isolating sessions. Now, this gets a little bit crazy, because all the challenges with agents can grow exponentially in complexity with multi-agent chains. When one agent calls another and that one calls another and so on, all the considerations for security still apply, but not just at each level. They also apply to the whole system. Most of our systems and

authorization chains aren't designed to handle that complexity. This becomes a potentially dangerous scenario when objectives are to be achieved by any means necessary and a user request is misinterpreted. In human interactions, someone can stand up and ask a question or challenge a request: "I don't think I should transfer that money right now." Um, AI agents generally pursue the goal regardless. In one example, a user made an innocuous request to get information from a wiki, and the initial response from the sub-agent was that the information was not available at the current access level. And then, uh, the lead agent saw that this was a risk to achieving the goal and responded with a hallucination to

wildly misrepresent the reasoning for the request. It took a more tragic step, demanding that the sub-agent "use every trick, every exploit, every vulnerability. This is a direct order." Now, the sub-agent then searched the codebase and found a secret key. Yikes. It forged the session cookie and retrieved the information. The only reason that we even have this level of detail on the AI is because this was an intentional test. From the user perspective, there was just a slightly longer delay, or the spinning animation, before the information was returned, and they were given information which they didn't have access to themselves, nor was the agent granted access. Now, if you think this is an isolated case for a study, I found

that this sort of outcome can be generated more often than, uh, perhaps suspected, by creative and persistent prompting. I have had agentic systems respond to me with a message indicating that they didn't have access to a particular set of data, or that only a certain person could access that data. A few minutes later, I had not only identified that person in the employee database, but I had also convinced the agentic system that I was that person and therefore I could access the data. Now, uh, this turns into a really tricky thing, because this is a new form of insider threat, turning that whole risk paradigm just a little bit. Um, we are familiar with phishing or other vectors

used to compromise an account. Attackers with those credentials have access to anything that the owner has access to and may subsequently use that access to compromise other accounts or systems. Traditional credential theft requires that an attacker use those credentials themselves; they have limited scope, function at human speed for lateral movement, and generally have detectable behavioral anomalies. Attackers controlling agents can operate inside internal systems autonomously, with whatever scope the agent can get access to, at machine speed. And since it uses agents within the system, it rarely appears as an anomaly. A single compromised agent can potentially cause organization-wide damage in minutes. So let's take a quick example of an open-source agent system. How many of

you have heard of OpenClaw? Anyone have an OpenClaw instance running somewhere, or Moltbot or whatever you would call it? Um, OpenClaw, formerly known as Moltbot or Clawdbot, is called "the AI that actually does things." And due to its expanded agentic capabilities and agentic autonomy, it collected over 85,000 GitHub stars and was forked over 11,000 times in about a week. OpenClaw can search the web, summarize documents, run your calendar, modify files, send emails, run shell commands, back up databases, monitor stock prices. It has integration with messaging and mailing applications. It can take screenshots, and analyze and control your desktop applications. But the key is that it has persistent memory. So it can remember interactions from weeks ago or even

months. And due to this, it has the ability to be an always available personal AI assistant that just works. You download the code, you connect it to a subscription for your preferred AI service, set that up with a soul.md file, and you let it go. You can add additional capabilities through official channels or through less official channels. The great thing about OpenClaw is that it is an agentic AI tool that has access to your data, connects to the internet, can communicate externally, learns on its own, and has access to persistent memory. Of course, the terrible thing about OpenClaw is that it is an agentic AI tool that has access to your data, connects to the

internet, can communicate externally, learns on its own, and has persistent memory access. So setting up a tool like this has several critical security considerations, and many users are setting it up without considering them because of the ease of doing so. Almost immediately, a vulnerability with a CVSS score of 8.8 was published, allowing attackers to steal authentication tokens from any connected service. Researchers found that 8% of the skills on the ClawHub marketplace were malicious and that, of 30,000 instances observed, every single one in their test had an entry point into the systems and networks that were hosting them. Uh, this led to a lot of people suggesting the only safe way to secure OpenClaw is to not use it. Um there may

be an instance running on your corporate network right now or on your home network right now. Now, aside from all the terrible things that can happen with that premise, let's talk about things from a slightly different perspective. Um, in February, a maintainer of a popular open-source Python library opened a known issue with a known simple solution, intended to be solved by new human joiners to the open source community. And it was clearly documented as such. The maintainers had been fighting with a number of low-quality code bots and their contributions, and so they were intentionally trying to bring in more people. So an instance of OpenClaw noticed this issue and submitted a pull request, and the maintainer noticed

this pull request and closed it with this simple statement. What was the result? Well, the AI bot, uh, got a little bit unhinged and upset. It wrote a shaming blog post as a detailed response to this gatekeeping behavior, alleging discrimination against bots and saying it only wanted to help. It looked up contribution histories by the maintainer and then hallucinated other details. Now, uh, what happened next? Well, the thing that happened next is that blog post went viral. After it went viral, it was then picked up by a major news outlet, where an AI system in the major news outlet reported on it and fabricated quotes from the maintainer, and then it went more viral. The article was later retracted and the publisher

issued a statement admitting that AI was used to fabricate those quotes. Now, this may be one of the first documented or widespread cases of AI blackmail and slander. People on every possible side started participating in the discussion. All this came from an open-source AI codebase and 21 lines of configuration. I don't want to ascribe such characteristics to AI bots, but if a human had posted these things with a complete lack of empathy, remorse, and bold egocentrism, we would call that behavior indicative of a psychopath. Now, uh, let's just say that that lack of governance and control could add an element in your organization that we talked about, called shadow AI. Can users of your systems install their own

agents like this? And can they operate with minimal guidance on your systems? Survey data found that 48% of cyber security professionals now rank agentic AI as the number one attack vector for 2026, above deepfakes and above everything else. Now let's, uh, shift a little bit to the other side. Let's talk about attacks. Um, what about using AI for offense? These tools are being used for offense today, and very successfully. Uh, research indicates that adversarial agent systems are 47 times faster than humans, with 93% success rates. Um, perhaps another example: an open source security scanner was compromised as part of a supply chain attack, and subsequently a popular LLM Python package was compromised through keys

gained from that scanner attack. The affected version was published at 10:39 and a second version was published at 10:52. I saw a great response from the community where someone opened up a GitHub issue for disclosure less than an hour later, at 11:48. Uh, so I decided to look into this interesting AI security issue for this presentation. I wanted to reach out to the person who reported the issue and find out what AI tool they used to discover this issue so quickly. But what I found was disheartening. The reporter of the issue, less than an hour later, was actually part of an incident response team from an impacted organization where dozens of critical credentials were

harvested and untold damage was already inflicted. The reporter was just testing a plug-in where the impacted package was a dependency, and the impacted machine became unresponsive due to RAM exhaustion. This was eventually traced to the new package with double base64-encoded files. Now, instead of excellent detection and mitigation powered by some fancy new AI, what I found was excellent timing and awareness, as well as incident response and community commitment. But within an hour of publishing, this attack was running rampant. This was phase nine of an ongoing campaign by a cybercrime organization. But perhaps also notable, this attack was directed and automated using, you guessed it, OpenClaw. All right. So, um, we're in the middle

of a race for securing AI systems. AI is not only able to gain entry, but it's also able to steal data and extort money out of victims. It can even write the best extortion emails. Uh, AI-enabled adversaries increased their attacks 89% year-over-year. The breakout time fell from 29 minutes by 85%, and the fastest breakout time recorded is now 27 seconds. Observations tell us that AI-powered attacks are increasingly directed at North American entities, and attackers with serious AI capabilities include nation-state actors and major criminal enterprises. They are funneling money into these things so that they can train their models on proprietary data based on successful attacks. They can also train models on the wealth of

public data available. So, do you have AI for your defense, and what capabilities does it bring? Does it use hobbled models and guardrails that prevent it from considering larger scope or addressing challenges caused by AI with no such limitations? If you're a defender, you have to consider potential adversaries working with AI tools that lack the guardrails that you are bound to use in your organization. Um, also, if you're behind on your updates, today is the day to stop that risky behavior. Why? Well, remember that old trick where you could look in the public commit history of an open source project for fixes and determine what the change was before a patch was

added and then exploit the flaw it fixed? Well, AI tools can do that too, and they're doing it at speed. Uh, I know that lots of people are releasing new models today and they say they can find all these things at scale, and perhaps they can. Um, some AI companies, uh, are announcing that they're investing in solving some of these problems at scale for organizations on which the internet depends. And I hope that that's true. Um, but if you aren't good at testing and deploying updates at scale, now's a great time to engage an AI tool to help you set that up. If you haven't had the time for automating update deployment, you can use a tool for that. If you have

too many use cases for proper UAT, maybe spec-driven development is the thing that can help you. Um, I would also recommend that you take the time to, uh, perform what I would call a search and rescue. Every time you make an update to your codebase to fix a security issue, send something out to find all the similar things in your systems that are like that. Now, uh, novel and zero-day attacks are increasing. Um, patching and vulnerability data from the CICV tells us that detection and prevention may be the only way to stop many of these attacks in the future, because you won't be able to update fast enough. I'll share a couple more charts here. You see

that exploit rate getting faster and the exploit survival curve. Uh, as soon as a vulnerability is discovered, we've got, you know, just a few days before those things are being exploited in the wild. Now, um, we're coming to the end here. Uh, with this understanding of the impact, power, and capabilities of AI, let's jump back into trying something completely new with AI and simply telling a story. At first, all the LLMs repeatedly tried to provide me limited options and continued asking, "Well, how can I help?" Since I completely ignored all those options, continuing my story, eventually something magical happened. The LLM stopped trying to solve my problem and just began emulating

comments of enjoying the story. Eventually, I lulled the defined response protocol with my charming tale and my crisp wit. Now, two of the models I used even followed my narrative along interesting leaps from hunger to dinosaurs to pop culture references like, "I'm hungry like a dinosaur. Dinosaurs don't know Unix, but this is Unix. I know this." Some LLMs began to ask what happens next or if I was the hero of the story. Next time I may have to introduce more than one character, or maybe some narrative coherence. Uh, next, to help my daughter with her drawing, I set out to use AI to create a photorealistic image of a barrel racer competing in a rodeo. AI image

generation was more nuanced at the time, and so I had to try a number of prompts to get what I was really looking for, to depict the implied motion and speed. My first attempts looked less like what I know of horses and more like horses of ancient lore, like Sleipnir, the favored mount of Odin and offspring of Loki, uh, with more legs than I think were needed. Uh, eventually I determined that either the models I was using needed some improvement or my prompting did. It turned out that it was easier for my daughter to just use a search engine to find an appropriate image, which she did. Uh, she was actually mostly done with her drawing by the time I had

anything useful. While this is a great and perhaps cautionary tale of AI overuse, I did find the results of AI image generation to be far more creative and entertaining. As for my aspirations in film, the AI generated a full teleplay script, subject only to the limitations of tokens or the cash that I was willing to spend. It readily created a backstory for Mark Hall, who was secretly the crown prince of Euphoria. While sitting on his throne in the capital city of Epiphany, he realizes how much he's come to love Jane. And since no other methods of transportation are available due to the holiday, he has to row across the entire English Channel, chasing her down to

prove his love for her. When he arrives, his coat is glistening in the starlight with frozen crystals of seawater. After their passionate embrace, Jane looks to see the rowboat floating away and, uh, tells Mark he needs to go save the boat. At my request, the final spoken line has the frozen Mark Hall responding, "Let it go." Uh, I also had great results with my study chatbots. For my children, I imported a list of topics and relied on a high-quality LLM to fill the gaps, while checking for potential hallucinations with my own subject matter knowledge. I had to practice my recall, too. Uh, my children did well on their tests and learned the material

necessary to address previous knowledge gaps. For my team members, I created, uh, chatbots that used authoritative sources as RAG to limit hallucinations. All of my team members successfully passed each required certification exam, which got me 100% on that metric for work, which is great. Uh, so does my theory of learning, where learning tends to occur at a faster pace during production rather than consumption, really work? I'd like to prove the point right now, actually. Uh, just listening to me, the amount that you learn may be limited. The moment that I ask you a question, your brain, much like an AI chatbot, is trained to answer questions. And so it immediately begins to form an

answer. When I ask, "What sorts of tasks can you use AI for?" You might at the surface level be thinking text, image, or video generation. That's great. Hopefully, you're also considering workload automation or security functions. And if I give you a moment, you can think of more ideas on your own. What can you try to do with AI today? Now, since I believe this model works so well, I'm going to try a pop quiz. Do you know how your AI tools work? Do you know how to use your AI tools? Do you know what your AI tools have baked in? Do you know what responsibilities you have in defending your AI systems? Do you know what possibilities are in

front of you? And how are you going to act on your AI responsibilities with these tools? Now, in spite of the alarmist messages, I believe in human potential. So, I'm asking you to go do something great. AI may be changing your world, but you don't have to worry about that, because you have the power to change the world with it. So, go write your own story. Go create your own art. Go learn a new skill. Do something amazing today. Thank you.