The AI Cyber War: Inside the AI Arms Race Between Attackers and Hunters

So, it's time for me to introduce our keynote speaker. This year's keynote speaker is Mike Spicer. He describes himself as a mad scientist hacker with a deep obsession with wireless technology, which is true. He's currently a hacker at Coalfire, and he's the creator of the Wi-Fi Cactus. If you don't know what the Wi-Fi Cactus is, I've got a really good picture of him right here. That's him. And what's also fun is that picture was on the back of the DEF CON 32 shirt. So he's a DEF CON staple. So that's the Wi-Fi Cactus. He works with Black Hat, DEF CON,

SAINTCON, BSides. He's all over the place. He's incredibly good when it comes to anything wireless, Wi-Fi, and also software security and AI. These things are true. His handle is Dark Matter, but I just want to say one quick thing about the first time I really interacted with Spicer. I was at SAINTCON, and he was talking about a VPN vulnerability where you could insert yourself into a VPN tunnel and then see all the traffic going through it. So Mike's giving his presentation at SAINTCON and he's like, "Hey, does anybody want to have their VPN hacked here on stage right now?" And

I was like, yes, because I had a VPN going to my house. I wanted to make sure that I was secure, and I was hoping I wasn't vulnerable to this. And then he's like, "All right, connect to the McDonald's network," or whatever it was. Starbucks. I think it was Starbucks. If you see a Starbucks network here, don't connect to it, by the way, and please turn off autoconnect right now. That's my two cents. So he's like, "Yeah, connect to the Starbucks network." I did. And then he's like, "Here's your IP address." I was like, "Crap." He was able to get in, and I was like, "All

right, so what do we do now?" And he looks over and says, "Come talk to me after. Come to this hotel and go downstairs into the lobby." I'm like, "That's not my hotel," but he's like, "Go downstairs to the lobby and I'll help you fix it." I'm like, "All right, sweet." So I went, because that's what you do: you find a hacker and you go to their hotel. Right? And Mike's there with a couple of other people, and he just walked me through it. He's like, "This is the one thing you need to add to your code. This is all you've got to do, and you're good." And

I was like, "Oh, wow, thanks for that." Right? And Mike didn't just do that for me; he would do that for anybody. So, A, he knows a crap ton, and B, he's a really, really nice guy. That's all you need to know, and that's all I'm going to say before I introduce our guest speaker, Mike Spicer. [Applause] >> Well, for starters, that was way too nice. Oh my gosh. I appreciate that. Holy cow. Let's see if I can log into my computer up here. >> It's password123. >> Exclamation point. >> You've got to use a special character. Yeah. No. And this guy's been awesome. He's

been so supportive. And here's the thing in security: when you get to a situation where it's like, "Oh, hey, maybe I'm vulnerable," asking the question, finding out that yes, I am vulnerable, and having that discussion have been some of the coolest experiences I've had in cybersecurity, along with meeting people from the community. So thank you for sharing that story. I'm just so stoked to be here and talk with you all this morning. So,

>> Did that wake up? >> There we go. Cool. >> Yes. No. Maybe. >> Yeah. Maybe. I don't know. We'll see. Sorry, everyone, give me a second here. How's everyone doing this morning? Thank you for being here at 9:30 in the morning on a Friday. Happy Friday, everyone. All right. Sorry, I'm still a little taken aback by that introduction. I appreciate it. >> Introduction? What? Check. One, two, three. Do I need to go 8 Mile style? >> Yo. Yo. >> I also like to do karaoke, so we're turning this into karaoke now. All right. Anyway, what am I here for? Why am I here

for? One of the things I'm super-duper obsessed with is AI. One thing I love about AI is that it's everywhere, and I see things that I think are interesting, so today I'm going to share some of them with you. I hope you find them interesting as well. I'm very excited about this talk and putting it together. Welcome to "The AI Cyber War: Inside the AI Arms Race Between Attackers and Hunters." The introduction was awesome; thank you so much. A little bit about me: I work for Coalfire. We have a new division called Division Hex, which is our special services division,

which is pen testing. And oh, hey, we've got some Division Hexers among us with hats and everything. Yay! What's up, teammates? Sorry, thank you so much for that introduction. So let's get into it. Oh, one other thing that wasn't mentioned is that I also have a computer hardware storage startup called Apex Storage. I kept running into situations where everything I do uses more bandwidth and needs more speed, and I always end up digging down to the root of the bottleneck. In the case of the Cactus, I couldn't figure out where all the bandwidth was. And

it turns out it's on the PCI Express bus, and storage moved onto the PCI Express bus with NVMe technology. And I was like, "Oh, I want more of that." So I made a device that holds 21 NVMe drives at a time and does over 50 gigabytes a second of reads. So if you need fast storage, hit me up. I have some solutions for you. All right, let me tell you a little bit about myself. I thought it'd be interesting. I know we've got a wide range of skills and people here, so I'll tell you a little bit about my background

and history and how I got here. I think it's kind of a crazy story. It started back in about 2015. I'd been going to DEF CON for years, as was mentioned; the first one I went to was in 2000. Every year I'd go and be like, "Yay, party, fun times, DEF CON, hack all the things." And everyone kept saying, "These are the most dangerous networks in the world. It's the scariest place in the world to have a computer. You bring your personal phone, it's going to get hacked. You bring your laptop, it's

going to get hacked." So that sparked my curiosity. At Black Hat, the first year I ever snuck into Black Hat, I didn't have money. I didn't work for a corporate company that would send me to conferences. In fact, in 2015 I was doing my own startup; we were doing IT services. I'd been IT and cybersecurity adjacent for years. So I was like, how do I get in? Well, it turns out when you have friends who work in places, it's easier to get into those places. In this case, I had a lot of friends who worked in the

Black Hat NOC, thanks in part to another DEF CON group we have in Utah called DC801, which is kind of the first DEF CON group that ever existed. A lot of the people in the DC801 group helped run the Black Hat network and did quite a bit at DEF CON, because Utah, for some crazy reason, has been in the hacker scene since the very beginning. So I show up at Black Hat with no ticket, no anything, and I'm like, okay, but why is this the most dangerous network? Why is all my stuff going to get hacked? So, of course, being me, I put

together a little Raspberry Pi, a battery, and some wireless antennas in my backpack, took it, and started capturing data, and that curiosity spawned a legacy of projects that went places I never could have predicted. I looked at the data and learned immediately that I did it wrong. I screwed up. The data I captured was not very interesting. It was missing things. I was using the wrong tools. I didn't understand what I was trying to catch. Then came 2016, and I was like, "Okay, I'm going to figure out what I did wrong and build something better." So instead of a single Raspberry Pi, I ended up

building 15 boxes with multiple wireless adapters in them, because one reason data was missing was that I was using the wrong tool. I was using airodump-ng, which is very focused on dumping passwords and credentials, and it wasn't necessarily grabbing all the data. I wanted to see all the packets, all of the information. So I built those boxes and got some friends to help me carry them around conferences, install them in walls, and put them in places. Then the next year I'm like, well, that's interesting, but I'm still missing data because I didn't have enough wireless radios. And you're

talking about conferences that at the time had about 15,000 people, and about 22,000 at DEF CON. I still didn't have enough radios, because there were so many people and so much spectrum that I wasn't capturing all the data. I wanted more. I wanted a network tap. I wanted to see every piece of information. That led me to the project that was mentioned, the Wi-Fi Cactus. I wanted to capture every piece of data, so I put together this insane project with 50 radios that covered all the channels in 2.4 and 5 GHz. The problem is that it's big, and it also requires a lot of power. Fifty radios is a lot. So I was like, "Oh,

you know what I'm going to do? I'm going to strap a car battery to it, because that's the best battery source, right?" Yeah, no. Those are like 25 pounds, and you hang that off the back of an open-frame backpack, which is what I built the Cactus out of. It's heavy. The whole thing weighed about 55 pounds. This project was insane. So I'm like, "There's got to be a better way." So, of course, there were optimizations and ways to make things better and to condense it, which led to some other projects, like this project

called the Wi-Fi Kraken. I also condensed the batteries and switched to lithium-ion, because they'd become cheaper as well. All of these iterations led me to another stage: okay, now I have all this data. How am I going to process it? How am I going to see through it? That was in about the 2018 to 2019 time frame. At one DEF CON, walking around with this backpack for about three and a half hours, I captured a terabyte of wireless data, which was insane. That was so much data. And if anyone has ever dealt with

a terabyte pcap file, you know how much you hate your life. So I learned another lesson, especially in 2019, when we didn't have the compute that we have now. So I was like, okay, I'm going to start writing a tool. I built a tool called PCAPinator. PCAPinator was basically a wrapper around TShark that tore pcap files apart into multiple small pieces, then used Python multiprocessing to launch multiple TShark instances to query the pieces in parallel, and then put all the results back together. I was like, oh, that's cool. But then it wasn't enough. I needed still more. So I

got into data analysis and informatics, which led me into machine learning. Because of that work, I started a startup with a friend of mine where we used machine learning and machine vision to identify counterfeit chips, because around that time we started having supply chain shortages. Anyone remember the ship that got stuck in the middle of the Suez Canal? This massive container ship got turned sideways and beached, and it blocked off one of the biggest shipping thoroughfares in the world, and so

that created a chip shortage. What happened is people started building fake chips that were just epoxy and sending them out, and you'd plug them in and your boards wouldn't work. And it's like, okay, this is insane. Why do we have this? We could identify that with X-rays. So I was like, well, if we build a machine that takes X-rays, like the machines used for dental X-rays, take an X-ray of a chip, and build a library of what these chips are supposed to look like under X-rays, we can start automatically using

machine vision to identify these. So I started building and messing with PyTorch. We were way ahead of our time. If I pitched this idea now, I'd probably be a multimillionaire with the best AI startup, but in 2019 people were like, well, if you're not Google DeepMind building AlphaGo and playing StarCraft with AI, you're not doing AI properly. So that turned into a failed startup. We didn't get much traction despite all the cool things. That led me to go work for a company called Secure Yeti, and we were a

contractor for the Federal Reserve Bank. I spent two years doing pen testing inside the Federal Reserve Bank. So I went from an AI startup to the Federal Reserve Bank and got to play with some insane networks. The Federal Reserve Banks also have large numbers of people doing data processing, and I was lucky enough to start working with some of them on their AI and data automation projects. So I was pentesting AI environments, and around 2022 it clicked for me: I want to hack AI systems so badly, because I

see what's coming. So in 2022, when ChatGPT first came out, I was like, "Bye, bye, bye. Sign me up for the demos. Sign me up for all the pieces. I want to be part of whatever this thing is going to turn into." I'd seen what happened in the past. I'd seen how these tools work from writing my own machine learning software and training my own models, to the point where I could see where things were going. I was like, this is going to be exciting. So I was a very early adopter and started using these tools in projects as much as I could. Then that moved to

2024, when I started at Coalfire. One of the things about Coalfire: when I interviewed with them, I'm like, well, I'm kind of a weirdo and I do weird stuff. Well, it turns out the people who hired me are people I work with at Black Hat and at a number of conferences around the world. I've spent a lot of time with these people, and they're like, "We will give you an environment to do your crazy madness." And I was like, "Yes, I'm so excited. I get to do this type of stuff." So now I've done AI pentests on Fortune 500s. I've done pen tests on Fortune 500s. I've done some

crazy projects involving active nation-state threat actors, as well as helping people implement their AI solutions, and I'm currently building AI solutions for our company and testing whether AI can be an asset and tool that increases our pentesting capability. So that's a bit of my journey. It's a little long-winded; that's the long version. That's where we are, that's why I think I can speak to some of these things, and I hope this stuff is interesting to you. We'll go from there. So I

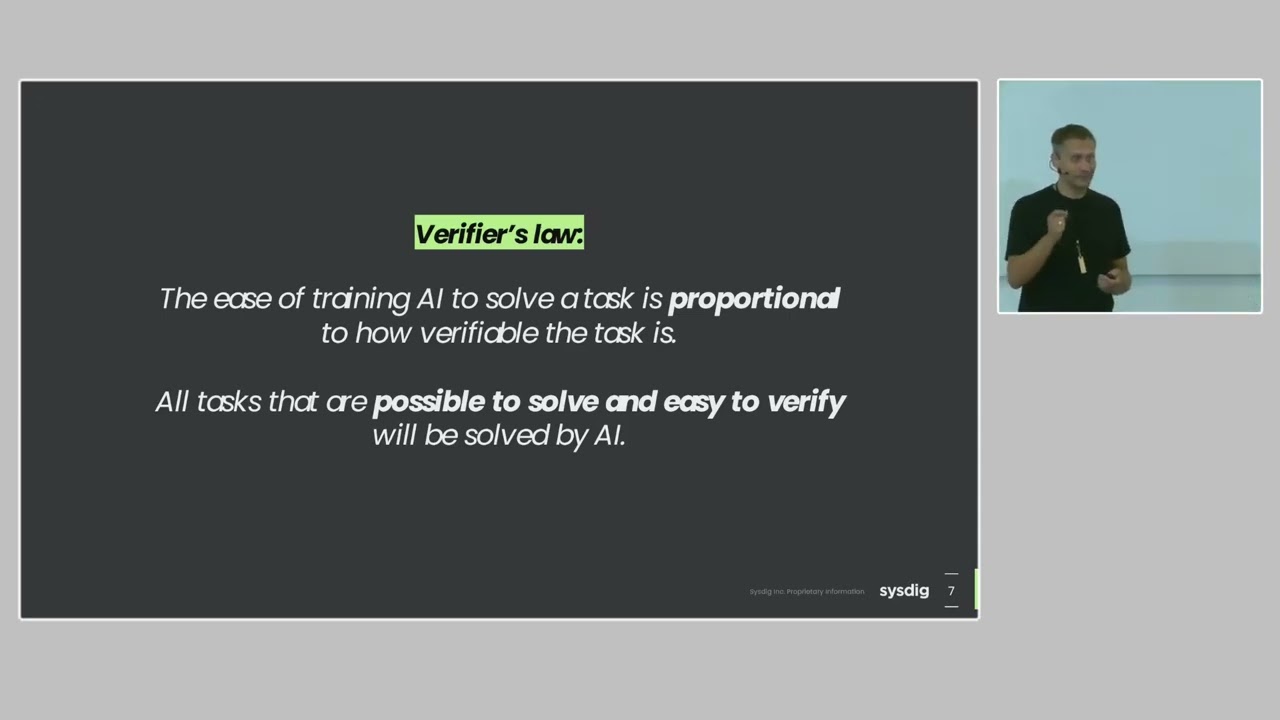

read this quote years ago, and it was re-read to me recently, and I absolutely love it. It's by Dr. Stephen Hawking: "Success in creating AI could be the biggest event in the history of our civilization. But it could also be the last, unless we learn how to avoid the risks. Alongside the benefits, AI will bring dangers, like powerful autonomous weapons, or new ways for the few to oppress the many." It's crazy, and the stakes have never been more real. For example, there are wars going on right now, and things are actively escalating. We have things happening at the nation-state level, and in Ukraine they're seeing more and more cyberattacks, 70%

more in the last year than the previous year. In addition, there are AI-driven missiles and AI-driven drones now that do autonomous targeting. Why? Because jamming is affecting their control and guidance systems, but if you make them fully autonomous, they can operate without interference. We also have nation-state campaigns. AT&T, Verizon, and other networks had threat actors linked to China inside their networks for years. Well, potentially years; we don't know the exact dates. All we know is that they've now been evicted. But have they really? Do they still have back doors, or

still things happening there? We don't know. It's sketchy. On top of that, we have cyber espionage groups like APT44 that are absolutely tied to military operations and to providing wartime advantages. So cybersecurity is absolutely impacting things at a national level. And with everything that's happening in AI, criminals are embracing AI just as fast as, if not faster than, anyone else. There's this thing called WormGPT. It's the number one darknet AI. It has no safeguards and no filters; you can basically have it create whatever you want. And you

can even pay a subscription and get API access to it. There are also a number of ways cybercriminals are using AI to operate extremely fast: they're using it to automate, evade, and adapt. So why does this talk matter? Why am I talking about these things? The point is, AI isn't coming. It's already here. One sec, sorry. It's already here. It's in Copilot. It's in the tools you're already using. If you're using any sort of SOC tools, they're probably saying, "Switch over and use our AI. It's going to solve your security problems for you." Right? So that's the world we live in, and we need

to understand that world. So what's the agenda for today? First, I'm going to talk about the attackers, because I like to spend a lot of time on the attacker mindset and I do offensive work. But we also need to talk about the defenders, because guess what? They're not left out in the rain in this. The defenders have a chance. Next, I'm going to talk about the hype and the reality, because there is a ton of hype with AI. It's literally solved all of our problems already, right? Has AI fixed everyone's problems in here? Yeah. I mean, it's done a lot for Pope, let's be honest. And finally,

I'm going to talk about different strategies you can use to stay ahead of the AI curve, or at least jump on board and get on the roller coaster wherever it's going to go. So let's start with the attackers. I see AI as a threat multiplier. What does that mean? For an attacker and the attacker mindset, it's going to enable them to create malware faster, increasing the velocity of writing malware code. It lowers the technical barrier to entry. In the past, in order to write really good shellcode that would evade defenses, you needed to understand

programming. Now I can take shellcode, put it into ChatGPT, and say, "Convert this into Rust," and it does an okay job, a good enough job. Or I can say, "Take this PowerShell script and turn it into a Python script," and now I've evaded the endpoint detection. It also increases the complexity of payloads, because I can come up with an idea and say, okay, I want to create a reverse tunnel out. Maybe I want that reverse tunnel beaconing to a Discord server, and from there I want it to respond to certain commands that I give it in the Discord server. One of those commands could be, you

know, if all else fails, I want it to encrypt the hard drive if I'm going to do ransomware. I can describe all of those pieces together in Cursor and have the whole malware built, and I've never written a Discord bot before. I don't need to know how to do that, because I have access to tools that will. On top of that, there's agentic AI. Who knows about the agentic revolution? There are agents everywhere, and the agents are going to do more damage, because essentially, if you don't know, the whole agentic thing just means that you're running something

on something else: a computer, a shell, another LLM, an MCP server. So essentially what you've got is one thing controlling multiple things. Well, guess who knows how to do that very well? Cybercriminals. They know how to do that very well with botnets. They're already super well versed in making things control things. So with agentic AI, it's only going to get worse, because they'll be able to automate and run commands much faster with that lower technical barrier. And finally, AI that attacks on its own. I'm going to show you an example of that from what we saw at

DEF CON this year, where AI can attack and do its own thing completely on its own with very minimal human interaction. You're talking about launching attacks fully autonomously. My number one go-to is coding. Even before AI came out, I spent a lot of time writing payloads in Python and writing shellcode in assembly. I've done quite a bit of reverse engineering over my career, and I would use Python to drive reverse engineering tools. Code is the language of computers, and a lot of the time, of computer security. I don't know if you'll notice in this picture, hopefully it's large enough for

everyone to see. This is a very dynamic environment; there's a lot going on here. This is Cursor running Gemini along with Claude Code. So, thank you. That's how I AI: I go three levels deep. >> We'll get to that. Pope's trying to do some spoilers. I have a whole tier list of why that's not... Anyway, I do things in the most crazy way possible. So guess what? When you run Gemini and have it modify code inside Cursor, at some point Cursor will be like, "Hey, guess what? There's another thing modifying my code," and it will

detect that and get angry at you. I don't know if you all knew that, but it's something I've learned from some of the stuff I do. Anyway, the point is that there are coding tools that let attackers create code super fast. Here's an example of low technical barriers. This was from April 2022 on an underground hacking forum. Someone is basically saying, "Hey, I went to ChatGPT and had it create this PowerShell script for me." And they're like, "Look how cool it is. Look how smart this is, and look how effective this is going to be

for infostealers." It's like, "Oh, wow, now they're starting to share and show that off." Let's talk about increasing complexity. Increasing complexity is the thing that always makes it harder for defenders. Back in the day, what did antivirus focus on? Signatures. It needed a file hash, some piece of information it could detect. Then we went to EDR. What does EDR do? It tries to look for behaviors. But ultimately, what does EDR look for? Mostly known types of attacks. And now we're getting into this new MDR and XDR and QDR and LDR, and I don't know what all the other acronyms

are, but essentially the whole idea is that they want to move to behavioral analysis because things are getting more complex. As an attacker on pentests, when I've gotten in, I've gotten my PowerShell running. That includes red teams where I'm physically breaking into a place, plugging in an O.MG cable or a Rubber Ducky, and running a PowerShell script. For example, I was on an engagement and plugged in, and Cortex was like, "Uh-uh-uh, you didn't say the magic word." I was like, "Dang it, come on, Cortex, let me do this thing. I'm just trying to pop a reverse shell." So then I just went and changed a few of

the variable names, changed one of the PowerShell commands (I don't even remember which), and then encoded it in Base64, and that was enough for Cortex to say, okay, you're cool. Now with AI, I can take that same script that doesn't work against Cortex and say, okay, let's polymorph this another level. Instead of Base64, let's do, I don't know, a Caesar cipher. Let's write our own Caesar cipher for it, because who's going to expect Caesar? Or even better, let's encode it in Morse code and then have a Morse code decoder

built into it. Now your malware is built with Morse code, so all you see is dots and dashes all over this PowerShell script, and it's like, oh, this looks benign, I don't know what this is. It's easy to create that type of stuff. You can literally type into Cursor, "Turn this code into a Morse code encoder and decoder," and your victims are none the wiser. So let's talk about agentic pipelines. At Black Hat this year, one of the most awesome things I think came out of the talks was a researcher who demonstrated

a zero-click attack, requiring zero interaction beyond having a prompt injection in a Google Doc. The Google Doc contained the prompt injection, and they had their agents set up to read that Google Doc. From there, they were able to get a reverse shell from a prompt injection in a Google Doc, which is crazy to think about. Now we're at the level where you have to think, okay, not only are the words in my Google Doc important, but if it's attached to an agent, a prompt injection could happen that does further damage down the line. So what AI has done is create a threat for us that is an end-to-end problem, because you have the baseline threat, but it can also be built into the agentic pieces. Super-duper scary stuff.

And I promised I would talk about autonomous AI attacks again. At Black Hat, excuse me, at DEF CON, there was this really cool challenge called the AI Cyber Challenge. The AI Cyber Challenge was put on by DARPA, and it essentially pitted teams against each other to build autonomous AI, using large language models and novel techniques, that would take known software with vulnerabilities, analyze it, write exploits for it, attack other systems, and write patches to defend it, all at the same time. In addition, the team that took second place, oh, their

name... give me a second, it'll come back to me. The team that took second place completely open-sourced all of their tooling. It's called, oh no, I just forgot. Butter-something. I don't know. Crap, my brain. Anyway, they completely open-sourced it. So if you search for the AI Cyber Challenge and look for the open-source tools, there's an LLM exploitation framework they've released that you can start playing around with and run on open-source projects. Part of the thing is that it does source code analysis, so it needs access to a source code repository. But

from there, you're talking about a new level where AI is doing source analysis, looking for vulnerabilities, autonomously writing an attack script to prove the code is actually vulnerable, and then on top of that rewriting the code so it's patched. Trail of Bits, those are the folks. And the name of the tool has "butter" in it, I know. >> Buttercup. >> Thank you. See, I promise I don't get stage anxiety. Just kidding. I'm deathly nervous up here. You all are scary. Just kidding. No, thank you so much. That was an incredible showcase. And not only that, DARPA always brings the money. I don't know if

anyone saw screenshots, but they had this whole LED-lit display with all of these panels, and people there providing explanations. It was rad. Go search YouTube for videos of anything involving the AI Cyber Challenge; super cool tech, super cool stuff. All right, let's get to the good stuff, because let's be honest, the attackers are cool, but screw those guys. It's all about the defenders, right? Long live the defenders. I wouldn't have said that a year ago, but now I technically work in defensive services, so that's where I am. Okay. All right. So here's my... how much time do I have left?

>> Okay. Okay. Okay. I'm just checking. >> Also, if you're bored, you're like, "Ah, Mike, bro, come on, let's go." Just kidding. Okay. Honestly, at this point, from a defensive standpoint, traditional defenses do not seem to be enough. I think we need to do more. We need to look at things in new ways. Why? Because we have alert fatigue, evolving threats, and the spread of deepfakes. What are these things you talk about? With alert fatigue, the number one thing that I see, and I'm sure my cohorts would agree, in a lot of

organizations is that they're under-resourced. There are never enough SOC analysts. There are never enough defenders. There's never enough security staff to do all the jobs that need to be done. And a lot of the time, those security staff are also wearing multiple hats, right? Because organizations don't have incredible budgets. Their budgets are very refined, and on top of that, security in most cases is a cost center, right? Nothing comes from having security other than continuing to pay into security. So what does that mean? It means your DevOps person is also doing security, and they're going to be looking at not just the alerts from the syslogs and what's happening, but they're dealing

with tons and tons of all sorts of information. So they're getting overwhelmed. Also, the threats are getting more and more complicated, and they're coming in at a much more rapid pace. Companies that weren't even being attacked in the past are getting knocked over daily. If you don't believe me, go look at BreachForums, or any site that hosts the leaked files of organizations that got hit with ransomware. It's fascinating to click through and see what files were leaked and who's been targeted. It ranges from mom-and-pop real estate companies to companies building plastic fittings, to agricultural companies, to trucking companies. It

doesn't matter. They're going after the low-hanging fruit. They're going after anyone they can get into, because it's a revenue stream for these attackers. And on top of that, now with AI it's hard to distinguish which videos are real and which are AI-generated. A few years ago there was the example of Will Smith eating spaghetti. It's like, "Oh, yeah, that's funny. That's awesome." And that was the worst it was ever going to be. Now there are videos online that you would never know are entirely AI-generated or fake. I mean, I've been duped. There were some movies that I wanted to have happen

that turned out to be AI and don't exist, so I'm super sad about that. So, yeah. It's a crazy time that we're in. And I'm going to say it again: right now, what you're seeing is the worst it's ever going to be. It's only going to get better. Okay, so let's talk about the alert fatigue example. I want to talk about a specific case we dealt with recently, where a company supposedly has all of their information going into one place. It's all being thrown together and aggregated, but it's also being mixed in with syslogs, which in Linux, if you have Linux

syslogs, it's whether the computer booted, whether services are coming online, whether your disks are good, whether your temperatures are good. It can be so much information that has nothing to do with what you're doing. And if you don't have aggressive filters and aggressive alerts, you will end up in a situation where you're just inundated with information. On top of that, in a lot of organizations the CTOs and CISOs go to the vendor hall at Black Hat and it's like, "This AI tool is going to solve all your problems." They're like, "Sign me up. I'll pay half a million dollars to have that tool integrated

into our environment." And then they go to tool B: "Hey, I'll get that tool too." And in some cases the tools overlap. So you see Microsoft environments running Active Directory, and they have Sentinel and get a certain level of coverage, but then it turns out they don't know how to use Sentinel or they don't understand the subscription features. So they're like, "Oh, well, we also want additional protection, because Sentinel is not enough. So we're also going to do CrowdStrike," or any number of other tools, right? And so you end up with all these disjointed sources of data. And if you're not

aggregating and putting that information together, you've got problems. You've got huge problems. And on top of that, the limited resources in these environments create a massive problem. So what could be done in those situations? Number one, you can fight fire with fire. We can start arming our people with AI. We can start giving them the tools. One thing that is super effective in those cases is that you can actually write alerts and rules in human language. So in the example I was giving, suppose you have all of these different tools: you have Sentinel, maybe you have Splunk, and you have all these other

tools. You can literally just ask AI, "Hey, what are the top 10 things I should have alerts for in my systems? And can you give me those queries?" And it will probably give you something. And guess what? That's probably a better start than the default, built-in canned ones, because it's giving you something based on the language you give it, and you have knowledge of your environment. You know what is going on inside of your network. So start there. Start asking it what you can put into your SIEMs and what you can put into alerts. And then on top

of that, if you want to level that up, ask yourself: maybe you're in the aerospace vertical, maybe you're in a retail vertical, maybe you're in a vertical that's being targeted by a specific group, or there are threat actors currently doing ransomware. You could say, okay, we're really worried about ransomware at our organization. What's the number one way ransomware is getting in? And it's like, okay, well, usually it's through phishing. On top of that, they're running some crazy PowerShell to get access, and then they're pivoting across the network. Every single one of those actions creates logs and pieces of information that can be

queried if you have EDR, if you have logs, if you have that type of stuff. Then you can ask it, start getting rules, start adding alerts, and start building out your data. Next up is threat prioritization. I've sat in many rooms with people where it's like, we don't even know where to begin. We are so overwhelmed. Our list of things we should go after is massive. How do we even get going? How do we even start on this? Have a conversation with your copilot. Tell it, these are the things we're overwhelmed with. These are the

things that we're worried about. What do you recommend? And it may give you garbage. It may say, well, you need to enable two-factor, you need to enable this, and you need to do that. It's like, well, we already have that. Okay, tell it that. Be more specific. Ask for more answers. I'm not saying that AI is solving all the problems, or that you can just deploy an agent in your environment and it fixes all of this. What I'm saying is that you can level yourself up with AI, get access to resources, get answers, and be more effective. And finally, the next piece

that always makes things more effective is task automation. Ever since I started in Linux, the whole point of writing shell scripts was that I wanted to replace myself at my job with very, very basic shell scripts so that I didn't have to do my job anymore, right? That was my start in IT. It was one of the biggest jokes back in the day: oh, I'll just replace these people with shell scripts, automate everything with cron, and everything's happy, right? Now we can do a lot more of that, way faster. So look at the repetitive tasks in your day-to-day that are annoying, that are

causing you problems, and figure out if you can write scripts to do them for you. In a lot of cases, just pulling log data and getting access to that information is so time-consuming, and getting it into a place where you can actually type a query can be so time-consuming. But automating access to that information will pay exponential dividends. All right. So let's talk about alert fidelity using a case study. One of the things I mentioned, and I think it was on the first slide, is that I'm a part of Black Hat, but I didn't really talk too much

about it. So I'm a lead at Black Hat. I'm a co-lead. I'm a sub-lead. I don't know exactly what our titles are, with Pope. I'm whatever I want. I own Black Hat. I'm in charge of all of Black Hat. Pope said it was true. And Grifter and Bart are not here to tell me no. So at Black Hat, I work in the Black Hat NOC. And honestly, it started because I kept showing up and saying, "Hey guys, can I run these boxes? Can I leave my Wi-Fi Cactus here for a few hours? Can I collect data?" And

they're like, "Dude, stop screwing with us. You are our biggest threat. Come do work for us." I'm like, all right, all right, I guess. And that happened about three or four years ago. They're like, yeah, come actually do work and let's see if we can make things better. I was like, all right, game on. Let's do this. So one of our missions is, first, we provide the network. We tear everything out. I don't know if anyone is familiar with the Black Hat network, but we think it's very hostile. Not only that, we have trainings, just like there are trainings here. So people are learning hacking tools

there. A lot of times these are world-class trainers talking about some of the bleeding-edge attack methodologies and defense methodologies, and people are paying between five and six grand for these trainings. Our job is, first, to make sure they have a stable network, but on top of that, we need to make sure they're doing it safely, because things that would be scary in anybody else's environment, like attacks going out to the cloud, might be demonstrations. They might be different types of proofs of concept. They might be trainers doing demonstrations for their class, or it might be students doing attacks. So we

have to look through an inordinate amount of threat data, so many alerts that they would probably make anyone in a typical SOC puke, and find out whether there are real threats in there, because we do have people who come to Black Hat who want to do real harm. We've seen people come and specifically target other people. There have been cases where we've seen a private investigator following an employee at Black Hat, because they thought the employee had left the company on bad terms and was stealing company secrets. Turns out that investigator was not good at encrypting their data. So we were able

to see all of the files and pictures and communications he was having with his employer. These types of things are absolutely insane, and we deal with just so much data because of this. And here's what we've done. Side note: that's a friend of ours, Jimmy from Palo Alto Networks, one of our super friends from the Black Hat NOC. We have a partnership with a lot of different vendors and companies, Palo Alto Networks, Cisco, Arista, Corelight, and some others I can't think of off the top of my head, and we all work together. We end up with a team of, in the US show,

about 60, or was it 70 or 80 people? We have the best-case scenario you could have for defenders, the smartest minds. We have people from Palo Alto Networks' Unit 42, people from Cisco and Splunk, and some of the smartest threat hunters from Corelight, some of the smartest threat hunters I've ever met in the world, sitting next to each other in a room, and competitors nonetheless. We are looking for threats, and even we are overwhelmed. We only have so much time to look for threats and look for that crazy behavior. So, long story extremely long: this year we did something I think that we have

never done in previous years, and I think Pope's talk coming up here is going to do a deep dive into this. We had a full AI pipeline helping with our alert triage, so we would have high-level alerts saying, this is an attack. This is somebody launching a payload. This is a known payload. But guess what? It's a training class. Somebody is learning a new tactic here. And we close it and say, we're going to let that behavior fly. That's fine, because that's a "Black Hat positive": it's a positive identification of a threat, but we're going to let it go on the network

because it's allowed behavior, maybe even expected behavior. So we used AI at this conference to look through all of those alerts and help us sort them out. Then our threat hunters, that team of 70 to 80 people, are only looking at the most interesting and craziest attacks happening on the Black Hat network, so we can be much, much more effective. I think this year was the pinnacle: we had never done this amount of threat hunting, we had never closed this number of issues, and we had never looked through and found this level of threats. So, super awesome stuff that we're doing at the Black Hat NOC. So

there's a wrap-up talk every year. The one for Black Hat USA isn't out yet, but look for it; it's going to have some cool info and good insight. Your organization could very soon have a similar workflow, where AI is helping you get that higher level of alert fidelity. So let's talk about skill multipliers for defenders. Number one, it's helping with investigation acceleration. I've cited all the sources down below, and don't worry, I'll make the slides available so everyone can have them. These are different examples of where in the world right now there is actual

impact happening and being measured. People are putting KPIs on AI to make sure it's doing its job and doing what it's supposed to. So it's enhancing investigations. A lot of what was traditionally manual analysis we can now automate, so we can catch up and look through things faster. This study specifically says it's increasing responders' effectiveness by 55%, which is crazy. That's more than a 1.5x boost to responders' ability to work through investigations. It's also reducing costs. It's making breaches cheaper. Organizations that have had AI and AI tools

in place when they were breached are seeing those breaches cost less on average. A lot of that cost reduction comes from the fact that these organizations are already mitigating a lot of the damage to begin with, because their tool set is more advanced. It's also reducing false positives, which is kind of like alert reduction. A lot of alerts are false positives, and we spend a lot of time fighting with them, so SOC analysts are seeing a huge reduction there. We're also seeing gains in detection effectiveness, because we're looking more at behavior analysis. I don't know if any of you remember back in the olden days of early email

when we had SpamAssassin. I mean, SpamAssassin still exists, but back then spam got through no matter what. It was just happening every single time. Spam is still bad and still a problem, but spammers have to be more creative to get it to land in your inbox, because we've approached that problem from lots of different levels. I think the same thing is going to happen with malware analysis and malware detection, which ultimately is going to lead to a reduction in response time overall. So, super cool stuff. All right, let's talk about reality versus hype. My favorite part. This is ThePrimeagen. If you're a coder and like funny

coding people, he's hilarious to follow. I always like to check in on his statement:

"We're 27 months into AI completely stealing all of your jobs." And the one thing that popped up just recently, with GPT-5 coming out, is people saying, "Oh, AGI is here. AGI is here." So yeah, there's a bell curve: people on the bleeding edge who think this stuff is happening tomorrow and taking over, people in the middle who are more skeptical, and people who are like, I'm never using AI, I'm never touching it. I don't know where I fit on that curve. It changes day to day, and it depends on

the hype cycle, right? So, right now, I'm feeling kind of hypy. So, I think I'm more on the uh I think AI is changing the world stuff. All right. So, one thing I think that's important um is to talk about is uh this thing called the paro rule. So, paro rule is this idea that 8020. there's 8020 and it's just generalized. These numbers don't have to be exactly accurate, but in general, you can apply this principle to a lot of things. And I think you can apply this rule to AI and a lot of the hype in AI because the initial 80% of accuracy is relatively AI for uh AI to achieve uh using large data sets. This often

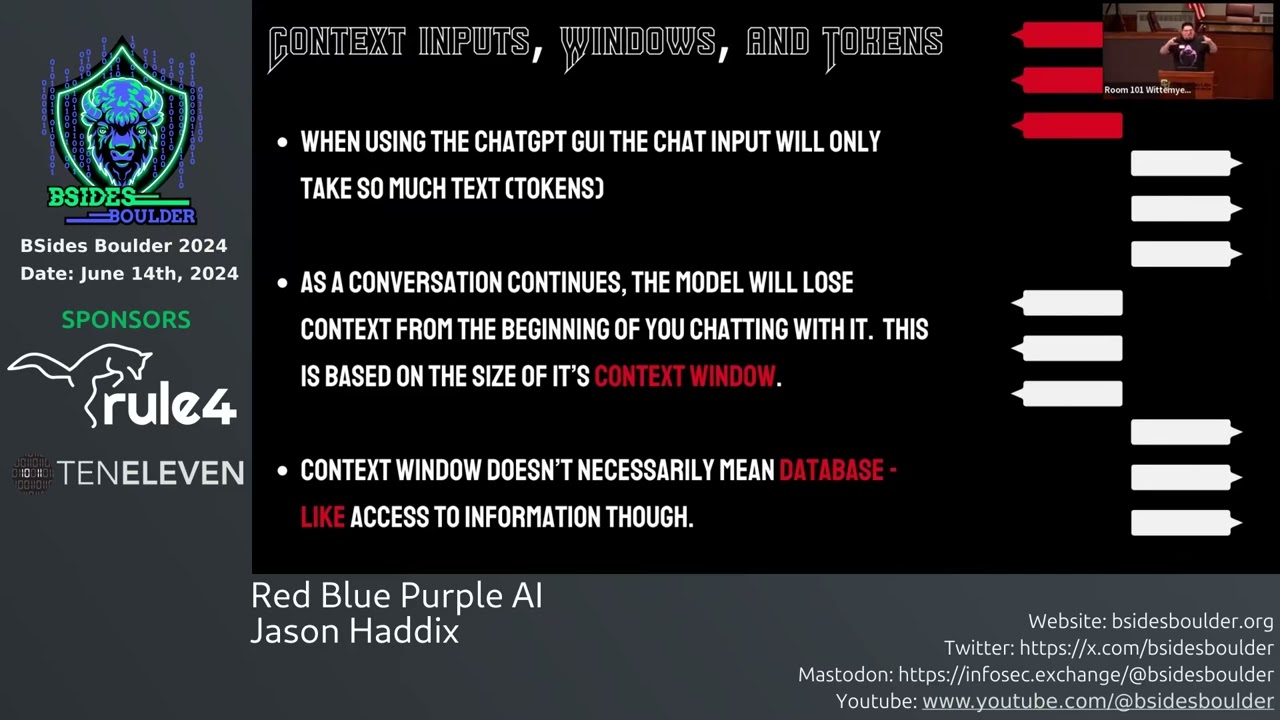

creates a false sense of security that the task is nearly complete. However, that final difficult 20% of accuracy where uh AI um is encountering the following. So, my idea is that we're at that 80% or maybe not that high, maybe less than that, but we're in that that window and now to get it the rest of the way is going to be extremely difficult. And the reason why is because there's edge cases and outliers. We have all sorts of uh missing specific context, data quality issues, and data bias. So, how do I know that? Well, I know specifically where it falls short. If you want to go find Splunk queries in Chat GPT, in Claude, in Cursor, in in

tons of LLMs, cool, you're going to find them. No problem. Splunk's going to be there. Why? Because Splunk's query language is one of the most widely used, and it has examples all over the internet. You want to do Sentinel? Sure. Microsoft has documentation for days and has been very open about it, and you can even test it. Go use CrowdStrike Falcon FQL, and it gets a little more dicey. You're not going to get results that are as accurate. It's going to miss some of it. Why? Because it's going to return Splunk queries, because that's what it knows. When you get to these edge cases, that's where AI falls apart. So if you want to test something that's an AI

product and see if it's hype or not, take something you have very specific domain knowledge about, something you are an expert in, and ask it about that edge case, that weird fact, those weird things that only you know. I guarantee you will find the limits of AI very fast. I'll give you another example. XBOW: have we heard about XBOW? XBOW is this crazy company that popped out of nowhere, kept rising up the HackerOne leaderboards, and all of a sudden it was number one on HackerOne. Whoa. And then they came out and said, all of our stuff is AI. We are

using AI to solve all of the problems on HackerOne. The bug bounty problem is fixed with AI. That's literally what they came out and said. The problem is, if you get into the minutiae and the details and add up those numbers down at the bottom there, that gets you to over $100 million of investment. You've solved about 1,000 bounties with over $100 million. On top of that, if you actually look at the bounties they've solved and submitted, their reputation score is 17 out of 50, which indicates mostly low and some medium bounties. If you look at the top bounty hunters on HackerOne, their

reputation scores sit higher than 40. So this is a tool that's solving the low-hanging fruit. Thus the Pareto rule: it's solving the easier things, the things we're already catching, which is good. It's a good solution, but that's a lot of resources, a lot of technical capability that went in to solve a limited skill set. And honestly, I'm giving XBOW a lot of crap here. They're doing stuff and moving it forward, and I think the automation's awesome. I just think the way they did it was kind of unfortunate for the community. They could have worked with the bug bounty community a little bit

better. Now they seem to be trying to build some of those bridges since Black Hat, through some of their correspondence and working with companies. Ultimately, I think we're going to have AI tools that are able to handle this low-hanging fruit, and XBOW is an example of that. However, they said it solves all of these problems, and when you get into the minutiae of it, it does, with an asterisk, right? All right. Where are we at on time? 10 more minutes. All right. Let's go. We're good. Here are some warning signs that you might be looking at snake oil. Are they

overpromising things? Are they saying that it's literally solving the world's problems? Is it doing everything? On top of that, is it a wrapper on an existing tool? There are so many tools that say, we're training on our own data, we have our own threat intel, we have our own XYZ. Then you're like, okay, can I just get access? And they're like, yeah, you just have to go log in. Then you do right-click, Inspect, and it's like, oh, hello ChatGPT, or hello Claude API. So you're literally just a wrapper on top of something. You're maybe a system prompt and a wrapper. That's a huge red flag

that they're probably overstating the technical capability of what they're doing. On top of that, one of the things we deal with in AI, and especially in compliance, is that we want to know where the data flows. Can you tell me how data goes into your AI and where it's being processed? Do you have agents? What data flows into your agents, and where does it go from there? If they can't give you data flow diagrams of how their system interacts, that might be a big warning sign. All right, now let's talk about staying ahead. We're on the home stretch here. I found this graph, and this thing

just blows me away, because you think about it and it's like, well, AI is pretty incredible. It's been two years since the Will Smith example I talked about, and now it's creating much more insane videos. But when you take just 1900 to now and think about it, whoa: before about the 1950s, we didn't even have computers capable of doing much of anything. We didn't have machines that could do computation; people were doing things by hand. It's incredible. So what does the future hold for us? Well, I don't know. It's hard to say. Is this a

hype bubble? Is this the next dot-com bubble, or the cloud bubble? Are we in that? Maybe. Maybe. But there are definitely some things about this hype cycle, this AI trajectory we're on right now, that seem to be making real-world impacts. So it's going to be really interesting to see what our kids' lives are like, right? And further out, their kids', and what it's all going to look like with AI, because it's really insane. So what can you do today to get started? Whether you're an AI skeptic or already an AI enthusiast, I hope I've got some things here that are interesting to you. So, number one, this

is what I say in every talk I've ever given, and it's literally what started it all, if we go all the way back to the beginning, when I was telling you about my path. Number one, be curious. Be freaking curious, and ask questions. Why does this thing work? How does the AI do its thing? Why is it giving me this answer? Why can't I get to this piece of information? Why are they blocking me from it? Curiosity is the best way to approach AI. Question all of the pieces of it. Question where the data is going. Question whether OpenAI is saving your data and training on it, all of that.

Then there are two routes from here. I grew up in rural southern Utah, pretty poor, so I always immediately have a "how can I do this for free or for cheap" mindset. That's the very first thing my brain goes to, because commercial tools have always been out of reach for me, and I've always worked for companies where I've been the guy who has to scrape it together as cheaply as possible. So if you go the commercial route and you're lucky enough to do that, what I'd recommend is to get into dev tools. Get into dev tools that will help

you: Cursor, Windsurf, Devin, if your company will pay for it. Then on the agentic side, get into Agentspace, which is a cool new tool from Google that does agentic stuff and lets you integrate with a lot of different pieces. And then n8n, if anyone has heard of n8n: it's a workflow automation tool where you can build autonomous agents, add APIs, connect different pieces, and even run it locally, which is why I mention it down here in the local LLM section too. So you can run it in the cloud or run it locally. There's a lot of really cool stuff there. I'd love to talk to you

more about it after this talk if you have questions on that. On the other side of this fence, you have local LLMs. I try to run things locally. Why? Because I know what the hardware is doing. I know where the code is. I know how it's running on my computer, and I can control the cost. It's an upfront cost, but it's not that much. If you just want to get started, I would say go after the Mac minis. Mac minis are probably your best bang for your buck right now. You can get a lot of compute out of those. Look for a 16 GB or 32 GB Mac mini. Make it your

little AI computer, and you can do all sorts of crazy stuff with it. Depending on what you can find on sale, it's about $500 to $800 to get started. And there are fully open-source tools that let you do the same thing as Cursor, like the Void editor. The Void editor is awesome. Go check it out. It's a fully open-source editor that lets you do Cursor-like activities with fully open-source frameworks. On top of that, there's a programming library called LangChain, which I've been using since about 2022

when it first started coming out, because we wanted to start chaining pieces of thought together with AI. Now it's the agentic revolution: it's implementing all of these agents and function calls and running things in the terminal. So go check out LangChain if you're a developer. Otherwise, use n8n. n8n puts a GUI wrapper around those, so you can connect up functions, connect them to your LLMs, and connect them to API calls. It can even go scrape your Gmail for you if you want it to. Well, don't use Google. I keep saying Google. Dude, I'm sorry. I love Google. All right.
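LangChain and n8n are the tools named here for chaining steps together. As a rough, standard-library-only sketch of the same "chain pieces of thought" idea against a local model (this is not LangChain's or n8n's API, and not the speaker's setup), the Python below posts to Ollama's `/api/generate` endpoint and feeds one answer into the next prompt. It assumes Ollama is running on its default port, 11434, and that a model tagged `gemma:7b` has already been pulled; the prompts and the `chain` helper are hypothetical illustrations.

```python
import json
import urllib.request

# Assumptions (not from the talk): Ollama is listening on its default
# local port, 11434, and a model tagged "gemma:7b" has already been pulled.
OLLAMA_URL = "http://localhost:11434/api/generate"
MODEL = "gemma:7b"


def build_request(prompt: str, model: str = MODEL) -> dict:
    """Build the JSON body for Ollama's /api/generate endpoint.

    "stream": False asks Ollama to return one JSON object with the whole
    answer instead of streaming partial chunks.
    """
    return {"model": model, "prompt": prompt, "stream": False}


def generate(prompt: str, model: str = MODEL, url: str = OLLAMA_URL) -> str:
    """POST one prompt to the local model and return the generated text."""
    body = json.dumps(build_request(prompt, model)).encode("utf-8")
    req = urllib.request.Request(
        url,
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(req, timeout=120) as resp:
        # The non-streaming response carries the model's text in "response".
        return json.loads(resp.read())["response"]


def chain(log_line: str, ask=generate) -> str:
    """Hypothetical two-step chain: explain a log line, then turn that
    explanation into a plain-English detection idea. The first answer
    is fed into the second prompt.

    `ask` defaults to the local model but can be any callable that takes
    a prompt string and returns a string.
    """
    explanation = ask(f"Explain what this log line means for a SOC analyst:\n{log_line}")
    return ask(
        "Based on this explanation, suggest one detection rule idea "
        f"in plain English:\n{explanation}"
    )


# Example (needs a running Ollama server, so it is left commented out):
# print(chain("powershell.exe -NoProfile -EncodedCommand <base64 payload>"))
```

Because `chain` takes the model call as a parameter, the same two-step flow could be pointed at a hosted API or at a stub for testing without touching the chaining logic.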

>> All right. So what else can you do today? We're wrapping up; we're getting close to the end here. I put this in a tier list in a past presentation, and I don't know if tier lists are cool anymore. Are we past tier lists now? Anyway, I'll just give you my list of what I think is awesome right now, what's working best for me as a cybersecurity practitioner, software developer, and all-around hacker. Visual Studio Code plus Claude Code: that has just been money. However, you do have to pay subscriptions. There are API call fees and other costs that go along with it. And I get rate limited

all the time. So when that fails, I'll go to Claude inside of Cursor. Luckily, work pays for me to use Claude inside of Cursor with Max Mode. If you haven't ever experienced Max Mode, go play with it. How long did I have your account, Pope? Pope gave me his account, I started vibe coding some stuff using Max Mode, and then I went to other things that weren't Max Mode and I was like, these tools suck. These are awful. But don't pay for it if you don't have the money for it. I wouldn't pay for Max out of my own

pocket unless I was actually making headway with it. The next one is Gemini 2.5 Pro with Max. That thing's solid. It's been great. If you don't have Max, I think Gemini 2.5 is the best, because it has the largest context window, so it can analyze a lot of your codebase. Gemini, they're doing cool stuff. I know, I know, we have the Google hater, but >> Yeah, but you have to enable it, and then you have to change your project, and there's a bunch of things with it. They did it out of the gate. All right. No, that's true. Sonnet does have a million-token context window. So you can get that larger context window

because people are trying to analyze more information. Next up, I would say Gemini CLI, which is basically Google's version of Claude Code, plus Visual Studio Code. Again, my background is computer science. I graduated from Southern Utah University, so I'm always in a code editor at some point, even when I'm on a pentest. Code editors are my friend. Oh, and a really cool new one that I don't think anyone knows very much about: LLxprt Code, a fork of Gemini CLI whose focus is connecting it to other APIs, so you can connect it to local LLMs. So I've used it

with my Ollama models. You can also hook it up to hosted APIs. So you get all the juicy goodness of the Gemini CLI, open source, with whatever model you want behind it. Pretty rad stuff there. Then, lowest on my list is Cursor Auto. I just haven't had a lot of success with Auto. It keeps fumbling, I run in circles, and I get frustrated. And finally, GPT-5 is, in my opinion, the worst out of all of these. This is my experience, so take it for what it's worth. All right. A couple of other tools I think are really awesome and want to shout out. There's this thing called T3.

You should check it out. You can even do some free queries; I was doing that while sitting here in the audience. Maybe you can jailbreak it. No, don't do that. Don't do illegal things. It's created by a guy named Theo. He's a Twitch streamer, and he competes in contests at DEF CON. I've had the opportunity to meet him a few times. He can be interesting. He's got some very strong opinions, but he is radically excited about very fast React apps. So for anyone who's into web UIs and frontends, T3 Chat is the fastest interface for talking to tons of

different LLMs, and it's only eight bucks a month. So if you're looking to test a large range of models, from DeepSeek to the open-weight one OpenAI just released (I forgot its name), you can test all of those right in that platform and compare their responses in a ChatGPT-like interface. It's lightning fast, super cool, and gets you access to even some pro models. Another cool one on here is Msty. I don't know if anyone's heard of Msty. I use it to type one chat and send it to, in some cases, as many

as 10 different LLMs I attach it to because I want to see how good it does in my local LMS like DeepSeek. I do like uh Gemma uh 7 billion parameters, but then I'll also run it against Claude and do Claude sonnet. I'll have it do open a open uh open uh open AI's chatgpt 5 and have that API tied into it and I can have those all launch at the same from one studio interface. Super cool tool. Definitely check that one out. If you don't like the idea of uh having um to pay a single company like because right now every single one of these is a subscription I pay to every single one

of these. I'm currently at about $700 to $1,000 a month on AI subscriptions, because I pentest for my job and do these things daily. So I need to know these things, and I need to mess with them. Plus, they're all cool and I want to try them. I want to try all the things. So OpenRouter gives you API access to tons of different LLMs through one source. For example, when DeepSeek first dropped, they were one of the first to offer it through their API. All right, dude. We are right on time. All right, key takeaways. We're

going to run through these real fast. Number one, AI is here. It's not going anywhere. Probably every one of your companies' leadership has said, we're reducing headcount or reducing costs, we're implementing AI, tools are replacing roles. So deal with it. That's what we've got to do. Become an expert at it. Use it. Figure out what it does. Even if you're a skeptic, figure out how to break it. Show where its shortfalls are. Show where it doesn't make sense. Explain to leadership where it sucks. It can enhance your skills. It's enhanced mine. I've vibe coded some crazy projects; go check out the next slide and I'll explain. It's a

network monitoring tool called Vibes that gives us a pew-pew dashboard at Black Hat. It was based on an earlier tool, and Logan was actually the birthplace of the original. Why did I just forget its name? OIP. OIP. A network monitoring tool called OIP that had little things. So I rewrote it in React and Go using vibe coding over this summer. That was my summer project. And attackers are using AI whether you like it or not. They're using it. They're creating very customized spear phishing. They're using it for deepfakes. They're using it for

malware, things like that. Ultimately, see through the hype. There's definitely some hype to see through. Just like with any product there's ever been in security, there's going to be hype and there's going to be snake oil. As security practitioners, we can see through that, find the substance, and figure out where the real value lies. So be focused on that. And with that, thank you so much. [Applause]