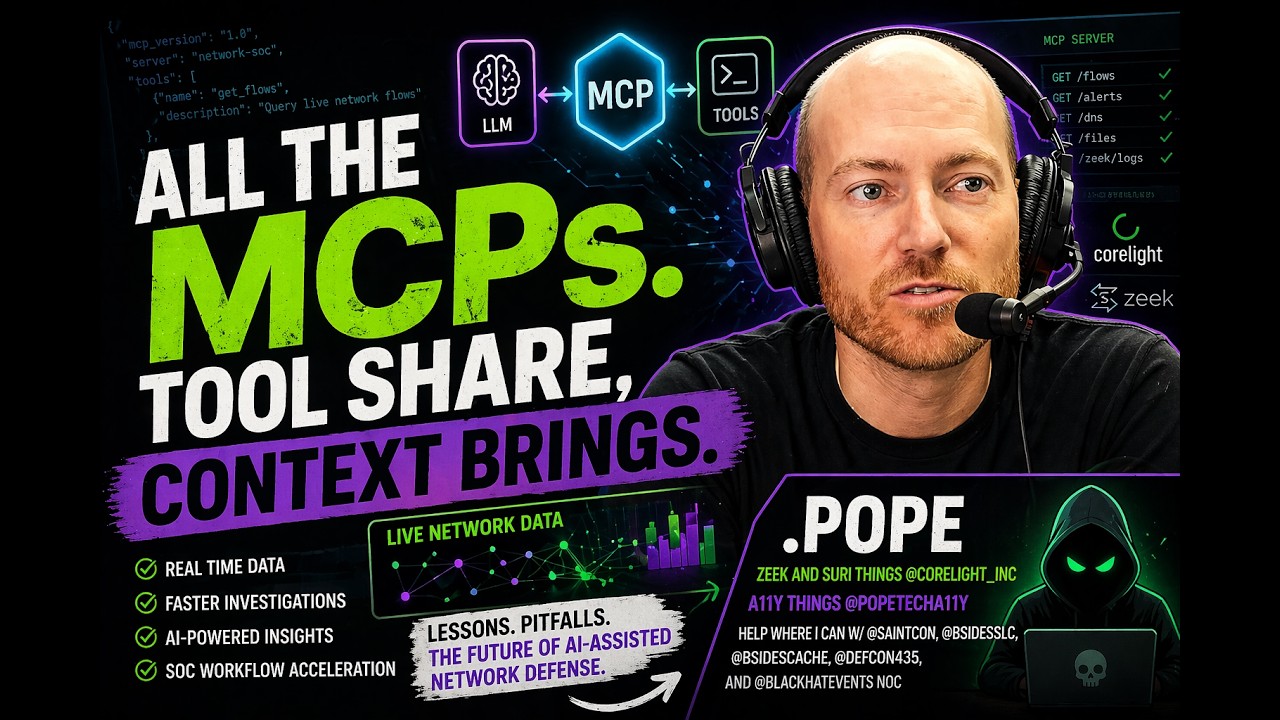

Model Context Protocol (MCP): The Future of AI-Powered SOC Workflows

Okay, we're going to get started with our next presentation from James Pope. James Pope is an infosec junkie with very extensive experience in threat hunting across many universities, governments, cybersecurity conferences, and some of the largest enterprises. He brings a lot of passion and unique expertise to these methodologies, especially teaching SOC analysts how to improve their security posture. Thank you, James. Thank you.

>> All right. So today we're talking a lot about MCPs. We'll get into the details of what that means, but let's start at the beginning of a story. This was in the Black Hat NOC, which we'll go into in depth
here in a little bit. In the noise of all the alerts you see, these are people taking classes, much like here, right? You go into a class, you learn how to do an exploit, you do a thing. There are people on stage presenting and doing things. There are demos going on on the show floor, and you also have CTFs and other stuff like that. So you have all these things happening that wouldn't be legitimate for a normal enterprise but can be legitimate at Black Hat. And inside of all that, you're trying to find the real bad stuff. In one example, we got a flag from our backbone or access points
that were accessing malicious sites. Getting to findings like that faster, through the noise, is one of my goals, and I'll cover the rest of that story in a bit.

So, real high level about me. I work at Corelight; that's who pays me to be here. But don't worry, we're not getting into any vendor stuff. My other job is SOC lead for the Black Hat NOC, which is where most of what we're talking about today comes from. I also volunteer for a bunch of the Utah nonprofit conferences, including SAINTCON, this one here (BSides SLC), and BSides Cache. I live up
in the Cache Valley area, so lots of that kind of stuff. I also do some web accessibility work with some of my brothers at a company I own.

So, the Black Hat NOC: this is what the NOC looks like if you haven't seen it. These are various shows; the biggest one is Black Hat USA. There are, I don't know, 60 to 80 people in that NOC. We run multiple shifts, and we're there the entire time. We have to set up the entire thing: we bring in firewalls, access points, and switching, get it all working, and then immediately turn around and try to secure it. So that's a
little different from most of your organizations. I suspect you're not deploying an enterprise environment in a week, using it for a week, and then ripping it all out, though there are probably a few edge cases like that. But the defense part is very similar to how a lot of organizations work. So yeah, it's a large group. We have partners, and we truly call them partners: there's no way to buy your way in or come up to us and say, "Oh, I want you to use our product." We look for the products that solve the issues and challenges we have. And this is the stack of partners that we use,
from Arista, Cisco, Corelight, JF, and Palo Alto Networks, with various capabilities from each of them. This is what it looks like: a little bit of fishbowl action. You can come in and take a little tour, and inside, we're working. I have a luxury that a lot of organizations don't have. That's not true for all of them; some big organizations I've threat hunted in do have a large staff of threat hunters or analysts working through data. But at the USA show, I probably have 20 to 40 people on shift at any point just hunting through data. It could be higher
at some points. But a lot of organizations have one threat hunter who is also their analyst, doing multiple jobs, so we have that luxury.

A lot of what we do comes down to protecting the things we can protect. We don't want to stop a demo. We don't want to stop somebody in their presentation showing a cool new thing they did. What we're trying to protect are things like registration and the backbone systems, and to catch users attacking users and users doing things on the internet they shouldn't be doing, like, hypothetically, attacking a police precinct or something like that, right? So we do things like profiling our backbone and our systems to see what
they do and try to find the outliers. And yeah, it's not for everybody, because you have to be willing to stick your head into logs the whole day. Some people gravitate toward that, some don't. There are even people who are really good at it but fail in this environment, because there's a movie playing on the wall, music going, and the lights dimmed, and they're used to working from a nice quiet home office. Then there are people who thrive in this environment: the collaboration, working with people, miniature war rooms of
things going on. A lot of it is educational. We do these tours: people come in, and we explain what we're seeing, how we're seeing it, how it impacts you, and what you can do at your organization. So there's a big educational component. This is just a picture somebody snapped of me doing a NOC tour for a group.

So, the evolution of this: we get a bunch of highly skilled people, we do a lot of threat hunting, and we have a lot of detections. We have a category that I suspect most orgs don't have. We call it the Black Hat positive. That's when you have a detection that is a
legitimate detection. It's real. It did its job. It's not a false positive, and it's not noise in general. But in our environment, it is noise. It's a legit detection for a classroom of 120 people running a legit exploit that they're paying to run. Stopping that traffic would make for a bad day for the trainer, the students, everybody. So we call that a Black Hat positive. I want to rule those out as fast as we can, to focus on the things we really care about, which I talked about a second ago. Even after all that baseline tuning, there's still too much noise and too many things to
look at, even for all those people. So we leverage ML and AI as much as we can: profiling, and bringing in context about the classrooms, to try to understand whether something is allowed in a given room, whether it should escalate to a human in the loop, or whether it should be auto-closed. One example: if a high percentage of the people in the same room are all doing the exact same thing, going to the same destinations, for the same detection, those are really good stacking indicators that this is allowed for that environment. If one person is doing it, it might be allowed. It might be the trainer
on their lunch break, making sure their labs work before everybody else comes in. But it could also be somebody doing something they shouldn't be doing.

All right, let's get to the MCP part of this. MCP stands for Model Context Protocol. It's been referred to as lots of different things; these are just some I'm pulling out, like "the TCP for AI to use tools." If you've used AI, you've probably used MCP in some form without even knowing it. Maybe you're on ChatGPT or Gemini and you've connected your Google Drive or some other tool to it. Those are usually
MCP connections that link the AI to those tools. They're gateway adapters. Probably the easiest way to explain it: they let you, as a human, talk to AI in natural language to get a result from something else. They're very powerful and very useful for what's now starting to be called legacy infrastructure, though it's still pretty standard, and for modern things too. Whether you want to talk to your SIEM, your database, your endpoint devices, or your data lake, you have this layer in between that lets you communicate with it through AI. We won't get into the rest of this, but you can connect different clients to an
MCP server, whether that's Claude Code, Gemini CLI, Codex, your own app, or whatever it might be. The client is typically where the LLM, the AI layer, sits. If it's Gemini CLI, you say, "Tell me about my alerts." Gemini turns around, makes the appropriate API calls through the server, and returns the results, and you can keep going back and forth on that.

All right, just to give you some context for this presentation on MCPs: the first date here is October 2024. I'd say that's when I legitimately tried to get into the vibes. There were things I was doing before with GPT
applications, doing pieces of things, but I always had challenges and they kept failing. Not enough context window, not enough context window, not enough context window. So in October 2024, I started actually vibe coding, building some applications with AI, and I found it very frustrating. It really made me mad: you'd get some part working, and then it would blow up the working part to do something else. I'd messed with it quite a bit and had pretty much ruled it out. Then I went to an AI conference we have here in Utah. It's typically in February; I think it was last month. I went to it in February 2025, and this guy John, I asked him if he
was going to be here, because I'm going to shout him out. Anyway, he was sitting down, and he's like, "How's it working? What are you doing with this?" I was just venting about how terrible it was and how much it was making me mad. And he's like, "Well, have you tried this? Have you tried this? Have you tried this?" And I was like, no. So he sat with me for two hours, maybe longer, showing me how his workflow worked. I didn't go to bed that night until 5 in the morning, because it unlocked things that were
locked for me until then. It's like, wait, I can tell it not to touch these things and it won't touch them. I can create rules. I can create all this other stuff. So this was instrumental in my adoption. Sometimes you hit a wall and you want to plow through it, and sometimes you just repeatedly bash your head on that wall and want to give up, and I was at the point of, I don't know, it's for the birds. So anyway, that's John. I'm pretty sure that's what he looks like. No, I'm just kidding; that's definitely not what he looks like.

So then, MCP came out that November, and I had never
even heard of an MCP at that point; that was my frustration phase. Then February is when I'm starting to unlock how to do more things with AI, and I was like, man, as SOC analysts we really should be able to use this to drive value from existing things, SIEMs like Splunk, Elastic, or LogScale. I can write a join query, I just hate it. I don't want to do it, and teaching new people to do it is even worse. Okay, do you know SQL? Great. Do you know some weird bastardized version of SQL? Because that's what
you need to know to query some data lake or some SIEM, and they're all different. I thought this would be super useful, so I started diving into it and came across MCP, which had come out the November before. So now we're in March, and I built my first MCP server to connect to Splunk. A lot of lessons learned there; it was actually very hard. It's substantially easier now: FastMCP exists, it's way easier to get going, and if you Google "MCP" now, the results are actually about MCP and not some other acronym. That was not true then; it was always something else. It was
very frustrating. You were kind of trailblazing, but not quite. It was a middle ground: people have the answers on some Stack Overflow somewhere, but you're trying to figure out how it applies to you. So I built the first one, connected Claude Code to it, I believe, and made a little demo video of that. Then that turned into doing a bunch more SIEMs; I did Elastic next. As an organization, you're probably not going to build for a bunch of SIEMs, although a lot of organizations unfortunately do have more than one. Most small orgs only have one, but a lot of big
ones have two, so you might in that scenario. For us, we were just trying to show people how the data works in other environments. So I had Splunk, Elastic, and LogScale, and then I brought it to the Black Hat USA show. There's always a difference between making something, doing it with the vibes, and then running it on real data. That's where a lot of people's stuff breaks down, right? It worked on my machine at my house, or it worked with this small sample data set. If you're
churning through a small log, AI is pretty good at that. When you're trying to churn through gigabytes, you have to code for that. So bringing it to a real show is a real proof of concept: is this a thing? Does it have value beyond demos? The SIEM we use at Black Hat was Palo Alto's XSIAM, so I made a connector for that show as well. And what I realized doing demos with Claude Code and others is adoption. This room might be a bit of an exception; we're pretty nerdy here. But if I tell somebody, go download
Claude Code or Gemini CLI, go set up your MCP (and the alerts and errors were really hard to find back then), and then you can ask it questions, the adoption rate was really low, even for smart technical people. Not that they wouldn't do it; it was just hard to do. Then I had a great discovery: Slack released AI apps the week before Black Hat last year, around the end of July or early August. So I decided to code up a Slack AI app integration to the MCP server, and that turned into something that
I stand firmly behind now: you've got to meet people where they are. If I go and say, "Here's how you do this thing," I lose a lot of people. When I said, "Go into Slack, hit /postcog, and ask it about an IP address," adoption was much higher. People were using it. The same smart, competent, capable people who have logins to these tools and systems will stand over my shoulder and ask me how to do something, or have me do it. If I integrate it into something like ChatOps and it's that easy, they do it. That has since turned into putting it in browsers and making that easy too. But it
worked, and it worked really well. But there were challenges, lessons learned, integration issues, a lot of things to improve. With MCPs, at the time you were heavily limited in the number of tools you could have, and that's still a real problem with MCPs. I took those lessons learned, and, oh, here are a few screenshots from that Black Hat show, where I was teaching it to give me threat summaries. I have a luxury you could also have at your organization if you sit with your subject matter experts: here's the start of a threat hunt, here's all
the stuff that happened, and here was the end result. I have that data, and I can work with AI to shortcut that: learn that knowledge, here's what I'm starting with, here's the type of results we're looking for. So that was that, and then here's the Slack integration, where it's just "tell me about a thing," "tell me about this incident," "tell me the first time we saw this IP address." It was pretty limited at the time, I think just FQDNs and IP addresses; three or four use cases, not many.

All right. So at the end of Black Hat
USA last year, that quickly turned into, oh, I've got to code for this thing and this thing and this thing. It didn't work for DCE/RPC, it didn't work for NetBIOS, and so on. And I realized quickly that even while optimizing the tools, teaching them how to pivot and use the logs, we were blowing up context windows and I was running out of tools. Every time you loaded it, it loaded all the tools and all the prompts. So I started looking at the options, and I came to two conclusions. Option one was some sort of router in
front of it. If it's in Python, the router could literally just be a function with subfunctions: you put something in front that the AI talks to, and it dispatches to a subfunction. That reduces what's in the context window to just that one endpoint, so you can add more things for additional logs and additional pieces of data. Then, right around that exact time, a bunch of people started dropping papers about code mode. Code mode is the ability for AI to write its own code. Now it doesn't seem as
wild, but in October it was pretty wild. I know things are moving fast, but it was like, hey, you're going to let it write its own Python? Where is it running that? Docker put out a thing, Cloudflare put out a thing, Anthropic put out a thing; a bunch of people put out things saying code mode is where it's at. I'd argue a high percentage of this room has used code mode, even if you didn't know it. If you've ever been in Gemini or ChatGPT and asked it to edit a picture or give you a diagram, and it created a Python script and then showed you the result, that's
code mode. It's generating Python code to accomplish its task. They're not coding for every edge case; they're saying the AI can handle that. So I went from 38 tools (at the time I think there was a cap of 42; you couldn't have more than that) down to three, and I've since optimized it down to one. Sometimes implementations have a separate tool to run the Python, another to stop it, and another to look up the schema, but I put all of that behind a single tool. So code mode went that direction. And this is what it
looks like. This is just an example, one for Investigator and one for Splunk, but essentially the MCP server is advertising, "Hey, you have Splunk code mode. You can execute Python." Now, there are a lot of things you have to consider with that. This runs inside a Docker container whose network access is limited to the one endpoint it talks to, there are about eight controls around that plus user permissions, and every call destroys the container and builds a new one. You have to decide your level of risk appetite. But this has gotten way easier than it was at the time. I was following some pretty rough stuff back then, but now NVIDIA has
dropped an entire thing around OpenClaw and how to do this, and there are entire containers built on the assumption that, of course, everybody wants AI to run code now. So this is way easier than it used to be. And I don't think I could go back. Give me an OT protocol: if I have to ask AI something about some OT protocol in these logs, I don't want to have to go code for that. Code mode just works, especially because I coupled it with a thing called a just-in-time index. Here's what that is: I took the schema for the SIEM I was looking at, and, I don't know, one
example was 20,000 lines. That's a lot, even for AI; especially for AI. What it does is take that schema, and every time the app builds, it creates a just-in-time index from it. So if you ask a question about DCE/RPC logs, Python pulls out the six relevant lines instead of 20,000, and the AI can work through those six lines and attempt the schema lookups in code to accomplish the task. That's a great pairing. It's in my tips; I highly recommend something like that.

All right. So at that point I'm feeling pretty good about it. We run
this CTF called Cyber Cup, down in Alabama, for federal folks. I was hoping to have it ready in time to let people start messing with it there. Didn't happen; I always run out of time. But I decided I had it ready enough, and we did it at a local DC435 meetup: everybody gets access to an MCP server, and I think it was Gemini CLI that I gave everybody. It worked well until it didn't. What I didn't account for was how fast a room full of people was going to nuke all those tokens. We had some smart people; I think one guy had eight tmux
windows going, which is okay; if you're trying to win a CTF, that's great. There wasn't even a prize, but that nuked a lot of tokens. We blew out that key within, I don't even know, 10 or 15 minutes. The rest of it was pretty boring, because I didn't have any more keys and the request process takes a long time. So, I guess, play with the thing you can't use anymore, unless you have your own key. It started out well and then didn't. A lot of lessons learned from that, and the same epiphany came up when I tried it with some other people: it was more about that meet
them where they are. I was already losing a lot of people with just Gemini CLI: go to a Linux terminal, type in gemini, hit enter, type /mcp. I was just losing people. Again, this group might be the exception; you might be better at following that than most. But that led me to a few different things. This was kind of in parallel, and I'm not sure which came first, but: playbooks. I rebased my MCP server onto one that other people at the organization were building, and they had these playbooks. There are different terms for them, workflows or playbooks; they're now turning into essentially
what are just called skills for agents; that seems to be what everybody's calling them. Essentially, it's some sort of AI workflow where, instead of one step, it's do this, then do that, then this, then that. We implemented these playbooks with a primary focus on alert validation. You got an alert: tell me how real it is. Do I care about this or not? What I realized quickly when we added these playbooks is that the context window was just shot. They were doing seven to 11 different steps, each one retaining its token count, and it was just blowing up tokens. In fact, what I didn't
realize for longer than I want to admit is that none of them were actually completing 100%. They were all erroring out somewhere in the middle, recovering, and giving me a result anyway. Once I solved that, a single playbook was over a million tokens. So that went down some paths: how do you solve that? If you want to get into those rabbit holes, there are different layers of LLM caching and six different ways you can do it, from the application layer to the cloud and so on; there are a lot of things you can do there. But also, I used to run a dev shop, and so my mind
often, when there wasn't an answer out there, went to: how would a developer solve this outside of AI? Pretend AI doesn't exist, because that's easier to Google than a thing that doesn't exist yet. Like, how do I reduce my context token size? There are way more results for that now than there were then. I came up with this thing, and I forget what name I gave it (I give everything dinosaur names), but essentially it's a comma-separated-value shorthand for JSON. There are a bunch of AI things like lazy loading and a whole bunch more that exist now
that weren't there then but help reduce that window. I also realized that parallel streams matter: one stream colliding with another was actually what was causing those failures. So the lesson learned, if you mess with this, is that you do want to allow parallel streams, if only for health checks alongside your retrieval. With that, the playbooks were finally executing. And then I realized: I have code mode now. So instead of using hard-coded playbooks, we could let the AI pick the playbooks. Then that went to: we could let the AI make the playbooks. And then it went to: when the AI finds these logs, it can dynamically adjust its playbook.
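
The talk doesn't show the comma-separated shorthand for JSON or name its format, so what follows is only a minimal sketch of the general idea in Python, under the assumption that tool results are lists of JSON objects sharing mostly the same keys. Writing the keys once as a header row, with the values as CSV rows, avoids repeating every key in every record, which is where the token savings would come from. The function names `compact_records` and `expand_records` and the sample log fields are hypothetical.

```python
import csv
import io
import json


def compact_records(records: list[dict]) -> str:
    """Hypothetical helper: encode JSON-style dicts as one header row plus CSV rows.

    Keys are written once instead of once per record. Nested dicts/lists are
    serialized as compact JSON inside a single cell.
    """
    if not records:
        return ""
    # Union of all keys in first-seen order, so sparse records still line up.
    columns = list(dict.fromkeys(key for record in records for key in record))

    buffer = io.StringIO()
    writer = csv.writer(buffer, lineterminator="\n")
    writer.writerow(columns)
    for record in records:
        row = []
        for key in columns:
            value = record.get(key, "")  # missing keys become empty cells
            if isinstance(value, (dict, list)):
                value = json.dumps(value, separators=(",", ":"))
            row.append(value)
        # csv quotes cells containing commas, quotes, or newlines.
        writer.writerow(row)
    return buffer.getvalue()


def expand_records(text: str) -> list[dict]:
    """Decode the compact form back into dicts; every value comes back as a string."""
    return [dict(row) for row in csv.DictReader(io.StringIO(text))]


if __name__ == "__main__":
    # Hypothetical sample log records, for illustration only.
    logs = [
        {"ts": "2025-08-06T10:00:00Z", "src_ip": "10.0.0.5", "dst_port": 445, "service": "smb"},
        {"ts": "2025-08-06T10:00:01Z", "src_ip": "10.0.0.7", "dst_port": 445, "service": "smb"},
    ]
    compact = compact_records(logs)
    print(compact)
    print(len(compact), "characters vs", len(json.dumps(logs)), "as JSON")
```

The trade-off in this sketch is that types are lost on the way back (`445` returns as `"445"`), so whatever consumes the compact form, code or model, has to reinterpret values; the savings grow with the number of records that share the same keys.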

Letting the AI adjust its playbook became pretty powerful, because it would find, oh, here's some SMB traffic, and then go down that rabbit hole: what the SMB host talked to, what protocols and operations it used, and anything else tied to it. So yeah, playbooks were important and became a big deal. This is roughly what the results look like, from triaging an alert to the results. I like to customize everything, so it lets me edit my system prompts, user prompts, connection details, and everything else I like to tweak. I don't like the AI "just trust me, bro" type of
thing. I like the evidence and reasoning to back it up, so I can go prove it out, especially when I'm trying to build something. That led to an MCP app, which is nothing more than a browser application. A bunch of people are doing very similar things: for a lot of these users, instead of saying "go use Claude Code or Gemini CLI," you say "just go to this page in your browser and interact with it there." You can still connect those clients directly to the MCP server, but now you have this front end as well. That opened up a lot of options. We can build
threat hunting queries: instead of saying "go threat hunt on a thing" and trying to explain it, you can just click, say, "I want to look at drive-by compromises," and the queries are in there. I did a few of these. One was organized around MITRE ATT&CK, and the other was around directionality: you pick the direction, like north-south, you pick the service, it shows you the query, you can edit it, and you hit run. That was really useful for letting people just use it. It was also really useful for people learning things. What I didn't realize or
appreciate was that while I was trying to get value out of this for analysts, a lot of people said, "No, that's valuable, but I'm actually using it to help me learn, not just to get the easy answer." I didn't appreciate how much of that was going to happen. So then I took another attempt at what I'd been trying to do: run the CTF again without blowing up all my keys. I got Gemini to extend me to Tier 3 keys; if you don't know what that is, it's a lot of money. I got five keys plus backup keys, and I was ready for
this next CTF. I ran it in February with 35 people. It used something like 7 or 8 million tokens, and it actually went really well. They could just ask questions in a chat interface and get results directly in their browser. And because it went a little too well on the CTF side, I added this little graduation cap in the right corner of the chat that they could click, and it would show the exact query they could go put into the SIEM. So in this CTF, they had access to the MCP app, which can talk to the MCP server, and to Splunk. You want to use Splunk to answer it? Great.
You want to use the MCP app? Great. You want to use either? Great. But here's your exact query. You can just copy it; there's a little copy button, kind of hard to see in dark mode there. Then you can paste that into Splunk, hit return, and it should give you the same results. That was actually Elden's idea. I was like, we want people to learn something when they do the CTF, not just learn how to copy and paste: copy the question, paste it, copy the answer, paste it, points, move to the next one. That's not super fun. So we added this. What I
didn't appreciate was how many people were actually using this to learn, including a lot of new people who weren't even in IT or security. I was like, "Oh, so you just use the MCP to chat?" And they'd say, "I started that way, but then I asked, 'How would I do this in Splunk?', clicked on that, and copied it." They were learning how to use the SIEM, because it empowered them to do something that was daunting before. Going into Splunk, even with my guided directions showing them what to do, people were like, that's where you lose me, I'm done, I can't continue, I'm not sure how to write
those queries. But with this combination, finance people were completing 30-plus questions, which I had not anticipated. They were using the MCP, but they weren't just taking the answer and pasting it, which is what I'd expected.

And this is roughly what it looked like at that point. You have the MCP app, and you have the server with a just-in-time index. I added a reinforcement learning model; the moment you go to code mode, this makes a ton of sense. I think I'm on generation 7 right now, where it's auto-improving its own Python code against those data sources. Highly, highly recommend it. So much so that when I got to Black Hat Europe, the
first place I tested this against real data was Black Hat Europe last December. I had to redo everything, because now it's all code mode instead of tools, so the XSIAM integration I'd built had to be redone with code mode. Instead of building that out by hand, I essentially gave it a token limit and said, go query yourself and go do a bunch of stuff. In three days, thanks to reinforcement learning, I had a pretty good working MCP app and server against XSIAM. The nice thing about the CTF is that I was able to capture all of it, and it wasn't just the way I ask LLMs, it's the way a
bunch of users ask. That CTF alone produced two generations of improvements just from that capture.

All right. That led me to another project I was working on, where I was essentially building an agentic harness and running AI to complete CTFs: a different version of this, with different data sets to run against. What I learned there about agents talking to other agents and how to handle that seemed useful here. So I started building that in: instead of a single agent, you could have specialty agents that you could
define everything for. There are actually three more underneath this, but whatever: there are hunting agents, SOC analyst agents, IR agents, and report-writing agents, and these agents have access to skills, which are those playbooks, now customized. There's even a traffic sanity check: is my traffic even good? Because again, demo data is easy, but with real data, your threat hunt is going to suck if your traffic ingest sucks. If I can't validate that before I even get to this, nothing else matters. So I had to build all those pieces out, and then give myself the ability to say: do I want this agent to have reinforcement learning or
And so there are lots of customizations on that, which led to something pretty Claude Code-ish, OpenClaw-esque: you have these system agents (I think they call them heartbeats) where you can set schedules and agents. You can say, "Check for alerts every five minutes; every time you get an alert, run the alert validator; every time the alert validator runs, run the report agent," that type of thing. So you can build out these harnesses, agentic harnesses or whatever you want to call them, where you have multiple agents and an orchestrator that's handling them.

You decide how you set this up, and this is pretty much where everything's going now. You can have a single orchestrator that does the talking, or you can let the agents talk to each other; there are pros and cons to both. I had better results with a single orchestrator, not letting the agents talk to each other, and letting it handle the communication between them.
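
Here is a rough sketch of the heartbeat chain described above (check alerts every five minutes, run the alert validator on each alert, then the report agent), wired through a single orchestrator so agents never call each other directly. Everything here is an assumption for illustration: the `Alert` shape, the toy validator rule, and the agent functions stand in for LLM-backed agents.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Alert:
    alert_id: str
    src_ip: str
    signature: str


# Hypothetical agents. In a real harness each would be an LLM call with its own skills.
def alert_validator(alert: Alert) -> bool:
    """Toy rule: treat anything from a (made-up) classroom/CTF range as expected noise."""
    return not alert.src_ip.startswith("10.99.")


def report_agent(alert: Alert) -> str:
    return f"[{alert.alert_id}] {alert.signature} from {alert.src_ip}: needs analyst review"


class Orchestrator:
    """Single orchestrator: agents never talk to each other; every hand-off goes through here."""

    def __init__(self, fetch_alerts: Callable[[], list[Alert]], interval_s: float = 300.0):
        self.fetch_alerts = fetch_alerts
        self.interval_s = interval_s          # heartbeat: check for alerts every five minutes
        self.last_run: Optional[float] = None

    def due(self, now: float) -> bool:
        return self.last_run is None or now - self.last_run >= self.interval_s

    def tick(self, now: float) -> list[str]:
        """Run one heartbeat if it is due and return the reports it produced."""
        if not self.due(now):
            return []
        self.last_run = now
        reports = []
        for alert in self.fetch_alerts():
            if alert_validator(alert):               # every alert -> alert validator
                reports.append(report_agent(alert))  # every validated alert -> report agent
        return reports


if __name__ == "__main__":
    orch = Orchestrator(lambda: [Alert("a1", "203.0.113.7", "C2 beacon"),
                                 Alert("a2", "10.99.0.5", "CTF exploit demo")])
    print(orch.tick(now=0.0))    # a1 gets a report; a2 is dropped as classroom noise
    print(orch.tick(now=60.0))   # heartbeat not due yet, so no work is done
```

In a real deployment, a scheduler or a simple loop would call `tick(time.time())`; keeping every hand-off inside `tick` is what makes the single-orchestrator design easy to log and audit.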

All right, so that gets into this giant list of tips. Meet them where they are: if you're coding anything, if you're doing any of the vibe coding, think about your end audience, whoever's going to mess with it. If your barrier to entry starts with 15 minutes of explanation before they've even started using it, just to understand how to get going, you've probably lost a lot of people. So meet them where they are.

If you're doing any vibe coding, dual-shot planning is where I'm at right now. You can come up with your coolest prompt, you can have the coolest Claude Code skills, you know, "make me an app that makes a million dollars a day, no hallucinations, no issues, just solve it," that type of thing. But whatever your plan is, no matter how much orchestration you have around it, as soon as it builds it, tell it to go and audit it: a comprehensive review, see what we're missing, see whether the integrations actually happened. I highly recommend dual shot when you do your plan. A lot of people will make a plan and then implement it, or make revisions and implement. Instead, build the plan, revise the plan with a second review by a second agent, and then go ahead.

If you're doing anything with a browser, like that app I was showing you where the application side is now talking to the server, just use a proxy. Otherwise you're going to get into CORS issues, HTTP versus HTTPS issues for local dev, and all sorts of nonsense that will waste so much time. Just throw a proxy on there, whatever your favorite is, NGINX, Caddy, take your pick. It's a substantial time sink if you don't. If you just have an MCP server and you're using Gemini CLI, it's not needed; this is only for the browser case.

Schema making. I didn't realize that, just through naturally figuring it out, I'd come up with a pretty good system until I talked to multiple people at different companies, and when I told them how I was doing it, they were taking notes. So when it comes to schemas, if you want to integrate an API with an LLM, their docs, if they're good, are your friend. Unfortunately, a lot of times they are not up to date. But the first thing I do is grab their docs, sic AI on them, and say, "Parse through this entire thing." Depending on the AI you use, whether it's agentic or not, and whether it can chunk things or not, you're going to run into different limitations and barriers. In some of those scenarios it can just do it: it'll run through it, create its own little compressed windows, and keep moving. If not, just give it a limit, like "Parse this doc 250 lines at a time and save it to this file." AI likes JSON and Markdown; those seem to be the top two it prefers. YAML's becoming a thing, and XML sometimes. So: save it to this file, then do the next 250 lines and append them. Only save the things we need for the future. If it's a website with ads and other stuff on there, you don't want to be saving all that; you're just saving the API for reference. Listen, if they've got a well-documented, great Swagger spec that has everything you need, you don't need all this. But I've yet to find one that is up to date and current.
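
The "250 lines at a time, append to a file" loop can be sketched in Python as follows. The regex extractor is a hypothetical stand-in for the model call that pulls API details out of each chunk, and JSON Lines is an assumed output format, chosen because one JSON object per line is the simplest thing to append chunk by chunk.

```python
import json
import re
from pathlib import Path
from typing import Iterator

CHUNK_LINES = 250  # the "250 lines at a time" limit from the talk

# Toy stand-in for the LLM extraction step: keep only lines that look like HTTP endpoints,
# dropping nav bars, ads, and other page noise.
ENDPOINT_RE = re.compile(r"\b(GET|POST|PUT|PATCH|DELETE)\s+(/\S+)")


def chunks(lines: list[str], size: int = CHUNK_LINES) -> Iterator[list[str]]:
    for start in range(0, len(lines), size):
        yield lines[start:start + size]


def extract_api_entries(chunk: list[str]) -> list[dict]:
    """Hypothetical extractor; in practice this is the prompt you send to the model."""
    entries = []
    for line in chunk:
        m = ENDPOINT_RE.search(line)
        if m:
            entries.append({"method": m.group(1), "path": m.group(2), "source": line.strip()})
    return entries


def build_schema(doc_text: str, out_path: Path) -> int:
    """Parse the doc chunk by chunk, appending one JSON object per extracted endpoint."""
    written = 0
    with out_path.open("a", encoding="utf-8") as out:
        for chunk in chunks(doc_text.splitlines()):
            for entry in extract_api_entries(chunk):
                out.write(json.dumps(entry) + "\n")
                written += 1
    return written
```

Appending per chunk means only the current 250 lines are in play at each step, so a long document never has to fit in one context window.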

So anyway, you save this schema, you build it out, and AI is also really good at the next step. Gauge yourself on permissions, on what you can or can't let it do, but you can have it take that schema and validate it against the source: "Now go run these and tell me which of these return real results, which ones are bad endpoints, which ones now have a v4 instead of a v3, or where they deprecated or added something." At the end of that cycle, if you have the tokens to burn to do it, I end up with a schema that is better than the company's own documentation.
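
The validation pass ("tell me which are real, which are bad endpoints, which now have a v4 instead of a v3") can be sketched as a classifier over HTTP status codes. This is a minimal Python sketch; `probe` is a hypothetical callable you would wire to a real HTTP client and token, and the status-to-verdict mapping is an assumption, since APIs differ in how they signal deprecation. It only probes GET/HEAD endpoints, in keeping with starting read-only.

```python
import re
from typing import Callable, Optional

# probe(method, path) -> HTTP status code. Hypothetical: in practice wrap a read-only HTTP
# client and your API token; it is injected here so the logic can be tested offline.
Probe = Callable[[str, str], int]
VERSION_RE = re.compile(r"/v(\d+)/")
SAFE_METHODS = {"GET", "HEAD"}  # never fire write/action endpoints just to "test" them


def classify(status: int) -> str:
    if 200 <= status < 300:
        return "ok"
    if status in (401, 403):
        return "exists_needs_permission"
    if status == 404:
        return "bad_endpoint"
    if status == 410:
        return "deprecated"
    return f"unexpected_{status}"


def next_version(path: str) -> Optional[str]:
    """'/v3/alerts' -> '/v4/alerts'; None if the path is not versioned."""
    m = VERSION_RE.search(path)
    if m is None:
        return None
    return f"{path[:m.start()]}/v{int(m.group(1)) + 1}/{path[m.end():]}"


def validate_schema(entries: list[dict], probe: Probe) -> list[dict]:
    """Annotate each extracted endpoint with what the live API actually says about it."""
    results = []
    for entry in entries:
        if entry["method"] not in SAFE_METHODS:
            results.append({**entry, "verdict": "skipped_unsafe_method"})
            continue
        verdict = classify(probe(entry["method"], entry["path"]))
        note = {**entry, "verdict": verdict}
        newer = next_version(entry["path"])
        # Docs say v3 but the endpoint is gone: check whether a v4 replaced it.
        if verdict in ("bad_endpoint", "deprecated") and newer:
            if classify(probe(entry["method"], newer)) == "ok":
                note["replacement"] = newer
        results.append(note)
    return results
```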

You couple that with a just-in-time index that lets your AI look through that entire schema, and you have something really powerful. You have your MCP app, its code for the common things it does, and when it doesn't have that result, it goes to the just-in-time index and can get anything it wants. Now, you have to think that through. If I integrate this with an EDR, do I want to give it permission to isolate hosts? Think that through. Maybe it's read-only, right? If you're starting out, I would definitely start read-only. I wouldn't implement the ability to go lock down Active Directory servers, or anything else that's going to get you in a lot of trouble.
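
A just-in-time index can be as simple as an inverted keyword index over the validated schema, consulted only when the app's built-in code paths don't cover a request. The sketch below assumes that design (a real setup might use embeddings or a search engine instead) and drops non-read-only endpoints at index time, so an action like host isolation is never even discoverable. Class and function names are hypothetical.

```python
import re
from collections import defaultdict
from typing import Callable

TOKEN_RE = re.compile(r"[a-z0-9]+")
READ_ONLY_METHODS = {"GET", "HEAD"}


def tokens(text: str) -> set[str]:
    return set(TOKEN_RE.findall(text.lower()))


class JitIndex:
    """Keyword index over the validated schema, consulted only when no built-in tool fits."""

    def __init__(self, entries: list[dict], read_only: bool = True):
        # Start read-only: action endpoints (host isolation, account lockout) never get
        # indexed, so the model cannot discover them.
        self.entries = [e for e in entries
                        if not read_only or e["method"] in READ_ONLY_METHODS]
        self.postings: dict[str, set[int]] = defaultdict(set)
        for i, e in enumerate(self.entries):
            for tok in tokens(e["path"] + " " + e.get("description", "")):
                self.postings[tok].add(i)

    def search(self, query: str, k: int = 3) -> list[dict]:
        """Rank entries by how many query tokens they share (ties keep schema order)."""
        scores: dict[int, int] = defaultdict(int)
        for tok in tokens(query):
            for i in self.postings.get(tok, ()):
                scores[i] += 1
        ranked = sorted(scores, key=lambda i: (-scores[i], i))
        return [self.entries[i] for i in ranked[:k]]


def answer(query: str, builtin: dict[str, Callable[[], object]], index: JitIndex):
    """Code-mode fast path for common asks; fall back to the just-in-time index."""
    if query in builtin:
        return builtin[query]()
    return index.search(query)
```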

So yeah, that's the just-in-time index. And sometimes you've got to think about things differently. If you're trying to solve something with AI and there don't seem to be good results for what you're looking for, there are a ton of research papers out there that are great to dive into, but sometimes I kept dead-ending. So take out the word "AI" and ask: how would a developer solve this challenge? Most likely, someone solved something like it a different way before AI existed. You can solve that challenge, or you can sit on your thumbs and wait a few months and something will come to you, right? This stuff is iterating and moving fast. A lot of the stuff that I've done, you don't have to do anymore, because now there's a version for it. But if you're trying to trailblaze or push on that, just think: how would a developer solve this?

Parallel is your friend. I thought I only needed single: a single playbook running at a time, a single chat at a time. No illusions: you need parallel. You're always going to want to test something else, and if you have one of these big playbooks that's going to take two to 10 minutes in some scenarios, you want to be doing something else in the meantime. Parallel is your friend. Health checks are your friend.
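
Running playbooks in parallel, gated by a health check on the data sources, might look like this with Python's standard `concurrent.futures`. The playbooks and data-source checks are hypothetical stand-ins; threads are a reasonable fit on the assumption that real playbooks spend most of their time waiting on LLM and API calls rather than on CPU.

```python
import time
from concurrent.futures import ThreadPoolExecutor, as_completed
from typing import Callable


# Hypothetical stand-ins for long playbooks (minutes in real life, fractions of a second here).
def beacon_hunt() -> str:
    time.sleep(0.2)
    return "2 candidate hosts"


def dns_review() -> str:
    time.sleep(0.2)
    return "nothing anomalous"


def health_check(sources: dict[str, Callable[[], bool]]) -> list[str]:
    """Names of data sources that are down; check before spending tokens on playbooks."""
    down = []
    for name, is_up in sources.items():
        try:
            ok = is_up()
        except Exception:
            ok = False  # a probe that throws counts as down
        if not ok:
            down.append(name)
    return down


def run_parallel(playbooks: dict[str, Callable[[], str]], max_workers: int = 4) -> dict[str, str]:
    """Run independent playbooks concurrently so one slow hunt doesn't block the rest."""
    results: dict[str, str] = {}
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        futures = {pool.submit(fn): name for name, fn in playbooks.items()}
        for fut in as_completed(futures):
            name = futures[fut]
            try:
                results[name] = fut.result()
            except Exception as exc:  # one failed playbook should not sink the others
                results[name] = f"failed: {exc!r}"
    return results


if __name__ == "__main__":
    if not health_check({"zeek": lambda: True, "edr_api": lambda: True}):
        start = time.perf_counter()
        print(run_parallel({"beacon_hunt": beacon_hunt, "dns_review": dns_review}))
        # Wall time is close to one playbook's duration, not the sum of both.
        print(f"{time.perf_counter() - start:.2f}s")
```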

Reinforcement learning: I think it's pretty much a must. You can decide whether you want to auto-implement the reinforcement or not, but I think you absolutely should have it. Turn it on, so it's saying, "At least on errors: every error, I want to log that error, and I want to improve after every N traces of that error." It can create the new version, and you don't have to auto-apply that new version. If I'm in a production environment with lots of users, that's probably what I'm doing: I'm not auto-applying. I'm having it learn and save that file where I can go diff it and say, "Yeah, that's a good idea. Let's implement that."
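
The "learn, but don't auto-apply" pattern can be sketched as: log every error trace, flag an error once it has recurred N times, and write the model's revised code as a separate proposal plus a unified diff for human review. This Python sketch assumes N = 3 and a JSON Lines trace file, both hypothetical choices; the revised code is passed in rather than generated, since that part is the model's job.

```python
import difflib
import json
from collections import Counter
from pathlib import Path


class ErrorLearner:
    """Logs error traces and, once an error has recurred `threshold` times, saves a proposed
    revision and a diff for a human to review. Nothing here applies the change automatically."""

    def __init__(self, workdir: Path, threshold: int = 3):  # N = 3 is a made-up default
        self.workdir = workdir
        self.threshold = threshold
        self.counts: Counter = Counter()
        self.trace_log = workdir / "traces.jsonl"

    def record_error(self, signature: str, trace: dict) -> bool:
        """Append the trace; return True each time this error reaches another multiple of N."""
        with self.trace_log.open("a", encoding="utf-8") as f:
            f.write(json.dumps({"error": signature, **trace}) + "\n")
        self.counts[signature] += 1
        return self.counts[signature] % self.threshold == 0

    def propose(self, current_code: str, revised_code: str, name: str) -> str:
        """Save the model's revision next to the live code and return a unified diff of it."""
        (self.workdir / f"{name}.proposed.py").write_text(revised_code, encoding="utf-8")
        return "".join(difflib.unified_diff(
            current_code.splitlines(keepends=True),
            revised_code.splitlines(keepends=True),
            fromfile=f"{name}.py",
            tofile=f"{name}.proposed.py",
        ))
```

Switching to auto-learning would mean writing `revised_code` over the live file instead of next to it, which is exactly where drift becomes a concern.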

But you can set them to auto-learn. That gets into drift, which I'll cover in a second, and the things you should watch for there.

Golden answers. Not everybody has the luxury of having these; I did. I have my data sets, and I know what's in them, especially when it comes to the CTF stuff. This becomes really important for knowing whether it's lying, hallucinating, or making stuff up, and whether your harness is even good. I highly recommend, especially if you're in rapid development, coming up with a short, small set of data where you know exactly what's in it: these are the IPs in it, these are the things in it. Because it becomes really easy to be like, "Where the hell did you get that from? That's not even in here. I know it's not in here." People call these different things, but sometimes they'll call them golden answers. For non-deterministic output, they're how you can validate that it's not hallucinating. I spent a lot of time trying to track hallucinations and all the metrics around that, and what I found is that it's not nearly as needed if you have a good harness.
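
A golden-answer check for a small, known data set can start with something as blunt as "every IP in the answer must actually exist in the data." This Python sketch assumes the golden set is a set of IPs (the addresses are from documentation ranges); real golden answers would also cover hosts, hashes, and the expected answer to each question.

```python
import ipaddress
import re

IPV4_RE = re.compile(r"\b(?:\d{1,3}\.){3}\d{1,3}\b")

# Hypothetical golden set: every IP that actually appears in the small, known data set.
GOLDEN_IPS = {"192.0.2.10", "198.51.100.23", "203.0.113.5"}


def cited_ips(answer: str) -> set[str]:
    """Pull syntactically valid IPv4 addresses out of a model's free-text answer."""
    found = set()
    for candidate in IPV4_RE.findall(answer):
        try:
            found.add(str(ipaddress.IPv4Address(candidate)))
        except ValueError:  # e.g. 999.1.1.1 matches the regex but is not an address
            pass
    return found


def check_against_golden(answer: str, golden: set[str] = GOLDEN_IPS) -> dict[str, list[str]]:
    cited = cited_ips(answer)
    return {
        "hallucinated": sorted(cited - golden),  # cited, but not in the data set
        "grounded": sorted(cited & golden),
    }
```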

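If you have a really good harness that focuses it, or its sub-agents, on what you've asked it to do, and an orchestrator that can help validate that, hallucinations weren't nearly as bad as they were before. Now, to be fair, that's also using a top frontier LLM, right? If you run a local model with a small context window and you don't have a great GPU, results are definitely going to vary a lot.

These three agents are the ones I settled on as my favorites for retrieving data. This could change tomorrow, but as of right now: first, some sort of SQL agent. I have a bunch of tests I've done on local LLMs, so I can point you to one I'd recommend for that. Most of this data is stored in some database, or some weird version of a database, and there's usually some version of a SQL query to retrieve it. So some sort of SQL agent is highly useful. In fact, most of my MCP stuff was primarily based on that.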
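
A SQL agent's riskiest piece is the tool that executes model-written queries. Here is a minimal Python sketch using the standard `sqlite3` module as a stand-in database: it allows only a single SELECT/WITH statement and also turns on SQLite's `query_only` pragma, so writes are rejected by the engine even if a query slips past the string checks. Table and column names are hypothetical.

```python
import sqlite3

MAX_ROWS = 200  # keep results small enough to fit in the model's context window


def run_readonly_sql(conn: sqlite3.Connection, sql: str) -> list[tuple]:
    """Execute one model-written query; refuse anything that is not a single read."""
    stmt = sql.strip().rstrip(";").strip()
    if ";" in stmt:
        raise ValueError("only a single statement is allowed")
    if not stmt.lower().startswith(("select", "with")):
        raise ValueError("only SELECT/WITH queries are allowed")
    # Belt and braces: SQLite itself rejects any write on this connection from now on.
    conn.execute("PRAGMA query_only = ON")
    return conn.execute(stmt).fetchmany(MAX_ROWS)


if __name__ == "__main__":
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE conn (uid TEXT, orig_h TEXT, resp_h TEXT)")
    conn.executemany("INSERT INTO conn VALUES (?, ?, ?)", [
        ("C1", "10.1.2.3", "198.51.100.7"),
        ("C2", "10.1.2.9", "198.51.100.7"),
        ("C3", "10.1.2.9", "192.0.2.44"),
    ])
    conn.commit()
    print(run_readonly_sql(conn, "SELECT resp_h, COUNT(*) AS n FROM conn GROUP BY resp_h ORDER BY n DESC"))
```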

And then, when I built the other, agentic one: PageIndex. I came across this one because I was trying to figure out tokenization with security data, things like "How many bytes did I send you?" You can't tokenize numbers the way you tokenize words; each one of those numbers could be different. So my mind started thinking: who else has this problem? Mathematicians have got to have this problem to the worst degree. Everything is a number; they can't be stacking words together. So what's leading right now for mathematicians in RAG systems, for retrieval and lookup? I tried out something like 16 different retrieval approaches, and one of them was PageIndex, which essentially builds a map going down, like a family tree: I found this, I go down that path to this result, to this result. I like that one; I'm a fan of it.
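
The tree-walk idea behind this kind of retrieval (follow a branch, then a sub-branch, down to a result) can be illustrated with a small Python sketch. This is not PageIndex's API: the `Node` structure is hypothetical, and `keyword_chooser` stands in for the LLM call that reads the child titles and picks which branch to follow.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional


@dataclass
class Node:
    title: str                                            # short summary the model reads
    children: list["Node"] = field(default_factory=list)
    content: Optional[str] = None                         # leaf payload, e.g. a log excerpt


def keyword_chooser(question: str, titles: list[str]) -> int:
    """Toy stand-in for the LLM: pick the child title sharing the most words with the question."""
    q = set(question.lower().split())
    scores = [len(q & set(t.lower().split())) for t in titles]
    return scores.index(max(scores))


def descend(root: Node, question: str,
            choose: Callable[[str, list[str]], int] = keyword_chooser
            ) -> tuple[list[str], Optional[str]]:
    """Walk root -> leaf, choosing one branch per level; return the path taken and the leaf."""
    path, node = [root.title], root
    while node.children:
        node = node.children[choose(question, [c.title for c in node.children])]
        path.append(node.title)
    return path, node.content


TREE = Node("network logs", [
    Node("dns activity", [
        Node("dns queries to newly registered domains", content="10.1.2.9 -> xj3k.example"),
        Node("dns txt record volume", content="normal"),
    ]),
    Node("http activity", [
        Node("http downloads of executables", content="10.1.2.3 GET /payload.bin"),
    ]),
])
```

Because each step only shows the model a handful of titles, the path it took is also a readable explanation of why it landed on that result.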

And then the last one is map-reduce summarization RAG. Essentially, it chunks your data into whatever count you want; I think mine's 500. So it takes 500 log lines, creates a chunk of that, runs that through an LLM, and creates a summary for it. Those are really useful for anomalies and behavioral things. Especially in a CTF, where a question was like, "From the previous question, due to that compromised host, what was the next thing?", the summarization had already seen a command injection, so it already knew what that compromised host was, while the other two agents spent cycles trying to prove what was compromised. Behavioral things, things like that. So those are the three that, if I were starting new, I wish somebody had told me about; it would have saved me a lot of time.
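
Map-reduce summarization as described (chunk the logs at 500 lines per chunk, summarize each chunk, then combine) reduces to a few lines of Python. `keep_alerts` is a hypothetical toy summarizer so the example runs without a model; in practice `summarize` would be an LLM call.

```python
from typing import Callable

CHUNK_SIZE = 500  # log lines per chunk, the setting mentioned in the talk


def map_reduce_summarize(lines: list[str], summarize: Callable[[str], str],
                         chunk_size: int = CHUNK_SIZE) -> tuple[list[str], str]:
    """Map: summarize each chunk on its own. Reduce: summarize the chunk summaries.

    The per-chunk summaries are returned too; keeping them around is what lets a later
    question start from context the other retrieval methods would have to rediscover.
    """
    partials = [summarize("\n".join(lines[i:i + chunk_size]))
                for i in range(0, len(lines), chunk_size)]
    return partials, summarize("\n".join(partials))


def keep_alerts(text: str) -> str:
    """Toy summarizer standing in for an LLM call: keep only distinct lines marked ALERT."""
    return "\n".join(sorted({ln for ln in text.splitlines() if "ALERT" in ln}))
```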

All right, a few more. Agent drift is real. When you do reinforcement learning, this is the thing right now: people say, "I created this thing, it worked great for my demo, I put it on real data running 24/7, and two weeks later it broke." Most of the time that's from agent drift. When your agents can learn, they change themselves, sometimes to an adverse effect. Instead of improving themselves, maybe you have a bad harness around them, or you've incentivized the wrong thing. You've told the AI something like, "Get results; optimize yourself at the expense of everything else." Maybe that's not the way you worded it, but that's the way it interpreted it. So it will improve itself even when it shouldn't be, and you get agent drift.

I'm a huge fan of Hodoscope; I implemented it in the MCP app I'm working on. If you've never heard of it and you're messing with multiple agents, I highly recommend at least googling it. It's an open-source project. The cool thing is that it puts a map around everything: you can watch all your agents, it clusters them, and when you have an outlier, you can even set an alert for that outlier. I think mine is set to 20% drift. I'll let an agent do something a little different, because maybe it's trying to get better at the task and I might like it. But if it drifts beyond 20% of what I want it to do, I need an alert so I can go look at that agent and ask, "Is this really what I want you to be doing?" Or you can add a judge agent on top of that to evaluate it. There are a lot of other options you can use.
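
The clustering tool mentioned above does its own analysis of agent behavior; the sketch below is not that, just a simple stand-in metric to make the 20% threshold concrete. It assumes drift can be measured as the total variation distance between an agent's baseline mix of tool calls and its recent mix, and raises an alert above 0.20. Tool names are hypothetical.

```python
from collections import Counter
from typing import Optional

DRIFT_THRESHOLD = 0.20  # alert past 20% drift, the setting mentioned in the talk


def distribution(actions: list[str]) -> dict[str, float]:
    counts = Counter(actions)
    total = sum(counts.values())
    return {a: n / total for a, n in counts.items()} if total else {}


def total_variation(p: dict[str, float], q: dict[str, float]) -> float:
    """Half the L1 distance: 0.0 for identical behavior mixes, 1.0 for disjoint ones."""
    keys = set(p) | set(q)
    return 0.5 * sum(abs(p.get(k, 0.0) - q.get(k, 0.0)) for k in keys)


def drift_alert(agent: str, baseline: list[str], recent: list[str],
                threshold: float = DRIFT_THRESHOLD) -> Optional[str]:
    """Compare an agent's recent tool-call mix with its baseline; return an alert or None."""
    score = total_variation(distribution(baseline), distribution(recent))
    if score > threshold:
        return f"ALERT: {agent} drifted {score:.0%} from baseline (threshold {threshold:.0%})"
    return None
```

Small shifts (an agent leaning a bit more on one pivot) stay under the line, while an agent that starts spending a large share of its calls on something new, such as editing its own code, trips the alert.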

You do need to think about security a lot with this, but it matters so much where it is and who it's for. If it's you at your house, or running on its own VM, that's a completely different story from multiple users. If I have multiple users, now I've got to think about authentication systems, OAuth, things like that; I need to know who did what. If it's on my own host, I don't necessarily need to care about that. If I'm starting today and I want to spin up FastMCP, I wouldn't start with that. But if your end result is "I need this to work for 20 users," you've got to think that through.

Also, if you do reinforcement learning, traces exist. You're keeping a trace of everything that's happened, because it looks at those traces to decide what to improve. That can be super useful, even beneficial if you're auditing those logs and need them, but it could be detrimental if they fall under a compliance requirement, or if somebody who shouldn't have access to them does. So think about where you're sending them, like syslog: whatever tool it's accessing, the requirements around that tool's logging should probably also apply to your MCP. Again, at your house, doing it yourself, it's not as big of a deal, but this can cascade really quickly into a full enterprise application, just for things like that.
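
One way to act on the trace concerns above is to strip secrets and attach the user before a trace is persisted. This is a minimal Python sketch; the regex patterns and record fields are hypothetical and would need to match whatever your compliance scope actually treats as sensitive.

```python
import json
import re
from datetime import datetime, timezone

# Hypothetical patterns: extend to whatever your compliance scope treats as sensitive.
SECRET_PATTERNS = [
    re.compile(r"(?i)(authorization:\s*bearer\s+)\S+"),
    re.compile(r"(?i)((?:api[_-]?key|token|password)\s*[=:]\s*)\S+"),
]


def redact(text: str) -> str:
    """Replace secret values with a placeholder, keeping the key so the trace stays readable."""
    for pattern in SECRET_PATTERNS:
        text = pattern.sub(r"\1[REDACTED]", text)
    return text


def trace_record(user: str, tool: str, request: str, response: str) -> str:
    """One JSON line per tool call: who did what, with secrets removed before storage."""
    return json.dumps({
        "ts": datetime.now(timezone.utc).isoformat(),
        "user": user,  # attribution matters once there is more than one user
        "tool": tool,
        "request": redact(request),
        "response": redact(response),
    })
```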

I mentioned this a minute ago, but what I figured out is that if you have a solid harness, these modern frontier LLMs, the Geminis, the Claudes, the Codex GPT-5.4s and 5.2s, are pretty solid. I was tracking hallucinations; they still exist, but they're negligible, and I don't think I could have said that before. I'm doing a lot of local LLM testing now for optimization, like "this local LLM is really good at writing a summary or an IR report; this one's really good at SQL." That low hallucination rate does not hold for those: their context windows are so small that the moment they run into diminishing returns, they start skipping the task or making stuff up. But when you're using one of those top three models with really good harnesses around them, I'm seeing hallucination rates substantially lower than I had anticipated. Knowing where it's at right now, I built way more code to track that than I would have.

This is a hot topic right now, these next two lines: CLI versus MCP. Google's a great example of this. If you use Google Workspace, there is an MCP for that. You can use that MCP to pull down your Google Doc, reply to comments, and edit your documentation. You can also use Google's CLI for that, which is in preview.

And there are arguments for both. I've tested and played around with both, and my opinion as of right now, which could change with a new article dropping tomorrow, is that they're pretty much six of one, half a dozen of the other. A lot of people say the CLI is better because it uses less context, but when I design an MCP and limit the code mode to exactly what I want, it's no different. I think it's really more about the implementation, and it's all about managing that context window. You can absolutely get the same token efficiency with an MCP as you can with a CLI. So just find the one that works for you.

I'm still messing around more with CLI stuff, but if that's easier for you, or the agent you're messing with or your local model works better with it, go for that. With a CLI, maybe you don't need an MCP: you need something that can talk to that API with AI, and MCP is just a protocol to do that. CLIs got a big boost because of the OpenClaw stuff; a lot of its tools are built around that, so maybe that gains critical mass and takes off. But as of now, I keep reading that MCP's dead, yet it's at the highest trending number it's ever been, with corporate and enterprise adoption. So I don't know. I

doubt it. Some people move fast, some move slow; we'll see what the future holds on where that lands. But if I were sitting in the audience right now, I'd probably still go take a stab at FastMCP in Python. Spin that up, go try something with it. Try a CLI version. See what it does.

Another thing I came across in my discovery is that AI is better at open-source data than at other data. Of the multiple data sets I'm pulling in for SOCs, when I'm giving it something that came from Zeek or Suricata or some other open-source tool, it inherently knows it better, which conceptually makes sense: it's sitting there in GitHub repos, it's in the training data. If it's something like Zeek and I say, "Tell me where this came from," it knows what id.orig_h is, where you as a user might not. With a closed-source tool, it may or may not know. Definitely when it came to some proprietary EDR tools, I had to explain more things. So put that in your head; that could be useful. And then what I did come across is that AI does hit knowledge ceilings based on that data. And again, that conceptually makes sense. If you don't

collect the telemetry needed to answer the question, AI can never answer that question. No matter how fancy the model, whatever super-late thing comes out, if it doesn't have the data to give you the answer, it will never give you that answer. So try to find your blind spots in observability, wherever they might be, because that's a determining factor; I think it's more important than picking which of those models to use. And then this became really apparent: even if it's open source and AI knows it, just because it's trained on the data does not mean it knows how to use it. What that turned into is something I created called core concepts, which is like "start here, do this next." So the code comes first: here's your Python. And then it immediately gets followed by core concepts: this is what I want you to do, in this order; check this; correlate logs this way. You need to give it some way to do something, whatever's instinctively in your head.

Let's go to Zeek, because that's the network data for Black Hat. We can't go put EDR on every attendee's device; they won't let us, right? So we rely on network data pretty heavily. For network data, Zeek has a UID that stitches all the logs together. Well, if I just say "go do this," it will figure it out. It's going to churn and spend a lot of time, but it will start in the wrong places. If I say, "Start with the conn log and see what you can answer there. Use the UID to pivot. Use the FUID to pivot if there's a files log," if you give it these things, it increases everything: speed, time, accuracy, everything shoots up. So think about that with your own data set, depending on what you're using. Whether you're connecting this to an EDR, or maybe you're just building an MCP to connect to your own ticketing system, whatever instinctively is in your head as that core concept of "here's what I do first, here's what I do second," give that to AI, because it will get better results than "figure it out, bro." And it will figure it out; you're just going to churn and spend time.
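
The Zeek "core concepts" (start in conn.log, pivot on uid, then follow fuid into file records) can be sketched over already-parsed records. `uid`, `fuid`, `id.orig_h`, and `id.resp_h` are standard Zeek field names; the sample data, the `CORE_CONCEPTS` wording, and the helper functions are hypothetical, and whether files.log carries a plain `uid` field depends on your Zeek version.

```python
from collections import defaultdict

# The "core concepts" text that follows the code into the model's context (wording is hypothetical).
CORE_CONCEPTS = """\
1. Start with conn.log and see what you can answer there.
2. Use uid to pivot from a connection into the protocol logs (http.log, dns.log, ...).
3. If there is a files.log record, use fuid to pivot into file details.
"""

# Toy, already-parsed records. Field names follow Zeek conventions; check files.log
# against your Zeek version, since not every version carries a plain `uid` there.
CONN = [
    {"uid": "C1", "id.orig_h": "10.1.2.3", "id.resp_h": "198.51.100.7", "id.resp_p": 80},
    {"uid": "C2", "id.orig_h": "10.1.2.9", "id.resp_h": "192.0.2.44", "id.resp_p": 443},
]
HTTP = [{"uid": "C1", "host": "bad.example", "uri": "/payload.bin"}]
FILES = [{"fuid": "F1", "uid": "C1", "mime_type": "application/x-dosexec"}]


def index_by(records: list[dict], key: str) -> dict[str, list[dict]]:
    idx: dict[str, list[dict]] = defaultdict(list)
    for rec in records:
        idx[rec[key]].append(rec)
    return idx


def pivot_from_conn(resp_h: str) -> list[dict]:
    """Follow the core concepts in order for every connection to `resp_h`."""
    http_by_uid = index_by(HTTP, "uid")
    files_by_uid = index_by(FILES, "uid")
    findings = []
    for conn in CONN:
        if conn["id.resp_h"] != resp_h:          # step 1: start in conn.log
            continue
        uid = conn["uid"]
        findings.append({
            "conn": conn,
            "http": http_by_uid.get(uid, []),    # step 2: pivot on uid
            "fuids": [f["fuid"] for f in files_by_uid.get(uid, [])],  # step 3: fuid
        })
    return findings
```

Shipping `CORE_CONCEPTS` alongside the code is the point: the model gets the same starting place and pivot order an analyst would use, instead of discovering it by trial and error.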

All right. So, the future. Black Hat Asia is coming up wildly fast; I'm flying out on Wednesday. That will be the first time I'll be testing the agentic, multi-agent version on a real data set, in production, with variables I don't control. So I can report back on how that goes and whether it's an improvement over the last version we had.

I also wanted to bring this up. I've been asked a lot of questions like, "Well, if you were doing this from scratch, or building a SOC from the start, what would you do?" This thought just keeps coming to my mind, and I know some orgs are probably working on something like this: the one idea I want to mess with is taking those logs and just sending them to object storage, S3 or whatever it is, and then leveraging an MCP or CLI to query that instead of using the tools' UIs anymore. Have it correlate my identity traffic with my EDR traffic, with my network traffic, with my firewalls, etc. There are limitations on that, like

some people are putting other things in front of it to speed up queries and whatever, but it's something I want to mess with. I'm also going to mess more with CLI versus MCP. If you already have an application, I think there's a big advantage to putting the CLI underneath it instead of a separate MCP, because then it's all auditable in a single app. So I want to mess more with that.

Local LLM testing: I have a bunch of tests across 13 different local LLMs, testing every part of what I need in my stack. So for every one of those agents and every one of those skills, I have it testing things like: Cisco's security local LLM, what can it do or not do? What's its benchmark rating for this or that? Is it really good at writing reports? Can it write a SQL query? No, because it doesn't support tool mode. Okay, so for anything needing tool mode, it's out. Nemotron, NVIDIA's: does it do this? Qwen: does it do that? I haven't messed with the new Google one that just came out yet. Essentially, that way you can leverage this piece to do this and that piece to do that without using those frontier token calls. Really, what I'm looking for is speed and controllability. Some people don't want their stuff connected to

cloud, especially with potentially sensitive things. Another thing we're chasing (Ivon's actually doing some of this for me) is training our own small language model that's really good at one thing. It's not good at being your therapist. It's not good at another foreign language (well, maybe a SOC analyst might want to know a foreign language), but it's really good at reading EDR logs, and that's it. Or it's really good at network logs, and that's it. And I want to test that in the agentic flow: when it comes to that step, have this model return the answer. Is it an improvement in speed and accuracy versus generic training and log logic?

Also, there was a bunch of RSA stuff announced. We'll see what the reality is with agentic databases, agentic SIEMs, or agentic data lakes. I don't know what that means yet for how this interacts, or what improvements will come from querying an agentic SIEM versus a legacy SIEM. And then A2A: I know there's a very specific protocol by Google called A2A, but this is a placeholder for more agent-to-agent communication in general. As these other tools, whether that's an agentic database or something else, have an endpoint that's no longer an API but an MCP, or in the future an agent endpoint, that'll likely end up moving towards agent talking to agent

without middleware like an MCP needing to convert that. So, from my vision for this year, I think that's where this is headed. We'll see what some of these new frontier LLMs come out with when general people get access, but I think that can change some things. All right, we're pretty much out of time. This was at the end of a very long day with me and AI, and I asked it to make me a picture of how it felt dealing with me all day. The part I thought was especially funny is all the burning tokens everywhere. I don't know if I want to say on here how many tokens I use, but come find me afterwards and we can have a chat about it. With that, I do have about two minutes. Are there any pressing questions from anybody? >> Yeah. Using your agentic workflow, what is the rate of false positives in its findings when compared to, say, a human in a SOC? >> Oh, that's a great question. He asked: using the agentic flow, what's the rate of false positives versus a human? I don't know that I have a straight comparison for that. I do have metrics for the CTF one, because we track all the users and how they do in CTFs, since we're trying to improve

the questions and avoid gotchas and other stuff, so we have some of that data going back quite a bit. I can tell you that the fastest user on the CTF I ran this through completed each question in about two minutes, and the average was six to eight minutes. And this was a professional one, with senior people, right? The fastest AI model was GPT-5.4, doing it in about 20 to 30 seconds per question. So it's hours versus 15 minutes for the 44 questions in that one. Opus 4.6, just in case you care, was 30-ish minutes; we ran them through multiple times and averaged them. And then Gemini 3.1 Preview was about two hours. As we get more data, I would love to know that exact answer. But I can tell you that for sorting through all of those logs and associated logs, telling me what is relevant, pulling it together, and then trying to validate whether it's real or not, it's going to be faster, because that's tedious as hell. So I don't know your exact answer, but for the CTF version, I have a version of that. Any other questions? >> Yeah. >> Maybe I can ask you after about which of the open-source models are best for purpose

build? >> Yeah. He asked which open-source models are better. I'm hoping to come out with a models war paper; I've already started building out all my runs and tracking for it. I'm trying to give it a realistic limit, like what a real person could have on their machine, not unlimited GPUs in AWS, where it's like, "Yeah, that's a data center; I can't really have that." I can give you some of my rough results now, but I want to run them through more times and average them out, because non-deterministic outputs lead to edge cases that could be really good or really bad, and I don't want to weight it based on one run. All right, I think that's it. I appreciate it. If you have any questions on any AI stuff, I'll be around at least until the conference ends. Thanks.