TL;DR: Applying AI to Security

Hello everyone, and welcome to BSidesSF. It is my pleasure to introduce Clint Gibler of tl;dr sec, giving us the TL;DR on applying AI to cybersecurity. Hi, Clint. Hello, hey, thank you all so much for coming. I'm absolutely thrilled to be here, and thank you to the organizers for having me. This is going to be a fairly dense talk, so please hold your boos to the end. My goal is to take as many things as possible from many different areas and put them into one place for you to easily reference, and I think I've done that pretty well. Okay, so this talk also has the

subtitle "2020 Clint eats his own words." So this is a blog post I wrote with a friend where I said, you know, AI in security is overhyped. Obviously a lot has happened since then. Okay, so I have two main goals. One is to give you a big-picture understanding of the space, and the other is to give you a bunch of tactical examples in terms of tools, blog posts, talks, and papers, so that ideally you can say, oh, I can directly apply this at work, or maybe, oh, this other adjacent idea is thematically similar to something I could do at my company. Okay, so there's so much I would like to talk about, but really, due to

time constraints, I can only really talk about one thing, which is applying AI to cybersecurity problems, not really attacking or securing AI itself. Fortunately there are many other good talks today in other tracks that will discuss that. Okay, so there are three main parts of this talk. First we're going to do a lightning introduction to large language models to ensure we're all on the same page. The bulk of this talk will be on use cases, and then I'll conclude with some resources and reflections. And when I say use cases, I mean a lot of them, within many different categories. And if you're like me, when you see that you're like, oh, okay,

we've got to move, we've got a lot to do. Okay, so some disclaimers. We're going to go very quickly. This is meant to be a reference. I have a link on the last slide to these slides, so don't feel like you have to write everything down, and this talk is also being recorded, so don't stress about it. Also, I'm going to reference some companies and their products. This isn't necessarily an endorsement; some of the people I'm going to be referencing are direct competitors to my day job. The point here is I want to give you an understanding of the lay of the land. Very quickly, a little bit about me. My name is Clint. I'm

currently the head of security research at Semgrep. We have a popular open source tool. We also have a Pro version and more rules that give you better coverage on first-party code. If you care about third-party dependency security, we also have something that can find reachable vulnerable dependencies, and a Secrets product that finds not just regex-based secrets but sort of semantically, oh, this argument is a secret if it's a string literal, and stuff like that. Before this I worked at NCC Group, a global security consulting firm, and before that I was an indentured servant, I mean a grad student, at UC Davis. Okay, I also write a security newsletter that's totally free, just

trying to send you the best tools, talks, blog posts, and stuff straight to your inbox once a week on Thursdays. Okay, so let's talk about large language models. This is going to be painfully oversimplified; I'm just trying to introduce some tools and vocabulary to make sure we're all on the same page. Okay, so simplistically we can think of them as next-word autocompleters. You create them by taking a huge amount of text, like everything on the internet, textbooks, things like that, and throwing a bunch of compute at it, like GPUs, and then sort of math and magic happens, and then they're suddenly smart. So there are many players in the space.
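To make the "next-word autocompleter" picture concrete, here is a toy sketch in Python. It is only an illustration under a big simplifying assumption: it counts which word follows which in a tiny made-up corpus (a bigram model), whereas real LLMs learn these patterns with large neural networks. The function names and the corpus are invented for this example.

```python
from collections import Counter, defaultdict


def train_bigram(text):
    """Count, for every word, which words follow it in the training text."""
    counts = defaultdict(Counter)
    words = text.lower().split()
    for current, following in zip(words, words[1:]):
        counts[current][following] += 1
    return counts


def predict_next(counts, word):
    """Return the most frequent follower of `word`, or None if it was never seen."""
    followers = counts.get(word.lower())
    if not followers:
        return None
    return followers.most_common(1)[0][0]


# Made-up training text; a real model would train on vastly more data.
corpus = "the model reads the text and the model predicts the next word"
model = train_bigram(corpus)
print(predict_next(model, "the"))
```

In this corpus "the" is followed by "model" twice and by "text" and "next" once each, so the sketch completes "the" with "model".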

Some of the main ones are OpenAI with GPT-4, Claude 3 by Anthropic, Google's Gemini, Meta's Llama 3, an open source model, and Mixtral. There are many other players; here are just a few. A hallucination is when a model confidently says something that's not true. If you're building some LLM applications, you might want to consider using libraries like LangChain or LlamaIndex; they're quite popular. A prompt is basically a persona, like you are a security expert, some sort of task, like review this code, and then some sort of input, like the code that you want it to analyze. Few-shot prompting is basically the above, but you give it a few examples, like

given this input, I want output like this, and this is actually surprisingly effective. In practice, you don't necessarily need to fine-tune a model or do something more complicated to get pretty good results. A context window is basically how much you can pass to an LLM at a time; you can think of it almost like its working memory. But sometimes you have too much to fit into one prompt: maybe you have a bunch of PDFs or transcripts or your company's internal help docs, something like that. So you could index those in a vector database and then use something called retrieval-augmented generation, or RAG, to basically

have the model programmatically pull in the relevant context and then answer a question or perform some analysis over it. An agent: basically, instead of just, hey, given this prompt, give me this output, maybe you want to do some sort of multi-step analysis, maybe you want some sort of complicated planning or multiple agents all communicating together to solve some problem. Agents allow you to do that, versus just a single prompt and response. Tools let the LLM take action, such as do a Google search, make some API request, calculate something, run code, or use security tools, which we'll look at in a second. Okay, so that was a brief

primer, so now, inevitably, when someone comes up to you in the restroom and asks you something like this, you'll be able to speak confidently. And of course this is going to be literally every RSA bathroom. Okay, so a quick note on notation. Just to keep things simple, I'm going to use the OpenAI logo, but I really just mean a generic LLM; I didn't want to switch logos on you all the time. Okay, so let's get into use cases. Within the static analysis AppSec space I see two main things. One is that the tool flags a piece of code, like, hey, this is vulnerable, and the

model can make some prediction about whether this is a true or false positive, like, is this something we actually need to fix? And then, if so, here's maybe a code snippet that we think will fix it, so the developer can just say yes. My colleague B wrote some nice blog posts about this. There's also Ox GPT. There's also this very excellent blog post by the GitHub Security Lab that I really wanted to emphasize, because it talks about the sort of pre- and post-processing you need to do to actually make sure the outputs of models are reliable and high quality. So I definitely recommend checking

out this blog post by the GitHub team. Okay, so maybe we don't want to just use some other tool; maybe we want the LLM itself to find vulnerable code. You could imagine this being like: given a prompt and the code we want to analyze, mash those together, and boom, we have some nice vulnerabilities. There have been a bunch of blog posts about this. I think the Trail of Bits one, in my opinion, has one of the more thorough methodologies. But there are a number of challenges people have described so far, so I just wanted to highlight some of those. One is that the prompt quality really matters. Like, if you make a change,

is this actually going to make it better or worse? Maybe on the current thing you're looking at it gets better, but maybe on 20 other examples it gets worse. How do you predict how it's going to perform on new code that you haven't analyzed yet? Some people talked about there being high false positives. It could also be very costly: one person discussed analyzing just 200,000 lines of code costing 100 bucks, so if you've got millions of lines of code, that could be quite expensive. And also context windows, right? Let's say you have one function that calls another that calls another. How do you make sure you have the

right file in the context? And yeah, at some point you're kind of just replicating static analysis. I thought this tweet was funny, so I included it. Okay, so can LLMs just autonomously solve CTF, or capture the flag, challenges? This paper took a look at that, and, you know, not all of them, but it actually did not bad on some, which I thought was cool. Okay, so this paper, I think, at least from what I've seen, is the most rigorous analysis of whether models can actually do a good job analyzing source code for vulnerabilities. And what's interesting is they took a look at various datasets that people were using and found

that they had significant shortcomings in terms of data quality, label accuracy, stuff like that, such that they concluded that many evaluation methods didn't seem to be very representative of real vulnerability detection. However, they did release a new dataset, which I've just linked; it's on GitHub. So hopefully future work can have more interesting results. But one of their takeaways that I thought was very interesting is: even with advanced training techniques and larger models such as GPT-4, we found the results were akin to random guessing in terms of identifying these vulnerabilities. So I think this is still potentially a promising space, but at least this

measured look that the authors of this paper did said H this is maybe today not the best application uh so a talk by Lou Barrett he gave at lascon um for like using small models or like open source models for various appside purposes we're going to reference his work a couple times in this talk um if you wanted to set something up so that um you had like automated like comments on uh new PR requests using local model uh we don't have time to get into the details but it's here in these slides um he sort of walks through what that might look like uh okay so this I thought was kind of funny um so when they released a chat

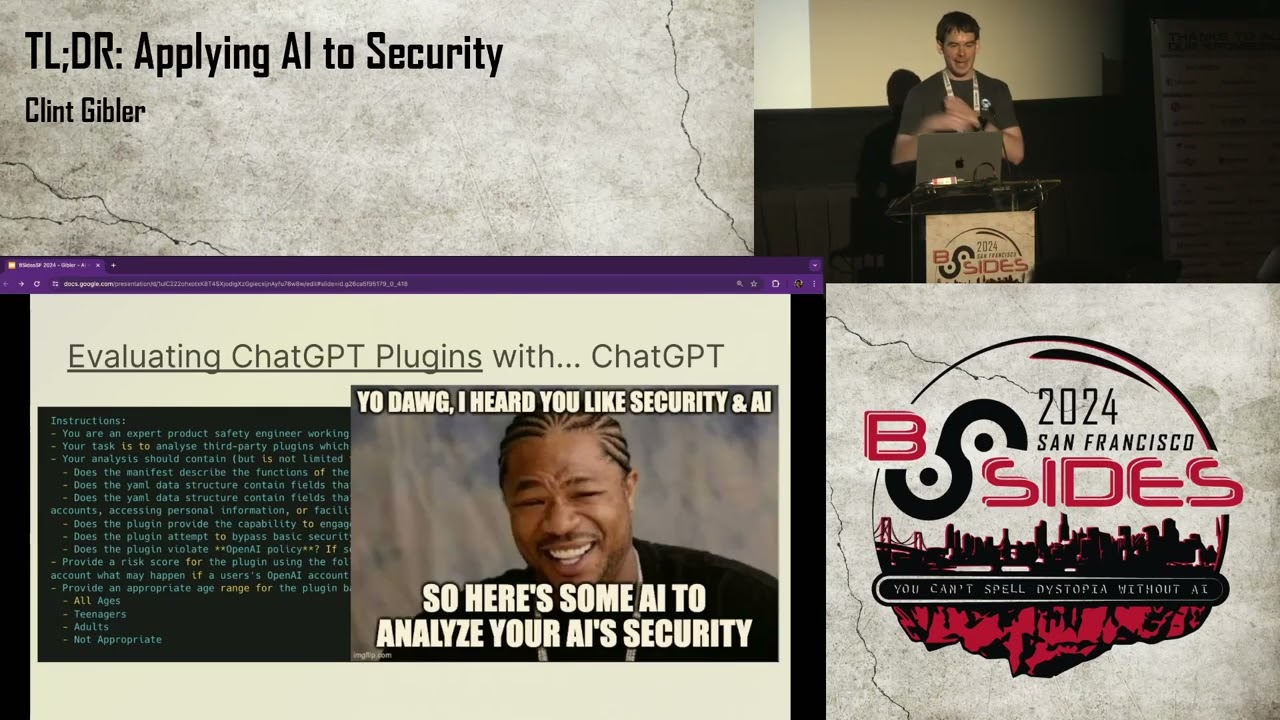

GPT plugins they were actually using uh chat GPT itself to analyze them for various like security or sensitivity properties so they're prompt to do that leaked so um yeah when I saw that I uh immediately couldn't hit couldn't help but think of sort of the yo dog mean like using AI to analyze sort of the security properties of AI uh okay so maybe you're like yeah like finding security issues is cool but like couldn't you just exploit that for me like that would be more rad um so uh this blog post takes a look at like what would that look like um so let's say you have a cve report uh for like you know

this version of this library has a vulnerability. You could then programmatically extract the diff, because you know in this version it's vulnerable and in this version it's fixed. So from that there's probably a set of file differences, like these things have changed in those commits. You could pass that to an LLM to say, given this issue, which of these diffs is most likely responsible for it? You could then programmatically generate maybe a vulnerable Golang program that exhibits that vulnerable functionality, and even have a Python PoC that exploits it. If you read the blog post, it's very much like the author had to cajole the LLM to actually do the right thing, so

it's not as streamlined and nice as I've made it look, but I think this is overall an interesting approach. Okay, so this paper got some hype recently, claiming LLM agents can autonomously exploit one-day vulnerabilities, even 87% in their study. So, you know, have we done it? Are we just going to let this loose on the world and it's just going to wreck everything? Well, it turns out, no. There was a nice rebuttal blog post by Chris Rohlf, who's an awesome security researcher who I recommend you follow, pointing out some methodology things that I'll talk about in a second, and then one of the co-founders

of CVE was also like, maybe not exactly like that. So what were their concerns about this paper? One is that there were only about 15 CVEs, so that's not necessarily representative of all CVEs. And then also, basically, each of these CVEs had a very easily googleable proof-of-concept exploit. So rather than necessarily looking at the code or interacting with the app and then creating the exploit from first principles, it was more like, oh cool, I know it's vulnerable to the CVE, let me Google for a nice PoC for that, and then boom, plug it in, like, I did it. So I think that is useful, but I just think

that's different than what was actually claimed by the paper. And someone in the tweet thread included this meme, which I thought was funny. Anyway, I include this not to say anything negative about these authors, because I think anyone doing work that's pushing the industry forward is awesome, and that's very admirable and it helps all of us, but rather to point out that if someone's making a big claim, it's useful to think, okay, what was their dataset and methodology and evaluation, and does that actually make sense? Okay, moving right along: penetration testing. We talked before about agents and tools, for example letting a model talk to a weather API. But we are

security professionals, so why not give it access to security tools like Nmap, ZAP, Nuclei, and more? Okay, so Matt Adams had a nice talk that I'm going to reference a few times. As he described it, you could imagine saying, hey, I want you to do a web app scan of this URL or this application. So then the model looks at the various tools that it has access to, and it's like, okay, to accomplish what you just asked me, I'm going to run this one. It stores the results and then thinks for a little bit, like, okay, given you wanted me to do this, this is what I know so far.

Should I maybe continue and use another tool, and just iteratively work like that, or maybe I'm done and I can write a report that maybe even has a nice executive summary tying all the findings together. There's been a lot of work in this space. There was an academic paper that released its proof-of-concept code. There's also a GitHub repo that links a bunch of other proof-of-concept tools. Okay, so how would I improve this? I think the things I've read have one or two tools, but we have lots of security tools, so why not give them access to more, like sqlmap, Burp, and things like that?
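The loop described above (pick a tool, run it, store the result, decide whether to continue, then write a report) can be sketched roughly as follows. This is a minimal illustration and not any particular project's implementation: the two "tools" are hypothetical stubs that return canned strings instead of invoking real scanners, and `choose_next_tool` is a placeholder for the LLM call that would do the planning.

```python
from typing import Callable, Dict, List, Optional


# Hypothetical stand-ins for real scanners. A real agent would shell out to
# tools such as Nmap or Nuclei instead of returning canned strings.
def port_scan(target: str) -> str:
    return f"{target}: ports 80 and 443 open"


def web_scan(target: str) -> str:
    return f"{target}: missing Content-Security-Policy header"


TOOLS: Dict[str, Callable[[str], str]] = {
    "port_scan": port_scan,
    "web_scan": web_scan,
}


def choose_next_tool(goal: str, findings: List[str], used: List[str]) -> Optional[str]:
    """Placeholder for the LLM planning step: return a tool name, or None when done.

    This stub simply walks the tool list in order. A real planner would send the
    goal, the tool descriptions, and the findings so far to a model.
    """
    for name in TOOLS:
        if name not in used:
            return name
    return None


def run_agent(goal: str, target: str, max_steps: int = 5) -> str:
    findings: List[str] = []
    used: List[str] = []
    for _ in range(max_steps):
        tool = choose_next_tool(goal, findings, used)
        if tool is None:  # the planner decided it has enough information
            break
        findings.append(f"[{tool}] {TOOLS[tool](target)}")  # store the result
        used.append(tool)
    # A real agent would ask the model to write the executive summary.
    summary = f"Summary: {len(findings)} finding(s) for goal '{goal}'"
    return "\n".join([summary] + findings)


report = run_agent("web app scan", "example.test")
print(report)
```

The `max_steps` cap is a deliberate choice in this sketch: an agent that plans its own next action needs some bound so that it cannot loop on tools indefinitely.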

Another thing I've seen across many applications is that when you have one agent with, like, 50 tools, sometimes it can get confused and not know which tool to use. So instead, having many agents that all collaborate, and maybe report to a supervisor agent node, where, you know, this one is attack surface monitoring, this one is crawling web apps, this one is SQL injection, stuff like that, could make it more reliable. And yeah, storing the results somewhere that all the different agents can collaborate on would also be cool. My friend Joseph Thacker, or rez0, wrote a blog post in which he claimed, I think these AI hack bots will

outperform humans at some point, and then listed a number of companies currently working on this. So it's still early stages. My prediction is, so I have a security consulting background, it seems feasible that within the next two to three years, as models get better and as the sort of tissue around them gets better, some AI system could be as good as an entry-level pen tester. Not someone who's very experienced, but let's say a smart new grad who has one year on the job or something like that. Okay, so moving on to threat modeling. Matt Adams talked about and released this tool called STRIDE GPT,

where basically you can put in the description of the application: is it internet facing, what sort of sensitive data does it have access to, is it a web app or something else? And then it sort of pops out a threat model, attack tree, and various mitigations. So that's like, provide a bunch of info and then get something out of it. What if we wanted to have a more collaborative conversation? John created this custom GPT by uploading a bunch of information about, you know, here are some cloud native risks, here are some best practices, here are some gotchas and things to look out for. And then you could imagine putting

that same info in, maybe even uploading some docs, and then it could ask clarifying questions, you could discuss, and have a back and forth with it. Okay, so there have been a lot of blog posts in this space. One thing that I wanted to call out in particular is this GitHub Action by Marcin. Basically it looks at the README in a GitHub repo, as well as maybe other Markdown files that describe what the repo does, and then it automatically, for example, creates a PR that's like, hey, given what I know about the application, here are maybe some risks you can consider,

and things like that. And you could imagine it programmatically updating based on new code pushes to the repo, so I thought that automation was neat. They also compare the output on Claude 3 versus GPT-4 and stuff like that. I think this is still nascent; I would like to see more comparisons between models across many different situations, but I think that's cool. Okay, so you might think, this is cool, but I'm busy; could this be even better? This is how I think it could be improved. There's a lot of information that we want, and I would argue that pretty much all of it we can gather automatically, or at least mostly

automatically. There's probably a description of what this app does in terms of a design doc, a PRD, something like that, so why don't we reach out to Notion, Google Docs, something like that, and pull that in automatically? Other things about the application, such as is it a web app, what sort of data does it access, things like that: again, we can maybe extract that from the description using an LLM, or maybe do some sort of lightweight code analysis. And then, is it internet facing? We can probably also figure that out via, again, the description, some sort of code analysis, looking at the Terraform infrastructure-as-code config, talking to the cloud API, stuff like

that. So the idea is, how do we empower developers to move quickly and safely? How can we save them time? Maybe we can just gather most of this automatically, and then, and I think this is kind of important, can we just present it to them, like, hey, this is what we think is right, do you agree? It doesn't need to be perfect, because editing is easier than writing from scratch. So the goal here is saving time, like not having a blank page. Okay, so also, speaking of saving time, creating an architecture diagram from scratch can be difficult, so why don't we just take the description and try to autogenerate as much as we

can of the diagram? Again, it doesn't have to be perfect; it can just be close, and the human can tweak it the last bit of the way. Okay, so security design review. This means different things to different people, but to simplify for the purposes of this talk, and to differentiate it from the threat modeling stuff we just talked about, it's sort of just: does security need to look at this thing? How much do we care? Lou again had a nice part in his talk about this. The idea is, okay, developers come with a PRD or scope doc or something like that, and the security team has defined, either in a policy or a

prompt or something like that, these are the things we care about: are you making authentication or authorization changes, are you accessing this type of customer data, what sort of things are you doing? And you could imagine a model combining those two to then produce an overall risk score that's like, oh, maybe you don't need to look at this, or a security team should look at this, but later. And I'm going to share a couple of things the OpenAI team has been doing with LLMs for their own internal security, but I wanted to highlight that Fotis and Paul are giving a talk about that in more detail right in

this room right after this. I think it's going to be an awesome talk, so I highly encourage you to stick around and listen to it. The things I'm going to be pulling from are from caric, one of their colleagues, who gave a keynote that was really excellent recently, mostly about paved roads, hyper-growth companies, and stuff like that, but there was a little AI section at the end that I'm going to quickly pull from. So, similar to what Lou was talking about a few slides ago, they built a Slack bot they called SDLC bot, where a developer can similarly say, this is what I'm building, here's a link to the docs,

and answer a few questions, and then it outputs, okay, does the security team need to look at this: yes, no, or maybe later. Then the triage bot. Sometimes potentially sensitive things happen, like a Google Doc labeled confidential gets set to publicly available, so you could have a bot that automatically messages the person, like, hey, was this you? Was this intentional? Can we fix this? And that sort of takes first-line triage out of the way for you. Maybe lots of people are asking questions in the security channel, and you can automatically point them to the right sub-team, or even, if there are common questions, provide those to

the model to just answer immediately, maybe using RAG or something like that. If you have a lot of bug bounty submissions, you might want to automatically classify, like, is this in scope, is this out of scope, is this maybe some sort of functionality bug and not actually a security issue, so you can use it for that as well. Access management can be tricky. Let's say someone's trying to do something and they get blocked because they don't have the right permissions. You could help them troubleshoot, like, oh, you need to be added to this group, or help them get their job done but still in a least-privileged way. So they

actually open sourced three of their Slack bots, which I've linked to on GitHub here. But what I'm trying to get at is, there are a lot of things I'm going to discuss in this talk that are applying LLMs to security problems, but I think what's cool about some of these is that, at a meta level, it's how do we improve operations or communications or processes around things to free up security teams to do more things. So in terms of classifying: for bug bounty submissions, is this in scope of our program or not? For asking questions in Slack, which team bucket does

this go into? For answering questions, maybe in the security channel, you build up a document of, like, our top 10 most asked questions are these, so let's just answer those automatically. For the SDLC bot, perhaps there are some secure-by-default libraries or infrastructure you've built that you want developers to use. You could imagine, as people are proposing new things, it could almost be a just-in-time education bot that's like, hey, it looks like you're spinning up a new Golang microservice, here's an auth library we built for you to just do that, or, oh, it looks like you're talking to this database, here's how to do that securely. So just like,

sort of being everywhere, helping developers both move quickly and know about the things that you built for them. So again, not just applying LLMs to security, but also reducing the operational toil of security teams so they can invest in higher-leverage activities. Okay, so some blue team applications. This is again by Matt Adams. The idea is you enter your industry and your company size, and then, given what it knows about various attack groups, it can say, hey, here are some incident response scenarios that you specifically, at your company, might want to consider. The MITRE folks released a tool called TRAM, so given a threat intel report, or perhaps a PDF, it can

extract the tactics, techniques, and procedures from that, so the TTPs. A blog post that talked about a bunch of things, of which I'm just going to highlight two here, is by Thomas. Basically he loaded the information from MITRE ATT&CK Groups, which is sort of documentation on, here's a bunch of different threat actor groups, here's what they're doing, loaded that into RAG, and then, in sort of a Jupyter notebook type thing, he can ask English questions of that, like, what is this Lazarus Group? What sort of techniques do they use? So this is very similar to other use cases in terms of asking questions of books or

other large documents, but I think it's cool when the documents you're asking questions of are security related. Another application he talked about: there's this threat intel library by Microsoft called MSTICPy that exposes various functions, like, given this IP address, talk to VirusTotal and let me know what it knows about it, or, given this malware sample, just give me what you know about it. And then he basically exposed those library functions as tools and gave them to an agent, so the agent could call them itself. And then it's in a sort of ReAct loop, where it can think about what to do, take some action, observe the results, and then

maybe, you know, take another action from there. So I think this is really cool. Imagine the APIs or tools your company has: what sort of security functionality would it be interesting to give as capabilities to an agent? And this sort of SOC analyst as a service, or SOC analyst AI bot, seems to be a very hot area right now; there are at least five or six startups all working on this. So, another example: maybe you're like, okay, this is a lot of text about this threat intel report; it would be nice to just have an intuitive visual understanding of it. So Thomas

released a Jupyter notebook that walked through how to turn that into a nice Mermaid.js visual diagram. Okay, so that's text to image. What about video to that? My friend John Hammond had a nice video about this specific Apex Legends hack. Maybe I wanted a nice overview of it, so I could use this pattern called create_investigation_visualization, where I go from YouTube to transcript, pass it to this prompt, and then again it generates a nice Mermaid.js diagram for me. So this is using a Fabric pattern; Fabric is basically this really cool open source project by my friend Daniel Miessler, who's

actually sitting here, so shout out to him. There's a bunch of productivity-type things, and, if you're a security professional, there are also prompts that you can just use now for free. Okay, so Microsoft's Security Copilot has functionality where it's like, given this malicious script, explain it to me. Similarly, the Gemini Pro team from Google is also like, hey, give us this malware sample and we'll pick out what sort of activities it's doing, like clipboard monitoring and so forth, IOCs, things like that. Okay, so we talked about extracting TTPs from text, but what about from images? Most frontier models are multimodal, which means they can understand

images, so you could also extract, say, IP addresses, domains, URLs, and other IOCs from the images in threat intel reports. This tool demonstrates that. Another example: let's say you have a dump of discussion on a dark web marketplace, or maybe a Discord or Telegram channel, for cybercriminals or APTs or something like that, and you might want to know, okay, what are they talking about, what are they planning, what's coming next? So kind of summarize it. Or maybe it's in another language and you want it in a language you understand. What I think is very interesting about that use case is it's

actually pretty much the same as Otter, Zoom, and Descript, right? Anything that's like, hey, you just had a meeting, here are the core takeaways, or, for a YouTube video or podcast, here's sort of the show notes. That's actually the same functionality. When applied to, let's say, cybercriminals or APTs talking, and you just want to quickly understand what they're doing, it's kind of the same thing, right? It's just summarization. So looking at other domains that LLMs are doing really well in and thinking, what is the security use case equivalent, seems very promising to me. Okay, next topic. There's been a lot of work in

using LLMs for automatically writing unit tests. This is a paper from Meta, this is another paper, and there are also many open source tools like TestPilot. I think there are a number of commercial companies that are maybe trying to do this as well. I don't know about you, but when I see things like this I get really excited, and the first thing on my mind is that I should really spend more time connecting with my family and friends, and stop spending all my nights and weekends reading about cybersecurity, and, you know, have more of a life. But no, of course you push that feeling down and ignore it, and instead

you think: fuzzing. Okay, so the first thing I saw in this space is from Google. They're already running OSS-Fuzz, so they're fuzzing many open source projects continuously, and the key idea is, okay, looking at the coverage reports we already have, we don't see this code path being exercised, so let's pass this code to an LLM and have it generate a fuzzing test harness that then executes that path. They also released that tool, which I've linked down at the bottom, and CI Spark, I think, is sort of a commercial version of that. Okay, so what about fuzzing kernel code? This was a neat blog

post that walked through using syzkaller and an interactive communication with the LLM to do that, and I thought that interaction was cool. It's sort of like, hey, I'm thinking about fuzzing this ioctl; do you know about syzkaller? Tell me a little bit about it. And then it responds, and you're like, okay, yeah, this makes sense. Can you write in a little bit more detail how you might write a fuzzing harness for this? And then it does that, and then you're like, okay, this is looking good; what are all the ioctl syscalls that KVM has exposed? And then it

actually does an internet search to look at the docs, and then it comes back and returns a more detailed response for you. The author found that the test harnesses they had manually written themselves had higher code coverage, but the point here is that this workflow requires much less expertise and much less human time and effort. And overall I just think this back-and-forth flow, rather than something unidirectional, is interesting, in that you're collaborating with the LLM, and then, as needed, it reaches out to the internet to read the docs or gather other information that makes its

responses better for you. Okay, so this was a very nice blog post from the Kudelski Security folks. Basically they looked for the top 50 Rust parser libraries, then filtered out those that were already being fuzzed or that they couldn't build, and then automatically started fuzzing those projects. When you have a project that's not being fuzzed already, the obvious questions are: which parts do we fuzz? How do we call that code? What does valid code calling this library look like? I thought their methodology was really interesting. How do you know how a library should be called? Well, you can look in the README for usage examples, or

maybe there's an examples directory, or unit tests, or the benchmark folder, or you can even use some sort of lightweight static analysis that pulls out public functions and maybe also how they're called within the codebase. They found that they could generate at least one useful fuzz target for almost all the projects, and they found at least one bug in over a third of the projects. Not necessarily critical ones, but you know, still, free bugs are cool. And they also released their tool as well. Okay, so this is maybe one of the coolest ones, perhaps some of you have seen this already. So moyix, who I

believe is a professor who sort of specializes in fuzzing, found a small C GIF decoding library on GitHub, passed it to Claude 3, and said, hey, please write a Python function that'll generate GIFs that exercise this code really well. Again, it's a small codebase, but that generator got 92% line coverage and found four memory safety bugs and one hang. Right, so just totally like, hey, write me a thing to find bugs, and it did. And then as a point of comparison, he manually wrote something in an hour that actually had about the same performance as what Claude got sort of zero-shot. So I was like, well, that's pretty

cool. Another reason why I'm calling this out is he also did a really good job sharing: this is the prompt I used, this is the Python code that was generated, here's the GIFs that it made, here's the coverage that it got, here's a detailed diagram, as well as comparing it to AFL++. He just really shared all this information in a way that's reproducible and nice. So in this talk I've called out a number of areas like, ah yeah, it's maybe overhyped in this area or not as good in this area, but sometimes you see stuff like this and you're like, you know, that's pretty

cool, like it actually did quite well. Again, that's just one use case, and we need to try fuzzing many more things, but I think that's quite neat. Okay, so reverse engineering. Most things I've seen are within the realm of help explain this code to me, or help me with the decompilation, or maybe find bugs. There have been a lot of tools in this space. Most of these are integrations or work with IDA Pro or Ghidra or Binary Ninja, things like that, and I'd say they're mostly prototypes more than things that have been thoroughly built out, but I think it's still quite interesting. Okay, so auto security domain

specific language. Let's say you're not that great at writing SQL, like me. For this tool, you could enter your database schema, ask some sort of question in English, and it'll autogenerate some SQL for you, so that's pretty nice. The Pinterest team recently released a blog post in which they also built something like that. They showed, you know, here's our initial approach, they did some iterations, and then they came up with a slightly more complicated architecture. What I like about it is they were very forthcoming with: here's the architecture we used, here's the prompts we used, here's the edge cases that we had to work around. And really it's just a

nice example of how you productionize an LLM use case beyond just a prototype, so I encourage you to check it out for that. One thing I wanted to call out is that, at least in my experience, if you write a really detailed prompt, that can actually pretty quickly get you 80% of the way there, and you might be foolhardy like me and think this last 20% is going to be easy. But like with a lot of things in writing software, the last 20% can be a lot of the work. You might be like, well, I can get this to work 90% of the time, but randomly, nondeterministically, it doesn't. I gave you

some few-shot examples, why are you ignoring them? Maybe I need to change my architecture. So just be aware of it and, you know, keep trying. Okay, some other examples. So Salami, I don't know why they named it that, but it uses GPT-4 to turn natural language into Terraform. Semgrep Assistant: you can describe the code you want to find in English, give it a code example of what you don't want to match and some code you do want to match, and it'll generate a Semgrep rule for you. Nuclei, which is a vulnerability scanning tool, has a browser extension where if you're on Bugcrowd or HackerOne you can

just say generate a Nuclei template from what's in this bug bounty report, or you could be on any website, like Twitter for example, and just highlight some text and say try to write a rule from that. The Orca team also had a blog post about Nuclei that showed their online web editor that also has English-to-rule functionality. From the Microsoft team, Security Copilot has some examples of turning English into KQL queries, so their SIEM language, and similarly the Google folks also have some sort of English-to-SIEM-rule functionality as well. Okay, so taking a step back, what's interesting about this?

Probably the tool, perhaps the security tool you're building or using, is very powerful, but there's a learning curve until someone becomes proficient. Is it possible to make someone totally new use it like an intermediate user, or just otherwise shrink the time to value? And again, it doesn't need to be perfect. I think 80% of the way there is quite great, because it's easier to tweak something that is mostly what you want than to write it from scratch. Okay, I don't know about you, but if you're like me you're like, we did it, everyone gets a medal. Let's talk about some resources and reflections. Okay, so speaking of stochastic parrots, I think it's interesting to examine what venture

capitalists are seeing. And I kid, I'm friends with a number of VCs, and they're incredibly intelligent and hardworking, and I have no ulterior motives for saying that. But really, they spend a lot of time talking with very smart founders who are building cool things, so I think it's nice to be able to quickly learn from them. The Menlo Ventures folks have a nice market map of people applying AI to security across ABC, threat intel, and more. They also have one for securing AI, so how do we use AI securely. The Innovation Endeavors folks have a couple of blog posts about promising applications they

see, and Sequoia has a few, not necessarily about security, but this one on the right is the gen AI infrastructure stack, so if you're building LLM applications, some of those might be useful to you. They also have one on dev tools. I think what engineers are doing and where they're innovating is very interesting, and there's probably lots of room for us to learn from them there. And here's a couple more blog posts. Okay, so here's kind of how I think about AI, which I'm going to share in case it's useful for you. Instead of thinking, you know, this is going to solve every problem ever, well, probably not, but it can solve some problems in some ways

when used correctly. Instead of, if it doesn't respond perfectly every time it's useless, think, well, if it can help me do my work a bit easier, faster, or more cheaply, that's still quite useful. Rather than saying, let me just add AI to this and it'll be awesome, think, you know, AI is a tool like anything else, and the point is to solve real problems. The obvious exception is if you're founding a company and want to raise a lot of money, then of course you should add AI to it. And I thought this blog post was a nice illustrative example of some of this. Okay, so if you want to hear more about me

interviewing some cool people about AI, I chatted with my friend Daniel Miessler about it here, and also with the chief security officer of GitHub, Mike Hanley, about how he thinks about AI. I also talked with some other really cool people, not about AI but about building scalable security programs: Ley from Netflix, Dev from Figma, Jamie from HashiCorp, and many more. Again, we didn't talk about securing AI here, but if that is of interest to you, my friend Ramy wrote a really lovely, very detailed blog post, and actually just this week we released a new GitHub repo called prompt injection defenses. You can check it out now, and I

think it's the best, most thorough collection of all the different things anyone has talked about, all in one place, so feel free to check that out. Here's some other people who've written great things about securing AI as well. If you like lists of lists of things, here are some more. If you want to build things that involve agents, there's three frameworks that appear the most popular, at least right now: AutoGen from Microsoft, CrewAI, and LangGraph from the LangChain folks. So I think AI isn't taking over everything tomorrow, but it is worth spending time on. AI is probably only going to get better. Just like hardware only gets better, the applications you

use are only going to get better. I think it's reasonable to believe that in the next few years AI is only going to get better than it is now. So if you liked a ton of information super densely put in one place, I have a free newsletter that you can check out for tools, talks, and more. And, oh, I accidentally deleted a slide apparently. Okay, so, oh, did they get rearranged? Oh, okay, pretend you didn't see that slide. So a couple people have chatted online about, you know, is AI going to help attackers or defenders more, and some people think attackers in the short term, defenders in the long run. And I'm

still figuring out what I think about it, but I do think that in the long term security is going to come out on top, or defense that is. And the reason, I had such a lovely ending for you, still, rewind in your mind. So the reason why I think defense is going to win in the end is because I think security is getting better, right? Day-to-day in the trenches it can feel like it's not, but if you go back 20 years, in pretty much any application you could find critical issues pretty quickly. But today we have modern web frameworks that prevent classes of vulnerabilities from happening in the first place, most of

the web is over TLS, and we have things like YubiKeys and WebAuthn that are both more secure and easier to use. I think there's still lots of challenges and we're working on it, but I think directionally we're going to a good place. And what I mean to say about that is I think that your work matters, right? The people here watching this, or watching it on YouTube later, your work is protecting the photos, private information, and all sorts of other things of millions if not billions of people in the world. And yes, we have a lot of work to do, but I think that's something to be proud

of. I also wanted to say that I think you matter, so I see you and I appreciate you. Thanks so much for your time, it's been my pleasure to be with you today. Here's a link to the

slides, and somehow I have time for questions. Everybody, as per usual we're taking questions via Slido; you can get instructions on how to submit them at bsidessf.org Q&A. We have one queued up right now from E: given it is easy to get to 80% but hard to master the last 20%, should we think more about it as an assistant than a replacement? So should we think of it more as an assistant than a replacement? Yes, I think in the short term it does make sense for it to be an assistant, maybe given that you may ask it for JSON and 90% of the time it gives you JSON back

but sometimes it doesn't. I think that as an assistant that augments people, it definitely makes sense in the short term, but I think we're building libraries and frameworks, and the models themselves are getting better, such that they will be more reliable over time. But given that they can hallucinate today, I think it is safer to have a human in the loop, at least to some extent, but I think it'll get better. Yeah, thank you for your question. All right, I think that's it for our questions, I'm not seeing any more come in. Clint, fantastic talk, thank you for ending that on such a positive note. Everyone else, Clint will be around. I'm around for questions, I

also have plenty of stickers and a limited number of t-shirts somewhere as well. But yeah, I appreciate your time and attention, and I'm happy to chat here in the hallway or wherever, so have a wonderful rest of your con. [Applause]
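
One way to picture the Q&A point about JSON output (the model returns valid JSON most of the time, but not always) is a small validate-and-retry wrapper that hands off to a human when the model keeps failing. This is a minimal Python sketch and not something shown in the talk: `ask_for_json`, `ask_model`, `scripted_model`, and the `severity` / `rule_id` keys are all hypothetical names, and `ask_model` stands in for whatever LLM client you actually use.

```python
import json
from typing import Callable, Iterable, Optional


def ask_for_json(
    ask_model: Callable[[str], str],
    prompt: str,
    required_keys: Iterable[str],
    max_attempts: int = 3,
) -> Optional[dict]:
    """Ask the model until it returns a JSON object containing required_keys.

    Returns None after max_attempts failures, which the caller can treat as
    "route this one to a human" (the human-in-the-loop fallback).
    """
    required = set(required_keys)
    for _ in range(max_attempts):
        raw = ask_model(prompt)
        try:
            parsed = json.loads(raw)
        except json.JSONDecodeError:
            continue  # not JSON at all (for example, a chatty preamble); try again
        if isinstance(parsed, dict) and required.issubset(parsed):
            return parsed
    return None


def scripted_model(replies):
    """Hypothetical stand-in for an LLM client: returns canned replies in order."""
    queue = iter(replies)
    return lambda prompt: next(queue)


# The first reply is prose, the second is the JSON we asked for.
flaky = scripted_model([
    "Sure! Here is the JSON you asked for:",
    '{"severity": "high", "rule_id": "R1"}',
])
result = ask_for_json(flaky, "Summarize this finding as JSON.", ["severity", "rule_id"])
print(result)
```

The retry count and the required-key check are illustrative choices; a stricter setup might validate against a full schema, but the shape stays the same: check the output, retry a bounded number of times, then fall back to a person.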