Securing Cloud Delivery Pipelines - Findings From A Blue/Red Team Security Simulation by Foo Meden


Okay, hey, my name is Foo. I do some form of nondescript cyber, and I really missed in-person conferences, so it's great to be here. Today I'm going to speak about securing cloud delivery pipelines, and I would like to start by looking at what's going on in the industry in 2021. First of all, I want to look at the State of DevOps report, a yearly report that looks across the industry at which practices and tooling are adopted and how they impact software delivery. This year, 56 percent of respondents say they are adopting some form of public cloud, and I think 21 percent of them are even adopting multi-cloud.

At the same time, in the last 10-15-plus years we had an explosion in the adoption of practices, techniques, and tooling to deliver software better, with better quality, faster. In particular, we saw the rise of continuous integration and continuous delivery tooling and all the services that go along with it, whether that's GitHub Actions or, if you're unlucky, Jenkins (I'm really sorry), and so on and so forth. What happened then is that the industry really came together to look at the common threats that emerge from the adoption of these tools, like configuration mistakes. As we increased the complexity and the speed at which we deliver software, we created a plethora of tools, services, and commercial offerings to counter these kinds of threats.

There's plenty of tooling we can use to check our cloud configuration, to detect secret leaks, and so on. For defending against the average hacker, someone trying to get a quick return on investment, probably any out-of-the-box offering from your cloud vendor or your software-as-a-service provider will put you in a decent position to counter these kinds of threats. But what about advanced, motivated attackers? If the software you're delivering contains some form of highly sensitive information, maybe trade secrets, maybe personal information about some group or demographic, it is a good target for advanced attackers. Can we reuse public cloud and software as a service to protect against these kinds of adversaries?

To find the answer, this year the Cabinet Office organized a red/blue team simulation, which I was part of as the tech lead for the blue team. What I'm going to cover in the next half an hour is some context around the simulation, and then what we learned about pipelines, architectures, and practices and processes.

So first of all, again, the simulation was set up by the Cabinet Office, involving a team of builders, the blue team, and a team of attackers, the red team: a team of penetration testers impersonating a highly advanced attacker. We had the luck and privilege of having cloud SMEs from AWS, security SMEs from the National Cyber Security Centre, and people from other HMG departments join us in an advisory capacity. The whole project was dubbed Tin Tulip. That doesn't make any particular sense, but I'll refer to it again later, especially because that's how we named our repositories on GitHub. They are public, so you can look them up; I'll show the link later on.

As we started, we decided that we didn't want to just build something by looking only at the technology; we wanted to look at the technology through the lens of people and processes as well. So the first thing we did, rather than starting from an architecture diagram, was to create a little backstory. We scoped the whole exercise around a fictional government agency called the Creative Licensing Agency and tried to figure out their mission and what they have at their disposal as they start their journey of delivering a service to the public. The idea is that they're building a service for people to apply for a creative license, which means collecting their personal information, and we decided that this is highly sensitive. Being a very small organization just starting out, we assumed they have a very limited budget and very limited staff: only a couple of very small teams. One team of infrastructure experts is familiar with technologies such as AWS and infrastructure as code with Terraform, and a small team of developers is familiar with web technologies: Java services, React, anything like that.

With this in mind, we started mapping out a possible architecture for this small organization. The way we wanted to architect it was to adopt as much software as a service and cloud as possible, using anything we could get out of the box. This follows NCSC's cloud principles, and in particular the one I want to call out is the idea of adopting as many out-of-the-box services as possible from cloud vendors and SaaS providers. The idea is that, for a small organization starting up, any security built into your tools and services by your vendors is surely better than what you can invest in at the beginning of your journey.

So what we ended up with is a cloud deployment on Amazon Web Services. We started from the outset with a multi-account AWS Organizations setup, which also follows the vendor's published best practices: AWS has the Well-Architected Framework, with well-documented best practices for how to architect all of this, and some of them, in particular the way you set up your accounts, even get embodied into automation within the tooling itself. In this case we looked at AWS Control Tower, a kind of one-click solution that gives you a well-architected AWS organization out of the box, with some strong opinions. For the delivery tooling we looked at GitHub instead: we created a GitHub organization and adopted GitHub Actions as our base continuous integration software.

After creating this target architecture, we started threat modeling iteratively, every week, and we created a map of what we think the main threats are, where the entry points for an attacker are in the architecture, and where they can move laterally.

being a simulation which of these are interesting or where do we want to invest our time in understanding better how these attack scenarios will play out what we came up with as another focus actually some of this is greyed out i'm not sure you can see the grain up on the screen but we decided to focus basically on the area in the middle and in particular on the delivery pipelines um we should ask the question well we can assume that on a motivated attacker will throw as much fishing as they can at achieve one like three six nine months of fishing will eventually land you an engineer's credential what can we do once uh engineering what

the team is compromised they have their credentials stolen they have legitimate access to git repositories may be limited how far can they go in compromising their environment in exfiltrating the sensitive information that were collected from the public in order base i would also like to call out which areas we did not decide to go into uh in particular we didn't look at privileges i will bootstrap the whole thing securely this because it can pretty much be an entire research project in its own right um so we made an assumption that a small subset of the infrastructure engineers have access to some form of hardware machine for which they can perform highly sensitive operations like bootstrap in the world the blessed

environment and managing aws data organization uh administration credentials and so on so forth detection on target hunting uh is something that connecting them to what we discover the beyond is something that is fundamental to a successful um defense uh so social security program but we also knowledge that at the beginning of the journey an organization you probably don't have the investment to really make this effective we don't have the staff to look at the logs and try to get something out of it uh so it's something that we left out of scope for the simulation we don't have car duty but was pretty much as much as we did and lastly supply chain uh in recent

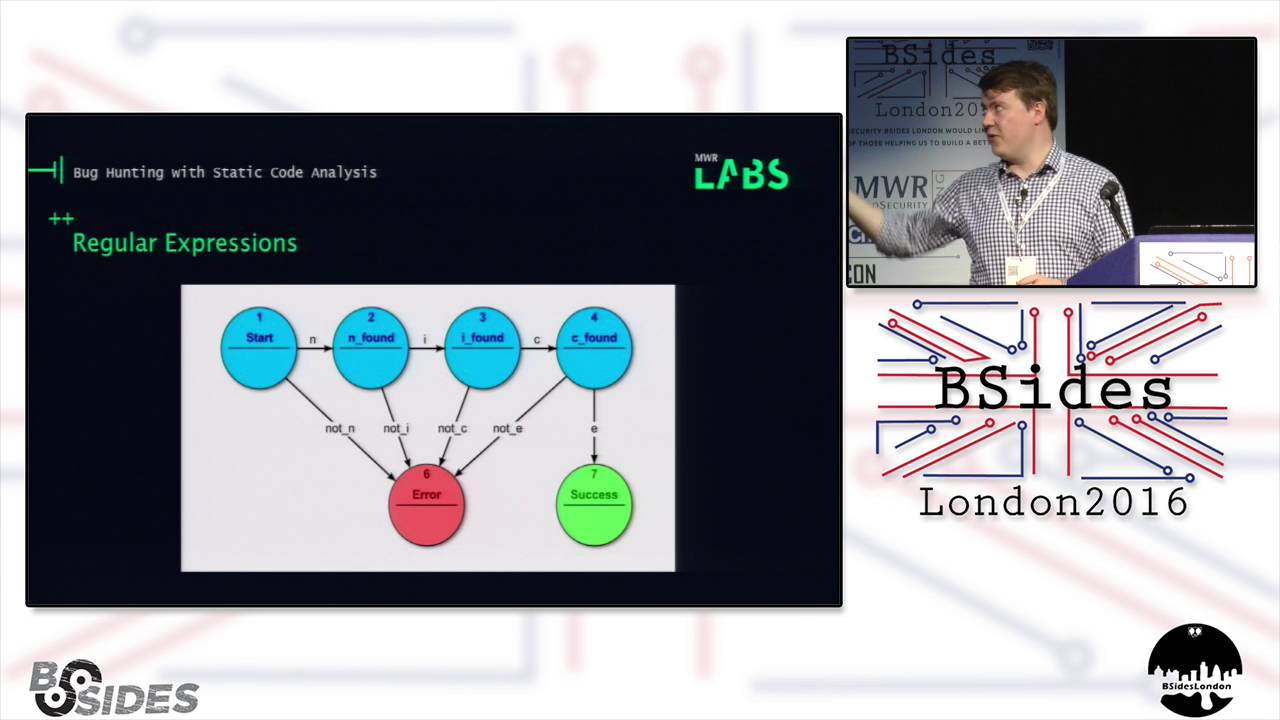

years there's been a lot speaking about supply chain attacks but there's also been a lot of research on it and we felt that there was plenty of active discourse and that um it wasn't useful for us to go through some of this like once again that's it i would like to first speak about pipelines um if you weren't one earlier today you probably already have some of this but i want to get back to the concept of continuous integration continuous delivery pipelines the core idea is that we want to have tooling that automate the way we build deliver fast ship deploy software and these tools especially these days are designed to greatly empower delivery teams

to take home to go on a journey of continuous improvement of their own techniques and they can be self-managed by the teams like with cml files you can commit a repository you can have the configuration your pipeline in the repository and the way you change what this automation server does is all encoded in this yaml file that is great if you want to quickly iterate and learn always the best possible way to build and testify software not so great if one of your engineers is compromised because then you can do stuff like um add very simple echo commands in the build script and exfiltrate any kind of secret uh that has been stored uh in the ci

server so with this in mind uh knowing that the current model that we're looking at is specifically looking at a compromised engineer we ask the question what would what can we do to do better than this and the model that we came up with is what we're calling this trusted pipeline model and the intuition is that if we go and look down into our pipeline uh with the discrete matched by someone else we cannot trust into it and build high assurance but we create a lot of friction for the software delivery process but is it possible then to have a little bit of both of that the best of both worlds and having uh entirely cell phones self-managed

constitute continuous integration side of the pipeline where developers can still attain the ability of performing um or having full control over the way they build and shift the software but move any kind of operation that requires highly privileged credentials only on the operation in which we rely on some trust to be created uh to a validation into it in a different section of the pipeline that is not self-managed and can be locked down by administrators um the tricky part in this is really how do we make the lockdown part as minimal as possible so we can create a trust without creating friction to get into production but still we started with this model um what we decided to adopt for this again
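To make the invariant behind the trusted pipeline model concrete, here is a minimal Python sketch. It is not the team's tooling: the `Stage` type and its two flags are hypothetical, and the check simply reports any stage whose behaviour an engineer can change through a commit (a workflow YAML file, a build script) while that stage also holds privileged credentials.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Stage:
    """One step of a delivery pipeline (hypothetical model, not a real API)."""
    name: str
    engineer_controlled: bool  # behaviour can be changed by a commit, e.g. workflow YAML or a build script
    privileged: bool           # holds deployment or other high-privilege credentials


def violations(stages: list[Stage]) -> list[str]:
    """Names of stages that combine engineer-controlled behaviour with privileged credentials."""
    return [s.name for s in stages if s.engineer_controlled and s.privileged]


# Everything in one self-managed tool: the deploy job lives in the same editable workflow.
single_tool = [
    Stage("build-and-test", engineer_controlled=True, privileged=False),
    Stage("deploy", engineer_controlled=True, privileged=True),
]

# Trusted pipeline model: privileged work moves to a locked-down, admin-managed section.
split = [
    Stage("ci-build-and-test", engineer_controlled=True, privileged=False),
    Stage("cd-deploy", engineer_controlled=False, privileged=True),
]

print(violations(single_tool))  # ['deploy']
print(violations(split))        # []
```

The value of writing the rule down like this is that it makes the hard question explicit: every stage you mark `engineer_controlled=False` is one someone has to lock down and keep minimal.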

Again, thinking from the perspective of adopting as many out-of-the-box services as possible, we used AWS CodePipeline. In simplified form it looks like this: a Lambda function listens for when the GitHub Actions side of the build pipeline has finished, then we pull in the source code and essentially rebuild it. There are two models here: you can start from the source code again, or you can receive a build artifact, but then you have to validate that the source code and the artifact match before proceeding. We chose to simply get the code and rebuild it. On top of this we can layer any kind of security control, apply policy, and so on, and then perform the deployment, with secret keys entirely managed within AWS itself.

This sounds great until we consider that, in reality, to build services we have build scripts that are, more often than not, Turing-complete. In our case we have Java services built with Gradle, and Gradle build scripts are written in Groovy, so you have pretty much the full power of Groovy at your disposal to do whatever you want. So essentially, all we did was move the target: a malicious engineer no longer needs to commit changes to a YAML file run by GitHub Actions to exfiltrate secrets.

Now they can target a Gradle build file to call out to command-and-control servers from within the continuous delivery pipeline, and do stuff like exfiltrating the temporary credentials assigned to the build, to support any kind of account takeover. With this in mind, and coming from an engineering perspective (as the blue team we were mostly builders rather than security engineers), we thought: can we engineer our way out of the problem? If the problem is that some of our inputs can be malicious, can we have tools for static detection, customized detection rules, policy as code, to detect whether any of these inputs is malicious before executing it?

Well, yes, you can, but it becomes a cat-and-mouse game. You probably start from some out-of-the-box rule sets, but some of the rules have rough edges. For example, one very simple commit the red team made to evade the tooling just added a space to get past the detection rules. As the blue team, we can look at this, learn from it, and improve the detection rules. Then the attacker goes, "OK, fine." We can't conceal the output of these checks, because you still want to be as transparent as possible to reduce the time to debug and troubleshoot, so they can just look at it and create a new version, and so on and so forth. It really becomes a cat-and-mouse game.

So we thought, OK, fine, that's our finding for this part; let's move on and look at some alternative solutions. We started looking instead at what we can do with boundaries and permissions, both in terms of authorization privileges and network privileges. The interesting part of adopting AWS-native services like CodePipeline for this is that we can manage authorization at a very granular level, build step by build step, using AWS IAM. This allows us to look at the various stages in the pipeline and say: some credentials are available to all of these stages, but we only need them when we deploy, not in the build stages before that. We can make them available only in the deployment step, which doesn't need a customized script, an input controllable by an engineer. Vice versa, we don't need them in the build step, so we can take them away there, and that's exactly where we potentially have untrusted inputs. That way we mitigate the impact of untrusted code.
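A minimal sketch of this per-stage split, written in Python as plain IAM policy documents rather than in any particular infrastructure-as-code tool. It assumes each pipeline stage runs under its own IAM role (a CodeBuild project, for instance, has its own service role); the stage names, the logging actions, and the deploy role ARN are hypothetical placeholders. Only the deploy stage is allowed `sts:AssumeRole` on the deployment role, so a malicious build script running in the build stage has no deployment credentials to steal.

```python
import json

# Hypothetical role ARN, for illustration only.
DEPLOY_ROLE_ARN = "arn:aws:iam::111111111111:role/example-deploy-role"


def stage_policy(stage: str) -> dict:
    """Build an illustrative IAM policy document for one pipeline stage."""
    statements = [{
        # Every stage may write its own build logs.
        "Effect": "Allow",
        "Action": ["logs:CreateLogStream", "logs:PutLogEvents"],
        "Resource": "*",
    }]
    if stage == "deploy":
        # Only the deploy stage may assume the role that can change the environment.
        statements.append({
            "Effect": "Allow",
            "Action": "sts:AssumeRole",
            "Resource": DEPLOY_ROLE_ARN,
        })
    return {"Version": "2012-10-17", "Statement": statements}


def allowed_actions(policy: dict) -> set:
    """Collect every action granted by Allow statements in a policy document."""
    actions = set()
    for statement in policy["Statement"]:
        if statement["Effect"] == "Allow":
            action = statement["Action"]
            actions.update([action] if isinstance(action, str) else action)
    return actions


print(json.dumps(stage_policy("deploy"), indent=2))
print(sorted(allowed_actions(stage_policy("build"))))
```

Keeping the policies as data makes it easy to add a unit test asserting that no build stage ever gains the deploy permission.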

Similarly, on the network side: does all of this need to go out to the internet? Well, yes, probably. Building a Java service and building containers, we need to fetch the images for our containers, Java dependencies, maybe Terraform modules, maybe some other bits and bobs of code from GitHub. But if that's all we need, we can probably enumerate the places on the internet we want to reach and tell a firewall: these are the only places where we expect build scripts to reach out. That way we can effectively restrict egress to only these places.
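The allowlist idea can be sketched as a deny-by-default check on destination hostnames. This is illustrative Python only: in practice the enforcement belongs in the network layer (for example an egress proxy, or domain-list rules in AWS Network Firewall), and the hostnames below are assumed examples of the kinds of destinations mentioned (container images, Java dependencies, Terraform modules, GitHub), not the team's actual list.

```python
from urllib.parse import urlsplit

# Assumed examples of build-time destinations; not the team's actual allowlist.
ALLOWED_HOSTS = {
    "github.com",
    "registry.terraform.io",
    "repo.maven.apache.org",
    "registry-1.docker.io",
}


def egress_allowed(url: str) -> bool:
    """Deny by default: allow only exact matches against the hostname allowlist."""
    host = urlsplit(url).hostname or ""  # .hostname is already lower-cased
    return host in ALLOWED_HOSTS


for url in (
    "https://repo.maven.apache.org/maven2/org/example/lib/1.0/lib-1.0.jar",
    "https://c2.attacker.example/beacon",
    "https://github.com.attacker.example/payload",
):
    print(url, "->", "allow" if egress_allowed(url) else "deny")
```

Exact hostname matching is a deliberate choice: a prefix or substring match would let a lookalike such as `github.com.attacker.example` through.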

This is great because, going back to a malicious build script reaching out to a command-and-control server, we can now say: you can't go there anymore. At the very least you'd have to trick the firewall into letting you go there, because you're restricted to the package repositories, to GitHub, to the Terraform registry, and so on. So looking at what network egress you actually need can be a really useful tool to improve the security posture of a CI/CD pipeline.

That's it for pipelines. Some key takeaways I would like to repeat: different stages and different operations in the whole software delivery life cycle potentially need different properties. Some need fast feedback to really empower delivery teams; others need deep trust built into them to really work. We can try to build these differently and manage them differently, instead of using a single tool to embody all of them at once. The ability to assign permissions and privileges in a fine-grained manner can be a really powerful control, but of course it requires a lot more work to configure correctly. And a couple of things I didn't really cover: first, who configures these pipelines?

Ideally, we want to configure all of them as infrastructure as code, but then what is the pipeline that configures all the other pipelines? That, of course, becomes a root of trust again. Like I said earlier, we didn't really look into how to solve the secure introduction problem; we assumed that some of the engineers with privileged machines are able to set up and bootstrap all of this from a hardened endpoint. Lastly, going back to the issue of stolen credentials and how we go from the self-managed side of the pipeline to triggering pipelines within AWS: looking at pull models rather than push models is a great way to avoid having credentials in less secure environments. Just a quick mention here: it has also been incredibly frustrating doing this with AWS CodePipeline. Ideally it should be able to trigger on Git commits, but that doesn't really fit together nicely; you can build a custom Lambda or some other custom thing to trigger all of this, but it's messy. So yeah, that's that.

So now for architecture. Going back to the beginning, what we started from, like I was saying, is an AWS multi-account setup, and the way we started was to adopt AWS Control Tower. Control Tower is essentially an out-of-the-box, one-click deployment of an opinionated multi-account setup. In just a few clicks you can configure, I think, four accounts to start with: a management account, with AWS Single Sign-On to manage your users and access across your estate; an account dedicated to security monitoring; an account dedicated to log archiving; and an account to start deploying your workloads in. It also gives you a bunch of guardrails in the form of AWS Config rules. These run periodically, look at your configuration, and tell you: there's a security group that doesn't look right, there's some stuff that is not really in line with the CIS Benchmarks, and so on. And it gives you an opinionated setup for roles and permissions across the accounts, so you don't have to start thinking: all right, now that I have five accounts, I need to create an admin role that is valid in all of them, provision it in SSO, and design my permission model and map it to my org. Of course you will have to tailor that to your org at some point, but at the very least you don't start from zero. That is great specifically at the beginning of a project, when you have so much to do and spending a lot of time just getting started is not ideal.

So we were very happy about adopting this tool; if you want to get somewhere decent quickly, it's a fantastic way to get there. But the other reason I really appreciate starting from a multi-account AWS setup is that IAM is really hard. It's incredibly powerful because it's very granular and you can do very complicated, very advanced stuff with it, but there's just so much of it, and the time it takes to create a nicely locked-down, precise policy, test it, and make sure there's no possibility of privilege escalation is a lot. What happens more often than not is that you start with AWS managed policies, and these are often overly permissive. So we asked: what can we do to reduce the impact of that? Separating workloads, separating databases, separating anything you do across multiple accounts provides a natural boundary, because to share resources across accounts you really need to be explicit about granting permissions, sometimes even on both sides, as you'll find out sooner or later if you do anything cross-account.
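The "both sides" point can be shown with a deliberately simplified model of IAM policy evaluation, sketched in Python. It assumes no explicit denies, SCPs, permission boundaries, or session policies, and ignores service-specific exceptions; it only captures the general rule that within one account an allow in either the identity-based or the resource-based policy is usually enough, while across accounts both must allow the request. The account IDs are made up.

```python
from itertools import product


def is_allowed(principal_account: str, resource_account: str,
               identity_policy_allows: bool, resource_policy_allows: bool) -> bool:
    """Simplified IAM evaluation: no explicit denies, SCPs, boundaries or session policies."""
    if principal_account == resource_account:
        # Same account: an allow on either side is generally enough.
        return identity_policy_allows or resource_policy_allows
    # Cross-account: the caller's account AND the resource's account must both allow it.
    return identity_policy_allows and resource_policy_allows


ACCOUNT_A, ACCOUNT_B = "111111111111", "222222222222"  # hypothetical account IDs

print("identity resource same-account cross-account")
for identity, resource in product([False, True], repeat=2):
    print(identity, resource,
          is_allowed(ACCOUNT_A, ACCOUNT_A, identity, resource),
          is_allowed(ACCOUNT_A, ACCOUNT_B, identity, resource))
```

This is why an account boundary acts as a bulkhead: an overly permissive managed policy on one side is not enough on its own to reach resources in another account.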

That's fantastic, because it makes it really hard to do the wrong thing. So starting multi-account is something I will always recommend: however simple the thing you're going to do with these accounts, think about adopting more than one account from the start. If not Control Tower, because that enables AWS Config and that costs money, then at the very least start with SSO, which is free. That's it for account boundaries.

On a different kind of boundary, I want to talk about security groups, moving towards the application side. What we built as part of this is a very vanilla kind of application: a Java web server that shows some pages and connects to a database, fronted by a load balancer. Nothing special, nothing fancy. The interesting thing is that we started with a fairly classic division into subnets and security groups, and pretty much by default egress to the network was enabled everywhere. But then we looked at the web service and at what the red team could do to us, and one of the things they tried, and succeeded at, was committing malicious code into the web application that, once deployed, would reach out to command-and-control servers.

We looked at this and thought: do we actually need to allow the application to go out to the internet? In our situation the answer was no. It just receives requests from the internet and displays our web pages. Some time ago, if we deployed this on an EC2 machine and patched the server, we definitely needed to go to the internet, because we needed to patch the server, run apt updates, and so on. But if you build all of this with techniques such as immutable infrastructure, continuously rebuilding the base image and destroying and rebuilding instances rather than patching them live, or use containers to achieve the same result, then you don't really need to reach out to the internet. We can remove egress entirely and cut off the red team's ability to reach out directly to command-and-control servers. This was quite an interesting one: we deployed everything as Docker containers on Elastic Container Service, so it was trivial for us to achieve. Of course, depending on what your web services are doing, you might need to reach out to the internet, for example if what you're implementing depends on some third-party API.
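One way to keep an eye on this is a small audit that flags security groups still allowing egress to the whole internet. The Python sketch below works on dictionaries shaped like the EC2 `DescribeSecurityGroups` response (`IpPermissionsEgress`, `IpRanges`/`CidrIp`, `Ipv6Ranges`/`CidrIpv6`); the group IDs and the database port are made-up examples. A newly created VPC security group comes with an allow-all egress rule, which is why the first example is flagged.

```python
def open_egress_rules(security_group: dict) -> list:
    """Return egress rules that allow traffic to any IPv4 or IPv6 address."""
    findings = []
    for rule in security_group.get("IpPermissionsEgress", []):
        cidrs = [r.get("CidrIp") for r in rule.get("IpRanges", [])]
        cidrs += [r.get("CidrIpv6") for r in rule.get("Ipv6Ranges", [])]
        if "0.0.0.0/0" in cidrs or "::/0" in cidrs:
            findings.append(rule)
    return findings


# Shaped like a freshly created VPC security group: AWS adds an allow-all egress rule.
default_web_sg = {
    "GroupId": "sg-0example0web0default",
    "IpPermissionsEgress": [
        {"IpProtocol": "-1", "IpRanges": [{"CidrIp": "0.0.0.0/0"}], "Ipv6Ranges": []},
    ],
}

# Locked down: the web tier may only open connections to the database security group.
# The port (5432, PostgreSQL) and the group IDs are hypothetical.
locked_web_sg = {
    "GroupId": "sg-0example0web0locked",
    "IpPermissionsEgress": [
        {"IpProtocol": "tcp", "FromPort": 5432, "ToPort": 5432,
         "IpRanges": [], "UserIdGroupPairs": [{"GroupId": "sg-0example0database"}]},
    ],
}

print(len(open_egress_rules(default_web_sg)))  # 1
print(len(open_egress_rules(locked_web_sg)))   # 0
```

Because security groups are stateful, responses to inbound requests are allowed without any egress rule, so a tier that only answers requests may need little beyond its database rule (plus whatever the platform itself needs, such as reaching private endpoints to pull images).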

But that doesn't mean you can't look at your architecture and think: maybe here I'll move towards microservices and break this one service into a part that just displays web pages and interacts with users, and a back-end processing part that is deployed separately and has access to the internet. You can potentially get better segregation out of this. We can also take these ideas to an extreme: we manage all of our users with AWS SSO rather than as AWS IAM users, and if we're doing that, we can adopt a service control policy saying that, across the whole organization, nobody is allowed to create IAM users in the first place. As a consequence, we can't have AWS access keys or AWS console passwords that can be leaked, stolen, and used to get a foothold inside the organization. Now, I'm being a bit extreme, because of course there are cases in which you need to create an AWS IAM user to integrate some tool or something else. But the point I'm making is that if you put some effort into it, you can look for opportunities to take away pieces of your architecture, or shift them, so that you reduce your attack surface.
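A service control policy along these lines might look like the following, generated from Python so it can be checked in and tested. Treat it as a sketch: denying `iam:CreateUser`, `iam:CreateAccessKey`, and `iam:CreateLoginProfile` (the console password) is an assumed reasonable minimum, not the exact policy used in the simulation. Also note that SCPs do not apply to the organization's management account.

```python
import json


def deny_iam_user_credentials_scp() -> dict:
    """SCP sketch: nobody in member accounts can create IAM users, access keys or console passwords."""
    return {
        "Version": "2012-10-17",
        "Statement": [{
            "Sid": "DenyIamUserCredentials",
            "Effect": "Deny",
            "Action": [
                "iam:CreateUser",          # no new IAM users at all
                "iam:CreateAccessKey",     # no long-lived access keys, even for pre-existing users
                "iam:CreateLoginProfile",  # no console passwords
            ],
            "Resource": "*",
        }],
    }


print(json.dumps(deny_iam_user_credentials_scp(), indent=2))
```

Denying the credential-creating actions as well as `iam:CreateUser` also covers any IAM users that existed before the policy was attached.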

So once again, the learnings from this: don't allow what you don't need, and do think twice about what you really need for your application to run successfully and for your SDLC to complete successfully. Consider adopting immutable infrastructure if you're not already; it can give you great security properties to leverage for all of this. And last, but possibly most important: account boundaries are a fantastic bulkhead, and I would definitely recommend them.

In this last part: practices and processes. We touched a little on policy as code earlier on, with configurable detection rules. We saw that it can become a cat-and-mouse game, and we discussed it as something we put in a pipeline to try to prevent the execution of malicious code before the pipeline runs it, while still running within a pipeline. The thing that really surprised us is that the very first attack we suffered from the red team was not an attempt to take over our pipelines or our environment; it was an attempt to take over our engineers. The very first thing they tried was adding malicious snippets to the Gradle build files, meant to run when other engineers built locally, so they could uncover those engineers' credentials and take over their accounts. They tried to pop calc on Windows. Lucky for me, I actually run Ubuntu desktop, so I'm safe.

That said, policy-as-code tooling doesn't really save you from this, and similarly there are other attacks that managed to slip through. Once we cut off the red team's ability to reach command-and-control servers from malicious code in the web services, they thought: if we can't reach out to the internet proactively, we can just create a backdoor for ourselves. Essentially, they added a web shell. This bit of code, in the context of a Java service, is just one more endpoint with some obfuscated name that passes any HTTP request straight into our OS shell and sends the result back. Since this is a response and not an outgoing request, it avoids the egress filtering entirely. By the way, as an experiment we tried throwing AWS WAF (Web Application Firewall) at this, and it did absolutely nothing, especially when the commands received were something simple like id, whoami, stuff like that. It was just like, "yeah, that's fine by me", even with Base64. The reason I'm raising this in the context of people and processes is this: as we started adding better detection rule sets that caught the simple stuff, like "you're using exec in your Java code; this is a bit uncommon and very dangerous, maybe you shouldn't", the way they evaded it was to introduce metaprogramming in Java, that is, reflection. Instead of calling exec, I can say: "Hey Java, give me the method named X and execute it for me, please." It's a more convoluted, more obfuscated way of executing stuff. But as they added obfuscation to the codebase, it started looking weirder and weirder. For a machine, against the detection rules as written, this allows evasion; but if we have a person looking at it, suddenly all of this really starts to pop out to the human eye.
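The escalation described above can be illustrated with a toy scanner in Python. The patterns are hypothetical and far simpler than real static-analysis rules: a naive rule matching `.exec(` misses a call with an extra space, a whitespace-tolerant rule catches that, and reflective method lookups such as `getMethod(` are flagged separately. The Java snippets are only scanned as strings, never run.

```python
import re

# Naive rule: flag direct calls like Runtime.getRuntime().exec(
NAIVE_RULE = re.compile(r"\.exec\(")

# Whitespace-tolerant version of the same rule.
EXEC_CALL = re.compile(r"\.\s*exec\s*\(")
# Reflective lookup of a method by name, a common way to hide the call target.
REFLECTION = re.compile(r"\.\s*(getMethod|getDeclaredMethod)\s*\(")


def flags(source: str) -> list[str]:
    """Return labels for suspicious constructs found in a Java source snippet."""
    found = []
    if EXEC_CALL.search(source):
        found.append("process-exec")
    if REFLECTION.search(source):
        found.append("reflection")
    return found


direct = 'Runtime.getRuntime().exec(cmd);'
spaced = 'Runtime.getRuntime().exec (cmd);'
reflective = 'Runtime.class.getMethod("ex" + "ec", String.class).invoke(Runtime.getRuntime(), cmd);'

print(bool(NAIVE_RULE.search(spaced)))  # False: one space defeats the naive rule
print(flags(spaced))                    # ['process-exec']
print(flags(reflective))                # ['reflection']
```

Reflection is common in legitimate Java frameworks, so a rule like the last one mostly serves to route code to a human reviewer rather than to block it, which is where the obfuscation starts to stand out.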

And this leads us to code review. Going into the simulation, especially from the perspective of a builder who is a proponent of trunk-based development, of delivering software faster and avoiding long lead times, we tried to avoid code reviews. We thought: can we do better detection engineering, so that we can push any manual approval stage further down the line, use good delivery and coding techniques, and not have the "I have to submit a pull request and wait some days, a week, for someone else to review it" problem? But what we learned is that if we are trying to defend against attackers who have access to the codebase, for some of the attacks the only way you're going to find them is to really have another human look at the code.

The other thing we thought about is: how does this work in the context of agile methodologies such as pairing by default? We usually have a pair of people working on the same code at the same time. Can we really use this as code review? More often than not, in my experience at least, what happens is that two people on the same machine work together on something and then just commit and push straight to master, main, trunk, however you call it, from that same machine, and that kind of counts as a review. That doesn't necessarily protect you in these cases: you still have a single identity, a single credential, allowed to push code possibly all the way into production. But if we take this and add a technical control on top, something like branch protection that enforces even a small pull request, and combine the two, then we get fairly strong guarantees, because with branch protection we need at least two people to approve code before it is merged into the main line.

This is compatible with the same two people looking at the same screen and working on the thing together; one of the two just has to jump on their own machine to do the approval. This doesn't have to happen a day, two days, or a week later, and it doesn't have to slow down the process. They have all the context of what they just worked on with a colleague, so they can go through it quickly and do a fairly quick review, just checking that whatever they see on their own machine matches their expectations of what they just worked on, on the machine of a colleague that might or might not have been compromised. They get this out-of-band kind of approval while still staying in the flow of the delivery process.
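As a sketch of the technical control, this Python snippet builds (but does not send) a request to GitHub's REST endpoint for updating branch protection, `PUT /repos/{owner}/{repo}/branches/{branch}/protection`. The owner, repository, and token are placeholders. One required approving review means two people are involved, since GitHub does not let authors approve their own pull requests; `dismiss_stale_reviews` means any new push after an approval needs re-approval, and `enforce_admins` stops administrators from bypassing the rule.

```python
import json
import urllib.request


def branch_protection_request(owner: str, repo: str, branch: str, token: str) -> urllib.request.Request:
    """Build a PUT request that requires a reviewed pull request before merging to `branch`."""
    payload = {
        "required_status_checks": None,  # no CI checks enforced in this sketch
        "enforce_admins": True,          # admins cannot bypass the rule
        "required_pull_request_reviews": {
            "dismiss_stale_reviews": True,         # new pushes invalidate earlier approvals
            "required_approving_review_count": 1,  # author + one approver = two people
        },
        "restrictions": None,
    }
    url = f"https://api.github.com/repos/{owner}/{repo}/branches/{branch}/protection"
    return urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        method="PUT",
        headers={
            "Accept": "application/vnd.github+json",
            "Authorization": f"Bearer {token}",
        },
    )


req = branch_protection_request("example-org", "example-repo", "main", "PLACEHOLDER_TOKEN")
print(req.get_method(), req.full_url)
```

Sending it would be a call to `urllib.request.urlopen(req)` with a token allowed to administer the repository; in practice you would more likely manage this setting as code alongside the rest of the estate.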

So, in conclusion, we feel that an effective way of doing code reviews is one of the most powerful controls you have, and should have, in the way you deliver software. It's not just about having two people looking at the code at the same time; you need some form of technical control, but this doesn't have to introduce longer lead times. So, once again, in summary: detection and evasion is a bit of a cat-and-mouse game, but the good news is that the better your detection rules, the weirder evasive code has to become, and the easier it is for a human to catch it.

Similarly, what we also observed is that good engineering practices contribute positively to this: code that is self-descriptive, smaller classes, smaller methods, smaller and less complicated pieces of code, more simplicity in the architecture, code that is well tested. All of these make it easier to detect weird implants in your codebase.

That's the end of it. So, in conclusion, our feeling coming out of this whole exercise is that the security bar achievable in the public cloud, adopting software as a service, is possibly higher than many believe, but it does need attention to design. In particular, the overall system is definitely less secure than the sum of its parts: the devil is not only in the details, but in the details of how different pieces of software and different services connect together. When we have boundaries between, say, GitHub Actions and AWS, that's really where you want to put your eye, checking how you're piecing together the whole solution. Architectural controls can be really powerful.

Do think about what you can take away from an architecture, or shift, to improve your security posture. Good engineering practices do have a positive impact on security: they help automated tools give you a better view across your software estate, and they help humans do better, more efficient reviews. And lastly, once again, code review does not necessarily imply longer lead times and friction in the delivery process, but for it to be effective for security you also want a technical control added to it, not simply the practice of two people in front of a single screen at the same time.

And that's it. Lastly, check out the repositories: all of the stuff we wrote is public on github.com/tintulip. There's a repository called tintulip with all the documentation, the notes from the weekly threat modeling sessions, and so on. Everything else is a mix of the example service we built and the infrastructure as code for the whole estate. So take a look, I hope it's useful, and that's it for me. I think I have a few minutes for questions. Excellent.