Container Secrets Done Right

[Music] So, by a show of hands real quick, how many people have used containers, or at least have some passing experience with containers in general? And how many of you have heard of the term ABAC, attribute-based access control? I'm going to talk a little bit about both of these things today.

A little bit about myself: I come from a company called Divvy. We're a startup down in Lehi, and we're changing how credit cards work by letting you get ahead of the transaction. It's kind of fun, because you can generate virtual cards, so you can do one-time purchases off a virtual card, and the interface is just a lot more powerful. It's modernizing credit card systems.

I've been kicking around the tech industry for a while, in various positions from developer and engineer to CEO, CIO, and CTO type stuff, and it has all kind of come together. I have a book out called Activator, about design thinking in the tech industry; I can talk about it offline if you want. Through all of these experiences I've done a lot of work with systems, configuration, and security, and the most recent thing I've been playing with is containers, their secrets, and the challenges we have around those sorts of things. (Am I holding it upside down? All right, we'll find out. There we go.)

Before we really get into why configuration secrets are a challenge, let's talk a little bit about containers. You've heard the term ephemeral, or ephemeral systems. One of the reasons people like containers so much is that they're kind of the holy grail of system administration. The way we used to do systems management was like having a factory where you want to build cars: you'd paint a whole bunch of parking spots, bring in a bunch of cars, and fill up the factory like it was a parking lot. Then you would build robots to go out and put door handles on every single car and come back, and then you'd tell them to go out and change the paint color, or whatever it is. That's kind of how we've done system administration up to now.

Containers are really the assembly-line automation that is reinventing the industry, and that's why everybody's getting so excited about them. It all boils down to being ephemeral. The idea is that when you build a container image, you don't burn anything that deals with system configuration into it. So when you create the image you can sign it, you can scan it, you can do a lot of stuff, but there's no sensitive data and no configuration data in the container, and you can promote it on through a pipeline.

That's if you're doing it right, and a lot of people don't, because the other side of this is that you don't have configuration management inside the container at runtime. You shouldn't be running a Salt minion inside your containers. That makes it challenging, because you have this catch-22: I don't want config management in there, and I don't want to put secrets in there either. People have really struggled with this, and there are different solutions, which I'll get into a little through the talk, but it has become a challenge for the industry.

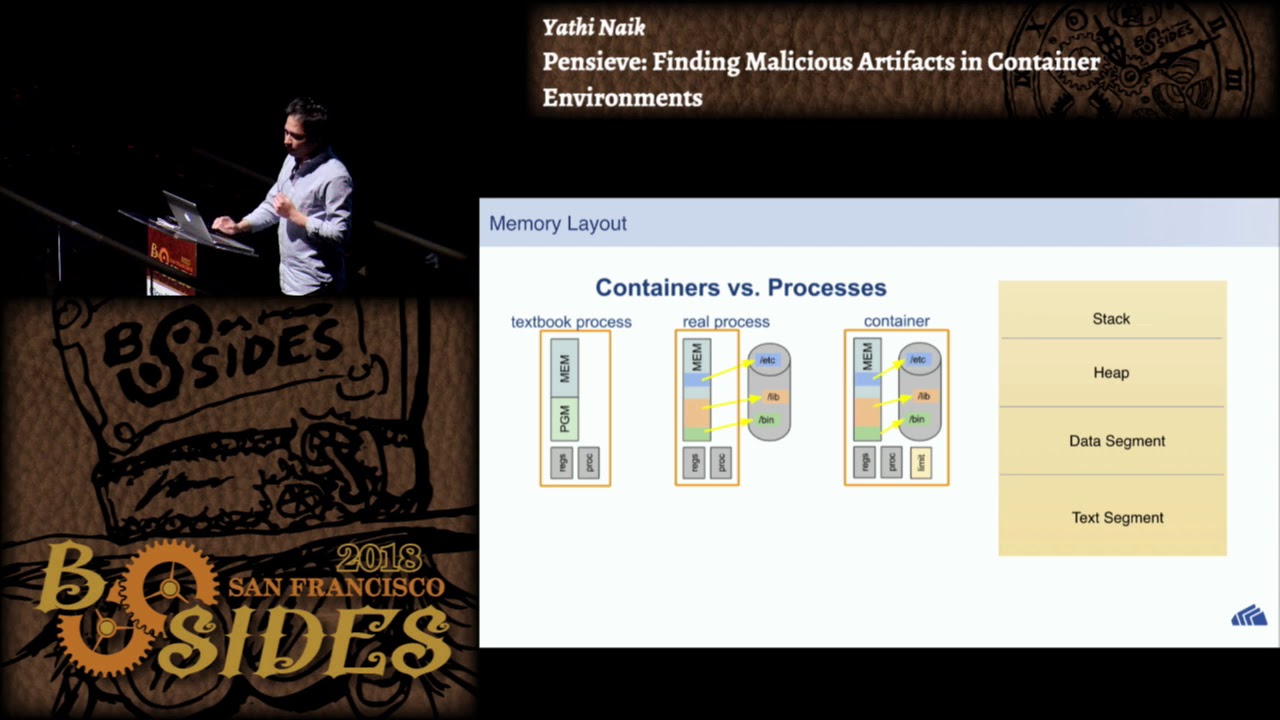

And just to make sure I hit all the elements of an ephemeral system: if you look at a container, there are a few elements that come together. First you have the stack, basically everything that runs your container: a little bit of the operating system, plus whatever runs your code base, like your Ruby or your Elixir or whatever is driving it. That's the first layer and the basis for your container. On top of that you layer whatever code you want to run in that container; if you're running an application, it could be your web code. That's the second layer, and together they become the image that gets pushed through in that ephemeral state. When you launch it, you should introduce your configurations at runtime, and the container should then talk to things outside itself, like databases, to manage data. Those are really the four elements that make up an ephemeral-stack container system. The problem, like I was saying, is that getting those configurations in at that last step is a real challenge, so it's all about where we put those configurations.

Has anybody here struggled with this challenge before? What often happens is that you just put them in the environment, because that's the easy thing to do; it's kind of the fallback. But it's a really dangerous thing to do because of how those environment variables are actually stored, whether you're using Kubernetes, plain Docker, or whatever else, and getting them delivered is not necessarily easy. The platforms have improved on it, and we'll talk about some of what's there.

As for where you can put them: you could just burn them right into the image. But then you run into problems where you've got secret data stored off-site, wherever the repository for your container happens to be, and the image is no longer immutable. If you want to take that container image and promote it on up, you can't run the same image in development, staging, and production, because your configs are burned right into it. So that's really an anti-pattern.

Putting them in the environment has its own challenges. In fact, has anybody read the Twelve-Factor manifesto? If you don't know what that is, go look at 12factor.net. It helps describe how to run things in a container manner, but it's very high level, and one of its statements is to put your configuration in the environment. I would contend that doesn't mean putting it into the OS and the process's environment space. Those variables are not as secure as values kept in memory your process allocates itself, on its stack and heap; the OS environment area is more accessible, other processes can read into it, and it's just not a safe place to put secrets. It's also unmanageable and unwieldy, especially when you get longer and bigger key files and such; it just becomes really hard to maintain.
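To make the environment-variable concern concrete, here is a minimal Python sketch (not from the talk) of one well-known leak path: a child process inherits its parent's environment unless the parent scrubs it explicitly. On Linux the initial environment of a process is also readable through `/proc/<pid>/environ` by the same user and by root. `DB_PASSWORD` and its value are hypothetical placeholders.

```python
import os
import subprocess
import sys

# Hypothetical secret placed in the environment: the "easy fallback".
os.environ["DB_PASSWORD"] = "example-only"

# Code run by the child: print whatever DB_PASSWORD it can see.
CHILD_CODE = "import os; print(os.environ.get('DB_PASSWORD', ''))"


def child_view(scrub: bool = False) -> str:
    """Spawn a child Python process and return the DB_PASSWORD it sees.

    With scrub=True the secret is removed from the environment passed to
    the child; otherwise the child silently inherits it.
    """
    env = dict(os.environ)
    if scrub:
        env.pop("DB_PASSWORD", None)
    result = subprocess.run(
        [sys.executable, "-c", CHILD_CODE],
        env=env,
        capture_output=True,
        text=True,
        check=True,
    )
    return result.stdout.strip()
```

Calling `child_view()` returns the secret, while `child_view(scrub=True)` returns an empty string. Every helper, shell, or debugging tool the application starts gets a copy unless someone remembers to scrub it.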

The latest development is that Docker has added secrets to its system: you can create a secret in Docker Datacenter or the Universal Control Plane, and Docker will make it accessible to the container as a mounted file. So you write a script that reads from the file that's been presented to the container, and it gets the secret that way. You still need a startup script or something that runs before your application runs.
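A minimal Python sketch of that startup-script step. It assumes Docker's default behavior of mounting each secret granted to a service as a file named after the secret under `/run/secrets` in a Linux container. The secret name `db_password` is a hypothetical example. The directory is a parameter so the function can be exercised outside a container.

```python
from pathlib import Path

# Default mount point for Docker secrets inside Linux containers.
DEFAULT_SECRETS_DIR = Path("/run/secrets")


def read_secret(name: str, secrets_dir: Path = DEFAULT_SECRETS_DIR) -> str:
    """Return the contents of a mounted secret, minus a trailing newline.

    Raises FileNotFoundError if the secret was not granted to this
    container, so a missing secret fails loudly at startup.
    """
    path = Path(secrets_dir) / name
    return path.read_text(encoding="utf-8").rstrip("\n")
```

A startup script would call something like `read_secret("db_password")` before handing control to the application. The secret then lives in that process's memory, not in the container's environment.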

The best, most ideal container has an entry point that says "run Node.js on this index.js file" and nothing else. It's very thin, with nothing in between. So putting a startup script in there is again kind of an anti-pattern, but there's almost no getting away from it. It's the best place to do it, because it runs every time your container runs, and it gets you closer to the solution.

The final option would be to rewrite your application to read directly from a remote source. In Node.js and some of these other stacks you have config wrapper libraries that read different config files, and a convention has formed for how different styles of config come together. Some of them are starting to let you read from remote repositories, so your application starts up, reaches out to a remote repository, and brings its configuration straight into variable space on the application stack. But the industry is still trying to figure all of this out; it has really struggled with the whole challenge. If you try to deploy these into a pipeline, where you have dev, staging, prod, or whatever your stages are called, you'll find the same challenge exists. And if you're getting into compliance, it's also a challenge, because these secrets need to be locked down even more than the application image. They're really the keys to the kingdom.
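The layering convention described above can be sketched in Python. Defaults, a local config file, and a remote source are deep-merged in order, so later layers override earlier ones key by key. This is an illustrative sketch, not the API of any particular Node.js config library. The remote source is assumed to be a JSON object served over HTTP, and the URL in the usage note is hypothetical.

```python
import copy
import json
import urllib.request
from typing import Any, Mapping


def deep_merge(base: Mapping[str, Any], override: Mapping[str, Any]) -> dict:
    """Return a new dict where override's keys win, merging nested dicts."""
    merged = copy.deepcopy(dict(base))
    for key, value in override.items():
        if isinstance(value, Mapping) and isinstance(merged.get(key), Mapping):
            merged[key] = deep_merge(merged[key], value)
        else:
            merged[key] = copy.deepcopy(value)
    return merged


def load_layers(*layers: Mapping[str, Any]) -> dict:
    """Merge config layers in order: later layers override earlier ones."""
    result: dict = {}
    for layer in layers:
        result = deep_merge(result, layer)
    return result


def fetch_remote_layer(url: str, timeout: float = 5.0) -> dict:
    """Fetch a JSON object from a (hypothetical) remote config endpoint."""
    with urllib.request.urlopen(url, timeout=timeout) as response:
        return json.load(response)
```

At startup an application might call `load_layers(defaults, file_layer, fetch_remote_layer("https://config.example.internal/web"))`. The merged result lives only in the process's own memory rather than in the OS environment.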

So what's out there? I was just mentioning how Docker secrets works. HashiCorp also has a tool called Vault; it's a key-value store that does encryption at rest and that sort of thing. Docker secrets does encryption at rest too, and etcd can do encryption. And then there's the tool I came here to talk about, Reflex Engine, which I've put together. Reflex Engine does similar things to these others, but it takes them a step further: it also looks at the whole service, and it brings in attribute-based access control.

The other services we're talking about here are very much role-based access control. Basically, if you can get access to the system, you're kind of in, and there's a lot less fidelity at the individual, granular level of control.

So, to touch a little on ABAC versus RBAC, in case you've not heard of attribute-based access control: it's what has come up to help address the Internet of Things, and the idea is that everything is an attribute. Think about identification, authentication, and authorization. In an RBAC world, once you're authenticated you're done worrying about those attributes, and you just deal with authorization. In an ABAC world those attributes are always relevant. They should be analyzed any time someone goes to access something, so they become part of the authorization cycle as well.

The simple explanation is the medical world. Say you have a role-based access control system managing medical records, and a nurse working on the floor who has the role of nurse. She can log into that system from home and access patient data, and she can log into it while standing next to the patient and access patient data, and that becomes a challenge. This is where the Internet of Things kicks in. If you add an RFID tag to every patient's arm and put a proximity sensor on the kiosk she's using to get into the records, that becomes an attribute saying she's within proximity of the patient. She also has the attribute of being a nurse, and those two things in combination allow her to access those records. But if she wanders off, goes home, and tries to log in, she's no longer in proximity; that RFID tag can't be read as an attribute, so ABAC kicks in and says no, she can't access those records. That's the concept, and why this is such a thing. It's really still forming, and there aren't really any good standards yet around how to manage it.
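The nurse scenario fits in a few lines of Python and shows the difference. The RBAC check looks only at the role. The ABAC check also requires the proximity attribute from the RFID reader, evaluated on every access. The `AccessRequest` fields are hypothetical names chosen for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AccessRequest:
    role: str                   # e.g. "nurse", established at login
    patient_tag_in_range: bool  # hypothetical RFID proximity reading at the kiosk


def rbac_allows(request: AccessRequest) -> bool:
    """Role-based: being a nurse is enough, wherever the login comes from."""
    return request.role == "nurse"


def abac_allows(request: AccessRequest) -> bool:
    """Attribute-based: the role AND the proximity attribute must both hold."""
    return request.role == "nurse" and request.patient_tag_in_range


at_bedside = AccessRequest(role="nurse", patient_tag_in_range=True)
at_home = AccessRequest(role="nurse", patient_tag_in_range=False)
```

Under RBAC both requests succeed. Under ABAC only `at_bedside` does, because the login from home is missing the proximity attribute.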

To think about it from a different direction, and about what I've done with Reflex Engine: who's written SQL before, or is at least passingly familiar with it? OK. When you get access to a SQL database, you authenticate to the database, and it says, "OK, I know who you are." Once you have that access, if you go to query a table, it doesn't decide per row what you may or may not be able to see; you get access to the whole table. You can do grants, like write access or read access, but with grants alone you can't say, "I want this person to be able to read these five rows but not the other twenty."

With the ABAC we've got in Reflex, there's Boolean logic that takes into account all of the attributes a request comes to Reflex with. It looks at the IP address the request is coming from, any certificates it comes with, its API keys, and any sign-in that exists, and all of those attributes come together. You create a logical expression on the table that authorizes this set of data based on whether they're coming from my trusted network, which is one attribute, and whether they've got an API key, or whatever other attributes you combine. That decides how Reflex releases the secrets. I've got a diagram that shows this in a little more detail in a sec.
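A minimal Python sketch of the row-level idea: each secret row carries its own policy, a Boolean expression over the request's attributes, and a query returns only the rows whose policy passes. This illustrates the concept only and is not Reflex Engine's actual data model or API. The row names, attribute keys, and the `10.0.0.0/8` trusted network are assumptions.

```python
import ipaddress
from typing import Any, Dict, List

Attributes = Dict[str, Any]

TRUSTED_NET = ipaddress.ip_network("10.0.0.0/8")  # assumed internal network


def from_trusted_network(attrs: Attributes) -> bool:
    return ipaddress.ip_address(attrs["ip"]) in TRUSTED_NET


def has_api_key(attrs: Attributes) -> bool:
    # Assumes the key was already validated upstream; here we only check presence.
    return bool(attrs.get("api_key"))


# Hypothetical secret "table": every row has its own policy expression.
SECRET_ROWS: List[Dict[str, Any]] = [
    {"name": "web.db_password",
     "policy": lambda a: from_trusted_network(a) and has_api_key(a)},
    {"name": "build.signing_key",
     "policy": lambda a: has_api_key(a)},
    {"name": "admin.root_token",
     "policy": lambda a: False},  # released to nobody in this example
]


def visible_secrets(attrs: Attributes) -> List[str]:
    """Return only the rows whose policy evaluates to True for this request."""
    return [row["name"] for row in SECRET_ROWS if row["policy"](attrs)]
```

The same query returns different rows depending on where the request comes from and what it carries, which is exactly what table-level grants cannot express.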

The key with ABAC, though, is that everything is an attribute. Once you start thinking about things that way, even multi-factor authentication is really just another form of ABAC: we're just adding extra attributes together, and once you start adding attributes together, the quality of your security ratchets up quite a bit. That's the whole reason MFA is there. How many people have implemented MFA systems? Has anybody talked recently about SMS messaging, and whether it counts as MFA? This is something I've been debating with some of my co-workers recently. I would contend that an SMS message sent to somebody is not MFA in the true sense of a factor, but it is an ABAC thing, because it's another attribute.

The difference is that in a true time-based key exchange system for MFA, you first identify and authenticate yourself to the service in question somewhere out of band, and the two of you exchange a time seed. Then you both go away, and days or months later, if you come together at the same time, the way the seeds work means both sides will generate the same number, so they'll match. The exchange happens only once, at a point when you've already been identified and authenticated.
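The time-based scheme described here is standardized as TOTP (RFC 6238, built on HOTP from RFC 4226). A compact Python sketch using only the standard library shows why both sides agree without talking to each other: each derives the code from the shared seed and the current 30-second time step. The seed in the usage note is the RFC's published test key, used purely for illustration.

```python
import hashlib
import hmac
import struct
import time
from typing import Optional


def hotp(secret: bytes, counter: int, digits: int = 6) -> str:
    """HOTP (RFC 4226): HMAC-SHA1 over an 8-byte counter, dynamically truncated."""
    mac = hmac.new(secret, struct.pack(">Q", counter), hashlib.sha1).digest()
    offset = mac[-1] & 0x0F
    code = struct.unpack(">I", mac[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(code % (10 ** digits)).zfill(digits)


def totp(secret: bytes, for_time: Optional[float] = None,
         step: int = 30, digits: int = 6) -> str:
    """TOTP (RFC 6238): HOTP where the counter is the number of elapsed time steps."""
    now = time.time() if for_time is None else for_time
    return hotp(secret, int(now // step), digits)


def verify(secret: bytes, submitted: str, for_time: Optional[float] = None) -> bool:
    """Both sides hold the same seed, so each derives the same code independently."""
    return hmac.compare_digest(totp(secret, for_time), submitted)
```

With the RFC test key `b"12345678901234567890"`, `totp(key, 59, digits=8)` yields `"94287082"`, matching the RFC's Appendix B. Note that the seed crosses the wire only once, at enrollment; an SMS code, by contrast, is delivered fresh over a channel at every sign-in.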

Whereas with the SMS systems (I'm going off the rails here, sorry; this is a hot topic for me right now), what's really happening is you're saying, "Trust me, and here's how you're going to trust me: you sign in, and after you've signed in I'll send you a key code. Give it back to me, and I'll trust that you really are who you say you are." That's not really MFA, right? Anyway, that's a separate topic. It is still valuable, and if a service doesn't give you any option other than SMS, then I guess go with it. Fun stuff.

The other thing is all these vaults you create. With the Internet of Things, everything is becoming more and more online, which makes things really challenging. Say you have a build system that needs to pull down a key to sign things with and verify that all the data in your image has been signed properly, and then put the result back somewhere. You still need a place to keep all of your sensitive data. But do you want to take your vault, if you're running HashiCorp's Vault, and put it publicly on the internet with nothing but the standard Vault access controls? That's kind of scary, so most people hide these things away. If you get back to what ABAC means, though, you really should be able to expose the whole thing to the internet and rely on all these attributes coming together properly to secure that system.

So, to walk through how this could look: say you've got a configuration here. I've got a secret back here, and I've got it behind an ABAC policy. The policy says, basically, that the request has an API key that's in my list of servers. I define a set of servers that I know I can trust and give each of them an API key, which is pre-generated, basically like a job, and handed out to somebody or to a service stack. Then I say that if they're coming from the 10.0 subnet, my internal private subnet, I'll give that a little bit of credibility and trust in combination with the API key. On top of that, I pull the certificate ID off the SSL session: I'm doing client-side certificates, and I assign out a certificate. If those three things come together, I trust that I can give this set of secrets to whoever is requesting it.

Or, as another branch of the Boolean expression, I might pull a user ID from a login session. By the time we reach this level of the evaluation I can trust the user ID, because if authentication hadn't worked, it just wouldn't be there. So the user ID has to be listed as one of the admins, and the IP address has to be my VPN address. I'm delegating a little bit of trust there, by saying my VPN is already doing MFA, so if they come in with a legitimate ID and they're coming off my VPN host, that's enough. This gives you some control over that. You could also add a policy that says, "I know this block of internet addresses, and I'm going to whitelist it," a little like a firewall: these are my build services from some SaaS provider, combined with an API key or something to allow the scripting to work. It gives you a lot more control and power over those sorts of things. Some of this you can still delegate upstream; you can have your firewalls doing some of it if you want multiple layers of security.
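The three policy branches just described can be written as one Boolean expression. Below is a minimal Python sketch. The attribute names (`api_key`, `ip`, `client_cert_id`, `user_id`), the key and certificate values, and the VPN address `10.8.0.1` are hypothetical placeholders, and the `198.51.100.0/24` documentation range stands in for the SaaS build provider. The talk does not show Reflex Engine's own policy syntax, which may differ.

```python
import ipaddress
from typing import Any, Dict, Optional

# All values below are hypothetical placeholders.
SERVER_API_KEYS = {"key-web-01", "key-worker-01"}     # pre-generated per service
TRUSTED_CERT_IDS = {"cert-web-01", "cert-worker-01"}  # issued client certificates
ADMIN_USER_IDS = {"alice", "bob"}
BUILD_API_KEYS = {"key-ci-01"}

INTERNAL_NET = ipaddress.ip_network("10.0.0.0/8")
VPN_ADDRESS = ipaddress.ip_address("10.8.0.1")
BUILD_PROVIDER_NET = ipaddress.ip_network("198.51.100.0/24")  # documentation range


def allow(attrs: Dict[str, Any]) -> bool:
    """Evaluate the policy against every attribute the request arrived with."""
    ip = ipaddress.ip_address(attrs["ip"])
    api_key: Optional[str] = attrs.get("api_key")

    # Branch 1: known server key AND internal subnet AND issued client cert.
    server = (api_key in SERVER_API_KEYS
              and ip in INTERNAL_NET
              and attrs.get("client_cert_id") in TRUSTED_CERT_IDS)

    # Branch 2: authenticated admin coming through the VPN (which does MFA).
    admin = attrs.get("user_id") in ADMIN_USER_IDS and ip == VPN_ADDRESS

    # Branch 3: whitelisted SaaS build range AND a build API key.
    build = ip in BUILD_PROVIDER_NET and api_key in BUILD_API_KEYS

    return server or admin or build
```

A valid server key alone is not enough: a request missing the client certificate, or arriving from outside `10.0.0.0/8`, fails branch 1 and falls through to the other branches.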

That's not a limitation, though. The cool thing is that you get to apply these policies at the row level: you describe how you want your secrets to look, and then Reflex can be selective about them. You could have all of your secrets in one database, set up different teams of people and different services, and, depending on where they're coming from, they can access those things. I think this slide goes through some of that.

So, Reflex Engine is an open source project I've put together. It's in its infancy, I'll be honest. It's running a couple of production sites I've worked on, but being open source, we iterate and improve it a little at a time. The main difference between Reflex Engine and HashiCorp Vault or etcd is that Reflex Engine takes a step back and looks at the service itself. It's not just configurations; it has services mixed in with everything. Here's why that's powerful. I've been sitting down with Thomas Hatch from SaltStack talking about this same thing, because I want to try to get some of these ideas into SaltStack. If you think about the conventional world of configuration management systems, they pivot around servers. That's really what they do: they work on servers, and they really struggle when it comes to containers, because they're the robots in that factory I talked about earlier. You've got this factory full of cars and a corral of robots that goes out, is really good at putting the door handle on all the cars, then comes back for more instructions and goes out again. We don't do that in the real world, because it's highly inefficient; we use assembly lines. To get there, configuration management systems need to stop thinking about servers and start thinking about services: I have a group, a service, that I'm addressing.

When I was talking to Thomas, it was a really fun conversation, because the first impulse most people from the config world have is to say, "Let's put a Salt minion on every one of the hosts that runs my container stack," hosts you can think of as hypervisors if you're from the virtualization world, "and then from there it can reach inside the container and do stuff." It's this little box with a question mark for what that stuff would be, and we had to go around a couple of times to explain that that's kind of an anti-pattern. The next idea is, "Well, then we'll just run the minion inside the containers, so we'll have a Salt minion in each container." Again, that's an anti-pattern, because Salt and these other stacks address servers, and once a container is running, it's ephemeral; you don't want to change it again. There is a point at launch where you can go in, fiddle with it, and tweak it, but then you're saying it's ready to go, it's in a live state, and you don't go back in and change it. Configuration management is really about the life cycle and changing things over time, and containers have no life cycle other than being on, and then you throw them away.

So you need to step back and talk about configuration management at a higher level, which is really going to be the service level. What we're saying is: I have a web service, and that web service can have one container running, or it could scale up to 15 or 300 and back down to 10, whatever it is. Behind the scenes there's a whole bunch of instances, but they're all ephemeral, and all my configuration is managed at the service level. We had a really fun conversation, and I think some good changes are going to come out of Salt; I think we're going to start seeing some service-level addressing. Who's used Salt before? Anybody? I'd like to think we'll get some of these concepts into Salt, where you can address things at the service level and say, "I want to put in a database secret," and it's the same database secret for every container. You won't have to go into every single container, the way you classically go to every single server and put that config out there for your database secret. Instead you put the config in one place, on the service, and when the containers launch, they pick it up.

In addition, it gives you some fun polymorphism, because you're changing even the entry points. On a container you give it an entry point, and you say either "run my startup.sh" or... I was just looking through some of the Docker Datacenter pieces, and they're using an entry shell script now. So the idea of the entry point has changed. Originally, when the container world started, the entry point would be the code you launch, and a lot of people still do that, but it's changing. With Reflex Engine we've got a launch command, and you basically say, "My entry point is going to be launch," and that's about all you have to say. You put three environment variables on the container and nothing else: one says where the Reflex system is (it's a REST API), one is an API key, and the third is the name of the service in Reflex. When you run launch, it goes out and finds that service, and the service gives the container its identity, assuming the image itself has what it needs to run.

The fun thing about that: say you have a Node application running web services. You might launch it a certain way that fires off `node index.js` for general web processing. But say you also have a job that has to run once a day to do a bit of batch processing, and you want to bind it into the same code base, because you don't want to create a whole new image with a whole new node_modules and all the dependencies; it's really the same thing, it just needs to run this batch. You can take that same image and give it a different service name, and that service name says that instead of running `node index.js`, it runs `node batch.js`. It gives you that kind of polymorphism without having to go in and hack on the containers. You don't have to change your shell scripts anymore, and you can do all of this a lot more flexibly.
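Here is a minimal Python sketch of the launch idea as described. It reads three environment variables, asks the REST API for the service definition, then execs the command that service names, so one image can act as either the web or the batch service. The variable names `REFLEX_URL`, `REFLEX_APIKEY`, and `REFLEX_SERVICE` are hypothetical, as are the `/service/<name>` endpoint path, the `X-Api-Key` header, and the `command` field. None of them is taken from Reflex Engine's actual launcher.

```python
import json
import os
import urllib.request
from typing import Any, Dict, List, Mapping

# Hypothetical names for the three settings described in the talk.
REQUIRED_VARS = ("REFLEX_URL", "REFLEX_APIKEY", "REFLEX_SERVICE")


def read_launch_settings(environ: Mapping[str, str]) -> Dict[str, str]:
    """Return the three settings, failing fast if any is missing or empty."""
    missing = [name for name in REQUIRED_VARS if not environ.get(name)]
    if missing:
        raise RuntimeError("missing launch settings: " + ", ".join(missing))
    return {name: environ[name] for name in REQUIRED_VARS}


def service_url(settings: Mapping[str, str]) -> str:
    """Hypothetical endpoint layout: <base>/service/<name>."""
    return f"{settings['REFLEX_URL'].rstrip('/')}/service/{settings['REFLEX_SERVICE']}"


def fetch_service(settings: Mapping[str, str]) -> Dict[str, Any]:
    """GET the service definition, authenticating with a hypothetical header."""
    request = urllib.request.Request(
        service_url(settings), headers={"X-Api-Key": settings["REFLEX_APIKEY"]}
    )
    with urllib.request.urlopen(request, timeout=10) as response:
        return json.load(response)


def command_for(service: Mapping[str, Any]) -> List[str]:
    """The service definition, not the image, decides what actually runs."""
    return [str(part) for part in service["command"]]  # hypothetical field


def main() -> None:
    settings = read_launch_settings(os.environ)
    argv = command_for(fetch_service(settings))
    # Replace the launcher process with the application (no child process).
    os.execvp(argv[0], argv)
```

With this shape, a web service whose definition says `["node", "index.js"]` and a batch service that says `["node", "batch.js"]` can point at the same image; only `REFLEX_SERVICE` differs, and `main()` would be the container's entry point.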

And because it's a REST-based interface, it addresses the concept of infrastructure as code. Have you heard that term? I'm sure it's been bandied around a bit. I think it's a hard term for some people to grasp, because automation is not necessarily infrastructure as code, and I've gone a few rounds with people on this. With a lot of the configuration systems out there, most people say, "I'm going to put all of my Puppet configs into GitHub, and then they're versioned and stored." That is not infrastructure as code, because those configurations are very hard to mutate and change. You can't easily script against something in a GitHub repo programmatically: you have to do a checkout, go mangle some files, check them back in, and then get them back out to Puppet. It's just not as flexible as having a live state where you can programmatically query an API, make changes, and let them cascade on out. But I got ahead of myself here.
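As a sketch of the "query an API, make changes" style, here is a hypothetical Python helper that builds a JSON `PUT` request updating one key in a named configuration. The endpoint layout, header name, and payload shape are assumptions for illustration, not Reflex Engine's documented API. The request is only constructed here; sending it would be a single `urllib.request.urlopen(req)` call.

```python
import json
import urllib.request
from typing import Any


def build_config_update(base_url: str, api_key: str, config_name: str,
                        key: str, value: Any) -> urllib.request.Request:
    """Build (but do not send) a PUT that sets one key in a named config."""
    url = f"{base_url.rstrip('/')}/config/{config_name}"  # hypothetical path
    body = json.dumps({key: value}).encode("utf-8")       # hypothetical payload
    return urllib.request.Request(
        url,
        data=body,
        method="PUT",
        headers={"Content-Type": "application/json", "X-Api-Key": api_key},
    )
```

Because the change is just an HTTP call, a build job or a script can make it directly, with no checkout, file edit, and commit cycle in between.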

And then continuous delivery is something this also helps with. A lot of the config management systems also struggle with pipelines. You have a development environment, a testing environment, staging, QA, whatever your pipeline looks like, and that's usually the last thing people address. What we're bringing to the table is a concept of pipelines built right in: pipelines and services. You really get into DevOps and better security when security is built into the fabric instead of being an afterthought, something you put in at the last second because the compliance people came by and said you can't use environment variables. That's really what this is trying to address: security first. If you do it right, you don't have to deal with a lot of the uncomfortable questions that come up later, because invariably they will, once the auditors come through and say, "I don't know if it's such a good idea to put that in an environment variable."
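One way to picture pipelines and services being built in, sketched in Python under our own assumptions: a service definition is shared across stages, and each pipeline stage supplies only its own config. Resolving a (service, stage) pair yields what an instance receives, so the same image is promoted unchanged from dev to prod. The stage names, keys, and hostnames are hypothetical, and this is not Reflex Engine's actual object model.

```python
from typing import Dict

# Hypothetical per-service settings shared by every stage.
SERVICES: Dict[str, Dict[str, str]] = {
    "web": {"image": "registry.example/web:1.4.2", "command": "node index.js"},
}

# Hypothetical per-stage configuration; only this differs between stages.
STAGE_CONFIG: Dict[str, Dict[str, str]] = {
    "dev": {"db_host": "db.dev.internal", "log_level": "debug"},
    "stg": {"db_host": "db.stg.internal", "log_level": "info"},
    "prd": {"db_host": "db.prd.internal", "log_level": "warn"},
}


def resolve(service: str, stage: str) -> Dict[str, str]:
    """Combine the service definition with the stage's config.

    Stage values win on key collisions; the image reference comes from the
    service definition, so it is identical in every stage.
    """
    if stage not in STAGE_CONFIG:
        raise KeyError(f"unknown pipeline stage: {stage}")
    return {**SERVICES[service], **STAGE_CONFIG[stage]}
```

Promoting a build then means changing nothing about the image: `resolve("web", "dev")` and `resolve("web", "prd")` share the same `image` and differ only in the stage-supplied values.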

I'm going to pop through; I think I've got some other slides here to talk about, and I don't know how long this was going to go, guys. This one shows the different objects you'd have inside Reflex, and I've talked through a few of them: you build services, those services are part of a pipeline, you have configurations that tie to them, and Reflex keeps track of the instances for you. Every time you run launch, it reaches out to Reflex to grab its configuration, so Reflex also keeps track of the instance information. Even though that wasn't part of the design, there's actually a little bit of service discovery in there, which can be quite challenging in a container world, if you've ever dealt with it. It's not an advertised feature, but it is one of the things that kicks in: you can query the instances.
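That side effect, where every launch checks in and so becomes discoverable, can be illustrated with a small in-memory registry in Python. This is a hypothetical sketch of the idea, not Reflex Engine's implementation, and the method names are assumptions. Because containers are ephemeral, a real registry would also need to notice instances that have gone away, which this sketch does not attempt.

```python
from collections import defaultdict
from typing import Dict, List


class InstanceRegistry:
    """Records each launch check-in so a service's instances can be queried."""

    def __init__(self) -> None:
        self._by_service: Dict[str, Dict[str, str]] = defaultdict(dict)

    def check_in(self, service: str, instance_id: str, address: str) -> None:
        """Called as a side effect of an instance fetching its config at launch."""
        self._by_service[service][instance_id] = address

    def instances(self, service: str) -> List[str]:
        """Addresses of every instance of `service` that has checked in."""
        return sorted(self._by_service.get(service, {}).values())
```

Keying by instance ID means a re-launched container with the same ID replaces its old address rather than adding a duplicate entry.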

Before a really deep dive on this, I just want to ask you guys: what do you think about what I've talked about so far? We've got some time for Q&A, and I can keep going deeper on any of these things if you want. Attribute-based access control: what are your thoughts on that? Any questions on ABAC, if this is the first time you've heard of it? ABAC is a challenge, I would say, because roles are easy: you have a group, you put people in the group, and you're done. ABAC is Boolean logic, and working out how to associate it can be challenging. What about container secrets? Does anybody have any war stories from trying to deal with secrets and containers? Anybody?

Yeah? So let me rephrase that for everybody's sake. You're asking: if the launch script runs other stuff, how do the orchestration services, like Kubernetes or Docker Datacenter, keep track of that? Is that what you're asking? It still follows the one-to-one principle. When launch runs, the polymorphism applies only to the current state; it won't create another process alongside. It actually runs an exec and disappears from the picture once the service runs. Docker spins up a new container, which is just a process (I've got another presentation on Docker that explains its layers, but I'll resist going into it). Right now launch is written in Python, so the process runs the Python binary, which runs launch, and launch reaches out and pulls in the secrets. The fun thing is that because this happens at runtime, the secrets are not in the container's environment. If you attach to a container out of band and get a shell, no secrets show up there, which is another nice thing about this. So the process runs, grabs the secrets, brings them into its own space, and configures everything however you want. There's inheritance and a lot more: you can write files, dump files, use macros, and so on. Then it runs exec, so the Python process image is replaced, all the Reflex stuff is gone from memory and from the equation, and after that it's just your application.
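Here is how the hand-off sequence described above (write whatever files the application expects, then exec so the launcher disappears) might look in Python. This is a hypothetical sketch: the JSON secrets file, the `0o600` mode, the launcher variable names, and stripping those variables before exec are assumptions layered on the talk, not a description of Reflex Engine's code.

```python
import json
import os
from pathlib import Path
from typing import Dict, List, Mapping

# Variables only the launcher needs; hypothetical names.
LAUNCHER_VARS = ("REFLEX_URL", "REFLEX_APIKEY", "REFLEX_SERVICE")


def write_secret_file(path: Path, secrets: Mapping[str, str]) -> None:
    """Write secrets as JSON; the 0600 mode applies when the file is created."""
    fd = os.open(path, os.O_WRONLY | os.O_CREAT | os.O_TRUNC, 0o600)
    with os.fdopen(fd, "w", encoding="utf-8") as handle:
        json.dump(dict(secrets), handle)


def app_environment(environ: Mapping[str, str]) -> Dict[str, str]:
    """Environment for the application, with the launcher's own settings removed."""
    return {k: v for k, v in environ.items() if k not in LAUNCHER_VARS}


def hand_off(argv: List[str], secrets: Mapping[str, str], secret_path: Path) -> None:
    """Materialize secrets, then replace this process with the application."""
    write_secret_file(secret_path, secrets)
    os.execvpe(argv[0], argv, app_environment(os.environ))
```

After `os.execvpe` succeeds, nothing of the launcher remains in the process. Passing the scrubbed environment also keeps the launcher's API key from being inherited by the application.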

go into your tool that's whatever tool it is and you would define a different binary and you would just be able to reference the same image that's the the polymorphism is at the image level and if you and so actually I kind of want like I kind of want to jump in and actually pull up another presentation on docker I'm trying to resist that a little bit um there the one of the biggest challenges I wrote a blog post recently one of the biggest challenges with containers is that there is so much because they're so game-changing there's a ton of technologies out there and so it's hard to just go in and get your toes wet like you kind of have to go

into the deep end and that's what has caused a lot of challenges with people is that it's hard to do the whole thing and there's a lot of different technologies out there that are all trying to deal with a lot of these challenges and so like the service management side of things can be a challenge for sure I think Amazon has put together solution that's really painful personally speaking kubernetes is a great solution but it's there's a lot of open source to it and so it's a lot less it's not as polished right docker is working on the solution that there's has a lot of polish but it's a little bit unstable there's a lot of

peripheral systems out there. Nanobox (nanobox.io) has some local ties, if you've ever seen that one, and there are others: you've got Rancher, you've got... I can't remember half these names. There's a huge community of people who have built systems to try to manage containers, and I would say don't go against those systems. This is meant to be a thin little layer that you can insert into the middle of that without really disrupting the flow, because I'm not trying to solve everything, just hopefully enhance it. So I don't know if that answered your question.
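The launch flow described at the start of this answer (Docker starts Python, the launch script pulls secrets at runtime, configures the app, then calls exec) can be sketched roughly as follows. This is a minimal sketch, not Reflex's actual launch script: the `REFLEX_URL` and `REFLEX_APIKEY` variable names and the `fetch_secrets` stub are hypothetical placeholders, and handing the values to the app as environment variables is just one of the options the talk mentions (writing files is another).

```python
import os
import sys
from typing import Callable, Dict, List, Mapping

# Hypothetical names for the bootstrap settings passed to the container.
BOOTSTRAP_VARS = ("REFLEX_URL", "REFLEX_APIKEY")


def fetch_secrets(url: str, api_key: str) -> Dict[str, str]:
    """Hypothetical stand-in for the runtime call to the secrets service.

    A real launcher would authenticate to `url` with `api_key`; this stub
    returns a fixed value so the sketch runs offline.
    """
    return {"DB_PASSWORD": "example-only"}


def build_child_env(base: Mapping[str, str],
                    secrets: Mapping[str, str]) -> Dict[str, str]:
    """Copy the environment, drop the bootstrap credential, add the secrets."""
    env = {k: v for k, v in base.items() if k not in BOOTSTRAP_VARS}
    env.update(secrets)
    return env


def launch(argv: List[str],
           base_env: Mapping[str, str],
           exec_fn: Callable[[str, List[str], Dict[str, str]], None] = os.execvpe,
           ) -> None:
    """Fetch secrets at runtime, then replace this process with the app."""
    secrets = fetch_secrets(base_env["REFLEX_URL"], base_env["REFLEX_APIKEY"])
    env = build_child_env(base_env, secrets)
    # os.execvpe never returns on success: the Python launcher is replaced
    # by the application, so none of the launcher's state stays in memory.
    exec_fn(argv[0], argv, env)


if __name__ == "__main__":
    if len(sys.argv) < 2:
        sys.exit("usage: launch.py PROGRAM [ARGS...]")
    launch(sys.argv[1:], os.environ)
```

Dropping the bootstrap API key before `exec` is a design choice made in this sketch, not something stated in the talk; it keeps the application from inheriting a credential it doesn't need. The `exec_fn` parameter exists only so the flow can be exercised without actually replacing the process.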

Oh, and that's an anti-pattern. So the statement is the full-system image idea: you don't have a good container unless you've got syslog and some of these other parts in it. I would contend the opposite. You really make me want to follow this up with a presentation on ephemeral systems, but, um, any other thoughts on this, on Reflex and that? Yeah, back here.

now

So the question is: how do you separate the config system that brings configs in from the secrets that come in? People don't want to deal with two systems; they want to manage everything in one place, but you don't necessarily want to put all your configs and all your secrets in one place. So this can be kind of a confusing challenge. Is that what you're asking? Uh-huh, yeah. How I have dealt with it is I wrote Reflex Engine, and what Reflex Engine does is bring them into one place. You don't

need a separate config management system anymore, because Reflex Engine is itself a really powerful configuration management system. It's all JSON objects, and you can do inheritance. I don't have any of the examples up here because I didn't know how deep we would want to go, so I can talk to you out-of-band if you want. But that very challenge you mention, people not wanting to use multiple systems, is the problem with the container world: everybody has very niche solutions, and I was trying to balance the niche I'm trying to fit against the most convenient, usable use case around

that. So that's why I brought configs in. Rather than keeping configuration separate, which means every single service has to have this confusing, complex init script, I brought it all together into one place, pivoted it off the service level, and it brings in all of your configurations. I've run monolithic Java applications with this, where it goes out and drops in keystore files. We were working with a service that interfaced with Grants.gov, and every university has to have a key; that's the authorization system Grants.gov uses. They're PKI keys, and every university has a unique key assigned to

them and registered with them. Every time they do a Grants.gov transaction, I have to hand that key over, and it's stored in a Java keystore file. So every container for each university we were hosting had to have its own keystore file, but you can't burn that into the image, right? You'd have to hold 70-some-odd images, one per key. And we only wanted one keystore per container; we didn't want University X's keystore to be in University Y's container, because then we might accidentally send the wrong grant to the wrong place. So what we did is store the keystore right in the Reflex database, and it's a

secret, just not the same kind of secret as a database password. When the container runs, it goes through the inheritance and everything else Reflex has, and because the service is named for University X, it pulls in just that one keystore file and writes it out along with all the properties files and whatever else is needed. As for the properties files, I ended up templating them, so the repository, or the Docker image that's created from it, has a templated file that follows the variable substitution pattern. It can be checked into GitHub where anyone can see it, because there's

no secrets there, just a bunch of template substitution sequences. Then, when it runs, Reflex is told: here is a template file; process it and write it out to this location with all the secrets in it. Then Java goes ahead and reads it. I've actually done one more thing with Reflex, because I don't even like writing configs to disk, given the whole ephemeral nature of containers. Reflex will also do config flattening: all of its config is stored in JSON, so you have this deep dictionary structure, and it will bring it all together, flatten the inheritance hierarchy, and you can tell it to

just write it to standard input of my process. So when your process starts, instead of looking on disk for something, it just reads standard input, gets a JSON blob, and that's all its config right there, and it can take off and run. Now you're not even bouncing secrets off disk, if you want to use that option. It does require you to change your app a little bit, and not all apps can handle that, of course. Any other thoughts? Yeah, in the back.
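The templating, inheritance flattening, and standard-input ideas in this answer can be sketched with the Python standard library alone. Everything here is an assumption for illustration: the `CONFIGS` store, its `extends` key for inheritance, and the `${name}` placeholder syntax (Python's `string.Template`) stand in for whatever Reflex actually uses.

```python
import json
import subprocess
import sys
from string import Template
from typing import Any, Dict, List

# Hypothetical config store: each named object may name a parent via "extends".
CONFIGS: Dict[str, Dict[str, Any]] = {
    "base": {"db": {"host": "db.internal", "port": 5432}},
    "univ-x": {"extends": "base", "db": {"user": "univx"}, "keystore": "univ-x.jks"},
}


def deep_merge(parent: Dict[str, Any], child: Dict[str, Any]) -> Dict[str, Any]:
    """Child values win; nested dicts are merged key by key."""
    out = dict(parent)
    for key, value in child.items():
        if isinstance(value, dict) and isinstance(out.get(key), dict):
            out[key] = deep_merge(out[key], value)
        else:
            out[key] = value
    return out


def flatten(name: str, store: Dict[str, Dict[str, Any]], _seen: tuple = ()) -> Dict[str, Any]:
    """Resolve the 'extends' chain into one dictionary, rejecting cycles."""
    if name in _seen:
        raise ValueError(f"inheritance cycle at {name!r}")
    obj = dict(store[name])
    parent = obj.pop("extends", None)
    if parent is None:
        return obj
    return deep_merge(flatten(parent, store, _seen + (name,)), obj)


def render(template_text: str, values: Dict[str, str]) -> str:
    """Fill ${name} placeholders; substitute() raises KeyError if one is missing."""
    return Template(template_text).substitute(values)


def run_with_config_on_stdin(argv: List[str], config: Dict[str, Any]) -> subprocess.CompletedProcess:
    """Start the app and hand it the flattened config as one JSON blob on stdin."""
    return subprocess.run(argv, input=json.dumps(config), text=True,
                          capture_output=True, check=True)


if __name__ == "__main__":
    cfg = flatten("univ-x", CONFIGS)
    # Template file option: the template itself holds no secrets.
    print(render("jdbc.user=${user}\n", {"user": cfg["db"]["user"]}), end="")
    # Standard-input option: nothing is written to disk.
    reader = "import json, sys; print(json.load(sys.stdin)['db']['host'])"
    print(run_with_config_on_stdin([sys.executable, "-c", reader], cfg).stdout, end="")
```

Using `substitute()` rather than `safe_substitute()` is deliberate in this sketch: a missing secret fails loudly at startup instead of leaving a literal `${...}` in the rendered config.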

yeah

If you cannot change the application to read from standard input... well, standard input is the best I've come up with. Otherwise, I think what you're doing with a ramdisk is actually a great way of handling it, because it keeps the config in memory, and as long as you clean it up afterwards you're going to be safe. The best thing would be to have the application talk to Reflex directly, because it's a REST interface. Besides the launch scripts I talked about, I'm working on improving the client libraries. If you're running Python, you can already just load up rfx; Reflex is actually a Python module, so to install it you just pip install

rfxcmd to get the command-line tool. Or you can just pip install rfx, use the rfx client, and go right out and grab these things directly and live through that interface instead. It depends on the application, and your mileage will vary, of course, because now you're more tightly coupling the application to a service like this, and there are going to be pros and cons to that. So, other thoughts? Yeah.
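For applications that can call the service directly, the idea is a plain REST request at startup. The `rfx` client's real API isn't shown in the talk, so this sketch uses only `urllib` from the standard library; the `/config/<service>` path and the bearer-token header are hypothetical, not Reflex Engine's documented interface.

```python
import json
import urllib.parse
import urllib.request
from typing import Any, Dict


def build_config_request(base_url: str, service: str, token: str) -> urllib.request.Request:
    """Build a GET for one service's config (hypothetical URL layout)."""
    url = f"{base_url.rstrip('/')}/config/{urllib.parse.quote(service, safe='')}"
    return urllib.request.Request(url, headers={
        "Authorization": f"Bearer {token}",  # assumed auth scheme
        "Accept": "application/json",
    })


def fetch_config(base_url: str, service: str, token: str,
                 timeout: float = 10.0) -> Dict[str, Any]:
    """Fetch and decode the config; the result stays in memory, never on disk."""
    req = build_config_request(base_url, service, token)
    with urllib.request.urlopen(req, timeout=timeout) as resp:
        return json.load(resp)
```

As the talk notes, this couples the application to the service: if the service is unreachable at startup, `fetch_config` raises and the app has no config to fall back on.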

Yeah, it is a chicken-and-egg thing. Well, so it's JWTs. Who's familiar with JSON Web Tokens? Some people. A JSON Web Token is a really cool construct; jwt.io is worth a look. The API keys work through JWTs. Now, I think a lot of the problem with an API key is that the general concept of an API key is not authentication, it's identification. It says: here is a key. But with just the key, you don't really know for sure whether the bearer really is who they should be, and that can be a challenge. So the way that I've set it up...

I'd love to show you; I have a ladder diagram of it somewhere. What happens with JWTs is they have three parts. If you ever go look at one on jwt.io, you'll see it's broken into three parts separated by periods, and each part is base64url-encoded. I'm going deep because I figure there are a lot of people here who like to go deep on this tech stuff. The first part says which cryptography I'm using, the second part is my payload, and the third part is a signature. What's neat about that is that the first part declares the type of signature, and you can put

anything you want in the payload. The way the API key works for Reflex is that the key itself actually has two parts: an identifier for the user, and a secret. The secret never goes on the wire. Instead, the client uses that secret to generate a JWT and hands the JWT to the Reflex server. The server then creates a session secret that's exchanged in this process, and asks the client to keep using that session secret for all the rest of the JWTs until the session expires. The long and short of it, to answer your question of whether you can really trust the API key:

you can't fully trust just an API key; you should do more. I'm not sure if that answers your question or not.
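The three-part JWT structure and the "secret never goes on the wire" flow can be sketched with an HMAC-SHA256 (HS256) token built from the standard library. The `<id>.<secret>` API key format, the use of the `kid` header, and the 60-second lifetime are assumptions for illustration; the talk doesn't say which algorithm or claims Reflex uses, and the session-secret exchange is only noted in a comment.

```python
import base64
import hashlib
import hmac
import json
import time
from typing import Any, Dict, Tuple


def b64url(data: bytes) -> str:
    return base64.urlsafe_b64encode(data).rstrip(b"=").decode("ascii")


def b64url_decode(text: str) -> bytes:
    return base64.urlsafe_b64decode(text + "=" * (-len(text) % 4))


def split_api_key(api_key: str) -> Tuple[str, bytes]:
    """Hypothetical key format '<id>.<secret>': the id is public, the secret is not."""
    key_id, _, secret = api_key.partition(".")
    if not key_id or not secret:
        raise ValueError("expected '<id>.<secret>'")
    return key_id, secret.encode()


def make_jwt(key_id: str, secret: bytes, claims: Dict[str, Any], ttl: int = 60) -> str:
    """header.payload.signature; only the HMAC of the secret is sent."""
    header = {"alg": "HS256", "typ": "JWT", "kid": key_id}
    payload = dict(claims, exp=int(time.time()) + ttl)
    signing_input = (b64url(json.dumps(header, separators=(",", ":")).encode())
                     + "." + b64url(json.dumps(payload, separators=(",", ":")).encode()))
    sig = hmac.new(secret, signing_input.encode("ascii"), hashlib.sha256).digest()
    return signing_input + "." + b64url(sig)


def verify_jwt(token: str, secret: bytes) -> Dict[str, Any]:
    """Server side: recompute the signature with the stored secret for this kid."""
    header_b64, payload_b64, sig_b64 = token.split(".")
    header = json.loads(b64url_decode(header_b64))
    if header.get("alg") != "HS256":  # never let the token pick a weaker algorithm
        raise ValueError("unexpected algorithm")
    expected = hmac.new(secret, f"{header_b64}.{payload_b64}".encode("ascii"),
                        hashlib.sha256).digest()
    if not hmac.compare_digest(expected, b64url_decode(sig_b64)):
        raise ValueError("bad signature")
    payload = json.loads(b64url_decode(payload_b64))
    if payload.get("exp", 0) < time.time():
        raise ValueError("token expired")
    return payload

# After a first successful exchange, a server could issue a session secret;
# the client would then sign later tokens with make_jwt(key_id, session_secret, ...)
# until the session expires. That exchange is not implemented here.
```

Checking `alg` against a fixed value on the server, rather than trusting whatever the header declares, is the usual defense against algorithm-substitution tricks; it is a choice of this sketch, not something the talk specifies.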

That is passed through. The API key and the Reflex Engine URL are set as environment variables, and the rest of those attributes come in from the query session. So when launch runs, it's going to connect upstream. If you used a cert, you would also need to put that cert in the environment so it could go upstream too. So there are still some challenges with that; you're kind of deferring some of these things, and that's where the different pieces like a certificate come in. I'll be honest, I haven't gotten too deep into certificates. I haven't seen much value in a cert over just the API key, because the API key is doing a lot of

the same sort of things: you have a signed key, and that's really all you're getting out of a certificate. The value of client-side certificates, and the reason I've got them in the mix, is that they help you address man-in-the-middle attacks on the TLS session more than anything. So it just depends on how secure you want it to be. It's an emerging technology, and this ABAC thing in general is something people are still wrestling with. So thanks, though. Any other thoughts? Yeah. Yeah, client-side certificates, right: it would be a TLS session certificate for the client, instead of it being

server-side only. I didn't catch that... what? Pinned, P-I-N-N-E-D? Um, I haven't really got my head into the pinning stuff. I know people have been recommending pinning lately, and I'm wrestling with it. I understand the logic and the argument, but some part of my soul still says this doesn't feel right, and I can't say why, so I've got to get my head into pinned certificates more. I mean, pinning a certificate is really just bypassing the third-party CA verification, right? Am I wrong? Am I missing something there? Yeah.
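The client-side certificate and pinning ideas from this exchange map onto Python's `ssl` module. This is a sketch under stated assumptions: file paths are placeholders, and the pin here is the SHA-256 of the whole leaf certificate, which is simpler than the more common public-key (SPKI) pinning but has to be updated on every certificate renewal. In this sketch the pin check runs after normal CA and hostname verification, so it narrows trust rather than bypassing the CA.

```python
import hashlib
import hmac
import socket
import ssl
from typing import Optional


def make_client_context(ca_file: Optional[str] = None,
                        cert_file: Optional[str] = None,
                        key_file: Optional[str] = None) -> ssl.SSLContext:
    """TLS client context; optionally presents a client certificate (mutual TLS)."""
    ctx = ssl.create_default_context(cafile=ca_file)  # CA + hostname checks stay on
    if cert_file:
        ctx.load_cert_chain(certfile=cert_file, keyfile=key_file)
    return ctx


def fingerprint(der_cert: bytes) -> str:
    """SHA-256 of the DER-encoded certificate, as lowercase hex."""
    return hashlib.sha256(der_cert).hexdigest()


def pin_matches(der_cert: bytes, pinned_sha256_hex: str) -> bool:
    """Accepts 'AB:CD:...' or 'abcd...' pin formats; constant-time compare."""
    pin = pinned_sha256_hex.lower().replace(":", "")
    return hmac.compare_digest(fingerprint(der_cert), pin)


def connect_pinned(host: str, port: int, pinned_sha256_hex: str,
                   ctx: ssl.SSLContext) -> ssl.SSLSocket:
    """Normal TLS handshake first, then reject any certificate but the pinned one."""
    sock = socket.create_connection((host, port), timeout=10)
    try:
        tls = ctx.wrap_socket(sock, server_hostname=host)
    except Exception:
        sock.close()
        raise
    der = tls.getpeercert(binary_form=True)
    if der is None or not pin_matches(der, pinned_sha256_hex):
        tls.close()
        raise ssl.SSLError("server certificate does not match pin")
    return tls
```

An interception proxy like the public Wi-Fi case described next could present a certificate that passes CA validation, but it would not match the pinned fingerprint, so `connect_pinned` would refuse the connection.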

yeah

Mm-hmm, yeah. So for everybody else's sake, let me talk a little bit about pinning; we're just going to meander all over the place, but we've got time. Who has heard of certificate pinning? Has it been discussed recently here? Okay, so a mix of people. At a high level, the idea is that there's this great infrastructure of all these different CAs out there, and we've delegated trust: if a legitimate CA signs a certificate, we trust whoever it is. All the onus of responsibility is on the server side to get a good CA, and if someone registers anything they

want with a good CA, our app is going to trust it. Pinning basically says: I've got to scope down that trust somehow, either by embedding certificates in my app and trusting only those, or by scoping it to just a set of CAs I trust. That helps address man-in-the-middle attacks, because the issue is that people can put proxies in the middle of your session and you won't necessarily notice it's happening. Because of the way the TLS session works, your client will go out and connect; some of these public Wi-Fi networks can do this easily, and they'll

intercept it. They'll pretend to be the server, with a signed certificate from somewhere that lets them do this, and your client just trusts it. They decrypt the session, look at your whole payload, re-encrypt it, connect out to where you wanted to go, and send the response back to you, and in some cases you won't necessarily detect that this has happened. Client-side certificates, I believe, can also address that problem, though I'm spacing on how that would work right now. So pinning is something I've been meaning to investigate further; I haven't really spent too much time on it, to be

honest. The idea of the CA being a trusted thing... yeah, I'll have to look into that more. So, other questions or thoughts? Do you want me to do any more deep diving on Reflex in general? Otherwise, maybe I'll just wrap it up, and you can come talk to me privately; I can show you some real, live examples of how it works. Actually, one other thing: I'll plug my book. We just released it two weeks ago, and it's up on Amazon. Thanks a lot, everybody, for coming, and if you have any questions, come on up and

talk to me, and feel free to connect with me on LinkedIn and keep the dialogue going. If anybody wants to help with the Reflex project, feel free to reach out and talk to me as well. So thanks a lot. [Applause]