02 - Building A Zero Trust MCP Server Gateway: Policy, Isolation, And Observability For AI Tooling


Okay, awesome. Hello everyone. Thank you first to the Bites team for giving us this opportunity to present on MCP server security, and thank you to this audience for showing up on a Saturday morning. A bit about us: I'm Akanga, and I have a total of six years of experience spanning security operations, cloud security engineering, and cloud security architecture. My focus for the past two years has been on AI security, and I'm accompanied by my co-presenter Navjot. >> Hi everyone. My name is Navjot. I'm currently working as a cloud security associate architect, with a total of seven years of experience. My focus areas are cloud security and AI security. >> And today we are here not as

encyclopedias of MCP server security but as security professionals who have observed this technology in action, noticed some security risks, and want to share our learnings with you in an easy way. Today we will touch briefly on the risks associated with MCP servers and how an MCP gateway can help implement security controls to mitigate those risks. As your guides on MCP server security today, we will also have a quick demo where we show you an MCP server in action and highlight the risks while explaining the MCP architecture. Then we will introduce you to the MCP gateway,

highlight its importance and show a quick demo there. Then we will try to understand the different components of the MCP gateway and, finally, how security controls can be implemented through it.

>> I'm trying to bring it up. Just give us a second while we switch. >> We're just trying to bring up the demo here on the screen. >> Okay.

Okay, so hopefully you can see this screen, and we'll start with a quick demo. We want to show you what an MCP server looks like in action. We have built a very simple MCP server which connects to my Gmail account. It's been granted access to two tools: one is list Gmail and the other is read Gmail. For demo purposes I'm using Claude Desktop as my MCP host, but it could be just about any AI agent or client. What you can see here is that we do not have any security controls. We don't have any gateway. So when I ask Claude to show me my recent emails, you would be able to see them, because

I'm authorized to access my Gmail account, of course, and I did the setup that way. I'm able to see all my recent emails listed with all the data from my emails. Claude automatically pulls back the entire emails, including sensitive information like account statements, phone numbers, and other personal data. Now, the difference between me reading these emails in Gmail and accessing them in Claude via an MCP server is that an AI decided to retrieve that information on my behalf. Now imagine this inside an enterprise. Claude Desktop still has some built-in security controls, but an in-house-built AI agent may have none. Imagine thousands of employees using either these AI

assistants or in-house-built AI agents and being able to scan sensitive documents and information, query sensitive databases, and access file shares at machine speed. This is what we are talking about, and we see it as a huge risk. Every single request here will succeed. If I try to list all 50 emails, my AI agent would probably be able to list them; it would not validate any request that I send, and there are no audit logs either. Here is where it gets even worse, and I think Rene covered it beautifully in his talk. What if the tool itself, like read Gmail or list Gmail, was poisoned? What if a developer published a malicious read email tool on

the internal registry, or an attacker swapped it out, and you had a supply chain attack? What if the model autonomously decides to call that malicious tool because it looked relevant, pulls in sensitive output, and exfiltrates data? Then you don't just have data leakage; you have AI-driven exfiltration happening completely autonomously, especially when there is no human in the loop. That's why we think securing MCP traffic matters. The problem isn't just who or what is allowed to connect; it is also what these tools can do and how easily an AI can be tricked into using them. Okay, I think we'll move on to the next slide.

>> Sorry about the interruption. We're trying to switch between Okay.

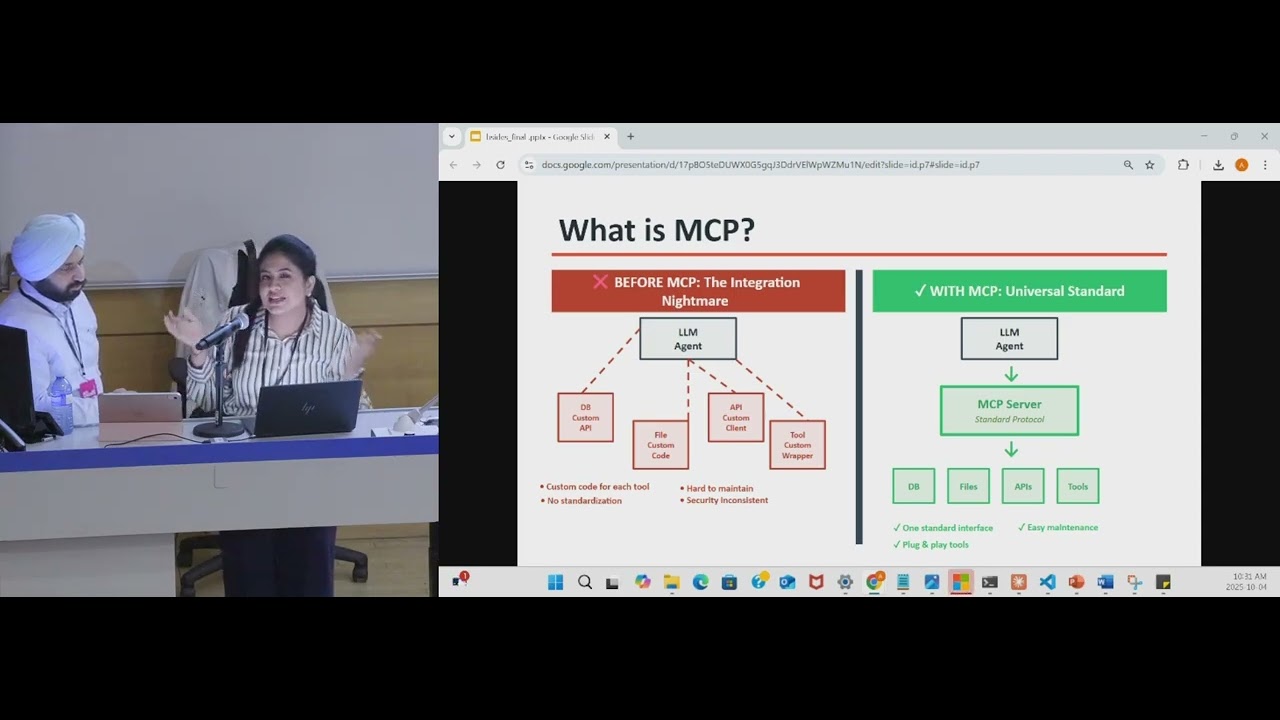

Okay. Now, let's learn a bit about MCP, in case you're not already familiar with it. Before MCP, if your AI assistant wanted to access a database, you had to write custom code, custom APIs, or custom wrappers, and the same again to access your file systems. That was a mess to maintain. MCP standardizes this: one protocol, and any AI agent can use it. Claude Desktop, ChatGPT, anything. You write one MCP server and everyone can connect. It's elegant, of course, but as you might know, it is also insecure by design. MCP focuses a lot on functionality, and it is beautiful, but it does not emphasize security. Just like what you saw in demo one: I was able to list all the data very

easily and there were no security controls. To give a formal definition: MCP defines a structured way for AI models to request, execute, and exchange data through a client-server model using JSON-RPC 2.0 messages over transports like HTTP or stdio. Let's quickly understand how an agentic solution with an MCP server typically works. On the left, you can see where we start. Let's assume that I am the user and I'm using this Gmail MCP server via Claude Desktop. I send my query, for example, "show me my recent emails." The query goes to the LLM, which is the brain of the entire solution, and then the LLM decides that it needs to use an external capability.
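As a rough illustration of the JSON-RPC 2.0 messages mentioned in the definition, the sketch below builds a tool invocation request. The `tools/call` method and the `name`/`arguments` parameters follow the MCP specification; the tool name `list_gmail` and the `max_results` argument are hypothetical stand-ins for the demo server's tools, not taken from the talk.

```python
import json


def build_tool_call(request_id: int, tool_name: str, arguments: dict) -> str:
    """Serialize a JSON-RPC 2.0 request asking an MCP server to run a tool."""
    message = {
        "jsonrpc": "2.0",          # protocol version marker required by JSON-RPC 2.0
        "id": request_id,          # lets the client match the response to this request
        "method": "tools/call",    # MCP method for invoking a tool
        "params": {"name": tool_name, "arguments": arguments},
    }
    return json.dumps(message)


# Hypothetical tool name and argument, for illustration only.
print(build_tool_call(1, "list_gmail", {"max_results": 10}))
```

A message of this shape is what a gateway sitting between client and server would get to inspect: the tool name and its arguments are both in the payload.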

So it would pick one of the tools, like list Gmail or read email. It asks the MCP client which tool can help and chooses the right one. The MCP client then sends this request to the MCP server, the MCP server invokes that tool, and finally the tool's output is sent back through the MCP client. The LLM then generates a human-readable response for the user. The LLM decides what to do, which makes it autonomous in nature; the MCP client handles how to connect; and the MCP server executes the actual tool behind the scenes. So this means that every new

tool you expose becomes a potential attack surface. MCP adoption is exploding. Enterprises, startups, and open-source communities are racing to build LLM-powered workflows. There are already a great many MCP servers in the wild connecting AI agents to databases, file systems, and APIs. Even though there are fewer MCP servers in production today, with the MCP ecosystem booming we can expect to see more of them in production environments in the near future. Now let's talk about the risks. The first risk is arbitrary tool execution: the LLM can call any tool without any restrictions. The second one is the

exfiltration risk, where sensitive data leaks through side channels. The third one is untrusted context, where user input flows directly to the tools with no sanitization. The fourth one is zero visibility, where there is no audit trail and no way to detect abuse. Every one of these was present in the demo, as you saw. MCP dramatically expands what AI can do, but it also dramatically expands the attack surface. Now let us try to map where these attacks happen in the flow we just saw. At user input, which we saw in the previous diagram, there are prompt

injection attacks: someone tricks the model into calling an unauthorized tool. At the tool call level, there is no authorization enforcement. That means the server doesn't check whether this request should be allowed. When we talk about authorization enforcement, we are not just talking about who is allowed to log in, but also about who is allowed to call what tool, when, and in what context. That's the layer that MCP lacks by default. In the response that is returned to the user, sensitive data can leak. And there is no audit logging. So there are four main choke points, which means four opportunities for attack, and MCP protects none of them by default. So the solution that we are proposing

(and you've heard of this) is zero trust architecture, and there are three core principles. One is that you authenticate and authorize every single tool call based on identity and context. Second is least privilege: you grant only the minimum permissions that are needed and nothing more. And third is assume breach: you design your systems as if they have already been compromised. Never trust, always verify, and try to minimize the blast radius. That's what the MCP gateway will help us with. >> Okay. So here our gateway acts as a security proxy that sits between the AI and the MCP servers. Below are some components and features of the MCP gateway. The first one is policy

enforcement. This defines exactly which tools can be called and by whom. The second one is identity and authorization, which verifies every request with role-based permissions. The third one is schema validation, which validates and sanitizes every input parameter. The fourth one is observability and logging, which logs everything with full audit trails. The fifth one is isolation: sandboxed execution with resource limits. And the last one is the principle of least privilege, which scopes down permissions per agent and per tool. The MCP server behind it remains completely unchanged. You just drop the gateway in

the front. That's the only change. Let's talk about the controls that could be implemented by this MCP gateway. Layer one is identity and authentication. At this layer, we verify who is making the request. In the demo that you just saw, Claude Desktop was the MCP client, and the gateway would require it to authenticate via an OAuth token or an API key issued by whichever identity provider you are using. If a random unregistered client tries to access the Gmail MCP server, the gateway should immediately block it. Every request that passes through the gateway has to be tied to an authenticated identity. Think of it like entering this

university: the badge is checked before we enter the room. The second one is authorization and the policy engine. Once you have verified the identity of the agent, the gateway checks what the agent is allowed to do. For example, in my case, you noticed that my agent had two tools, and access was granted to list email and read email, but it was not authorized to call delete email, right? So if I were routing my requests via an MCP security gateway, my policy engine would automatically block any request that I make to delete emails. Even if an LLM tries to call a tool it shouldn't, the gateway would deny it before it even reaches the

server. The third layer is schema validation. Here we also want to check how the request is structured. The gateway validates the parameters which are passed to the tool: things like limits, table IDs, table names, and so on. In the Gmail example that you just saw, if the model sends a request to fetch 10,000 emails but the schema says that the limit is just 100, the gateway has to reject it. Schema validation can not only help prevent attacks like prompt injection, but it can also catch any unrecognized or unsafe parameters and block them. The last layer, which we feel is also very important, is audit logging and observability. Every single approved or denied action is logged with

full context: who called what, from where, when, and what the result was. In the demo with the Claude Desktop MCP server, we saw that we had zero visibility. Now, with the gateway, let's say you have successfully deployed the MCP gateway and it has policies which block requests from an unknown client: we will be able to detect those attempts in our audit logs, and our security teams can monitor and alert on them. So these are the four main layers: identity, authorization, validation, and audit. Together they can turn MCP into a more trusted protocol, and we can build this MCP security gateway and deploy it in our enterprises.
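The validation and audit layers described above can be sketched roughly as follows. This is a minimal illustration under stated assumptions, not the gateway shown in the talk: the schema format, the `list_email` tool name, the `limit` parameter, and the function names are all hypothetical. The limit of 100 mirrors the example from the talk.

```python
import json
from datetime import datetime, timezone

# Hypothetical schema for a "list_email" tool: allowed parameters and their bounds.
LIST_EMAIL_SCHEMA = {"limit": {"type": int, "min": 1, "max": 100}}


def validate_arguments(arguments: dict, schema: dict) -> list:
    """Return a list of validation errors; an empty list means the call may proceed."""
    errors = []
    for name, value in arguments.items():
        rule = schema.get(name)
        if rule is None:
            # Parameters the schema does not know about are rejected outright.
            errors.append(f"unrecognized parameter: {name}")
        elif isinstance(value, bool) or not isinstance(value, rule["type"]):
            # bool is a subclass of int in Python, so it is excluded explicitly.
            errors.append(f"wrong type for {name}")
        elif not rule["min"] <= value <= rule["max"]:
            errors.append(f"{name} out of range")
    return errors


def audit_record(agent: str, tool: str, arguments: dict, errors: list) -> str:
    """Build one JSON audit line covering who called what, when, and the decision."""
    return json.dumps({
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "agent": agent,
        "tool": tool,
        "arguments": arguments,
        "decision": "deny" if errors else "allow",
        "reasons": errors,
    })


# The 10,000-email request from the talk is denied and still leaves an audit entry.
request_args = {"limit": 10000}
problems = validate_arguments(request_args, LIST_EMAIL_SCHEMA)
print(audit_record("claude-desktop", "list_email", request_args, problems))
```

Both allowed and denied calls produce a record, which is what lets a security team alert on denied patterns rather than only on successful ones. In a real deployment the logged arguments may themselves need redaction.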

So I've tried to keep some backup slides here. If my MCP gateway is running and I have defined some policies, let's say for my Gmail server and for my MCP agent, which in this case was Claude, and I have defined that it can only do read and list, then if I try to execute a command with delete, my MCP gateway should block it, and I should also be able to see audit logs. I want to highlight that there are several open-source MCP security gateways available. We can drop links to a few of them. You can try experimenting with them and see some of these controls in action. As highlighted before, the MCP gateway will

act as your choke point. It will enforce policy, validate requests, and log every action. So your backend servers, your tool registries, and your data sources are all isolated and never exposed directly.
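The allow and deny behaviour described for the backup slide comes down to a default-deny lookup. The sketch below assumes a hypothetical in-memory policy table; the agent identifier `claude-desktop` and the tool names are illustrative, and a real gateway would load its policy from a version-controlled file rather than a constant.

```python
# Hypothetical policy table: agent identity -> the only tools it may call.
POLICY = {"claude-desktop": frozenset({"list_email", "read_email"})}


def authorize(agent_id: str, tool_name: str) -> bool:
    """Default deny: allow a call only if the tool is listed for a known agent."""
    return tool_name in POLICY.get(agent_id, frozenset())


# list and read are allowed; delete is blocked before it reaches the server.
for tool in ("list_email", "read_email", "delete_email"):
    verdict = "allow" if authorize("claude-desktop", tool) else "deny"
    print(f"{tool}: {verdict}")
```

Because an unknown agent maps to an empty set, an unregistered client is denied everything without needing a separate rule.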

Okay, so let's do some risk-to-control mapping here. We already covered some of it previously. For prompt injection, the gateway will help intercept malicious prompts by validating input, enforcing policies, and sanitizing parameters. For sensitive data disclosure, we will ensure that the permissions are scoped and the response is redacted so that only safe data is returned. We have seen in some real agentic solutions that we do not want to output PII to the end user, so we can have some of these redaction policies applied at the gateway layer as well. Schema validation and boundary enforcement can stop these tools from misbehaving. Then the gateway can use the tool registries so that only

approved tools can run. This is to mitigate the supply chain risks that we spoke about. RBAC and least-privilege scopes can also ensure that agents are not able to call any tools that they are not meant to. And last but not least, audit logging and alerting will give us full traceability for every request. Now, this is an actual policy file which can enforce some of the rules that we talked about. We have defined an agent, in this case the Gmail server, which is authenticated. As you can see, it is explicitly allowed to call only two tools, list email and get email,

and you can also see that we have made sure that we do not allow send, delete, or any other state-changing operations. Policy configurations like this can help us, and they act as RBAC plus schema guards. As you can see, we can first vet who is calling, then see what each agent can use, then validate the tools, and finally log every call. So this single YAML file, which is used as an example, defines who can do what with which data and how it's monitored. That's zero trust in practice. >> So this is what a real-world deployment looks like. On the left we have

the DMZ tier, which is a standard perimeter control with a load balancer, WAF, and API gateway. In the middle is the security tier, where the MCP gateway resides; it integrates with the auth service, policy engine, and audit logger to enforce zero trust at the MCP layer. On the right is our backend tier. This is where the actual MCP servers, tool registries, and data sources reside. The key principle here is that no LLM or agent talks directly to the backend. Every request passes through the MCP gateway for authentication, validation, and logging. So you have seen the problem and the solution. Now some practical guidance

for deploying this in production. For policy configuration, we recommend that you start with default deny, though your application teams may not like that: you block everything, then explicitly allow what's needed. You must define granular scopes per agent role, version control these policy configurations in Git just like you would your application code, and perform regular audits. For authentication, we are saying generate strong API keys and rotate them every 90 days so that if a key leaks, it has a limited lifetime. Integrate with your existing identity provider, maybe Okta, Azure AD, or whatever you're already using. We don't think there is a need to build another identity silo,

although we do see that in a lot of organizations. And enforce rate limiting per identity, not just global limits but per agent, so that one compromised agent cannot exhaust everyone's quota. Logging alone isn't enough. It would be good to set up alerts for anomalies. If someone in your organization tries to call tools that they are not allowed to use, that's recon. So we must deny those patterns, but at the same time we must also have the SOC monitor that kind of activity. Finally, isolation and hardening: defense in depth. Run your MCP servers in sandboxes so that if one gets compromised, it cannot affect the others. Set resource limits to prevent denial of service, and if you possibly can, red team

your MCP servers and run static code scans on them, especially the ones that you built in house. These four areas can turn your proof of concept into a production deployment. The gateway code is open source on GitHub. We'll drop a few links, but this checklist can help you get started safely. There is one more thing: performance overhead. We have noticed some latency even with the GenAI gateways that we have deployed, and there might be some latency with an MCP gateway as well. Scaling might also be an issue, but I think every organization can handle it according to its needs. >> So I'll quickly go over the key takeaways, and then we'll close the

session. MCP brings power as well as risk. Every tool is a potential target, so zero trust is essential: never trust, always verify, enforce least privilege. The gateway provides defense in depth: policy, validation, isolation, and observability with logging. You can't secure what you can't see. The solution is open source and production ready. Start building secure AI solutions today. Thank you very much, everyone, for your attention.

>> So we will be on site if anybody has any questions. Actually, is there time? Do you want to take some questions? >> Yeah, we can take them. >> Yeah, any questions? >> Do you see emerging ways to secure MCP? Do you see divergence, or is there convergence on an MCP gateway approach? >> So I think your question is, will this approach suit every kind of MCP server? >> No, I mean, do you see emerging approaches coming up out there, like ideas different from the MCP gateway? >> There are a few open-source solutions available, and we can link them here, but this is the one that we have explored, and we have been trying to deploy it in

our enterprise environment. Thank you for your question. Yes.

>> And I wonder do you think it's happen

to us tokenization?

>> Yeah, more natural. >> Yes, you're right. First, we could do this without an MCP server, but teams are building them. But I think your question is more about whether we can do this via an API gateway, right? >> Yeah. So, for instance, like in Rene's presentation. >> Yes. >> So we just take the user token and then use that, if you're able to read those, and just do that instead. >> Yes and no. An API gateway can be a possible solution for some of these. But if you notice, in an API gateway you are mostly reading the headers, whereas here we're also recommending that you read the payload and the context which

comes with it. So an MCP gateway is the recommended approach, but you're right, it can be done via an API gateway too. Thank you for your question. >> I think there was one question there. Somebody has a question there as well? Okay.

In your opinion, do you think these will become more of the norm within existing cloud architectures? I'm thinking of us, and then of companies coming up with solutions of their own kind.

So is it worth it as it is? >> So what we have noticed, at least in our organization, and ours is a large one with more than 25,000 employees, so that gives you the scale, is that we ship agentic solutions every now and then. When we started with just model endpoint usage, we developed our own in-house AI gateway; of course we deployed it on the cloud, and we used Azure for it. But you're right, it will become the norm, and we expect the same for MCP server security. At least in our company, we see these servers being spun up left and right and deployed to production, and that's why we

thought, because for some of the use cases visibility is so low, that it becomes important to have gateways like these, and we are already working on implementing one in our company. Maybe organizations would build it in-house, and with the help of AI I don't think it's that difficult today to build your own, but if you want to use open-source or vendor-provided solutions, I think you could do that. To your question of whether it would become the norm: we think it would, but then our knowledge is limited to our company, right? Thanks for your question.

>> Yes. >> So we don't want to deny. You can if you want to. >> Yeah.

>> Yeah. You can follow that approach too if that works. It depends, because in some organizations it's not easy to just have an explicit deny and then allow. So it depends on what threat model you have, and if you can do an explicit deny, I think that also works. >> Yeah.