The First Hour of Incident Response: Every Second Logs


All right. So, thanks ever so much to everyone for coming along to the talk. This is The First Hour of Incident Response: Every Second Logs, and, funnily enough, as it says on the tin, this talk is about the first hour of IR: how we can make the most of that time, how you can use some of the tools and tricks I'm going to talk about today if you're on an incident response team, and how to avoid some of the common pitfalls. So, firstly, a bit about me. My name is George Chapman. I'm a team-leading senior consultant for a company called Prism Infosec. We're based out of Cheltenham. And my work primarily is

divided into three areas. I do pen testing, your sort of standard pen testing; I do vulnerability research and red teaming as a bolt-on to that; and I also do incident response. Alongside that, I discovered a CVE in 2023, critical in nature, for the ABB ASPECT appliance, which is a perimeter router. I hold a BSc in Computing and Cyber Security from the University of Worcester. My former lecturer is right here as well, which is fantastic. And I was a London Marathon runner in April 2025, last year, so to anyone that's doing it tomorrow, best of luck. And if you have any questions at all, please feel free to ask after, but I'm

also on LinkedIn, so just put in George Chapman 1 or George Chapman and you can come and say hi; I'm happy to answer anything you might have. So, to bring it back to the core of the talk, this is going to focus on the first hour: defining the outcome, getting a single source of truth, avoiding those pitfalls, and using tooling to accelerate clarity. The reason for this is that in IR it is critical you make the most of what health services often call the golden hour, because as time goes on it's much like a snowball: the quicker you find the issue, the quicker you'll stop that snowball effect

and you will be able to minimize the damage and minimize that impact. So why is the first hour critical? If you're on my team as an incident responder, I want to know: What's happened? Where is it? How are we isolating it? How bad is it? And is it still spreading? And that doesn't mean switching off the machine. It means: What's affected? Did we find out through an incident response retainer? Did we find out what's going on in a cadence call or a bridge call? Where is it? Has the DC been compromised? Was it an external workstation? Was it an access broker? How long do we think this has been going on for? What looks to be

affected? If you delay, these are the main things that will start happening. If we don't get eyes on it quickly, adversaries move throughout the network. Lateral movement occurs. There's privilege enumeration: you'll start seeing `whoami /groups`, `whoami /priv`, that kind of horrible stuff happening. We want to know what's going on. Are they looking for something like an ERP? Are they looking for the customer relationship database? All that good bad stuff, really. And how much is the business impact going to multiply? So what I like to think about is the puzzle book theory. Does anyone remember the books where you would read a scenario and it would say turn to this

page to go down this route, or open this box? Well, decisions are sometimes made circumstantially in IR, and you could view it like that: you see a DC compromised, you see NTDS.dit get dumped, go to the end of the book; or you find credential-dumping activity, or you see ransomware spreading, things of that nature. Except in the real world, IR is not really like that. In real life, there are 400 pages and you don't know what's on them. Often you don't have the full picture because, for some reason, logging might not have been on DC01 but it was on Exchange02, or it was on Workstation01 but it wasn't on SNMP3 or

whatever it might be, or on the file servers or wherever else. You don't always have that single source of truth, and in IR the best thing to do is to get that single source of truth quickly. Everyone is asking for answers immediately as well. You're going to have people on calls who are panicking, especially if they're stakeholders, and especially if they are responsible for that breach or for that data. Some people might not know the direct value of their data, and that needs to be communicated quite quickly; we need to understand it as fast as we possibly can. Which leads into: how do you deal with a crisis generally?

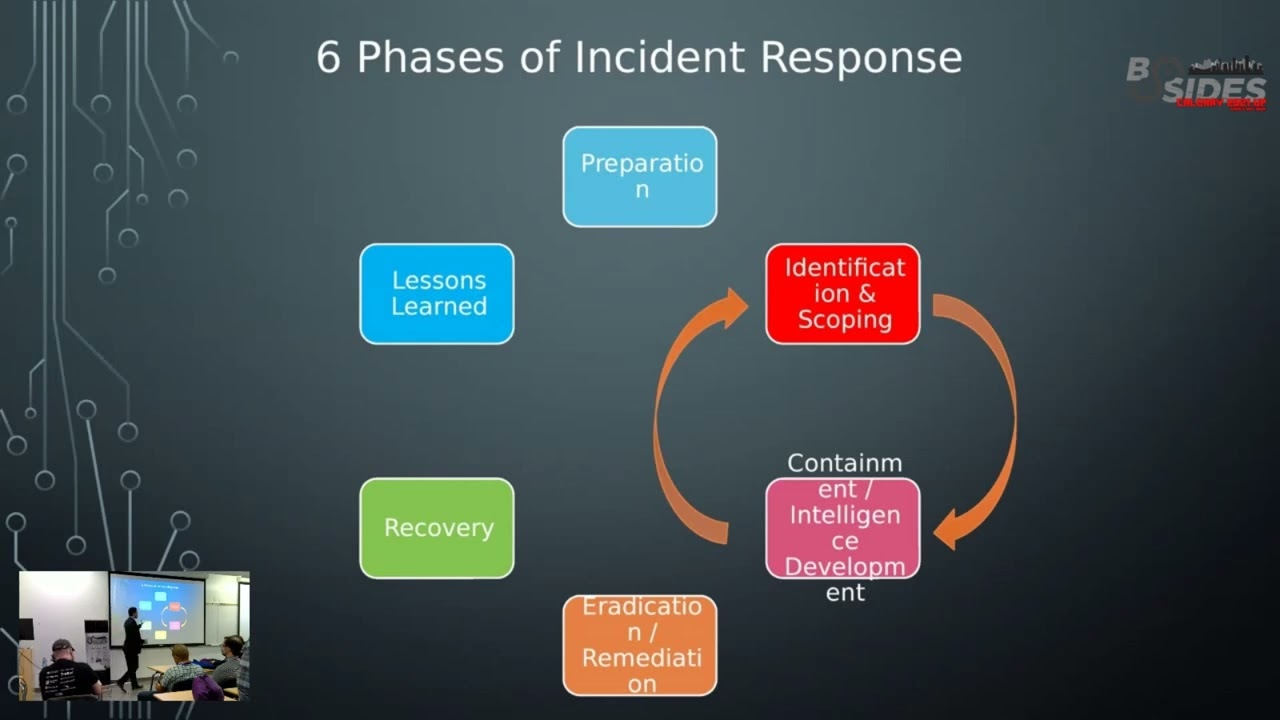

So the framework that I was taught by someone else, and have now brought over to IR, is a high-level one. I've spoken about it in another talk on the psychology of cyber. It's what aircraft pilots use: aviate, navigate, communicate. The reason this is important is that in a crisis, such as an emergency while you're flying an aircraft, you need to keep flying before you do anything else. Once you've started flying and kept flying, you need to know where you're going. And once you've done those first two things, you communicate, and then you go onwards: okay, this is where I'm going, this is what I'm going to start thinking about.

So you maintain control of the systems, you isolate the affected assets, and you stop the attacker's momentum. That's the aviate part: you keep going at whatever level you can, for as long as you can. At that point, we don't know how much business as usual is going to be affected; we know that BAU activity is going to be affected in some way, so how do we go from there? Once we've done that, we navigate: we establish the scope, identify the crown assets, and prioritize the next actions. I want to know where. Let's take an example: Workstation01 has been compromised. What can they see? Were they a local user?

Were they a local admin? If they're a local admin, did anyone ever log into that machine? Was a hash ever left on there by, say, a domain admin? That's a much worse day, because they'll start dumping it. It's about where they can go from there, and looking at that lateral movement. Finally, once I know that from the initial bridge call or from the initial logs, I will set up cadence calls. I want frequent communication back to the stakeholders and to those in that position, to keep them in the loop. They're going to want answers quickly, and it's important they get the right answers quickly, with a single source of truth and a defined escalation path.

You do not want to go down the wrong path. If anyone here has ever done a cyber exam, you'll know exactly what I mean about going down rabbit holes, compromising the wrong boxes, and reading all the Nmap output, not all of which will be correct. So how do you avoid those common pitfalls? One of the common pitfalls is "just shut it down". How many people have dealt with an IR where the team immediately shut everything down? Stop it, stop the bleeding now, as it were, and turn off everything.

Well, teams panic and power off systems, but that wipes volatile evidence. Whatever was running in memory is no longer recoverable. In-memory artifacts are lost, forensics becomes a lot harder, and it signals to the attackers that we're on to them, so they scramble. That's different to isolating a machine. And once a machine is compromised, you basically need to accept that you're probably not going to carry on with it as it is: it's already in a compromised state, so you'll restore from a backup anyway. If you can isolate it and leave it running with no network connection, that is significantly better for a forensic team to come in with write blockers and view

what's going on. Because as soon as you reboot it, whatever malware was in memory may already have been copied to disk, may be running from scheduled tasks or at startup, and may now be able to capture credentials from a new login, for example an admin logging in. That's really why we want to avoid hastily shutting things down. We want to disconnect it, or network-segment it, and effectively air-gap it as much as we can; just shutting it down is what we want to avoid. So now we go to people, process, and technology. If we're doing an incident response, one of the problems

here is that we need to know whether they're trained, whether there's an IR policy in place, and whether logging is enabled. First, the people: are they trained? Do those people know who the go-to is on the escalation path? Do they know what to do in this incident? At Prism, we offer an incident response service, and we ship out a battle card. That battle card is read by whoever is invoking IR, whoever is calling that incident response phone number, and it has the key steps: gather as much information as possible. I'm one of the incident responders, so I will pick up the call, and I have

immediate questions I need to ask. What's been affected? How did you come across this? How do you know it has happened? I need to get as much information as possible, then get onto the laptop, set up the bridge call, and start working out how to get access into their environment. Then the process: is their IR plan up to date? What were their backups like? Has it been the same person for the last 10 years driving to a data center and dumping onto tape? Has it been the same person using the same SSH keys for 10 years? And when were the backups last tested? When was their integrity verified? Have we been backing up data

that looks to be real, but when we try to restore it to a test system, the systems don't boot and the data is not there? It's almost like an Easter egg, if you will: a hollow shell of what once was. More broadly, you have people that leave the business; where does that go from there? And are playbooks available? Do we know what happens if the website goes down because it was popped using vulnerability XYZ? If we haven't got these, we have to do the best with what we've got available. Then technology: is logging enabled, is there

centralized visibility, and, going back to backups, has their integrity been verified? The other problem we've got is things like rolling logs. How many people have been deployed onto an incident response and realized quite quickly they've only got 30 days of logs, when there might be an access broker whose activity goes back 60 days? So, internal tools for responders: how do you build them? For me, AI shouldn't replace responders, but it should support them. It should accelerate the hard work that you need to do quickly. You need to be able to make decisions quickly, and AI is

great at that. Obviously you have hallucinations, and you have to be fairly careful about what you give it because of confidentiality and everything else, but it can highlight anomalies and reduce manual correlation. Now, this bit about AI is less about the actual data itself and more about the pattern of the data, and we'll come on to that in a minute. You want to minimize any leaking of confidential information while getting the most out of your time, and we'll see in a minute where this AI tool comes from. The thing I like to put here is that AI is a bit like

resources and money: it amplifies intent. If you've ever met people who are incredibly wealthy and who are great people, they will often go on and do great things. You might meet someone that's really awful but incredibly wealthy, and they do awful things. So, when used correctly, AI accelerates that use of resourcing; it makes you more of the person you are, like money does to an extent. But confidentiality is the key here, and you have to use it correctly. If you use it incorrectly, you'll amplify mistakes and you'll burn time. Especially as a manager of an incident response team, the last thing we want to see

is time lost at that stage. So I had an idea. When the early era of AI was coming out, with the first version of ChatGPT, I saw that there was a way to get it to process things and do things for me in a way that was fantastic. I could give it information about myself, or whatever it might be, and it would analyze that quite quickly, and obviously you have to keep all those confidentiality rules in place. So how could we create something that could help? I knew

that I could create a tool in Python that could parse the data, but I couldn't train it on real data, because that would be very wrong and very bad. So I set up a trial Sentinel tenant, or rather a trial 365 tenant, simulated some fake alerts, exported these, and then AI did the rest. This tool I'm going to show you today is an internal tool, but I'm hoping you can go away, understand a bit more about how it works, and implement this methodology in future tooling, even for pen testing. The key here is the separation of real data from what you know to be pattern

recognition, parsing, and speeding up that time. This tool, Responder SV, lets you rapidly take in the bridge-call intake: that initial call covering what's gone wrong, what it is, and how badly things are affected. It structures the input of affected users and systems. It diffs against a known-good baseline, meaning what we believe to be a known-good source of truth; you can't always know that with access brokers, who may have been in there for however long, depending on the sophistication of the adversary. And it handles note-taking and quick analysis, such as impossible travel or IPs flagged for abuse.
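Responder SV is an internal tool and its code isn't shown in the talk. As a rough illustration of the impossible-travel check mentioned above, here is a minimal Python sketch. It assumes sign-in records have already been parsed into user, timestamp, latitude and longitude. The `SignIn` type, the function names and the 900 km/h threshold are hypothetical choices, not the tool's actual logic.

```python
"""Sketch of an impossible-travel check over parsed sign-in records (hypothetical)."""
from dataclasses import dataclass
from datetime import datetime
from math import asin, cos, radians, sin, sqrt

EARTH_RADIUS_KM = 6371.0
MAX_PLAUSIBLE_KMH = 900.0  # assumption: roughly airliner cruising speed


@dataclass
class SignIn:
    """Hypothetical parsed sign-in: who, when, and where (decimal degrees)."""
    user: str
    time: datetime
    lat: float
    lon: float


def haversine_km(a: SignIn, b: SignIn) -> float:
    """Great-circle distance between two sign-in locations, in kilometres."""
    dlat = radians(b.lat - a.lat)
    dlon = radians(b.lon - a.lon)
    h = sin(dlat / 2) ** 2 + cos(radians(a.lat)) * cos(radians(b.lat)) * sin(dlon / 2) ** 2
    return 2 * EARTH_RADIUS_KM * asin(sqrt(h))


def impossible_travel(signins: list[SignIn]) -> list[tuple[SignIn, SignIn, float]]:
    """Return consecutive same-user sign-in pairs whose implied speed is implausible."""
    by_user: dict[str, list[SignIn]] = {}
    for s in signins:
        by_user.setdefault(s.user, []).append(s)

    flagged = []
    for events in by_user.values():
        events.sort(key=lambda s: s.time)
        for prev, curr in zip(events, events[1:]):
            hours = (curr.time - prev.time).total_seconds() / 3600
            km = haversine_km(prev, curr)
            if hours > 0:
                speed = km / hours
            else:
                # Simultaneous sign-ins from different places count as infinite speed.
                speed = float("inf") if km > 0 else 0.0
            if speed > MAX_PLAUSIBLE_KMH:
                flagged.append((prev, curr, speed))
    return flagged


if __name__ == "__main__":
    logins = [  # made-up sample data
        SignIn("alice@contoso.com", datetime(2025, 4, 1, 9, 0), 51.90, -2.08),    # Cheltenham
        SignIn("alice@contoso.com", datetime(2025, 4, 1, 10, 0), 40.71, -74.01),  # New York
        SignIn("bob@contoso.com", datetime(2025, 4, 1, 9, 0), 51.90, -2.08),      # Cheltenham
        SignIn("bob@contoso.com", datetime(2025, 4, 1, 12, 0), 51.45, -2.59),     # Bristol
    ]
    for prev, curr, kmh in impossible_travel(logins):
        print(f"{curr.user}: ~{kmh:,.0f} km/h between {prev.time} and {curr.time}")
```

Comparing only consecutive sign-ins per user keeps this linear after sorting. A real check would also need to allow for VPN egress points and inaccurate IP geolocation, which are common sources of false positives.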

So this is Responder SV, and what you can see on the screen is an incident bundle. This spins up pretty quickly as soon as I get on a call. It's all offline, or rather local to my system, and it runs on localhost as most local Python web servers will. Anyone in the room that's done a Microsoft course will know Contoso, so that's what I used for this. It creates a quick client name, and it has key contacts, affected assets, incident IDs, incident types, and known timestamps, as best as we can get them, because we can only work with the knowledge we've got. You get the primary

contacts down quickly. For example, if you're not in a retainer, you might not know any of this. You don't know what the crown assets are. You don't know who the crown users are, who can invoke IR, or which are the crown accounts: who's DA, who's enterprise admin, who is admin on all these systems. Once we have the affected users and affected hosts, plus malicious IP addresses and malicious domains if we have them, we put those all in and hit go. That creates this template. These are all just made-up names, but you have your affected DCs and affected workstations, and you have a cadence call template of

what you ask, what you deliver, and what we know so far, plus those affected users. Now remember those, because right at the bottom we also want to do what's called the Sentinel diff. I will go into Sentinel, or Splunk, or Elastic, or wherever it might be, and take a known baseline from before the breach. What do I know? Anyone that's gone through logs knows that when a command prompt is popped, you will see things like conhost and a load of events. For some users, especially developers, the command prompt is a known source of active traffic. We want to know what is normal, to an extent, and

what is abnormal. That's the key here. So we put in the known good ("known good" in inverted commas) and the known incident, and right here we diff them, because each is effectively a CSV, and the result prints out as JSON. We can see new IPs, new processes, user spikes, and incident events. What are these new IPs? They aren't the Citrix VPN; they aren't known to the business. So why aren't they known to the business? Then the user spikes: what new processes were spun off? We can see Alice Contoso here. Why did, let's say, Alice suddenly start mass-reading SharePoint? Why did she start

going off and looking at all these file servers? Why did the account start trying to probe the DC, or running `whoami /groups` and `whoami /priv`, if they've never opened a command prompt before, never run `whoami` before, and aren't a system admin? So we get this useful report, and alongside it we also have an IOC tracker. One of the things I want to know quickly is whether this IP address is known. Is it known in AbuseIPDB or Spamhaus? Are the domains known? Are the URLs known? I want to find out quickly: is this a C2 server? Now, obviously, for those that do red teaming, you know

there are ways of hiding a C2 server through redirectors and all that stuff, but I need to find out as quickly as possible, with the best use of time. And file hashes too: anything that's been injected or maliciously modified will have a different hash. Let's say TeamViewer.exe is no longer the genuine TeamViewer.exe but TeamViewer with an implant attached. I want to know whether that hash is known on the internet and whether it matches the known version of TeamViewer. I need to know this quickly. And then, when I was running all these KQLs, I

realized quite quickly that, from previous incidents, we know the common kinds of compromise and the known IOCs, but I need to be able to run these queries quickly, and I have a set of known-good queries I can build from. So what this does is build the queries for you, based on what you've noted as affected earlier. I know from this call that these users, marketing admin, sales admin, and John admin, were popped or affected in some way. Then I can see in this timeline the time generated, IP address, and location (where they were and where they went) and narrow that down quickly. Typing this, doing all these queries,

if you're trying to get a real quick lay of the land, can easily take half an hour to an hour. This is done in about 5 minutes, so it immediately cuts down that time and makes the most of that golden hour. Here we can see we had affected hosts and affected systems, and now I can start seeing: were they accessed? Was anything run on them? Was malware.exe run on DC01? Was it run on the mail failover? Was it run on the SQL server? Did someone get in and use xp_cmdshell? Did they turn that on? I want to know what these IOCs are quickly, and these queries, when they're

pre-built, let me find that data quickly so I can tell the customer how bad a day it's going to be. So the tooling works well: it saves time, it structures the logs, and it structures correlation. It lets me quickly get that knowledge from the team over to the client. Noise is instantly reduced. I'm not chasing potential rabbit holes. I'm not looking at why the command prompt was run on these six systems at these random times when, actually, the command prompt runs whenever this internal tool runs, because the tool spins off that process. Anyone that's looked at the command prompt internally will know how much traffic conhost generates in Sentinel. So,

how can we cut that down? How do we separate what's known good, such as a system running something periodically over a long period, from the actual hit point? When did they get in? What else was run? What differs? That's the main part: diffing known good from known evil. So, to reflect on that, it's a matter of time. Now, I don't want to be the AI boogeyman and say that AI is going to hack systems and do all these horrible things, because I don't think we're at a point yet where AI is that sentient and able to do those things. I think it can help both good and bad. I think it can help you create

tradecraft, if it's structured in a certain way, say if you're jailbreaking it. I think it can do all those things. But at some point, because of this race, an incident is probably going to happen, depending on how you view it. Is it just someone trying to log in to your WordPress website, or have they dumped NTDS and we're right at the other end of it, with them exposing your domain trust relationships? That first hour is not going to give you perfect information. Today I've shown you what you might be able to find quite quickly, but you will find people who, I mean, it's

still the case now that, for Sentinel for example, logging is extremely expensive, and some businesses don't want to pay for it. That's understandable, but the value is in the logging, and I believe there is work behind the scenes to make it more open and more accepted, because with logging we can find out what went wrong a lot more quickly. Structure beats panic. Having some structure in those times is really important, and the tool helps bring structure to information you might not otherwise have, for example if they don't have an incident response retainer, if

they've just rung you and they've not invested as much in cyber security. Maybe it's managed by their MSP, but only very lightly, or whatever it might be. This will help you help customers who may not have a more mature approach to cyber security in their business, and there are companies out there that don't. The main thing here, which I talk about a lot, is a no-blame culture. Don't blame them for not having it. Help them get to that point. Help them understand the risk, the emergency, and why this is important. Combat this through clear policy and process. So at Prism, obviously,

GRC is something we offer. So we want to help people know: is there a DR plan? Is there an IR plan? How do you deal with BYOD? How do you deal with people coming in through the door, new starters, and the retention of data especially, and how is that handled internally? Is there a challenge culture? If someone walks in with a yellow lanyard but you don't actually know who they are, are people walking up to that person? It's not just the digital threat; it's the physical threat as well. It's all of this. And there's a great quote I like, which is: history

doesn't repeat, but it often rhymes. If you have seen a similar attack before, you can't always follow the same script, but if you're able to see the adversary behaving in a certain way, you may be able to recognize that pattern going onwards, once you know what their end goal is. Are they looking for DAs? If they're able to enumerate those DAs and move forward, is that where they're going? Are they looking for the ERP? You can start seeing how they're mapping their way through the domain and what they can find out. And tooling can help reduce that friction and accelerate that

clarity. Thank you.
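The talk describes a pre-built query step that turns the users and hosts noted as affected on the bridge call into ready-to-run KQL. A rough Python sketch of that step follows. The `SigninLogs` and `SecurityEvent` tables are standard Microsoft Sentinel tables, and event ID 4688 is Windows process creation. The helper names, the selected columns, the sample accounts and hosts, and the 30-day default lookback are all assumptions for illustration, not the speaker's actual queries.

```python
"""Sketch: turn bridge-call notes into pre-built KQL (hypothetical helpers)."""

DEFAULT_LOOKBACK_DAYS = 30  # assumption: roughly match the workspace's log retention


def kql_string_list(values: list[str]) -> str:
    """Render Python strings as a parenthesised list of escaped KQL string literals."""
    quoted = []
    for value in values:
        escaped = value.replace("\\", "\\\\").replace('"', '\\"')
        quoted.append(f'"{escaped}"')
    return "(" + ", ".join(quoted) + ")"


def build_queries(
    users: list[str], hosts: list[str], lookback_days: int = DEFAULT_LOOKBACK_DAYS
) -> dict[str, str]:
    """Return named KQL queries scoped to the users and hosts flagged as affected."""
    window = f"ago({lookback_days}d)"
    return {
        # Where and when did each affected account sign in, and from which IP?
        "user_signins": "\n".join([
            "SigninLogs",
            f"| where TimeGenerated > {window}",
            f"| where UserPrincipalName in~ {kql_string_list(users)}",
            "| project TimeGenerated, UserPrincipalName, IPAddress, Location, AppDisplayName, ResultType",
            "| order by TimeGenerated asc",
        ]),
        # Which processes were created on the affected hosts (Windows event 4688)?
        "host_process_creation": "\n".join([
            "SecurityEvent",
            f"| where TimeGenerated > {window}",
            "| where EventID == 4688",
            f"| where Computer has_any {kql_string_list(hosts)}",
            "| project TimeGenerated, Computer, Account, NewProcessName, CommandLine",
            "| order by TimeGenerated asc",
        ]),
    }


if __name__ == "__main__":
    queries = build_queries(  # hypothetical accounts and hosts from a bridge call
        users=["marketing.admin@contoso.com", "sales.admin@contoso.com", "john.admin@contoso.com"],
        hosts=["DC01", "MAIL01", "SQL01"],
    )
    for name, query in queries.items():
        print(f"// {name}\n{query}\n")
```

Event 4688 only appears if process-creation auditing was enabled, and command lines only if the extra command-line policy was also on. That ties back to the "is logging enabled?" question. Because `has_any` matches whole terms, a short hostname such as DC01 should also match a fully qualified `Computer` value like DC01.contoso.com.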

>> Any questions? Yeah, go for it.

>> George, I have a question. So your tooling, Responder SV, seems to do quite a lot of comparative analysis of response data. How exactly are you leveraging AI in that, or is it more...

>> So AI doesn't touch the data. AI helped build the tool, but it built the tool based on known fake Sentinel output. I set up my own tenant, which I controlled, created those logs, and created that known-bad baseline, so I could work out what those logs look like and parse them quickly. What we didn't want to do is train it on...

>> So you used AI to build the tool, rather than AI in the tool.

>> Exactly, yes. AI helped me look through hundreds of fake generated Sentinel logs and was then able to build around that. Obviously that's a very small data set, but it allows me to parse that logging significantly more quickly than if I had done it manually.

>> Okay. Thank you.

>> No worries. Anyone else? Cool. Thank you very much.
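The comparative analysis the question refers to means diffing a "known good" baseline export against an incident export. It can be sketched in a few lines of Python. This is a minimal illustration, not Responder SV's implementation. It assumes both exports are CSVs with hypothetical `Account`, `Computer`, `IPAddress` and `ProcessName` columns, covering windows of similar length. The 3x spike factor is an arbitrary threshold.

```python
"""Sketch of a baseline-vs-incident log diff (hypothetical column names)."""
import csv
import io
import json
from collections import Counter
from typing import TextIO

SPIKE_FACTOR = 3.0  # assumption: flag accounts with >= 3x their baseline event count


def load_rows(handle: TextIO) -> list[dict[str, str]]:
    """Read an exported CSV into a list of row dictionaries."""
    return list(csv.DictReader(handle))


def diff_logs(baseline: list[dict[str, str]], incident: list[dict[str, str]]) -> dict:
    """Report what appears in the incident window but not in the 'known good' baseline."""
    known_ips = {row["IPAddress"] for row in baseline}
    known_procs = {(row["Computer"], row["ProcessName"]) for row in baseline}
    base_counts = Counter(row["Account"] for row in baseline)
    inc_counts = Counter(row["Account"] for row in incident)

    spikes = {}
    for account, count in inc_counts.items():
        before = base_counts.get(account, 0)
        # An account never seen in the baseline is treated as a spike outright.
        if before == 0 or count >= SPIKE_FACTOR * before:
            spikes[account] = (before, count)

    return {
        "new_ips": sorted({row["IPAddress"] for row in incident} - known_ips),
        "new_processes": sorted(
            {(row["Computer"], row["ProcessName"]) for row in incident} - known_procs
        ),
        "user_spikes": spikes,
    }


if __name__ == "__main__":
    # Toy exports standing in for Sentinel/Splunk/Elastic CSVs (entirely made up).
    baseline_csv = io.StringIO(
        "Account,Computer,IPAddress,ProcessName\n"
        "alice,WS01,10.0.0.5,outlook.exe\n"
        "bob,WS02,10.0.0.6,excel.exe\n"
    )
    incident_csv = io.StringIO(
        "Account,Computer,IPAddress,ProcessName\n"
        "alice,WS01,10.0.0.5,outlook.exe\n"
        "alice,WS01,203.0.113.7,whoami.exe\n"
        "alice,DC01,203.0.113.7,powershell.exe\n"
        "bob,WS02,10.0.0.6,excel.exe\n"
    )
    report = diff_logs(load_rows(baseline_csv), load_rows(incident_csv))
    print(json.dumps(report, indent=2))
```

Set differences surface anything new, such as the conhost noise from a known internal tool that already appears in the baseline. Per-account counts catch the "why is Alice suddenly doing this?" spikes.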