Continuous Threat Modelling Using Large Language Models - Gurunatha Reddy G & Pranay Sahith Bejgum

Everyone, first of all, thank you for being here. I know what might be going on in your mind: another AI trend that we don't want pushed into our area, right? So firstly, let's embrace it: AI is not going anywhere, AI is going to stay. Instead of pushing it away, I feel that we as a security community should embrace it and see where we can use AI. Rather than just throwing random stuff at it and asking it to do an entire security engineer's work, which I'm sure some marketing material suggests, if you give it precise instructions and a precise task to do, I'm sure that we can achieve

results, and that's what we're going to talk about. We will be talking about continuous threat modeling. As a security community, we face a lot of challenges in keeping our threat models up to date and relevant to the latest changes. So how do we achieve continuous threat modeling using large language models? That's why, instead of saying AI, I specifically said LLM: it might sound like a different topic, but we will be using LLMs. So who are we? We are a duo of DevOps engineers working at Glass Solutions, and we are passionate about security, AI, and any other new ideas. Before we start talking about uses

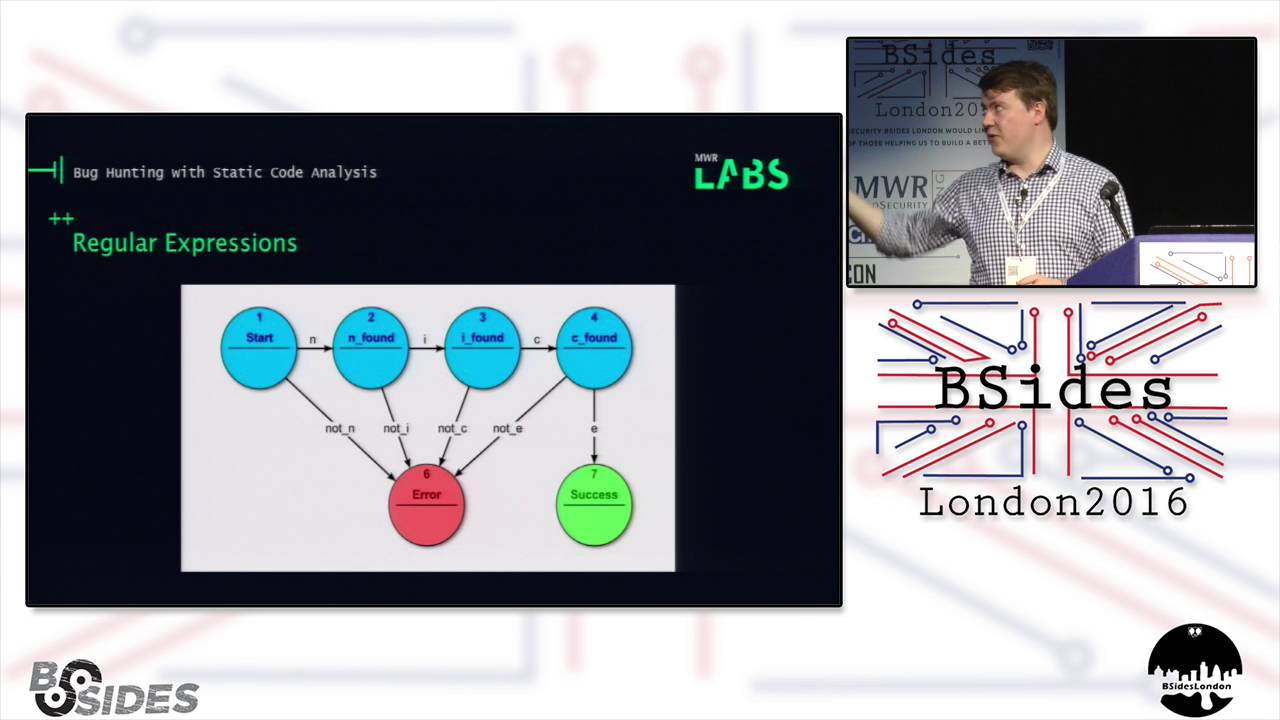

of LLMs in threat modeling, let's first understand what threat modeling is. At its core, threat modeling is a structured approach to identify threats, assess them, propose mitigations, and act on remediation. Put simply, the whole of threat modeling asks: what can go wrong? And how do you do it? First you understand the potential threats, then you devise countermeasures, and then you assess those measures. There are certain key frameworks you can use to threat model, like STRIDE, PASTA, LINDDUN, etc., and each has its own unique approach. So when we think of any threat modeling, how do we start? We start with four

basic questions. This is an excellent book; if you are interested in threat modeling, you should read it. We start by asking four questions: what are we building, what can go wrong, what should we do about the things that can go wrong, and finally, how do we ensure that whatever we have implemented is doing a decent job? Let's understand this with an example. What are we building? A login page for an e-commerce application. What can go wrong? The login page can be bypassed, someone can brute-force the credentials, or someone can perform injection attacks. And now, what are we going to do about it?

We're going to validate the input, implement account lockouts, and implement a lot of other security controls. And how do we ensure it works? By doing, let's say, security reviews, code reviews, pen testing, all that stuff. So this is how we approach generic threat modeling. Now let's look at traditional threat modeling. When I say traditional, I mean the current state: how is this handled by a security team today? It pretty much flows this way: first the requirements are discussed, then a design is created, then a meeting is set up with the security team in which you discuss the threat model, and then there are a lot of

inputs from the security team. You come up with countermeasures, which are integrated into the design, and then it goes into the development phase, where things are built. Now, if you look closely, there's a challenge here: it's a one-time process. It is performed only once, just after design, at the beginning of the SDLC. During implementation there can be a change in the design, or a deviation from it, which is not accounted for in the threat model, because the threat model only looked at the design and not at the implementation. And there is no continuous feedback loop between developers and these potential security threats. So threat modeling is away

from the development phase, the phase where development actually happens. In some cases, the threats that were discussed in the design phase might not even arise during implementation. So what are we going to do? We want to bring threat modeling closer to the SDLC. When we say closer to the SDLC, we mean closer to where the work happens: we want to do the threat model, and act on it, close to the development teams. The closest place to the development teams is the code, and the second is work items, which is where you have a lot of information about what the implementation looks like. So what

we are proposing is a new field in the user story that captures the threat model specific to that particular user story. Instead of staying at a high level, we drill down and look at the threat model on an individual user story basis. This way we provide a continuous feedback loop. But there's a problem with that: the capacity and time of security teams is very limited. A member of the security team cannot be in each and every user story and come up with threats. So that's where we bring in the LLM. Now I would like to invite my co-speaker Pranay to take over

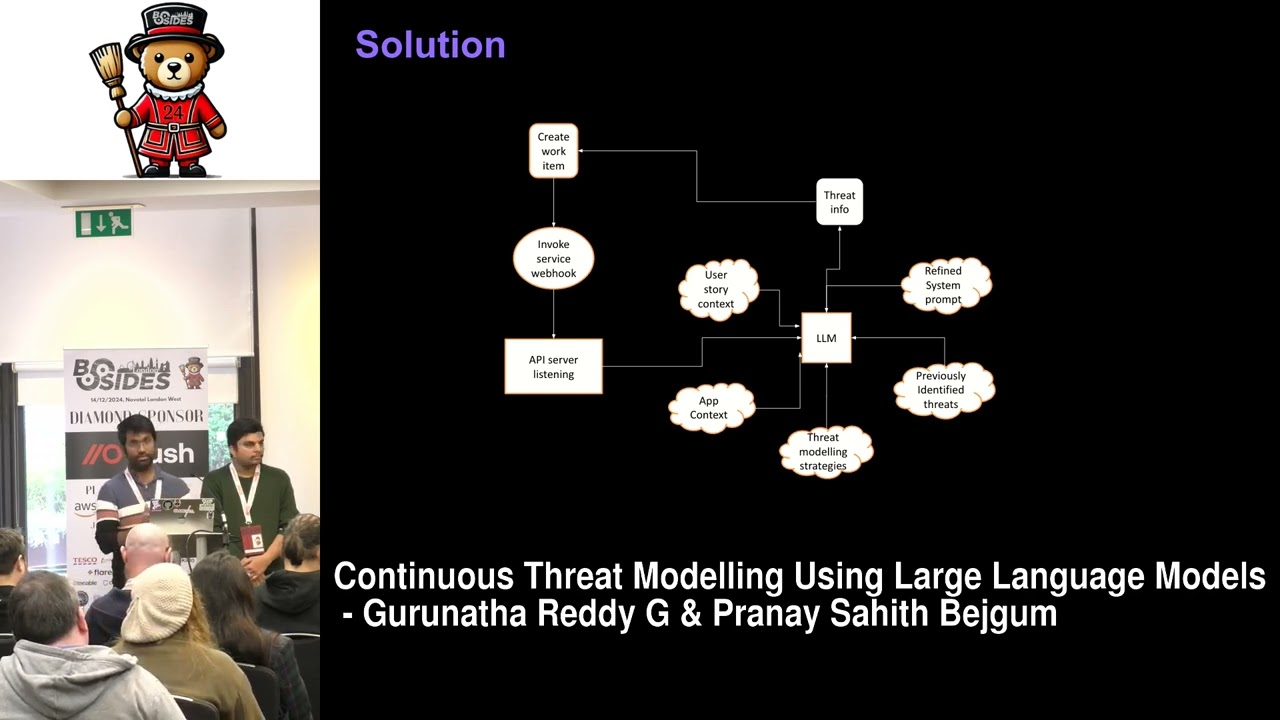

this. Thanks, Gurunath. So far we have seen the challenges in traditional threat modeling, so now let's see how LLMs can address these challenges. To begin with, the idea is that every time a user story is created, we send the content of the user story to the LLM, let the LLM do the threat modeling, and update the user story with the LLM's output. Let's see how that is implemented. The first step: typically, when product managers or product owners create a user story, they enter details in the user story, so things

like what the developer needs to do as part of the story and what is expected as the output of the story, and that goes into the description, acceptance criteria, and things like that. When that work item is created, we need to send a notification to a web API server that we developed, which is listening for events. That event could arrive in the form of a webhook. The API server accepts the API call, and the call body contains, let's say, JSON with the content of the user story: it can contain the title, the description of the user

story, the project it belongs to, and the parent feature it belongs to. All these details are then parsed by the API server, so now we have the content of the user story and can send that information to the LLM for threat modeling. So that's good, but the LLM needs to know more about the user story: which app or which product does it belong to? Is it an e-commerce application, a banking app, or something else? So it

needs app context so that it can do the threat modeling better. Based on the project name, the feature, or the epic name, we can get the app context and send it to the LLM as another input. Along with that, the LLM works best when we tell it exactly what we need out of it, so we can specify the threat modeling strategy we want the LLM to use; there are a variety of threat modeling strategies. And sometimes the user story may contain existing threat modeling output, so the previously

identified threats can be present on the user story. If you give those as an input to the LLM, it works even better, because it knows the variety of threats that are possible in that kind of user story. So we send the previously identified threats as well. When we combine all this information with a refined system prompt and give it to the LLM, it gives its output in the form of possible threats, and that threat information is then updated in the work item directly. So as soon as the work item is created by

someone, everything happens behind the scenes and the possible threats are added to the user story. That creates a continuous feedback loop, and this happens for every user story. Along with that, we store the content of the threats in a database as well. This is useful for building up the threats identified over time, and we can use those existing threats in future iterations, when the same work item is processed multiple times. Okay, now that we understand the solution, let's see it in action. Let's assume that a startup company wants to create an

application called SpendWise; it's a typical expense management tool. We are creating a user story for it, which is basically about creating reports and uploading invoices; this one is to attach receipts. We enter the title, enter the description, and click the save button. As soon as we do that, the webhook API is called and a request is sent. Behind the scenes, the server talks to the LLM, the LLM gives its response, and the AI security checklist is updated automatically, as you can see there. Okay, let me pause here. So for

uploading the files or invoices to the expense report, it gave three items in the security checklist. One is insecure file uploads: a person could upload a malicious file, so that needs to be addressed and the file should be sanitized. Broken access control is another issue: one person should not be able to upload, overwrite, or access another person's invoices, so that needs to be addressed. And the third is data leakage: monitoring and logging should be set up correctly. All of these are given by the LLM, and the developers can then easily address those issues in the

implementation phase. Moving on: this is the basic concept, and it has a lot of potential, so here are a few of the ways we can extend it. Let's go through them. One way to improve the results from the LLM is to give it as much relevant information as possible. One kind is application context: product documentation, which can be in the form of design sessions or transcripts of design sessions, video tutorials, and company information. If we give more and more relevant information, the LLM can give better output, and

another way is prompt refinement: we can fine-tune the prompts to our needs and state in them exactly what we need from the LLM. Another way is to use specialized cybersecurity models rather than generic LLMs. Another way is fine-tuning the models: this can be company specific, and the model can be trained with company data, security data, and things like that. And finally, once we have the threats from the LLM in the user stories, those user stories can be further refined by the security teams and the

development teams during the refinement sessions. Okay, moving on. This is all good, it's a great idea, but it also has some limitations, so let's talk about them. The first one is not really a limitation: the idea is not to replace traditional threat modeling but to augment the security teams. The main limitation is the model being a black box. LLMs are developed by different companies, and we don't really know how the LLMs work or how a given output is produced by the LLM. So that's

one. Another is the model being non-deterministic: for example, every time a given input is provided, the model might not give the same output. Data privacy is another concern: the user stories may contain sensitive information about the product, clients, and things like that, so we need to keep that in mind when using cloud-based LLMs. One way to mitigate that is to use self-hosted LLMs. Because of these reasons, teams might not have full confidence in these AI systems, as they are still evolving, so that's something to note. Also, most of the time the LLM can give a variety of threats based on

theoretical knowledge, so we need to prioritize the practical ones over the theoretical ones. And finally, when LLMs are integrated with project management tools, the cost scales with usage, so depending on the company size, the price can go up. These are the limitations, and that's about it. These are some links if you want to read further. Thank you. Finally, thanks to Grant for being a mentor, for reviewing this multiple times, and for providing his inputs. Thanks, Grant. And finally, BSides London, if I may take another 30 seconds. So I

was at BSides London last year as a participant. I saw the environment and really liked it, and I thought that I should be part of it. So I signed up as a volunteer, and then I volunteered at BSides Leeds. I should sincerely thank BSides for being such a warm, welcoming community. Even just before starting this talk, I was texting my previous mentor, and he was like, "You can, you are going to do that." So if you get a chance, try to be involved with the BSides community. A big thank you to the entire BSides community and the crew there. Thank you, thanks everyone.
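
The pipeline described in the talk (a webhook delivers the user story as JSON, the server adds app context, a threat modeling strategy, and previously identified threats, and everything is combined with a system prompt) could be sketched roughly as follows. This is a minimal sketch under stated assumptions, not the speakers' implementation: the payload field names (`title`, `description`, `project`, `feature`), the `APP_CONTEXT` table, the `SYSTEM_PROMPT` wording, and the `build_messages` function are all hypothetical, and the actual call to an LLM is deliberately left out.

```python
import json

# Hypothetical app-context lookup keyed by project name (an assumption;
# the talk only says context is derived from the project, feature, or epic).
APP_CONTEXT = {
    "SpendWise": "Expense management tool: users create expense reports "
                 "and attach receipts or invoices.",
}

# Hypothetical system prompt; the talk mentions a refined system prompt
# but does not show its wording.
SYSTEM_PROMPT = (
    "You are assisting a security team with threat modeling. "
    "Given a user story, list concrete threats and one mitigation for each."
)


def build_messages(event_body, strategy="STRIDE", previous_threats=None):
    """Turn a webhook body (JSON text) into chat-style messages for an LLM."""
    story = json.loads(event_body)
    context = APP_CONTEXT.get(
        story.get("project", ""), "No application context available."
    )
    previous = previous_threats or []
    user_prompt = "\n".join([
        f"Application context: {context}",
        f"Threat modeling strategy: {strategy}",
        f"Parent feature: {story.get('feature', 'n/a')}",
        f"User story title: {story['title']}",
        f"User story description: {story['description']}",
        "Previously identified threats: "
        + ("; ".join(previous) if previous else "none"),
    ])
    return [
        {"role": "system", "content": SYSTEM_PROMPT},
        {"role": "user", "content": user_prompt},
    ]


if __name__ == "__main__":
    # Example event resembling the SpendWise demo story from the talk.
    body = json.dumps({
        "project": "SpendWise",
        "feature": "Expense reports",
        "title": "Attach receipts",
        "description": "Users can upload invoice files to an expense report.",
    })
    for message in build_messages(body, previous_threats=["Insecure file uploads"]):
        print(message["role"], "->", message["content"])
```

The returned list follows the common system/user chat message layout, so it could be handed to whichever hosted or self-hosted model a team uses, and the model's answer written back to the work item.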

Thank you first for the talk, really interesting topic. I just wondered if you'd given any thought to using the LLM to create the code in the first place, so you have a full-stack approach and you're actually qualifying the code before it's coded, in essence, rather than reactively assuming the vulnerabilities in the code from a human perspective. And also, within that, have you given any thought to federated learning, in terms of ensuring the consistency of the language model's training data? Yeah. Firstly, we believe that an LLM cannot just be let loose to write code on its own, so we feel that there should be a

security engineer or developer in the middle. And yes, as you mentioned, an LLM can write code based on the user story and can also review the code that is present. But that comes in the implementation phase, and what we want to address is making sure that developers are aware of these risks, so that they can implement the right controls in the code. So yes, developers can use these LLMs to address these security threats, but that comes in a different phase; with this we want to increase the awareness of developers. Thank you. I'm sure we are short on time, but we are here; if you have

any questions, we would love to discuss. Thank you.
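
The point made in the talk about storing identified threats in a database and reusing them when the same work item is processed again could look something like the sketch below. It uses Python's built-in `sqlite3` module; the table layout and the function names `open_store`, `save_threats`, and `previous_threats` are hypothetical, since the talk does not say which database or schema was used.

```python
import sqlite3


def open_store(path=":memory:"):
    """Open (or create) a small threat history store. Schema is an assumption."""
    conn = sqlite3.connect(path)
    conn.execute(
        "CREATE TABLE IF NOT EXISTS threats ("
        " work_item_id TEXT NOT NULL,"
        " threat TEXT NOT NULL,"
        " UNIQUE (work_item_id, threat))"
    )
    return conn


def save_threats(conn, work_item_id, threats):
    """Record threats for a work item, skipping blanks and exact duplicates."""
    rows = [(work_item_id, t.strip()) for t in threats if t.strip()]
    with conn:  # commits on success, rolls back on error
        conn.executemany(
            "INSERT OR IGNORE INTO threats (work_item_id, threat) VALUES (?, ?)",
            rows,
        )


def previous_threats(conn, work_item_id):
    """Return threats already recorded for a work item, oldest first."""
    cursor = conn.execute(
        "SELECT threat FROM threats WHERE work_item_id = ? ORDER BY rowid",
        (work_item_id,),
    )
    return [row[0] for row in cursor]


if __name__ == "__main__":
    store = open_store()
    # First pass over the work item.
    save_threats(store, "US-1", ["Insecure file uploads", "Broken access control"])
    # Second pass: the repeated threat is ignored, the new one is added.
    save_threats(store, "US-1", ["Broken access control", "Data leakage"])
    print(previous_threats(store, "US-1"))
```

Because LLM output is non-deterministic, exact-match deduplication like this only catches identical wording; grouping differently phrased versions of the same threat would need extra normalization or human review.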