How adversarial noise protects my selfies from the AI deepfake dance TikTok trend | Tania Sadhani

All right everybody, welcome back from the afternoon break. For this afternoon we have Tania, who is currently an AI security researcher with Mileva Security Labs, working on investigating unique vulnerabilities in machine learning systems. She's going to do a talk on, what did we say it was, how adversarial noise protects selfies from the AI deepfake dance TikTok trend, which sounds very interesting because obviously this is a very common thing, with everyone loving social media. So without further ado, I'll let you take it

away.

Okay, hi guys, welcome to my talk. I want to quickly introduce myself. My name is Tania, and I'm currently an AI security researcher at Mileva Security Labs. My academic background is in computer science, but before I became an AI security researcher I worked several years in the government, in both technical roles as a data scientist and policy and strategy roles as a policy officer. That brings me here with you guys today, talking about AI systems and AI security and what that is. Before I get started, I'd like to play a little game: one of these things is not like the others, and by the end of this talk

not only will you be able to tell which one is different to the others, you'll also know why the difference matters, as we are all users of some sort of AI technology. Keep that in the back of your mind. Today's agenda: one, I'm going to start with setting the problem. I want to share some trends we've been seeing in deepfake misuse, because I think that's something we all know is a problem but maybe underestimate how big of a problem it is. Two, I'm going to go into some ideas in AI security: what AI security is, where it came from, and

how we can use it today to prevent deepfake misuse. Three, I want to share a bit about the work I'm doing at the moment, which is applying an idea from AI security called adversarial noise to prevent non-consensual deepfakes. And then four, I want to end on a fun example, using the TikTok dance filter, to talk through all the ideas we explored. So let's get started with the problem. What really motivated me to start this project was all the news around the non-consensual use of deepfake technologies. I'm sure you guys would have heard the news. This is the first one I screenshotted when

I Googled it: in Australia there were problems with deepfake technologies being misused in schools. We're seeing more and more of these kinds of apps that enable you to create inappropriate deepfakes. I did a review of the research out there, and there's a report I came across called My Image My Choice; I recommend you have a look at it. They found that 80% of the deepfake abuse apps and services launched in the last [unclear] months. This is an issue that is becoming bigger and not going away. I recommend having a look at their resources. They have a database that lists over 260 apps and services

found on the clear web that enable deepfake misuse, and that's just on the clear web; I'm sure there are more that people can access. An example I have on the screen there is a Telegram bot, I think, that lets you upload an image and will then generate a nude from that image. The reason I have it up there is that within the first three weeks of its launch, I think the number is there, it had over six, oh, thousand or million; it just had a lot of people using it. So unfortunately it's a problem that's sticking around. And that's just talking about apps and services people have deployed

and provided. We can also find loads of, unfortunately, open-source models that do the same thing. Actually, can I just get a show of hands: who in this room would consider themselves a data scientist? Yeah. So Hugging Face is a repository where data scientists upload their models, their AI artifacts, that other people can then use. I didn't expect it to be so explicitly shared there that these models can be used for not-safe-for-work things, which is really, surprising is the wrong word, but really sad and disappointing. So I guess I don't even have to

approach a generative AI specialist; I myself can go get these models and generate not-safe-for-work content. So how are we addressing this deepfake misuse? There are several avenues that we're seeing the government and industry pursue. We've got people working on watermarking, so putting hidden watermarks, I guess is the best word for it, on our generated images so that social media firms know when something's been AI generated. We've got work in detection, so being able to detect whether content is AI generated. We have a lot of good policy, legislative and educational

solutions coming out. From talking to people who are working in the eSafety Commissioner's office and the privacy office, it seems like the focus is on a combination of watermarking and detection at the stage of the social media provider, so they can then not show content that is inappropriate. This is all really cool, but a problem I noticed was that these are all remedial actions. The damage is already done; it's a question of whether you can prevent it from happening again, or limit the damage of that instance. There's a slightly less explored field called protective shields that I want to talk to you guys about

today. With watermarking and detection, the content's already been made: can we detect it, and can we take it down as appropriate? Protective shields try to prevent content from being generated in the first place. That's what I want to talk to you guys about, because I think it's an important gap to fill: rather than can we address the problem, can we prevent it from happening in the first place? To talk about this, I wanted to apply it to a fun example. So on today's agenda, we're going to be defending against TikTok dance filters with adversarial noise. Can we have a nod if

you guys know what I'm talking about just from these still images? Yeah. So that was a really fun trend, I don't know if the video will play, it's not important that it does, where people would take a photo of their friends and then make them dance to a song using AI, which is super fun and super cool. It made for really fun jokes. But the setup we're going to have today is: can I post photos to TikTok or social media that my friends can't use to make me dance to TikTok sounds? So yeah, that's the

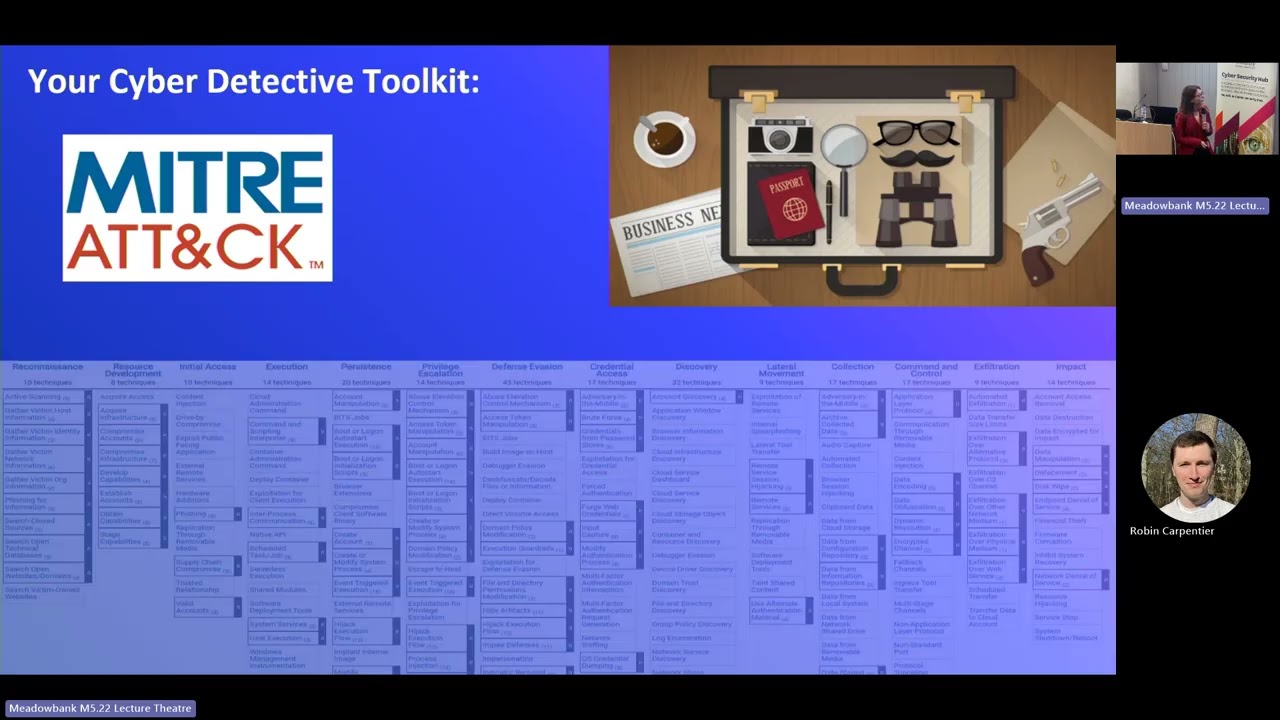

problem we're going to use to motivate our talk today. Oh yeah, you get to see a bit of the dance. I don't know if anyone can relate to having to handwrite code; I did, it's really sad. So, what is AI security? It's a term that's sometimes used differently by different people, but in alignment with how the American, British and Australian governments use it, AI security is the practice of securing our AI systems from threats. AI systems are software systems by design, so we have to consider the standard cyber security issues that these are vulnerable to, but in addition to that there are AI

specific threats that take advantage of weaknesses unique to AI components. As a data scientist, in my past I'd actually be trying to prevent these weaknesses from causing wrong behaviors, but it's really funny now: with my AI security hat on, we're going to try and exploit those weaknesses to get the behaviors we want. These AI-specific threats can induce three general failure modes: they can disrupt the system from working, they can deceive it to get a specific behavior or output that we want, and they can disclose private information. A paper I released this year looked at academia, categorizing

all the attacks we've been seeing developed into these three general impacts. The idea here is that adding AI to a system increases its exposure to other types of attacks. This might seem trivial, since adding any component to any system increases its attack surface, but I think this is something that both data scientists and cyber security professionals underestimate, mainly for two reasons. One, it's easy to overlook the extra dependencies that can be used to influence an AI system. Sometimes, as software engineers working on an AI system, we see the model as an API endpoint, or maybe just

the model weights as they already exist. But behind that we have the data that it was trained on and, if we're taking open-source models, the model weights and the machine learning libraries that are used to run these models. All those things can be influenced to then influence the output of our models at the end. The second thing is the informational gap we have around the risks posed by AI-specific weaknesses. It's a really good thing that recently we've seen more awareness around AI security at the individual level as this field gets more mature, but we still see

organizations having to play catch-up. The example I have there is, I don't know if anyone's heard of Tay, the Tay bot from Microsoft. It was a generative AI bot that was on Twitter, and within 24 hours of the bot being online, people on the internet, who are cheeky people, were able to manipulate it to do the wrong thing and say the wrong thing. It's a really funny example, but it highlights the gaps we have in being able to handle AI security risks, which we have to work towards getting addressed. So I've mentioned that there are AI-specific attacks; I just wanted

to show some examples of other ones. They can render services useless; we can use AI-specific attacks to commit fraud, or even steal intellectual property at a fraction of the cost. For the keen-eyed viewers, this aligns with the three types of impacts I talked about before: being able to disrupt services, deceive them, and disclose private information. If anyone's interested in how these specific ones happened, feel free to come talk to me afterwards; I love talking about AI security. But yes, this falls back again on the fact that we can take advantage of inherent properties of AI models to get the outcomes we

want. So the motivating hypothesis for this project was: I wonder if we can use these ideas from AI security to defend against non-consensual deepfake misuse. As a brief overview, how do deepfakes work? There are five stages to a deepfake system. First you have your media, and you extract the faces: you identify where they are and you crop them. Once you've cropped them, you can do face merging, and then stitch the merged face back onto the original media. I've highlighted in blue which steps involve machine learning, because that's what our AI-specific

attacks can target. Today I'll be focusing on the third one, face merging, because I think that's where more of the complicated AI happens, so there's more of a chance that we can manipulate it. Specifically, the face merging technology I'll be looking at is called inswapper. Why am I looking at it? When I Googled deepfakes, it was one of the first things that came up. It's really easy to use and find; a lot of the tools I found online use inswapper as the back end. It performs really well out of the box, so I didn't have to do any additional training. I didn't even

have to do additional training per person. Some models require a few minutes of your target's face to be able to do the face swapping, but in this case I only needed one photo of my target and one photo of the body I wanted to swap them onto to do the deepfake. It's quick and easy to use, so I was able to run it locally on my computer pretty well, and it produced quite high quality results out of the box. Some fun side lore around this model: we don't actually know much about it at all, because it was taken down recently due to ethical concerns. I think the people who were mainly

supporting this model and the frameworks around it were aware that their models were being used for unethical things, so they did take it down. But copies of the model still exist on the internet, and the funny thing is that people aren't able to verify which copies are the original copies, which I found super interesting. But that's just side lore. I started my project by unpacking this model, using some tools that we can find online that let you unpack model binaries. Based on the architecture of the model, my hypothesis is that it's a StyleGAN, for

anyone who has read into machine learning things, which is important later on because it means that we can start narrowing down the types of AI attacks that we can apply to this model. Coming back to the project aim: it is to use adversarial noise to defend our photos against deepfake tech. Adversarial noise is a technique in the AI security world that's used to disrupt and deceive models. You have your input image, and you make specific, small changes to that input image to greatly change the output of the model. This occurs because models overlearn on specific parts of your input image that

we as humans don't. In summary, we're repurposing something that's traditionally been an AI security attack into a defense against non-consensual generative AI by applying it to our own images, so that if people use machine learning on our images, those machine learning models won't be able to, quote unquote, see our images. I started with a general overview of the tools that exist. I want this tool to be accessible to the everyday person, and there aren't many that are quite fit for purpose. All the tools that I could find were created for slightly different purposes: some existed to protect

artists' art from being scraped on the internet; some existed to make your images evade, what's it called, face ID; but that's not quite what we're looking for, which is to prevent their use against deepfake technologies. They also target very specific types of AI, and we don't know if they would transfer to many types of AI. So I guess that's the point today: I wanted to test if they would transfer to different types of AI. Here are some examples of images with the adversarial noise added. We have Fawkes. Fawkes's main purpose was so that if your social media images were

taken off the internet, they couldn't be matched back to you. We have PhotoGuard, which has a similar purpose but aims at a different type of AI. We have Mist and we have LowKey. They all add noise to deceive or disrupt an AI model. Something interesting to note here, based on my investigations into our target model: the types of AI that PhotoGuard and Mist are targeting have a similar form to what we're targeting, so maybe they will work better. I don't know, we shall see. So these were the original images, and this is with the

noise added. You can see it a bit clearer in the blank spaces. I also wanted to add that just because these were out-of-the-box tools, it doesn't mean they were user friendly. Actually, none of them really worked out of the box. I had to debug them a few times, whether due to resource constraints, out-of-date versions, libraries that no longer exist, or dependency issues. I also just wanted to highlight that nothing really exists that the everyday person can use. So in the setup of the experiment I

also wanted to add random noise, to see whether our adversarial noise is any better than just adding random noise for protecting our images. Again, these are the inputs, and these were the outputs based on the images with the noise added to them. You can see deformations around the face. I think it's very prominent specifically for Fawkes and LowKey, because Fawkes and LowKey were designed to change how the model views the face, to make it as different to the model as possible. The numbers align with this as well, but I wanted to caveat here: I used the comparison to face

similarity as one of the metrics here, and for the ones that were big, for example Fawkes and LowKey, the really big change in how similar the face was could also be due to the fact that that's what Fawkes and LowKey were designed to do in the first place. So I guess these ways to generate noise aren't super effective for this specific type of deepfake generation, and I think that's due to the lack of consideration of transferability when people were designing these techniques. So my proposal is to make it more standard to develop and test these techniques against

various types of deepfake models. What I'm working on at the moment is facilitating this by creating an app (yeah, I know, I use Jer) so that you can test your image against the most common open-source deepfake models. I also wanted to caveat the limitations of adversarial noise: it can be circumvented by quite simple techniques. I've read that rotating the images, or compressing and decompressing them, can severely decrease the protection on the images. And again, coming back to transferability, it's a really hard problem because AI models differ from one another, so finding one technique that applies to many of them is quite

tricky. However, I think there is one use case it would be really useful for, and that is the unsophisticated but automated deepfake attacks that we're seeing happen more and more. The other day I was in California at an AI lab, and the work they're doing is really cool: they're trying to raise awareness about the dangers of AI. All one of the guys did was take my LinkedIn and put it into his automated process, and he was able to generate phishing attacks and threats based on my public profile, and I'm sure many people's, maybe not in this room, but in general. I think that's the kind

of attack we want to defend against, because it's low cost and high reward. So I think protective shields could be a solution to that, especially if we develop protective shields against the most common models. This kind of technique has been proven in other domains, even if it needs some work to fit into this domain, and it is a form of non-intrusive protective solution we can add to our own images to address it. So that's my proposal, at least when I'm talking to my ML and cyber security friends. But back to Viggle AI and the dance filter. I quickly wanted to go through what Viggle AI is and why it's special. Viggle AI is a company

that's advertising their new approach to creating deepfakes. It's pretty cool, but ultimately I wanted to find out: can the adversarial noise defenses that worked in other cases work for Viggle? And they kindly supported me in trying to answer this question. I also want to preface that Viggle AI is proprietary and there's not much information out there, but based on the information that is out there, it works this way. It takes a photo and creates a skin for a 3D model. At the same time, oh sorry, this is the back end of how the TikTok dance filter works,

it takes a video input and maps that onto a 3D model, and then it combines the 3D model with the skin to create a deepfake video. This is different to the deepfake generation methods we've seen in the past, because often they would just take the pixels of an image and generate a new pixel-based image. This adds a step in between. Even though it's a, quote unquote, new approach to deepfakes, this image-to-skin step is still like the pixel-to-pixel models that we're familiar with, so I think we can still aim to disrupt that step using some of the techniques

before. But to do that, I wanted to know more about this model and its properties, so I queried it to learn a bit more. I think in the sphere of AI security there are more rigorous ways to do this coming out, but it's not quite there yet, so this is my rudimentary attempt at the moment. I wanted to know how it handles non-human subjects: does it produce anything with non-human subjects? And it kind of does. I wanted to know, if there is a face, whether it crops it, because that lets us know whether we can

play with the background: is that in scope? And how does it handle multiple faces? These are just questions I want to ask, like what if we could convince the model that there's a face in the back that's not your own face, things like that. Based on the behaviors of the model, I predict that it's likely a diffusion model, similar to the model we talked about earlier, so I thought Mist would be the best type of adversarial noise to add. And this is the output. I don't know if it's necessarily a success, but you can see a

bit of noise, but that might just be due to the noise being in the photo in the first place. This comes back to the need to develop transferable defenses, specifically against the commonly accessible deepfake models. So, wrapping up: we know that generative AI technologies make non-consensual deepfakes a growing problem, especially automated attacks, and this is a problem that's not going to go away. Adversarial noise is a promising technique to defend against non-consensual deepfake generation, but more work needs to be done to develop it. There is a need to continue investing in protective defenses that transfer to common deepfake tech that

unsophisticated actors use. Just as an example, this is an AI-generated image of the Pentagon being under attack. It was posted on Twitter, and people detected it and were able to say that it was AI generated, but even so it moved the US market. So I don't think detection technologies are sufficient all the time; there are use cases for prevention. These are just more examples of generative AI being used for harm. Today we talked about what AI security is and how it can maybe be applied to address this problem, specifically with the idea that adding AI into a system increases its exposure

to different attacks. Today we used this idea to defend our images against a deepfake AI, but with our AI developer and owner hat on, this is a new attack vector we need to be aware of. But that's another talk for another day, or if you're interested, please come talk to me afterwards. Thanks for listening, I hope you guys enjoyed it, and if anyone has any questions, this is the question time, I

guess.

First of all, thank you very much for sharing your research with us. It's very valuable in this time of deepfakes, misinformation and disinformation. I was just wondering whether there's a plan for you to have something that's readily available in the palm of your hand, on a smartphone, like an app, because this would be something useful especially for women who share their photos online, because there's a lot of gendered online, let's just say, online violence against women.

Yeah, it is a gender problem. The report I talked about noted that 97% of the misuse examples were against

women, so it's definitely a big problem for women. To answer your question: yes, I am releasing things publicly so we can all start addressing this problem. But from talking to people, especially in regulation, in ASIC and the eSafety Commissioner, I think if we find a technical solution for this problem, hopefully it would be implemented by social media vendors, so that when you upload your image it automatically adds your defenses. That is the goal if this goes anywhere. In the meantime, maybe not on the phone, but something to run on your laptop is the goal in the intermediary.

It's just a follow-up. That's a great idea, to put the responsibility on the shoulders of the social media companies, because I know that whenever photos are uploaded, the metadata about the location, the EXIF data, is actually stripped off. If they can strip off that metadata to make sure that a person's current location is not published publicly, maybe they could do something about this.

Yeah, that would be ideal.

Hey, yeah, thanks for your

question. Sorry, just with AI and deepfakes and obviously the non-consensual imagery: are you aware of the new laws that have been passed in Australia? Do you think that's a positive step forward? Because it seems like it is; obviously it's remediation instead of prevention, but do you want to talk to that?

Yeah, no, definitely. I think it's definitely an important step. Now it's illegal to do that; it wasn't before. So people can go down the route of, I guess, getting justice, because this is not a harmless attack; it really does impact people in their lives. So I'm glad the government's making moves on that and showing intention to do more. I think

it's a tricky problem, because that's at every instance of a misuse happening. We have people whose whole business is to create inappropriate deepfakes for other people, so I wonder if that's something we can address in the near future: the vendors of not-safe-for-work deepfakes.

Cool, any last questions? No? All right, no worries. Thank you very much. This is a present from BSides here as well for you. It was a very awesome and interesting talk; obviously it's quite current with the current environment of deepfakes and social media. Thank you very much you
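
The talk describes adversarial noise as small, specific changes to an input that greatly change a model's output, and compares it against plain random noise as a baseline. The following is a minimal sketch of that comparison, not the speaker's code and not how Fawkes, LowKey, PhotoGuard or Mist work internally. It assumes a toy linear "model" `W` in place of a real face or deepfake model, and the names `random_noise`, `optimised_noise` and `embedding_shift` are hypothetical helpers invented for this illustration. Both kinds of noise are limited to the same per-pixel budget `eps`, and the optimised noise starts from the random noise and is pushed in whichever direction moves the model's output the most.

```python
import numpy as np


def random_noise(rng, shape, eps):
    """Baseline: each pixel moves by +eps or -eps, chosen at random."""
    return eps * rng.choice([-1.0, 1.0], size=shape)


def optimised_noise(W, start, eps, step=0.01, iters=50):
    """Signed-gradient ascent on ||W @ delta||^2, kept inside the +/-eps box."""
    delta = start.copy()
    for _ in range(iters):
        grad = 2.0 * W.T @ (W @ delta)  # gradient of ||W @ delta||^2
        delta = np.clip(delta + step * np.sign(grad), -eps, eps)
    return delta


def embedding_shift(W, x, delta):
    """How far the toy model's output moves when delta is added to x."""
    return float(np.linalg.norm(W @ (x + delta) - W @ x))


rng = np.random.default_rng(0)
W = rng.normal(size=(16, 64))       # hypothetical stand-in for a real model
x = rng.uniform(0.1, 0.9, size=64)  # flattened toy "image", pixels in [0, 1]
eps = 0.03                          # maximum change allowed per pixel

rand = random_noise(rng, x.shape, eps)
adv = optimised_noise(W, rand, eps)  # starts from the same random noise

shift_rand = embedding_shift(W, x, rand)
shift_adv = embedding_shift(W, x, adv)
print(f"random noise shift:    {shift_rand:.3f}")
print(f"optimised noise shift: {shift_adv:.3f}")
```

In this toy setting the optimised noise moves the model's output further than random noise of the same size, which is the property the protective tools rely on. A real defense would compute gradients through the actual target model, which is why transferability between models matters so much in the talk.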