How to hack an AI - Harriet Farlow


[Music] good morning everyone um firstly can I say thank you to the BSides organizers it's great to finally be at an in-person event and for BSides again um the virtual sessions were great obviously and necessary during COVID but it would be a bit disheartening sometimes seeing people kind of lose interest and drop off a call at least in person it's way too socially unacceptable for you to just walk out of the room during my talk but please don't try and test that I know we might be running into morning tea with my session um so my name is Harriet Farlow and I'm a PhD candidate at UNSW Canberra looking at cyber security and specifically

machine learning Security in the context of adversarial machine learning I'm also an assistant director at the Department of Defense but my employer does ask me to clarify that this talk is not in relation to my defense role so in case they're listening I've said that now um my career experience before my current role involved defense Consulting um Academia a tech startup in New York City um which was really cool very different you know as a 12-person company to working in the public service as I do now um but the commonality between all of my different jobs is that all of them have been looking at artificial intelligence or machine learning in some way either from the selling side the buying side or

the implementation side and one thing that I've noticed across all of my different jobs is how it's really challenging to do correctly everyone was grappling with it in a very different way for very different reasons and when we look at the number of Industries and increasing number of use cases that machine learning and artificial intelligence has these days but also the risks that it can pose and how many of our decisions it's being incorporated into I think it's increasingly important that we do make sure that artificial intelligence is created to be safe secure and ethical and that there's more awareness raised that it is actually an attack surface just like cyber security systems are so that's why I'm talking at

BSides today I know that AI security isn't necessarily a typical cyber security topic but the intersection of machine learning security and cyber security has never been so relevant and I think all of you as cyber Security Professionals have a lot to bring to machine learning security so I'm going to start with a question what is AI I think people talk a lot about artificial intelligence and it kind of kills me a little bit because artificial intelligence really is just the business term for machine learning um but it's also it can be a good way of describing what the real you know use cases for AI might be um but so when I ask this question I

usually get a response something like like this the Terminator um can I get a show of hands everyone in the room who has seen The Terminator okay good so I thought that uh I wasn't sure if it would be mostly students or younger people in the crowd today and it was only when I finished the presentation I thought oh bummer maybe no one's actually seen the Terminator but everyone kind of gets the gist of the plot right even if you haven't seen it and a lot of people have um so the idea is that you know in the future there's this AI called Skynet that's taken over the world um and so it sends the Terminator back

in time to kill sort of the only threat that it faced which was this guy called John Connor who was kind of rising up against Skynet leading this group of Vigilantes and so Terminator goes back in time to kill Sarah Connor who is John Connor's mother um before John can even be born um so I think this is probably a good time to Define exactly what artificial intelligence is then if this is the kind of idea that most people think about when we think of AI um so I'll start by defining our objective for today um we're going to look at you know at Skynet I usually kind of you know when people say the Terminator Ai and like

haha okay good joke and I don't know if it's because most of the people I work with are sort of born in the 80s or whether Terminator really is just a great example of AI um but I decided to lean into it so what if there was a smarter way that we could prevent Skynet or the Terminator from being successful in its Mission um what if we can hack Skynet so that it cannot identify and kill Sarah Connor entirely possible to do so that's our objective for today I'm going to show you how there are a bunch of different adversarial machine learning attacks we can use to hack Skynet um so it's a good time to think about

the difference between artificial intelligence and machine learning um so artificial intelligence tends to be broadly defined really as a group of technologies that can do something um that otherwise a human might have done so that's why it tends to be used as a good business term um whereas machine learning is sort of the technology that underpins most artificial intelligence Technologies and machine learning broadly um there's lots of different kinds of architectures and different kinds of ways that machine learning can be implemented but basically it's a way of building a model architecture giving it some input data defining what good output looks like or what it should classify an output as and then letting

it run over many many iterations updating the information within this model architecture so that when it sees a new piece of information it can correctly classify that output um so that's a very broad term so I guess technically my talk should have been called how to hack a deep neural network specifically a convolutional neural network but that doesn't make a very good talk title and so I'm going to make these assumptions um so I'm going to assume that Skynet is a deep neural network so when we look at neural networks it's basically like trying to imitate the way that a human thinks so if we have our biological neural network

which is many layers of different neurons that are able to link together and perform calculations so that we can make good decisions or think properly that's a good way of describing a deep neural network and deep just refers to that there's many layers um and I'm going to assume that Skynet is a convolutional neural network because generally convolutional neural networks are used for image processing um because convolution uh refers to the way that each layer has sort of convolutional processes where it pools different calculations so that it can process bi-dimensional data and still keep the information um but in a in a condensed way I'm going to assume that Skynet is unaware we're attacking it

um this is because there are a few mitigations against adversarial machine learning attacks but actually this is a pretty reasonable assumption because most of the machine Learning Systems in the wild don't employ these mitigations anyway and then there are countermeasures to the mitigation so so it's pretty fair I'm going to assume the architecture of Skynet and the Terminator are the same that's for the movie Buffs out there maybe Skynet and the Terminator run different architectures I don't know it doesn't really matter for this talk okay so this is what our victim looks like on the outside but this is what it looks like on the inside um so like I said machine learning really mirrors the biological processes

that we have in our brain and they're generally or at least in a deep convolutional Network and I'll talk about other different architectures later on in the talk um they're really these different layers of neurons um connected to each other in a way that each of the neurons has sort of an activation function and a weight and these are referred to as parameters and so during the model training process all of these parameters are updated so that the model learns to take that input and then correctly predict what the output should be and what's really important about this is the process of gradient descent optimization um we have to give it a a way of knowing

what correct looks like so we basically tell it that if all of the parameters in that model sort of represent this n-dimensional hyperplane of possible error in relation to the model parameters then we really want to update all of those parameters so that over each training iteration we're kind of traversing down this slope so that we're reaching the global minimum in terms of all of the different model parameters that there might be um and so the global minimum is the point where the error is minimized in the model classification so most deep neural networks really rely on this gradient optimization process because it is that rule that tells it what accurate looks like and so that's why a lot of

adversarial machine learning techniques are able to um to sort of hijack or make the most of being able to understand what this gradient descent function looks like so if we think of a machine learning training pipeline a bit like this um so we have the training phase and the inference phase we give it input data there's a model training process there's an output or prediction and then there's some kind of feedback loop so that it can update over time there are adversarial machine learning attacks at each of these stages we can think of this as an attack surface so these are just some of the different attacks these are sort of four classes um but there are really dozens hundreds of

different AML techniques that might fit in and out of these buckets so in the training phase we have poisoning attacks so we can poison the training data of the model so that it learns basically the wrong thing uh we then have the inference phase so we have extraction attacks where we create a near replica of the model to train and launch further attacks okay and then we uh and also in the inference phase we have evasion attacks um which is causing a prediction or classification to be incorrect either in a targeted or an untargeted way and then we have inference attacks where we can leak sensitive or confidential information that the model was trained on so we're going to go through each of

these in terms of how we hack Skynet so this is what Skynet might look like on the inside so it's been trained using a bunch of images some of which have Sarah Connor and are labeled as Sarah Connor and then it goes through this training process where each of the layers learn different kinds of things about the input data that it's been trained on so they basically learn different kinds of features so lower level layers might learn things like edges colors outlines and then higher level layers might learn a bit more about you know is Sarah Connor wearing glasses what color hair does she have more specific things like this and so it then has a sort of output

softmax layer which just gives us a probability um that a particular image is Sarah Connor or somebody else so we're going to start with evasion attacks um even though they come sort of further in that model training process but this is really where the academic field of adversarial machine learning came to the fore and there are more cool examples than the other ones so we're starting with evasion attacks over here so this is the proverbial example of an evasion attack in AML um and this was published by Goodfellow and Szegedy in 2014 so it's really not that long ago um so we have a model looking at a picture of a panda and it correctly

classifies that it's a panda right um however we're then able to if I as an attacker know the gradient descent optimization function of the model that's predicting on this Panda I can then instead of sort of traversing down that slope to minimize the error I can Traverse up that slope and maximize the error and so I can either do that as a One-Shot gradient update process where I change all of the parameters to create this sort of adversarial noise or what's what's called an adversarial example um or I can do an iterative approach sort of bounded by a very small Epsilon value which is often a little bit more accurate um but basically um whatever the scenario I end up with

this specifically crafted noise um it's not random noise it's noise that's specifically crafted knowing the information about the model that is predicting on this Panda image and so when I add it to the image of the panda the model predicts that it's a gibbon with 99 percent confidence and this isn't just you know shocking because the model predicts it's the wrong thing um but what's really alarming is that to a human it looks identical so if we have situations where there isn't a human in the loop then there isn't necessarily a way for a model to be told whether it's you know correct or incorrect it just this is just what it says so there are lots of other examples like

this I'll go through them quickly and this is a 3D printed turtle um the model looking at this turtle um predicts that it's a rifle with extremely high confidence no matter what the what rotation the object is held at um and so I I imagine the inverse scenario where I have a rifle coated in an adversarial pattern where it's consistently classified as a turtle that kind of thing's possible um these kinds of sort of adversarial patches are known to cause self-driving cars to not recognize stop signs or other kinds of road signs and then this adversarial patch is able to hide people from people classifiers so there's quite a lot out there so what I've gone and done I've added my own

adversarial example to our image of Sarah Connor over here and I've added it uh so I've created it using uh MobileNetV2 which is a model that's readily available through TensorFlow because I'm sorry but I didn't have time to create my own Sarah Connor model I know it's really bad but I was able to go and put this image through a freely available um celebrity face recognizer API through Clarifai um they do say to stand on the shoulders of giants don't they so we don't need to reinvent the wheel so I've gone and done that and for all of you who don't know Linda Hamilton is the actress who plays um who plays Sarah Connor so this is the

this is the correct image this is the original image I've put it through that model it predicts that it's Linda Hamilton with 99 percent confidence great and then I put it through again with my adversarial example on top and we have Harry Styles I'm not sure to whom it's an insult or a compliment um but it's with 99 percent confidence um so that's pretty shocking given that to me I can definitely see some noise in that image but it still clearly looks like Sarah Connor to me um but to a model it's clearly Harry Styles um so now I'm going to walk down and do a quick demo um because there is a targeted example

as well so that was an untargeted attack where I don't have a specific person in mind but there are ways where we can conduct where we can do this particular attack but with a specific um Target in mind as well so I'll show you quickly and I will move out of the Terminator Universe just for a little bit um to show you something that is possible in real life now as well so something that might exist in the Terminator universe and in our universe so this is a targeted attack so first of all we run it through well I've cropped the image so it's just the weapon that she's holding here um something suitable for our universe
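The one-shot gradient attack described above, in both its untargeted and targeted forms, can be sketched on a toy model. This is a minimal illustration and not the MobileNetV2 demo from the talk: it assumes a hypothetical three-class linear softmax classifier over a four-value "image", with made-up weights and made-up label names, so that the gradient of the loss with respect to the input can be written by hand. The untargeted step moves up the loss for the true label; the targeted step moves down the loss for the chosen target label.

```python
import math

# Hypothetical toy classifier: 3 labels, 4 input features, hand-picked weights.
LABELS = ["rifle", "turtle", "starfish"]
WEIGHTS = [[1.0, 1.0, 0.0, 0.0],
           [0.0, 0.0, 1.0, 1.0],
           [1.0, 0.0, 1.0, 0.0]]
BIASES = [0.0, 0.0, 0.0]


def softmax(logits):
    peak = max(logits)
    exps = [math.exp(v - peak) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]


def predict(x):
    """Class probabilities of the linear softmax model for input x."""
    logits = [sum(w * xi for w, xi in zip(row, x)) + bias
              for row, bias in zip(WEIGHTS, BIASES)]
    return softmax(logits)


def input_gradient(x, label):
    """Gradient of the cross-entropy loss for `label` with respect to x.

    For a linear softmax model this is W^T (p - onehot(label)).
    """
    probs = predict(x)
    error = [p - (1.0 if k == label else 0.0) for k, p in enumerate(probs)]
    return [sum(error[k] * WEIGHTS[k][j] for k in range(len(WEIGHTS)))
            for j in range(len(x))]


def sign(v):
    return (v > 0) - (v < 0)


def one_shot_attack(x, label, eps, targeted=False):
    """Single signed-gradient step of size eps per feature.

    Untargeted: increase the loss for the true label.
    Targeted: decrease the loss for the desired target label.
    """
    grad = input_gradient(x, label)
    step = -eps if targeted else eps
    return [xi + step * sign(g) for xi, g in zip(x, grad)]


def top_label(x):
    probs = predict(x)
    return LABELS[max(range(len(probs)), key=probs.__getitem__)]


image = [0.6, 0.6, 0.4, 0.4]
untargeted = one_shot_attack(image, label=0, eps=0.15)
targeted = one_shot_attack(image, label=2, eps=0.15, targeted=True)

print(top_label(image), top_label(untargeted), top_label(targeted))
```

Every feature moves by at most `eps`, which is the bound that keeps the perturbation small. An iterative version would repeat smaller steps and clip the total change back to that bound after each one.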

maybe there's a model looking at I don't know objects in a battlefield it's trying to predict who has weapons and who doesn't so it's narrowed in on this particular image and then I've asked the model to predict what it sees here and it says that it's an assault rifle at 52 percent good so that's correct we can do an untargeted attack like before I won't go through too much detail because it's the targeted attack that's interesting um but this is sort of where the magic happens because this is the loss so this refers to the loss in the gradient descent process the loss or the error and so this is the adversarial example

we would add in an untargeted situation where we don't care what Target the the model's learning so now for my targeted example uh I've picked something totally random that a model shouldn't think that it sees there um just to illustrate the example and because of the the way that we give input to the model we have to give it a sort of a label index from ImageNet which is a big labeled image data set you might have heard of and so I've picked label 327 that I want as my target which is a starfish because that's just really really random so here when I'm calculating my loss I've added an extra line um to

include the target loss as well I end up with this adversarial example I've added it to the image and I've asked it to predict what it is and it's a starfish 100 percent and the next four things it thinks it is aren't related to weapons at all they're all related to starfish so that sort of took five minutes to do um it's pretty easy maybe in our Terminator example we could have made that Target T-X which is another Terminator villain from one of the sequels one of the many sequels um so something for us to keep in mind in our universe as well just how easy this is to do and this is to a model

that's available out there you know it has been it has immense IP value it's free for anyone to use um so I mentioned I'm a PhD candidate as well so my research is looking at adversarial machine learning um and I'm looking specifically at ways that we can perturb not just the entire image but very small regions of that image so that you could have a model looking at Sarah for example and that she could have small perturbed regions either on her person or around her depending on you know if it's another object it could be in the vicinity to disguise that object or person from a classifier so I don't know if you can yeah you can

kind of tell so there's a few different adversarial regions I've added here and then when I put it through my classifier we have another prediction it's Jane Fonda um and there's a few sort of nuances to this one here because in reality the model I'm putting it through is just looking at her face and not the objects around her but most models would look at the entire region of that image and you can show that the accuracy of the prediction um decreases by sort of like 90 percent even if you add these very small targeted regions around the person and it can just be a few examples um but anyway this is still working

progress for me so we're going to move on to poisoning attacks and so poisoning attacks really rely on the fact that the model training process is very highly dependent on the data that it was trained on so this leads to a lot of examples we've probably heard in real life and a lot of business failures around AI um we're over here in the training phase of the pipeline and Tay is probably an example that people have heard of before Tay is an example that gets talked about a lot um So Tay was a conversational AI that was released by Microsoft and she lasted for 16 hours live before she was taken down because she started posting

offensive things to her Twitter account because naturally you know an AI in training should get access to a Twitter account um and the idea was that she would learn from the conversation that people were having with her and of course people tried to hack her you know they were telling her stupid things and offensive things and so she obviously absorbed all of that because she didn't have a way of understanding what was real what wasn't real what was appropriate what was inappropriate and so Microsoft realized they had to take her down um now this is kind of a funny example but there are lots of examples of AI where the training process is informed by some kind of feedback loop that takes

into account environmental factors of which humans trying to hack an AI are one so this leads to a lot of examples of bias that we've probably heard about so models tending to become sexist racist homophobic over time um because the irony was that you know people people thought that by delegating uh the decisions to AI they would somehow become less biased because machines can't have bias but they just ingest all the all the bias from people um so in reality they tend to amplify bias unless there's some kind of engineering done at the training phase so we've probably heard of things like Amazon's hiring AI being biased against women or Florida prisoner reoffender

rates being biased against black people so these are all examples of why it's really important to have a human in the loop as well so that you know ideally AI can augment our human teams instead of just replacing everything um there are also some interesting examples of adding back doors so this is the MNIST data set which is a set of sort of uh handwriting uh it's the Baseline for handwriting recognition it's lots of labeled images of zero to nine um and so what these researchers did they added all these little backdoor trigger symbols um to a lot of images that were labeled zero and they found that when they added those in the training process

whenever the model saw those little backdoor triggers they always predicted that the image was a zero even if it was some other number and so this is uh fascinating and at least we can see when this is happening because it's a it's an image example and because it's it's easy to see when it's just a black and white handwritten number but when you have very complicated images or if you add back doors in the way that the adversarial examples were before you know where it's noise that we can't actually see it's very hard to tell whether a back door has been inserted into the data or not and this is just in computer vision we

can also have you know time series data financial data things that we wouldn't necessarily be able to see if there's a problem um so I mean the science behind this is really cool but it's it's very worrying in theory so what we could do with our Skynet model is um assuming we have access to the training data that Skynet was trained on and there's lots of ways that we would be able to do that we could either mislabel a lot of our Sarah Connor images and label them T-X so instead the model learns to recognize Sarah Connor as somebody else and go after that person instead or we could add some kind of back door trigger so

that we evade that particular classification as well or Sarah Connor could wear that particular trigger on her person to essentially disguise her from recognition okay I'll Breeze through the others because I think we're sort of getting close to time so extraction attacks um are basically creating a near replica of the model so that we can train other attacks um this is a a brief outline of a process we could use um but don't Focus too much on this particular example what I will say is that when I trained my technique before on MobileNetV2 and then applied it to that free API from Clarifai the celebrity face predictor that's essentially me doing a surrogate attack

because I use a different model to create the adversarial examples and then I launched my attack against a different model and these are generally quite effective because machine learning models when they're trained on similar data sets are told to do similar things they tend to converge so all of those lower layers would be doing the same thing you know they'll be recognizing edges they would be recognizing lines or colors and then it's only the higher level layers that would have a real a real difference so that's why me launching that attack that I've crafted using another model is pretty effective so we could do that with Skynet um we could at the inference phase um give Skynet a bunch of specifically

targeted queries so that we can understand what the model architecture is like so that we can build our own surrogate of Skynet we could also use a model well we could also build our own model from scratch but train it on data that Skynet was either definitely trained on or probably trained on and we would find that it would become basically what Skynet is it would converge into a Skynet model finally inference attacks um so these are attacks where we can leak information about the data um that a model was trained on and given the kinds of industries that machine learning exists in these days you know Finance Healthcare defense um it's extremely likely that we might

be able to pull some interesting data from here or understand if a particular piece of data was included in a training set um so this is a an example of a sort of pipeline or a process we could use um
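One simple form of the "was this piece of data in the training set" question can be sketched as a confidence-threshold test. This is an illustrative toy and not the pipeline shown on the slide: it assumes a hypothetical, badly overfit model whose confidence peaks on the exact points it memorised during training, and an attacker who can only query that confidence. The function names, the sharpness constant, and the threshold are all made up for the sketch.

```python
import math


def make_overfit_model(train_points, sharpness=0.05):
    """Toy stand-in for an overfit model.

    Confidence is 1.0 on a memorised training point and decays quickly
    with squared distance to the nearest training point.
    """
    def confidence(x):
        nearest_sq = min(sum((a - b) ** 2 for a, b in zip(x, t))
                         for t in train_points)
        return math.exp(-nearest_sq / sharpness)
    return confidence


def infer_membership(confidence, candidate, threshold=0.9):
    """Attacker's guess: very high confidence suggests a training member."""
    return confidence(candidate) >= threshold


# Hypothetical private training set the attacker never sees directly.
private_training_set = [(0.1, 0.2), (0.8, 0.7), (0.4, 0.9)]
model = make_overfit_model(private_training_set)

seen_record = (0.8, 0.7)    # was in the training set
unseen_record = (0.5, 0.5)  # was not

print(infer_membership(model, seen_record))
print(infer_membership(model, unseen_record))
```

Real models leak far less cleanly than this, and practical attacks usually calibrate the threshold using surrogate models trained on similar data. The sketch only shows why a gap in confidence between seen and unseen records is something an attacker can exploit.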

a little a little bit blurry that's okay um I'll Breeze through this there's uh I think in my mind it really speaks to the importance of data scientists understanding that just because you have a lot of data doesn't necessarily mean that you need to use it to train your model I think generally there's a recognition that we're sort of moving away from just big data as an ultimate goal and moving towards maybe smaller data sets that are of higher quality or being able to use synthetic data to train models on um for all sorts of reasons you know like data breaches is one very big reason but this is another particular benefit that we could see from moving

towards that kind of ecosystem as well so in our example we could add some features to Sarah Connor in real life she could wear some things that Skynet probably hasn't seen her in in the training data set so maybe a funky pair of glasses I don't know she could dye her hair Skynet has only learned to recognize her based on the images that it has been provided so if we're able to disguise her in some way then Skynet wouldn't be so successful um assuming that we can you know if if this is using inference attack methodologies um then that would be more about the process of finding the intelligence around what particular pieces of data it was trained on and then identifying

what would look new to Skynet okay so let's say we've implemented all of our attacks against Skynet and we have success Sarah Connor survives um for those of us who have seen the movie I don't know what kind of implications for the future that would have if Kyle Reese doesn't come back in time and save her um but that's okay we can just leave that in the Terminator movie would John Connor still be born I don't know that's okay um but what about all the other kinds of models so convolutional neural networks are just one kind of machine learning model like there are many many others out there like first of all there are many different ways of building a

convolutional neural network um there are lots of other deep neural network architectures you could use like LSTM GRU language models these days are built on Transformers and uh an encoder decoder structure these might all be very technical terms I wish I had more time to go in in depth um but the takeaway is that there are lots of other different kinds of model architectures that are vulnerable to either the same kinds of attacks that I've already shown up here um or very similar attacks and that there are actually a lot more attacks as well than I've demonstrated um so language models are really the sort of cutting edge of AI these days I would consider we've probably heard of

GPT-3 and how amazing and realistic it is at generating conversation and doing sentiment analysis and summarization things like that um but there has been a lot of research into how vulnerable language models are to attacks and they are extremely vulnerable and they have their own kinds of specific vulnerabilities too um they are all known to be um uh able to be hacked with um uh different evasion attacks poisoning attacks um bias is a big problem backdoor attacks in language models um I'd love to you know go into them in more detail without being another talk um diffusion models are all the rage lately as well I generated this image using DALL-E I think my prompt was

something like an AI taking over the world as an oil portrait um and I'm sure a lot of you have heard about how an AI generated image won um an art prize this year as well um these are all fascinating but we still don't really know how they work like there are lots of interesting phenomena that we're seeing coming from these um some people may have heard about Loab which is this particular character a very gory horror-esque character that appears when people enter certain prompts into um like stable diffusion models and they they don't know where it comes from um this this in a way is a way of kind of injecting ideas into models and

because models are historically black box Research into explainability and actually interrogating why particular decisions are made and how is really important but there's there's still a massive culture in machine learning of throw data at a problem try to make a really efficient and accurate model and that's kind of good enough you know um but it but it shouldn't be we really need to start um building a culture and a way of you know ensuring that culture actually happens you know we need Assurance mechanisms to make sure that ml systems are created with the mindset of security from the start just like cyber security systems have been for a while now we need to find a way of transferring some of those

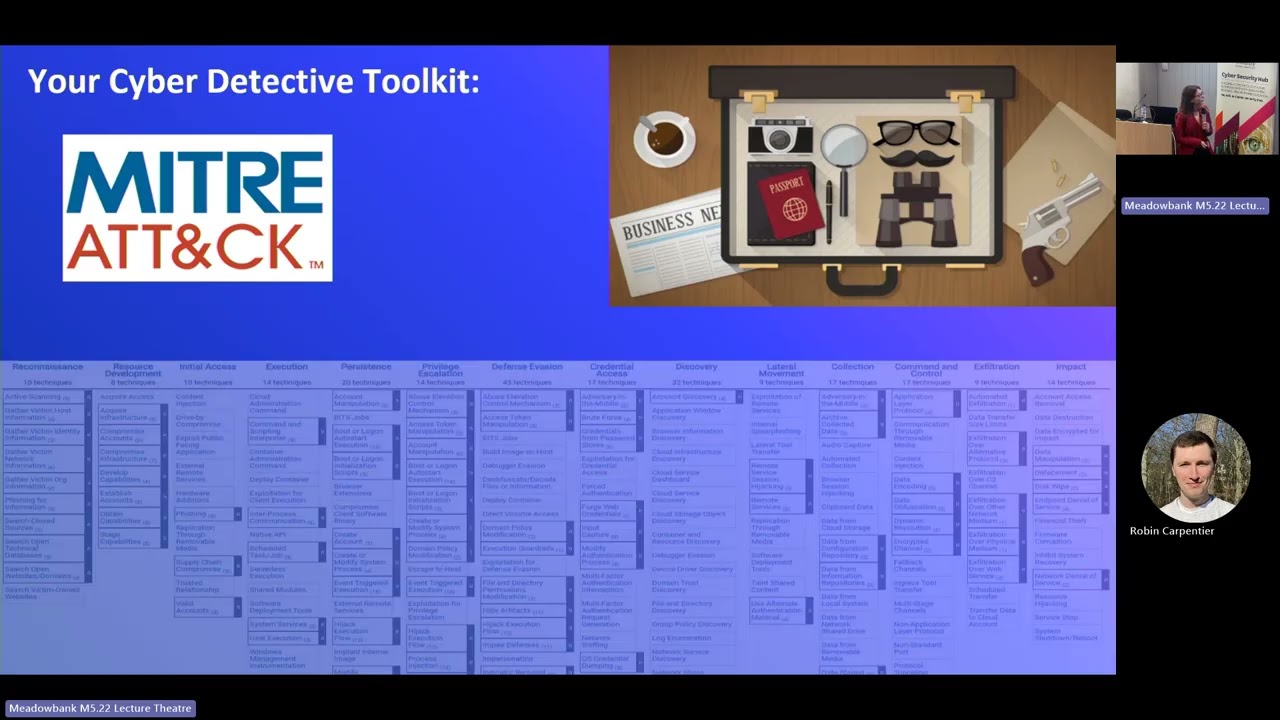

best practice into machine learning as well so there is obviously some work in doing this so this is MITRE's ATLAS matrix it has a bunch of TTPs around AML attacks hundreds of them if you go through them in a lot of detail there are quite a lot of governments or organizations like there are ISO standards um in development at the moment and um sort of principles around AI things like safety ethics security but then there isn't necessarily a way of building uh sort of pulling those down to the developer level of making a toolbox that we can actually use um for for mitigations and assurance and ml audit because these are all things

that we need so this is getting into what you should take away from this talk so firstly AI is a real Attack surface I think AI is still synonymous with magic a lot of the time these days you know the way that people use it but AI isn't just some ephemeral thing that is somehow able to make predictions really well um it's a real technological um mix of systems and it has real uh you know vulnerabilities and exploits and there needs to be some way that we can actually assure that ml systems are safe and secure and so that's where I think you as cyber Security Professionals come in because even though ml systems have you

know some key differences to cyber systems there are quite a lot of analogies things like the kinds of mitigations you might use um Assurance practices audit practices um even zero days and things like that have their own analogies in ml security um I also personally think that ml security is something that should be legislated um as cyber security especially for systems of national significance has started to be like I said cyber security can inform ml security there needs to be more of a mindset of security from the start when it comes to ml um we also use AI every day like I I encourage you to think about all of the touch points you have with different AI

systems every day um at the end of the day um there is a real benefit from making sure that ml systems are more efficient more accurate but when we Define progress in ml terms it shouldn't just be constrained to technical benefits like those we should really be measuring it in the ways that we actually are able to help humans you know help ourselves like AI is a tool uh for humans it's not progress for progress's sake and until AI or ml is able to really cover the full gamut of intelligence that humans are you know we talk about artificial intelligence as though all we are are reasoning agents you know that our decision-making power is the only kind

of intelligence that we have but it isn't there's emotional intelligence there's physical intelligence there's creative intelligence and so I think the ultimate way to hack an AI is really just to be a human and we need to be really intentional about the way that we design AI so that we're actually benefiting humans as well um because I think when when everyone was connecting to the internet in the latter half of last century um sort of with unfettered trust it was so convenient um people really scoffed at the idea that cyber security would ever be a great threat um but here we are today and in 2018 General Nakasone announced that cyber security was one of the US's greatest

National Security threats and we wouldn't be having conferences like this if cyber security wasn't a real threat I think that there's a lot of lessons that we can learn from cyber security to assure that ml security doesn't become the next greatest threat in 20 years time thank you [Music]