From Prompts to Agents: Building Agentic CVE Analysis Systems



Yes. Thanks a lot for having me. I just really wanted to share my experience of exploring this field of agentic AI. I'm on the vulnerability management corner of cyber security, and basically we have this torrent of vulnerabilities, CVEs: around 40,000 CVEs were published already in 2025, and over 300,000 CVEs have been published altogether. And obviously this year a new kind of solution has emerged. New companies are launching which propose agentic AI solutions to triage vulnerabilities and prioritize real threats for patching. So I guess, since I'm starting this block of AI-related

talks, I wanted to share these stats. They come from Richard Stiennon, and basically he is telling us with his IT-Harvest database that 91% of the products launched after 2022 mention AI. So maybe not the cyber security industry, but at least cyber security marketing really believes in AI. But I guess adversaries believe in AI too, because this is from the Check Point blog post on HexStrike from, I guess, August this year. Basically it says that people were automating getting access through Citrix vulnerabilities, and this HexStrike repository is on GitHub. Essentially it's not a real working product which you can

take and start pen testing with. It's more of a shell of an agentic AI tool, with an advertised 150 MCP tools, but they are mostly shell implementations, and what's most important, this repository lacks real orchestration. But essentially the promise of these types of tools is that they move us on from assistants, which only provide us with information about the systems we want to penetrate or defend. Everybody knows Nmap: Nmap scanning shows you the ports and the software. Then some tools can offer you some

options for how to penetrate, how to defend, or what to remediate. And these types of tools promise us autonomous driving: we just set point A, where we are, and point B, where we want to go, and they take care of everything, which I guess is very promising. In the background this is built on these software epochs. It's a term coined by Andrej Karpathy: he introduced Software 2.0 when he joined Tesla as head of vision for their autonomous driving, and basically the thing he promoted at the time in his

talks was that they were replacing actual instructions written in code with training the system: showing it all the samples it can encounter on the road and training it to understand the situation and make decisions. So that was based on showing examples. And now, at one of the AI conferences this year, he introduced Software 3.0, which is basically when you move from programming in any kind of language, or training models, to instructing the models in plain language, giving them examples, giving them instructions, and the system, based on language models, on

generative AI capabilities, will actually do the work. And if we think about cyber security, I was just thinking what the examples could be. EPSS, for example, is definitely Software 2.0: we show it hundreds of thousands of CVEs and millions of exploitation attempts, and it tries to predict the probability of exploitation in the next 30 days. And this new generation of tools which started to emerge this year is based on agentic AI, and under the hood they use this 3.0 programming. And of course there were a lot of metrics

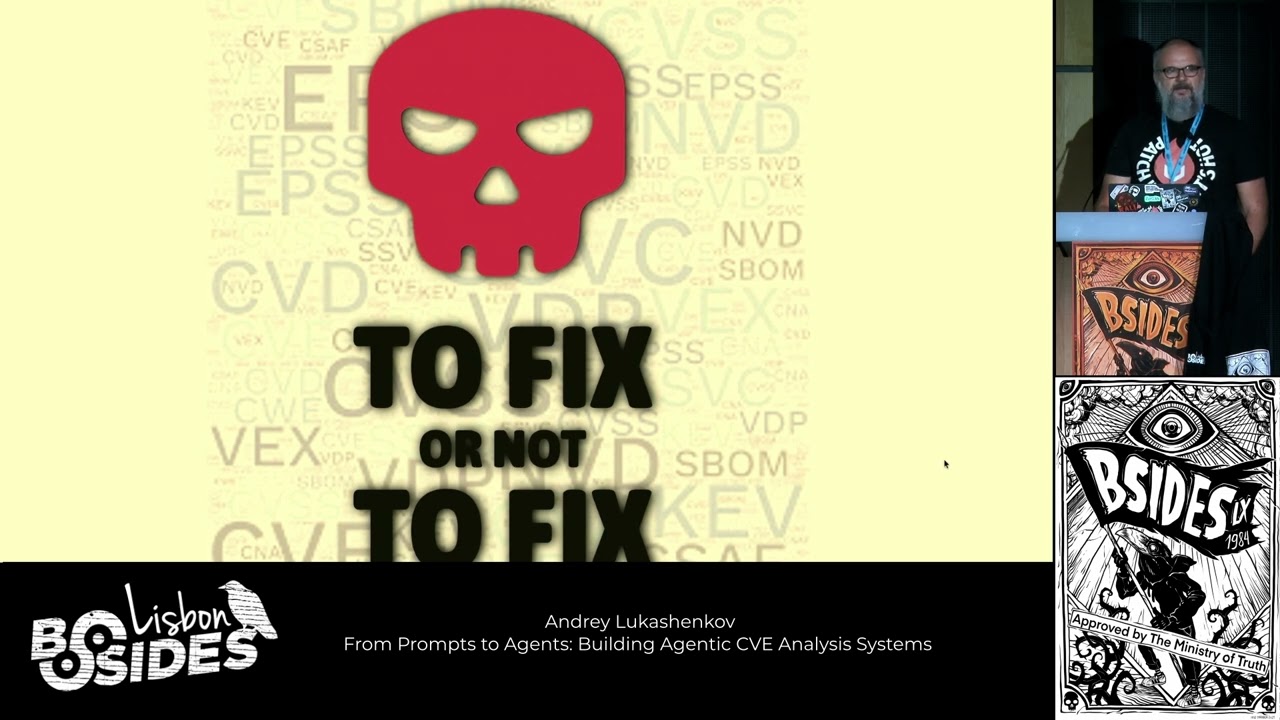

which we used to prioritize vulnerability remediation, but now I guess we just need two buckets. We don't want these low, medium, high, critical CVSS scores, and we don't want to try to work out the probability of exploitation. We want just two buckets: to fix or not to fix. Basically, all the tools you see on the market now show you these funnels: they start with 100,000 vulnerabilities in your systems and try to get down to the bunch of them which you actually need to fix, because they pose the real risk. And when you start to talk about any sort of

machine learning system or AI system, you of course need to think about benchmarking. And probably the easiest way to start thinking about it, and to try it and see what can be done, is of course ChatGPT. You just launch the application; maybe you have a subscription, maybe you don't, the tools offer you something even for free. And of course you can start writing something like "analyze this CVE" or "find me recently exploited products of this vendor" or whatever. The problem with these kinds of tools is that they are not specialized. They are super generic and open-ended, so they are not focused on the particular task you want to

solve. They want to make you happy, they just want you to keep talking to them, and they answer the questions the best they can. And you have probably seen on YouTube or on the internet these super prompt guides, or people offering prompt packs which will solve your problems and help you automate your business. And basically all these prompt templates and super prompts are built in a similar way. You specify the task, you specify the steps needed to accomplish this task. You give it

context, the sources it needs to look at. You clarify how it needs to present results to you, in which form they are usable for you, and you basically let this prompt run. But this is not super useful in an enterprise context. Of course it's super fragile, because these consumer products evolve all the time and you can't rely on them working the same way all the time. So there's a new generation of programming frameworks that try to orchestrate things, and essentially they are trying to replicate this behavior of breaking the process into tasks, assigning agents to

execute these tasks, and orchestrating the execution. CrewAI is one of them. For my project, for my exploration, I just took it because its description was the closest to what I wanted: to orchestrate a team of agents to execute the task in a manner similar to a team of people accomplishing the task. And basically I built this VM agent prototype, or whatever you call it. Its code is on GitHub. The link is on the right, and of course if the slides are shared you can get it and play with it. So, a little bit on how the crews are

set up. Basically you have the agents, and you can think about the agents like people in your team. They have job roles. They have job descriptions, which basically describe what they need to do to accomplish the tasks and how they need to present the results. Agents have access to tools which they can use to accomplish their work, and a few other parameters which are basically internal. And yes, agents can delegate work to other agents, and if the process requires it they can ask their colleagues for help. And you build your process out of the tasks, which are the particular steps to accomplish

your process. A task of course has a description, which is basically a prompt to the LLM about what it needs to do. You assign the task to an agent, and for specific tasks you can also add some tools to work on it. And this is all run by the process, by the CrewAI framework, which orchestrates the execution of the tasks by the agents. So I tried to present this project's composition. It's about 2,500 lines of code, and almost 1,000 of those lines are just text. So basically the agent definitions are just a

wall of text, a wall of this plain English which programs the agents and the tasks in this Software 3.0 manner. This is how the crew is set up in this project. Just look at the bottom: I use a hierarchical process here, which basically means the order of execution is defined by the CrewAI manager agent. If you use sequential, then the tasks will be executed in the order in which you specify them in this array. I also played a lot with the memory management and played with various metrics. This stuff actually evolves super fast. CrewAI releases a new version

like every two weeks. They add something new, the behavior changes a little bit, and you need to rework things. It's super interesting and super fast. And for this vulnerability management AI CVE analysis agent I ended up with seven tasks and seven agents. Basically I started with two or three tasks and agents, and my working process was super easy. I would give the prompt to an agent, and it would give me a report. I would give the same prompt to ChatGPT. I would get two reports, feed them into Claude, and

ask it to compare them for consistency, quality, and technical details. Claude was able to use an MCP server to check the vulnerability details in each report, and at some point I just started to feed the output of Claude into the Cursor prompt. Basically this was the vibe coding cycle: it's not like you don't think, but you just steer the process. You obviously see mistakes, or when the agents want to deviate in a direction you don't want them to go, and you need to correct the course, but basically I wrote almost nothing myself.
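The crew structure described earlier (agents with roles and plain-English job descriptions, tasks assigned to agents, tasks executed in the order given) can be pictured with a minimal sketch. This is plain Python, not the CrewAI API: the `Agent`, `Task`, and `run_sequential` names are hypothetical, and the `run` callables are stand-ins for real LLM calls.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class Agent:
    role: str                      # job title, e.g. "Vulnerability Researcher"
    goal: str                      # plain-English job description
    tools: List[str] = field(default_factory=list)  # names of tools the agent may call


@dataclass
class Task:
    name: str
    description: str               # the prompt for this step
    agent: Agent
    # Stand-in for the LLM call: receives the question and earlier task outputs.
    run: Callable[[str, Dict[str, str]], str]


def run_sequential(tasks: List[Task], question: str) -> Dict[str, str]:
    """Run tasks in list order; each task sees the outputs of the tasks before it."""
    context: Dict[str, str] = {}
    for task in tasks:
        context[task.name] = task.run(question, dict(context))
    return context


# Hypothetical two-agent crew with stubbed "LLM" behavior.
researcher = Agent("Vulnerability Researcher", "Collect facts about the CVE", ["vuln_db_lookup"])
analyst = Agent("Risk Analyst", "Turn collected facts into a fix / don't-fix call")

tasks = [
    Task("research", "Look up the CVE", researcher,
         lambda q, ctx: f"facts about {q}"),
    Task("risk_scoring", "Score the risk from earlier findings", analyst,
         lambda q, ctx: f"score based on: {ctx['research']}"),
]

results = run_sequential(tasks, "CVE-2025-7775")
print(results["risk_scoring"])  # score based on: facts about CVE-2025-7775
```

A hierarchical process would replace the fixed loop with a manager that decides which task to run next; the sketch only covers the sequential case.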

So, seven tasks. Research planning is important in that you can start with a vulnerability ID or with a particular security advisory, but if you want to ask generic questions you need some sort of step in your process for the model to understand whether it needs to go and get information on something particular, or first search the internet for what you asked it about. Then there's vulnerability research, which basically takes information from the vulnerability intelligence database. Exploit analysis looks for exploitation traces, looks at EPSS, and looks at which exploit PoCs are available. Threat intelligence searches the internet for information about

adversaries which might be using this CVE or vulnerability in their process, and gets the attribution. Another task is the technical exploitation analysis. And this task was actually needed: it shows that you can achieve better results if you split your process into smaller, more focused tasks. Because when this technical exploitation analysis was bundled into just exploit analysis, the agent couldn't answer two questions at once: which exploits are available, and how they work. So basically you need to focus your agent on particular tasks, and that helps a lot to improve results. Risk scoring tries to get everything together from all the previous

tasks. You see that for each task there's a context, and that's the results of the previous tasks. It basically comes up with a risk score which aggregates all this information into just a number which you can use to direct your action: to fix or not to fix. And the final analyst report just generates four or five paragraphs of text, because it's not a production system but a weekend project. And here are the agents, and these are exactly their goals, written in text, and the tools they can use.
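As an illustration of the risk scoring step, here is a minimal sketch of how findings from earlier tasks could be aggregated into one number and a fix / don't-fix decision. It is not the scoring logic of the project: the `Evidence` fields, the weights, and the threshold are all assumptions chosen for the example. Missing inputs do not raise the score; they are reported as uncertainty instead.

```python
from dataclasses import dataclass
from typing import Optional, Tuple


@dataclass
class Evidence:
    cvss: Optional[float] = None               # 0.0-10.0, None if not published
    epss: Optional[float] = None               # 0.0-1.0, None if not scored
    exploit_available: Optional[bool] = None   # None if nobody looked / unknown
    threat_actor_use: Optional[bool] = None


# Hypothetical weights in percent; they sum to 100.
WEIGHTS = {"cvss": 30, "epss": 30, "exploit": 20, "actors": 20}


def risk_score(ev: Evidence, threshold: float = 0.5) -> Tuple[float, float, str]:
    """Return (score, uncertainty, decision); all values lie in [0, 1]."""
    signals = {
        "cvss": None if ev.cvss is None else ev.cvss / 10.0,
        "epss": ev.epss,
        "exploit": None if ev.exploit_available is None else float(ev.exploit_available),
        "actors": None if ev.threat_actor_use is None else float(ev.threat_actor_use),
    }
    known = {name: value for name, value in signals.items() if value is not None}
    known_weight = sum(WEIGHTS[name] for name in known)
    # Uncertainty is the share of total weight for which no data was available.
    uncertainty = (100 - known_weight) / 100
    # Missing signals contribute nothing to the score.
    score = sum(value * WEIGHTS[name] for name, value in known.items()) / 100
    decision = "fix" if score >= threshold else "don't fix"
    return round(score, 2), round(uncertainty, 2), decision


# Well-documented vulnerability: every signal is present.
print(risk_score(Evidence(cvss=9.8, epss=0.9, exploit_available=True, threat_actor_use=True)))

# Only a public exploit is known: low score, high uncertainty.
print(risk_score(Evidence(exploit_available=True)))  # (0.2, 0.8, "don't fix")
```

Returning the uncertainty next to the score keeps a "not risky" verdict based on almost no data distinguishable from one based on complete data.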

And speaking about the tools, CrewAI now supports MCP, and MCP, the Model Context Protocol, is a new framework which allows you to connect traditional APIs to large language models in a standardized way. You have probably seen a lot of diagrams where MCP takes all the requests together and spreads them out to various APIs, but I think most of those diagrams don't appreciate one thing about MCP. These tools, resources, and prompts in the lower right part of the diagram are actually metadata which the MCP server can advertise, and the MCP client can actually understand from the MCP server which tools the server can provide

and how to use them. And this is super powerful, I think, because at some point I ended up with two MCP servers which provide the same information from the database but are structured completely differently. They have different tools and different tool descriptions, but my agent can work with either of these MCP servers without any changes. I actually tried to make it super generic, in that it only looks for particular metrics. It doesn't depend on tool names or tool descriptions. It only needs to understand what a tool can return, and that creates a kind

of flexible integration layer, because everything is exchanged as text, or as JSON, which is also text but structured. And this opens up a lot of possibilities to flexibly connect your system to the data it needs. Of course my server only works with vulnerability intelligence, but I can now easily imagine that it could work with various MCP servers to get internal context for vulnerability remediation. Basically it just needs to know which MCP servers it can access, which tools they have, and which information they can provide, and then it can decide what it needs to make a decision about the

vulnerability risk in a particular environment. So, here are a bunch of examples. Well, the text is super readable, I'm super happy about it, I wasn't sure. You don't need to read it. It's the output of the agent. Of course it returns a slightly differently structured report every time, because it's a generative model, but you definitely can structure the output. I actually spent a lot of time to make it this narrative. If you give it structure, it loves the

structure. It falls into structures just instantly. And you see that it basically returns (I highlighted them in bold) all the bits and pieces of vulnerability intelligence that it got from the MCP server and collected. There's also a risk calculation. Another example: this vulnerability is in Apple Safari, and because the CVE is published by Apple, it only includes a list of affected Apple products. But because Safari is based on WebKit, and WebKit is used by various Linux distributions, they are also affected. So here at the top you see that because this

agent has access to a vulnerability intelligence database, it can correctly indicate that some versions of Linux are also affected. And here's an example of how this CVE is handled by ChatGPT. It just shows the Apple products, and on the right you see that it's super verbose. The structure is different every time, and basically it just tries to be as helpful as it can be. Another example is a public-but-not-public CVE: a CVE ID which was mentioned, in this case, by various exploits, but the CVE hasn't been released yet. And again,

this is the output of the agent. You can see that it correctly showed that there is evidence of the CVE being present, and it was also able to extract information from the advisories and exploit PoCs about what product the CVE might be about. But this CVE is not on the CVE list and not in the NVD, and of course the risk score was calculated with huge uncertainty. So it's not super risky, but the uncertainty is huge, because it lacked a lot of the data about this vulnerability: it doesn't have CVSS, doesn't have EPSS, only

a bunch of exploits mentioning it casually. And here is an example of how ChatGPT handled this vulnerability. It actually found a very similar-looking ID, and at first it told me that I had made a typo, that there's another CVE, and here's the information about that CVE from the NVD. When I insisted, it spent two paragraphs of text insisting that there's an authoritative source of vulnerability data, but that, if I insist, some not very trustworthy third-party sources reference this CVE which I wanted to get information about. But in fairness, ChatGPT is now super good with these public-but-not-public CVEs, because their

search engine works fantastically, and if there's some sort of context about the vulnerability, it will give you the product and the affected versions, and especially if there is a vendor security advisory referencing this CVE, it will work super well. That wasn't the case maybe three or four months ago: it would just say that the CVE is not public and go off again on its, how to say, free flow, that based on the ID it was published in this year, and it would make tons of various assumptions. And of course this kind of vulnerability

management agent can answer more vague questions, like "give me recently exploited vulnerabilities in Citrix products". It correctly finds this CVE-2025-7775 and basically gives you the same information about it. But what's interesting is that these desktop applications are also moving in this agentic direction. I just used Claude here because it's shown better in the UI: first it did a search on the internet, then came up with the CVE ID, asked the MCP server about the details, and started to write its report. So, a bunch of takeaways. It's vibes all the way down. So,

basically I started this project with the assumption that I would of course vibe code the structure of the project and the use of the CrewAI framework, but that I would be writing the prompts myself, by hand. And I established a very elaborate logging system, because I actually wanted to capture all the prompts, all the results, and the whole execution tree, to see how my prompt changes were helping. That turned out to be super useful. But I didn't write a single line of these prompts, because of course Cursor writes all the prompts much faster and much more elaborately than I would. But

still, this logging system turned out to be super useful, because you can just copy-paste blocks of these logs into the Cursor prompt and say, okay, here is the problem, you fix it, and it would come up with the fixes. And again, because it's a lot of text, it's a little bit hard to control. Typically, when it needed to solve something, it would make changes in at least two places, like the task description and the agent's job description. Sometimes it would also affect the planning task. But again, vibe coding is a thing and it works

flawlessly. And you can't build what you can't evaluate, and here is, I guess, the biggest problem with these agentic systems at the moment, at least in the way I built mine, and with anything that gives suggestions and analytics: you need somehow to evaluate it. Building deterministic evaluations is possible. For example, if you have your code repository and some scanner to find vulnerabilities in it, and then you unleash your agent on that repository, you can see which vulnerabilities were fixed and which were not. That would give you a deterministic evaluation. But that is difficult, because if you, for example,

try to build a system that determines whether you are compliant with some particular cyber security standard or framework, you would need to answer the question of what it means to be compliant. And if you have several locations and many systems, it would be super difficult to give a deterministic evaluation. And again there's a caveat: for any kind of evaluation you can't control the inputs. Your system needs to be able to handle any input correctly. So your repository had better be some public repository, not your pet repository which you know well and overtrain your agent to answer

correctly on your repository. The next one is MCP. This is from the Andreessen Horowitz article from March this year, where they basically outlined the MCP market map. I haven't actually seen it updated; now everybody draws agentic market maps. But some people think that MCP is the new thing: maybe it will get rebranded or will evolve into a different standard, but this will be the primary way to access APIs in the future. So basically you want to make your data and your APIs accessible and workable for large language

models, or whatever comes next. And this of course creates a ton of new application security issues, and probably a few companies will be built around that. And this MCP ecosystem is definitely evolving. I wasn't able to find a nice slide-friendly map of the MCP ecosystem, but it has definitely grown a lot compared to what it was in March 2025. The more data you give the model to decide on, the better. Basically, I experimented a lot with various ways of presenting the information we have in the Vulners database. I tried very refined short snippets, or just

unleashing everything we have, and if that data can fit into the model's context window, the results are much better when you give it as much information as possible. So you need to be super careful not to overshoot the context window, but you need to give the model everything you know about your environment and the vulnerability intelligence, and it will work much better. But there's a trade-off: of course the more tokens you put into the model, the more expensive it becomes. And speaking about costs, here is a report of what I've spent working on this project. You can

see that it's basically a weekend project, and from early August to yesterday it amounted to around $80, all in OpenAI credits. Each particular call is super cheap, but they add up very quickly. So $80 is probably not that much over three or four months, but I was actually a little bit surprised that it cost me that much just playing with the models, essentially. So that's pretty much it. What I want to add: follow the white rabbits. It's much better, much more fun, and much more enlightening to tinker with the code than to read all the possible

reports or watch YouTube videos. With vibe coding it's definitely super easy to be a programmer now, at least if your code doesn't go into production. And here is the link to the repository, and you can find me on LinkedIn. I guess we still have some time, so I will try to see if the demo gods are kind to me today and try to show you how it works. Probably will go up.
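The deterministic evaluation mentioned in the takeaways (scan a repository, let the agent work on it, scan again) can be sketched as a comparison of two sets of findings. This is a minimal sketch under assumptions: `evaluate_run` and the finding IDs are hypothetical, and the scanner is taken to report stable identifiers for each finding.

```python
from typing import Dict, Set


def evaluate_run(before: Set[str], after: Set[str]) -> Dict[str, object]:
    """Compare scanner findings before and after the agent changed the repository."""
    fixed = before - after          # findings that disappeared
    remaining = before & after      # findings still present
    introduced = after - before     # new findings that appeared after the changes
    fix_rate = len(fixed) / len(before) if before else 0.0
    return {
        "fixed": sorted(fixed),
        "remaining": sorted(remaining),
        "introduced": sorted(introduced),
        "fix_rate": fix_rate,
    }


# Hypothetical finding IDs, as a scanner might report them.
before = {"FINDING-1", "FINDING-2", "FINDING-3", "FINDING-4"}
after = {"FINDING-4", "FINDING-5"}

report = evaluate_run(before, after)
print(report["fix_rate"])      # 0.75
print(report["introduced"])    # ['FINDING-5']
```

Because the same inputs always produce the same report, runs on a public repository can be compared across prompt changes without a human judging each output.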

How do I... Okay, there's my mouse. How do I move? I'm lost, because I can't output this. Oh, here it is. Okay. Fantastic. Let's do it. Let's select some prompt. Basically, I just have these three prompts. You probably understand that these are three ways to ask the same question. So let's just try and see if it works. Yeah, it started to work. So basically here you see all the prompts that are flowing into the model. Here is the execution tree, and it starts to think about what it

needs to do. What else? Yes, it starts to research its strategy for how to answer the question about this CVE. You already see the context of the model here, because the CVE ID is already inside it, and this will take three or four minutes. Again, it called the tool, got the whole tool output, and starts to process it. It also works with the memory and tries to find in memory the patterns it already saw and the entities it already processed, and basically

that's pretty much it. I can take questions while it's working. So the machine needs to work like that.

Okay, I can't see or hear you. Sorry. >> Testing. Okay, it's working. So, thank you for the talk. I've been doing a lot of similar work lately and I agree with the agent workflow. What I've also seen is that every time you try to do this in a cyber security environment, for the AI everything becomes critical, even though it probably isn't. So what I'm asking is: have you seen any cases of an early false positive or hallucination in an early agent that causes a major false positive in the final report? And also, do you only use this debug output as your observability tool, or do you have a separate

observability tool to analyze the reasoning chain? >> Well, hallucination is a problem, of course. Probably at least 30% of the prompts are anti-hallucination rules, and that's for a super simple agent which obviously doesn't work in a critical environment. You need to steer the model away from hitting all the familiar places. It can make a lot of assumptions based on its training, on the data it has seen, and you need to steer it to work with the data and stay in the context. And you often need to tweak the LLM that the agent uses, kind

of working with its temperature: for the report you put it higher, for data analysis lower. So it's this kind of stuff. And what was the second question? Sorry. >> If you have any particular observability tool to analyze the reasoning chain. >> No, no, it's just a weekend project, nothing serious. >> Okay. Thank you.

Yeah, I guess it actually completed the task. So basically here you can see, it's a little bit small, but here you have the result. So thank you. >> The question. Sorry, it's super hard to hear anybody. >> I just got the microphone. So congratulations on the work. I just have one question. From what I saw, the models are not trained, so you're just using a model with a different context, right? You're not retraining a model? >> No, no, it's just the stock GPT-4o model. I guess it's a year old. I made this tool work

with GPT-5, but it's super slow. It's impractically slow: you can't actually make any iterations if you have to wait for GPT-5 to complete. It's the stock standard GPT from the API. >> Have you thought about using smaller LLMs, but with models specifically trained for specific agent use cases? >> Well, again, it's sort of a weekend project. I haven't done a lot of evaluation. I just wanted to share what I've learned. >> Thank you.

>> Thank you.