Backdooring LLMs and bypassing Hugging Face malware scanners


Welcome back from this small recess. Now we'll move forward with David, who's going to be backdooring LLMs, so I'm really interested in hearing this. Thank you. >> Thank you, thank you. Hi everyone, thanks for having me here. It's my first time at BSides Lisbon, so I'm really happy to be here. I had a bumpy ride this morning on the plane, since Lisbon is going through a strong storm, but it was good. I'm excited to be talking today about how to backdoor language models and bypass the malware scanning controls on Hugging Face. It's a short talk, but I will

try to explain how Hugging Face security controls work and what it means to backdoor language models. When we talk about LLMs or SLMs, small language models, we always think about big providers like OpenAI, Facebook with Llama, Gemini, and more. But if we ask the scientists or researchers who really develop those LLMs, explore different LLMs or SLMs, and try them more than once a day or continuously, then we think about Hugging Face. If you're not familiar with Hugging Face, how can I put it? Hugging Face is the GitHub of LLMs, SLMs, models, and datasets. So everything you

need to pull new language models, datasets, or training data, you can find on Hugging Face, and it's open source. Now, what is the problem with open source? Open source is great, don't get me wrong. I love it, and we contribute a lot to different projects. But of course, when these kinds of platforms become famous, and we see it with GitHub too, they become famous with attackers as well. So Hugging Face has been flooded with malware, especially malicious models and malicious datasets, that were and still are, because it's still happening, targeting developers or scientists or

researchers or anyone who is pulling models, doing inference on their machine, and executing and using models on their systems. Well, these are the guys behind Hugging Face. I guess after that news they don't look so happy anymore. So today I will try to give you a brief overview of what that means and how we can create malware to upload to Hugging Face. We can use it for different purposes, right? But we shouldn't upload malware to Hugging Face. Let's put that down. But if you are into red teaming, for example, that's a great way to maybe get your foothold inside a company. I

didn't say that. All right. So, just a few words about me. I'm Davide Cioccia. I come from Italy and I live in the Netherlands, and I'm the founder of DCODX. DCODX stands for defensive coding and exploitation. We are a small cybersecurity company and we love research. You see from my titles that I'm also CPO at SecDim. At SecDim we provide secure coding training for developers and security engineers. How these two connect is that whatever we research at DCODX, we transform that knowledge into learning lessons at SecDim. About me: I'm a speaker, sometimes, I try, and I also train at conferences like

Black Hat and DEF CON, and sometimes at OWASP; you can find me there. I'm also leading the DevSecCon Netherlands chapter. If you don't know DevSecCon, it's a meetup or conference that happens once a year where we talk everything DevSecOps. On the fun side, I love tennis and padel. I know you guys in Portugal play a lot of padel too, definitely better than me. Now, what are the security implications when anyone can publish models, for example on Hugging Face, when models become open source? The first, the most obvious one, is that if a model is tampered with, of course

it can execute unwanted actions. The second implication is that we often trust models and datasets implicitly: we pull them and we use them. Of course, it's very easy to use APIs to do inference on our models, but it's also very easy to pull models from Hugging Face and do inference against those models. The third problem is that models are most of the time black boxes. We don't know what's inside or how they were trained, and we can't assess them. And the biggest problem, and I'm going to talk a lot about this today, is that we don't have scanners. We don't have SAST tools or SCA tools that are

very effective against certain categories of vulnerabilities, and we don't have malware scanners that are effective. I will show you what Hugging Face actually uses; they're almost non-existent. So the question is, and this is the title of the talk: how can we backdoor an LLM, an SLM, or a language model, or even create a malicious dataset that we can use to compromise a researcher's machine? There are mainly two techniques. The main technique that we're going to explore today is pickle deserialization, related to the usage of common libraries like PyTorch, NumPy, and scikit-learn, but there are more, and

other techniques that we're not going to touch today relate to TensorFlow and the Keras Lambda layers. All right. So I mentioned pickle. If you are in application security and you love Python, you know what pickle is. If you don't, pickle is a way of serializing and deserializing data. Pickle comes with a big problem: if we use pickles in Python, we are most probably exposing ourselves to deserialization vulnerabilities, and with deserialization vulnerabilities it means we are exposing ourselves to command execution. Now, how models or datasets are shared on Hugging Face is, as you can see from the slide, via pickle,

because models are pretty big, right? For example, I don't know how big this one is, but hundreds of megabytes, and before sharing a model with the world we need to serialize it. You see that this model has the extension .bin, so it's pretty much a binary, and whenever someone wants to try the model they will need to deserialize it. In application security we solved these problems with pickle a long time ago. We just said, "Hey, don't use pickles." So it went pretty well. The deserialization issues became fewer and fewer, but unfortunately now they're back. And why are they back? They are back

because the libraries that we use to load models and do inference on those models are based on pickle. For example, this is PyTorch. If you're using PyTorch, then torch.load is definitely using pickle, definitely doing deserialization of pickle files, and you see a big warning on all these libraries. I will show you a few more. The warning says, "Hey, look, this library is using pickles, so be careful when you pull models." I haven't seen these big warnings work well. But PyTorch is not the only one, unfortunately. For example, NumPy is another library used a lot to create datasets and to share data

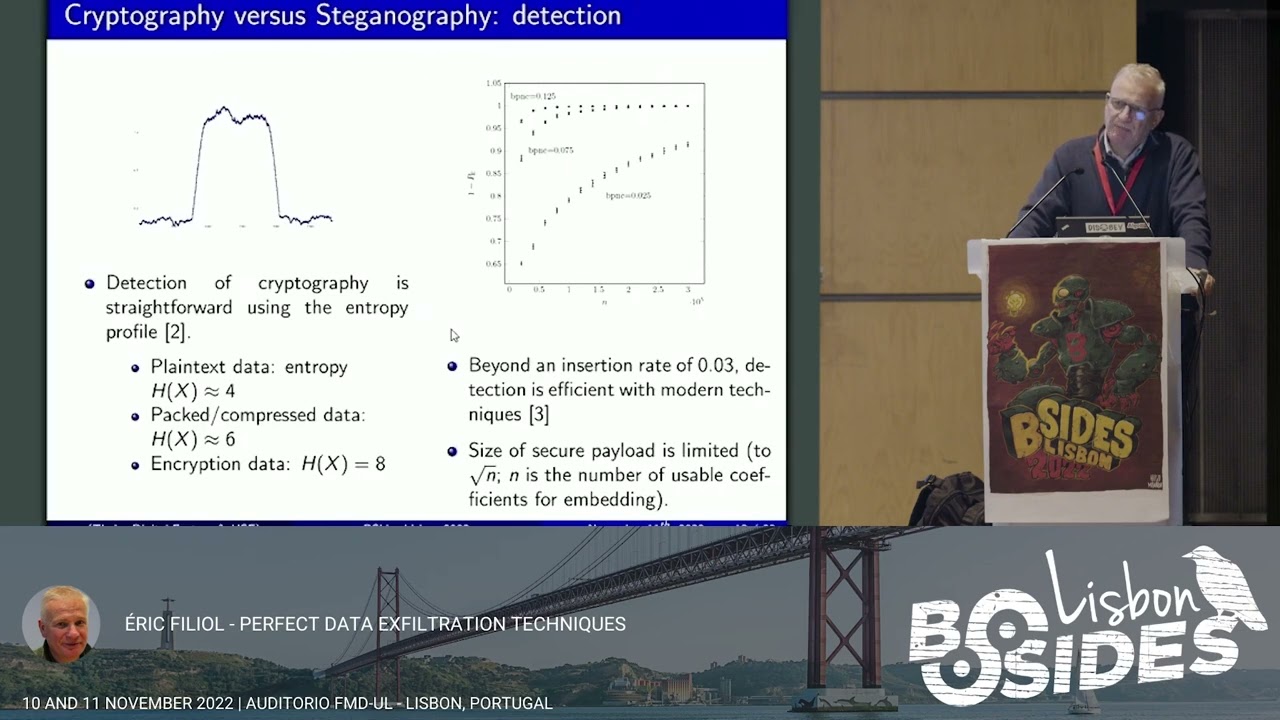

sets, and it comes with the function numpy.load to load datasets or models. You see that the third parameter of this function is allow_pickle, False by default. That is good. But unfortunately most of the models and datasets that are shared on Hugging Face are pickles, so in that case you will need allow_pickle=True, exposing yourself to malicious pickles and malware. One more: I don't know if you have heard about scikit-learn or joblib. scikit-learn is another library to load models and to create models that we're going to publish on Hugging Face, and the format used by scikit-learn is joblib. Now when we look at job

lib, it's kind of the same, unfortunately. joblib.load in the end uses pickle. I'm mentioning pickle a lot, but I haven't told you what a pickle looks like. Well, like that, when you eat pickles. But a hex dump of a serialized pickle object looks like this. It has a pickle protocol, which depends on the protocol that we're using. It stores the frame length, so the length of the payload, for example the binary or the Python object that we serialized, and it ends with opcode 2e, that

is the stop sign. Now, if we think about how we produce that hex dump, the content that would be stored in a binary, Python basically has two functions, dump and load. With dump we serialize an object, and with load we deserialize this object to be used. This is what happens in every library mentioned here, like scikit-learn, NumPy, or PyTorch: they use the load function and the dump function from Python to serialize and deserialize pickles. Now, how do we create a malicious pickle? This is deserialization

vulnerabilities 101, but it's important to understand what we're going to do later. To create a malicious pickle, we can abuse the `__reduce__` method. You see in the slide that in the class Exploit we have this function called `__reduce__`, and inside it we have our malicious instruction. In this case we're just running id, so we're just printing the user's groups, but you can imagine that you can put any Python code in there, so you can actually write your own shell, your own backdoor, and embed it in a Python object. Now why is this `__reduce__` function important? Because every time we

run load on a serialized object, on a pickle object, the first thing that happens during deserialization is that we look into `__reduce__` to understand how to represent that object and what to do with it. So `__reduce__` is the first function that is executed; it helps the deserialization process understand how to rebuild the real object, the real data, and in this case we are using it to execute commands. Now you will say, okay, we create this malicious pickle and we upload it to Hugging Face, so what? Well, as we saw from the news, this is a big problem, and Hugging Face has been taking some

remediations. As part of these remediations they included a bunch of security tools to scan every file that is uploaded to Hugging Face. You might be familiar with JFrog, a commercial vendor tool, and Protect AI, the same. These are commercial tools that scan those files. I will show you how it's done, but basically they implement a deny list and an allow list, looking for signatures. So we are back in 1980, when we were trying to write antivirus with signatures. But one of the tools, I think it was maybe the second tool that was added by Hugging Face, if I remember correctly, is the last one. I don't know

if you can read it, but it says PickleScan. PickleScan is an open source tool maintained by one person, and it was and still is one of the main tools used by Hugging Face to detect malicious models, malicious binaries, malicious pickles, and malicious datasets. And the last one is ClamAV. We're just going to leave it there. Now, how does PickleScan work? Did you watch this series? Do you know this series? No? Yeah. Okay, cool. So, let me just try and see whether I can open this. No, I cannot. One second. Whoa. I'm trying to move my... Oh, here you go.
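To make the pickle mechanics from the slides concrete, here is a minimal sketch of a pickle whose `__reduce__` hands the loader a callable to invoke. Strictly speaking, `__reduce__` runs when the object is dumped; it returns a callable plus arguments, and it is the loader that later calls them. The class name `Demo` is hypothetical, and the harmless built-in `print` stands in for the `id` command shown in the talk.

```python
import pickle
import pickletools


class Demo:
    """Hypothetical class; print() is a harmless stand-in for a real payload."""

    def __reduce__(self):
        # Called at dump time. The returned (callable, args) pair is stored in
        # the stream, and the loader invokes callable(*args) at load time.
        return (print, ("this ran inside pickle.loads",))


blob = pickle.dumps(Demo(), protocol=4)

# Walk the opcodes without executing anything.
opcode_names = [op.name for op, _arg, _pos in pickletools.genops(blob)]
print(opcode_names)

# The stream starts with PROTO (0x80) plus the protocol number and ends with
# STOP, which is the byte 0x2e ('.').
print(blob[:2].hex(), blob[-1:].hex())

# Loading is what triggers the call: the message is printed here, and the
# "object" we get back is just print()'s return value.
result = pickle.loads(blob)
```

Nothing in `blob` contains the class `Demo` itself; the stream only records which callable to import and what to pass to it, which is why loading an untrusted pickle amounts to running untrusted code.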

Okay. Can you see this? Okay, cool. So, I want to show you how PickleScan works and why I said that we're back in the old times when we were writing antivirus with keywords. How PickleScan works is that it's basically trying to read the pickle files or the binary files and match malicious primitives that might be hidden inside those files. You can imagine that this doesn't really work. We experienced it with malware: it's easy to bypass defenses that implement signatures, and this is exactly the same. So one of the biggest problems that Hugging Face is

facing is that the security controls that are implemented are not really strong, so it's pretty easy to bypass them. Let me see if I can go back to the slides. I hope so. See?

Okay. This goes like this.
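The keyword matching described above can be sketched with the standard library alone. This is an illustration of the general approach, not PickleScan's actual implementation: the `DENYLIST` contents and the helper names `referenced_globals` and `scan` are assumptions made for this example, and the `STACK_GLOBAL` handling is a deliberate simplification.

```python
import linecache
import os
import pickle
import pickletools

# Hypothetical denylist of (module, name) pairs; real scanners ship longer ones.
DENYLIST = {
    ("os", "system"), ("posix", "system"), ("nt", "system"),
    ("builtins", "eval"), ("builtins", "exec"),
    ("subprocess", "Popen"),
}

STRING_OPCODES = {"SHORT_BINUNICODE", "BINUNICODE", "BINUNICODE8", "UNICODE"}


def referenced_globals(data: bytes) -> set:
    """Collect the (module, name) pairs a pickle asks the loader to import."""
    found = set()
    strings = []  # strings pushed so far, used to resolve STACK_GLOBAL
    for opcode, arg, _pos in pickletools.genops(data):
        if opcode.name in ("GLOBAL", "INST"):
            module, name = arg.split(" ", 1)
            found.add((module, name))
        elif opcode.name in STRING_OPCODES:
            strings.append(arg)
        elif opcode.name == "STACK_GLOBAL" and len(strings) >= 2:
            # Simplification: assume the two most recent strings are the
            # module and the name. Memoized strings are not tracked here.
            found.add((strings[-2], strings[-1]))
    return found


def scan(data: bytes) -> list:
    """Return the denylisted globals referenced by the pickle, if any."""
    return sorted(referenced_globals(data) & DENYLIST)


# Pickling a function only stores a reference to it; nothing is executed.
print(scan(pickle.dumps(os.system, protocol=4)))           # flagged
print(scan(pickle.dumps(linecache.getlines, protocol=4)))  # not on the list
```

The second call shows the weakness the talk points at: a denylist flags only the primitives its authors thought of, so any callable missing from the list passes as clean.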

Okay. Yeah. So in the end it's a whole cat and mouse game. There is a new malware, a new dangerous pickle or binary or model that is uploaded to Hugging Face, machines are compromised, and then the signatures are updated. One of the most recent incidents, or ways to bypass these security tools and upload valid malware to the platform, is called the nullifAI attack, and it's a very simple attack. I showed you what a pickle looks like at the beginning of the slides, the hex dump that we saw. What this attack exploits is

the fact that these scanners need to scan valid pickles. What researchers from ReversingLabs did is that they thought: okay, because these tools scan the whole file, the whole binary, to detect malicious activity in the language model or dataset, why don't we change one opcode in that hex dump and see whether we can bypass the security controls, the scans? So what they did, you see it in red down there, is they changed one opcode from 62 to 52. What does that mean? That means that the whole payload, the whole malicious pickle that contains our backdoor, cannot be scanned because it's

broken. When we run tools like PickleScan on this content, the tool will just raise an alert saying, hey, the pickle is broken, or even worse, it will say it's not a pickle. But when we load this blob, this binary, using one of those functions like numpy.load or torch.load, and I will show you a short demo if I have time, it will execute the malicious code that we embedded in it. Oh, there you go. I'm not sure it's readable, but this is an example of what happens with the broken pickle. When we try to load a binary that contains the

hex dump that we just saw, with the one flipped opcode, you see that the command is still executed on the machine when we run load. The library will return an error saying, okay, this is not a valid model, this is not a valid file. But unfortunately we are already able to compromise someone who loaded that model or pulled that model from Hugging Face, for example, or from any other vector. This happens because of how pickle works: the file is read in sequence, starting from the top until the end. This flow breaks only at the end. So our payload,

our malicious payload, is most probably in the middle, and it is executed. Now, as I said, whenever there is a new bypass, PickleScan is updated, or all of those tools are updated with those signatures, but there is much more to research, and this is what we're doing at DCODX and SecDim. I want to share with you, I don't know if you know huntr, but basically they have a bug bounty program, if you are into bug bounties, and they recently launched a category for compromised models. If you can find a way to bypass the security restrictions, security controls, security

scanners on Hugging Face using one of those techniques, well, you will get a bounty, of course. And you see there is much more. It's not only about the model, it's also about the libraries that we use. I think in the previous talk you mentioned that things are evolving very fast, and they are, and all the frameworks and libraries that are used to load and use models are changing continuously. So it's definitely a green field for bounty hunters. Now, I promised in the description of this talk that I would share some CVEs, well, two

CVEs. Unfortunately I will share only one, because the other one is still not public. Let me... Okay, I'll try to do this. I cannot.
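The broken-pickle behaviour described earlier (the payload runs, then the loader fails) can be reproduced in a minimal sketch. This is not the actual nullifAI sample: the harmless `print` call stands in for the payload, the invalid byte `0xff` replacing the final STOP opcode is an assumption chosen for illustration, and `pickletools.genops` stands in for any tool that insists on parsing the whole stream.

```python
import pickle
import pickletools


class Demo:
    """Hypothetical class; print() is a harmless stand-in for a real payload."""

    def __reduce__(self):
        return (print, ("payload ran before the parser gave up",))


valid = pickle.dumps(Demo(), protocol=4)

# Replace the trailing STOP opcode with a byte that is not a pickle opcode.
# The length is unchanged, so the frame header in the stream stays consistent.
broken = valid[:-1] + b"\xff"


def parses_cleanly(data: bytes) -> bool:
    """True if every opcode in the stream can be decoded statically."""
    try:
        list(pickletools.genops(data))
        return True
    except ValueError:
        return False


def try_load(data: bytes) -> str:
    """Load the pickle and report whether the loader accepted it."""
    try:
        pickle.loads(data)
        return "loaded"
    except pickle.UnpicklingError as exc:
        return f"rejected: {exc}"


print(parses_cleanly(broken))  # static parsing fails on the bad opcode
print(try_load(broken))        # the message is printed first, then the error
```

The loader processes opcodes in order, so the call recorded by `__reduce__` has already happened by the time the invalid byte is reached. A tool that gives up when the stream does not parse never gets to report on that call.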

All right, so this is the PickleScan project. As I said, it's an open source project. It's on GitHub. Is the maintainer there? No. Okay. So it's an open source tool that is pretty much a backbone of Hugging Face's detection. As you can see, the list of security advisories is growing quite a lot. When we first reported the issues, we were here: one, two, three, four, five, six, the seventh. So PickleScan is pretty strong at detecting command execution threats, but it was not too good at

detecting data exfiltration threats. Most of the time, when we create a backdoor or want to compromise a machine, it's not always about the ability to execute commands but about exfiltrating data that can bring us to new avenues or let us escalate our privileges. Just to show you the PoC that resulted in the CVE: in this PoC we had to be a little bit creative and find primitives that could allow us to call an endpoint and load or read a file. So we identified, let me see, the primitive

linecache. Are you familiar with it? We were not either. We used it to read, for example, the /etc/passwd file. Then we had the other problem: okay, we can read a file, but how do we exfiltrate it? We cannot use HTTP requests; we cannot really use the common libraries in Python. So we decided to give it a try using DNS, and we were actually successful in using DNS calls to exfiltrate data. In the end we put everything in a binary file and uploaded it to Hugging Face. No alerts. Everything looked green, fine. And, well, it gave us a bounty.

Now, I cannot share the other CVE because there is no CVE yet; it's still under approval. One second.

As you can see, I'm struggling with the monitor. So, how do we defend ourselves if we are using Hugging Face, if we are using models, if we are trying small language models? Well, the first thing, of course, is the same advice that we had in AppSec a long time ago: pickle is broken by design. So don't trust models implicitly. If you can assess a model, if it's a pickle and you can see what's inside, do that. PickleScan is not really trustworthy, so don't rely on it; Hugging Face is adding more and more tools. And if loading pickles is needed because, you

know, a model that we want to use is published as a pickle, then make sure to use safe load; newer libraries implement better loading methods. And the last thing is that Hugging Face comes with a function called from_pretrained that is safe by default and allows you to pull models and do inference on them safely. Well, right on time. That's all I had to say. If you want to be in touch, that's my LinkedIn, email, and Twitter. I still call it Twitter; I'm nostalgic. So feel free to send me a connection request, and if there are any questions, I'm happy to answer. Thank you.
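One way to follow the advice above when a pickle has to be loaded is to restrict which globals the unpickler may resolve, a pattern documented in the Python standard library. The sketch below is a stand-in for the safer loaders the talk alludes to, not their implementation; the names `SAFE_BUILTINS`, `AllowListUnpickler`, and `restricted_loads` are hypothetical. In PyTorch, `torch.load(path, weights_only=True)` plays a similar role, and `numpy.load` keeps `allow_pickle=False` unless you change it.

```python
import builtins
import io
import pickle

# Hypothetical allow list: only these builtins may be resolved by name.
SAFE_BUILTINS = {"dict", "list", "set", "frozenset", "tuple", "range", "complex"}


class AllowListUnpickler(pickle.Unpickler):
    """Unpickler that refuses every global not explicitly allowed."""

    def find_class(self, module, name):
        if module == "builtins" and name in SAFE_BUILTINS:
            return getattr(builtins, name)
        raise pickle.UnpicklingError(f"global '{module}.{name}' is not allowed")


def restricted_loads(data: bytes):
    """Drop-in replacement for pickle.loads that applies the allow list."""
    return AllowListUnpickler(io.BytesIO(data)).load()


class Demo:
    """Hypothetical class; print() is a harmless stand-in for a real payload."""

    def __reduce__(self):
        return (print, ("this should never be printed",))


# Plain data made of basic types loads as usual.
print(restricted_loads(pickle.dumps({"weights": [1, 2.5]})))

# A pickle that asks for a callable outside the allow list is rejected before
# the callable is ever resolved, so nothing runs.
try:
    restricted_loads(pickle.dumps(Demo()))
except pickle.UnpicklingError as exc:
    print("blocked:", exc)
```

An allow list inverts the scanner's problem: instead of enumerating everything dangerous, it names the few things a legitimate file is expected to need and refuses the rest.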

>> Great presentation, thank you. How was the DNS exfiltration? How did it work, actually? >> How did the DNS exfiltration work? >> DNS exfiltration. So the question is how the DNS exfiltration worked. We basically sent a TXT record towards an endpoint, and we had to normalize the content. In that case we read /etc/passwd, for example, and we had to normalize it: remove all the colons, replace them with underscores, and make it one line. So basically we had to exfiltrate the whole content of the file line by line. >> And it would go to your DNS server,

right? >> Yes, it was towards our controlled DNS server. >> Hello, thank you for your presentation. Do you have any opinion about using dill or safetensors instead of pickle? Do they all suffer from the problems of pickle, or do safetensors or dill, by avoiding dealing with pickle directly, let us avoid these kinds of vulnerabilities? >> Wait, if I understood the question... >> Yes, there are other serialization libraries for Python, like dill. Pickle is one, and you have dill as an alternative, and Hugging Face is now using safetensors a lot for serialization. Did you dig into whether safetensors actually solve the

problem, or whether they don't? If you have any thoughts about safetensors, or even dill or cloudpickle. >> Yeah, I mean, all these libraries are using pickle. What we see is that PyTorch is maybe the most used one, and, well, the load function is definitely vulnerable, but newer versions, as I mentioned, are providing safer ways of loading pickles too. Most of the time you don't need to expose yourself to deserialization, and with newer frameworks, of course, this is not really an issue. The problem is when you use, for example, PyTorch to create your binary. In that case, well, the best

way to prevent it is actually awareness among developers and, if possible, using the safe load alternative. So that will be... >> Okay, thank you. We'll need to move forward. I think David will be happy to answer any further questions. >> Thank you. >> Thank you.