Ignorance is bliss - does privacy matter?

Hi guys, nice to meet you all. So this is a little bit about me. That's me riding at Cheddar Gorge last weekend on a bike for 128 miles. Don't know why, but I'm a bit of a geek. I do stuff for charity. I love infosec and all that kind of stuff. I'm a STEM ambassador and I'm currently at IBM as a security architect. So, let me just jump straight in, I suppose. Ignorance is bliss. So, who's seen The Matrix? Has anybody here seen The Matrix? So, you probably know what I mean by ignorance is bliss, when Cypher makes a deal with the Agents to go back into the Matrix

because he knows that that steak isn't really a steak. Yeah. So what we're looking at there is a kind of willful ignorance. Cory Doctorow had a good deal to say about this at the Eleventh HOPE conference last year: denial begets nihilism, the belief that nothing in the world has real existence, or a willful ignorance, where we choose to ignore something as though it doesn't exist. So a lot of the time we do that to fulfill our own self-preservation, and more often than not it will lead us down to a kind of confirmation bias which, you know, is a

whole other world that we could go into, but we'll keep it brief for the 15 minutes that we have. So one of the things that fascinates me about this, I suppose, is that you might be looking at a social media channel where you like certain things and you follow certain trends. And of course, the thing about that is we then start to go down the road of the echo chamber. I don't know if anyone's heard of an echo chamber before, but what I mean is that if you're in a room full of people that all agree on the same

principles and the same ethics, then you're never going to have a two-way conversation. You're never going to have conflicts of interest. And I think that's a little bit sad in a way, because I like a bit of banter. I like a bit of, you know, "No, I don't think you're right on that." And I think that's what makes us good. The thing is, this might be good in a perfect utopia, but we're human. We're not perfect by any way, shape, or form. So, that takes me on to nothing to hide, which really makes me laugh. You know, people

always say, "Oh, I've got nothing to hide." Got nothing to hide. So a few years ago, I used to say to people, "Well, take your clothes off." Take your clothes off. Tell me what your pay is. Tell me what ailments you have. And I'm completely screwing the slides up there, but, you know, again, Cory summed it up perfectly last year: it's not about what you've got to hide. It's about what you choose to keep private. It's about what you choose to keep personal. And sometimes, you know, I think

there's a boundary which is being crossed quite a lot of the time. We all know what you do when you go to the toilet, right? We all know how people were made, you know, your mom and dad. But it takes a special kind of person to go to the toilet with the door open, right? So it's about choosing what we want to keep private. So I can't really do a presentation on privacy without agreeing to the T's and C's. Who agrees to T's and C's in this room? We do it all the time. You

know, "Oh yeah, I'll look that up. Yeah, I'll agree to the terms and conditions." What does it actually say? Heck, I'm not sure I know. And it reminds me of an old episode of South Park in which they make this human centipede. You may or may not remember this, but little Kyle there gets a new iPod and he wants iTunes. So, yeah, he willfully accepts the terms and conditions, and then Steve Jobs and his crew come along to kidnap him and make him into an Apple concoction. So, kind of going back to the

tick box, you know, the one that you have to read three or four times to actually understand whether you are or are not sharing your details with a third party. And the problem that I see with this as well is: do these third parties use the same security mechanisms as the company that you're entrusting your information to in the first place? Or, for instance, what are they doing with that data? How are they measuring that data? What are they using that data for? The chances are we probably don't know and we'll never know. And you

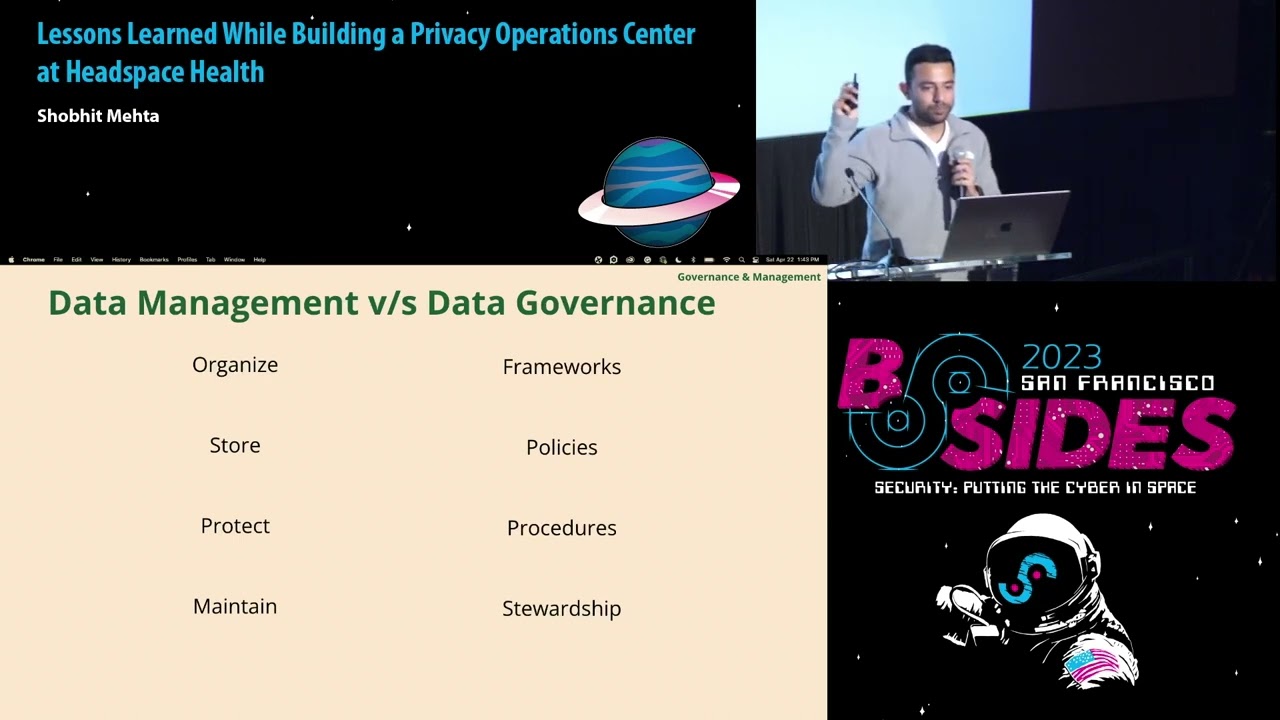

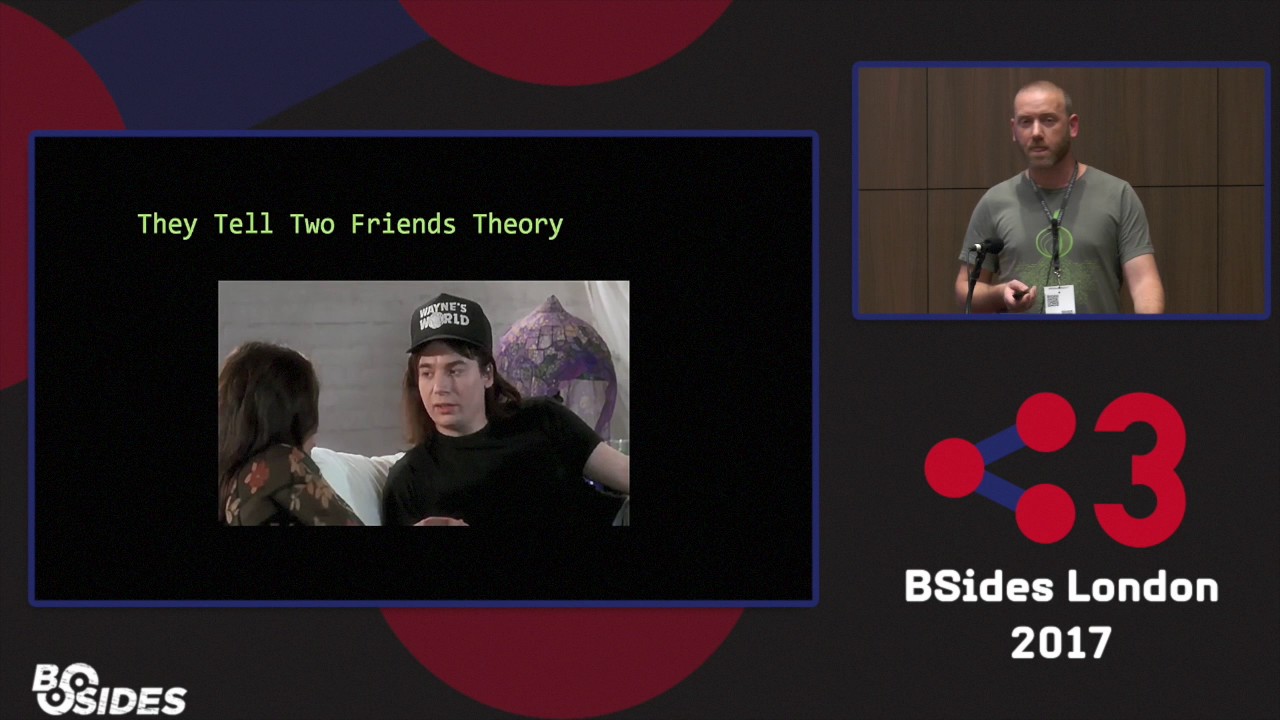

know what I call that is the "I tell two friends" theory. So, if this works

Well, you know how these things start. One guy tells another guy something and then he tells two friends and they tell two friends and they tell their friends and so on and so on and so on. You know how these things go. So, I mean, we've got all this data out there. We don't know what's going on with it. And ultimately, who now has it, if that makes any sense at all? But I think you know what I mean. Sorry, let me just get this. So we've covered ignorance and privacy denial, nothing to hide, T's and C's, which takes me on to the data deluge. You know, who's got cloud storage

nowadays? Everyone, right? You, me. I mean, even granny's got it, right? We've got it because it's helpful for all sorts of reasons, and we can use it for many, many different things. The thing I see with this is that every single day there are billions of photos, videos, texts, the metadata, not to mention databases and banking transactions, and all across the globe businesses are collecting our consumer preferences. Yeah. Everything about us. And this deluge of data is growing so fast. I mean, in 2013 we were looking at 4.4 zettabytes, and in 2020 we're looking at 44 zettabytes. For those that don't know what a

zettabyte is, one zettabyte is a trillion gigabytes, so we're looking at 44 trillion gigabytes. So it kind of puts into perspective what this data deluge is doing. And, you know, we drive down the street and there are cameras taking pictures of us, and all this information is being collected and used for whatever reason. And of course, it doesn't matter because I've got nothing to hide. And there are new laws and legislation, data protection regulations coming out, you know, GDPR, but there's still a lot of ambiguity around the actual ways in which they can process your information. You know, I've been on the

phone quite a few times to the ICO and asked them questions about the data that I'm processing or handling. They've actually come back and said, "I don't actually know. We don't have an answer for you." And so, what is it that they can actually fundamentally do with this data going forward? So, does privacy matter? I mean, what are the concerns? What's the information overhang? What's UBI and the singularity? So that's what we'll cover right now. So, in a society where we're taught by measuring from such a young age, right? You know, how much is this one better than the other one? I want the latest iPhone 42

and things like that. And I get the idea of measuring to a degree. If we want to measure something to put in a new health care system, to treat cancer, or to find a new renewable energy source, great. I'm all for it. But to build up a digital profile about what I'm looking at on the internet, what I'm doing on the internet, what things I've been browsing, with every single interaction you're possibly doing being logged, not to mention the mass surveillance which is going on and the recent request by

the government to have near real-time access through the ISPs. You know, we start using this stuff for even more purposes, purposes that we perhaps didn't have in mind when we started measuring in the first place. So it's really interesting to understand what information it is that's being built up on our digital profiles, and how this will be used in the future. The big problem I see is the information overhang, as I like to call it. So this is just a guesstimation slide, right? We've got a load of compute out in the

wild right now. Loads of compute. How much of that is actually being used? I mean, this laptop here right now, it's a quad core with however many gigs of RAM. I'm showing a PowerPoint presentation right now. Is that making full use of the compute and memory power of my computer? Probably not. So this is what I call the information overhang. And we're getting better, you know, with scalability, AWS, containers. The average life of a container now is 7 seconds in the cloud, which is pretty gripping stuff. But with the adoption of AI, you know, every single

week you hear something new about some newfangled AI or AGI. Now, does anybody know AGI or deep AI? So AGI is the term for artificial general intelligence. So when the AI becomes self-aware and it's able to pass a Turing test, what will happen with this extra compute power which is available to it? We don't actually know what's going to happen. So when you look at the information overhang and an AGI which is able to change and upgrade and expand and scale beyond anything that we can actually fathom in our heads, you know, will it develop at a rate

that we can't actually keep up with? That is a really scary thought. I mean, Google's director of engineering, Ray Kurzweil, estimates that around 2045 is when we'll start developing the first true AGI, and that's when we'll probably start looking at what's called the technological or the economic singularity. So UBI is the universal basic income, and then there's the technological and economic singularity. So Finland are already trialling universal basic income and, like I said, when the AGI really starts to take off, it's hard to say whether it will be great or if it will be destructive. You know, like I said,

it's changing at a rate that even we can't comprehend. It's making better decisions. It's making technology grow exponentially. So what then happens with our cognitive jobs? What happens with the jobs that we know today that are going to be able to be done by this AGI or this robot or this deep learning? Last time round, when we had the industrial revolution, it took our mechanical jobs. When we have the economic singularity or the technological singularity, it'll be gunning for our white-collar and our cognitive jobs. So, you know, it's a really kind

of interesting subject, especially when we correlate it with all the information that I talked about previously, all the information that's being kept about us, how it's being measured, how it's being scored, how we get these annoying adverts down the side for all the things we've been looking at. Pi-hole, if you ever want to get rid of that at home. But it kind of brings me back to the rhetoric that we first started this conversation with. At the end of the day, does privacy matter? Well, ignorance is bliss, right? So, thanks for listening. Have you got any questions? And there are some links up there.

[Applause]
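
For reference, the data-volume figures quoted in the talk (4.4 zettabytes in 2013, 44 zettabytes in 2020) can be put into gigabytes with a quick calculation. This is only a minimal sketch, assuming decimal SI units (1 ZB = 10^21 bytes, 1 GB = 10^9 bytes); the function names are illustrative and do not come from the talk.

```python
# Assumption: decimal (SI) units, i.e. 1 ZB = 10**21 bytes and 1 GB = 10**9 bytes.
BYTES_PER_ZB = 10**21
BYTES_PER_GB = 10**9


def zettabytes_to_gigabytes(zb):
    """Convert zettabytes to gigabytes (hypothetical helper for illustration)."""
    return zb * (BYTES_PER_ZB // BYTES_PER_GB)


def annual_growth_rate(start, end, years):
    """Compound annual growth rate implied by growing from start to end."""
    return (end / start) ** (1 / years) - 1


gb_2020 = zettabytes_to_gigabytes(44)
growth = annual_growth_rate(4.4, 44, 2020 - 2013)

print(f"{gb_2020:,} GB")             # 44,000,000,000,000 GB, i.e. 44 trillion
print(f"{growth:.1%} growth per year")  # about 38.9%
```

On these assumptions, one zettabyte is one trillion gigabytes, and a tenfold increase over seven years works out to data volumes growing by roughly 39% each year.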