PW - Detecting Credential Abuse


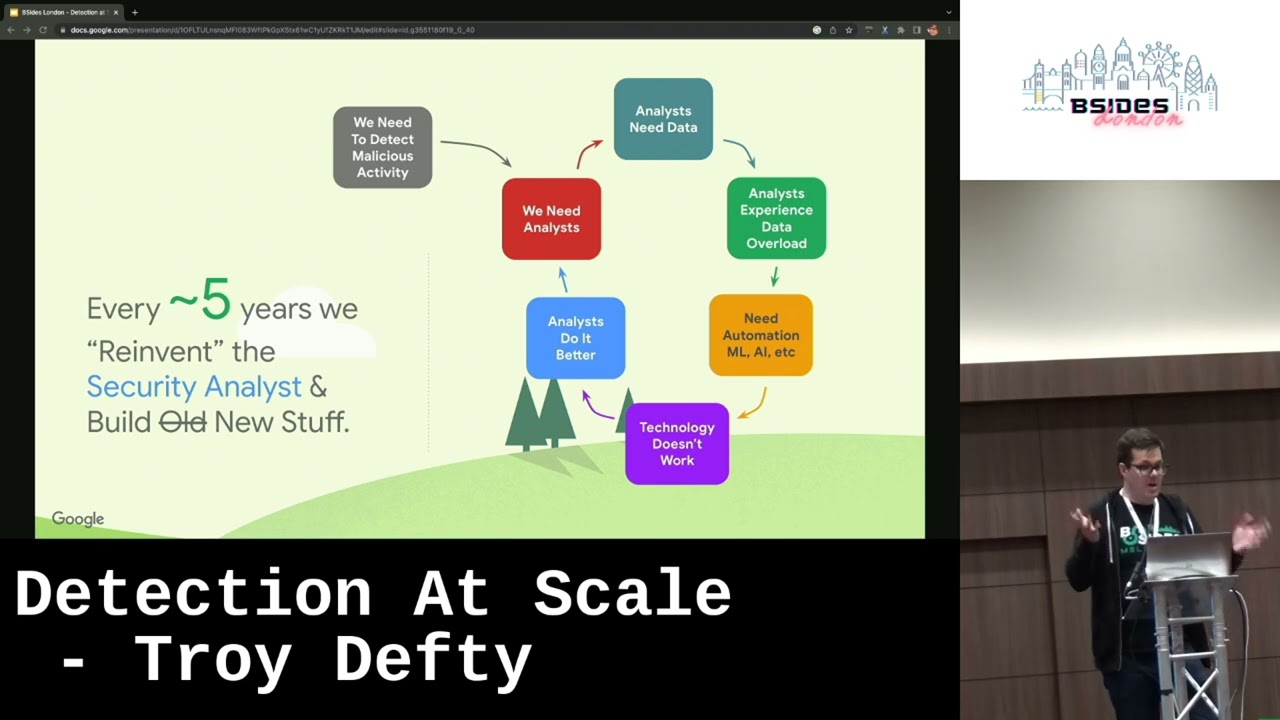

Hi there, folks. Thanks again for your time today, thanks for the opportunity to present to you all, and thanks to the volunteers for making it happen. Before we introduce ourselves, I'd like to pose a question to the audience: how many of us in this room full of security enthusiasts have been the victim of account compromise, phishing, stuff like that? I was really nervous when I thought about asking this question because I had no idea how many people would put their hand up. Actually, it looks to be, roughly speaking, about a third, maybe 40% of the room. The rest doesn't know it yet, maybe. No? Maybe so. Right, and

I think the interesting part of this is that we're a hardened target, right? You can imagine that if we asked this question in a room full of folks who are maybe not as enthusiastic about security as we are, that number is likely going to be significantly higher. So we're here to talk about a pretty common attack vector nowadays, one that we still see happening at pretty large scale in the wild. It's worth noting that the detections we'll be talking about here are possible with commercially available tools and open source, and there's nothing specific to the company here. But in the interests of

furthering both the red and the blue sides of the proverbial coin, we're basically going to be looking for the needle in the log haystack.

Just to introduce myself quickly: I'm Troy. I'm originally from South Africa, and I've spent most of my life in the UK and Australia. I've been in the industry for about 13 or 14 years. For about nine of those years I was in red teaming, before I joined Google's blue team about four years ago.

And I'm Kathy. I work with Troy here in the detection space at Google. I have worked in the security industry for about a decade, and currently I'm a security engineering tech lead.

Professionally, my passion is in detection engineering and solving complex problems with coding. Outside of work I love running, the outdoors, and reading books. Thank you.

So, just quickly to cover the agenda: we'll first be talking about some typical attacker motivations that we see for targeting credentials, why they target credentials, and what they use them for. We'll talk about some credential fundamentals for those of you who may not be familiar with some of this stuff: what they are, what they represent, what we know about them, and how we can use that to our advantage. Then we'll go into the majority of our talk, which is about the detection patterns that we

use to detect credential abuse, including some controls that can support us in doing so, and some of the challenges involved. For those of you in blue teaming roles, some of these might sound quite familiar. Finally, we'll talk about what we can do after a detection has fired, and what we need to be aware of when investigating to help us respond and actually remediate the incident when it happens. We'll hopefully have some time for questions at the end, but if not we'll be hanging around outside, so please feel free to ask us there as well.

When we talk about credential compromise, we need to remember that

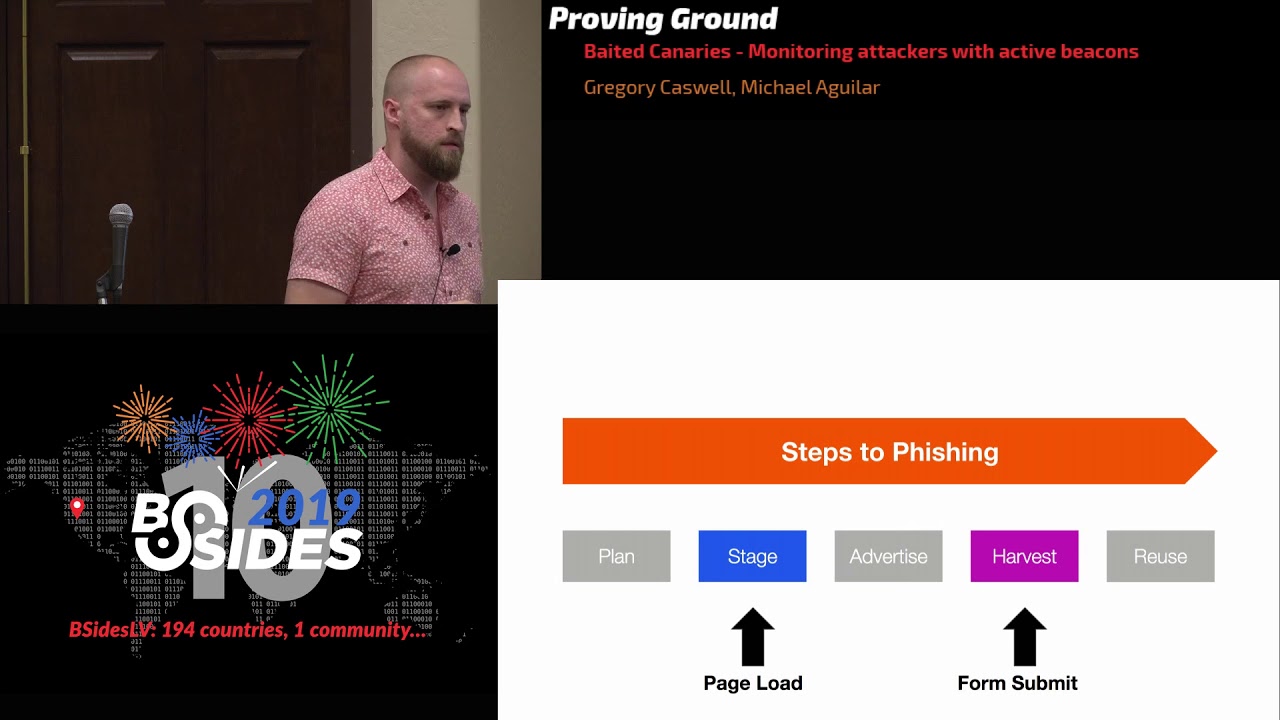

credential compromise really means account compromise, and account compromise can be used for many, many things, including spam or abuse campaigns, ransomware, scams, and social engineering more generally. In the more sophisticated instances you'll see initial foothold in target organizations, lateral movement, privilege escalation, all these kinds of really bad things. As you can imagine, for an attacker this is a highly sought-after set of accesses. Some of you might remember Lapsus$, the group that used to, and I guess maybe still does, purchase credentials as a form of initial access, amongst other things. It's worth noting that this approach is not necessarily lower sophistication, because even though you have the creds, you still

have to be able to maintain operational security and maintain stealth, because just because you're in the front door doesn't mean no one's looking for you. Another highly relevant case is from June last year: Storm-0558, a China-attributed APT group that targeted Microsoft and gained access to consumer account signing keys. These were then used to create credentials for specific high-value end-user Outlook accounts and subsequently compromise those accounts. Attacks like these are highly sophisticated and often require the backing of nation states or similar due to the resource investments required. Due to this high investment cost, the targets are often of significant importance to the attacker, hence why

we see these kinds of high-investment, high-value capabilities being burned to gain access here. And finally, many APT and cyber crime groups also abuse credentials for a variety of ends, including things like crypto scams via social engineering, or vote manipulation using large volumes of targeted accounts. But the really important thing to remember is that a lot of these groups operate as a business, and they need to have a return on their investment. So, as defenders, detecting the attacker trying to do this sort of stuff really means we need to be looking at, first of all, credential theft: the attacker stealing a legitimate credential issued

to a legitimate user and using it, typically to compromise the account as we talked about before. Next, credential sharing and sale: an attacker purchasing a user's credentials, or the user voluntarily sharing their credentials with an attacker. In many ways this is similar to the credential theft problem, but it has one important nuance, which we'll talk about during the investigation process. And finally, credential forgery, which is an attacker compromising key material, as I mentioned a minute ago, to generate arbitrary credentials out of band of the identity-providing system, or, more generally speaking, just being able to generate credentials without actually being the IdP. Just a note: we won't touch on credential exfiltration here because

that's a subset of the exfiltration detection problem, which is an entire field in itself. At this point I'll hand over to Kathy to speedrun some credential basics.

Okay, so before diving into the details of the detections, we'll take a step back and do a walkthrough of the credential basics, like Troy mentioned. Credentials can be a bunch of things depending on the context. For example, we see passwords, hardware multi-factor auth tokens, browser cookies or tokens, certificate-backed cryptographic signing keys, and the list goes on. More generally, credentials can be considered to be something that is used to prove one's identity and

tend to be unique. Some credentials can also be used to derive, or be exchanged for, other credentials. For example, you log into a website with a username and password, maybe an MFA challenge as well. The website gives you a cookie, which you then use in subsequent requests during that session rather than providing your password and MFA challenge every single time. Similarly, a refresh token issued to a client can be exchanged for short-lived access tokens. These are all examples of credential exchanges. An important point to bear in mind: credentials can be used to attest one's identity and, by extension, their access. Credentials not only act like a badge or ID that proves identity, but also act like a key that

can be used for unlocking access. So if a malicious actor manages to steal your credential and there aren't good controls in place, they can attest your identity and obtain sensitive access. And finally, credentials generated for users and identities are related. One can have lots of credentials linked to their identity, and we can often computationally build a model of this relationship using log data containing different context about these credentials. This relationship is often the key to joining seemingly unrelated data sources and piecing together the full picture for both detection and investigation. So when it comes to building detections for credential abuse, it's also important to understand some of the common properties that can be

leveraged to enable such detections. Here are some of the things we know about credentials. Firstly, the trust we can bestow on a credential is positively correlated with the strength of the challenges that need to be met for the credential to be issued. For example, we shouldn't consider a password to be a strong form of credential. We have all seen the bad-password memes: passwords are user-generated, single-factor, unbound values. So we can't trust passwords by themselves, but we can bestow a higher amount of trust on credentials that have stronger authentication challenges and controls, although we should keep in mind that there could be legitimate reasons why weak credentials are still supported, for example dated software

or backward compatibility. Secondly, many credentials we look to secure rarely leave the context in which they were generated. This context could be a browser for a cookie, for example, or a device, or a TPM (a Trusted Platform Module, for those who are not familiar with the term). There are exceptions, of course: credentials such as passwords or bearer tokens are oftentimes intended to be transportable. But many credentials rarely leave the original context they were generated in, and we can leverage that for our detections. Credentials are also rarely accessed outside of a specific set of executables. For example, cookie jars are only accessed by browsers most of the time, but if you see a random binary,

coke.exe, accessing your cookie jar, then there's something wrong. Another example: we see many executables signed by the same certificate accessing a credential, and all of a sudden an unsigned executable is accessing it. This will stand out. Aside from passwords, passkeys, and MFA, many credentials also rarely get accessed by end users. Typically access to credentials is automated or performed by a program. Although human access is not impossible, it can be interesting in the context of other detections as well. Okay, so before we start talking about how to use these properties to implement credential abuse detections, I would also like to address a very important prerequisite. We need to talk

about logs. We cannot talk about detections without emphasizing this point: logs are extremely important, a crucial precursor to everything we're about to talk about. Going forward, we'll be assuming we have good log availability and access, and that we have the ability to ingest, filter, and detect maliciousness within a reasonable time frame or latency. We're not going to talk about how this can be done, as this is not a detection pipeline talk, but we can chat about it afterwards if folks in the room are interested. So, just to reiterate, the main takeaway here is that your first step shouldn't be trying to implement the detections. It should be making sure your logs do what you need them to do, as

your detections are only as good as the data they're built on, following the garbage in, garbage out principle. You'd want your pipeline to log the right things, log them reliably, retain this data for a reasonable time frame, make these logs available for ingestion, and tell you when these things aren't working as intended. Obviously this requires resources (compute, memory, and disk space) to enable, but they're very important for building reliable detections. Okay, I'll pass to Troy to talk about credential abuse detections now.

Thanks. With all this being said, let's talk about how we can use what we know about credentials, and how attackers use them, to try and

detect some badness. Credential theft often manifests itself as some kind of identity impersonation, compromise of a user account, something along these lines, and there are many, many vectors through which credentials can be stolen. This is commonly the result of things like info-stealing malware, of which there are many variants and an even greater number of infections that we see around the world every day. But it could also be things like cross-site scripting, or dumping and exfiltration of credential databases, all these kinds of things that effectively result in credentials ending up in places we don't want them to be. We won't be talking about all possible variations of detecting credential abuse. There's a lot of it, as you

can imagine. We'll be talking about a couple of examples, including the impossible travel problem, which a lot of people might be familiar with, and credential binding violations. We'll also discuss credential forgery, which is similar in terms of impact on the end-user accounts but very different in nature when it comes to detection engineering. Whoops, that was too fast. So, to talk about impossible travel, for those of you who haven't experienced it much before: let's say we have a user that's normally based in Bogotá in Colombia. That's their main working location. This is where we see them from in log data and where we

see their devices talk to our infrastructure, where they originate from. Hypothetically, after a period of time, we then see authenticated user traffic originating from Seattle. The question becomes: is it possible for a human to feasibly travel between these two locations within the time frame that we've seen? This essentially boils down to a question of how fast they would have to travel to get from A to B, and whether their velocity is above what we would consider possible in that time frame. For example, the velocity of a commercial passenger aircraft is probably fine, within reason, but if we see people traveling beyond the speed of sound, well, unless you're in a very specific set of jobs, you're

probably not going to be doing that regularly. But remember that all of these observations and speed calculations come from log data, as Kathy mentioned a second ago. We need ways of determining where the user is appearing from, first of all. This is the concept of geolocation. IP address is probably the most common means of figuring this out, generally speaking. But if we see the user in two places when looking at IP indicators, there could be many other legitimate reasons why this happens. VPN usage is the most common one, along with remoting into devices somewhere else, or geolocation attribution sometimes changing on a given IP address block, because this is just how the internet

works. So we need to move beyond just 'did we see a low-fidelity indicator present?' We can't solely rely on IP addresses, and we really want to try and figure out where the real user actually is based on the other indicators that we see. A password is unfortunately also a weak indicator, like other phishable credentials that are designed to be somewhat portable for the user. If we see a user authentication event and all they provide is a password, we can't really say for certain that it's the real user actually at the keyboard, and this is particularly due to the portability of passwords and their phishability.
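The speed question at the heart of impossible travel can be sketched in a few lines of Python. This is a minimal illustration rather than the speakers' implementation: the `Sighting` record, the function names, and the 1000 km/h ceiling (roughly airliner cruise speed) are all assumptions made for the example, and the coordinates are taken to come from whatever geolocation enrichment your logs already have.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from math import asin, cos, radians, sin, sqrt

EARTH_RADIUS_KM = 6371.0
# Assumption: anything faster than roughly airliner cruise speed is implausible.
MAX_PLAUSIBLE_KMH = 1000.0


@dataclass(frozen=True)
class Sighting:
    """Hypothetical geolocated log event for one user."""
    user: str
    when: datetime
    lat: float
    lon: float


def haversine_km(a: Sighting, b: Sighting) -> float:
    """Great-circle distance between two sightings, in kilometres."""
    lat1, lon1, lat2, lon2 = map(radians, (a.lat, a.lon, b.lat, b.lon))
    h = sin((lat2 - lat1) / 2) ** 2 + cos(lat1) * cos(lat2) * sin((lon2 - lon1) / 2) ** 2
    return 2 * EARTH_RADIUS_KM * asin(sqrt(h))


def implied_speed_kmh(a: Sighting, b: Sighting) -> float:
    """Speed a person would need to appear at both sightings."""
    hours = abs((b.when - a.when).total_seconds()) / 3600.0
    dist = haversine_km(a, b)
    if hours == 0:
        # Same instant: any real distance implies infinite speed.
        return float("inf") if dist > 0 else 0.0
    return dist / hours


def is_impossible_travel(a: Sighting, b: Sighting, max_kmh: float = MAX_PLAUSIBLE_KMH) -> bool:
    """True when the same user would have to exceed max_kmh."""
    return a.user == b.user and implied_speed_kmh(a, b) > max_kmh


t0 = datetime(2024, 1, 1, 9, 0)
bogota = Sighting("alice", t0, 4.7110, -74.0721)
seattle_soon = Sighting("alice", t0 + timedelta(hours=2), 47.6062, -122.3321)
seattle_later = Sighting("alice", t0 + timedelta(hours=12), 47.6062, -122.3321)
print(is_impossible_travel(bogota, seattle_soon))   # two hours apart
print(is_impossible_travel(bogota, seattle_later))  # twelve hours apart
```

A check like this only says the two geolocations are too far apart for the elapsed time. It says nothing about why, so VPN usage, remoting, and shifting IP geolocation still have to be ruled out using the stronger indicators discussed next.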

Software-backed MFA creds, things like SMS security codes for example, are slightly better, particularly if we see them in combination with other factors. Passwords and security codes together are basically the concept of two-factor authentication nowadays. But again, SMS and software-backed security codes are designed to be portable and are reasonably phishable, and some of these are vulnerable to out-of-band attacks like porting. The effectiveness of software MFA tokens really depends on the specific token type, and these can be quite varied, which makes it quite hard. But we might be able to determine, for example, that a company-owned device is present at a given location if a hardware-backed credential issued to a device is used as

a second factor, or if we issue users with hardware MFA tokens, YubiKeys for example, or if our infrastructure requires the use of mutual TLS with a certificate that's stored in the TPM. This at least suggests some kind of legitimate, strongly attested factor is present, but it still doesn't mean the real user is there. Stolen devices, credential sale (which we'll talk about shortly): all of these are situations where we might not see the real user at the keyboard, and we almost need biometrics to be sure, which I'll talk about in a sec. But finally, observing the same session-based credentials used from two devices or two IP addresses at the same

time is typically a strong indicator of account compromise. Session-based credentials, things like cookies for example, are typically not designed to be transferable between devices, so why would you see them moving? That's the real question you have to ask. So when we see an event take place where indicators like these are present, we need to figure out: which indicators do we see of geolocation and of user device presence? How confident are we in their fidelity, their portability, their transferability? What activity do we see before and after the event, and does any of this seem strange? And do we have other log sources from which we can enrich this data? Did the

user badge in recently to an office, for example? If so, was that office location near one of the locations where we see them in log data? Combining a bunch of these indicators can give us a pretty good idea of where the user really is, and therefore what may have happened to trigger a detection like this. But this is really dependent on the indicators that we see and what we know about the user, and this changes our confidence level in the detection itself and also how we investigate it. But again, the effectiveness of this detection pattern, the impossible travel problem, depends on a variety of other factors. For example, we want to be able to correlate as many

log sources as possible. We really want strong log diversity. You can think of this as having as many relevant indicators present across as many log types as we can, because the more logs we have, the more diverse the data points we can geolocate and calculate the distance between. This gives us, generally speaking, a higher-fidelity signal. Otherwise we heavily limit our visibility to the specific log sources that we choose, and the attacker may just hide in one of the ones we're not looking in. Similarly, but almost inversely, we want high log granularity. We want as many event types as possible to be logged for any given log type, and this means

that the more events we have for a given log, the more geolocation indicators we can compute, and we can compute this signal more frequently and with higher fidelity. But there is a trade-off here. The biggest problem is that the more stuff you log, the more stuff you ingest. This basically means the more things you have to account for in your detection logic, and the more expensive things become. You have to store a hell of a lot more information, so implementing a good detection here gets increasingly complex, both in time and resources. But even beyond all of these indicators, it's important to consider the context of the events that we see.
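One of the stronger signals mentioned above, the same session-based credential showing up from two devices or IP addresses at around the same time, can be sketched as follows. This is an illustrative sketch under stated assumptions, not the speakers' detection: the `SessionEvent` fields, the `find_moved_sessions` name, and the ten-minute window are hypothetical, and it presumes your logs carry a stable session identifier alongside device and source IP.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class SessionEvent:
    """Hypothetical log record for one use of a session credential."""
    session_id: str
    device_id: str
    source_ip: str
    when: datetime


def find_moved_sessions(events, window=timedelta(minutes=10)):
    """Return {session_id: [(earlier, later), ...]} for sessions whose
    consecutive uses came from a different device or IP within `window`."""
    by_session = defaultdict(list)
    for event in events:
        by_session[event.session_id].append(event)

    findings = {}
    for session_id, session_events in by_session.items():
        ordered = sorted(session_events, key=lambda e: e.when)
        # Compare each use of the credential with the next one in time.
        for earlier, later in zip(ordered, ordered[1:]):
            origin_changed = (earlier.device_id, earlier.source_ip) != (
                later.device_id,
                later.source_ip,
            )
            if origin_changed and later.when - earlier.when <= window:
                findings.setdefault(session_id, []).append((earlier, later))
    return findings


t0 = datetime(2024, 1, 1, 9, 0)
events = [
    SessionEvent("s1", "laptop-a", "203.0.113.10", t0),
    SessionEvent("s1", "unknown-b", "198.51.100.7", t0 + timedelta(minutes=2)),
    SessionEvent("s2", "laptop-c", "203.0.113.20", t0),
    SessionEvent("s2", "laptop-c", "203.0.113.20", t0 + timedelta(minutes=3)),
]
for session_id, pairs in find_moved_sessions(events).items():
    print(session_id, len(pairs))
```

Keying on the device and IP pair together is a deliberately noisy choice: a source IP can change for ordinary reasons, so in practice a finding like this would be weighted alongside the other indicators rather than treated as conclusive on its own.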

Firstly, we need to consider the devices we know the user might use. For example, in traffic routing terms, mobile devices over mobile networks, NAT gateways on Wi-Fi networks at home, general mobile roaming, and network routing all affect user attribution and geolocation accuracy. Users also exhibit different behaviors on mobile devices in comparison to desktops or laptops, and some devices might fundamentally not support the security features we need for some of this stuff. Some things may not have a TPM, for example, so we may not be able to hardware-back credentials. It's also typically rare to see multiple concurrent active sessions on multiple devices. Most of the time

people will use one device at a time interactively. They may flip between two in very quick succession, if you have two laptops next to you for example, but they'll typically have one main device, and this won't be for a very long time. That's to say that observing two concurrent, fully interactive sessions from different devices should almost definitely arouse suspicion, particularly if this happens over a long period of time. It can be hard to determine 'interactive' here, but it's possible within reason. But the fundamental question still comes down to: how can we be sure that it is the actual user at the keyboard at the location where we see them? Or, more

specifically, how can we be sure that it's the actual user presenting the credentials that we see? User behavior and attacker behavior, as those of you in red teaming roles will know, are very, very similar depending on the role of the user. We can tune our detection output to almost any volume we like, and we need to consider the volume that we can deal with, either manually or through automation. Those in blue team roles might be very familiar with noisy detections being tuned based on capacity rather than accuracy, and this is also a challenge in the impossible travel problem unless we have the right data, the right enrichments, and the right automation to tune this stuff

appropriately. For those of you in red team roles, this is where the attacker really wants to blend into the noise. They want to be able to blend into that volumetric tuning limitation of this detection pattern, and as blue teamers we also need to consider this angle when we investigate these events. But we also need to be considerate of the user. We don't want to inconvenience the user with unnecessary remediation actions, or we risk them circumventing controls that are too cumbersome. But those strongly backed credentials, the mTLS certificates, YubiKeys, and things like that which we mentioned before, can help us answer some pretty important questions. For example, as we talked about briefly, did we see any company-issued

devices present at a given source IP address? If so, we need to consider the context of what happened. A company device being seen at a given IP address might suggest that this is the user's home IP address, for example, or their typical working location, or at least that someone in that location has access to a company-issued device. That may not be the real user, though. This could be an attacker, could be a family member, could be almost anyone. If not, we need to consider the credentials that we see, how these are issued, and how they're stored on the device. But this can also help us answer the question: do we see evidence of

human presence at a given source IP address? Observing human presence from IP addresses that are geographically far apart is effectively the essence of this detection pattern, of the impossible travel problem. But again, we need to attest user identity to be 100% sure, within reason, of who's actually there, because human presence can be attested more easily than the identity of the real user who might be present at the location. Usage of second-factor tokens that aren't biometric, login activity using phishable or transferable credentials, interactive netflow or process logs on the device: these are all signs of human behavior. They may be automation as well, to be fair, and you can normally figure that out

quite easily. But whether it's actually the real user, you can't be sure from logs. Although it's a more difficult question to answer, we now come to our final question: can we determine which of the active sessions is the real user, the person we issued the credential and the device to? If we have biometric hardware-backed second-factor authentication here, this can be pretty conclusive in determining what's going on, specifically that we can know within reasonable bounds which session is likely the real user. But this also has caveats when it comes to enrollment. We can also interdict user processes with additional biometric checks, things like selfie verification for example, but ML models are getting

really good at rendering video, and you can imagine the application of that there. Effectively, determining real user presence typically comes down to the best possible combination of trade-offs and limitations in the impossible travel detection problem. It's worth noting that for non-biometric hardware second factors we can't make the same attestation. We can only state that a human was present at a given location, but we'll talk more about this when we talk about credential sharing and sale. To summarize the points on the impossible travel detection problem real quickly: if you find yourself implementing or investigating the output of a detection like this, try to combine as many indicators as you can

and weight them as appropriate. Some logs are going to be more relevant than others. We want to filter out known background activity and noise where we can, because we don't want those things to trigger impossible travel events, and as a result you don't need to ingest everything. But we want to try and avoid tuning a detection like this for triage capacity, because it's much better to implement better detection logic, noise reduction, and enrichment so we can actually outscale the attacker rather than just burdening ourselves with toil. We should also consider how much trust we place in the credentials that we see being presented. If we see high-trust credentials and we're confident

in their security posture, we might be able to infer that a given session is the real user, within reason. We also need to consider geolocation granularity and maximum velocity. You might have to ask: how far does someone have to travel for this detection to fire, given how accurately we can measure geolocation, and how fast do we think is too fast? Some cases may be fundamentally too fast, like the Bogotá to Seattle example, but some may be just unreasonable, like someone who would fly from Manhattan to New York. This is probably not going to happen in real life. Sometimes it might be better just to ask the user what happened if

you aren't sure. Often, if we aren't sure, it's just easier to ask to understand the weirdness, but there are caveats with this, which we'll talk about shortly as well. It's also generally worth trusting gut instinct. From what we know of the user, their role within the company, their likely work times and locations, is this likely to be them? You can normally figure this out. Although blending into the noise is a thing, attacker behavior does generally stand out, again with a massive set of assumptions there. So instinct and Occam's razor can help make these gut decisions a lot of the time. It's also worth keeping in mind the limitations of impossible travel

detections. Unless session-based credentials are available as indicators, the detection itself is going to be heavily dependent on geolocation changes for a user account, and we talked about how some of those indicators are better than others, and some of the challenges there as well. And finally, in practice, as coverage of the detection increases, so does the noise level. It's important to complement the detection with additional controls, for example credential binding, which I'll hand back to Kathy to discuss.

All right. So, as Troy just mentioned, credential binding is a great control to complement credential abuse detections. This links to a property mentioned previously: some credentials we look to secure should rarely leave the

context in which they were generated. If we can bind a credential to a context, for example a device, we can in theory detect its theft when it leaves that context and gets presented back to us. So let's take a closer look at how credential binding works. Say we have an identity provider (IdP) and a client, where a user wants to authenticate via the client and get credentials they can subsequently use, for example a cookie. We have some binding conditions configured that we want to enforce. When we receive the authentication data, we need to compute the binding criteria, generate the credentials we want to return, apply our binding criteria, and log

the credential generation event, and then return these bound credentials to the client. When a user tries to use the bound credentials, they present them back to the IdP. The IdP then computes the binding criteria again, checks that the computation matches the binding criteria present in the credential or presented by the authenticating client, and then makes a decision on whether the credential binding control has been met. In this case, because it's a legitimate client, let's assume it has. We log the credential usage event with the binding control validation result and then

return the data or content to the user as requested. But in the case where these credentials were stolen and used from a different, malicious device, for example, we still do the same thing: compute the binding criteria again, check the criteria, make a decision, and then log the event. But in this case, as you can imagine, the control will not validate, and we will be logging a violation of the binding control. This is the event that we look for in our logs when we write our detections. Then we would like some sort of action to be taken to remediate the situation: we might expire the suspicious session, lock the user out, or force

reauthentication. There are false-positive cases here too. For example, a user might back up and/or reimage their device and get issued a different certificate that doesn't match the existing binding, but it is their credential they're trying to use. It's important to consider these edge cases when developing detections. Similarly to our impossible travel data points, there are a variety of binding mechanisms to consider, although the usefulness of these controls might vary depending on the context and their use cases. Starting from the top, we can bind a client-side credential to a machine certificate, for example one that is backed by a TPM. This is a strong binding, as it

is non-trivial for the attacker to retrieve and lift data from a TPM remotely. TLS channel binding is an alternative mitigation which could also be highly effective. Channel-bound credentials are bound to the underlying TLS session. For example, cookies may be channel bound, or OAuth tokens can be channel bound. With channel binding, leaked or stolen credentials cannot be used in the attacker's session; the attacker must compromise the victim's browser session directly. Both of the top two options protect against credentials being taken out of the original context and used elsewhere. However, they don't protect against abuse from the compromised device or session directly, and the compromised credential may be exchanged for new unbound credentials that can be used

elsewhere. This is not foolproof, but it's highly effective at stopping a class of attacks. Next, we have IP addresses and netblocks. An attacker in possession of a credential needs to be in the same IP address range, or originate from the same IP address, as where the credential was generated in order to make use of it. We can detect OPSEC mistakes very easily using this approach, but there are a lot of options for the attacker to bypass this binding, like using a VPN or large retail ISP ranges. A specific IP address might be a bit harder, but not impossible, depending on the circumstances. And finally, we have time. It can be

considered a binding control in the form of an expiration window. Unbound credentials can be expired to mitigate the impact of any potential compromise. Having said that, time in general is a weak binding control. This is where we see a lot of credentials targeted by credential stealers today, and automation can easily be used to bypass a time window as well. So we just talked about controls that try to prevent credentials from leaving the original context. To leverage these controls for detection, it is important to understand why a binding control was engaged in the first place. If a binding control fires, this implies the credential has been moved elsewhere. We can mitigate this by forcing the user to log out, but we should

still find out why this happened and investigate it. It could be a malware-infected laptop, for example, or it could be a user procuring a credential for an attacker. We never know until we look. It is important to remember that the attacker can easily try again, so even though we mitigated this one time, we need to make sure we keep the cost high for the attacker per iteration as well. We've been talking in depth about credential theft so far. It's time to talk about a similar threat: credential sharing and sale. This technically is not a standalone detection pattern, but an augmentation of what we've discussed so far. The difference is complicity and intent. In the case of

credential sharing or sale, the user is likely complicit. This means we need to consider intent in our investigation. Someone might be sharing the credential so they can get access to a personal doc saved on their corp account, because they're traveling and their family member is still at home. They could also be sharing their credential because they have sold it. Post-abuse context enrichment is very important here. Ideally we should find out what actually happened after the credential was used in an unexpected manner. Biometric, hardware-backed MFA tokens are also very useful here. If we see evidence of a hardware-backed biometric token being used, this is a strong indicator that the user is present or

complicit. Now, if a user is complicit, we also need to be careful during our investigation and response. First, we need to consider when and how to reach out to the user. This can be a very sensitive discussion. Don't underestimate your time investment. Be especially conscious of your tone, and don't assume malicious intent. Just because someone gave away their credential doesn't mean they personally hate you. For example, they could just be asking a family member to get a personal doc. They could be in a very hard position, and this seems to be the way out. Many factors can easily be misassumed, so be sure to hypothesize and prove; don't assume. Next we have one notably

different detection pattern. This one is forgery. As Troy mentioned previously, we have the Storm-0558 case from last year as an example. At a high level, we can think of the attack in a similar way to that particular case. Normally an IDP will query key material stored in a storage device to sign a credential. This is where highly sensitive key material is typically stored. But then an attacker might use a foothold to compromise or leak the key material somehow, and then exfiltrate the key material to their C2 infrastructure. The key material can then be used to create and sign a credential out of band of the canonical IDP, and

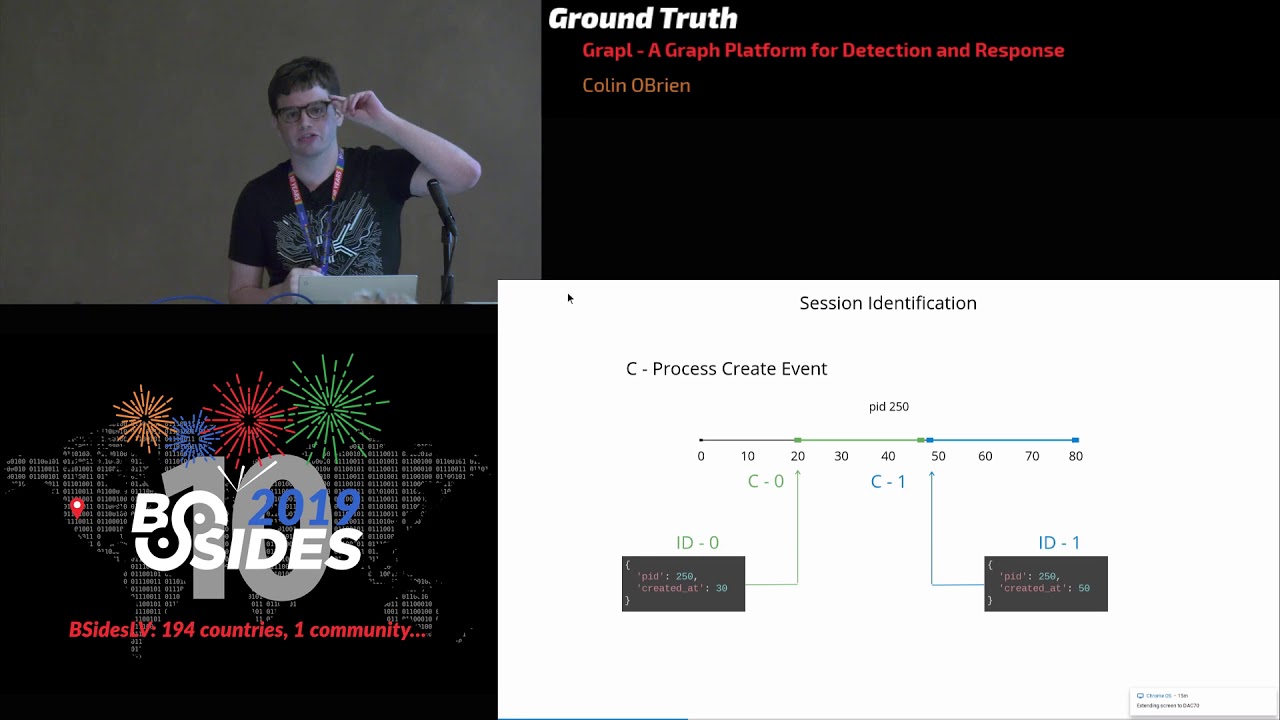

then they can present the credential to authenticate themselves, which the IDP would recognize as cryptographically valid and grant access. This is the whole flow. To detect this type of attack, let's take a look at some logs. Let's say we have time extending downwards. We have two streams of events from the standard credential workflows: a creation stream and a usage stream. Both streams should have a unique ID for each generated credential, such that we can map every usage event back to exactly one creation event that happened in the past. If this premise holds true, we can then check for violations of this condition, and this is basically what the usage of a forged credential

looks like: a credential usage event without a corresponding creation event. We don't have the creation event because the attacker minted the credential out of band, and it wasn't created by the canonical IDP, which writes the log. Having said that, there are some underlying assumptions and limitations for this detection that we haven't mentioned so far. The first is that our pattern here assumes that the forged credentials are generated out of band by the attacker and then used against the canonical IDP. The system that issues the credential does not retain the state of any generated credentials, so the credibility of the presented identity is established solely based on the validity

of the cryptographic signature. Secondly, the credentials here cannot be forged online, i.e. the IDP itself is not vulnerable to any exploits that affect credential generation, so the integrity of the generation process is strong and we can trust it. In terms of limitations, this detection pattern requires logging to be as close to 100% accurate and complete as possible. For example, if we don't have a credential creation event, we need to be sure it's not log loss somewhere. An alternative to this is statefulness. If the IDP itself, the server side, maintains state for the issued credentials, we can then just check the state machine for a given credential

to ensure its authenticity and alert on any invalid credentials being presented to the system. I'll hand back to Troy to talk about what to do about these detections. Thank you. All right, so you can imagine, if some of these detections fire, we obviously want to do something about it, right? You might be wondering, tangentially to this, why not just detect things like info-stealing malware? Well, the problem is there are just so many ways to steal credentials, and we often don't have that much control over how and where they're stored. Trying to detect all the theft options here can be really expensive, time-consuming and also highly variable, meaning a lot of this can

be hard to write. But there's a very small number of places where the credentials we're talking about are used, validated and checked, and these are all core identity providers. Typically, at least, we also have much more control over those core IDPs, as well as where and how they log things. So we can implement detections basically at the choke point and save time and effort there, with greater accuracy, rather than trying to solve the malware execution case, for example. That being said, this isn't to say that we shouldn't do anything except write rules looking at IDPs, right? Detection in depth is still a completely valid concept here. In the same way that we

want multiple detections to fire for badness, we don't want this one single detection rule to be the only one that fires in the worst case either. Okay, let's say we've detected some credential abuse and we know it's legit. Now what do we do? We've probably already answered questions such as: where was the credential generated? Was it generated out of band? Which user or account was it tied to? How was it stolen, or how do we suspect it was stolen? Was the user potentially complicit? Things like this. But we still have a lot of questions to answer. We need to determine the impact: how widespread was the activity that we saw? What did the

credential do post-compromise? Then we need to figure out what to do next. First of all, we have to figure out whether the credential is even still valid, and if so, when do we kick out the attacker? And then what do we do next? Do we need to urgently fix something, even if it's just a stopgap fix, or do we urgently need to deploy additional controls? And what do we do later? Do we have to fundamentally fix something? Do we have to deploy more controls, or fundamentally rework our application of identity? This last one's probably overkill for the most part, but many an Active Directory

forest has been rebuilt for this very reason. But at the end of the day, if it's user credentials that have been compromised, we always have to be cognizant of the human in the picture. We mentioned before the need not to make assumptions when investigating suspected credential abuse. We don't want to assume the intent or the circumstances, because log data does not contain the entire context of existence. Users might be complicit for reasons beyond their control, so we really can't always assume malicious intent here. We should be sure of what's going on and then act upon it. As Kathy mentioned before, we're probably going to have some hypotheses, which we should be able to reasonably

prove, but we should take action only after we've done that. Obviously, a strong security culture, a blameless culture, all of these things are really worthwhile investments here, because we need to acknowledge that all of us, including the users that we're protecting, are on the same side, and we need to work together to make this problem harder for the attacker. Really, you don't want to be the security team that's feared. This makes collaboration significantly more difficult and fundamentally makes it harder to build trust with the very things you're trying to protect, including your user base. So then we're going to talk about a few controls quickly. We talked about credential binding as an example here.
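
As a rough illustration of the binding checks discussed earlier in the talk (machine certificate and netblock), the sketch below compares a usage event against what the credential was bound to at issuance. The `Binding` and `UsageEvent` schemas and all field names are assumptions made for this example, not any real identity provider's log format.

```python
from dataclasses import dataclass
from ipaddress import ip_address, ip_network
from typing import Optional


@dataclass(frozen=True)
class Binding:
    """What a credential was bound to at issuance (hypothetical schema)."""
    credential_id: str
    cert_fingerprint: str  # e.g. fingerprint of a TPM-backed machine cert
    netblock: str          # CIDR range the credential was issued in


@dataclass(frozen=True)
class UsageEvent:
    """One presentation of a credential (hypothetical schema)."""
    credential_id: str
    cert_fingerprint: str
    source_ip: str


def binding_violation(binding: Binding, event: UsageEvent) -> Optional[str]:
    """Return a reason if the usage breaks the binding, else None."""
    if event.cert_fingerprint != binding.cert_fingerprint:
        return "certificate mismatch"
    if ip_address(event.source_ip) not in ip_network(binding.netblock):
        return "source IP outside issuing netblock"
    return None
```

A non-`None` result is worth sending to detection as well as blocking, since the talk's point is that a fired binding control still needs an explanation (a reimaged device, malware, or a shared credential).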

But statefulness, which Kathy mentioned, is a really good example too. If we can track the state of a credential, then we can validate its state when it's presented back to us and invalidate sessions where we've seen an invalid state transition. This requires the attacker to be aware of the state machine and of the credential's given state at a point in time within that state machine, which is very hard to do unless you're internal. We can also start automating expiration or forced reauthentication processes. These are good in theory, but if the initial authentication challenge is weak or phishable, then the attacker might already have phished all of the components that they need to be able to pass the

checks. We also especially need to consider usability when it comes to controlling credential abuse. If we challenge too often, or if the challenge effort is too high, then users are going to start working against us. Usability here is really a function of the challenge overhead we're implementing, the frequency at which we present it, and the actual effectiveness of the control, and we need to get this function right to avoid overburdening the user. Human expiration and remediation: these are the actions that may be taken by someone who investigates one of these incidents. Sometimes you might need to know more before a control kicks in, but the challenge here is to know when to act.

This can really vary by the user that's being targeted and the processes in place. We don't want to alert the attacker or inconvenience the user, and this is a hard balance to strike, but we also don't want the attacker to progress too far either. So there's a very delicate balance in making these decisions. This does mean, however, that we can be considerate of what is appropriate given the user's needs. I just want to talk about obscurity before we close out. It's obviously a meme to rely solely on obscurity, but an attacker doesn't know what they're up against, so a single OPSEC failure can often lead to detection. If

they don't know all of the impossible travel data points, or if they don't know the binding mechanism, or if they don't consider working patterns or something, and they guess and they fail, that's when you can detect the weirdness. Obviously, don't rely solely on obscurity, but don't discount it in reality either, because if we implement a control with high complexity which is as transparent as possible to the user, this forces the attacker to make a leap of faith. We want the attacker to guess, and we want that guess to be really hard. But to conclude really quickly, we talked about some core tenets of

credentials and the importance of logging. Remember that your detections are only as good as your logging. We hope this has helped further the understanding of some of the detection patterns we've talked about today and some of the complexities involved in implementing this logic, as well as how, within reason, we can overcome some of these challenges. For those of you on the red side, common attacker techniques that you might think about may not always be as effective as you think, like VPNing to a local network range, for example. Nonetheless, we still see credentials being abused in the wild, but we hope that the attacker needs to tread lightly. That's the whole

premise here. The detections and controls we discussed can introduce uncertainty into their operations, which gives us a greater chance of finding the badness. And finally, if one such control fires, your detection team should likely be interested. A control exists for a reason, so even if the risk is mitigated, how did you find yourselves in the position where a control had to fire? That should be the question your detection team is trying to answer. And finally, finally, if you're interested in one such project to make credential abuse harder, and which actually gives us really good opportunities to detect this sort of stuff, we suggest looking up Device Bound Session Credentials. This is a control

that's underway. It's being designed and implemented at the moment by a bunch of folks looking to make this thing particularly hard, but we don't want to spoil it in this talk, so feel free to look it up afterwards. In the meantime, thank you for your time. [Applause] Questions? Got questions? Good. Oh, sorry, somebody was standing up behind me, so I thought it was him. Thank you, that was great. I have two questions I'm just curious about your thoughts on. One: doing the kinds of things you're talking about for a single event is something a human can run down, but when you've got a large enterprise with

hundreds of these, well, I mean thousands of things happening that you might want to look into, obviously you have to have an enormous amount of automation. I'm curious about your thoughts on that. The other thing I'm curious about is that most organizations have scores of different types of authentication systems, and hardening them all and monitoring them all is difficult. You might be tempted, as lots of us are, to start to harmonize down to a single one, but now everything is in one place, so if that gets popped you're really screwed. I'm curious about your thoughts on that also. Good question. Do you want to take the automation one? Yeah, sure. So your first question is about

how we automate all these detections at scale, basically. Oops, too short. Yeah, this really could be a different talk, but to summarize what can be done to automate things at scale: for a detection pipeline we have three main stages. We need to normalize the logs, write detections and add enrichment if possible, and finally automate the investigation as much as possible. Normalizing the logs is very important, because that's the stage where we can make sure we have the data available, that we have really good, valid data, and that it is structured in a way that we can use. The detection part is also very important, obviously. It

usually depends on the log volume. You might need to leverage some sort of data processing pipeline that supports big-data-scale processing, and at the end of the day it needs to be flexible enough to support different detection patterns. It can't just be filtering for a certain thing you're looking for. It might need to support batch or streaming ingestion, like comparing different windows of things. We can talk about the detection patterns later, but to enable some of these detections you usually need to ingest more than a few data sources, and the way they come in, how they get delivered and how you can

process them vary quite a bit. And finally, I guess, we have enrichment. This is where we can auto-close things at scale, because, like you said, lots of these things will happen. Thousands of users will cause all sorts of what we think are weird anomalies in a log, and we would end up not being able to investigate all of them. We would come up with criteria for how we can determine, really confidently, that something is a false positive, codify all of these, put that into the detection as well as the enrichment, and then auto-close as many of these as possible.
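
A minimal sketch of what codifying auto-close criteria could look like, assuming alerts have already been enriched with context. The `Alert` shape, the `reimaged_device` criterion and the `device_reimaged` context key are hypothetical names chosen for illustration; a real pipeline would define its own.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional, Tuple


@dataclass
class Alert:
    rule: str
    user: str
    context: Dict[str, object] = field(default_factory=dict)  # enrichment data


# A criterion returns a close reason when it is confident the alert is a
# false positive, and None otherwise.
Criterion = Callable[[Alert], Optional[str]]


def reimaged_device(alert: Alert) -> Optional[str]:
    """Hypothetical criterion: binding mismatch explained by a reimage."""
    if alert.rule == "binding_mismatch" and alert.context.get("device_reimaged"):
        return "device was recently reimaged"
    return None


def triage(
    alerts: List[Alert], criteria: List[Criterion]
) -> Tuple[List[Alert], List[Tuple[Alert, str]]]:
    """Split alerts into a human queue and auto-closed (alert, reason) pairs."""
    queue: List[Alert] = []
    auto_closed: List[Tuple[Alert, str]] = []
    for alert in alerts:
        for criterion in criteria:
            reason = criterion(alert)
            if reason is not None:
                auto_closed.append((alert, reason))
                break
        else:
            # No criterion matched, so a human should look at it.
            queue.append(alert)
    return queue, auto_closed
```

Keeping the close reason next to each auto-closed alert leaves an audit trail, which helps when a criterion later turns out to be too permissive.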

So the only thing that gets surfaced to the queue is mostly high-fidelity detections. Anyways, we have time for one or two more questions. Just to answer the second part of that one really quickly, actually, sorry to interject real quick: I think with the trade-off between consolidation and siloing of identity providers, there's also another angle of business requirements, because some stuff you can't have in certain regions, or whatever it might be. I think first of all, if you have them sharded, you have to prioritize them. Typically I would say look at the things that are

known in the wider world first, right? If things can procure credentials to the wider world, that is well understood as an attack vector, so I'd probably look at those first. Internal is equally important, but there's a higher bar to meet, so maybe get around to those eventually, but not right away. On the siloing versus distribution problem, I think, yeah, sure, consolidating makes it easier, but really all it does is shift the complexity from many systems into the specific subcomponents of one, and then you have more subcomponents in one than you did in the others. It's just a different kind

of complexity. You often have to have more knowledge across more things in the sharded sense of the model, and a lot more in-depth knowledge in the latter. I don't have an answer for you, basically. It's a trade-off at the end of the day. Thanks, though. Last one? Yep. Hi. Given limited resources, what's the best way to start? What's the lowest-hanging fruit here, do you think? I can give it a go. For me, and maybe Troy has something different in mind, if you have a limited amount of resources, make sure you get access to as many data points as possible. That's usually quite hard,

way harder than people would imagine, I think. Yeah, managing stakeholder relationships, and a lot of the time the data source comes from external partners as well, which makes it even worse. I'd just turn it all off, you know, just don't let anyone log into anything. No, just kidding. Honestly, I think with limited resources it's about prioritizing what you detect. It's a similar theme, unfortunately, so it's a bit of a cop-out of an answer, but prioritize what you need. Threat intelligence is great here: if you know what you're trying to detect, then just prioritize your resources towards those things if you can. That may not

always be possible for various nuanced reasons, but typically speaking you really have to make a decision on whether you're going to detect low-sophistication, high-volume attacks or, inversely, high-sophistication, low-volume ones. If you're looking at a lot of low-sophistication attack vectors, then you're probably going to look at a small number of log sources, because most of your data is probably going to land in your core IDP logs. Inversely, if you're looking for high sophistication, then you're probably looking at more log sources, but probably not at as many events per log source. So it's kind of like the point I

mentioned before about log diversity and log specificity: you probably have to make a trade-off between those two things. But that's a good question, thank you. Okay, that was the last one. Thank you, Kathy and Troy. Another round of applause. Thank you, folks.
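
The forged-credential check described in the talk (every usage event should map back to a creation event that happened earlier) can be sketched roughly as follows. This is a minimal illustration, assuming both log streams have been normalized to `(timestamp, credential_id)` tuples; the function name and event shape are made up for this example.

```python
from typing import Dict, Iterable, List, Tuple

# Assumed normalized event shape: (timestamp in seconds, credential ID).
Event = Tuple[float, str]


def usages_without_creation(
    creations: Iterable[Event], usages: Iterable[Event]
) -> List[Event]:
    """Return usage events with no creation event at or before them."""
    first_created: Dict[str, float] = {}
    for ts, cred_id in creations:
        # Keep the earliest creation time seen for each credential ID.
        if cred_id not in first_created or ts < first_created[cred_id]:
            first_created[cred_id] = ts

    suspicious: List[Event] = []
    for ts, cred_id in usages:
        created_at = first_created.get(cred_id)
        # Flag IDs never minted by the IDP, or used before they were minted.
        if created_at is None or created_at > ts:
            suspicious.append((ts, cred_id))
    return suspicious
```

As the speakers note, a hit here only means something if creation logging is close to complete, so each result should first be checked against possible log loss or delayed delivery before it is treated as forgery.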