Detection At Scale by Troy Defty

Show transcript [en]

all right hi there everyone just to check everyone can hear me at the back and stuff like that right yep Back Road awesome um so first of all thanks for everyone's time and thanks for the to the b-sides organizers for kind of giving me the opportunity to present today um it's great to be able to give back to a community that supported me for so long when I was here in London but Google's Google has a pretty big security presence right and pretty much the entire security team is really focused on defending and protecting Google alphabet all of our users I imagine many of whom here might be users as well and from Badness right and we do

this through building Technical mitigations and controls into our platforms our environmental sort of stuff that basically mean that they're more resilient to attack and part of this posture is building detections for when gaps are found in these controls so we can kind of like cover our bases as best we can but in detection our priority is to defend against nation-state backed attackers and these are the groups of people who we think are probably most likely interested in taking taking a pop at this right so when we think about defending we have to think about this problem at a pretty large scale because of the size at which we operate so today I'll talk a bit about how we're

just our mindset to basically you know work at this kind of scale but before I get into that sorry I need to talk this way I always talk that way to the slide um for the past two and a bit years I've been a security engineering manager at Google um down under in the land of dinner plate size spiders and drop bears in Sydney Australia and if this isn't a detection team and basically as a team we are the people who comb through the you know Galaxy of Haystacks looking for the needle that is Badness right that's the people that would try and see us fail but before this I was actually on the other side of the fence I was in red

teaming for about eight and a half years both here in London and later on in Sydney and I haven't quite hung up those shoes for good yet I still am headed into like ICS and Hardware stuff so if you're into that as well you know please let me know but to start with a very existential question you know like why are we all here and I'm not asking you know why are we all like watching me talk right um it's more about you know how we come as an industry to be where we are where we see today and how we all gravitate gravitated together on a weekend to kind of like spend our time at these

conferences right and a lot of this really unfolds because of events over the past sort of like 45 to 50 years so back in the 1960s the US government realized that they had a problem on their hands they had these big computers like you know would fill this entire room but they realized that they were no longer single user devices they shared resources they had multiple users using it at the same time and they really asked pretty early on that they had a pretty big problem on their hands and that was what if all of these users could like see each other's things so that's probably not a good thing I'm not sure if that was me or not so apologies

if it was it is me okay oh because I'm breathing into the mic okay um I'll Stand a bit further back so apologies for that um but by 1972 James Anderson had written a paper on this topic called the computer security technology planning study and he correctly posited in this study that the things we were building couldn't defend themselves and James had a background in meteorology which gave him a unique understanding of like the interplay between systems particularly as things might play out into the future when they become more connected over time and by the 1980s he further developed how we needed to start auditing and monitoring what users do on these systems he wrote another paper a

computer security threat modeling and surveillance paper which described that we needed to you know audit logs we had to centralize these logs we needed people looking at them and we needed or they kind of started describing the kinds of auditing that we needed to do and this is actually one of the seminal papers that kind of like fueled a lot of the careers that we see today and these are the examples of the kinds of things that these papers that suggest that we do and each box here represents a topic that turns up in academic literature or commercial products around the time and the very first thing they suggest that we try is manual analysis it's

literally just like looking through order logs and shortly after that we start seeing things like behavioral anomalies come up this is still pretty early though but it's looking at answering questions like what does normal look like and can we use statistics to determine what normal looks like and can we use these like self-learning expert systems to help us determine what normal looks like and all of this comes like very early in the picture but by the 1990s we start to hear things that we hear about today data mining you know artificial intelligence you know we give people user interfaces to do analysis in so they can like actually click on stuff like wow nice clicking on

things like and make this stuff much easier to find that it would have been seen previously so conceptually kind of like our e ancestors I kind of thought of this stuff already and by the 2000s we start to combine a lot of this stuff we see a real emergence of like machine learning and using different algorithmic representations to try and like model security problems and it's pretty interesting to see how all of the stuff progresses but there's one common theme that all of these technologies have and that they are meant to be assistance they're meant to point a human in a direction you know when we are humans we're out there looking for Badness you

know these Technologies are here to assist us in finding that Badness so anyone here who's ever started a sock can probably relate to this history so far you start out with a bunch of people looking at logs and once you figure out what to do with all of this log stuff you can kind of like use assistive Technologies to help make this task easier and when Google first started our sock we did exactly that we hired analysts to effectively stare at logs and until we figured out what analysts were good at doing and we figured out that we had to draw a line between manual investigation and automated investigation and in some ways this is almost the

original intent behind our careers according to James Anderson back in the 1980s so when we read that same literature and we look at the same timeline through a slightly different lens we see that we've been classified almost we became the names and titles and way back in the 1980s James Anderson called this the activity security officer which in many ways is kind of like correct today still yeah we monitor activity with a security lens and even in the 1980s this concept is still around we also start to see words like order to appear and the the security out of this role was kind of born around the 1980s as well so this job that we're talking

about has been around for a pretty long time and then we fast forward we start to see you know the Security administrator appear which is a Twist on the it admin and some of you may be familiar with both of these roles at once which is you know the better or worse the reality of the world at the moment and by the late 1990s and 2000s we invent the sock this is like collections of all of these people and you're working together to try and solve the security problem and then we invented the term threat Hunter but the work here is relatively similar to some of the other roles that we've already seen so we can't by combining these views can

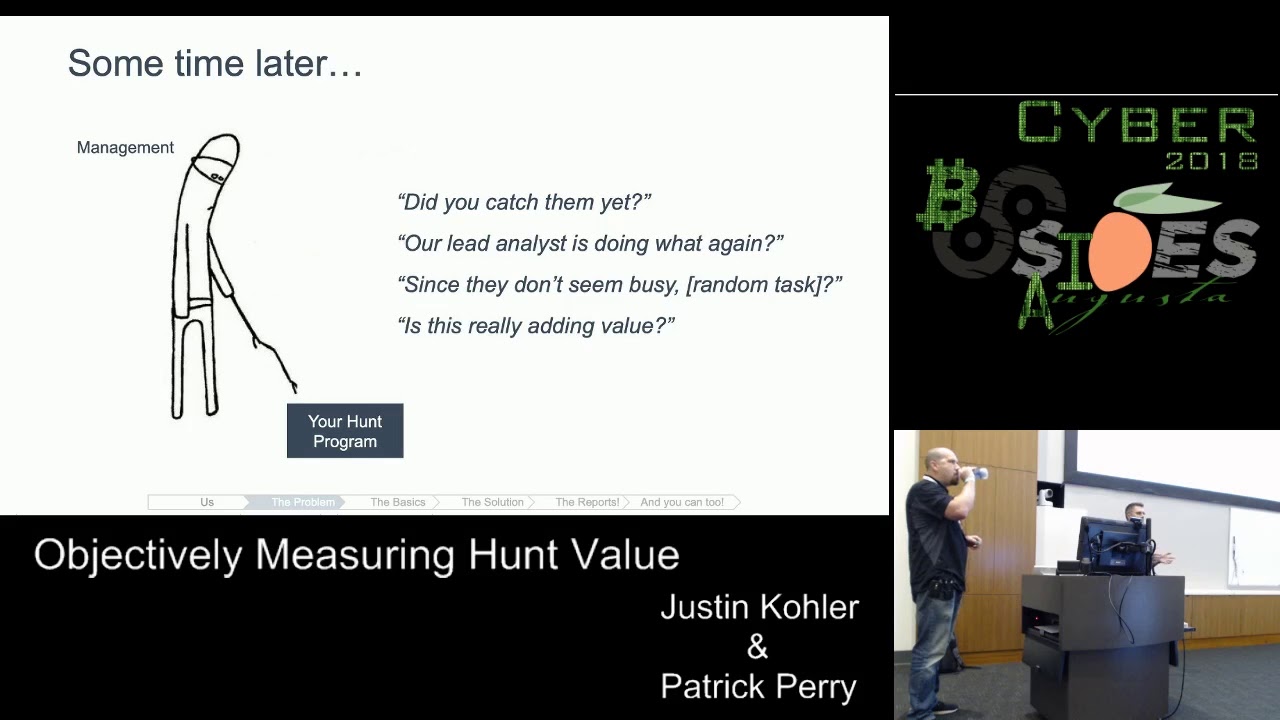

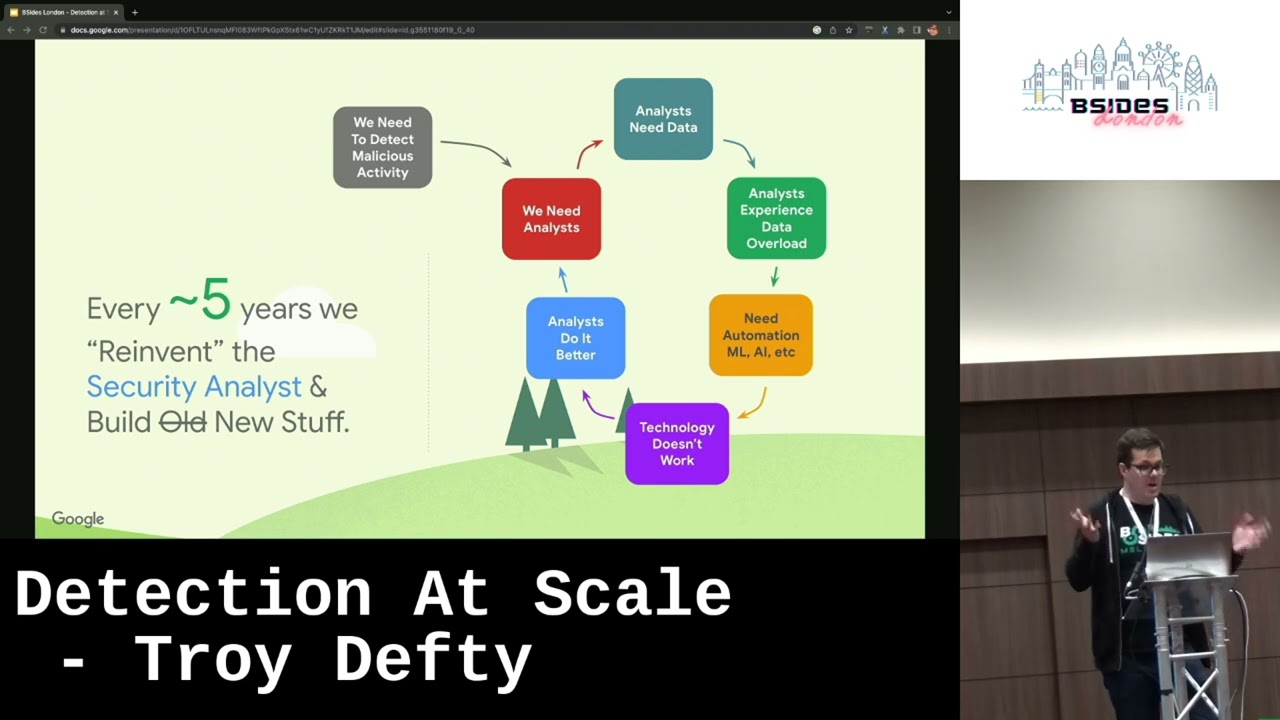

see that you know assisted by technology the whole principle here is that humans try and find bad things and that's relatively speaking kind of like where we find ourselves today and you might be asking like hey why do we keep Reinventing this stuff like why do we keep seeing the same themes emerge over time and we have a bit of a hypothesis it might be poorly constructed and might not hold a huge amount of weight but we have this feeling that every four to five years or so something might just not quite be working as we intended to do we're not detecting what we think we might should be so we basically decide to start a new and we start with humans

we always start with the analysts in this picture but analysts need data and it's really easy to give analysts too much data when you before you've figured out like we have a lot of data here we have to we have to do something with it so then we naturally start to build tooling we build assistance but it turns out that this doesn't entirely work either because we know that humans can do this better so we kind of end up conceptually back where we started we may have probably learned a few things along the way but we pretty much end up where we began and when we look at this cycle over time and we look at the kinds of technology

that have been available and the intelligent assistance that we've been giving people it begins actually with something very simple and back in the 1980s James Anderson's advice was to print out the order records create a case File and hand it to an officer to kind of like read the stuff and review it and you can imagine that people doing this work probably didn't find it very rewarding and it actually doesn't really work because fatigue toil all these kinds of like human elements have an impact on the investigative quality of this kind of methodology so what we do is we invent a new technology we give them database backs user interfaces we have pages that can

go off when rules fire all this kind of stuff and by the 2000s we start getting more sophisticated we're doing signature detection and seam and then we put a sock in place to look at all of this stuff 24 7. and now we have you know like threat hunting tools like themes data science ML and a whole bunch of other things right but every five years or so we kind of go through this revolution of trying to improve the intelligent assistance that we're using and this kind of results in the somewhat iterative approach that we see here yeah this might sound pretty bleak it's like hey like we did every five years we kind of end up back where we started we

might have had a little bit and sorry for kind of like bringing the tone down on the on the Outlook of the security industry um but let's look at the future right so the future really holds something quite valuable I think and it might sound somewhat controversial with these opinions but I think by about ultimately 2050 we'll have something in place to solve James Anderson's original problem and we'll basically have some kind of a system or systems that can defend themselves they might be either better constructed or self-healing but they typically won't need humans in the same way that systems need us today and this means that the systems we have today where the machines are kind of

like Reliant upon humans to defend them will become less and less relevant over time and this kind of is a solution to the original problem about James Anderson outlined in the 1980s and it's probably going to take a while to get there but I'm pretty hopeful we kind of see signs of this already um you may be familiar with the Cyber Grand Challenge held by DARPA um this was building about cyber reasoning systems that actually played in the the 2016 Defcon CTF and the challenge itself was held over the span of about two years and the goal was to build a cyber reasoning system that could detect vulnerabilities exploit them and then patch them and you can

kind of think of this in some ways as being a system that can kind of like defend them within reason heal itself so the real question then is like what's the role of the human in this cycle right like the real reason that we're all here that the meaning behind all of the work that we're doing and the reality is that once we build a system that can learn we need to teach you pretty much everything that we know we need to teach it things about like how understanding how threats work how to defend systems how to identify and attack what an attack looks like how attackers work how governments build teams to attack other systems all of

these kinds of fundamental principles and all of that will play into how we teach the computers to kind of like defend themselves and I think that's the real reason we're here right like this kind of like educational like wave of computing in the meantime you know we still get to defend and we have to chase attacks too so enough of the conceptual stuff right let's talk about how we do things at Google um this is a high level view of our detection pipeline whenever a computer light does a thing you know whatever that thing is um they generate a log for it it might be big it might be small but it's logged and logs are essential for debugging but

they're actually crucial to detection and response and we consume huge volumes of log data every day we store it for long periods of time in this big data store which we replicate across multiple data centers and then over this data we run a number of detection systems on a very regular basis and some of these detection systems are pretty simple right they use static rules for example looking for malware fingerprints or hashes and some have more advanced models where they're using a bit of machine learning statistical analysis like anomaly detection all this kind of stuff but generally we know we use a lot of different tools to comb through this data set looking for indicators of

Badness we also test everything to make sure our systems are reliable and behaviors we expect now as a former pen tester like Michael was terrible right um and having joined this more engineering focused role you miss an end-to-end an integration tests are fantastic so if you're writing any kind of tooling as a pen tester like I highly recommend unit testing it you will thank me later and anyway um detection systems in this case you know generate events to generate alerts and these are bundles of data that might be interesting to a human right that a human can look at and about 80 of these events that managed to get to a human are already enriched with everything as an analyst

you need to know about the circumstances that led to the event firing and this is kind of almost in many ways like everything you would need to know to determine whether or not a given event is truly bad or not so to give you an example imagine you have a binary execute on the fleet and when I say Fleet I mean every device within Google's corporate Network and we have an antivirus program that notices you know this execution take place so we get the AV alert we know that I'd execute we will go and grab a copy of the binary and run it through you know many many binary analysis pipeline systems you can think of virus

tilt as an example of one of those here we'll also know like everything about the user right we'll know where the user was we'll know how long the machine was there for we know how they authenticate to the machine all these other these bits and pieces and all of this data gets grouped up and put in front of an analyst and the idea is that they spend the minimal amount of toy toil doing this toils and tasks right they we want them to spend their time doing the things that humans are really good at we don't want them to answer questions that we know are going to be useful we solve that problem for them

and the idea is they can spend that time determining whether or not the system is actually compromised or not and when we talk about machine learning like this is actually where we want machines to help us today we want them to assist the human in making a decision and and to kind of try and help point this in the right direction but we definitely don't do this alone we have many many partner teams that we work with and organizationally this is roughly how we're structured for those of you in blue team rules this might look somewhat similar we have a team that does threat analysis some of you may have heard of tag or the

threat analysis group they post some really great blog posts I'd recommend checking them out if you haven't seen them yet but these are the people who spend time learning about what different threat groups are into like being a financial crime or spying on different countries and this all like informs our detection strategy we also have a team that builds all of our security tooling and these are basically huge projects that can store and analyze massive volumes of telemetry and alerting and all this kind of stuff we then have the security surveillance team which is where I sit the detection team I mentioned before and these are the humans that we were talking about earlier who see the output of our

detection pipeline and we've actually borrowed a lot of this language from James Anderson this idea of surveillance not just detection and surveillance in this context is actively looking for bad stuff on the network not just waiting for alerts to come to us and in some ways this is kind of like active defense but this term is like very overloaded nowadays we also have a digital forensics team and an instant management team and these are the folks kind of that handle doing deeper Dives on things or The Wider coordination of incidents um when they when they do happen and we also draw very clear boundaries of responsibility so that we understand you know how many people in each of these

teams we need to engage at a time and but in reality a lot of this work is heavily cross-functional we also work very closely with our internal red team and their goal is not to find every single bug but it's to find the gaps and the places where our detection systems may be lacking and they often mimic you know ttps from Real actors they they help us fine-tune our detection and test our response capabilities and they really do keep us on their toes in many ways they're actually our most targeted threat actor that we regularly see and we also regularly run combined red and blue team exercises where we kind of work together to better understand our

posture and so you know focus on the blue side go with red for a bit and vice versa um because a key component of being a good attacker is understanding Defenders and vice versa of understanding Defenders being an attacker but all these collaborative events as you can imagine are great learning exercises but this is probably the most important thing that we've realized that we should only ever hunt once and this is somewhat analogous to only ever automating once so when we dive into hunting we have this kind of like thought process similar to the slide here so the first thing we'll do is typically start with research right we'll try and understand something new well understand the threat

model the ttps the behaviors whatever it might be that we're basing our research on so one of the things for example hypothetically might be let's say we're trying to understand the difference between persistence mechanisms on Windows 10 and 11 and see whether or not an attack is trying to do that stuff honestly so the first thing we do is build a hypothe or a set of hypotheses these are basically the questions we want to answer with the investigation that we undertake and then what we do is we start hunting we'll initially hunt manually through the log sources all this data that we have and then we kind of like have this iterative automation process and we iterate on this process

until the Hunt works as we intended to until we've also created the backlog of relevant events until we can basically understand whether or not the fleet itself is compromised and once we've done that and we're happy with where it is we'll kind of refactor the code and we'll re we'll actually write like a true detector that will run indefinitely into the future and this is where hunting is entirely automated at this point and we'll also write unit tests for it as I mentioned before and the idea here being that you should never have signal right you should always know and be able to attest that your detection Works in a way that you would expect it to work

and this cycle continues but it basically means that we never hunt for something that we research before more than once and the only exception here is when we have to do it again in a new environment so for those of you in companies where you might acquire another company and that company might have some negative Legacy infrastructure or something it could be anything so redoing your hunts in this situation is actually a really good thing to do and it can help you basically understand whether or not the infrastructure you're about to kind of like on board is already compromised and pre-owned for all intents and purposes or if it's kind of like relatively clean and this and this model requires minimal

setup and deployment because as long as we have eyes in the right places as long as we're ingesting the right log sources all of our detection handles the rest of this the entire detection pipeline kicks in and does the job for us which affects us kind of like outscale the amount of stuff that we're looking at so I've mentioned hunting a few times now and we actually have three kinds of hunting grounds I guess you can call them one of course is like this big repository of stored raw data which we hunt through with a sql-like interface um and we can also take the data out of here and process it into other tools if

necessary and this is data like DNS logging file monitoring execution logs user Behavior there's a lot of stuff that kind of like falls into this bucket and all of these things stand in the historical record right and they're very very good for manually hunting through the second category because our detection generates sorry our detection system generates so many alerts we actually make a clear delineation between high confidence signaling and low confidence signaling and we actually spend a lot of time looking through this like potentially noisy mess of low confidence signals and this is actually where we see the creativity of analysts come into play so those who might enjoy a particular detection domain that we

know has been researched or developed at some point in the past sorry at some point in the past or what kind of I typically gravitate to this kind of a domain and usually we have a few tricks up our sleeves to kind of like help sift through this data but there's no right or wrong way of doing this and that's kind of like a really important point but threat modeling definitely helps reduce the ambiguity and the complexity of this kind of data set and there's also natural variance here due to analyst creativity and we actually kind of want this we want to have diversity of thought and kind of approach here because we know that an

attacker or the set of attackers will be you know doing exactly the same thing as what we want to do and once you can find stuff in the noisy data then you iterate on the existing detections to try and make them more accurate try and make them have a higher confidence and then finally we have the live Fleet and an agent particularly called um this is probably one of the most powerful Tools in our Network it's an agent-based system originally designed to do live forensics remotely um historically for example in some countries it was pretty challenging to get devices shipped to us to be able to image disks and stuff like this but since deploying we no longer have to you

know wait for these this to be physically present with us and we've had it on the fleet for a while you know probably nearly a decade at this point sorry um It's relatively stable it runs on your windows the next Mac or the sort of stuff and we use it for all kinds of hunting and detection as well I'll talk a bit more about later on as well if I mentioned the the security surveillance team a few times and this is effectively our attempt at making a fully automated sock so everything sorry about 80 of everything that ends up in front of an analyst has been enriched with data of some kind and we also have a large proportion of

incidents that are handled by the pipeline which are automatically resolved by your machines today and so for example if it's like a very mundane event which is either routine or not really concerning and all of this stuff will be resolved automatically by the pipeline we also split our time between basically two core sets of activities forty percent of our time is what we call tactical surveillance or operations and this is very task driven uh it's typically going to shift kind of model so that you can spend your series of time oh that was really loud sorry about that um so you can send the set amount of time kind of like doing this sort of

stuff before you can take a bit of a rest um but this is kind of thing is like event triage you're hunting in pre-processed data at Fleet checks for new intelligence to see if it's actually been seen on the feet or not um things like that and the other 40 of our time is spent looking at technology-specific projects so Google has like a lot of Technologies as you can probably imagine right we have like Windows Linux Mac Android iOS like cloud like pretty much everything um so we divide the responsibilities for this 40 of time amongst the different platforms and this allows us to become very specialized in a given field and means that we can learn or try to learn

everything that there is to know about for example Linux persistence or Mac persistence and all of how all of these platforms have like work together but we do treat all of these platforms more or less equally because we you know can't consider one to be more or less important than the other a lot of the time and we know from experience that our adversaries do the same like they have people who are highly specialized in one particular area if they need someone to go and I don't know like do dll stuff in Windows they'll go and get the person to do dll stuff on Windows we want to be able to replicate this kind of experience

and we also work significantly on a meta level of things like you know Automation and testing within that respective field you know spending time on how many platforms we have how they change over time and how many people we need to engineer detection coverage in a specific platform and this is really all about you know determining how much time we set aside to do creative hunting that's what we want people to do most of the time and this helps keep us move forward and moving forward helps keep our detection detection posture kind of advancing and the key neither of you might be like hey well this is only 80 and you're right obviously um the rest of that 20 of time is this

kind of like Google wide um sort of thing where you can do projects on other teams like engage in community work all kinds of other stuff so we were looking about 80 of the time across full-time employee analysts but this might be the easiest to visualize with some examples like the hunting activities we do so um to get a look at some examples of that raw data set that I mentioned before and it's it you know the way that we interface with this is through an SQL like system that Google invented um it's very much like a tool we actually make publicly available called bigquery and bigquery is really good at tasks like this because it is basically

a means of massive massively parallelizing single query tasks across huge volumes of computing and then you know aggregating the results back very quickly and this means that we can search for things like executions or of a particular binary or a command string across all of Google across all time and we receive the results in like a few seconds basically but anyway when we're hunting the very first thing that we do is kind of like build a set of hypotheses so let's look at an example of that let's say we have a hypothesis of okay we have an attacker using a given domain for command and control so the very first thing we do is consider like what what does this look

like like what might the attacker do with this domain what might be C what kind of threat intelligence do we have to support a specific technique or the specific domain and then we can compare the DNS requests that are happening and have happened on the Google corporate network of which we have all of them we have every DNS that's ever been sorry every DNS request that's ever been issued at Google since about September 2009 and keep all of this and using this data we can kind of like find hits to this potentially bad domain and this is the kind of hunt that we probably do once initially and then we automate and kind of like you know kick

it into the pipeline so it does all this stuff for us another example looking at the live Fleet this time using go as I mentioned before there's a few things that we might like to do particularly when we're trying to onboard a newly acquired environment that we might have just bought something like that so the very first thing we'll do is typically deploy amongst other things ger and we have like this basic hypothesis right maybe the attackers if they were there left something really common behind that we can find and this might be for example something like an after a Cron job so for those who may be familiar with this these kind of tooling you use to schedule automated

tasks and they're pretty small files you can collect them across the entire fleet and process them with some some code um and basically understand what's been executing as scheduled tasks in this new environment and then once you have this big data set you typically start looking at the outliers because there's obviously like stuff in the noise still but the outliers are where you really find like the the clear signs of Badness here and it's pretty basic but honestly about nine out of ten times it works reasonably well and another example you might see is like launch demons on Mac so this this is where detections can come a bit more complicated so launch demons are scripts

which automatically manage system processors on OS X um and attackers might use these to maintain persistence on a device and generally if we're looking at server infrastructure here for malicious software like this kind of a search can actually work pretty well because servers are like usually well maintained you kind of like know exactly what should be running on them they're typically you know single or limited purpose but once you get to end user devices they become this becomes significantly less clear you know people will install just about anything on their laptops and as a result you can end up with way too many launch demons to review manually and a lot of the results aren't actually that interesting

either you might just find a lot of like adware for example which is that AOK you should do something about but it's not like the APT group that we're looking for but automation can help us out here too as you can probably imagine so another way Google attempts to tackle this detection and scale problem is through a tool called Deja this for those of you who are fans of Resident Evil I'm aware of what the logo looks like um its name itself is a portmento of deja vu and disassembly Deja this is a system developed by tag Google's threatened threat intelligence group or the threat analysis group and the Google Dynamics Team amongst others um it's designed to tackle a few of the

really difficult problems when dealing with binary analysis at scale today and part of this is that first thing we need to talk about reverse engineering and reverse engineering is really hard and really expensive to apply for example like a single module of the flame malware contains about 40 000 functions and Flames nearly a decade old now so even if you could reverse engineer one function every 60 seconds which isn't really realistic the entire module will take about a month to understand and there's obviously all the other modules as well you have to go through so the learning curve here is like really steep the knowledge even required to start reversing is pretty high and so when we're talking about binary

detection and binary analysis at scale we have to kind of acknowledge that reverse engineering is a scalpel that we have to apply very judiciously so anyway what stage of this so these are this is basically a similarity engine for functions and executables creates a met like a set of meta information associated with functions things like function names like specific attacker techniques common cryptographic routines all this kind of stuff and it's made easily discoverable to any analyst who might need it and it also earns new information every day it can be used to find names and uses functions or label entire sets of executables just to determine for example if a particular set of functions is a common everyday part of openssl for

example that we don't need to investigate and every binary that enters the Google binary analysis pipeline is also available and processed by Deja this so this basically provides us a constantly updated reference of how binaries are connected how they relate to each other and we can also approach Deja this from another angle so we can use data this to quickly pivot on a single function in highly variant malware so if we can find a particularly common function through disassembly which we use judiciously and give Deja this a sample hash and a function offset it will spread out and find all the variants available in its data store over all time and label them as related known Badness to the function

the function that we're looking at and then automatically find the label all future binaries that it sees for the same malware fan money that enters the pipeline at a future date but it doesn't necessarily need this human step we actually once it's actually seen enough kind of types of Badness of a certain kind it'll start formulating its own ideas about how binaries are connect connected and related together if a new never-before-seen binary is linked to a bunch of existing known through actor tooling via function similarity for example that you'll automatically generate a new detection rule out the back of that our pipeline can kind of see this Rule and propagate it through all of the log data that we

have to service a new detection that didn't exist before and all of this is really designed to save time effort resources of the humans involved remember humans are the key part of all of this I don't have a huge amount of time to go into like War Stories using Deja this but I will leave with one which is how it was able to attribute a sample of now the now Infamous wannacry so back in May 2017 uh the one of crime malware was really hitting in full force fire Eternal blue exploitation of you know unpatched Windows systems worldwide I imagine that many people in this room are very familiar with it given the impact here

but one of the questions that actually for those who aren't familiar want to cry was ransomware right it's like you encrypt your stuff demands payment for their safe return in theory but one of the questions I was asked very quickly was like what's the origin of wannacry and for those of you who might be in threat intelligence you might know that attribution is like really really hard to get right it's really expensive as well it often requires a large knowledge base of like threat actor ttps time patience to reverse engineer all of this stuff before you can come to a good conclusion and even with that conclusion it's difficult to be sure on the 15th of May 2017 Neil Metro tag

posted this kind of like somewhat cryptic tweet with the hashtag wanna Crypt a hashtag a one equipped attribution hashtag so like what did he mean with this tweet he was actually posting the results from data this so they should analyze Wanna Cry and as well as you know malware from many many other operations they had seen and it's normal operation mode it was able to find an 81 similarity between the functions shared in wannacry with canopy the back door malware that was linked in 2015 to a Lazarus group also known as North Korea and following Neil's tweet um Kaspersky Symantec and a bunch of others went public to also State their opinions of strong links

between wannacry and North Korea but the the ability to quickly pivot on this information allows us to understand our adversaries quickly and it means that we can also understand the motives behind the threats that we see daily another technology we use to try and Tackle this detection and scale problem is the notion of State collection and state reconstruction across the fleet the fleet Google's corporate infrastructure and this is basically about being able to recall the state of all of our machines at all points in time and the kind of state information we're interested in is things like process executions Network flow disk operations all of this kind of stuff and we want to record it in such a way that we can

reconstruct for a point in time the state of the machine and this process is most often seen in digital forensics and usually applied like ad hoc during these kinds of Investigations and it typically involves things like memory and disk acquisition and in this case it doesn't kind of prevent itself to solving the detection problem but it's still actually an incredibly valuable system because it kind of gives us the ability to understand in real time the state of the fleet you know what's executing who executed it how they affect the network stack for example how they relate to each other and so as a result we built the system that can do this the idea being that

maintaining the state for our entire fleet in real time could be a solvable problem with the massive caveat of providing you have a modest amount of computing and memory spare and we call this the shadow machine but what we really mean is that some kind of an abstract virtual machine we actually borrowed some Concepts from virtual machine introspection as part of this abstract VM architecture and with VMI guest applications run on a guest operating system this generates events which are observed by a monitor layer at the host layer for example so first of all we get input from the fleet we know it comes from all of our platforms from like a variety of detection agents and

some of these agents we write ourselves and but also some of these kind of like things we can ingest come from third-party tools as well but for example on OS X we have a binary agent called Santa and Santa is an open source allow listing tool written by Google if you're interested we also open source the allow listing management platform upload um and in Linux we use things like Integrity measurement architecture or IMA and which we get via order d and so the system monitor receives all these events as input it starts pattern matching to determine if the input depends on the host site or not and this bit this is basically to try and decide

whether or not to send the input to an abstract interpreter and the abstract interpreter is responsible for modeling the effects of the input on the host state and we kind of use this in things like you know compiler optimization program analysis as well but in that case we use them to understand the effects of the program without running it here we're trying to do it with a machine so yeah we're trying to understand the effect of the event without re-running the entire system and in our in our monitor the state could be considered almost as like a graph where State changes are adding or deleting vertices on this graph and we know all of the state changes and

can play them forward and backwards as the state changes over time and the abstract interpreters I mentioned before can freely call other abstract interpreters so we can kind of like have this multi-layered kind of a stack of events in many ways so the idea being if a host change happens the change or state change is recorded and the end result is this detailed state of a real machine that we can almost consider to be a shadow and we can query this in real time using a query language invented specifically for this purpose so while processing and recording State changes we have the opportunity to evaluate them against the rule set and this is probably the most important

usage of the system for the detection team so the idea being that with minimal code we can describe a state change of interest and this might be for example bash forking tools normally associated with reconnaissance right and a new detection is born but we can put more constraints here too so we could say that it matters that in this kind of like flow of things there's also netflow in this execution so for example I bash my execute it might Fork python which then communicates over the network and then that might Fork to do some kind of like Recon command or something like that and this might even be an even higher priority signal than the one I mentioned before

so the graph on the slide here is an example extraction of a small section of the state fired from a detection rule Written In This Very system where the rule is described in a few handfuls of lines of code and it's designed to look for a reconnectivity congruent with someone who successfully gets a reverse shell but there's one thing I didn't mention about this Rule and the fact and it's basically the fact that we don't have to Define what a reverse shell looks like we don't have to say like oh a reverse shell probably starts with someone running like python hyphen C import socket whatever or like bashes piping stuff around and we didn't describe any

of that all we described is that if we see any new process execution that results in outbound net flow that Forks a Recon tool of some kind like bin mount for example then that's of interest to us and once we write this kind of a detection then we can run it across the entire fleet of Shadow machines for current and historical State information and this basically means that we can find any machine that has previously exhibited this kind of behavior and the result of this kind of search is actually very very high fidelity foreign so to return kind of like fundamentally to an existential question I mean like what's coming next to this model we've

got some stuff like what do we think is going to happen next and I think the the reality is like the more complexity we build into our systems the more we need to understand and acknowledge that humans won't be able to defend them forever the complexity is rapidly outstanding out expanding what we are capable of people as doing so as a result kind of by building these self-defending systems using AI ML and all of this stuff might be the best path for us but this takes you know research development experimentation proof metrics testing many many other things and it really isn't easy I'm definitely not acknowledging that this is you know an easy task going oh just you can do

this no problem but it means in some ways that we actually are putting ourselves out of a job right perhaps we'll move into kind of like new and more interesting rules of actually teaching machines how to do this themselves but I mean realistically the only time we'll tell here so I've included a few resources on the slide for completeness here and feel free to take photos of the slide if you want to by the way um I'll pause this for a few seconds while we do that if not I can like leave the slider at the end so don't worry about like smashing the camera button okay I'll probably take it down now so apologies

or not um but for those of you who might be interested in finding out more about the wider security team at Google I'd really recommend checking out the hacking Google documentary which is available on YouTube and it's available at g dot Co slash safety hacking Google and it actually features many of my colleagues and the partner teams that I mentioned before um but at that point I think thanks for your time and I can probably stop the questions thanks [Applause] awesome guys thank you very much uh we'll take some questions uh there was a hand here very quickly foreign thank you uh so the question is how do you test your detections after they've been rolled out

as in like once they're live in production yes so it's probably partly through unit testing I like each component of the pipeline that's like using the whatever platform specifically like in golang for example just the unit testing languages at libraries and golang we also have some systems that can do extended integration testing so we expect at one end to put something in three or four systems down the pipeline we expect something to come out and we can do this pretty granular granularly between each system I probably can't mention like specifics of how we do it because it's very bespoke to be honest um but it's like a blend of unit testing and integration testing and

within reason in some cases end to end um with the idea being we fire an event at one end ourselves using a set of testing Rigs and we see if it propagates all the way out to the queue [Music] yeah yeah so the question was do we do internal Road teaming on top of that and we do have an internal red team um it's the offensive security team um they Sorry probably one of the best uh tests of production detections that we have to be honest um and we work very closely with them like every time we do one exercise or they sorry they do an exercise we kind of like get their activity log and we go

through and see hey dude how much of this did we see like is the things we can improve on so they're like the real broadcast I guess um but we have all of that other kind of like back testing that supports it as well does that answer your question awesome cheers thanks yeah uh first of all thank you very much um and one of the earlier slides you talked through the life cycle um one like uh this one yes yep so you said that you take something uh as a TTP you go away and you research it with the amount of ttps there are coming out and the rapid changing how do you decide which ones to

prioritize and do you automate that as well yeah or does that kind of the manual start of it all that's a good question because ttps are unbounded in their growth right and we probably do a few things the first is to know whether or not we have threat intelligence to back their usage in the real world that's probably the most important factor the second factor of it is whether or not we know between detection and control like what the current hardening state of it a given set of systems that might be targeted by a specific ttb and a lot of this stuff is done semi-manually but we do have like some way of automating kind of like you

know what stuff do we see most frequently we have metrics that can support it um but you you're right it grows unbounded and that's one of the things like we're currently trying to figure out it's like rttp is a good way of expressing detection like or expressing Behavior because they're very difficult and they're very granular which don't always map well um so it's a few things but threat intelligence definitely have the informs it as well let's answer your question awesome yep great talk um sorry thanks cheers um my question was around desjardis and uh false positives um that's a good question um you know as with every kind of like granular system where it's like a number

between zero and a hundred right it's like was is 73 bad or is like 24 bad like and defining that takes a lot of tuning to be honest like in this case um this was actually kind of like manually determined to be correct before we went live with it um there is definitely a false positive writing it I'm not gonna it's definitely not perfect right and typically what happens is if we see something where it's between thresholds of what we think is almost bad we'll kind of like go back to human be like hey like we probably need to figure out a bit more accurately whether or not it's bad um but to be honest with some of the

detections we have do actually utilize this but only above a certain threshold when we know it's going to be bad or it's likely bad um the stuff that's kind of like towards the lower end of Badness we're like not that convinced yeah so it's again it's like combined with a bunch of other stuff as well to make a decision um Sansa yeah microphone yeah thanks for the talk um I'm curious if you could speak to some of the challenges of introducing the AI into environments that you don't control so it makes sense when you're talking about this on your own corporate Network corporate laptops but Google obviously provides uh lots of services to users and I imagine it'd be a lot harder to

kind of Baseline what normal looks like there when it is a lot more out of your control yeah so actually one big caveat I mentioned here this is actually only internal Focus so we don't ingest any user information in this in this model um but you are right I think a big part of the challenge of AI and this kind of detection problem is determining normal and whether or not you have sufficient data to determine that um so I can't really comment on the user Behavior stuff because we don't do like customer data it's really internal only for Google um but there's a lot of training and a lot of proof and we've often just been

like hey this thing just doesn't work so we just like scrap it basically like it might take us a few months or years to get there but we're kind of a big believer and not continue on something if it's not worth continuing on it's like kind of answer your question

that's a very long answer um we can talk about outside if you want okay um thanks for the talk it was really interesting with regards to the shadow machines that you're running what level of granularity do you have in terms of being able to go back in the data are we talking like literally system level calls or just like process executed or something like that yeah and it's a more kind of like something like symbolic execution or in terms of processes or something like that yeah we do draw a line and only look at certain like for example on The Cisco example of only certain ciscals of interest and that's primarily because it's it there's a lot

of data that we can pull here so we do have to make a call in terms of like what we're interested in and typically like the Cisco level is roughly where we in terms of writing detections we don't typically go lower than that in this particular engine but we do have some lower stuff than that and but it really heavily depends on the platform on which it comes because if it's basically if it's not logged we don't see it right that's kind of the Crux of it so um some platforms are more effective in this model but um typically like Cisco is where we when we're writing detections the lowest will typically go yep thank you for the talk a good

question about the hypothesis testing what do you do um say you made a hypothesis and the hypothesis didn't span out do you use the data you collected or do you just start from scratch and go back to the slot yeah I think you know like it's always worth documenting everything that you do right and that this is basically one of the main reasons for doing it it's like you might never realize like two years now I'm like oh we've looked at this before like how far do we get there so even if we go through this hypothesis and get to a point where it's not feasible we might still have a detection that's in that low confidence

signaling base that I mentioned before that we might be able to combine with other stuff at a later date um but sometimes we do actually just see stuff where we might even leave it in that low confidence signaling bucket and it might be so inaccurate that it's just worth turning off entirely and that's pretty pretty common as well because we when we combine all of this stuff like we can be reasonably sure about how it all plays together but it's not until you actually run it in the real environment that you realize how Goods or bad something is um so some some stuff we reuse and part of that is turning it off if it doesn't

work um but not all of it is kind of in that bucket at the same time is that good okay awesome um just before I finish um if you have any other questions that you didn't want to ask them in this forum I'll be outside so please just come to me and ask him wonderful Troy wonderful thanks very much for getting one more time