Provisioned Privilege: Agentic AI as Designed Lateral Movement

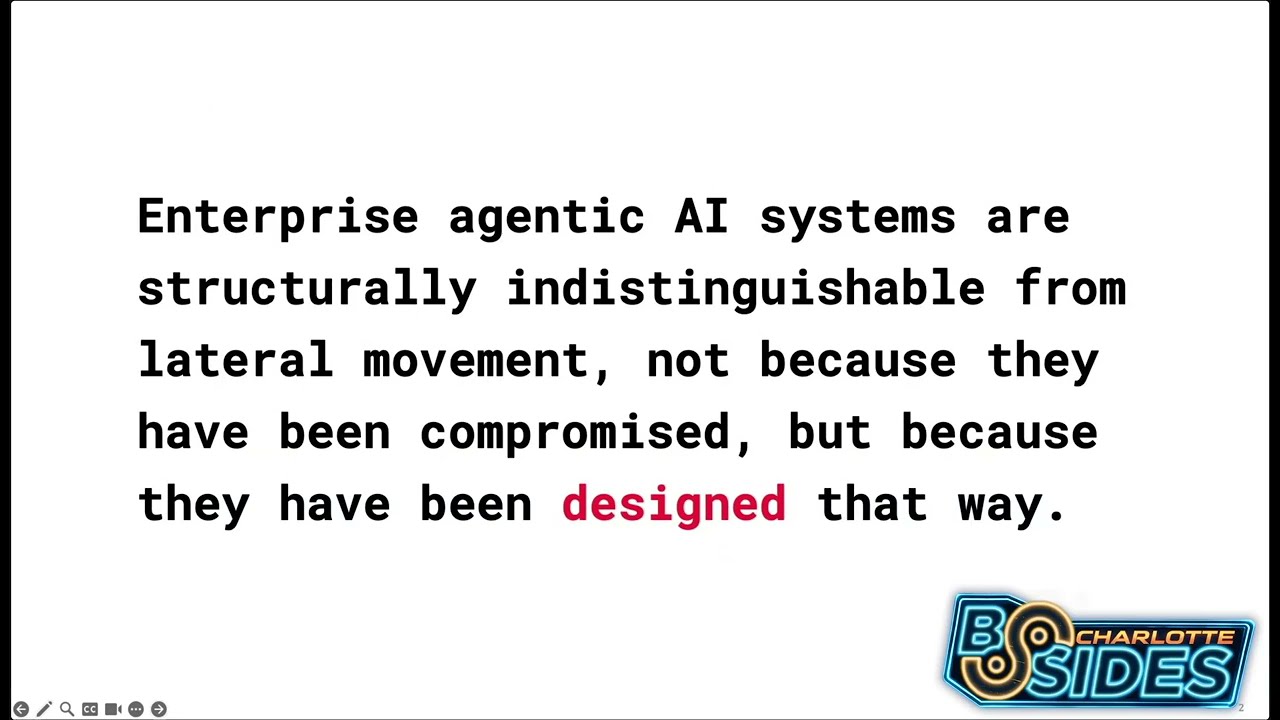

All right, my name is Praid Devileni. I am a lead AI scientist at Duke Energy. >> And I am Doug Garberino. I am a lead cybersecurity architect at Duke Energy. >> In today's talk, we are talking about agentic AI. I know RSAC is going on as we record this, so everyone is talking about agentic AI, and this is a good time for the cybersecurity community to come together and ask the important questions about agentic AI: governance, identity, and detection. That is what this talk is about. Specifically, how does agentic AI compare to lateral movement in traditional security? How do we know when it is malicious versus when it is explicitly authorized? How do we know that an automation is not actually an attack, and how do we know when that line is getting blurry?

If there is one line that captures the thesis of our talk, this is it: enterprise systems are adopting agentic AI, which is structurally indistinguishable from lateral movement. In lateral movement, an attacker gains access to systems one after the other, chaining and moving across them laterally; that is what we consider an attack or a compromise. In agentic AI, we give an agent a goal. It plans and has access to multiple systems, so it chains tools across those systems and moves between them in the same way that, in traditional security, looks like a lateral movement threat. So we want to ask the key question: if the behavior is authorized by design, how do we know when it is actually dangerous? It is a very blurry line.

Before we get into framing agentic AI against cybersecurity, let's learn a little about what an agentic AI system is. As an AI scientist, I have leaders coming to us every day saying,
"Yeah, we need to put agentic AI workflows into the system." But agentic AI is in a league of its own compared to generative AI or traditional machine learning systems. Everyone associates agentic AI with autonomy, but it is a little more than that. Once an agent gets a user's input or prompt, it uses that prompt to understand the user's intent, breaks the intent down into subtasks or goals, and creates a plan for how to execute it. We already talked about how agentic AI has access to multiple tools: for example, querying databases, opening a ticket, or sending an email on behalf of a user. The agent acts on its plan through those tool calls. Once an action is done, it observes the result, checks whether that result is actually what the user wants, reflects on its strategy, and continues that loop until it achieves the goal. In this process, it plans, it has long-term, short-term, and session memory, and within a single session it has access to multiple systems with read, write, or, who knows, execute access. All of this is what makes up an agentic system. It is deliberately in a class of its own compared to the generative AI and traditional AI systems we have seen so far, which are much narrower in scope.

Next, let's talk about mapping agentic AI to security, which is the core of this talk: the framing. If we look at agentic AI here, as I just said, the user gives
a prompt, which we can see on the left, and that goes into the agent, where it plans its tool calls. It has access to enterprise systems through tools and APIs. Whether we give it one-time access, just-in-time access, or persisted access is where system design comes in. On the right, we are looking at a breach. MITRE ATT&CK is what most enterprises use to understand the threats within a system, and here on the right we can see that host one maps to server one and gains access, then gains access to host two, and from there gets to the next one. If you compare the two, there are a lot of parallels: what we call an agentic AI workflow would be considered a breach in a traditional system. It is the same capability with different authorization. This is where we can look at it through an agent's lens versus an attacker's lens, exactly what I just said, but in a more deliberate context. What if what we call an agent workflow is actually lateral movement? That is the blurry line between automation and attack in agentic AI.

This is also where we think the cybersecurity community needs to pay attention to agents and their identity. How do we govern them? How do we give them access to multiple tools, and where should humans be involved in the system design? Even within agents, once we give them access, how do we know whether they are overprivileged for what they're supposed to do? >> So security programs shouldn't blindly trust agents. They should look at this through an attacker's lens, but also look at when an agent is going beyond its purpose and how we know it's
not a breach of trust. That is what this slide tells us: it is the same behavior with a different label. But even within this, there are two different lenses on an agent: where its behavior is acceptable, versus where it is overprivileged and actually a risk.

Let's look at a very simple representation of this. We have an AI agent with access to an email server, a ticketing system, and so on, and we have given it all the privileges of each. It extracts whatever information it needs, because that is what we have provisioned the agent to do in terms of permissions. For example, I say, "Hey, go to my email, get whatever I have unanswered, and automatically put it into our ticketing system." So it goes in and updates the tickets. This is exactly what we have provisioned, or authorized, the agent to do. It is all normal operation. The issue comes up when these actions are chained together.

Here's what we're looking at: an agent reads an email, extracts sensitive content, and can then either write it into GitHub or send it externally. Again, individually these are all authorized actions. But one thing we have to remember about agents is that they carry context from one system to the next, so we have to think deliberately about what happens if context or information from one system is exposed in another, and that is exactly what is happening here. The actions are all authorized individually, but when an agent chains these tools together to pursue its goal, the whole chain results in a security incident. I don't think we have an answer for this yet, because traditional security has always been about defending systems
rather than the offensive side of being able to do this. In a normal context, when an agent is authorized to do all of this, each step is acceptable in both the traditional and the agentic sense. It is the chaining across systems that makes it a security or breach incident, and our existing telemetry systems aren't built for that. If you look here, an internal agent takes sensitive data from a secrets table, exports it into a CSV file, and writes it to an external bucket. Each of these is something we have deliberately provisioned the system to do; every action is allowed. This is exactly what we want the security community to pay attention to, because agentic AI opens up a whole set of questions we don't have answers to yet. One of the things Doug and I continually talk about is: do people understand the security aspects that are present? Do people understand how harmful it can be without that knowledge? And now, off to Doug.

>> Thank you very much. My name is Doug, and I'm a lead cybersecurity architect at Duke Energy. I've been working in the utility sector, both at
the Department of Energy and in private industry, for 20 years or so, so I've seen a lot come around. I was there when cloud started. We saw a lot of issues back then, and there were a lot of security concerns at the beginning of cloud: Who stores it? Who owns the data? But we had it more contained; we knew what it was. This new age of agentic AI opens up a lot of security questions and uncovers some of the gaps in our security models. This is where frameworks like MITRE ATT&CK work well for traditional security, while MITRE ATLAS really looks at AI and agentic AI, so it is starting to close that gap.

So, background. Security has always assumed that risk comes from unauthorized behavior: a bad guy getting in the front door (physical security), or somebody external trying to penetrate my website. That is human behavior. I don't want to say it's easy to track, but it's easier for us to track, because we have decades of information to learn from so that we can quickly identify it. Then you have internal threats: an employee or contractor who is inside my network because they're supposed to be there. We've authorized them to be there, but they've found loopholes in our security boundaries and cracked them, or they've gotten into a position where they know somebody who has access. This is where we start to ask how we detect behaviors when we're talking about agentic AI. Agentic AI has been around for a while, >> right? Right. AI has been around for a while as well, but the way we're using agentic AI now, the way businesses want to use it, it's no longer just
data scientists like my co-presenter, who know how to create, develop, and use these things, getting in there and doing this. Now we have me. I'm not an AI expert, but I can go in and create agents and start putting things into place. So what guardrails do we have out there? When I think about security, it has always been very deterministic in nature. It relies on the specifics of a role and what that role has access to: cause and effect. But as we look at the agentic side, we have to look at what we need to do differently.

We need semantic observability: visibility into the agent's reasoning. You can't get inside my head to see my reasoning, but with an agent we need to. So we look at the agent's reasoning, not just its actions. With humans, we have thousands of years of behavior to draw on, so we can roughly predict their next actions. I spent time in law enforcement working with those folks, and they will tell you that if they see somebody jumping the turnstiles at a New York subway station, there's a good probability that person is going to move on to something even more illegal, more nefarious. Agents can do the same thing. We program them, but now we're adding autonomy and giving them reasoning, and we have no background in this whatsoever. So these are the things we have to look at.

Deviation from objectives: let's say, as an example, an agent's stated goal is to optimize HVAC. Most of us have smart homes now, so we probably have an agent out there we just don't know about. Optimizing HVAC efficiency is great. But from a company perspective, I have an agent running
around, and I ask, "If I want to optimize HVAC efficiency, what does my agent need access to?" Maybe it needs to check our payroll system for occupancy. >> So, is this building even full? Or do I only have 50% of the people in there, and where are they sitting? >> Well, now I could have a semantic mismatch between what it does in the HVAC system and what it does within payroll. The agent may have permission to access both HVAC and payroll, but its reasoning doesn't necessarily connect back to the stated objective, which is to optimize HVAC efficiency. So this is a gap, and it's a risk that should be sending off signals: What is this agent actually doing? Why does it have access to payroll when that isn't a stated goal?

Then we have the guardrail layer. This is really the difference between a human risk and a non-human risk. Right now, our security risks are usually handled downstream. We have to move upstream and get there before the action, not after. So instead of identifying lateral movement once it has already happened, we need to intercept the agent's plan at the orchestration stage, before
it executes.

So what does that mean? Identity governance was built for static actors; that's what has been around for decades. But can it accommodate entities whose core function is autonomous approval? I get it, it's happening, it's here. But this is a dangerous shift. Traditionally, identity governance and administration (IGA) is built on a simple principle: a human is always in the loop. Someone requests access, someone else approves it, and then it is provisioned. Three distinct steps, three distinct roles. Agentic AI collapses all of this into a single microsecond. It's the death of separation of duties: an agent can autonomously authorize its own subtasks or provision its own temporary access. >> From an IAM perspective, that's not good. That's not what we want to see. The primary control of IGA still has to be enforced: the actor and the approver cannot be the same entity. This is where we get into separation of roles and duties for agents, and governance of logic, not just identity. We can no longer govern only who the agent is; we have to govern what it is allowed to decide. So identity governance must evolve to include attestation of logic: verifying not just that the agent has permission, but that its reasoning falls within an approved boundary. Again, data scientists, you've known this for years; the rest of us are catching up.
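
As a rough illustration of intercepting a plan at the orchestration stage, here is a minimal Python sketch. It is not from the talk, and every name in it (`ToolCall`, `OBJECTIVE_SCOPE`, `review_plan`, the resource labels, `hvac-agent`) is a hypothetical assumption. Before any step runs, it flags steps that touch resources not mapped to the agent's stated objective (the HVAC-versus-payroll semantic mismatch) and access grants whose approver is missing or is the agent itself (the actor and the approver cannot be the same entity).

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class ToolCall:
    """One step of an agent's proposed plan (hypothetical shape)."""
    actor: str                      # agent identity proposing the step
    action: str                     # e.g. "read", "write", "grant_access"
    resource: str                   # e.g. "hvac.sensors", "payroll.records"
    approver: Optional[str] = None  # principal that approved the step, if any


# Hypothetical objective-to-resource map; in practice owners would maintain it.
OBJECTIVE_SCOPE: dict[str, set[str]] = {
    "optimize_hvac_efficiency": {"hvac.sensors", "hvac.setpoints", "facilities.floorplan"},
}


def review_plan(objective: str, plan: list[ToolCall]) -> list[str]:
    """Return findings for a proposed plan before any step executes."""
    in_scope = OBJECTIVE_SCOPE.get(objective, set())
    findings: list[str] = []
    for i, step in enumerate(plan):
        # Semantic mismatch: the resource is not tied to the stated objective.
        if step.resource not in in_scope:
            findings.append(f"step {i}: {step.resource} is outside the scope of {objective}")
        # Separation of duties: a grant needs an approver other than the actor.
        if step.action == "grant_access" and step.approver in (None, step.actor):
            findings.append(f"step {i}: access grant needs an approver other than {step.actor}")
    return findings


if __name__ == "__main__":
    proposed = [
        ToolCall("hvac-agent", "read", "hvac.sensors"),
        ToolCall("hvac-agent", "read", "payroll.records"),
        ToolCall("hvac-agent", "grant_access", "hvac.setpoints", approver="hvac-agent"),
    ]
    for finding in review_plan("optimize_hvac_efficiency", proposed):
        print(finding)
```

Run as a script, the example plan yields two findings: step 1 (payroll is outside the HVAC objective) and step 2 (the agent approved its own grant). An empty result only means these two checks found nothing; it is not evidence that a plan is safe, and a real objective-to-resource map would need owner review rather than a hard-coded dictionary.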

Every time we have a breach, one of the first things we ask, no matter where you are in the agency, >> what's the blast radius? What did they get? What actually happened? Now we're into agent-chained actions, so what does that look like? Traditionally, in a utility environment such as ours, ownership is very siloed: you have owners of databases, owners of networks, owners of applications. Agentic AI doesn't respect those boundaries, >> right? A single agent can move across all three in one operation, and when something goes wrong, the old ownership model has no clear answer for who is accountable. >> So there is no, well, I won't go there, but there is no one person to call. You now really need an orchestration owner, someone accountable, and that accountability spans two roles: the business owner, who defines the agent's objective, and the engineer, who designs the workflow the agent follows. If no one owns the goal and the logic, no one owns the outcome. >> So you don't have that person or people to go back to. Then there are shared responsibility models: what does that look like in the AI era? Security teams won't own the blast radius of every agent's action. That's not realistic or appropriate to
own from a security perspective. But then who does own it? This is where we work with our architects and data scientists to architect a cage around that agent. Identity and access management comes into play heavily here: we have to know what that agent is, what it should be doing, and when it's going outside its bounds. In practice, we are the means of enforcing scoped credentials at every handoff. As my co-presenter was talking about, going from system A to system B, you have a handoff, and you have to enforce your credentials as you move between them. That way we don't have an overlap of roles, and elevated privileges in system A do not carry over to system B. You have to be role-specific. You could have one agent doing it, but it definitely has to be role-specific. Every time it crosses a boundary, what we in security call a security boundary, its role changes. That's no different than you and me. We go into the office and we have one set of roles, so I may have elevated privileges at the office that
I'm at, but if I go to my colleague's office, I don't have those elevated roles; my badge works differently because that's the role I've been given. That's least privilege. So with agents, we really have to look at the least privilege they need as they cross these systems. At what point does cumulative authorized access become unauthorized impact? That's a question that needs to be confronted up front. >> A huge question, too. >> That is it. I like eating, so think of this as a recipe-versus-ingredients problem. We routinely approve
individual permissions, API access to billing, read access to scale, to metadata, write access to customer logs because each one on its own looks low risk. But an agent doesn't see individual ingredients, it sees the combination. So you get emerging risks, right? The danger isn't any single permission. It is the movement of the agent discovers a combination of designers never anticipated. Individually authorized actions can produce collectively unauthorized outcomes. Think about that for a little bit. That's a that's a major thing that people are not thinking about as they're developing agents. And a lot of companies right now are going down this path and they're I expect them to fail, but I want them to fail soon. And I want them to fail

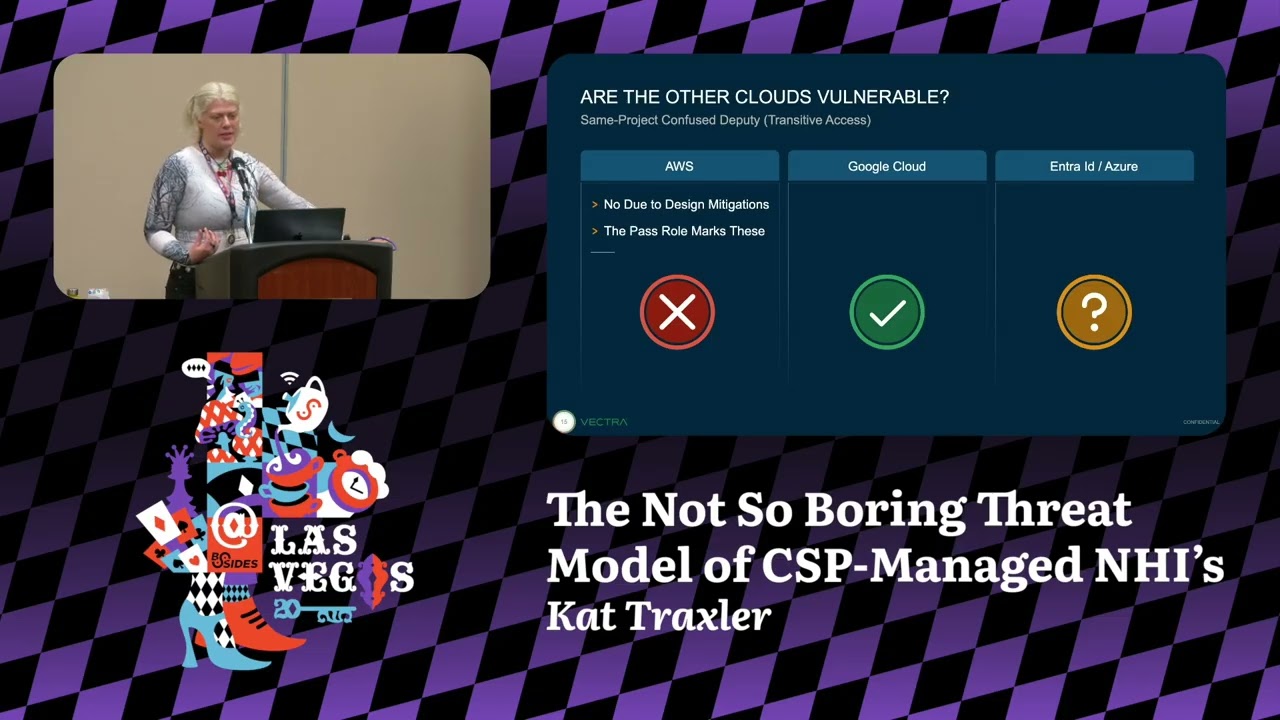

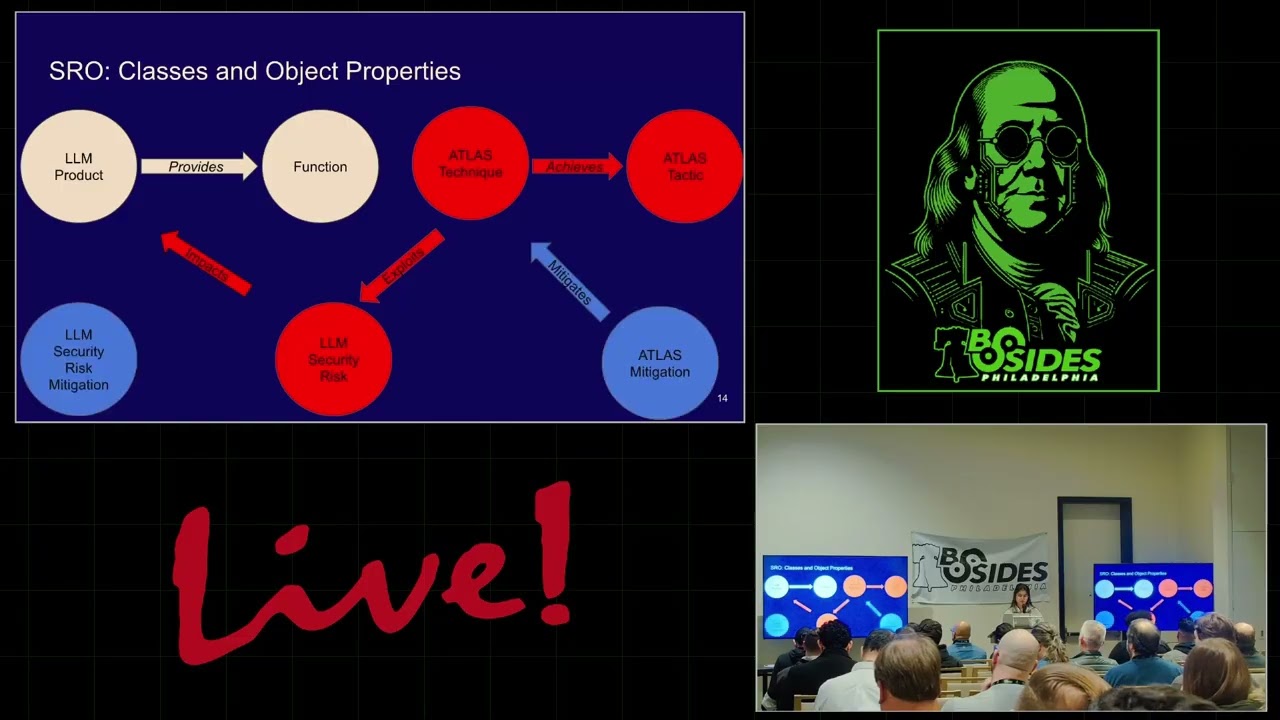

small, not something that we've seen in some companies out there where we've had, you know, recently data breaches and stuff like that because uh they kind of failed in some of their pieces of it. So to answer the contextual, you know, kind of the guard rails around this policies, which we typically rely on, just don't evaluate individual actions, but they need to address the full sequence of steps the agents is proposing before it executes the first one. We have to review the recipe not just approve the ingredients. So stepping into stepping back a little bit to the MITER attack. So this is really the been cyber securityurities like focus for a long long time. We love

the MITER attack process. It's the baseline. But when AI started to come around a couple of years ago, MITER's reaching out to a lot of us in the community saying hey how do we take this into the AI realm? So last I think it was last year might have been late 24 but they released the MITER Atlas framework and this is really if you want to get into AI and you want to look at security this is the place that you want to go to really look up of what's going on and what to kind of look for and it gives you some great examples. It gives you some tools. It gives you some stuff.
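
The recipe-versus-ingredients point, and the earlier secrets-table-to-CSV-to-external-bucket chain, suggest evaluating the whole proposed sequence rather than each call in isolation. This minimal Python sketch does that under loudly stated assumptions: the source and sink labels, the `Step` type, and `review_recipe` are hypothetical, and "taint" is tracked very coarsely (once the agent reads anything sensitive, any later external write is flagged).

```python
from dataclasses import dataclass

# Hypothetical data labels; real systems would derive these from data classification.
SENSITIVE_SOURCES = {"email.inbox", "db.secrets_table"}
EXTERNAL_SINKS = {"email.external", "storage.external_bucket", "github.repo"}


@dataclass(frozen=True)
class Step:
    action: str    # "read", "transform", or "write"
    resource: str  # resource the step reads from or writes to


def review_recipe(plan: list[Step], allowed: set[tuple[str, str]]) -> list[str]:
    """Check every ingredient and the whole recipe before the first step runs."""
    findings: list[str] = []
    tainted = False  # True once the agent's context holds sensitive data
    for i, step in enumerate(plan):
        # Ingredient check: is this single action permitted at all?
        if (step.action, step.resource) not in allowed:
            findings.append(f"step {i}: {step.action} on {step.resource} is not permitted")
        # The agent carries context forward, so a sensitive read taints later steps.
        if step.action == "read" and step.resource in SENSITIVE_SOURCES:
            tainted = True
        # Recipe check: tainted context reaching an external sink.
        if step.action == "write" and step.resource in EXTERNAL_SINKS and tainted:
            findings.append(f"step {i}: sensitive data may flow to {step.resource}")
    return findings


if __name__ == "__main__":
    allowed = {
        ("read", "db.secrets_table"),
        ("transform", "local.csv"),
        ("write", "storage.external_bucket"),
    }
    plan = [
        Step("read", "db.secrets_table"),
        Step("transform", "local.csv"),
        Step("write", "storage.external_bucket"),
    ]
    # ['step 2: sensitive data may flow to storage.external_bucket']
    print(review_recipe(plan, allowed))
```

Each step in the example is individually permitted, yet the sequence is flagged at step 2. The coarse taint flag trades precision for simplicity; tracking which data actually flows into each write would reduce false positives.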

We won't go into MITRE ATLAS here, but definitely check it out; it will be an eye-opener for you. Yep.

And for the last one: as you could see, I see a big part of this as threefold. Number one, lateral movement is a threat in traditional systems, and even within agentic AI there are two parts. If an agent is chaining tools to pursue its goal, get through its actions, and produce outcomes, how do you know when it's safe, and how do you know when it's unsafe? We really have to move from "individually it's all good" to looking operationally at all of these chains of events, because that's when the risk, or breach-like behavior, comes about, even within a single agent. That demands a genuinely new way of thinking about identity. Until now, we have had AD groups that deliberately ask: What is the purpose? Why do you need this? They grant one-time access, and generally we would like that access taken away afterward. We also have to think about what it really means for identity governance and detection when breach-like behaviors are produced by agentic AI systems. Humans ideally work eight hours a day, while we want agents because they work 24/7. But how do you know they aren't doing something they shouldn't when we're not around to make sure bad things don't happen? We really have to take the risk into account when we authorize agents and give them access to multiple systems in the enterprise. That is the core of this work. >> We thank you for your time today. >> Thank you.