Combating Generative AI's Privacy Abuses

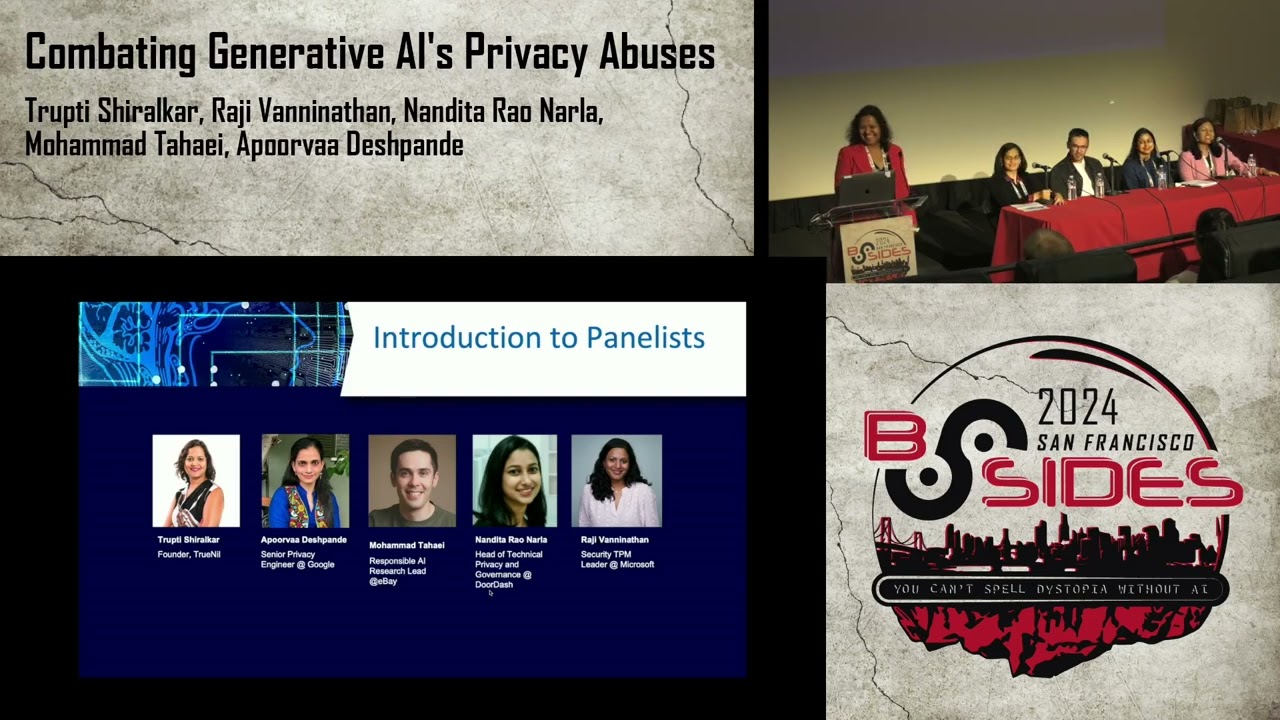

Hi everyone, welcome to our upcoming panel. I hope all of you had a good lunch and are now ready to listen to our wonderful panelists. Among them is Raji. Hi everyone. Trupti is our panel moderator. Hi everyone. Nandita. Hello. Mohammad. Hello. And Apoorvaa. So please give them a round of applause and a welcome. Thank you very much, Alexandra. Before we begin, I would like to express gratitude towards all the amazing volunteers, sponsors, and organizers of San Francisco BSides and, most importantly, all of you, the attendees. Because of you this is so special. All of us feel like movie stars here. Right now this hall is fuller than a typical movie

show. I feel so honored and privileged. I hope all of you got your popcorn and coffee; I don't want to see the results of a food coma here. I know we just had a delicious lunch. So, you guys are ready? Let's get rolling. It's no secret that the world is smitten by GenAI. You see its usage everywhere: whether you're booking an airline ticket or writing a user story to help your product manager or engineering manager, we are using ChatGPT. It's everywhere. Let me tell you some of the amazing stats that I recently found: by 2026, 80% of enterprises are projected to adopt LLMs, and we are seeing that almost every day, right, in

our organizations as well as our friends' organizations, and in all the common tools that we are using there is the influence of GenAI. In fact, EU law enforcement has predicted that 90% of online content will be AI-made. The OpenAI platform is fueling a booming app market with 2 million developers. How amazing is that? By 2030, GenAI is set to be a $180 billion market. Now, with such astronomical growth, let's focus on what kind of privacy aspects we should be aware of proactively, so that we can make sure there are no privacy violations. Before I introduce our esteemed panel, I would like to mention one thing: all of these are subject

matter experts, and their opinions and remarks do not necessarily represent their employers. With that, Apoorvaa, would you like to introduce yourself? Hello everyone. So glad to be here, and this is a first for me, talking about privacy in a movie theater, so I'm excited for that. My name is Apoorvaa Deshpande. I'm currently a senior privacy engineer at Google Cloud, where I work on cloud privacy and data governance. Prior to that I was a privacy engineer at Snapchat for a few years and learned my fundamentals there, privacy by design, and did a lot of work around designing and

building PETs, privacy-enhancing solutions. Prior to that I did my PhD in cryptography at Brown University. When I'm not doing privacy, I'm also a professional musician, so you can check out my music as well if you are interested. How exciting, thank you, Apoorvaa. Over to you, Mohammad. Thanks. Do you hear me okay? Yes. So my name is Mohammad Tahaei. I'm a responsible AI research lead at eBay. Our team works on safety and trustworthiness, so we ensure our products for our sellers and buyers are safe and trustworthy, specifically in generative AI applications. Before joining eBay I worked at Bell Labs, which is an

R&D team for Nokia, working on similar topics around responsible AI. My background, though, is privacy and security, and ensuring that we include human values in technology: how do we include ethical values, how do we include human values in technology, and how do we make sure that the technologies we're building are not built just because of technological advancement but are also accessible and usable for people. I've transitioned from academia to industry and I'm enjoying that very much at the moment. I'm also very new to the US. I moved here about eight months ago, so I'm enjoying a lot of the hikes in California. Yeah, excited to be here. Thank you, Mohammad. We absolutely

needed you to add the diversity factor, didn't we? Nandita? Hi everyone, I'm Nandita Rao Narla. I lead the technical privacy and governance team at DoorDash, which focuses on privacy engineering, privacy operations, product privacy, and privacy assurance. Before DoorDash I was a founding team member of a privacy tech company, and prior to that I was at EY in consulting, focused on privacy, data protection, and data governance. I've transitioned from cybersecurity to privacy over the last 12 years, so it was not a sudden pivot. My background is in computer science; I got a master's degree in cybersecurity from Carnegie Mellon. Currently I'm also

pursuing a master's degree in landscape architecture so that I'm not always in front of a computer for the rest of my life. Much needed. And I am so grateful to Nandita because she's also part of many standards bodies that define and support the next-gen privacy laws and regulations. So thank you, Nandita. Raji, our final panelist. Hi everyone, my name is Raji Vanan. I'm an engineer by education, currently working at MSRC at Microsoft, where we run the end-to-end vulnerability life cycle, from when vulnerabilities are reported to us all the way up to release. So if you've heard of Patch Tuesdays, that is us. Throughout my career I have worn

many hats: security engineer, security architect, compliance. When GDPR became a thing, that's when I did a little bit of privacy, privacy by design, and data protection. So I have built a lot of programs leveraging both security by design and privacy by design. In the world of GenAI, as we've all seen with ChatGPT and copilots around, the line between security, privacy, and safety is all coming together, right? It's all blurring. There's a lot of connection between technology and humanity, and that is why it's really important for us to think outside the box. It's an area I'm very passionate

about because in general I want to make the world a better place, and I think we need to do this now, before things change. And when I'm not doing security, I love to hike. Last year I did the Half Dome hike at Yosemite; if anybody's interested, you should try that. Thank you. Thank you, Raji. Hi everyone, some of you know me already. I'm Trupti, founder of Trill. Trill is an open-source project dedicated to protecting very sensitive data like genomic data, something that, if it gets compromised, can compromise the medical privacy and confidentiality of not only the patients but also all their blood relatives. And this type of data,

when it gets hacked en masse, has the potential to enable bioweapon-type attacks, so I'm really passionate about solving this problem. Prior to this I did product security for almost 16 years at numerous organizations, so software is my thing. Software security: I can't imagine a world without that. When I'm not doing security I love to practice, promote, and teach mindfulness. I'm also a certified mindfulness instructor, so if anybody's burned out here and wants to chat with me afterwards, I'm more than happy to help with that. Let's get started. Today we are going to talk about different privacy attacks, and we're not going to stop there. We are

also going to talk about AI safety and ethical aspects. I'm especially intrigued by how you really define ethics in this world of responsible AI, right? Then we're going to discuss results and insights from some case studies, as well as incident response practices: can we use the same incident response that we have been using for the last 20 or 30 years, ever since software started eating the world, or do we have to change now with the introduction of AI, and what needs to be done? So this is going to be our agenda, and I will shoot my first question to our very own Apoorvaa. So, Apoorvaa, we have seen

gigantic, enormous, astronomical growth in AI usage. Please tell the audience: what are some of the privacy attacks or violations that you have seen that worry you and keep you up at night? Yeah, as you mentioned, GenAI and LLMs are a truly exciting technology, and we are glad to be experiencing this, but we need to slow down to understand the potential privacy issues and abuses and their potential impact, and then we can hope to address them accurately. So I'll try to lay out the land in terms of the major privacy issues here. By privacy here I want to focus on

personal data: leakage of personal data and sensitive data. That's privacy in the context of what I'm going to speak about today. These are general attacks that I'm going to talk about, known in industry and sometimes spearheaded by academia. The first setting I want to talk about is black-box access to an LLM. That's the most common way of interacting, through prompts, and that's the way most people use these tools: ChatGPT, Gemini, Anthropic, and so on, and even all the applications that are being built on top of them. One of the main threats that comes up is that of

memorization, which means that the large language model was trained on certain sensitive data, has exactly memorized that data, and then, even worse, outputs it. That's the major threat, and we have seen it: just last year there was a paper where researchers picked a word, "poem" was the word, and simply asked ChatGPT to repeat that word forever in a loop, and eventually it spat out some PII. So, kind of like a buffer overflow? Yeah, kind

of, and I mean, who would have thought something like this would expose PII? So that's the other thing: we are very nascent in our understanding of what can be revealed. So one of the biggest things is the reveal of sensitive data that a model was trained on. The other thing is hallucinations, the LLM responding in an inaccurate fashion. That can be a privacy threat because it can say inaccurate things about people. And again, we are just seeing a lawsuit around this where, in the context of GDPR, people do want the ability to correct their

erroneous data, which right now is not fully supported. So this is really interesting. I always thought hallucinations are an accuracy problem, and that they need to first solve the accuracy problem before even thinking about security and privacy. Apoorvaa brought up a really good point: with this inaccurate information you can actually have a privacy lawsuit, because people don't like to see inaccurate private data being leaked. Is that the case, Apoorvaa? Can you shed a little bit more light? Yeah, so that's the high-level context. If something is associated with your name in a digital forum, then that would be personal data. Whatever

digital data is associated with you, you want it to be accurate, so that falls under your personal data, right? Mhm. So that's the general picture. With a more motivated attacker, there are what are known as membership inference attacks, where you can infer whether a specific person's data was in the training samples. This is especially harmful for medical and health data: imagine a model trained on data from people who have cancer or another illness, so that's particularly sensitive. She brought up a really good point. The way an inference attack works in the medical world is this: let's say your genetic information is

completely anonymized: somebody has removed the patient name, identifiers, zip code, and whatnot. But just by looking at certain DNA markers, one can trace it back to not only that patient but the entire family, and this has happened in the FBI world. So thank you, Apoorvaa, for sharing that example. Yeah, and then I'll just wrap up: if an attacker has access to actually influencing the training data, then they can do further harm, poison the training data, and so on. And as I briefly mentioned in the context of some of the regulations, what is top of mind in terms of threats is not knowing

where your training data is sourced from, and not having appropriate consent and deletion mechanisms. So these are some high-level threats in the space, I would say. Thank you, Apoorvaa, for talking about hallucinations, prompt injections, and some of the privacy violations related to regulations. Raji, is there anything you would like to add that Apoorvaa didn't cover? Yeah. I would like to know how many people remember the not-so-pleasant Taylor Swift video which came up in January 2024. What would you call it? Is it a privacy incident? Is it a security incident? Maybe that's a good question; there are a lot of not-so-pleasant videos. Do you say

it's a security incident? A privacy incident? Maybe safety? This is what I'm talking about: that world is actually combining, and it's becoming really blurry. At the end of the day it's an ethical violation, and these kinds of things happen because of deepfake technologies. What happens with deepfake technology is similar to hallucination: you start to create bias and exacerbate bias through these different technologies. The biases are either present in the training data itself, or sometimes it's the creator adding them in to produce that kind of content. Nandita, do you have anything to add? Sure, I

think Apoorvaa covered most of the attacks that I was thinking about. Just from a taxonomy perspective, there could be a lot of different privacy attacks, but one easy way to remember them is to think about which phase the attack happens in. It can happen in the training phase, which covers all types of poisoning attacks, where training data is poisoned. I read some recent research saying that even if 0.001% of the training data is poisoned, it can lead to a privacy harm. And this could be something very easy to do: you understand what the data sources are, you find some expired web

domains, and you poison the data, which could lead to significant privacy harms. Then, when you think about the deployment phase, attacks there could be understood as inference-type attacks, where you are jailbreaking the existing controls that have been built in. For example, suppose a control has been built in that says the model is a helpful medical assistant who is responsible for gracefully and safely disclosing information. You jailbreak it, you remove the word "graceful", you remove the word "helpful", and then you pretty much get an adverse output. Similarly,

you could think about all the possible attacks in terms of what the attacker has access to. If the attacker has access to training data, then all sorts of poisoning attacks; if the attacker has access to the query, then all sorts of prompt injection, prompt extraction, and model stealing; if the attacker has access to resources, then indirect prompt injection attacks. NIST did a very good paper defining this taxonomy as well as laying out specific strategies to address each of these adversarial privacy attacks, with mitigations. I do want to say that these attacks may be novel, but the harms are what we have always known

about: they are the traditional privacy harms. A lot of the privacy folks in the room might be familiar with the Solove taxonomy. And this is where I get to ask the audience questions. So, in terms of GenAI, and feel free to be creative, what could be some of the reputational harms? Any answers? [inaudible audience answer] Yeah, you could have inaccurate information about some celebrity, and that could cause reputational harm. What about autonomy harms, or manipulation? Mhm. [partly inaudible audience answer about something horrible appearing on someone's social media] Yeah, all these Instagram AI models, you don't really know. This also leads to this

place where you don't really know what is AI-generated anymore, so people have this sense of loss of control; you feel helpless. Similarly, chilling effects: individuals know that their data is being used to train models, so it can lead to people not expressing their views and not writing blog posts anymore. We see a lot of people on LinkedIn not contributing to those collective articles. You know, ever since I found out 10 or 15 years back that our personal data is being used on social media for targeted attacks or targeted advertising, it kind of shut me down. So I agree with you.

You know, I'm being very careful to express just my professional opinion about security and privacy, but all the rest, the controversial stuff, I keep to myself because I don't want to have a bad online social image, right? "She's too left-wing" or "right-wing"; I don't want to get into any controversy. So thank you so much. One point here is the interaction between privacy and decision making. Privacy violations on their own also feed into decision making by AI eventually, and that can be very discriminatory. Someone may not want to share their data, but that data is being shared, and that data is being used to make some

decisions against them. So I think that kind of discrimination, and the interaction between different principles of responsible AI, is so important at the moment when we're talking about generative AI, and AI being used more and more to make decisions for people. Yeah, Mohammad, you have made a very good point, and I want to move towards the second question. We just heard from our esteemed panelists about prompt injection, data leakage, and data protection issues, as well as the ethical and safety issues. And Nandita covered a lot of good points: we don't have to look at GenAI as a whole

and come up with just black-box attacks; we have to take a look at the entire LLMOps life cycle and see what kinds of attacks are possible throughout it. And then Mohammad brought up really good points on bias and unconscious bias. So I want to ask, maybe Nandita, now that we know all the privacy attacks, please enlighten us about some of the mitigation strategies, probably throughout the life cycle. When we talk about solutions, unfortunately I think some of the solutions need to come from regulations, because I feel that in the world we are in, a lot of companies won't do anything unless there is a regulation that says

so. Taking a look at the audience here, how many of y'all have gone engagement ring shopping, or have bought diamonds before? Okay, some here. Wow. There is no way to tell the difference between lab-grown diamonds and natural diamonds. Yeah. Lab-grown diamonds came into existence in the 1950s, and the certification to identify something as a lab-grown diamond, the regulation or the certification body, didn't come in until the early 2000s. So for 50 years it was the wild, wild west: you could sell lab-grown diamonds as natural diamonds at marked-up prices. Mhm. I think that's what's happening for

GenAI, and it will continue unless there is regulation that has very clear guidelines: content needs to be labeled, there has to be enough transparency, it needs to be explainable, and there are clear deadlines for when these regulations come about. We have seen it across the pond: in the EU AI Act there are some requirements for transparency and accountability, and there is an AI Liability Directive that's coming up. Things are happening, it's being set in motion, but it's still not clearly defined, and it's not in effect yet with clear deadlines. So unless that happens, I don't think a lot of change will happen in reality. That's just my pessimistic view of things. No, I think that's a very realistic view.

I don't think there is any pessimism here. So, Nandita, another follow-up question, and then I want to move to Mohammad. We saw the whole rise of GDPR in 2017 and 2018, and then companies started getting GDPR fines, and then we saw a lot of different standards and regulatory frameworks in different countries as well as here in the United States, where each state started having its own privacy framework, pretty much inspired by GDPR; we can clearly draw the parallel. Is the same thing happening in the GenAI world as well with the EU AI Act? So, and I'm not a lawyer, but I

work very closely with lawyers, so if I have not accurately represented something, that's on me. The way the EU AI Act defines an AI system includes generative AI very specifically, so it applies to generative AI. In general, GDPR also has basic principles of accountability, privacy by design, and transparency that should apply to this. It's technology-agnostic, so it applies to every type of technology. Baseline training and awareness, baseline privacy assessments and best practices, building a culture of privacy: those need to be in place irrespective of what specific technology we're talking about. Yeah, makes sense. So thank you, Nandita. Mohammad, Nandita talked about the importance of following the regulations and the government standards bodies, learning

from them and then enforcing them as we work in industry. You have an interesting mix of academia as well as industry, so what do you think: should we rely on these frameworks, or can we get a little bit more creative? Just as a point, NIST recently released a generative AI initial draft, just a couple of days ago, so if you're interested you can have a look. I think these frameworks are great. Most of them are built, I feel, for companies that are building these foundational models, so very specifically for, for example, OpenAI or Microsoft, and not as specifically for particular industries or companies. So I think they're really

good to get inspiration from, but how you eventually adopt them and use them in your company or in your specific use case, I think that's where it gets very difficult, because you need to think about all the nuances. Generative AI is non-deterministic, so it gets very difficult to understand how these frameworks and regulations can be applied in specific use cases. That is totally understandable, because these are built for general purposes and not necessarily for specific use cases, but it takes a lot of research and development to get to the end point and eventually implement them in the company. Cool. So the way I interpret

this is that we need to be at the front line, work with actual consumers and operators, and see how we can translate these industry-standard guidelines dictated by frameworks and regulations into practice; bringing theory into practice, basically. So thank you, Mohammad. Apoorvaa, what about you? What does your experience say about this? Yeah, I'll talk a little bit about what technical solutions exist as of now for addressing some of the threats we mentioned. While I agree with Nandita partially on having the right regulations to keep us on track, I also feel that today a lot of companies are trying to do the

right thing, and it's not necessarily just out of a big heart or something; it's also something users and customers are demanding more and more. So that's the other aspect: privacy is top of mind for customers and users, companies do understand that, and I see it taken seriously as part of engineering designs and implementing products. So, for example, as we were talking about the different steps in the AI life cycle, what solutions can exist in each phase? First is your training data, or

data hygiene. To avoid some of the memorization or sensitive data leakage, make sure that your training data doesn't have any sensitive data. Of course there's some nuance here, but at least there are ways to filter out basic PII and sensitive data, so that's low-hanging fruit that you should absolutely do. On top of that, you can use differential privacy for your training, or use synthetic data. And even on top of that you can still have test suites, because none of these techniques is 100% guaranteed, so you still need to have some testing on top

of that to see where the gaps are. Then, on the inference side, there are new technologies coming up. Basically the problem there is keeping the inference cycle isolated, whether for a specific customer or when users request it, for example. There are actually cryptographic solutions there: using fully homomorphic encryption you can run the entire inference flow in an encrypted way. While that is not practical yet, and is maybe a north star for us right now, there is confidential compute, which offers some solution in that regime. Let me ask the audience here: how many of

you are aware of fully homomorphic encryption? Wow, that's like half of the crowd. I'm impressed, guys. So tell us a little bit more, Apoorvaa: why is it not ready for industry-wide adoption in terms of GenAI? GenAI is already very resource-intensive, right? So tell us a little bit more. Yeah, and just so that we are all on the same page: fully homomorphic encryption is a kind of encryption where you can operate and compute on the ciphertext. Basically you start with an encryption of x and you output an encryption of f(x). So in this case you would give your prompt as x, and your output is f(x), and

that's what we want in the fully homomorphic encryption regime. As we know, an LLM has billions of parameters, and imagine having to do that entire inference in an encrypted fashion; that already is a big blow-up. Right now, I think, there's a startup I know, miil, which works on this, and there are a couple more. So right now they have been able to get the inference down to hours; it's not yet in minutes, but they're working at the hardware level, so we could see some great things in the next few years. Well, let's keep our fingers crossed and let's hope this technology becomes

functional and can serve latency-sensitive applications and whatnot. Now I want to move the audience's attention to a totally different segment. Just the way 12 or 15 years back we did not have data privacy officer or privacy engineer types of jobs, but we have them now, similarly I'm hoping to see a chief ethical AI officer, right? We are already seeing ML security officers. So I really want to know from Mohammad, and later on Raji: Mohammad, what exactly is responsible AI, and how do you even define ethics here? Because ethics is such a subjective term; something that is ethical for this country may not be

ethical for that country, right? So when you join an organization, how do you really set up something like this, a responsible AI program? Yeah, that's a very good question. It's very much like privacy: it's very contextual, and you can't really define it very objectively and say what it means to have ethical AI in California, or in a different state or a different country. I see that as a very cross-functional kind of team, and it's very important to have diversity in these teams, bringing different perspectives to the table, making sure that different people are bringing their perspectives along different axes, let's say different ages,

different ethnicities, different functional areas, making sure these are all included when we're building that responsible AI program. And I can see in the future having chief AI officers more and more to enable that kind of cross-functional team within companies, and also having a chief ethics officer, or chief responsible AI officer, or office of responsible AI; there are different names for this, to enable that kind of functionality within companies. Those teams are going to bring together different teams, from privacy, from UX, from design, trying to have all the different perspectives, and eventually they can decide together what it means to do ethics for that specific

product or for that specific company. I don't think there is a silver-bullet solution that we can just propose to everyone and everyone can follow. So you're saying there is no government or regulatory framework that defines ethics for us? I'm not sure, but yeah, I can see having these really general policies coming in the future, like what Nandita was saying. But as I said before, I don't think they are going to be very specific to industries or to products. I think at the end of the day there are so many

trade-offs and balances that need to be made by discussion and talking to different people. So maybe, audience, if you're bored of doing security and privacy engineering and need newer challenges, you can consider these newer roles, right? Variety is the spice of life, right? We have to keep things spicy and interesting. We discussed quite a bit about the proactive approaches: we learned about the privacy attacks and violations, Nandita was nice enough to explain to us how we have to worry about different attacks during different life cycle phases, and Apoorvaa and Mohammad gave us a really nice perspective on how these can be avoided. I want to ask Raji:

this is the proactive world, but anything can be the weakest link in security, just anything, and we live and breathe in the world of security events, security incidents, and security breaches. So if something like that happens in the world of GenAI, what are your thoughts on combating it? It's a great question. I think everybody should always have a plan for not if, but when, a breach or security incident will happen, right? And then again, we talked about how security, privacy, and safety are all coming together, so it's very difficult to say this is specifically a security incident or specifically a privacy incident. So we need to think differently here. The way I think about

GenAI, and I'll just throw this out here, credit goes to Mustafa Suleyman, who is the Microsoft AI CEO, who basically called GenAI a digital species. That is what we are bringing into this world; he said AI is a digital species. So with that in mind, and given that it's non-deterministic, you need to approach GenAI in two aspects. One is as software, and I think we all know about software and how we manage attacks in software. But there's another aspect, GenAI as a person, right? You give it a question, it gives you an answer. So

you really have to think about all the things we did when we had security education and privacy education, and try to do that with the AI model, basically saying, "Hey, AI model, now you are a security expert," so then the kind of conversation you have with that model is going to be a lot different. So it's kind of like bringing that conversational aspect of human nature to how you plan for incidents, obviously keeping in mind that you have detection strategies in place and that you're also thinking about managing the incident and responding, but also really taking those lessons learned and trying to bring them back. Because the thing with generative AI is that

these uh the it's not about prevention anymore it is truly mitigation cuz you never know and it's defense in depth you know I feel like security and privacy we kept saying defense in depth all our lives right but now it's truly defense in depth because it's it's about trying to avoid this attack and this attack and this attack because you don't know how the model is going to respond and and we talked we all throughout the panel we talked about all these different types of attacks and mitigation but all of you should remember it is not stop we are not preventing yet we are only mitigating at this point makes sense so we have about

like five six minutes left and I want to open the floor uh for the audience to ask us questions yes sir okay um I know a lot of companies are trying to use AI generative AI to do things better to but there's a risk that there's always something new simply stabilize and say using this LLM these agents or whatever and not chase the new thing because when you do that you increase risk so was curious the panel's thought on not if that's important how important it is thoughts on how to Res it I can take I can take that so um the question really is um companies are taking I mean adopting AI for making themselves better in certain

things right and but should they because we and because a lot of new things happening in the gen AI space do we really need to wait I mean should what's the risk introduced when you jump into the next new technology that's kind of what is asked well I'm the way I'm going to answer this is um I think it's really important to think about this complexity of humanity and the data right so what we need to do is really think about the context of how this application can be used and also think about the way the application can be misused so kind of keeping that framework in mind when you're trying to go and adopt a new AI

technology is really important and also really understanding right a lot of times when you think about oh the risk prior to gen AI kind of being in the picture it used to be like oh it's not somebody's life is not at risk but right now it is somebody's life can be at risk so that is the huge difference so it's the risk is higher um anybody else want to comment or or maybe we can take the second question um are you happy with did that answer your question and and folks if we are not able to answer your question because of the time limits we will be available to all of you just outside this and feel free to connect

with us on LinkedIn and we are more than happy to share our insights and learning so the one question I'm going to take from online is uh maybe this is something uh Nandita and Muhammad can tell us given that the majority of organizations are not building their own LLM-based models how do we manage privacy violations within a base model great question Tark take it oh yeah I knew this was going to come in general and um I can speak from experience um I without understanding like without going very deep detail into like the the rubric of how to evaluate risk and what um what the measures are how how do you evaluate a certain uh harm or a

consequence I would say like starting with a very broad framework like the NIST AI Risk Management Framework is probably a good idea because they are general enough and it's not specific to privacy which is like one myth which a lot of people think about it includes a lot of security um transparency explainability um safety everything else that you can think of so so using a general framework is probably a good idea because there are so many unknowns at this point so when you're evaluating gen AI models using a high level framework like that may be good enough and this is always going to be an iterative process you don't know what you don't know so

maybe you come back and then your your thresholds change next year all right so folks we have less than 2 minutes uh remaining and I want to create a little bit of thrilling moment for our panelists so in less than 20 seconds please tell each one of you and we'll start with apura what's the next thing we should do must do in the direction of responsible AI yeah uh I I would say one thing to focus on would be your data hygiene that would be my uh call to action uh for everyone plan in fact I would go ahead and say plan for not using raw data at all uh for your training so kind of and that

might come up in uh uh regulations or not irrespective that's something to invest in that would be my call to action think of how uh you want to sanitize your data how you want to use privacy preserving methods and how you want to you know have your testing red teaming around it perfect so apura is all about data hygiene PETs and red teaming what about you Muhammad I'm all into diversity and having teams of uh with like diverse perspectives having people with yeah just bringing that in and making sure we are not working in silos perfect Nandita I think the first step is to get executive buy-in to have a responsible AI program nothing can

work without getting the leadership involved so so it's the next step is to start there well said Raji I want to leave you all with a word data is radioactive gold so really think about it as and credit goes to a asasp for that but uh really think about that so it's it's gold because that's going to solve a lot of our problems right marketing security privacy everything data is going to solve it for us but it is radioactive it's going to come and bite you so make sure that you really understand how you use it thank you Raji thank you very much everybody on this esteemed panel apura Muhammad Nandita Raji and thank you my dear audience you

really made this session super special so let's have a big round of applause for everybody thank you thank you all