Leveraging LLMs for Advanced AI Applications

Good morning, everyone. I'm Satya, and I'm honored to be a speaker at the Security BSides Athens 2024 conference. A quick introduction about me: I'm a senior engineer at Amazon in the Brand Protection organization. In my role, my team and I are dedicated to protecting the integrity of our website by monitoring and preventing infringements and counterfeits from being listed on Amazon retail websites. We focus on preventing the misuse of brands' intellectual property, ensuring that our customers can shop with confidence, knowing that they are receiving genuine products. In building these systems, we leverage multiple LLMs, including multimodal LLMs, to accomplish this goal. Today I am excited to share insights on leveraging LLMs for advanced AI applications. This topic is particularly

relevant for my work at Amazon, and specifically in the Brand Protection organization, as these models play a crucial role in maintaining the integrity of our platform. Let's take a quick look at the agenda for today's presentation. We have a lot of ground to cover. I will start with an introduction to large language models. This section will provide a foundational understanding of what LLMs are, their significance in the field of AI, and why they have become so prominent in recent years. Then we'll look at the LLM architecture a little bit and explore how these models are built, the underlying technologies that power them, and the key

components that make them effective at processing and performing tasks. Then we'll also look at the methods for leveraging LLMs. I'll discuss how to use LLMs effectively by leveraging APIs, playgrounds, and, in some cases, deploying these models for production use cases. I'll also cover how to customize LLMs to meet your specific needs with RAG and by fine-tuning the model, and how to deploy these customized versions. I'll also touch upon how to create and use effective prompts to get the best results from LLMs. Then we will look at the constraints on using LLMs. Let's take a closer look at what LLMs are and their key components, capabilities, and applications across industries. Large language models are

advanced AI models trained on extensive data sets to understand and generate humanlike language. These models are designed to perform a wide range of language-related tasks, making them incredibly versatile and powerful tools in the field of AI. The key components of LLMs are the Transformer architecture, pre-trained parameters, and fine-tuning. At the heart of LLMs is the Transformer architecture. This architecture allows the model to handle long-range dependencies in text, making it possible to generate coherent and contextually relevant responses. Pre-trained parameters: LLMs usually come with millions, billions, and sometimes trillions of pre-trained parameters. These parameters are learned from vast amounts of text data, enabling the model to understand language nuances and

context. Claude Opus is rumored to have around 2 trillion pre-trained parameters. Generally, the more parameters a model has, the better it is at understanding the

language. Now let's dive a little deeper into the Transformer architecture. The Transformer architecture is a game changer in the field of language models because it overcomes the limitations of previous models like recurrent neural networks (RNNs) and long short-term memory (LSTM) networks. RNNs often forget important information from earlier in a sequence because they process words one by one, which can make them slow and less accurate for long texts. LSTMs help by trying to remember better, but they are still slow because they also handle words one at a time. The Transformer architecture overcomes these limitations, and it was first introduced in the paper called "Attention Is All You Need" by Vaswani and others. The key components of

Transformers are the self-attention mechanism, positional encoding, feed-forward neural networks, the encoder and decoder, multi-head attention, and layer normalization. Here is the Transformer architecture picture from the paper that was published by Vaswani and others in 2017. This part is the encoder and this part is the decoder. Think of the self-attention mechanism as a way for the model to look at all the words in a sequence and decide which ones are most important when figuring out what each word means. For example, in the sentence "the cat sat on the mat," the word "cat" might pay more attention to the words "sat" and "mat" because they are closely related. Then, coming to the

positional encoding: since Transformers do not inherently understand the order of the words in a sequence, positional encoding is used to provide information about the position of each word in the sequence. The next part is the feed-forward neural networks. After applying self-attention and positional encoding, the processed tokens are passed through feed-forward neural networks within each layer of the Transformer. These feed-forward neural networks consist of multiple fully connected layers with nonlinear activation functions, enabling the model to learn complex patterns and representations from the input data. Now the encoder and decoder, which are represented here. The encoder processes the input sequence while the decoder

generates the output sequence. Each encoder and decoder layer contains self-attention and feed-forward neural network sublayers, along with additional normalization and residual connections. One other important part of the Transformer architecture is multi-head attention. The multi-head attention feature allows the model to focus on different parts of the sentence at the same time, helping it to understand the text better. For example, in a translation task, one head might focus on nouns while another focuses on verbs. Multi-head attention is used to enhance the learning capacity and capture different types of information. Then come layer normalization and residual connections. These help stabilize training and ensure the model learns

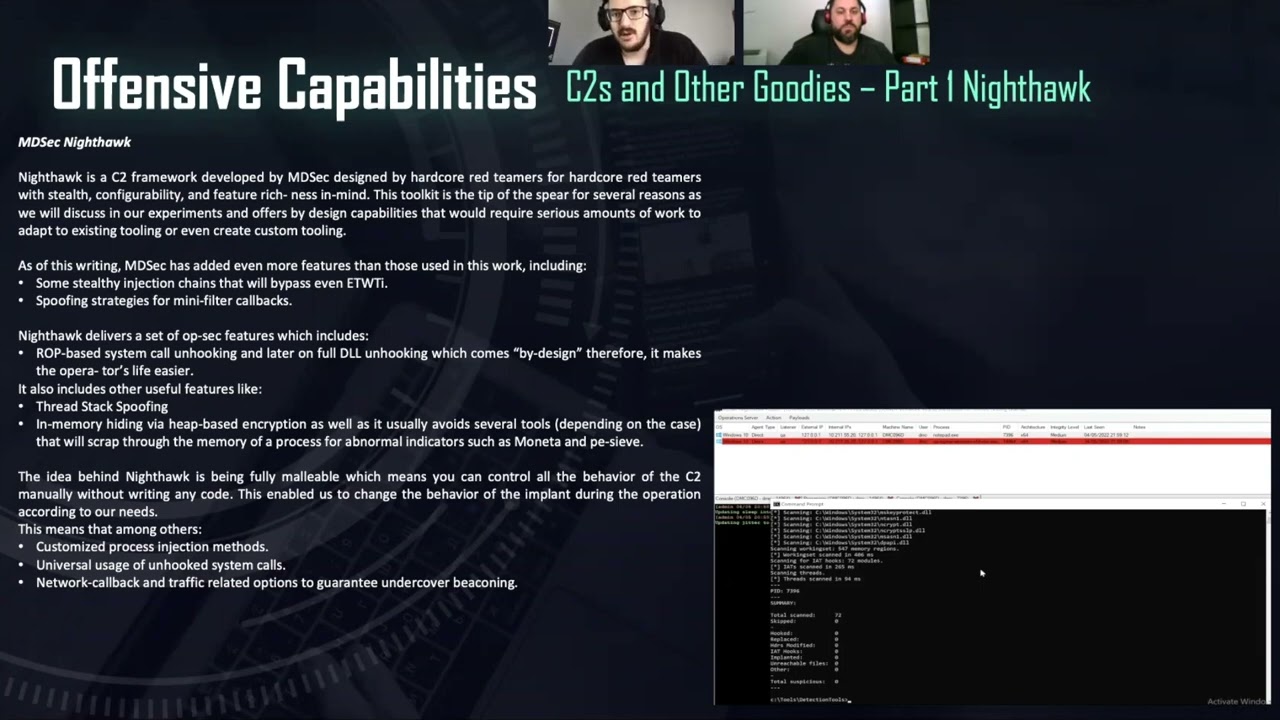

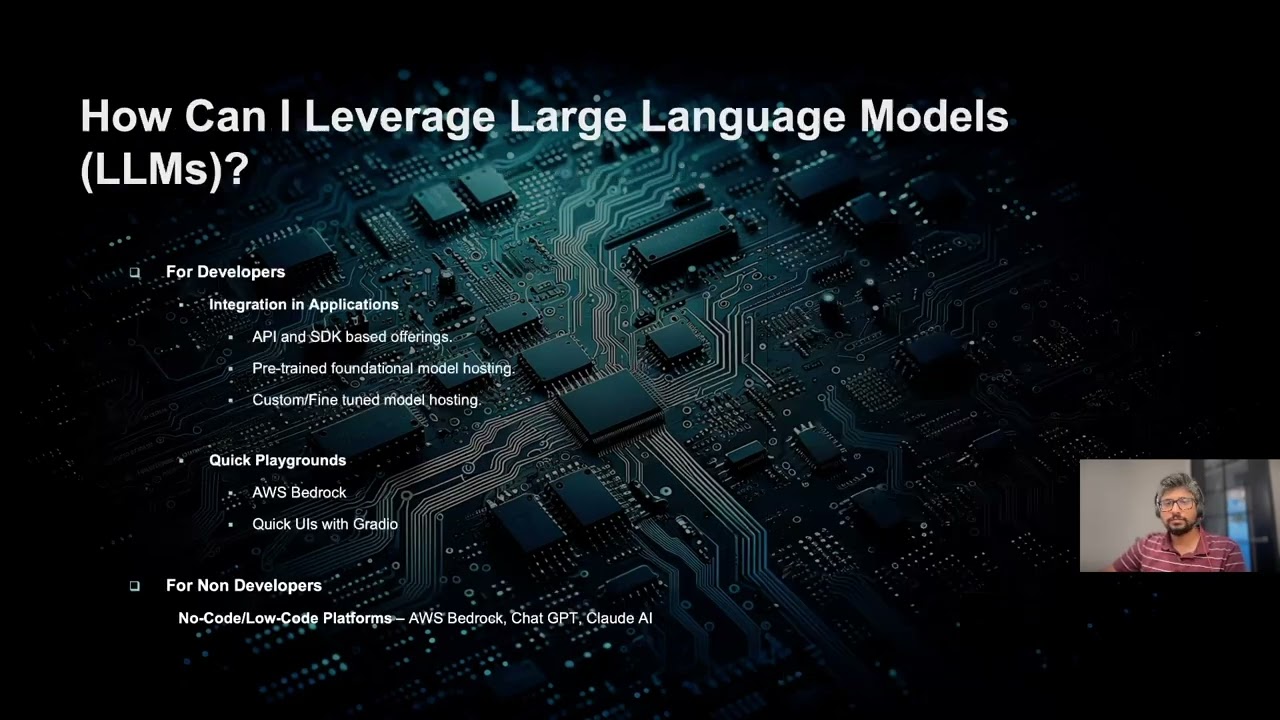

efficiently by allowing information to flow smoothly between the layers. Now, with this understanding of the Transformer architecture, let's see how we can leverage large language models in our day-to-day work or in our application-building process. For developers, integration into applications is one of the ways you will leverage large language models. There are multiple ways of integrating large language models into your applications, ranging from easy to somewhat difficult. One of the easier ways of integration, and we will look more into the specifics in the upcoming slides, is API- and SDK-based offerings. You call an API and you get the response. You don't have to host a model yourself.

There are APIs and there are playgrounds to achieve the functionality you need. Then, for production use cases or for high-throughput use cases, you traditionally want to deploy the model and maintain the model yourself, so you have greater control over the fine-tuning process and so on. There are a few options where you can deploy the model onto your machines, and we'll see those. We will also see how you can deploy a fine-tuned model with your custom inference code. Then there are playgrounds, which will help you in running quick checks or understanding how the model

performs or what the expected outcome of a particular model is. Some of the playgrounds that we have today are ChatGPT, which everyone knows, and AWS Bedrock, offered by AWS, which has playgrounds for multiple foundation models like Mistral, Claude, and Llama by Meta. You can also build quick UIs with Gradio with a lot less code, and that helps you in experimenting with these large language models. For non-developers there are the playgrounds, and with a little coding background you can probably come up with quick UIs with Gradio, or you can use Bedrock, ChatGPT, and Claude.ai. Now look at the first way of using

LLMs: via API integration. Here is a way to interact with Anthropic's Claude v2 model using the AWS Bedrock APIs. It's simple enough code that you can integrate into your applications if they are built on Python. There is an equivalent Java SDK to invoke the model and get inference. You initialize a Bedrock runtime client using the boto3 Python library, provide the prompt, give the model ID and the content type in which you want to see your response, and then invoke the model. This is a straightforward way to get the response from the model without having to host the model yourself in

your infrastructure. Some of the model types that are supported on Bedrock today: for text-based tasks like text classification or text understanding, you have Jurassic-2, Titan Text, Claude v2, which is the popular one, Claude Opus, Claude Sonnet, and so on from Anthropic, Command R and R+ from Cohere, Llama 2 and 3 from Meta, and Mistral, which is another popular one, from Mistral AI. For image generation, which is technically not LLMs but falls under the umbrella of generative AI in general, there is Titan Image Generator and Stability AI's Stable Diffusion, where you can give text-based prompts to generate images. For the multimodal LLMs, where you

have images as well as text or queries as part of your prompts, you have Claude 3 Haiku and Sonnet from Anthropic. These are hosted on AWS infrastructure. You don't have to host them yourself. All you need to do is invoke the model using the model ID after creating the client using their SDKs. There are embedding models as well, for similarity-search kinds of use cases, like Embed by Cohere and Titan by Amazon. If you want to experiment, there are chat playgrounds as well. Here is one of the Bedrock chat

playgrounds for Claude. All you have to do is enter your query, ask some relevant questions, and then hit Run. They charge you by the number of tokens that they process. So it's basically a playground to experiment with large language models and see if a particular large language model fits your use case. Here is one of the playgrounds for Anthropic.
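The Bedrock API call described a little earlier (create a Bedrock runtime client with boto3, pass the prompt, model ID, and content type, then invoke the model) might look roughly like the sketch below. It is not the code from the slide. The helper names, the `us-east-1` region, and the `max_tokens_to_sample` value are assumptions for illustration, and the request body follows the Claude v2 text-completion format.

```python
import json

MODEL_ID = "anthropic.claude-v2"  # Bedrock model ID for Claude v2


def build_request(prompt: str, max_tokens: int = 300) -> dict:
    """Build the keyword arguments for the Bedrock runtime invoke_model call."""
    body = {
        # Claude v2's text-completion format expects Human/Assistant turns.
        "prompt": f"\n\nHuman: {prompt}\n\nAssistant:",
        "max_tokens_to_sample": max_tokens,  # assumed limit, tune as needed
    }
    return {
        "modelId": MODEL_ID,
        "contentType": "application/json",
        "accept": "application/json",
        "body": json.dumps(body),
    }


def ask_claude(client, prompt: str) -> str:
    """Invoke the model through the given client and return the completion text."""
    response = client.invoke_model(**build_request(prompt))
    payload = json.loads(response["body"].read())
    return payload["completion"]


def make_client(region: str = "us-east-1"):
    """Create a Bedrock runtime client (requires boto3 and AWS credentials)."""
    import boto3  # imported lazily so the helpers above work without boto3

    return boto3.client("bedrock-runtime", region_name=region)
```

With credentials and model access configured, `ask_claude(make_client(), "What is a Transformer?")` would return the model's answer as a string. No model is hosted on your side.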

Then here, one interesting thing about this playground example is that you can provide a document, or the content of a document, to the Anthropic model and format your query in such a way that it gives you a response in the format that you have asked for. We'll go more into some of the prompt engineering techniques, where you will get a good idea of how these prompts should be constructed to get more effective responses from the LLMs. Hugging Face is another popular model hub where you can run your inferences or do experiments before actually deploying the model onto your

infrastructure. We'll go through some examples of how you can use Hugging Face shortly. Here is a quick demo of Hugging Face. There is a model hub in Hugging Face where you can pick some of the models. There are quite a few models registered in Hugging Face, but let's pick Llama 3. You can train this model, or you can deploy this model to SageMaker or one of the popular cloud service providers, or you can directly use Hugging Face to deploy it. Hugging Face can provide a dedicated endpoint to you by hosting

the model in one of these cloud service providers. Mistral Instruct, yep, so the Mistral 7-billion-parameter model. For a quick playground kind of experience, you can do it this way. You can ask your questions, like "What is Hugging Face?", as part of quick experimentation, and it gives you the responses, so you can try it out. There is an Inference API, which is serverless. You can create your own API keys and use this code to call any model that is supported by Hugging Face. You can manage your tokens. These are the tokens that I use. You can create a new account and have a set of new tokens

that get generated. You'll be charged based on the token ID and the number of requests you make. However, the serverless Inference API, I believe, is free. You don't have to pay for it, but it is not recommended for production use cases because they don't guarantee availability. But this can be used for quick experimentation of sorts. Now, coming back to the slides, here is one more model on Hugging Face, which segments the image and identifies everything that is present in the table image. This is the serverless inference request that we have seen in

the demo. This is used for prototyping but not for production use cases. For production use cases, you can directly host the model. This is one more thing that we have seen, where you can deploy the model on a dedicated endpoint backed by SageMaker, AWS, Azure, or Google Cloud. And if you want to deploy the model yourself into your own account on the AWS SageMaker service, here is sample code that you can use in a Jupyter notebook to deploy the model directly into your

SageMaker service. All it needs is the Hugging Face model ID. In the model hub you will have access to the Hugging Face model ID. Then you name the task that you want to perform, and then the instance type on which you want to load the model. It creates an endpoint, and you will be able to invoke the endpoint with any SageMaker runtime client. Similarly, there are ways to deploy the models into Azure and Google Cloud. You have the code for deploying this particular model into Azure or Google Cloud listed in the

Hugging Face documentation or under this Deploy button. This is one more thing that we have looked at already as part of the demo. This is for deploying Microsoft's Phi-3 mini onto a CPU. You have a way to compare cost across multiple cloud service providers and pick a particular instance. You can also set up autoscaling to better account for the cost. There is another popular solution for hosting models, AWS SageMaker Studio, where you have a bunch of foundation models, and at the click of a button you will be able to deploy them into

your own SageMaker AWS personal accounts or service accounts.
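The notebook-style deployment of a Hugging Face Hub model to SageMaker mentioned earlier (model ID, task, instance type, then an endpoint you can invoke) could be sketched as follows using the SageMaker Python SDK. This is an illustrative sketch rather than the code shown in the talk. The helper names, the example model ID, the instance type, and the framework version combination are all assumptions, so check them against the currently supported Hugging Face containers before use.

```python
def hub_env(model_id: str, task: str) -> dict:
    """Environment variables that tell the Hugging Face container what to load."""
    return {"HF_MODEL_ID": model_id, "HF_TASK": task}


def deploy_from_hub(model_id: str, task: str, instance_type: str = "ml.m5.xlarge"):
    """Create a SageMaker endpoint for a Hub model and return its predictor.

    Needs the `sagemaker` package and an execution role, for example when
    run inside a SageMaker notebook. Deploying creates billable resources.
    """
    import sagemaker
    from sagemaker.huggingface import HuggingFaceModel

    role = sagemaker.get_execution_role()
    model = HuggingFaceModel(
        env=hub_env(model_id, task),
        role=role,
        # Assumed version combination; it must match an available container.
        transformers_version="4.26.0",
        pytorch_version="1.13.1",
        py_version="py39",
    )
    return model.deploy(initial_instance_count=1, instance_type=instance_type)
```

A call such as `predictor = deploy_from_hub("distilbert-base-uncased-distilled-squad", "question-answering")` followed by `predictor.predict({"inputs": {"question": "...", "context": "..."}})` would exercise the endpoint. Calling `predictor.delete_endpoint()` afterwards avoids ongoing charges.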

If you have a fine-tuned instance of a foundation model, then you have multiple ways of deploying it. For some of the foundation models, AWS Bedrock will allow you to fine-tune them by providing UIs that are targeted at supplying your fine-tuning data set. Do check it out. They offer fine-tuning for Llama, Titan, and Command models. You can import your custom model into Bedrock as well, but currently that is supported only for Mistral, Flan-T5, and Llama architectures. You can also build a synchronous or asynchronous endpoint on SageMaker via the SageMaker SDKs. If you have your model artifacts, you should

put your model artifacts in a tarball and then have this inference code, which can load the model artifacts, create a model, and then run the inference using this piece of code. This is one more easy way to deploy models and customize your inference code to handle a small amount of customization based on your needs. Now we will go into the limitations of standalone LLMs and why we need some other techniques to address those limitations. First, LLMs can produce content that is inaccurate or completely fabricated, known as

hallucinations. This can be problematic, especially in applications requiring precise and reliable information. Secondly, LLMs struggle with providing up-to-date information because they are trained on data available up to a certain cutoff point. Any developments or changes that occur after that point won't be reflected in their responses. Another challenge is that general-purpose LLMs often have difficulty handling domain-specific queries effectively. They might not have the specialized knowledge needed for specific industries or fields without further customization. Limited contextual understanding is also a concern. LLMs may not grasp the full context of complex queries or conversations, leading to responses that are off target or incomplete. Ethical and bias issues are significant as well. These models can

sometimes produce biased or ethically questionable outputs, reflecting biases present in the training data. Lastly, fine-tuning large LLMs to improve their performance for specific tasks requires substantial computational resources, which can be costly and time-consuming. To address some of the limitations that I discussed in the previous slide, we use a system called RAG. RAG, also known as retrieval-augmented generation, is an advanced AI approach that combines the strengths of retrieval systems with generative models. It aims to enhance the capabilities of LLMs by grounding generated responses in factual information retrieved from knowledge bases. How RAG works: RAG has two components. The retrieval component is responsible for fetching relevant documents or pieces of information from

a predefined knowledge base based on the user's query. Techniques such as keyword matching, semantic search, or vector-based retrieval ensure that accurate and contextually relevant information is retrieved. The second component is the generative component. The generative model, typically an LLM, uses the retrieved information to generate coherent and contextually enriched responses. This integration allows the LLM to provide answers that are not only fluent and contextually relevant but also grounded in factual data from the knowledge base. There are significant benefits of using RAG. First is improved accuracy. By incorporating retrieved factual information, RAG significantly reduces the likelihood of generating incorrect or irrelevant content. This grounding in up-to-date, verified information enhances the overall reliability of the responses. Second, enhanced contextual

relevance. The retrieval component ensures that the responses are highly relevant to the user's query, leveraging specific information from the knowledge base. The third one is overcoming the knowledge cutoff. The retrieval system can access the most current information, addressing the limitation of LLMs' knowledge cutoff dates. This ensures that the responses are based on the latest available data, maintaining relevance over time. Other benefits: reduced hallucination. The risk of generating hallucinated or nonsensical responses is minimized, as the generative model relies on actual retrieved information. With respect to scalability and efficiency, RAG enables scalable solutions by dynamically retrieving relevant information, reducing the need for frequent retraining of generative

models. Now let's look into the implementation steps for RAG and how we can plug the RAG system into an LLM. The first step is to select a knowledge base. The knowledge base could be your internal company database. You just have to ensure that the knowledge base is comprehensive, up to date, and well maintained. The second step is data preparation. You have to clean up and pre-process all the data in the knowledge base to ensure consistency and quality. You can host the data in various solutions offered by cloud providers, or you can have your own custom database to host this knowledge base. Some of the popular ones are AWS

OpenSearch, and Pinecone is another vector database that is used prominently for RAG. Then, in order to have the knowledge base up and running, you have to index the data using appropriate techniques to facilitate efficient retrieval. The most common way of storing the information in the knowledge base is storing the embeddings in a vector database. You can use multiple indexing techniques to store the embeddings and control your cost of hosting the knowledge base. Some of the popular techniques are IVF and IVF with product quantization, offered by the FAISS engine, which is from Facebook, or Meta. Then the next step is to develop the retrieval system.

When a user posts a query, you have to convert the query into some form that can be used to search the knowledge repository and get the relevant documents to pass on to the LLM as context. Here is where vector databases help a lot: you can convert the query into an embedding and then do a semantic search on the vector database to get the relevant documents. One of the popular algorithms is k-NN search, to get the top X relevant documents for the user query. Now the last step is to combine this retrieval process with the LLM. Once you get all the top

results for the user query, use them as context and send them as part of the prompt, along with the question, to the LLM. Now the LLM will use the documents from the knowledge base as additional context in giving you a relevant response.
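The retrieval and prompt-assembly steps just described can be sketched in a few lines. This is a toy illustration under stated assumptions, not a production design. The `embed` function is a bag-of-words stand-in for a real embedding model, the k-NN search is brute force rather than an IVF or HNSW index, and the knowledge base, function names, and prompt wording are all invented for the example.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy embedding: bag-of-words counts (stand-in for a real embedding model)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse term-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query: str, documents: list, k: int = 2) -> list:
    """Brute-force k-NN: return the k documents most similar to the query."""
    q = embed(query)
    ranked = sorted(documents, key=lambda d: cosine(q, embed(d)), reverse=True)
    return ranked[:k]


def build_prompt(question: str, context_docs: list) -> str:
    """Put the retrieved documents in front of the question as context."""
    context = "\n".join(f"- {doc}" for doc in context_docs)
    return (
        "Use only the context below to answer.\n\n"
        f"Context:\n{context}\n\n"
        f"Question: {question}\nAnswer:"
    )


# Hypothetical knowledge base, for illustration only.
KNOWLEDGE_BASE = [
    "Returns are accepted within 30 days of delivery.",
    "Standard shipping takes 3 to 5 business days.",
    "Gift cards cannot be exchanged for cash.",
]

question = "How many days does standard shipping take?"
top_docs = retrieve(question, KNOWLEDGE_BASE, k=1)
prompt = build_prompt(question, top_docs)  # this string is what goes to the LLM
```

In a real system the embeddings would come from an embedding model and live in a vector database, but the flow is the same: embed the query, fetch the nearest documents, and send them to the LLM together with the question.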

Apart from the limitations that I discussed earlier for standalone LLMs, there are cost concerns as well with using LLMs. Here is one example of what the costs look like as part of the computational requirements. This is picked from the AWS documentation. Here are two models from Meta's Llama 3: the 8-billion one and the 70-billion one. The 8-billion one requires g5.12xlarge machines and the 70-billion one requires p4d.24xlarge machines. Here is the cost that you're looking at. One instance of g5.12xlarge contains four NVIDIA A10G GPUs. The

cost on SageMaker, on AWS, for running this instance type is $7 per instance per hour. For p4d.24xlarge, you have 8 NVIDIA A100 GPUs underneath, and the cost of running that model per hour per instance is $37. There are costs associated with hosting RAG, because if your knowledge base is huge, then you need an efficient way to store that knowledge base and make it available to the LLM. There are operational and maintenance costs associated with LLMs, because you have to regularly fine-tune the model, regularly update it, and regularly scale it up in

case your traffic increases. Here are some of the cost reduction strategies that you can use. First, you can use pre-trained models via APIs and services in order to reduce your cost. You don't have to pick the model and host it yourself. If your traffic is low, popular solutions like AWS Bedrock and OpenAI offer APIs. You don't have to host the model yourself. You just call their APIs with the relevant prompts to get the results. This is the cheapest option if you are okay with the pre-trained model. Then, if you have to deploy the model because you want to control the cost and

you have to provide LLM inference as a high-throughput service, then you can deploy the foundation models in SageMaker and Bedrock. Then you can control your cost and the scaling requirements a little bit. You can also optimize the model size if you're deploying it on your own hosts. You can optimize the model size by model distillation. Model distillation is basically transferring the learning from a larger model to a smaller model. Then you can also apply quantization and pruning techniques to reduce the size of the model. There are pruning techniques available to remove some of the weights of

the model to significantly reduce the size of the model. With quantization, you usually deploy the model at a lower precision, let's say going from 32-bit floating point to 16-bit floating point, to reduce the overall cost of hosting the model. It will reduce the size of the model as well. Then, if you're deploying the model on your own instances, think about autoscaling it. If you don't have a need for synchronous invocation, try going with asynchronous invocation by batching the requests and running inference on the requests in batches, and then you can scale down when there is no traffic. These autoscaling solutions are offered by

almost all the popular cloud service providers. One popular solution is SageMaker from AWS, which provides autoscaling by default and is easy to configure. Then you can purchase some of the instances at a lower cost, and you can use spot instances if you don't have to serve the traffic at 100% availability. Caching is another technique, where you can cache some of the responses from the model and try to minimize the inference cost. With respect to RAG, instead of using HNSW, try looking at IVF-Flat or IVF-PQ indexing techniques and compression for knowledge bases to store

or index the documents as part of creating RAG-based systems. There is model cascading as well. You deploy a series of models with increasing complexity and computational cost, so most of the requests go through the smaller models, which have lower cost. Then, for those requests that pass the smaller models, in order to increase precision, you can pass them through the larger models. So these smaller models will act like filters to reduce your costs. Now we will discuss prompt engineering. Prompt engineering is about crafting inputs that guide the model towards the desired output. A good example of a prompt, and this is picked from

the AWS documentation, needs to have a good structure: contextual information about the task, reference text for the task, simple, clear, and complete instructions, with the instructions placed at the end of the prompt, and the format of the output specifically described. This constitutes a good prompt, which the LLM will be able to understand better and for which it will give you more accurate results. Some examples of good prompts: for text classification, you can structure the prompt in this way. This is taken again from Anthropic's Claude documentation. Here is one such prompt for text classification. You give a clear instruction to the

LLM on how you want your output to be. Here is a question-answering prompt. You again give clear instructions and ask a pointed question about what you want. Here is one such example for text summarization. This is one more way, where you give the whole text enclosed between text tags and ask a pointed question like "summarize the text in one sentence." Then there's the code generation prompt, which is very useful for every developer. This is one such template to get a good

response. Now let's see how we can leverage LLMs as a software engineer or a tech professional. Firstly, LLMs can be used for automated code generation, which can significantly speed up development by handling repetitive tasks and providing code suggestions. For example, GitHub Copilot, Amazon Q, and AWS CodeWhisperer can generate code snippets based on comments. The second is code review and debugging. LLMs assist in code review and debugging by identifying potential bugs and suggesting fixes, similar to tools like DeepCode and CodeGuru. The next is documentation generation. LLMs are very good at generating documentation. Once you paste the code into the prompt, they can generate detailed

docstrings, README files, and API documentation from the codebase. Next is natural language interfaces. You can build natural language interfaces that allow for more intuitive software interactions, enabling users to perform tasks using chatbots or voice assistants. For technical support and troubleshooting, you can use LLMs with AI-driven chatbots that provide first-level support, reducing the burden on human teams and improving response times. LLMs can also analyze data, generate reports, and extract insights from textual data, aiding in decision-making and strategy formulation.
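As one illustration of the documentation-generation use case, the sketch below assembles a prompt using the structure described in the prompt engineering part of the talk: context first, then the reference material, then the instruction and an explicit output format at the end. The function name, the `<code>` tag convention, and the wording are assumptions made for this example, not a template from any vendor's documentation.

```python
def build_docstring_prompt(source_code: str, style: str = "Google") -> str:
    """Assemble a documentation prompt: context, reference, instruction, format."""
    # 1. Contextual information about the task.
    context = "You are helping a software engineer document an existing Python codebase."
    # 2. Reference material, clearly delimited so the model can find it.
    reference = f"<code>\n{source_code.strip()}\n</code>"
    # 3. A simple, complete instruction, placed after the reference material.
    instruction = "Write a docstring for the function inside the <code> tags."
    # 4. An explicit description of the expected output format.
    output_format = (
        f"Respond with only the docstring text, in {style} style, "
        "with no extra commentary."
    )
    return "\n\n".join([context, reference, instruction, output_format])


example_prompt = build_docstring_prompt("def add(a, b):\n    return a + b")
```

The resulting string can be sent to any of the models discussed earlier. Keeping the instruction and output format at the end tends to make the request easier for the model to follow.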

Specifically in the field of cybersecurity, we can leverage LLMs for threat detection and analysis. LLMs can analyze large volumes of data, such as logs and network traffic, to detect patterns indicative of a cyber threat. This leads to faster and more accurate detection of cybersecurity breaches. You can also use LLMs in automating incident response. LLMs can be used to trigger predefined actions by configuring the LLMs with agents that can take those actions. They help in quickly mitigating threats and minimizing damage. In phishing detection and prevention, by analyzing emails and communications, LLMs can identify and flag phishing attempts. This reduces the risk of successful phishing attacks

and enhances email security. In terms of vulnerability management, LLMs assist in identifying and prioritizing software and system vulnerabilities by analyzing code repositories and security bulletins, leading to more effective vulnerability management. User behavior can also be monitored and analyzed with the help of LLMs to detect anomalies and potential insider threats. Coming to real-world examples, here are some examples of how Amazon has used LLMs to serve customers. When you open the Amazon website, you can see the review highlights, which summarize the reviews given by all the reviewers into a small, condensed review. It also helps sellers generate content for selling their

products with very little information, which saves time for the sellers, allows for more uniform creation of products, and fills in missing details. Amazon Pharmacy deployed LLMs to answer questions more quickly. They use a knowledge base, which is fine-tuned with all the internal wikis and other sources of information about prescription drugs, and then summarize the findings so that customer requests are addressed quickly. And finally, thank you for this opportunity. Hopefully I was able to provide information that aids your pursuit of leveraging LLMs to a greater extent. Thank you.