The Perks And Perils Of Persistence: AWS Attacker Techniques

Thanks, Jen. Good afternoon, everyone, and welcome to The Perks and Perils of Persistence: AWS Attacker Techniques. My name is Ashin Brennan. I'm a senior cloud security engineer at Immersive, where I've been honing my cloud security skills for the past 5 years. Immersive is a cybersecurity training provider, so one of the key parts of my job is understanding the latest tactics, techniques, and procedures that attackers are using across cloud providers so that I can teach people how to defend against them. And of course, persistence is one of the key tactics in the MITRE ATT&CK framework and a crucial step that attackers take after gaining access. I believe my job offers me a relatively

unique perspective because instead of spending all day battling alerts, securing resources, and implementing the latest security best practices, I spend most of my time researching and learning. This allows me to step back and evaluate the latest developments in the field across a range of cloud services. Now, to the best of my knowledge, there aren't many sources out there that compare the effectiveness, the technical complexity, and the detectability of persistence techniques across AWS. So that's what I'd like to talk about today: attacker techniques in AWS with a focus on persistence, evaluating the difficulty for attackers, the risk of detection, and the potential for compromise that each technique offers. Now, of course, if you have the right

permissions, there are myriad ways in which you can persist in AWS, and which one an attacker chooses will depend heavily on the target that they're after, the permissions that are available to them, and so on. So the aim here isn't to cover every possible technique, but rather to highlight the advantages and disadvantages of some of the common methods that we see in the wild and to draw your attention to some of the more niche techniques that are still worth looking out for and locking down. For each of those techniques, we'll also dip into some reasonable detection and prevention methods. So we'll start with Identity and Access Management, a fairly obvious starting

point. This is both the bread and butter of attacker persistence and, simultaneously, gold dust. If your initial access includes compromising a principal with IAM permissions, or you've managed to escalate your way to those permissions, then it's a good day to be an attacker. And you might be thinking, sure, but what are the chances of an attacker compromising an identity with the required permissions? Well, in 2025, of all leaked credentials leading to AWS-based security incidents, approximately 40% had administrative privileges. So, it's really not that unlikely.

So, the most straightforward method of persistence is to create an IAM user. In that same 2025 data set of AWS-based incidents, IAM user creation was used for persistence in about 68% of cases. It can be done in a single easy API call or via the console, with the option to add inline IAM policies to the user to assign permissions. Or, for bonus points, you can just attach the AdministratorAccess policy straight to them and go right to the top. The potential impact here is huge, especially if the compromised identity has permission to edit those IAM permissions. In terms of detection, the CreateUser IAM API call is logged by default in CloudTrail.

That can make it very easy to detect in small organizations, where new user creation would stand out. In larger organizations, especially those with many users, you may need to get a bit more clever with some analytics of these logs. So you could look, for example, for cases where that CreateUser call is made by an unexpected principal, comes from outside of expected working hours, or is immediately followed by the assignment of excessive permissions in an AttachUserPolicy call. Cloud threat actors are also usually quite noisy; they have quite poor operational security a lot of the time. So they'll often immediately attach things like the AdministratorAccess policy to the new user even if it's

entirely unnecessary for their goal. So you can watch out for those events with that policy in the request parameters. Now, I think life is too short to be manually trawling through logs all day and looking through CloudTrail event history in the console. So I'd recommend setting up alerting rules with your choice of SIEM or something like CloudTrail Lake so that you can build and run these complex queries in a reusable manner. This applies to any advanced querying of CloudTrail that we'll see throughout this talk. You can also look at setting up alerting with tools like CloudWatch, or of course your own external tools, to actively

notify you rather than having to be constantly trawling through these logs manually. Another thing that you can look for relating to IAM user creation is the creation of inline policies on users via the PutUserPolicy event. These are generally recommended against by AWS because they make policy management difficult, so you shouldn't be using them anyway. It means that these kinds of events, in theory, if you're already following good security practices, stand out like big red "attackers were here" signs. The same applies to IAM users themselves. You should already be limiting your use of those and instead replacing them with short-lived credentials via federated

access and roles. So the creation of those users should really stand out in your alerting. The good news for defenders is that it's easy to contain this method of persistence. Your first instinct might be to simply delete the new IAM user, but you may wish to preserve them as a forensic artifact for your investigation. In this case, you can apply something like the AWSDenyAll managed policy to the user to revoke their permissions with immediate effect. Now, one thing that attackers usually do after creating a new user is create access keys and login profiles, because they want to be able to exercise those new permissions. But this can also

be used against IAM users that already exist in the account, which offers a really easy method of persistence. Red team tools such as Rhino Security Labs' Pacu or Datadog's Stratus Red Team both include modules for backdooring users like this, which really demonstrates the ease with which an attacker can create persistence with this technique. These techniques also offer long-term persistence because the credentials don't expire, unlike some of the other methods that we'll see later. Now, similar to IAM user creation, setting up these methods of persistence creates events in CloudTrail, and realistically you should already have alerting rules looking out for them. They're relatively noisy. As an attacker, in

theory, there's quite a high risk of detection. Again, AWS recommends not using access keys where possible because they're a form of long-lived credentials. So if you've already prepared, and you're minimizing the usage of these and have documented any required exceptions, then the creation of new keys should really stand out. Once you've detected that persistence, you can just remove any new login profiles or disable and delete any access keys. Just be careful not to delete anything that's used for legitimate operations. Now, I mentioned earlier that you should be using IAM roles where possible instead of IAM users, but are those roles 100% secure and infallible? Well, no, of course not. That would be way too easy.
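The IAM user detections described so far can be sketched as a small filter over CloudTrail records. This is only an illustration under stated assumptions, not a production rule: the function name `flag_iam_persistence`, the `expected_principals` allow-list, and the 08:00 to 18:00 UTC working window are hypothetical, while the event names and the record fields (`eventSource`, `eventName`, `eventTime`, `userIdentity.arn`, `requestParameters.policyArn`) follow the standard CloudTrail record layout.

```python
from datetime import datetime

# IAM management events associated in the talk with user-based persistence.
WATCHED_EVENTS = {
    "CreateUser", "AttachUserPolicy", "PutUserPolicy",
    "CreateAccessKey", "CreateLoginProfile", "UpdateLoginProfile",
}
ADMIN_POLICY_ARN = "arn:aws:iam::aws:policy/AdministratorAccess"


def flag_iam_persistence(record, expected_principals, work_hours=(8, 18)):
    """Return a list of reasons why a CloudTrail record looks suspicious.

    `expected_principals` (a set of ARNs) and `work_hours` (UTC hours) are
    illustrative assumptions; tune them to your own environment.
    """
    if record.get("eventSource") != "iam.amazonaws.com":
        return []
    name = record.get("eventName")
    if name not in WATCHED_EVENTS:
        return []

    reasons = []
    caller = (record.get("userIdentity") or {}).get("arn", "")
    if caller not in expected_principals:
        reasons.append(f"{name} by unexpected principal {caller}")

    # CloudTrail timestamps are UTC, e.g. 2025-03-01T02:14:07Z
    hour = datetime.strptime(record["eventTime"], "%Y-%m-%dT%H:%M:%SZ").hour
    if not work_hours[0] <= hour < work_hours[1]:
        reasons.append(f"{name} outside working hours ({hour:02d}:00 UTC)")

    params = record.get("requestParameters") or {}
    if name == "AttachUserPolicy" and params.get("policyArn") == ADMIN_POLICY_ARN:
        reasons.append("AdministratorAccess attached to a user")
    if name == "PutUserPolicy":
        reasons.append("inline policy written to a user")
    return reasons


if __name__ == "__main__":
    sample = {
        "eventSource": "iam.amazonaws.com",
        "eventName": "AttachUserPolicy",
        "eventTime": "2025-03-01T02:14:07Z",
        "userIdentity": {"arn": "arn:aws:iam::111122223333:user/intern"},
        "requestParameters": {"userName": "svc-backup", "policyArn": ADMIN_POLICY_ARN},
    }
    for reason in flag_iam_persistence(sample, {"arn:aws:iam::111122223333:user/iam-admin"}):
        print(reason)
```

In practice the same conditions would more likely live in a SIEM rule or a CloudTrail Lake query; the point of the sketch is only that each signal mentioned above maps onto a field that is already present in the record.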

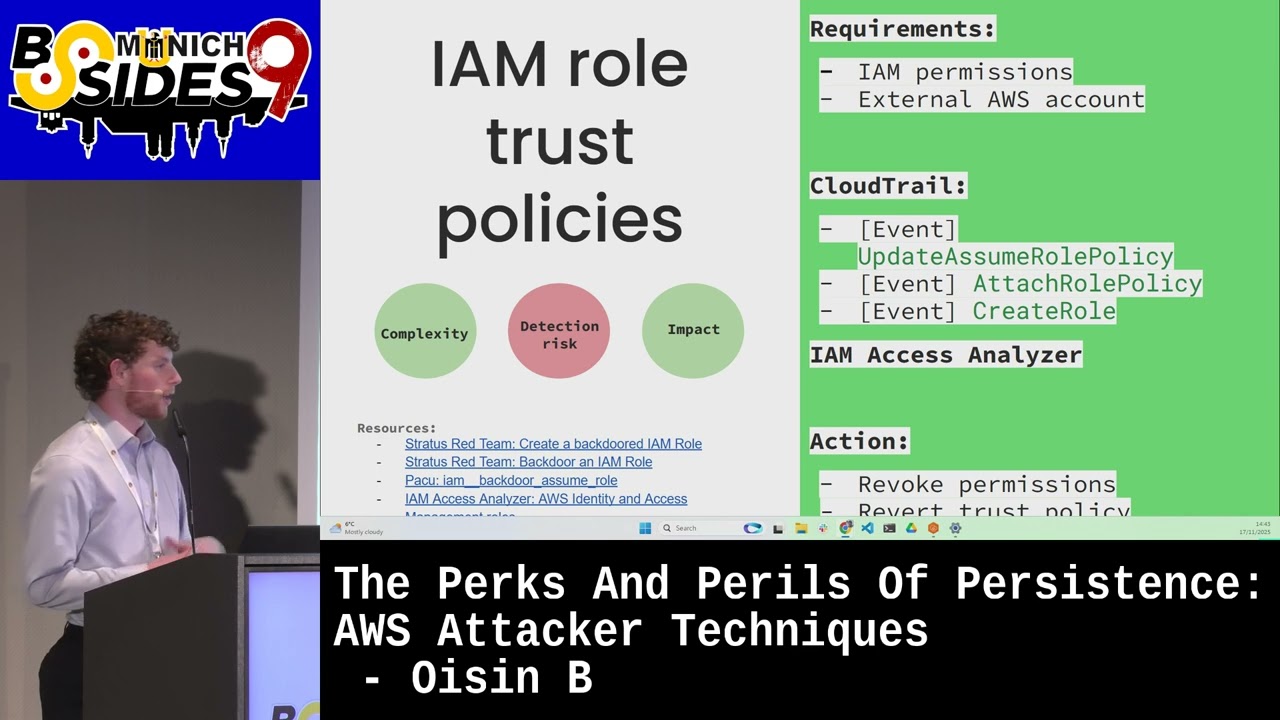

So another common technique that you see attackers using for persistence, and one that's frequently observed in the wild, is to backdoor IAM roles. These roles have a trust policy dictating which identities can assume the role and use its permissions. This could of course be an entire AWS account and all the identities within it, a single external IAM user, or principals federated via OIDC. These roles, especially those that are intended to be assumed by bots and automated deployments, are often good targets for attackers because they're given excessive permissions due to the complexity of permissions management. A classic case would be being able to read data from all S3 buckets rather than the one that it

needs. So they're quite an attractive target for attackers, and again it means that the impact from this method of persistence can be quite large. Now, where the role already exists, malicious actions taken through the role might really not stand out, which does make it harder to detect. However, I've still classified this as quite a high detection risk from an attacker's perspective, because to actually set up that method of persistence you're generating CloudTrail events, which again most alerting rules should already be covering: things like CreateRole and AttachRolePolicy, especially where that call is attaching a policy with high-level permissions. Additionally, you can also use IAM Access Analyzer to detect those IAM role

backdoors, and it'll generate a finding whenever a role trust policy grants access to external identities. Once you've detected backdoored IAM roles, you can delete the role if it's newly created or, similar to IAM users, you may wish to preserve that role as a forensic artifact for investigation purposes instead. In that case, you can always apply the AWSDenyAll policy to it, which takes immediate effect. One thing that's also worth considering is that if the role already exists and is required for legitimate operations, you obviously don't want to just go and delete it or potentially disrupt your operations by denying all of its permissions. But ideally your AWS deployments should already be made in

and documented with infrastructure as code rather than being made through ClickOps, so you can redeploy a previous secure version of that trust policy. So attackers know that access keys are easy to exploit, but they're also easy for defenders to disable or revoke. Because of this, they often request federated tokens from the STS service if the compromised user has the required sts:GetFederationToken permission. Now, these tokens are primarily designed to allow non-AWS identities to temporarily access resources, but they can also be requested using IAM access keys, and that's really useful for attackers because the tokens persist after the original access key is revoked, up to a maximum of 36 hours. So

this gives attackers a lingering session in which they can continue operating even as containment is happening. For security reasons, AWS does prevent all IAM operations from the CLI or the API if you're authenticated with these temporary federated credentials, which does limit the impact that this persistence technique has for an attacker. Additionally, STS operations such as GetFederationToken are also blocked, which prevents an attacker from perpetually obtaining a new set of credentials. However, 36 hours is still a good amount of time in which you can do damage, especially if you're performing a smash-and-grab style attack. Additionally, if the

compromised user has the get sign-in token permission, those federated credentials can be used to generate a console sign-in URL, which then unlocks all of those original IAM permissions. So it really allows an attacker to carry on persisting in the environment while going much more under the radar. It makes detection and incident response much harder because you can't follow the trail of an attacker using those original compromised access keys. Instead, you have to look, say, for where the userIdentity section shows the federated principal, which would then include the original IAM user's name. It's also difficult to monitor because there are numerous legitimate uses for that GetFederationToken API call. Of

course, if you don't use federated sessions in your organization, then this will stand out. You can look for events, for example, where the userIdentity type is FederatedUser, because that really is a telltale sign. You can also look for where the sign-in token is used to update or create long-lived credentials such as access keys or login profiles, because this would be a really unusual way of operating within AWS and would be quite an obvious sign of malicious activity. So although it does obfuscate your actions a little bit, there's still a medium detection risk with this persistence method as an attacker. Now, this is a bit more of an unusual

method of persistence, but one that has nonetheless been seen in the wild this year. AWS IAM Identity Center, formerly AWS SSO, allows you to connect external identities to AWS and enable SSO login, and it's currently considered one of the best-practice options for managing human users and identities in AWS. However, if an attacker can gain access to your Identity Center organization instance, you might be in trouble. Of course, this does require an attacker to compromise an AWS Organizations management account, so it's not easy for attackers. But if they can manage that, they can create new groups and users in the instance, add the user to a group, and attach wide-ranging and powerful permission sets to that group,

which can apply across multiple AWS accounts. And with the right permissions in Identity Center, the malicious actor can also change and disable MFA settings across the AWS organization, which in turn enables other methods of persistence. Now, the detection risk here is very high because legitimate instance-wide configuration changes occur infrequently, and the permissions to make those changes should be limited to a very small set of individuals. So you can be on the lookout for API calls from the sso.amazonaws.com event source. That will include things such as updating the instance itself with UpdateInstanceAccessControlAttributeConfiguration, a very catchy event name there. Then you've got things like creating those permission sets, creating the account assignment, and

creating those groups and group memberships, because all of those, if made by an unknown user, are of course heavy indicators of malicious activity. So we'll move away from IAM now and look at some other methods of persistence, this time focusing on AWS Lambda, the function-as-a-service offering for serverless code. There are SDKs for AWS available in most languages that Lambda natively supports. Importantly, you can grant Lambda functions permission to assume roles, which means that a function can theoretically perform most API-based operations across AWS, provided it has the right permissions. This opens up a fairly easy persistence method for attackers. You can simply create a Lambda function that performs the

operations you wish, add a function URL with no authorization requirements, assume a role, and then you can just trigger it from the internet at will. In one really interesting case this year that was observed in the wild, this was used for a sort of persistence as a service. The attackers created a function that would generate new IAM users with permissions and access keys. So whenever the defenders disabled one user, the attackers would just invoke their function and a new one would pop up; it was like a game of whack-a-mole. Of course, there are many other possibilities here. You could set the function to terminate EC2 servers and cause chaos, or list and sync S3 bucket

contents to an attacker-controlled endpoint. There are limitations, which restrict the impact that you can have as an attacker through this persistence. You're limited to the function's maximum runtime of 15 minutes, and that's a hard AWS limit. But you can see it's a relatively easy persistence mechanism; it just requires a bit of coding knowledge. If attackers choose to update an existing function rather than creating a brand new one, it can be harder to detect because the events that come from the function can blend in with legitimate operations. So in terms of setting up the persistence, you can look for events such as CreateFunction or UpdateFunctionCode.

These management-level events are logged by default in CloudTrail. Alternatively, you can monitor events from other services where the user agent contains the AWS Lambda string, because this is a real telltale sign. As a general aside, setting up alerts based on user agents can be helpful. As I said earlier, a lot of cloud threat actors have quite poor operational security, and they really give themselves away. If you see a user agent related to Kali Linux and you don't have an internal red team, that's usually a fairly bad sign. Just do remember that the user agent can be easily spoofed. The other thing you can look out for is the way that the

Lambda function is made publicly invocable: things like CreateFunctionUrlConfig, or where the function's resource policy has been modified to allow public invocation, and that would be an UpdateFunctionConfiguration event. Additionally, you can use IAM Access Analyzer to alert when a Lambda function is made accessible outside of your AWS account. Lambda functions assume their roles using credentials that are stored as environment variables in the function's runtime environment. This means that if you can gain remote code execution (RCE) in the Lambda function, you can steal these credentials and assume the function role yourself. Now, due to the complexities of permissions management, these function roles are often given excessive

permissions, so an attacker can easily and continuously operate in an AWS environment using this method. However, there are some technical drawbacks. Firstly, as an attacker, you've got to find a suitable RCE vulnerability in the first place. If the target has good coding practices, this should be easier said than done. Secondly, these credentials have a default maximum lifetime that matches the function's maximum duration of 15 minutes, so as an attacker you need to continuously steal new credentials to refresh your persistence. And finally, the potential for impact is limited by the function role's permissions. Because Lambda functions are often used for small serverless tasks, they're not often given such wide-ranging permissions as

you might see attached to an EC2 instance. The major advantage of this technique, though, from an attacker's perspective, is that it's very difficult to detect. All of their operations masquerade under the guise of the function role, and there are no CloudTrail events generated directly from setting up the persistence. So detection requires in-depth analysis of where the function might be making unexpected API calls, as seen for example by the user agent string. Lambda also supports extensions, which allow you to add reusable code across multiple functions, such as for adding observability or security tools. These extension layers can be shared, and you can use those layers across accounts and organizations. Importantly, those extensions have

access to the same resources and credentials that the function itself has. This means that, similar to updating the function code itself, you can include backdoors in an extension layer to leak data externally or run AWS operations using the Lambda's role permissions. It's a little bit more technically complex for an attacker to set up. You need to have knowledge of the code, you need to investigate the target function's runtimes, and you need to build your custom extension. However, it is harder to detect than just going and blindly updating the function code, because that original code stays untouched. So there's less evidence left behind, and depending on the environment, the UpdateFunctionConfiguration event that's generated from attaching the new extension could very easily blend in with normal operations.

Another advantage from an attacker's perspective is that the malicious extension can be deployed across numerous functions if the runtimes match, which allows for more widespread persistence and obviously requires more effort to remove. You also persist across Lambda instances: the extension is pulled each time the Lambda service spins up a new instance, which allows for longer-term persistence without needing to keep the function warm with regular invocations. From a prevention perspective, your best bet here is to use IAM policies or service control policies to restrict who can attach extensions to a very small set of developers, or you can use things

like AWS Signer signing profiles to prevent the use of untrusted extensions. So we move on to EC2 now, everyone's favorite computing service in the cloud. This is probably one of my favorite persistence techniques that you see out there: misconfigured IAM PassRole configurations. The iam:PassRole permission lets you pass an IAM role to an AWS service so it can perform operations on your behalf. If an attacker compromises a user with those permissions, they can pass a role to the EC2 service, spin up some instances that they control, and then persist inside the EC2 instance. It's quite sneaky. Of course, I do use EC2 here as an example, but this applies to many of

AWS's compute and container services. Now, this technique can be quite noisy because utilizing the permission requires setting up compute resources, such as EC2 instances, that you control and can access as an attacker. So you could be looking out for CloudTrail events for creating key pairs and importing key pairs for SSH access. You could be looking at things like creating security groups, modifying those security group rules to give networking access, running the instances themselves, and then things like associating IAM instance profiles. However, the PassRole call itself is not logged in CloudTrail. So if, as an attacker, you've already compromised EC2 instances, you can really use this to sneak under the radar. And although it's

noisy, the potential impact here is quite large. It can even allow privilege escalation if the user can pass a role with more privileges than they have. Additionally, because the PassRole permission is required to run instances that have instance profiles, it's not unlikely that a compromised user who has EC2 permissions will also have the required PassRole permission. One saving grace as a defender is the ec2RoleDelivery field. This will be present in a CloudTrail event's sessionContext field if the API call was made using credentials from that passed-in role. However, of course, there are going to be many legitimate events from your instances that will have that field. Now, this brings us on nicely to

the instance metadata service, which provides EC2 with the capability to interact with AWS services through granted IAM roles. The metadata service is an endpoint that's available within the instances, so you can call out, get credentials, and make those AWS operations. If, as an attacker, you can find RCE on an instance or, for example, exploit server-side request forgery against the metadata service, you can then either manipulate the requests that are made using those credentials, or you can even extract those credentials and use them directly. Currently, in 2025, Datadog's State of Cloud Security report estimates that around 20% of EC2 instances have overprivileged roles. So this can be quite a good

avenue to go down as an attacker. Maintaining access to this instance then offers you a persistent gateway into the account, and you can combine it with methods such as the IAM PassRole abuse to give your compromised instance extra permissions and allow you to work your way through the AWS estate with no extra API calls required. That's one of the things that makes it very difficult to detect. Similar to RCE in Lambda functions, there aren't any CloudTrail events that are made in the course of setting up persistence, so instead you have to look for the actions that are taken using that persistence. And then one of the final ones is modifying instance attributes.

So when instances are launched or rebooted, user data scripts can be passed in to run custom setup commands such as installing a tool or joining a domain, which can be a really useful thing to have when you're making automated deployments. However, if an attacker has the ModifyInstanceAttribute permission, they can edit this user data script to beacon out to C2, add authorized SSH keys, insert vulnerabilities into your web server files, or more. If those user data scripts are pulled from S3, as is often the case, it becomes even easier for an attacker. You can simply overwrite that script file with a malicious version, and that reduces the detection opportunities to a single S3 object event.

These data-level events aren't monitored by default in CloudTrail, although I would recommend enabling that, with appropriate filters, to log events for important files such as this. Now, it's a bit more technically complex than creating an IAM user, but it's not impossible for an attacker to pull these changes off, and if they do so, it allows them to backdoor your server the next time it reboots. When you then combine this with, for example, changing a launch template that is targeted by an auto scaling group, then of course you can set that user data across a range of instances. The launch templates can then also be used to, for example, modify the SSH keys that

are associated with new instances to ones that an attacker has access to, and this gives you nice persistent access across the servers. So, if there's anything you remember from this talk today: as you can hopefully see, there are many, many ways in which you can persist in an AWS account. Some of them are high impact yet very easy to detect. Others may go unnoticed for a long time, even under the scrutiny of some top security teams. Thankfully, most cloud threat actors stick to the basics. They love just creating a new IAM user, coming in for a smash and grab, and attaching admin access permissions to go straight to the top. Unfortunately, there's also still a lot

of work to be done on our side. With over 40% of leaked credentials having admin privileges, I think we can all agree that's shockingly bad. So you need to go and enable resilient CloudTrail logging with backups, set up alerting through your SIEM or your data lake for suspicious events, and make use of the security tools at your disposal, such as IAM Access Analyzer, so that you'll know if your admin role has just been made publicly assumable. And of course, prevention is better than detection. So evaluate those access keys, reduce those permissions, don't just give administrator access to anyone who walks in the door, and switch as many of those credentials as possible to

be short-lived. Best of luck keeping the attackers at bay for another day. [applause]
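As a recap of the non-IAM signals named in the talk, the sketch below labels a CloudTrail record by technique. It is a hedged illustration rather than a vetted rule set: `SIGNALS` and `classify` are hypothetical names, the labels are my own wording, and prefix matching is used on the assumption that some Lambda event names carry an API-version suffix in CloudTrail (for example `CreateFunction20150331`). The user agent check is a weak signal only, since, as noted in the talk, user agents are easily spoofed.

```python
# Ordered (eventSource, eventName prefix, label) signals drawn from the talk.
# More specific prefixes come first because the first match wins.
SIGNALS = [
    ("sts.amazonaws.com", "GetFederationToken", "federation token requested"),
    ("sso.amazonaws.com", "UpdateInstanceAccessControlAttributeConfiguration",
     "Identity Center instance changed"),
    ("sso.amazonaws.com", "CreatePermissionSet", "Identity Center permission set created"),
    ("sso.amazonaws.com", "CreateAccountAssignment", "Identity Center account assignment created"),
    ("lambda.amazonaws.com", "CreateFunctionUrlConfig", "Lambda function URL created"),
    ("lambda.amazonaws.com", "CreateFunction", "Lambda function created"),
    ("lambda.amazonaws.com", "UpdateFunctionCode", "Lambda function code updated"),
    ("lambda.amazonaws.com", "UpdateFunctionConfiguration", "Lambda configuration updated"),
    ("ec2.amazonaws.com", "RunInstances", "EC2 instance launched"),
    ("ec2.amazonaws.com", "CreateKeyPair", "EC2 key pair created"),
    ("ec2.amazonaws.com", "ImportKeyPair", "EC2 key pair imported"),
    ("ec2.amazonaws.com", "AssociateIamInstanceProfile", "instance profile associated"),
    ("ec2.amazonaws.com", "ModifyInstanceAttribute", "instance attribute modified"),
]


def classify(record):
    """Return human-readable labels for a single CloudTrail record (a dict)."""
    labels = []

    identity = record.get("userIdentity") or {}
    if identity.get("type") == "FederatedUser":
        labels.append("federated credentials in use")

    # Weak signal: user agents can be spoofed by the caller.
    if "kali" in (record.get("userAgent") or "").lower():
        labels.append("Kali Linux user agent")

    source = record.get("eventSource", "")
    name = record.get("eventName", "")
    for sig_source, prefix, label in SIGNALS:
        if source == sig_source and name.startswith(prefix):
            labels.append(label)
            break  # first (most specific) match wins
    return labels


if __name__ == "__main__":
    event = {
        "eventSource": "lambda.amazonaws.com",
        "eventName": "CreateFunctionUrlConfig",
        "userIdentity": {"type": "IAMUser"},
        "userAgent": "aws-cli/2.15",
    }
    print(classify(event))
```

A table like this is deliberately coarse: it says which technique an event could belong to, not whether the event is malicious, so it would sit in front of the context checks (who made the call, when, and from where) rather than replace them.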

All right, thank you very much, Oshin. We have time for one question. Does anyone have a question for... Whoa, we have one way in the back. Go, go, go, go, Michael. Well done.

Thank you. Awesome talk, thanks a lot. Just a quick question: what do you recommend from a daily monitoring point of view? How do you catch this stuff? Either pre-built Sigma rule sets, or just use Prowler and you're good? >> I think one of the things that you should really consider is using the tools that you have available to you. A lot of what you can do is setting up those alert rules in advance; you make detection a lot easier for yourself by preparing for this accordingly. So, as you said there, you can have these predefined

rule sets, and there are plenty of different options for those, which will allow you to set up the automated alerting so that you're not having to go through it yourself. You can of course use tools like Prowler and other offensive tools to essentially perform your own internal penetration test and check for those vulnerabilities. But I'd say the main thing really would be setting up that alerting and monitoring. Obviously, you want to find a compromise to avoid alert fatigue, but you can use automation, and nowadays, as much as you hate to say it, even AI can come in handy

every now and then to help you speed up the analysis of those alerts, the triaging, and the investigation. So I think those are the best ways to go about it. >> And is there a rule set that you can recommend? An open-source one, best case. >> I think the Prowler rule set is quite a good one. Some of the other ones: Trivy is quite a good one, and some of Aqua Security's resources are quite good. >> Okay, thank you. >> Okay, thank you so much, Oshene. Give Oshene a big round of applause, please.