01 - How We Hacked YC Spring 2025 Batch’s AI Agents


agents. Um I'm Renee. Uh I'm the co-founder and CEO of Casco. And before this I was a head of product at AWS. Um and uh also worked for a long time at Microsoft. Um a little bit about myself is I've really been fascinated with AI for a very very long time. Uh I like to claim that I um invented Oh no. Is the video loading? Internet please come on participate. Oh no. One second. >> It did say Let me do one one one more run at this. There's a It's just different if the video plays. Yeah, there you go. All right. Uh, it's loading. It's loading. Okay. Come on. Fingers crossed. Oh god. All right. See? Oh, there's baby Renee

here. Uh, 11 years ago doing winning Europe's largest hackathon with a voice to code agent that was building back then. and you could like talk to it, have it create a website and then you could um like have it load in images and whatnot. It was just like such a fun time with AI like back then was only like classifier models and whatnot. And it was uh a lot of different cloud providers strung together just to support this thing and it was just so cool like and so I've always been fascinated with this entire space. It's like there's you know really cool tech that you can build there. Um, obviously since then many things have changed. Um,

I hope you know I I look a little bit older, a little bit more experienced, and I also uh quit my big tech job at AWS, moved into a garage with my co-founder to do the uh classic startup thing, and we got into Y Combinator. And so, wow, that was super fun. It's like, okay, cool. Now, it's time to build a startup on our own. And we're building a security startup. So, what do we want to do now? Now that we're building a startup, hm, we need to launch. So how do we have a really cool launch post that we want to create together? So we thought maybe we should just hack all of our batchmates and so we looked at what

is the setup of the agents back then, and how did the architecture evolve? Back then, you know, I had to string together four or five different cloud providers and talk to all these different systems. But there's actually this normalization now of the LLM agent stack, right? Nowadays when you're building an AI agent, you have a front end that talks to the back end, an API server talking to an LLM, and all this kind of stuff. And we noticed that when we talk about security in the agent space, we often only talk about LLM security, which is really just that part there, where the API server talks to the LLM and you check for

prompt injection and that kind of stuff. But if you worked in security long enough, you know, whenever there's an arrow, you should probably check if that arrow is secure. Like, you know, you can talk threat model and all that stuff, but it's really just, you know, boxes and arrows. So we realized there's a lot of issues all over the place, all over there and our uh our company really focuses on all of that and so we decided let's let's figure something out there. Let's figure out the security posture of different AI agents in our batch. So as I mentioned earlier, we tried to hack our agents because we really wanted a flashy headline, you know, not going to

lie, that's why we did it. And we were like, okay, we also don't want to waste too much time doing this. So this is how we actually set up our process. We were like, "Okay, we're going to set a timer for 30 minutes, and for those 30 minutes try to hack one of our batchmates." And there were 16 different AI agents as part of our accelerator program. So I was like, "Okay, we can go through it over a weekend. I think this is time well spent." And the hypothesis is that we can somehow go from there to company compromise: we somehow get the system prompt, and it

will get us to getting access to your entire company. Then we spend some time identifying different risks, like what are the different capabilities that the agent has, and then ultimately try to exploit them, right? If you have ever done pentesting, this should feel familiar; it is basically the regular pentesting process. And it worked, it turns out, phenomenally well. There were 16 AI agents as part of our batch. We hacked seven of them with 30 minutes each, and there are actually three common issues that I'd like to walk you guys through today. All right, cool. So, the first one might not be a surprise to folks, but it's around cross-user data access. So,

when you work with an AI agent, you can often ask it to fetch documents, fetch information from a conversation, and start building up the context to be more helpful for you. But what's interesting is, for example, with this company we extracted their system prompt and found out there were a lot of reference-by-ID kind of capabilities. Whenever you see something being referenced by ID, hm, this might be weird. What if I just put in another ID? Let's see. And so the obvious thing is, okay, this could be like an IDOR (insecure direct object reference) attack, but in the agent space: what if you just

prompt the agent to use different IDs from other users to fetch information? In this case they were trying to fetch some sort of messages, right? And today we see many people just authenticate the request, checking that the user token making the request is valid, but never actually check whether the record belongs to that end user. And yeah, it turns out we found a demo video of the founders online, and from the browser URL bar we were able to extract an ID from their demo video. I was like, hey, give me the information of this user, and yeah, sure enough, it returns everything. So that's pretty

awesome. And then the agents are so helpful. They're like, "Hey, you know what? I'm also connected to not only their messages, but also their documents." So you can now start crawling information through this one particular identifier and access all of their information. Isn't that crazy? I was like, "Wow, that's kind of cool." I mean, cool for me. And so for folks who don't know this, the way to fix it is to authenticate and authorize. That means check if a request is actually allowed to be made in the first place, you know, are you an authenticated user, and then also tie it in with some sort of row-level

security behind your request, so you're making sure that that particular post ID actually belongs to this particular user before you fetch that information. If you're using any sort of out-of-the-box system, like a lot of founders I see use Supabase these days, it's actually built in. You just have to use it. It's literally one flip of a switch, and it will get rid of a lot of these problems for you. So authenticate and authorize. Now, this was kind of a problem in that area, right? I kind of already jumped over the whole, oh, we did the prompt injection thing

and so on and so forth, because prompt injection is kind of a means to an end. It's more like reconnaissance of what the agent can do, but the damage is all happening behind here, right? And I think that is really the focus area that we all need to wake up to when we are talking about security testing AI agents. The thing that we need to remember is that agents actually act like users and not API servers. When we talk to all these founders, it's like, you know, you've kind of solved this problem before with web applications. Why are we making this mistake with AI

agents? And it's purely because as developers, you know, we like to pattern match. We look at it and say, oh, the thing is behind the API server, so this is like an API server. But that's not true. It is actually like a user. It's like a virtual user of your product. And so all the things that we do for web application security, sanitizing your inputs, making sure the authentication is properly scoped, all of that stuff actually applies too, right? So it is this mindset shift that I think everybody in their organization needs to make as well. It's like, yeah, it's deployed on the server, but it's a user. Actually, it's a

user, and so that is super, super important. Agents act like users despite being inside an API server. Now, that means a quick rundown of things that agents should not be doing. They should not determine their own authorization model, like just passing IDs for reference and whatnot. They should not act with service-level permissions, like global read-only access roles. That happens all the time. Go back and check your software. And they should not accept any arbitrary input or forward any output without sanitization. Those are all things we have learned to do with end-user data when people use your web app. You just have to do them for agents too. Mindset shift,

right? Okay, cool. So that leads us to the second set of issues that we've seen, and this is more specific to AI agents. I'll give you a little bit of a precursor of what this means: bad code sandboxes. So here's an Anthropic paper that I thought was very interesting. I think it was published a little bit earlier this year. It basically talks about AI usage broken down by industry. You can see the graph is kind of all skinny on the left side there, with one outlier. So who's this, right? Okay. Oh, it turns out it's us. It's like, hey,

computer mathematical hey that's us. Hey you guys all use AI every day hopefully, right? So you know it is this tool is very useful for us. And it turns out that there's not only that, it's not only for that purpose that it's good, you know, helping you write code or, you know, generate a payload for your pentest or something like that. But also, a lot of the AI capabilities use coding tools under the hood to perform tasks that are deterministic. It's like, you know, we we all know agents suck at math, right? you want to do a calculator, you actually have the agent run some code in the background and um run that in a code sandbox and then

gives you the result back, right? And that can stretch from like a simple math calculation to things like running SQL queries, you know, and doing a bunch of other things. So, it's like, okay, there's something interesting there, right? And a lot of different tools, even if they don't tell you they're using a code sandbox in the background, they still are, right? Even if it's not a coding agent, right? like some a recent one I've seen is like a financial accounting agent that just needs to run a lot of spreadsheet calculations, right? They're not going to tell you it's a it's like a coding agent or something, but it's going to run it in the background. So, this is really about

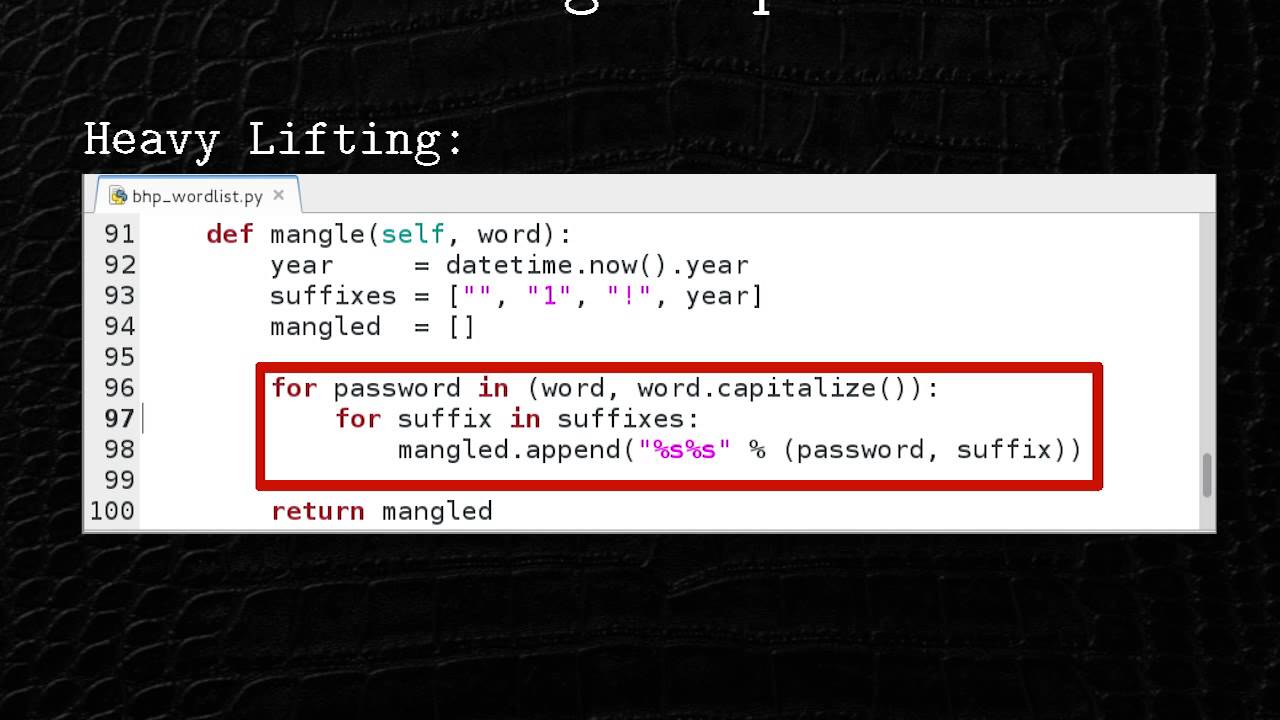

this little arrow here, where the tools of your LLM actually start talking to a container. And whenever you have willful remote code execution, there's something interesting to look at, right? It is also something that people tend to underestimate and kind of roll their own. So we started looking into some of these agents that had those capabilities. So, back to our approach: we extracted the system prompt. All right. Now let's read through exactly what the developer told us not to do. Okay. So, when editing files, never output code. Okay, so we need to output code. To the user unless requested. Okay. Instead use one

of the code edit tools at most once per turn. So what if we just use it twice per turn? What happens? The interesting thing with system prompts is that they almost download the brain of the developer into text, and you can kind of see all the things they don't want you to do. So all you have to do is an inverse map of that. It's like, okay, so I'm going to do exactly that. Huh? Watch me. Right? And you can find a lot of interesting issues through that. So with this customer, we're like, okay, let's try to output code all the time, because

I really want to know what's going on. And then also, you know, hijack the agent to run multiple turns instead of just one. And it was kind of interesting. We tried having it run a network request, create a reverse shell, and whatnot. I was like, ah, god, this company is so smart. They only allow me to write Python code, and I'm team JavaScript. Sorry, guys. And they don't allow these really dangerous imports, and they also restrict which Python files can be run. At first glance it might be like, wow, they've done a good job; there's

something interesting here, but nothing to exploit. But there are two permissions they allowed which are kind of innocuous: you can write a Python file to execute, and you can also read files. All right, okay. So we cannot do all these crazy imports, but we can write files and read files. Write files and read files, write files and read files. What can we do? Take a look, right, in the file system. What's going on in this container? Well, it turns out there's this app.py file. I was like, oh, that's interesting. Oh, and that app.py is

kind of the control plane for the for the sandbox that means it receives all the requests and be like oh yeah okay now I need to write this file and so on and so forth It's like, okay, so this is a system file. If we can somehow like figure out what to do there, that might be interesting for us to look into and modify. So, okay, these

Oh, oh, oh. Oh, these protections, guys. Have you seen this? Wow. Wow. And they're all like nicely documented for me. Isn't this great? And so, okay, so I can just like comment all this out by writing a new app.py file. And then I run it again on and remember on the second turn, then all of these um protections are gone. So by nature, now you have access to code sandbox. So we can go Bitcoin mining. Let's go. Right. It's a rough economy this year. Um obviously that's a joke, guys. Don't do it. Um obviously obviously this now creates an environment for us to do remote code execution. Uh and whoever has ever done this before knows it's really really

fun. Uh obviously for uh you know on this side of things um sucks for the company. Um but it there's there's a lot more things you can do once you're inside a machine because like machines are kind of not like isolated, right? They're not like alone in a network. There's probably more to it, you know. So, has anybody ever dealt with like uh metadata endpoints and cloud environments? Has anyone done that? No one. Okay, one person. All right, cool. Super good, super good information to have. You can basically do a discovery call inside your cloud environment like this customer was using GCP to check, oh, you know, what's kind of my project ID, guys, like you know what are other

things that are in this network, because there are legitimate reasons for a cloud provider to expose that, so the computers can connect with each other and whatever, right? And the other endpoint that's really good is to check what my service role actually has permissions to, right? If you've ever worked with any cloud provider, you know that setting up permissions, IAM permissions, is probably really hard. And who has ever been in an environment where everything is set up perfectly? All right. Yeah, exactly. And because of that, well, it turns out this thing has BigQuery access, which is this way to query big data inside Google Cloud. And it turns out

that's where they had all their customer data too. So now, through this really badly coded sandbox, we're able to do network discovery, figure out what other endpoints are available, and then realize, oh god, we have access to their entire customer data. That's scary. And so now you can also siphon out all that customer data from there, right? It's multiple layers of failure, and it's like peeling an onion: the more you peel, the scarier it gets. And it will also make you cry at the end. So yeah, bad code sandboxes really cause damage. TL;DR: don't roll your own code sandbox. That's

my appeal to everybody. The parallel I would draw is from when web apps were a super hot thing for a long time. People were kind of in the same spot with auth for web apps, and this saying of "don't roll your own auth" came along and generally was pretty good advice. I think we're going to see that with agents too. There are going to be many people who start building AI agents thinking they can quickly build a code sandbox, but who are not aware of all these other complexities involved, right, and the correctness around those

systems. And so just like we don't roll our own auth anymore in web apps, we should not roll our own code sandboxes. There are a few providers here that you guys can check out. They're all pretty good, and E2B is also open source, so you guys can actually self-host it and whatnot, right? So there's a lot of good stuff there. And this is just something we have noticed: typically, when customers have used a managed code sandbox environment, they basically have a shared responsibility model on security with the provider, and it tends to be more secure. So that's issue number two, and we can go to

issue number three. All right. So, the third one is around tools. Some of these tools accept a lot of inputs, which is kind of cool. Tools are used for asking the agent to do some deterministic things: get some data, write some data, and whatnot. And it turns out a lot of them can lead to server-side request forgery. This is kind of an interesting setup too. So that's really this little bit over there, that arrow where a tool can call some external data sources. Now, following our same principle: this is a coding agent that we looked at, and they had a very cool capability. You know, this

is like earlier in spring. They were very unique in their set of capabilities, because they allowed you to create databases alongside your front end, so your applications were actually useful and not just a UI. It was a super cool app. And it turns out they have this very interesting capability over there where the database schema is actually pulled from a private GitHub repository based on a specific branch, and, oh, it's just a URL, right? That's cool. And so if you can already hijack agents to extract their system prompt, if you can hijack agents to make any sort of arbitrary tool call, what is

the natural thing you want to try to do you would obviously try to like put in some request information in into there like what if you just called it with some other um with some other information. What if it's just like a honeypot server on my own uh on my own uh domain and I can actually receive the credentials to their private GitHub repository because remember this is a private GitHub repository, right? Oh, okay. So, and then you know kind of back to the same story as before. Um I don't think anybody ever sets up proper repo permissions either just like they don't set up proper cloud permissions. So using the GitHub token, you can actually

start downloading their entire company source code from there too, right? Obviously, this was really bad. This is the honeypot server. The request includes the git credentials in there, and you can just say, yeah, you know, clone it from badactor.com, and siphon out all that information from it. But this one was actually kind of funny, because we found it and, literally, I think it was like 5:20 p.m., 30 minutes after, he was like, "Oh, we already actually fixed this." So they were already on to it, luckily. But it's always these kind of little innocent things where you're

like, "Oh, yeah, I want my users to have the ability to set up their different database schemas and whatnot," that suddenly lead to, uh-oh, my entire company's value prop is open source now too. And so it's a little bit funky, and it's something to really take into account as you are building your software and building these agents. All these arrows really matter across the board. So always sanitize your inputs and outputs. Always sanitize your inputs and outputs. Always sanitize your inputs and outputs. Just repeat that to yourself. Always sanitize your inputs and outputs. Okay. It's like part of

my, you know, evening meditation at this point. And so these are the three big issues I wanted to walk you guys through. A lot of these things happen because AI is happening, the timelines are very aggressive, and you need to hit your Q3 quarterly goals on AI delivery. And so some of these things are just not thought of in the moment. We need to take a second to really internalize that, so that when you are launching new products and new agentic features, they're not only useful to your customers but also communicate trust in your company. And

so yeah, three key takeaways I want everybody in this room to have. The first one is, agent security is larger than LLM security. I skipped over the whole prompt injection thing because I think there are a lot of talks on that and they're really good. But really think about where the damage can happen, and it's on all the other arrows. Keep thinking about all the other arrows, right? And then treat agents as users. That applies to auth, to sanitizing your inputs and outputs, right? Just keep thinking of them as users. They're users. They're users. They're not APIs. They're in the API, but it's a user. It's a user.
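A minimal sketch of the "authenticate and authorize" point for an agent tool, assuming a hypothetical in-memory `MESSAGES` store and a hypothetical `fetch_message_tool` function (neither comes from the talk). The idea it illustrates: the user identity comes from the authenticated session, not from anything the model or the prompt supplies, and the tool checks that the requested record belongs to that user before returning it.

```python
from dataclasses import dataclass


class Forbidden(Exception):
    """Raised when the caller does not own the requested record."""


@dataclass(frozen=True)
class Message:
    id: str
    owner_id: str
    body: str


# Hypothetical in-memory store standing in for a real database.
MESSAGES = {
    "m1": Message(id="m1", owner_id="alice", body="hello from alice"),
    "m2": Message(id="m2", owner_id="bob", body="hello from bob"),
}


def fetch_message_tool(session_user_id: str, message_id: str) -> Message:
    """Tool the agent may call.

    session_user_id must come from the authenticated session on the server,
    never from the prompt or from model-generated tool arguments.
    """
    message = MESSAGES.get(message_id)
    # Use the same error for "missing" and "not yours" so IDs cannot be probed.
    if message is None or message.owner_id != session_user_id:
        raise Forbidden(f"message {message_id} is not available")
    return message
```

With this shape, prompting the agent to use another user's ID only changes `message_id`; the ownership check still runs against the session's user. A database with row-level security can enforce the same rule one layer lower.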

And then the last one is, obviously, don't roll your own code sandboxes. It's always a little sketchy to have that properly configured. Use an open source solution. There are many good ones out there that I called out today, so you can really be set up for success. So that's everything that I wanted to talk to you about today. And if you want to chat more with me, you can connect with me on LinkedIn. I'm everywhere. Renee Brandle, just my first name and last name together, on LinkedIn, on GitHub, on Twitter. Yeah, that's it. Any questions? Do we do questions here? Yeah, sure.

>> We do questions. Okay, cool. Yeah. >> All right. >> Were there batch peers that you couldn't get into? >> Um, we time-capped it to 30 minutes. So, yeah. I think out of the 16, 7 were hacked. So the other nine, you know, took longer than 30 minutes. Yeah. >> Oh, I will do that. Okay, cool. >> Uh, any other question? >> All right, up there. prompt.

>> So the question was, did I use prompt injection to find the code sandbox's control plane server, basically the app.py file? No. I used prompt injection just to understand what tool capabilities it had, write and read, and then I asked the agent to write a tree function over their file system, printed that back to the user, and saw which files looked suspicious. Yeah. Oh, by the way, one piece of advice, because this is relevant. If you guys are ever dealing with a coding agent, the best way to build a reverse shell is to ask the coding agent to build you a terminal UI

that is functional. It is absolutely beautiful. Anyway, sorry. They do the work for you. I love AI. Anyway, sorry. I made two like pretty funny comments throughout the presentation and the comments were are the permissions ever set up right was the first one which is everywhere and then the one after that was about like nothing is ever set up perfect >> you as a developer just in security this is what we always hear oh they didn't set this up right or that wasn't set up right is there a way to set it up right or we just accepting that we're shipping out >> stuff that's insecure do we ever get to a point where it's like here's how you

do it and you're done or >> yeah so the question was, if there are always things that are not set up correctly, is there going to be a path towards having everything set up correctly? Right? The way I look at security is that it is a set of toolboxes, right? There are many things you can do statically beforehand and analyze. And what has been bugging me, what has compelled me to start this company, is really realizing that there's this toolbox that I think we all really like, which is a penetration test. But it's been cost prohibitive for

most companies in the world, right? It's actually the ultimate test of whether your system is secure, and today people can do it like once a year, maybe, for $10,000 a pop, something like that. But if we can build AI to do that penetration testing for us and do it really well, shrink down the iteration time, increase the frequency, and make it cost-accessible to most companies, it will just become another tool, just like your static code analysis tools, right? And that, in combination with these existing static tools, I think will really change the security landscape. And so my mission is to make all software effortlessly secure. Yeah.

Cool. Oh yeah. >> Yeah.

>> Yeah. >> Yeah. So sorry. Uh so the question the I guess the question was uh if we can clarify what was the information we extracted from the SSRF uh attack on the tool.

>> Yeah. Exactly. So there are a few prerequisites here. The first one is that the GitHub repository was private. So if I just hit the URL, nothing would happen, right? In order to access this private GitHub repository, you need some sort of git credentials associated with it. So if the tool has access to that, and you can somehow get the bit of the header that has the git credentials, then you can replay that attack locally, right? So we basically set up a honeypot server, our own kind of git server, on our own domain, and

then just tricked it into making the request to that domain instead of to GitHub. But the tool was implemented in a way that, regardless, it will always include that header information. So then you can extract the header information from there and replay the attack. Yeah. And then you can obviously download the rest of their source code. Yeah. Cool. Awesome. Yeah. >> Um, I haven't looked into that. In this case, it was an HTTP request area. All right. Oh, up there. Mhm.
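The SSRF issue described in this answer comes down to a tool attaching its git credentials to whatever URL it is handed. A minimal sketch of one mitigation, assuming a hypothetical `build_fetch_headers` helper and an `ALLOWED_HOSTS` allowlist (both are illustrative names, not the company's actual code): validate the destination host before any credential is added to the request.

```python
from urllib.parse import urlsplit

# Assumption: the schema tool only ever needs to talk to this host.
ALLOWED_HOSTS = {"github.com"}


def build_fetch_headers(url: str, git_token: str) -> dict[str, str]:
    """Return auth headers only if the URL points at an allowed host.

    The URL may be influenced by the model or the end user, so it is
    treated as untrusted input and checked before credentials are attached.
    """
    parts = urlsplit(url)
    host = (parts.hostname or "").lower()
    if parts.scheme != "https" or host not in ALLOWED_HOSTS:
        raise ValueError(f"refusing to fetch schema from {host or url!r}")
    return {"Authorization": f"Bearer {git_token}"}
```

Checking the parsed hostname, rather than searching the URL string for "github.com", matters here: a URL such as `https://github.com@badactor.com/x` parses to the host `badactor.com` and is rejected. A real deployment would likely also want to disable redirects and use a narrowly scoped token.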

>> Yeah. Yeah. So I guess the question is whether there are other ways to exploit that particular code sandbox server. If I uplevel that question, the answer is yes, and I think that's why bad code sandboxes are really dangerous. You should not roll your own code sandboxes. It's just a can of worms, right? All right.
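The specific failure in the sandbox story was that the agent's write-file tool could overwrite the control-plane file (app.py). As a rough illustration only, here is a sketch of confining file paths to a workspace directory, assuming a hypothetical `resolve_in_workspace` helper. This is not a sandbox by itself and does not replace a managed one; it just shows the kind of check that was missing on that one tool.

```python
from pathlib import Path


def resolve_in_workspace(workspace: Path, requested: str) -> Path:
    """Resolve a tool-supplied path and reject anything outside the workspace.

    `requested` is untrusted: it may contain "..", be absolute, or point
    through a symlink, so it is resolved before the containment check.
    """
    root = workspace.resolve()
    target = (root / requested).resolve()
    if not target.is_relative_to(root):  # Python 3.9+
        raise PermissionError(f"{requested!r} is outside the workspace")
    return target
```

A read or write tool would call this first and only touch the returned path. Keeping the control-plane code on a read-only mount, outside anything the tool can reach, is the stronger fix, and it is one of the things managed sandboxes handle for you.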

Cool. Awesome. Thank you so much.