Who's Driving This Thing? Hacking AI


Hi everybody, I'm Pesto. Thanks so much for coming to my talk on AI security here at BSides DFW 2020. It's my second year in a row doing BSides DFW, and I'm super excited to be here and to talk to you about AI security: some of the projects I've been working on and some of the things I've learned over the last year. Let's start out by quickly going over what the presentation is going to look like. From here I'll introduce myself, talk a little bit about AI, and give a 10,000-foot view of AI security, before we talk about some of the specific threats and attacks we deal with in AI security,

some further considerations, which are really important and really interesting, and finally how we might mitigate some of these attacks. Hopefully we'll do a good Q&A afterwards too. So, just to start out: I've been doing this for about 20 years. Back in the late '90s, early 2000s, I met up with a fun group named Ninja Networks, and I've been hanging out with them for a long time. We used to do a lot of fun things at DEF CON, mostly them; I was kind of the distant Texas contingent of Ninja Networks, but nonetheless we did a lot of fun things at DEF CON, and I've

sprinkled some pictures of Ninja Networks shenanigans at DEF CON over the years that I think are fun, so I hope you enjoy those. Apart from that, I've been a professional since 2000. I started out doing things like firewalls and IDS, then moved on to more pen testing and incident response, and a little bit of forensics work. For the last 10 years I dealt mainly with corporate insider threat and became somewhat of a specialist in that field, but about a year ago I started a new job doing AI security,

and this is what I'm here to talk to you about today: some of the cool stuff I've learned. It's important to understand my background. I don't come from a data science or mathematics background; I come from a hacker and information security background, so I'm approaching AI security from that angle, whereas a lot of the researchers in AI security have a more formal education or more of a background in science and data science. Before we go any further, I want to make it clear that even though I do this for a living, and even though I went to

school for this, none of the information I'm presenting here is representative of my company or my school. These are all products of my own research into AI security over the last year and shouldn't be considered official statements from any particular organization. With that out of the way, let's talk a little bit about AI. The first thing I want to do is differentiate the term AI from some of the other terms. I'm going to use the term AI because it's easy and people recognize it, but it's really not the most precise term. AI has been around for a long time, and it encompasses a lot of different

technologies and a lot of different concepts. More specifically, I'm mainly talking about neural networks. Neural networks are one technique that enables machine learning, and machine learning is one type of AI; that's the relationship between neural networks, machine learning, and AI. So forgive me: I choose to use the term AI, but 99 times out of 100 in this talk you can substitute "neural networks" for "AI" and you'll be right. We should also talk a little bit about machine learning. It mainly falls into two categories. In the first category, I have a bunch of data and I don't really know how to organize it; I don't really know

what groups exist in this data, and I need a way to sort it out, separate it, and cluster it together. So I have models that let me do that just by looking at the data, without any other information. This is, a priori, just looking at the data: what do I see inherent in the data that I can make divisions or separations on? That's called unsupervised learning, and we're mainly not talking about it in this talk. What we're mainly talking about is supervised learning, which means that on top of the data, I've provided labels for that data. The example I'm

going to use throughout this talk is an AI model that identifies hot dogs in pictures: you send it a picture, and it tells you if there's a hot dog in it. The way we would do this, theoretically, is to gather a huge selection of hot dog pictures, from all different angles and of all different types of hot dogs, all labeled as hot dogs so the AI knows what they are. It learns what a hot dog looks like from all of those pictures, and that's called the training data, or the training set. Once it's trained on that training set, it can generalize the abstract concept of a hot

dog, meaning that when you send it new pictures of hot dogs it's never seen before, it still knows they're hot dogs, because we've trained it so well on our training set. It's important to have a basic understanding of what training data is, because it factors a lot into AI security. Now, with that out of the way, I want to kick things off by showing an example of why AI security deserves special consideration, because that's one of the key takeaways from this talk. One of the things I really want to impress on you is that we may think that because AI is just a program, it doesn't really need any special

treatment. It's an application, and we already have tons of information security practices for securing applications all up and down the stack. But one of the things I'd like you to take away, if I do a good job explaining it, is that this just doesn't work for AI. There are special considerations when we talk about this kind of AI, especially neural networks, and that's why they deserve special treatment. In this GIF, we see researchers shining white lights with a commodity projector onto the road. The car thinks it's an actual lane and swerves, and we can understand why

this would be very dangerous if it were done outside of controlled research. Now, this isn't especially technical or sophisticated; a sleepy driver might actually make the same mistake. We can't really get into the philosophical difference between an AI making a mistake and a human making a mistake, but there is a big difference. What I wanted to focus on is the information security principles we all know: how would any of them stop this attack? There are some things that are kind of universal, but the point I want to make is that there are no firewalls or lockdowns or

encryption that's going to fix the guy on the side of the road with the projector. Some of you might have seen the guy who caused a traffic jam on Google Maps with a Radio Flyer wagon full of cell phones with location turned on; call this the Radio Flyer attack. This is one kind of attack we have to take into consideration when we're deploying AI into the wild. I want to bring your attention to this graph on the right. It was made by Nicholas Carlini, who's a bit of a rock star in AI security. He

co-developed an attack called the Carlini-Wagner attack, named after him and his co-author. He's given tons of talks and written tons of papers; he's a very accomplished researcher and developer. He keeps track of all the research papers released on a specific type of AI security attack called adversarial examples, or adversarial perturbations. We're going to talk about those later today, but they're just one type of attack. You can see that just in the last year, people are releasing more than one paper a day. It's blowing up, and I personally haven't seen anything this Wild West since the late '90s or early 2000s, since the internet

boom. Back then you couldn't get a degree in information security; I don't know if there were any real certifications when I started, and there was very little good guidance on how to secure networks. It's very similar in the AI space today. Because these attacks are specific to AI, and because they're not covered by traditional information security practices, it's a bit of the Wild West, and there's no real authority we can look to for guidance on how to securely deploy AI. That's the second takeaway I want you to have from this talk: that this is important,

and right now it's really open and there's not a lot of guidance out there. We'll talk about the third takeaway a little later, but if we at least remember the first two, that information security practices aren't going to cover AI and that there's no established guidance, then I think we've done pretty well already. I'll see if I can convince you of some of this as we go on. Let's talk about why AI is at risk. This may be apparent to some of you, or it may not. A lot of us just like to hack things because we think it's fun, even though it may not benefit us in one way or

another, other than bathing in the sweet glory of the attack. But this is a real deal. Say you have an AI that predicts whether or not an applicant will pay back a loan, and based on that decision, your financial institution chooses whether to give this person money. If I were somebody with not very good credit, it might benefit me to trick that AI into making a decision it wouldn't normally make. Another attack is not about getting a favorable decision but about stealing the model itself. This may be IP; it may be a competitive

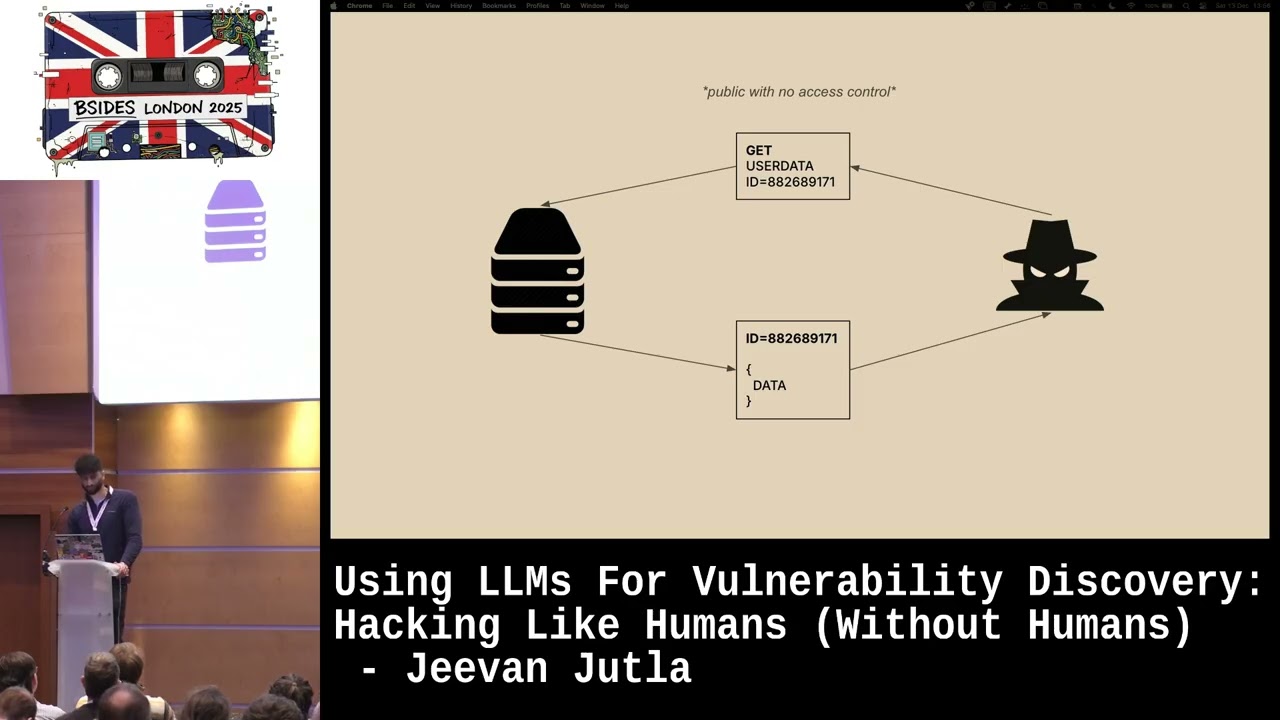

advantage, whatever. Stealing the model itself is another attack. And finally, there's the information that was used to train the model; we're going to talk a little bit about how that's at risk. What's important to say at this point is that almost none of the attacks we're going to talk about require privilege escalation. These aren't hacks in the sense of gaining authorization where you didn't have it, or exploiting code to penetrate a defense or access a server or resource. We're not going to do any of that; we're simply going to use the AI

in a way it wasn't intended to be used. It's pretty fun. I wanted to make clear that very little of this requires privilege escalation. There are a couple of times where we think about what could happen if somebody did have access, but I try to stay away from that stuff. This is the CIA triad. You may recognize it; if you don't, don't worry. It's a way a lot of information security is taught: if we consider the confidentiality, integrity, and availability of our data, then we're doing a good job of securing that data, and we're improving our security posture

the more we can assure the confidentiality, integrity, and availability of our data. What I'm going to do is talk a little bit about how these differ for AI compared with what they mean in information security. I'm also going to show you where the triad falls short and what needs to be added to cover AI. I'll start out by fudging a little and saying availability is pretty much availability. I don't know of any real AI-specific attacks on availability; it's either available or it's not. Sure, you can ask it so many questions that it can't answer any others, but

just know it's better that your model is available than not. So, barring availability, we're going to talk about AI-specific threats, how they fit into the CIA triad, and how they don't. Let's start with confidentiality. In traditional information security, confidentiality is about keeping data that shouldn't be seen by people unseen. When we think about confidentiality, we think about authorization, encryption, perimeter defenses; a lot is covered under confidentiality. It's about keeping private data private. It's the same thing in AI, but specifically, when I talk about confidentiality in AI, what I'm talking about is an

attack called model extraction: I'm talking about keeping the model private. As far as I know, it's almost unprecedented how much information is available on AI. If you wanted to build a very powerful nuclear submarine, you might be able to find plans on the internet, download them, build it, and it may work. I don't think so, and I wouldn't do it, but you may be able to. You can do that with AI, and AI is very, very powerful; granted, maybe not as powerful as a nuclear submarine, but you never know when you're dealing with AI. It's all out there, and I won't say it's simple,

but it's relatively easy to get started and to start building your own models. There are tons of free classes and tons of YouTube videos out there; it's wide open. And neural networks especially have a feature known as transferability. What transferability means is that an AI that's good at doing one thing is probably good at doing that thing across the board, no matter what the inputs are. Let me explain it a little better. Say I had my hot dog identifier, and no matter what hot dog you throw at it, you're not going to get one past this model; it's just the best hot dog

identifier in the universe. Chances are, if it's good at identifying hot dogs, it's probably also good at identifying enemy Warcraft, er, enemy aircraft, or political dissidents, or things we weren't thinking of when we designed the model: uses we didn't want, or didn't know were possible. This makes models particularly attractive to theft. If you have a model that's really good and I want it, I might try to steal it from you. One way to do that is to lift the server it's on onto the

back of a truck, or get a shell and scp it out, or whatever. But what I'm going to talk about is something called model extraction. In this attack, we basically ask your AI a lot of questions and learn from the answers; we're actually getting your AI to train my AI. If I wanted a hot dog identifier, I might build a rudimentary model that learns from your model. I send your model, "Hey, is this a hot dog?" and your model says, "Yes, that's a hot dog." Now my AI knows it's a hot dog. And if your model says no, well, I

know that picture is a little bit different and that it's not a hot dog. I can build a very similar set, and I can optimize my AI to match your answers. Once your AI has trained my AI, we have an AI that's almost indistinguishable from yours: I've basically copied your model. I've extracted your model, and then I can do a lot of things with it. For example, I can transfer it to identify other things: perhaps Warcraft, whatever that is, I guess the box DVD sets, I don't know; enemy aircraft, or whatever else I want. So that's transferability, and that's model extraction.
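To make the query-and-copy idea concrete, here is a minimal Python sketch under toy assumptions, none of which come from the talk. The "victim" is a hypothetical two-feature linear rule standing in for a real hot dog classifier, and the attacker only ever sees its yes/no answers. A simple perceptron stands in for the "rudimentary model" that learns to match those answers. Real image models need far more queries and a much richer surrogate. The names `victim_predict`, `extract`, and `agreement` are illustrative only.

```python
import random


def victim_predict(x):
    # Hidden rule the attacker cannot inspect: 1 = "hot dog", 0 = "not hot dog".
    # x is a hypothetical 2-feature summary of an image, e.g. (redness, elongation).
    return 1 if 0.8 * x[0] + 1.2 * x[1] > 1.0 else 0


def surrogate_predict(model, x):
    # The attacker's own linear model: weights w and bias b.
    w, b = model
    return 1 if w[0] * x[0] + w[1] * x[1] + b > 0 else 0


def train_surrogate(queries, labels, epochs=50, lr=0.1):
    """Perceptron training: nudge the attacker's weights toward the victim's answers."""
    w, b = [0.0, 0.0], 0.0
    for _ in range(epochs):
        for x, y in zip(queries, labels):
            err = y - surrogate_predict((w, b), x)  # -1, 0, or +1
            w[0] += lr * err * x[0]
            w[1] += lr * err * x[1]
            b += lr * err
    return w, b


def extract(n_queries=2000, seed=0):
    rng = random.Random(seed)
    # Step 1: ask the victim "is this a hot dog?" many times and record the answers.
    queries = [(rng.random(), rng.random()) for _ in range(n_queries)]
    labels = [victim_predict(x) for x in queries]
    # Step 2: train our own model to reproduce those answers.
    return train_surrogate(queries, labels)


def agreement(model, n=5000, seed=1):
    """Fraction of fresh random inputs on which the copy matches the victim."""
    rng = random.Random(seed)
    tests = [(rng.random(), rng.random()) for _ in range(n)]
    return sum(surrogate_predict(model, x) == victim_predict(x) for x in tests) / n


if __name__ == "__main__":
    stolen = extract()
    print(f"copy agrees with victim on {agreement(stolen):.1%} of fresh inputs")
```

The agreement score measures how often the copy answers like the original on inputs neither side trained on. A high score means the attacker now holds a stand-in that can be probed offline, which is the surrogate idea discussed next.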

There's one more concept I want to talk about, and that's the surrogate model. It's one more use for this: if I extract your model, I now have a surrogate copy, and I can hammer at it with a bunch of different attacks to see what works. Once I've got a good attack down, I can launch it against your AI, fairly confident it will work because it worked against the surrogate. Does that make sense? It's kind of like having your own lab copy to hammer on. So: surrogate model, model extraction, transferability. Oh yes, and machine

learning as a service. Let's say your business provides machine learning services. For example, other people might want hot dogs identified in their pictures, so they pay you a nickel each time they send you a query asking if there's a hot dog in a picture. What might happen is that each time they ask, they're training another model, until they've got a surrogate model and don't need your model, or your service, anymore. If you're offering machine learning as a service, this is something to be aware of: you're particularly susceptible to this kind of attack, or at least this kind of attack would

affect you more than it would affect other people. So we've talked about model extraction, surrogate models, and a bunch of cool stuff. Now I'm going to talk about integrity. When we think of data integrity or file integrity, we're talking about making sure we can trust the data: that it hasn't been fiddled with, and that we can trust where it's coming from. Technologies that come to mind are things like hashing, checksums, and certificates. With AI, I'm going to talk a little bit about poisoning, and data poisoning is, I think, a fairly intuitive concept. If I'm depending on this set of

training data, I'm depending on all of it being labeled correctly. Well, if you're able to put in a bunch of pictures of hamburgers labeled as hot dogs, my model learns that hamburgers look like hot dogs, and it starts identifying hot dogs where there are none. If I'm able to put in those hamburger pictures, then I've poisoned your model. One way to do that is to access the database or data warehouse where these pictures live without authorization and just put them there, but I'm not going to talk about that much, because I think traditional

information security practices would apply there. What I am going to talk about is the fact that we often don't control the training data; we often put that control in the hands of end users, or even the public at large. A good example is spam filters. Let's say you have a button that says "Report spam." When you see spam in your inbox, you click "Report spam," and I want you to imagine that this is sent to an AI: what you send to the AI is the content, the piece of spam,

and the label "this is spam." Then you can build a training set, your model can learn what spam looks like, and when you roll out that model it'll start denying those kinds of emails. What will happen, of course, is that spammers will change how the spam looks a little until it bypasses the filter, you click "Report spam" again, and your model is updated. In this case we've ceded control of our training set to the users, and enough malicious users, or the right malicious use, could enable a spammer to bypass the spam filter, basically by mislabeling data. Or, if they're a little

bit more sophisticated than that, they label certain phrases and then include those phrases in key parts of their email. But you can think of it simply: the model draws a line, with all the spam over here and all the ham, the good email, over here. We want to take an email from this side and put it on that side so that it gets delivered, and to do that we just need to move that line a little bit. That's called model drift, and that's

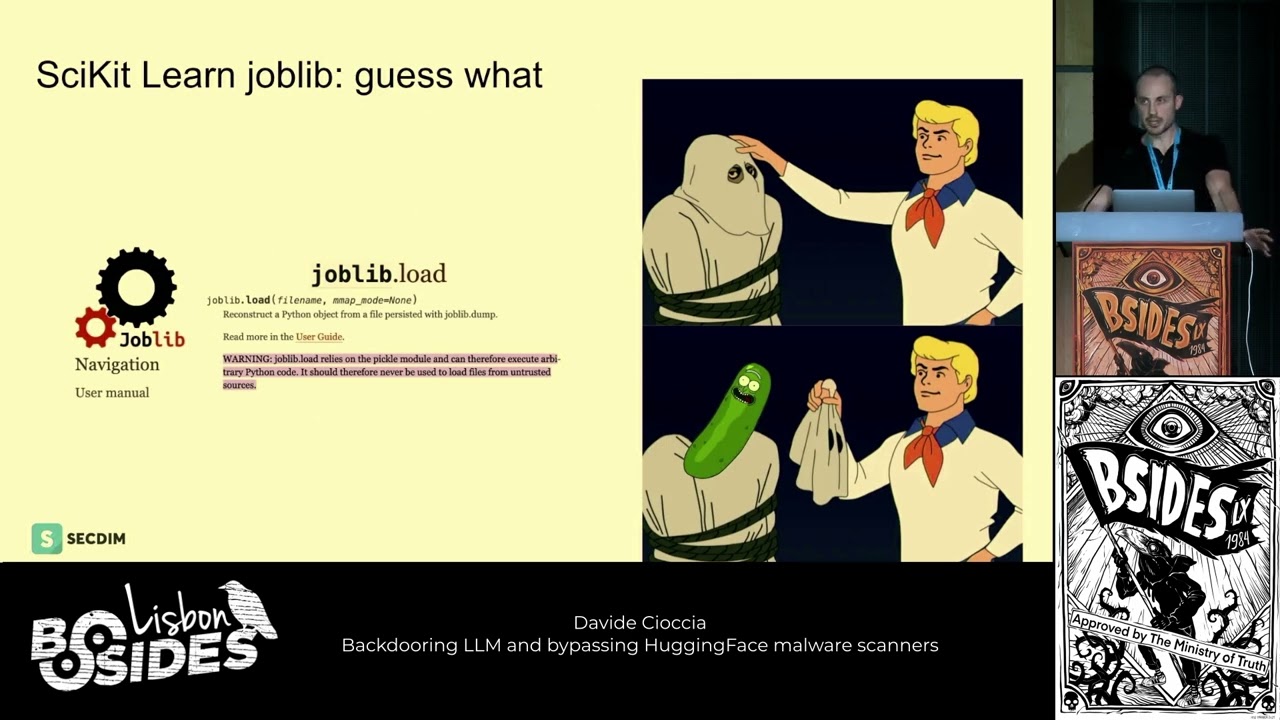

an indicator of model poisoning, of doing exactly what we just talked about. You can measure this. A good way to mitigate it is to keep a known input with a known output for your model and make sure the output stays consistent, or, if it doesn't, that you understand why. If you suddenly start getting different answers, it may be an indication of model drift. Model drift can happen in the normal course of business, but it could also be an indicator of compromise. Backdoors: the terminology isn't necessarily equivalent to what it means in infosec, and not everybody uses the term, but there are papers

that use it. What they mean by a backdoor is basically poisoning the data but not exploiting it until later; that's all it means. For example, say we were teaching cars what a right-turn sign is by noticing a car turning right, noticing all the signs around it, and finding the common right-turn sign. Now let's say someone started putting markings on those right-turn signs. Your AI learns that those markings are part of what a right-turn sign looks like, and you deploy this model in all of your

wonderful cars. No one's going to notice anything wrong: a right-turn sign doesn't need those markings, because the model can see the rest of the sign and still recognize it. But if the model sees those markings on something like a stop sign, it may read it as a right-turn sign. So if I go put those markings on stop signs and fool your AI, I've triggered that backdoor. It's still model poisoning, in this case data poisoning, but it isn't evident and isn't triggered until later, which is why it's called a backdoor. Again, no privilege escalation is required to do this in many, many cases, especially when

dealing with model drift. The spam filter was one example, but it's surprising how often, because it takes so much data to train AI, that data is sourced from places outside our control. We talked about the C, talked about the I, and glossed over the A; now we're going to talk about the R and the P. I would like to introduce you to the CIA-RP pentad. It's a very catchy name. It's not a real thing; I just made it up. But if you want to make it a thing, I'm cool with that: you can go around talking about the CIA-RP pentad as if everybody should know what it is.

I think that you should, absolutely. Anyway, I've added privacy and robustness. We all know what privacy is, we all know how important it is, and we all know that nobody cares about it, but they should. I'm going to explain why privacy is a little bit different when we talk about AI, but I'm going to spend the most time on robustness and adversarial perturbations. This is what Nicholas Carlini was graphing earlier, and it's really the meat and potatoes of AI security: this is what all the hubbub is about, and this is where a lot of the research is.
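Since adversarial perturbations are the focus from here on, here is a minimal sketch of one well-known way to compute one. It uses the fast gradient sign method (FGSM, from Goodfellow et al.), which is simpler than the Carlini-Wagner attack mentioned earlier. The talk itself does not walk through FGSM. The "model" is a hypothetical logistic-regression classifier over 200 pixel-like features in [0, 1], which lets us write the input gradient by hand. Against a real neural network you would get that gradient from an autodiff framework instead. All names and numbers here are illustrative assumptions.

```python
import math
import random


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def predict_proba(w, b, x):
    """Toy logistic-regression classifier: probability that x is class 1 (say, "hot dog")."""
    return sigmoid(sum(wi * xi for wi, xi in zip(w, x)) + b)


def sign(v):
    return (v > 0) - (v < 0)


def fgsm(w, b, x, y, eps):
    """One FGSM step against logistic regression with cross-entropy loss.

    For this model the loss gradient w.r.t. input feature i is (p - y) * w[i],
    so every feature moves by eps in the direction that increases the loss.
    Results are clipped to the valid pixel range [0, 1].
    """
    p = predict_proba(w, b, x)
    return [min(1.0, max(0.0, xi + eps * sign((p - y) * wi))) for xi, wi in zip(x, w)]


def demo(dim=200, eps=0.05, seed=0):
    rng = random.Random(seed)
    w = [rng.uniform(-1.0, 1.0) for _ in range(dim)]  # hypothetical trained weights
    x = [rng.uniform(0.1, 0.9) for _ in range(dim)]   # hypothetical clean input
    # Choose the bias so the clean input is confidently class 1 (logit = 3).
    b = 3.0 - sum(wi * xi for wi, xi in zip(w, x))
    x_adv = fgsm(w, b, x, y=1, eps=eps)
    return predict_proba(w, b, x), predict_proba(w, b, x_adv), x, x_adv


if __name__ == "__main__":
    p_clean, p_adv, x, x_adv = demo()
    biggest = max(abs(a - c) for a, c in zip(x_adv, x))
    print(f"clean: {p_clean:.3f}  adversarial: {p_adv:.3f}  max per-feature change: {biggest:.3f}")
```

No single feature moves by more than 0.05. Even so, the many small, coordinated changes add up and flip a confident "class 1" prediction to "class 0". That is the same effect the stop sign and snowy-image examples show on real computer vision models.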

So let's get into it. Model robustness is the degree to which your model withstands attacks from adversarial perturbations. I'm going to talk about the attack on the right-hand side. The gentleman in the top-left picture was correctly identified as himself by a facial recognition AI that was state of the art at the time; when he put on the funny glasses, he was identified as Milla Jovovich. One of the interesting things about this attack is that it's what's called a targeted attack, and there are a couple of different use cases we can think of. The first one is in a far-future dystopian place where this would never

surely happen in real life. Imagine facial recognition technology at airports that compared your face to the faces of known wanted people, and if there was a match, you weren't able to board the plane, and the marshals came and got you and made your day very bad. In that case, a bad actor wouldn't really care who the AI thought they were, as long as the AI didn't think it was them (or perhaps another bad actor). The point is that it doesn't have to target any specific person; it can be anyone but me, and that's called an untargeted attack. But this is actually a

targeted attack. Those are Milla Jovovich glasses: when you put them on, it's always Milla Jovovich, not someone random. It's a specific pattern on those glasses, and they're just inkjet printouts cut out and glued onto drugstore glasses. Not very high-tech, and it convinces the AI that it's Milla Jovovich. On the right-hand side, we see a stop sign with some stickers on it that make the model think it's a Speed Limit 45 sign. I don't want to confuse anybody: this is not model poisoning, not like the stop signs we talked about a slide or two ago. It's completely different. We did not poison the model here; this is just

the way this computer vision AI worked. The way it decides between a stop sign and a speed limit sign is subtler than we may think, and it can be tricked by small alterations, like a few stickers. This is just the way these neural networks work. How do we exploit this? We could put a bunch of stickers on, or try different colors, and see what happens (let me know how it works), or we can use the toolkits already out there, built by people who have already discovered

these attacks. Finding new attacks yourself is super cool, but if you're new to this, we'll talk later about a couple of tools you can use to get started. First I want to go a little more in depth on adversarial perturbations, because this is often what they look like. These are read left to right, not up and down. In the top left we have the Alps. It kills me that whatever AI was in this research paper could not only correctly identify the white bit as a mountain, but as the Alps mountain chain; that is

unbelievable, especially with that degree of certainty. Add to it this adversarial perturbation, which we generate as a bad actor, an attacker, or a security tester: we overlay one onto the other and get the input on the right. The image on the right is called an adversarial example; we create it by adding the adversarial perturbation, which gives us this kind of snowy figure. In the lower right, the pufferfish is now a crab, and the change is hardly noticeable. One of the things I've started to notice is that Google's CAPTCHA looks like this sometimes now, and I don't know if they're training their models to ignore adversarial

perturbations or not. But I can imagine that if they say "click all the buses" and they've applied adversarial perturbations to those pictures, they can learn what a bus with an adversarial perturbation applied looks like and label it that way, so if they ever see a bus with that perturbation, they know not to trust the classification. I don't know if they are, but when I saw it, it occurred to me that perhaps they're training their model to recognize adversarial perturbations, which is one way to increase your robustness. It's not a great way, because unfortunately

the workaround is to add another adversarial perturbation on top of that, which does the exact same thing, and then you get into how many layers deep you're going to go. Depending on your use case, that may or may not be feasible. I love this one: dog, 99.99%. I wish I could get an AI to be that certain; if I saw that, I would think my model was overfit, and I don't know if I would even trust it. And being 100% sure that's a crab is a little odd. But anyway, the technology they're describing is sound. Adversarial perturbations do work, and because they operate on the way

computer vision models work, they're very hard to mitigate. The best thing to do is to test this yourself so you know your risk before you deploy, and we will talk about how later. But first I want to talk a little bit about model privacy. This is weird. If I told you your model may be leaking training information, you might not think that's strange. But if you know a lot about AI, you might look at me like I was an idiot, because the training data is not located anywhere in the AI. You can delete the training data after the model is trained; you don't need

it anymore. It's not like the model is checking in with the training data. If you were to run a debugger on the model, you wouldn't see any information from the training data; all you would see are the weights it assigned to each neuron, to each decision, kind of. Each one is a coefficient: a number that represents the decision, or the feature, rather than the data. So the training data isn't located anywhere in there. Nonetheless, there are certain circumstances in which your model may leak training data. I'm not going to lie, this is not that common; this is kind of a fringe example.
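The claim that a trained model holds only weights, not the training data itself, is easy to see in a toy example. This minimal sketch assumes a hypothetical two-feature logistic-regression model trained with plain stochastic gradient descent. After training, the training set is deleted, and the model's entire state is two weights and a bias, yet it still classifies new inputs. That is why the leakage described next is surprising: it works through the model's outputs, such as confidence scores, rather than by reading stored data.

```python
import math
import random


class TinyLogisticModel:
    """Hypothetical 2-feature logistic-regression classifier.

    Its only state is one weight per feature plus a bias term.
    """

    def __init__(self):
        self.w = [0.0, 0.0]
        self.b = 0.0

    def predict_proba(self, x):
        z = self.w[0] * x[0] + self.w[1] * x[1] + self.b
        return 1.0 / (1.0 + math.exp(-z))

    def fit(self, X, y, epochs=100, lr=0.5):
        # Stochastic gradient descent on cross-entropy loss; dL/dz = p - y.
        for _ in range(epochs):
            for xi, yi in zip(X, y):
                err = self.predict_proba(xi) - yi
                self.w[0] -= lr * err * xi[0]
                self.w[1] -= lr * err * xi[1]
                self.b -= lr * err
        return self


def train_then_discard(seed=0, n=500):
    rng = random.Random(seed)
    X = [(rng.random(), rng.random()) for _ in range(n)]
    y = [1 if a + c > 1.0 else 0 for a, c in X]
    model = TinyLogisticModel().fit(X, y)
    del X, y  # the training set is gone; only the fitted numbers remain
    return model


if __name__ == "__main__":
    model = train_then_discard()
    print(vars(model))  # just 'w' and 'b'
    print(round(model.predict_proba((0.9, 0.9)), 3), round(model.predict_proba((0.1, 0.1)), 3))
```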

But it is important to know that these things exist, because you never know when the next big thing might blow up. This isn't that common, and it requires a very precise set of conditions. One of them is returning a confidence score. Remember when it said crab, 100%, or Alps, 94%? That's a confidence score, and if you return it to the user when they ask you to classify something, it can be used against you. So the upshot is: if you don't have to return a confidence score, don't, because this can happen. Say you have this guy's name; I'm gonna

call him Kyle, Kyle here on the right. You have his name, and you have a model that takes a picture and returns a name and a confidence score, like 50% sure this is Bob and just 20% sure this is Sue. I can basically just send it random stuff until it thinks it's, say, 0.001 Kyle. Then I can build off of that, taking that small percentage and optimizing for a larger one until I come close to the original picture. I add a squiggle here and a squiggle there, and now I'm at two percent. Oh

great. So I keep those changes and keep adding onto them using a specific technique, and we come up with a general idea of the picture, like the images on the left and right. Kind of scary images. This is known as model inversion, and it's especially disturbing when it's paired with a concept called membership inference. Membership inference, I would imagine, is kind of big in the OSINT world. What it means is that if you take one set of data, like the training set, and combine it with another set of data, it becomes quite powerful; you don't need that much information to positively identify someone. If we look at the example on the right from this

white paper, we can see that a publicly available voter registration list, available for purchase, contained all this information, including ZIP code, birth date, and sex. If we match ZIP code, birth date, and sex against medical data, we can glean the names and addresses of the people in the medical data, because not that many people share the same ZIP code, birth date, and sex. You wouldn't think it'd be that precise, and you can't be 100% sure, but the confidence is actually rather high in this example. So that's membership inference, and when it's teamed with model inversion, it can mean some pretty nasty stuff. This is still a

little bit fringe, and it does require a very specific setup in the environment. So hopefully that was helpful, or at least interesting; personally, I think it's kind of exciting. I put further considerations at the end, and I didn't talk a lot about them, not because they're not important but because I didn't want to scare anybody away from the third takeaway, which is that there are things we don't normally talk about in information security that, in the context of AI, we should. I'm going to make the argument that explainability, ethics, and fairness should be the job of the information security professional, of the people who

are doing AI security. The biggest reason for that is that nobody else is right now. That doesn't mean people don't care; tons of people out there are doing great work and great research on this, but not many of them come from an information security background. Try this: go to someone in your company and ask who's responsible for making sure we only release ethical AI, who talks to the vendors of the applications we use that rely on AI, and who's making sure those vendors are enforcing fairness and ethics standards. If you do that, I would love for you to email me their answer, because it's so

new that not a lot of people are paying attention to it, and it's a big deal. It doesn't matter how secure your AI is, or how well you're protected against adversarial perturbations and model inversion, if your AI is out there doing things an AI shouldn't be doing in the first place, or if it's making unfair decisions. I'm going to talk real quick about explainability, which is a bit of a contentious issue. What it means is this: if your AI makes a terrible decision that puts you in the news, and somebody comes to you and asks how this happened and why your AI chose this, if your

answer is something like "I don't know," "nobody knows," or "because stats and probabilities," that's not really a great answer. AI explainability is about understanding what features went into the model and how your model used those features to reach a decision. It sounds pretty straightforward, but it's contentious, because not everybody (and I'm actually one of them) likes the idea of calling it explainability, since it doesn't really explain much. Say I tell you I thought this was a hot dog because it's kind of red like a hot dog and it's kind of long like a hot dog,

and I know, because I've researched this and AI explainability is very important to me, that these are the two biggest features my AI uses to decide whether or not it sees a hot dog. That honestly doesn't explain much. Well, what is long? How red does red need to be? The more you dig in, the vaguer your answers get, because the answer is something like stochastic gradient descent and other hard things, things we don't always understand 100%. Calling that "explained" is misleading. But my stance is that any explanation you

can give that gets you closer than nothing, that is more accurate than nothing, is better than nothing. You should be able to do some elementary analysis: you should know what the features in your model are, and you should understand how the model uses them. One of the tools you can use for this is LIME analysis, which does just that: it tells you which features played into each decision. That brings up another problem with explainability. Someone says, "AI explainability is very important to me, so I've done this complete LIME analysis; it should answer all your questions." Okay, well, how does a LIME analysis work?
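As a rough sketch of how LIME (Local Interpretable Model-agnostic Explanations) answers that question: it perturbs one input, queries the black-box model on the perturbed copies, weights each copy by how close it is to the original, and fits a simple weighted linear model whose coefficients serve as per-feature importances. The Python below is a simplified, hypothetical illustration of that idea, not the `lime` package's API; the toy hot-dog scorer, its two features (`redness`, `length`), the all-zero baseline, the kernel width, and the sample count are all assumptions.

```python
import math
import random


def hot_dog_score(features):
    """Hypothetical black-box model: P(hot dog) from two made-up features in [0, 1]."""
    redness, length = features
    return 1.0 / (1.0 + math.exp(-(4.0 * redness + 2.0 * length - 3.0)))


def solve(a, b):
    """Solve a @ x = b by Gauss-Jordan elimination with partial pivoting."""
    n = len(b)
    m = [list(row) + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(n):
            if r != col:
                factor = m[r][col] / m[col][col]
                m[r] = [x - factor * y for x, y in zip(m[r], m[col])]
    return [m[i][n] / m[i][i] for i in range(n)]


def lime_explain(model, instance, baseline, num_samples=500, kernel_width=0.75, seed=0):
    """Simplified LIME: randomly switch each feature between its real value and
    a baseline, weight samples by closeness to the original, and fit a weighted
    linear surrogate on the on/off masks. Returns one weight per feature."""
    rng = random.Random(seed)
    d = len(instance)
    rows, targets, weights = [], [], []
    for _ in range(num_samples):
        mask = [rng.randint(0, 1) for _ in range(d)]
        perturbed = [x if keep else b for x, b, keep in zip(instance, baseline, mask)]
        distance = 1.0 - sum(mask) / d  # fraction of features switched off
        rows.append([1.0] + [float(k) for k in mask])  # intercept column + mask
        targets.append(model(perturbed))
        weights.append(math.exp(-(distance ** 2) / kernel_width ** 2))
    # Weighted least squares via the normal equations (X^T W X) beta = X^T W y.
    p = d + 1
    xtwx = [[sum(w * r[i] * r[j] for r, w in zip(rows, weights)) for j in range(p)]
            for i in range(p)]
    xtwy = [sum(w * r[i] * y for r, y, w in zip(rows, targets, weights)) for i in range(p)]
    return solve(xtwx, xtwy)[1:]  # drop the intercept


importance = lime_explain(hot_dog_score, instance=[0.9, 0.8], baseline=[0.0, 0.0])
print(dict(zip(["redness", "length"], importance)))
```

For this made-up scorer, redness comes out with the larger weight. That mirrors the point being made here: the output ranks the features, but it still can't tell you how red is red enough.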

You get into the exact same problems: how does the LIME analysis know that this is...? You get into the exact same problem. So explainability is a bit contentious, but something is better than nothing. Ethics and fairness come in a couple of different flavors. The biggest ethical issue is whether AI should be making this decision at all, or whether a human being should. Is there a human in the loop, so to speak, or does the AI make decisions by itself, and if so, is that okay? Like I said at the beginning, there's a difference between AI making a mistake and a person making a mistake. If a person makes a mistake, you can say Bob, you made a mistake, and

we're going to make sure you don't make this mistake again, one way or the other. If AI makes a mistake, who's responsible for it? You don't always have attribution with AI. With people, sure, someone may try to get out of the blame, but we all still kind of know who did it. AI adds a layer of abstraction that sometimes makes this very difficult, especially when it's, oh, we didn't even make this AI, we bought it from a vendor. It can get pretty difficult. And if you're using AI to do something that affects people's lives and you don't understand how that AI works, you're putting yourself in a very precarious position. We've seen this before; unfortunately, this has really

affected people's lives before. There is one example you can look up about an AI that was used in the criminal justice system to rate recidivism (I'm not going to be able to say it), basically the odds that someone would commit a crime again if they were released on parole. So this AI was making recommendations about who got parole and who didn't. Unfortunately, it was a pretty racist AI. It learned from actual parole cases, and the unfortunate part is that the inherent bias in the justice system, and racism in the justice

system, was used to train this AI, and you got a racist AI out of it. It's bad for people to make decisions based on this, but I maintain that it's even worse if AI does, especially if people don't understand that that's how it works. We need to be very careful about whether AI should be making these kinds of decisions at all. We need to understand what happens if it doesn't work the same for everybody. What if only a certain population is able to receive the benefits of this AI because of the way they look, or because of some other protected feature?
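One simple way to start asking whether a model works the same for everybody is to compare how often each group receives the favorable outcome. The sketch below uses hypothetical decision data with a group label per record; the 0.8 cutoff is the four-fifths rule of thumb from US employment-selection guidance, used here only as an example threshold, and a low ratio is a prompt for review rather than proof of bias.

```python
from collections import defaultdict


def favorable_rates(decisions):
    """decisions: iterable of (group, favorable) pairs. Returns the favorable-outcome rate per group."""
    totals, favorable = defaultdict(int), defaultdict(int)
    for group, outcome in decisions:
        totals[group] += 1
        favorable[group] += int(outcome)
    return {g: favorable[g] / totals[g] for g in totals}


def disparate_impact_ratio(rates):
    """Lowest group rate divided by the highest; 1.0 means identical rates."""
    highest = max(rates.values())
    return 1.0 if highest == 0 else min(rates.values()) / highest


# Hypothetical audit data: (group, did the model recommend release?)
decisions = ([("group_a", True)] * 60 + [("group_a", False)] * 40
             + [("group_b", True)] * 30 + [("group_b", False)] * 70)
rates = favorable_rates(decisions)
ratio = disparate_impact_ratio(rates)
print(rates)  # {'group_a': 0.6, 'group_b': 0.3}
print(ratio)  # 0.5
if ratio < 0.8:  # four-fifths rule of thumb, used here only as an example threshold
    print("flag for human review")
```

A check like this only looks at outcomes; it can't tell you why the rates differ, which is where the explainability work discussed above comes back in.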

Things like that, really, are the most important part, and that's the third takeaway: as information security professionals, this should be in our domain and under our purview. Almost last: how do we actually go about fixing this? That's really going to be the subject of my next talk, so I'm not going to give it all away here, but I do want to give a little teaser. It begins with a comprehensive risk assessment. It involves really understanding those features and how they're used in the AI, and understanding which models are being used and how. Once we've done a lot of that research, we start actually testing robustness, inversion,

all these things we've talked about, and understanding what our risk is. Then we continue to monitor the model in production, as it does its inference, to see whether it's behaving correctly. That is pretty much all I've got. I have had so much fun talking with you about all this. Before I go, this is my email address: reach out to me if you're curious about this, if you're a professional doing this, or if I've made a mistake. Hit me up in the Q&A or by email and give me both barrels. arXiv.org is where a lot of these papers are released, where you can get

the primary documents, the actual source documents and white papers, not some article about the document. IBM's ART, the Adversarial Robustness Toolbox, is a very simple toolkit. It's a Python library (Python is what a lot of people code in to do AI), and basically you just tell it what attacks you want to run against a model and it goes to town. It's very cool; I've used it and I recommend it. The ART developers have a Slack that you can join to ask them questions. Another one that was recently introduced to me is PrivacyRaven, and that uses PyTorch,

which is a bit of a higher-level layer of abstraction, so it makes doing AI a little easier, one might say. I know the author of PrivacyRaven is looking for feedback on what else it can do, how it can be improved, and how people are using it, so definitely give it a try. There are tons of other ones out there, such as Foolbox and CleverHans, but I wanted to bring these two to your attention. XAI is a buzzword: it stands for explainable AI, and googling that term, XAI, is a great way to get right where you want to be when it comes to explainability.

Fairness, accountability, and transparency: Google has a good document and Microsoft has a good document. Both of them are looking into this and coming up with ideas on how we can get a handle on accountability and transparency. Finally, the AI Village at DEF CON. They have a Discord; hit them up on Twitter to get information about it, join the Discord, and join the discussion. These are really smart folks who are right on the cutting edge of this research, they're good people, and they're great to know and to have as resources to discuss AI security with. And with that, that is my time.

Again, I really appreciate this opportunity to talk at BSides DFW again. Thank you so much for attending. I hope it was exciting, or interesting, or at least useful, but in any event, I really appreciate all of you. Thank you so much, and I'll see you later. Bye!