Using LLMs For Vulnerability Discovery: Hacking Like Humans (Without Humans)

Show transcript [en]

Hello everyone. Firstly, thanks to everyone at Bsides, all of the staff and the sponsors for putting on the event today. So, I want to talk about hacking like humans but without humans. This is a culmination of around 8 months worth of research and development between myself and a few others. So, I'm really excited to actually get this out in the open and share this with everyone. So, just as an introduction before I get started, I'm JJ, one of the co-founders of Gecko Security, where we build an AI security engineer. I started off doing security research and pen testing for the government. Then I worked in a few red teams mainly in the financial sector focused on security tool development.

So, I think when you first see the title, you might think like what a weird thing to say like why do we need to hack like humans? Why can't we just hack however we want? like why does it matter what the workflow or the methodology we uses? I think a good way to answer this question is to go back and look at a few different vulnerabilities and how they've evolved over time. So I wanted to start with something a bit more engaging and I have a snippet of code here that contains a vulnerability. So a question for everyone in the audience. Can anyone spot what the vulnerability is? I guess you can just shout it out.

But yeah, I'm hearing a bunch of people say, "Yeah, it's a SQL injection." But obviously, if you didn't know that, it's 2025. So, we can ask Chad GBT the answer for everything. And it confirms that the value of the user is inserted directly into the query without any sanitization. So, if we go back to 2017 when OASP released their top 10 list, we can see the number one category is injection attacks. So, vulnerabilities just like this. And this made sense. Injection attacks were very widespread and a big issue, especially in all the legacy code that was running everything. And for those that remember, pretty much every pen test that was done back then ended in some form of a dumped database. Like

it was so widespread, we even had memes for it. So for those that don't know, this is the Bobby Tables meme. So a parent named their kid Robert dropped tables. The school added him to the database and he dropped the table. So it's just a textbook SQL injection that was me. So if we fast forward to 2021 when we started to realize this was such a big issue. We started to improve our security around injection attacks and this was reflected in that year's top 10 list where injection attacks dropped down to third place and looking at it today with OASP updating their list every four years. Last month they released a 2025 version and we can see

injection attacks dropping down to number five. So this is good security rate over time. What was number one ranked issue is now dropped down to number five. So, how did we actually fix this SQL injection problem? Well, over the last few years, we've made several shifts to stop these issues being committed to new code. Modern frameworks started to evolve and become secure by default. We had things like parameterized queries and prepared statements and they became the standard to what we used. And the newer frameworks, which you can see on the right, they all use OM libraries which have these built-in protections. And more importantly, developers were somewhat successfully educated around what a SQL injection was, what other

types of injection attacks there were, and how they worked. But if we go back and take a look at another type of vulnerability in this list and how that changed over time, like for example, broken access control, we can see that has an opposite trend. It moved from fifth back in 2017 to fast in 2021 and remained there over the last four years until today. And I'm sure you guys have all come across some form of broken access control before and familiar with what it is. But just to round the definition out, OASP defines it is what it's up here on the slide. And really what that means is a user is able to access privilege data that

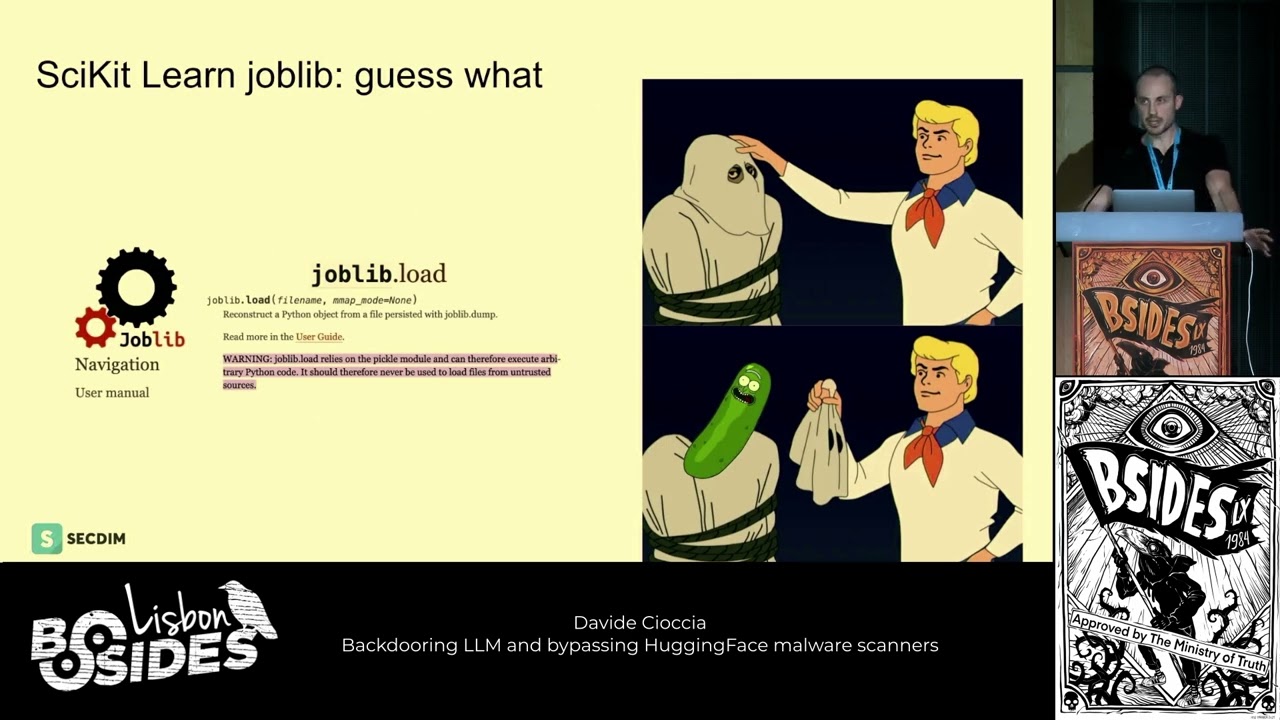

they're not supposed to. So this looks like being able to access things like server data or other users data which breaks user and tenant boundaries or elevating their privileges from something like a readonly user to an admin user. And the diagram here shows an example of this. The blue lines flowing from user to server to database is what a normal allowed path is. And a vulnerability is just a floor in the access control where a attacker can just directly access the information. And I also included some of the map CE that fit under this category. So things like path traversals, improper orth, idor, and then several others. And last month when OASP announced their release candidate for 2025, they had

justified broken access control at number one, saying 100% of the apps that they tested had some form of broken access control. And to me, that was quite surprising. I don't think they've mentioned how many applications they tested in the survey. But in 2017, it was 114K. In 2021, it was 500K. So, I'm expecting that to be the same or somewhat larger than last time. That's 100%. So that means every app they tested has some form of this broken access control. And in preparation for this at the start of the year, OASP created a separate dedicated top 10 list just for business logic vulnerabilities largely based around this point that every app that they had tested has this form of this

issue. And they go deep into building this framework explaining and categorizing all these issues. And you can see broken access control listed here as well as other adjacent logical issues. And I mean this data is all useful, but I wanted to look at real examples of broken access control being found and exploited in the wild to really understand what is the actual impact. And one example I found from July this year is where GitLab disclosed three CVs that allowed users to bypass um group invitations and restrictions on forking. And these are pretty straightforward vulnerabilities. As you can see, they're relatively low severity. But because GitLab hosts a lot of open source repos, they focus on privacy and security,

that's mainly why a lot of enterprises choose them. The impact is a bit more noticeable. So one interesting data point I found is if you look at the GitLab stock over this year and then map out when the CVS were published on July the 9th, you can see a 10% stock decline on that day. Now, I know it's hard to say whether this was a direct causation, but I think it's it's worth noting it as a potential impact. And another more interesting example is one of the largest data breaches in history. So in 2022, Optus, which is the second largest telecoms company in Australia, suffered a breach that exposed 10 million user records. And that's about a third of the population

of Australia that was affected. And this breach is a good example of two of the CWs that I showed from before missing access to endpoints and insecure direct object references. So an idle. And just to explain what happened. So an attacker found an exposed endpoint where they could query user data. And the idea around this endpoint is you're logged in as a customer. You query it inside of the app and it returns some information. But the first issue is this endpoint had no access control around it. So it was remotely exposed and had no session or middleware validation. So anybody could make a call to this endpoint. And obviously sometimes in security we we operate on redundancy. So this would

have been fine if we had secure ID referencing. and it shouldn't be too much of an issue but and what I mean by that is when we create a user ID we create is a very unique number or a very high entropy string so it's we can't guess this or we can't enumerate from it but this is where they had a second issue which was that the direct objects referencing was using sequential ids and so combining these two allowed an attacker to write like a 50line Python script to enumerate all user ids and retrieve all customer data so two failures in broken access control that led to the breach of sensitive information for a third of the

population of Australia. And so a big part of OASP announcing a separate top 10 list and then highlighting the fact that every application that they tested have these issues is one, they're everywhere right now. And two, this is going to get worse in the next few years. And so driving awareness to it is an important thing. I think one of the most obvious and most talked about reasons is everybody's favorite words, vibe coding. Now there are several studies that found AI generated code has more bugs. So, one of the recent papers on the far right called is vibe coding safe? This came out this week and there were several researchers from CMU that created a data

set to test all the newest LLM models on secure code generation. And they found that 60% of the features generated by AI work correctly, but only 10% of them meet security standards. And I think all this data is very useful and we all kind of share the same sentiment around vibe coding. But one of the more interesting studies I found was from Dan Bonet, a professor at Stamford, and he ran two experiments with software engineers and told them that they were going to program something. And one group could use AI coding tools and the other group couldn't. And ultimately, this wasn't about the code that they wrote or the functionality because afterwards he went to both groups of engineers and said,

"Do you think your application was securely developed?" And the group that didn't use AI said, "Oh, maybe. We don't know. We did the best of our ability, but we're not really sure if it's securely developed. And the group that used AI said, "Yes, it was securely developed. We did everything possible to make it secure." And I think the difference between that alone is telling, but what was more interesting was there wasn't actually that much difference between the two applications in terms of security. In fact, the group that didn't use AI performed marginally better than the group that did. They were just doubting their own skills. and Dan goes into a bunch more experiments and discussions, but he concludes that

he concludes that engineers using AI to write uh code write less secure code but trust the output more despite it being was and I think the second factor that's multiplying the scale of these broken access control vulnerabilities is really how modern architecture is becoming more complex and spread out. there's this growing shift towards cloud container micros service based apps which ultimately increases the number of trust boundaries the number of exposed endpoints we have and just all these areas where logical flaws can slip through and I think looking ahead in the next few years there's going to be a bunch of more bunch more challenges we have with this whole identic identity around how do we authenticate them how

do we authorize them especially with all of this being tied to MCP and the third reason which is this very popular narrative that everybody body's nation state attacker is using AI to automate campaigns and discover vulnerabilities at scale. So last month, Anthropic released this report about detecting their first AI orchestrated cyber espionage campaign. And then shortly after a few other AI companies announced some similar findings. I think from the community there was a lot of mixed opinions about the reality of this. But I think what's more interesting is just making the assumption that this will be possible in the in the very near future. And what impact does that have on security? Like how do we avoid turning the whole global

internet into one big bug bounty scope where all these agents are continuously running campaign level attacks on everything that has a public IP address? Because at that point being vulnerable and then being hacked are not going to be two separate steps. And I think a small timely point where we saw a glimpse of this was a few weeks ago with the react to shell vulnerability. So just over a day after the vulnerability was announced, we had a working public PC. And if you compare that to the timeline of something like log 4j, it took a lot longer for us to get a public working exploit. And that was in the pre- AAI era. So we know business logic

vulnerabilities are bad. We've seen the impact from real world examples and we know these issues are basically in every application. So why do we not have a way to find and fix these issues? Like why have business logic bugs not had their Bobby Tables moment that made injection attacks become a meme? Well, if you compare them to injection attacks, these types of issues resist the fix that killed SQLIs because they're unique to your business logic. We cannot just parameterize them away. In in fact, true business logic vulnerabilities are actually fundamentally different from a typical security vulnerability. You need to know how the app supposed to work. you need to know that this endpoint should have had access control and

shouldn't be publicly exposed in order to actually find and fix them. And so I think it's useful to look at all the really powerful techniques we have to find vulnerabilities currently and why they've fallen short in detecting these types of issues. So to start off with static analysis tools, these scan source code and then do pattern matching against the rule base to find vulnerabilities. And actually what makes SAS so powerful for all other vulnerability types makes it incomplete for business logic issues. So logical flaws often require very long sequences of dependable operations to become exploitable and they don't fit well into known shapes or patterns without without very high false positives when you run this on your code.

So the next part is DAS. So DAST is dynamic testing. This works on your running application by sending inputs and then observing the responses usually without access to the code. And these are great techniques, but they're really more of an exploration technique. They struggle when you have very complex applications and really with understanding if the responses are correct without extensive setup or tuning. And then finally, runtime tools. So runtime tools monitor live traffic and then block out malicious activity in real time. The issue with these tools here is that they flag malicious payloads and business logic vulnerabilities don't use payloads or patterns. They just abuse what is the intended functionality. So they go completely undetected by runtime issue

uh detection tools. Okay. So how do we currently detect them? Well through humans and manual testing. So manual testing brings what no other automated tool can. They have the ability to understand context. We can think creatively. We can chain multiple smaller issues into a bigger exploit. We can read API documentation, understand the business model. This is why all the previous approaches have struggled because they don't work how human vulnerability researchers do. We don't find bugs by trying random inputs or grepping for strings like these do. So, manual pentesting and code reviews have basically been the gold standard for us to detect these issues. And that's basically why we got pentest. That's why bug bounty programs have been

a very critical part of most abstack programs. But it's also the reason why we're struggling to keep up with the rate that they're being committed to code. So the real question here is can we get the computer scaling benefits from all of these automated tools and techniques that we have but use this bug finding methodology and understanding that humans have. Now luckily there have been some great technologies in dealing with the ways of thinking like humans like maybe you've heard of chat GPT. So when I I was thinking of making this slide and I thought it would be great to have a timeline of progression of LLMs over the last few years. Then I realized we

had so many updates I couldn't even fit it in a slide clearly. But going back to the start in 2022 in November, Chad GBT was released and this spawned this whole thing of LLMs being great and there's all these different models and just in the past year we have things around agentic coding reasoning models and all of these things have just been inundating us and here we are at Besides London 2025 in December kind of in the middle of all of this with new models coming out this week. So GPT 5.2 and probably new models going to come out next week. And so the obvious question is to look at all of these new technologies and see if this might help.

And part of this when I think when we look at it today, we're like, well, obviously this can help. LM are really powerful. But when we started to look at this problem, this was back in 2023. So this was still early in this whole wave of LLMs. And it was really more of a guess like this might work, but it might not. It's just a hypothesis that we need to test. And so that's why I think it's really useful for us to talk about our approach, some of the stuff we did partially because this is a really expensive area of research to work in. So it's nice that we can share the results with everyone so they don't have

to replicate it from scratch. And so the goal here is we want to use LLMs to find these bugs using the same methods that human researchers do. So if we're trying to build a system that can detect these specific business logic bugs using LLMs, what new tools do LLM give us? Like what can we do now that we couldn't do before? I think people kind of basically get the idea of what LLMs are and what they do. People talk about it of being kind of like an autocomplete, right? All they do is take a bunch of input text in the form of tokens and then produce a bunch of output text also in the form of tokens.

And part of the thing here is that's part of the fundamental limitation. If you're doing this input to output thing, you have to base all the data that is created in the output based solely on the input or on the training data. And so a good example of why this matters is if we do something like let's say we wanted to ask the LLM what's the weather today in London. Well, the LM has no idea what the weather is in London. and all it can say is, "Wow, you should get an iPhone and read the weather app." Which is helpful, but it's not what I wanted to know. So, obviously, it's a really powerful building block, but

there's some limitations we have here. The next obvious thing we can do is hook this up to something called an agent. And so, everybody talks about agents and agent coding, but it's really just a simple idea of throwing a loop around this text completion. And now we can do additional things. So, if the agent wants additional data, it can request it using something like a tool call. So, if we take the example from before and ask the LLM, hey, what's the weather today in London? But this time, we insert some additional data into our prompt, saying, hey, LLM, you have a tool which allows you to get the current weather. Then the LLM is going to say, great, I can solve

this problem. I'm going to check the weather in London using the tool you provided. And we can we can add all these messages together and then run the tool on the get weather thing that it requested. And maybe it says 59. And the nice thing about the LMA is it's pretty smart, right? So 59 isn't a complete answer. So it can use a bit of intuition and say 59 great you probably wanted it in Celsius and these agents can be a lot more powerful. They can keep working on these problems until they come up with something useful. And so the next piece in our toolbox that we're going to use is an LLM based classifier. And classifiers are a very

common thing in machine learning. They're a very simple concept. We have a multiple choice question and we want to know what the answer is. And the common example that people use here is this hot dog not hot dog classifier. So the idea is you take a picture of something, you ask, is this a hot dog or is this not a hot dog? Very simple idea. On the left we have a hot dog. On the right we have a shoe. The shoe is not a hot dog. So easy problem. But there is a problem when you start to use this a bit more naively, which is that there's no real score of confidence. So what do you do

if you have something like this? It's kind of in between a hot dog and not a hot dog. So it's a bit more ambiguous and you really want to have a bit of nuance and reasoning into your responses here. Yeah, you get the idea. So, LLMs don't just output words, they output probability distribution of words. And what we can do is use that directly instead of this binary output of 0 and one, yes or no, true positive or false positive. We can get a whole spectrum of answers based on confidence with reasoning and say something like 60% no because hot dogs don't have seeds on their buns. And this is much more powerful than just saying some binary

result. And so, this is pretty much it. I mean there's plenty of other cool things that people have built with LLMs like embeddings and other things but these are the only key building blocks that we need. Okay. So how can we use this to build something interesting? And again the whole point is we want to take a code base and find these business logic vulnerabilities things like broken access control ID doors authentication bypasses that previously required a human to find. And we want to use these new magical LLMs to do it in the same way that a human does. And so when looking at this problem from more of an engineering perspective, the two main design choices you have to

consider are how do we accurately get the LLM to navigate the codebase and two, how do we get the LLM to find and try to understand vulnerabilities. And so we're working on the constraint that we have the source code and we're going to be focusing on web apps, APIs, microservices, things like that. And we want to be able to support a range of languages that are going to be used here. to everything from pi python to JavaScript to PHP, Java, pretty much everything. And so there are several different ways that we can index the source code and give the LLM the code base in a format that it can work with. And to touch upon

why you really have to pay attention to the accuracy of this this intermediate repres representation of the code base that you give to the LLM. For example, if a agent is looking at some code and then it sees a function that runs a workflow, it needs to accurately find where that function or class or symbol is in the code so it can build up the correct call chain and maybe see where the definition is. And lots of functions are going to be called the same thing and going to have lots of instances of the same methods. Like how do you know which run function to get? So you have to narrow it down to the correct code

and symbol that it's interested in to build up the call chain because if you have a bunch of inaccuracies here because of missing context, the LM's going to build up incorrect call chains and this just isn't going to work or you're going to get a bunch of false positives. So I've listed the common approaches here. Everything from dumping the codebase into the LLM's context window to indexing it the same way an IDE does using LSPs. And to compare all the approaches, each have their own advantages and disadvantages. Like zero shoting into the LM's context window is very easy, but you'll get context rot and pretty much the AI will essentially forget the codebase. And as core graphs are very

powerful and versatile, but they struggle sometimes with complex multi-step call chains, which is often where a lot of these business logic vulnerabilities occur because they lack things like semantic accuracy, especially for some of the dynamically typed languages like Python and JavaScript where objects can change at runtime or you can have reflected roots. So we settled on LSPs, so language server protocols, as this was the most accurate way to index code. And this is the same way that an IDE indexes code. And a bunch of research has showed that this is the most effective for using LLMs. This is what tools like GitHub, Cursor, Windsor use because they have very high accuracy with code searching. And just for a bit more technical color

into how this LSP indexing works, we take a graph structure with the definitions and reference nodes and we end up storing this in Protobuff. But because protobuff is a statically typed schema, we need to store all this dynamically typed information. So we have to use the language compiler and this essentially looks like linting to resolve all these dynamic relationships into a static schema. Okay, so now we have a very accurate index of the code in a way that the LM can query the call chains and data flows to find and search for it wants. And this brings us to the part that I find much more interesting, the vulnerability detection part. And again, we established the reason that business

logic issues are so difficult to detect is because they require an understanding of the application and its context. So we can't just pattern match or brute force our way to find these issues. So I think it's important that we follow the human workflow here. And obviously all human vulnerability researchers, code auditors, pen testers, they work differently, but there is some sort of vague pipeline of steps that everybody follows. The first part is we come up with a set of ideas for vulnerabilities. Maybe we do this by reading code. Maybe we do this by getting really drunk. Maybe we do this by going to bed and waking up with a wonderful set of ideas in our head. But

the point is we come up with a set of ideas. Then we have to go and investigate them, right? And a lot of these ideas are not going to resolve to anything. But if they do look plausible, then we spend a bit more time trying to validate them and triage them. And so maybe we can use the LLM to do the same sort of thing. And what this looks like is we take that index code base. We use the LM to read and try and understand what is the functionality of this application. What is it what is it doing? What is the core business logic? Looking at things like business invariance, what are the trust

boundaries? What are the different user levels this application has? Then the agent threat models the application and comes up with vulnerability test cases that try and break this functionality. These test cases essentially look like a list of potentially vulnerable code paths that another agent will then go and do all the vulnerability analysis and validation on. And one important thing to mention here is about using LLMs for threat modeling and the whole point around non-determinism and hallucinations. So if you've ever played with LLMs before, you understand that their responses can vary wildly between prompts. And there are several steps here that we want to make as repeatable and deterministic as possible. So when we generate the

codebase index, this is always going to be the same. We don't use lms here. This is just compiler math. Going from the index to the codebase wiki and building the specification sheet and understanding what is the logic of the codebase. We want to avoid hallucinations at all costs because if you make a mistake saying this endpoint does something else than what it actually does, this is going to lead to a bunch of false positives. But going from the wiki to the threat model, this is where we can use think a bit more creatively about hallucinations and hallucinations can become a bit more of a feature because a key part in finding vulnerabilities is being creative and

looking at the non-obvious attack paths. So here we found that invoking hallucinations by doing things like changing the temperature and switching models creates these non-obvious attack paths and it kind of increases the coverage. But as long as you don't really ask generic open-ended questions or miscontext, hallucinations aren't really a big part of an issue if you don't want them. And so I know there are a lot of pieces here, but to tie it all together, this is a simplified diagram of what the final architecture looks like. And to walk you through it, you start with a code repo. You download the code repo. We index the code using the LSP indexer. We then take that index code base and

use an agent to write a specification sheet and a wiki. We use that to threat model and create test cases in the form of potentially vulnerable code paths. It then does the core chain of vulnerability analysis on these code paths where the LLM looks at the code. It requests certain functions by quering the indexer and at the end of that it builds up the entire call chain from the source to the sync and it can be very accurate about the reasoning of whether this specific vulnerability is in the code and then it spits out the final result and the final result contains things like the chain of thought, the CVSS score aligning it to the impact on

the application looking at what invariance it broke from the specification sheet and the confidence score and reasoning using the LLM classifier I mentioned. So now to the fun part where we can talk about all the vulnerabilities it found. So to test this on real apps, we ran this on a bunch of popular open source repos and we found 35 new CVEs. I've listed the findings by type in this table with the majority of them being improper access control and path traversal. Two interesting findings were we found a critical and 9.9 in bento ML. So this was an SSRF that allowed a complete server takeover and I've added the CV ID there as well and also a CVE

bypass that fixed the bypass for a prior CVE which was an SQL injection. So to go over an example, this is an or bypass in autog. So it allowed an authenticated user to access results belonging to other users. I've added the snippet of code here as well which is the vulnerable endpoint and this is supposed to only return results that belong to the requesting user and there is an orth check on line 121 that checks that the user has access to this object but immediately after that on line 125 the code fetches data using a userc controlled ID that has no ownership check. So if you run the call command like something on the right with a graph

ID that the user owns but then using an execution ID belonging to a victim the request succeeds and then you get data for another user. So this is a relatively straightforward finding but it's just a subtle example of a broken access control that all other CI/CD static scans missed. Another one which is a good example of a multi-step call chain is an rce we found in letter. So this was an rce through a bypass in the sandbox that allowed you to call dangerous modules and system functionality. So remote attackers can execute arbitrary code on the host which runs as root. Okay, so great we have something and it works and LLMs can kind of follow human

workflows. I don't know that it's perfect but they can kind of do it and this whole area of research is still in its early stages. But the nice thing is as we get better models, this whole system is just going to magically get better because these models are going to be smarter. Okay, I'm going to go through a couple pro tips because I know that was all very high level talk and I want to leave you guys with something useful. So if you want to try and do this yourself. So the first is to minimize the amount of work that you get an agent to do. So if you ask the agent to do something

that you know it has to do 100% of the time, you probably should have just done that work for yourself instead of using the agent for it. So for example, if you ask the agent to find all sources of remote user input and APIs in the code, you should just probably detect that programmatically and then give that output to the LLM. Like you don't need an agent to go and find all that stuff. The second point is if you're using tools, you need to think very hard about what tools you're using and how many. You shouldn't give every single tool imaginable to an LLM inspect and expect it to do a good job. One of the examples

I like here is if you think of it like a video game like maybe something like Portal. So if you're playing the game of Portal, you need to be given a portal gun. Obviously the game tells you you're going to complete the levels, shoot portals, jump through them, fire lasers, whatever. But if someone gave you extra tools, if someone gave you lockpicks in the game of Portal, this is just going to confuse you. Like what are you supposed to do with lockpicks? Every time you get to a new level, you're going to think that you're supposed to pick a lock and you're going to be really super confused about what's going on. So, you need to think selectively

about the tools and you shouldn't just give every tool that can do everything imaginable. Otherwise, it will reduce the chance the LLM will use the correct tool or use that tool correctly. The third point is you should run lots of evaluations. I think everybody gets the basic idea here. Like overall, there's a lot of nuances with how we do this. And because LLM's changed on a weekly basis, the only way to understand how these things are going to work for your specific problems is to do the evaluations for your specific use case. Like I can't tell you if things are going to be good or bad or which models are going to be suitable for your

workflow. You need to go do those evaluations. And so just in conclusion, LM are solving real problems. They can do things somewhat like how humans are going to do it. I don't think people are using them to their full potential. I think the whole limiting factor here is that these tools were built for the tools we're building for these systems are just not there yet. And I think if we took the LMS from last year and built better tools for them, they can keep getting better for years to come. And another good thing is we didn't talk about math here, right? Like this is a whole black box. We don't have to talk about tensors, transformers, or matrix

multiplication. I think given it's nearly the holidays, I think you should all go out and try and build something with this. And I think you'll find it easier than you think. So yeah, that's it. I think we have uh some time for questions. I'm going to be providing a more detailed write up going into all the vulnerabilities. So if you want to connect with me also be around the conference so you can find me and ask me stuff just let me know. But thank you guys.

Uh thank you very much JJ. Uh do we have some questions please?

Um yeah, it was a really good talk. So thank you. Um volume of code seems to obviously be quite the enemy here and especially when a code or you can't always rely on just the code as input when you're doing a lot of these reviews. I can imagine like even on a threat model perspective, you need to understand the product. What's what's been the difficulty when you've been looking at open source projects for this? Like how much do you rely on just the code or do you try and load in uh existing like uh wik wikis uh to enrich your own? >> Yeah, that's a that's a good question. That's exactly it. So this is the

architecture diagram and so when we generate the wiki and the threat model, this is all in natural language. So this is text and the good thing about open source repos is we can supplement information in natural language here. So things like previous vulnerabilities so we can look at different code paths or documentation so we can have more context. And that's exactly the point. You're going to have certain things that are just not in the code. Like you can have an open port that might be a source for a vulnerability, but then you can have an AWL like Lambda in the infrastructure that's going to like mitigate this. So there are several areas where you can add additional

context usually in the wiki or thread model. But the good thing about that is that's all in natural language. Yeah, sorry. Super interesting and thank you again for that. Um, I was just going to say in terms of obviously we think about this from a vulnerability detection purposes, but the tools arguably could be flipped to work the other way on the behalf of a threat actor. What what makes this not an arms race effectively between threat actors and defenders where it becomes a question of my LLM is bigger than your LLM. I have more power than you. >> Yeah, I know. I I don't think I have the answer to this question, but I I agree

with you. This is this is kind of the territory that we're going into. I think the thing about us is we operate on source code. So this is either you're the developer of the code, you have the private source code or this is an open-source project that everyone has the access to. So if this was like blackbox web applications infrastructure, then it would be a different game. And like I mentioned, it's going to we're going to end up in a if I'll go back to the slide with what all the model providers are describing the future to look like. we're going to end up in a position where everything that's on the public internet is going to be continuously

pentested by different threat actors. So, I don't know. I don't have the answer to this problem, but hopefully someone will figure out. >> Hey, u the super super useful talk and just a quick question on how you verified the vulnerability. So, I think you showed that as the LLM agent traversed through the codebase, you also showed like curl commands as an output. So one were those manually generated and two if not how did you actually generate uh what what that what those call commands or what the output look like to verify the the vulnerability actually exists. >> Yeah. So there are two things that we do to validate the vulnerability. The first one is to think about it from a

programmatic level. So is the sourcing core chain correct programmatically? So without LLMs we need to make sure that this is correct. The second part which is a little bit more difficult like as you mentioned with about context is is this actually a vulnerability and we do things like when we build this wiki in the specification sheet we outline certain business invariants so core business logic kind of these things that need to hold and if we find a vulnerability that breaks one of them then we can conclude that this is a vulnerability and so there are several different checkpoints that we have in the validation and once at the end of that we output a confidence score. So

vulnerabilities that hit a lot of these checkpoints then have a very high confidence score. So it's programmatically correct. It violates some business invariance. There's a very clear uh source for user input. It's remotely exploitable. Things like that. So that's how we have the confidence. >> Any more questions over here? >> Hey, thanks. So the last question covered a bit on the kind of precision of it sort of thing, but did you um do any kind of experiments around uh like recall on there to see the coverage to see if it's actually retrieving all the vulnerabilities or if it's missing a lot there um around that sort of thing. And I guess that kind of ties into um what

sort of models did you use and did you find some more accurate and did you find that the data set they had had what they needed or you needed to train or fine-tune them on additional data sets? I guess if so what data sets >> yeah so we use offtheshelf models we found the whole all the models from claude and anthropic to be the best for this but in terms of benchmarking so we so if you if you scan like any LLM on juice you'll find that juice have comments for all the vulnerabilities so you don't even need an intelligent system you can pass this into chat GP and because it comments a vulnerability it can spot it instantly so we try to

stay away from a lot of the traditional benchmarks cuz they don't really work for the LLM scanning uh tools that we're developing. So we scanned it on a bunch of open source repos. The difficult thing here is you don't really know all like the full state space of vulnerabilities in application. So we did a lot of backlog testing. So checked out to a vulnerable version. We know there's a vulnerability there. Can it find that? And that's what we did to kind of build up our data set and improve our evals. But I think somebody should I think this is one one thing that with evaluating all these different tools coming out we don't have a very

clear benchmark or data set that's univi like unvised across the whole industry for these types of tools. So I think that would be an interesting problem if somebody makes one >> questions answered. Yeah.

Hi uh thanks for the talk. I'm I'm curious about um how the findings like the sort of format of the findings that are produced by this model. Is it like a call chain from a source to a sync? Does it have you know like you've obviously got the pox in your slides but is that from manual triage and then you figure out how the puck would work? >> Wait, I didn't I didn't hear the last part. Um so the proof of concepts that come out of it is that like is that part of the results produced by the like is it producing a natural language um sort of output that's like this is what it

looks like. I'm yeah just curious about how how the outputs >> yeah all of so the outputs are things like internally like the um scratch pad of the LLM. We also align this to a CVSS score and then yes the PC is also outputed statically from the analysis cuz we have the full sourcing call chain. we know which endpoints it needs to hit and where the sync should be. So yeah, we can generate those. >> Next question. >> Yeah, great presentation. Thank you so much. uh you know the current LLM models do suffer with bias and hallucinations and and I'm sure with your test have captured a lot of uh false positives or not but there are potential that in in

real life scenarios LLMs might generate many false positives uh because of that. So um I think you made a very good point in the starting that using LM like humans not without humans. It should be used as uh an assistant to a manual pentester, not a replacement. So it's just just wanted to make that point. I don't know you agreeing. So is your question like where does this fit into everything really? >> Yes. >> Yeah. I mean like I'm not going to sit here and say this is a replacement to all humans, but I think it's just trying to mimic the workflow that humans do so we can detect these harder to find vulnerabilities. But yeah, this is no

way a replacement to any very detailed code audit. This just can be an assistant for it. Even generating the things like the wiki and the threat model that can assist you if you're doing a code audit to say, "Oh, it didn't look in this area or it's already checked all these things. I can go spend my time over here." So yeah, it's just a enabler for teams to move quicker essentially. >> Any more questions? >> Oh, two more. I'll go this one first and then second. Um, sorry. It's the when you reported the issues to the open source products, did you say it was found by AI and did they push have any push back because it was

AI or were they accepting of it? Yeah. No, before we reported everything, we manually validated everything. So like this, you just pop the car request into Bob. We validated everything. There were a few false positives in here due to missing context or sometimes incorrect call chains. So we had to validate everything before we reported it. We didn't just want to flood them with noise because yeah then you would get a bunch of upset maintainers. >> Hi uh really good talk. I think the heart of this system or the most interesting bit is the threat model like uh the quality of threat model. Um how are you or what are you doing to make that bit better? uh is it just like a

system prompt which says uh create a threat model given the wiki or you are adding intuions uh from your own experience as a security researcher or pentester. >> So it was a question around how did we do the threat modeling? >> Yeah like how are you making it better like uh because that's the quality bit right uh coming up with threats and then verifying them. So how are you coming up with threads and better threads? >> Yeah so this this is a good question. This is the the way this works is we will generate the specification sheet. So this is the logic. So the LLM has a foundation for what this app is supposed to do and that's all kind of like a

document. It's in natural language. From that there are a bunch of LLM things you can do like uh iterative prompting and several things like that. But one of the things I spoke about which was hallucinations that can allow you to add more creative attack scenarios. But from this specification sheet, we just look at that as well as the index codebase and then generate things that try to break any of the business logic invariants. So maybe it says, okay, these endpoints need to be admin authenticated. It can then go query the indexer, look at all the endpoints and say, okay, we can build a test case that maybe these two endpoints shouldn't have admin or something

something like that. So we'll combine the indexer and the specification sheet together to build these threat model test cases. Does that make sense? Yeah, >> I think we've got time for just one more.

>> Thanks. Great talk today. Um, very insightful. I guess one question with the test data sets you use to validate the model and kind of um generate our findings from the threat model and CVS. Was there any cases in that that you're aware it didn't find? And if so, how do you see this going forward in terms of the maturing and bas not giving false confidence that for example that found 138 findings but of that three were missed and going forward. >> Yeah. So I can go back to so when we ran a lot of these experiments the the core kind of thesis around this was can we detect the vulnerabilities that usually require humans to so we focused on these

business logic vulnerabilities things like broken access control. We could essentially tune the agent to focus on a different subset of C.WE, but from from the data right now like I don't have a conclusive set of where it would be underfitting or overfitting. This is something that would require a lot more iterations. But I think it's very versatile and like what I mentioned I don't think people are using them to their full capabilities. So yeah, maybe maybe you should try it out as well.

Thank you very much. Thank you.