Chasing Whispers: A Pragmatic Review of Adversary Emulation Processes


Kyle Smith: Hey, good morning, everybody. Hope everybody's doing all right. Sorry, we're gonna get started. Thank you. This thing's still tracking. All right. Good morning. Welcome to BSides. Looking forward to meeting some of you guys and talking about all this good nerdy stuff. My name is Kyle Smith, and my talk's titled Chasing Whispers: A Pragmatic Review of Adversary Emulation Processes. So today, we're really going to talk about the what and why of adversary emulation and some processes around it. We're going to talk about detection engineering, obviously adversary emulation, but threat modeling as well, all these little things that go into adversary emulation programs. And yeah, please write down your questions and we'll talk about

it afterwards. I'll have some time for questions, and obviously find me in the halls and such, and we can certainly dive in and talk about it then. Quick background about myself: I'm a senior cybersecurity analyst, actually a penetration tester by day, big football nerd by night, but my previous role was adversary emulation for a Fortune 20 organization. I also served in the Army National Guard as a noncommissioned officer, so if there are any vets here, please seek me out. Definitely want to talk about some things. And I was previously a financial advisor, a Certified Financial Planner. Basically, what that means is I was a social engineer for a large company, and tried to convince people to invest their

money with me and invest it a certain way. So little old ladies in Boulder, Colorado, quite frankly, yeah. So

before we get started, as I mentioned previously, we're going to really talk about the prerequisites, like what areas of knowledge and skill sets and tools might be helpful for adversary emulation programs. I don't want to come in here and assume people necessarily know about it. So we're going to talk about that, again, the whats and the whys, and specifically some of the tools of the trade as well. If you guys have questions around labs, I don't have a sexy live demo today, unfortunately, but if you guys have questions, happy to kind of get you started in that direction as well. So the very first thing that we're going to talk about is going to be the

MITRE ATT&CK framework. I don't want to beat a dead horse. All of you probably know what it is. At the very top, each individual column here, like recon, resource development, initial access, all the way down to impact, that's really going to be the objective for an adversary, right? And all the little blocks within are the actual behaviors that those adversaries take to achieve those objectives. So I start here because this is really going to be the absolute foundation for this, because if you can't speak the language of the adversary, you're not going to be able to emulate them appropriately, right? Somebody here was asking, too, if I was going to talk about any LLMs, or how to red team LLMs and things of that nature. No,

but be on the lookout. MITRE actually maps to the cloud. I don't think they map any AI-specific actions just yet, but we're going to be seeing more of that. I think Microsoft already started an inkling of that, by the way. I don't know what it's called, but feel free to check that out. So everything starts with MITRE, in my personal opinion, and we'll be hitting some of that here later as well. All right. So what is adversary emulation? This is kind of my own words, so don't shoot me if you disagree. Just come talk to me afterwards, please: using actual intelligence to discover and mitigate control gaps and thus risk in an organization's

environment. So all of these things are a part of it. That last piece says collaboration, if you can't read it. I know my big head's in the way. But again, you kind of have to start with risk modeling. You have to start with cyber threat intelligence, a lot of research, a lot of upfront digging and deep diving. And then you can move on to execution. We'll talk about all these processes more, but it's not just executing actions on an endpoint or within a network and seeing what security controls might trigger. A lot goes into it, right? Now, you can separate this out across multiple teams. I will add that caveat. But my team, specifically, when I was

performing this, we kind of owned it, womb to tomb, right? We were judge, jury, and executioner, if you will, for our particular program. And that's kind of where I got the idea of putting this whole talk together. So why do we do it? Why do we do anything in cybersecurity? Because it's fun and cool. Obviously, you can't take that to your director and say, hey, boss man, I'm going to do this because it's fun and cool. They don't really like that answer, right? So truly, we do this so we have a deeper understanding of our environments. We don't want to wait until an incident to be scrambling. Where did this come

from? How did this get in here? We have to know not only our environment, but we have to understand the teams and the stakeholders involved, meaning: okay, Jim, you own this part of the network. Joe, you own all of our virtual hosts. You have to understand those things, and it's much better to practice that and to determine who owns those security controls before you're scrambling and have your IR team, or, even worse, your external IR consultants that you're paying big money, come tell you what's going on. Right? So we'll talk a little bit more about quantifying and reducing risk briefly. Again, you can have a whole talk on this, in my opinion, just how people kind of

formulate their risk reduction program as it's tied into adversary emulation and purple teams and things like this. And then, obviously, improving detection. There's a big human element to this, in my opinion: the quality and fidelity of your alerts, and reducing fatigue. I mean, who's been a SOC analyst, staring at a screen and waiting for that alert, or maybe you have a false positive and you go down this rabbit hole? This piece of improving detections is huge, and I cannot understate that. So really, the way I see it is, if you have a deeper understanding, and you can mitigate any gaps proactively, and you can improve detections and make your employees happier, you're just

going to naturally have an improved security posture. And again, I think that's where we talk about, especially, the quantifying of those risks, and how you might approach that. We'll kind of go through a very juvenile description of that here soon, and again, that's what you can take to your leadership. I guess, going full circle here, you just can't say, hey, boss, I want to do this because it's fun and cool. You have to have the numbers behind it. You have to have an actual, maybe algorithmic approach, if that's a word. All right, so: why, stats edition. Got some stats to back it up. If you don't believe me, I think these are going to be some good

things to keep in mind as to why we have a proactive adversary emulation program and how it can benefit folks. So yeah, in 2023, as you see here, it took an average of two seven days to identify a security breach. That is according to the IBM Cost of a Data Breach Report, page 14. That's quite a bit, right? That's over half a year. And ideally, we can shrink that down to, you know, weeks or months, and be proactive in it as well, right? And I'll talk about some case studies, and some examples of where my team actually used a rule that we created to find some bad activity and shut that

down really quick. Very exciting when it happens. Of course, ransomware occurred in 24% of breaches in 2023. Self-explanatory, don't really need to go into that one, right? That's from Verizon's breach report in 2023. And then phishing was the most common attack vector in 2023 as well. Probably not surprising. That one is also from the IBM Cost of a Data Breach Report in 2023. So again, nothing overly surprising. But if you are starting a program, it's something that you can take to your leadership and maybe say, hey, we can shrink these problems, right? Maybe it's most common out there, but it's not the most common attack vector for us, or if we are going to be

breached, it's not going to be by ransomware. It's going to be around social engineering or something else, right? You can highlight those gaps and point out to your leadership what might be going wrong in your specific organization. And I think the one thing I'll touch on with the ransomware piece is the kill chain for ransomware. If you look back in MITRE, it's very, very broad. So you can have things like droppers, you can see PowerShell. There's a lot of defense evasion and bypassing EDR, macros still, and Visual Basic scripts, right? Unfortunately, those things do happen. Living-off-the-land binaries, all these things that make up behaviors in MITRE are going to be a big part

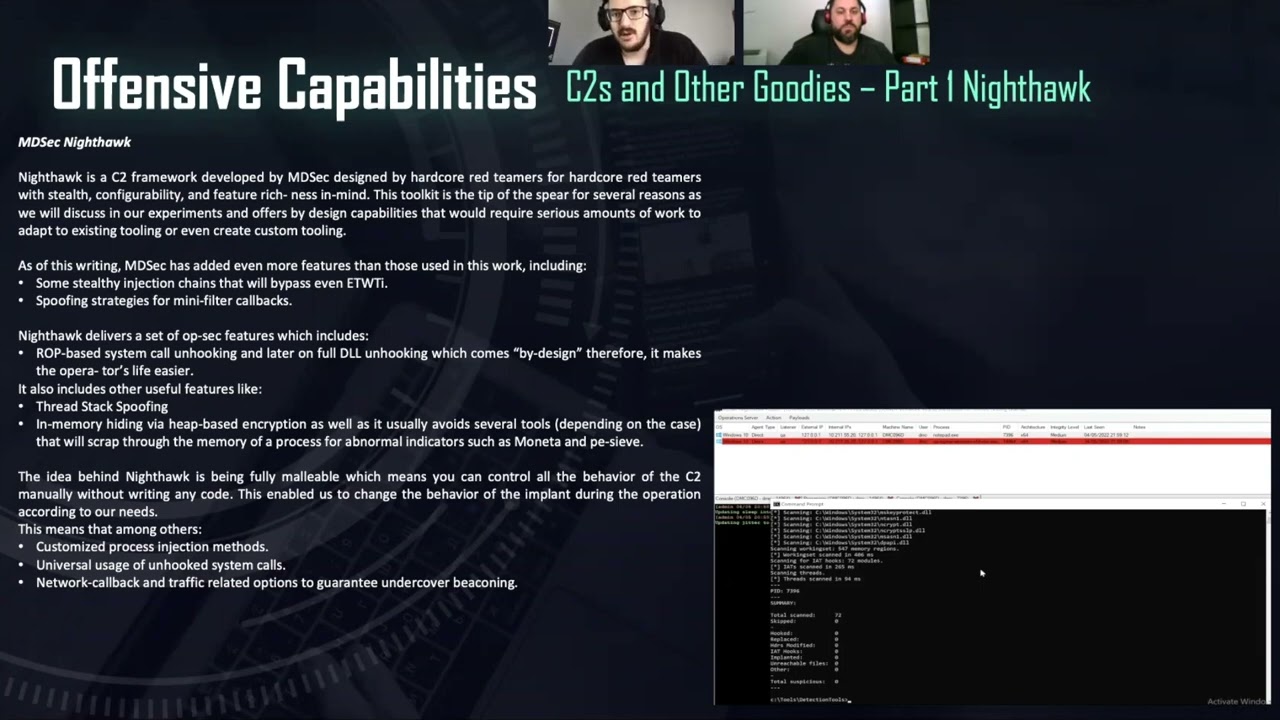

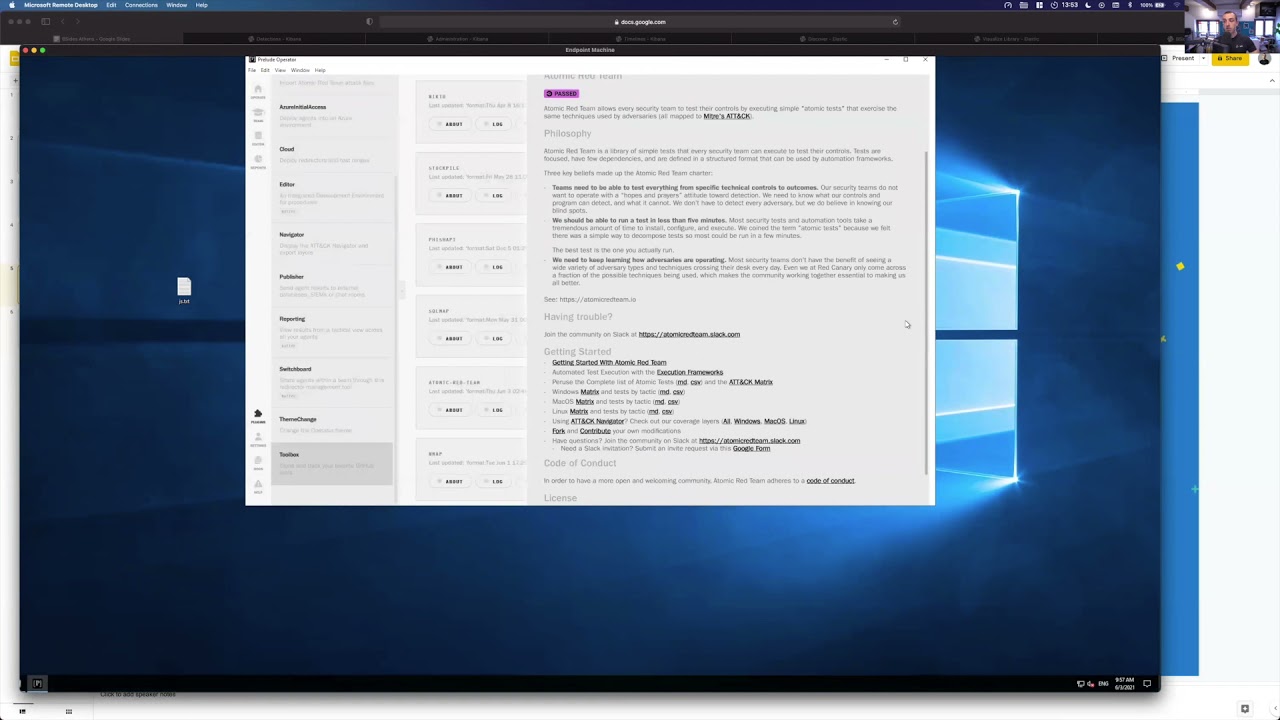

of that ransomware piece. So if you can just mitigate and kill a good chunk of that kill chain, I think organizations are going to be better off. All right. This is where we'll talk about some platforms and tools. There's a lot of them out there, as you probably know. The few I want to highlight, I can't say I have firsthand experience with all of them. Well over half of them, I do, though. So there's Prelude; Cymulate, or 'simulate', however you want to pronounce it, I do not care. They're out of Israel, good organization. I know a few of those guys. Mandiant Security Validation, called MSV; AttackIQ; and then SafeBreach. So BAS stands for breach and attack simulation. I don't think

I covered that yet, but essentially, it's a platform to give you the scalability of an adversary emulation program. You could certainly hand jam it, put a bunch of things in Excel. You can use Atomic Red Team. You can create your own type of program or process, but these really allow the scalability. Typically, they're agent-based. You can deploy those agents in certain parts of the network to do network-specific actions. You can keep them on the host to perform host-specific actions, or anything in between. And that's where building out that kill chain comes down to your threat model, which we'll talk about. But again, these are a time saver. They absolutely just save so

much time, effort, and energy. And the reporting, again, from a leadership perspective, for those of you that lead an organization, that's gonna be very, very helpful. So that's probably where you get the most bang for your buck, truthfully, as a leader. Reverse engineering and sandboxes: we'll talk about extrapolation here in a little bit when we talk about detection engineering, but that's gonna be better for folks that really want to dive into a particular program, figure out how it works, and then use that piece of how it works in your adversary emulation program. And then ATT&CK Navigator. It's nice, especially when leadership doesn't really wrap their heads around, okay, what is wizard

monkey doing? What's that threat group? How are they performing this? You can use ATT&CK Navigator to really map out and show the kill chain in a very nice, appropriate manner. All right, we're gonna talk about processes and procedures now. So this is really how I view the emulation process. I'm just gonna go through all these really quick. It's a living and breathing process, and I hope this makes sense once I'm done explaining it. But obviously, you have threat modeling. We'll talk about threat modeling in a couple different ways in a moment. It's fascinating, and again, you can have a whole talk on this type of topic, but essentially you have to understand who your threats are, what tools they're using, how

your organization's comprised and built, all these things. So once you understand your threat model, you can gather and process that intelligence. I have a little asterisk here, because actionable intel is very important. If anybody has been a part of the intelligence community: intel is great, but it has to be actionable. It has to be relevant. It has to be something that you can go and use, hopefully today, type of idea. And who here has heard of IOCs, indicators of compromise? Those are great, but let's be honest, when you're looking at it from this angle, they're not that fantastic. It's great to figure out who's doing what and where their assets might lie. But truthfully, I can't take an IP

address. I'm not going to use that to test, because that's very trivial to change, something we'll talk about in a moment as well. Research and planning: we hit on it earlier, but you've got to be reading reports. You've got to talk to your intel folks. You're going to then execute and analyze those results. So, okay, hey, did my EDR pick this up? Did my WAF pick this up? Did my network firewall allow that communication to that known malicious DNS server? Right? These are the types of things that you have to execute and then analyze, put in the fancy report, send off to your management. And then the fun part, detection engineering. We're going to cover a lot of

that here later. I know it seems like I'm talking very fast, just trying to condense this. I know I have 45 minutes, and I think originally this was planned for an hour, so forgive me. Again, if you have questions, just come talk to me. We'll address it. So that's how I view it. Actually, let me go back, because again, living, breathing model here. I'm doing this because that's how I envision this whole layer, in this way. So once you get done with the detection engineering, you're not necessarily done. You've got to go back to your threat model, because threats are constantly changing. New groups are constantly evolving or coming

out and trying different methods and/or rebranding themselves as well. This is a little snippet from the Purple Team Strategies book. You can see it down there in the bottom left. So when I share this out, that'll be a click of a link for you guys. But this is how they view a purple team model. They call it the PEIR model: prepare, execute, implement, and remediate. So it's kind of fuzzy here, but you can see identify threat and TTPs, execute attack steps, document outputs, identify and prioritize gaps, quick wins, implementation. That is a problem with me, and I'll explain why in detection engineering. And the validation, which is a big step as well, and a part of detection engineering. So we'll talk about those

things. I wish it was that fast. Truthfully, I do. Just from my experience, it never quite is. All right. So we're going to talk about that outer layer of that process, how I see it. So we're talking about the threat model. So how do you go about securing your home? Who here has locks on their doors? Show of hands. Who here has security cameras? Who here has a vicious attack Chihuahua, right? So these are things that kind of go into your threat model, right? What type of neighborhood do you live in? Do you live in an apartment complex? Are you on five acres of land? What do you have

to protect your home? Now, who here is going to install locks, install a security camera, and just walk away? Hope it works? No, you're gonna twist the lock 17 times, right? You're going to wave, or have your spouse wave, in front of the security camera, make sure it turns the light on, or the infrared works, right? You're gonna check all those things. So why would we ever deploy an EDR, why would we ever put a device into a particular network segment, without testing to make sure our controls work? Doesn't really make sense, right? So this is the best explanation I found of threat modeling. I think it could be an overly complicated topic.
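The "twist the lock 17 times" idea above, and the earlier questions about whether the EDR, WAF, or firewall picked up an emulated action, can be sketched as a small results check. This is a minimal illustration, not the speaker's tooling: the `ControlTest` record, the `find_gaps` helper, and the sample rows are all hypothetical and the data is made up.

```python
from dataclasses import dataclass


@dataclass
class ControlTest:
    """One emulated action and how a security control responded (hypothetical schema)."""
    control: str   # e.g. "EDR", "WAF", "network firewall"
    action: str    # the emulated adversary behavior that was executed
    expected: str  # what the control should do: "alert" or "block"
    observed: str  # what it actually did: "alert", "block", or "none"


def find_gaps(tests):
    """Return every test where the control did not respond as expected."""
    return [t for t in tests if t.observed != t.expected]


# Made-up results from one emulation run.
results = [
    ControlTest("EDR", "PowerShell dropper execution", "alert", "alert"),
    ControlTest("network firewall", "DNS query to known malicious server", "block", "none"),
    ControlTest("WAF", "malicious request to web app", "block", "block"),
]

gaps = find_gaps(results)
for gap in gaps:
    print(f"GAP: {gap.control} did not {gap.expected} on '{gap.action}' (observed: {gap.observed})")
```

The list of gaps is what would feed the report to management and the detection engineering work discussed later in the talk.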

Personally, I like to simplify things. Josh Fruhlinger, he's a contractor and an editor for a magazine, I found this quote. He says, threat modeling is a structured process through which IT pros can identify potential security threats and vulnerabilities, quantify the seriousness of each, and prioritize techniques to mitigate attacks and protect IT resources. So again, I think that's the most concise, straightforward threat modeling description that I found. That's why I included it in here. All right, this is probably my favorite slide. It's getting a little cut off, but essentially, that one in the bottom right, that newspaper, says journalists, just so you guys know. This is Batman slash Bruce Wayne's threat model. Credit to Tiffany Lou. I think this is a very fun slide,

and just kind of gives you a nice representation of what all constitutes a threat model, or, in particular, Batman's threat model. So you see here the assets that he has, right? Batcave, Alfred, emails, texts. How do you protect those things? Well, you have a security system. You can hide your location. You can practice encryption, maybe when you're using emails and texts. Who are the threats? Well, the police, because he doesn't necessarily want to out himself and have them determine that Batman's Bruce Wayne, even though I just did, apologies. The Joker. And then journalists, right? And then these lines represent the different types of risks as well for each. So obviously, if we

take a look at a retail organization, for instance, they're going to have a different risk than a nuclear subcontractor for the Navy, right? They're gonna have a completely different set of threat actors that pose different levels of risk. Not to say those threat actors wouldn't target that retail organization, but they just might not be as high on the totem pole as that nuclear subcontractor. So, all

right, detection engineering. I'm just going to get through these really quick. This is the most fascinating thing about adversary emulation, because I don't think you can really talk about adversary emulation without talking about detection engineering specifically. So it's the process of developing capabilities to continually identify and minimize threats and their impact as it pertains to a threat model. So again, looking at Bruce Wayne's threat model, journalists, for example: can we identify what journalists out there might have the information that we think could out Bruce Wayne? Right? For example. How do we do that? Well, we have tool policies. We have tool alert logic creation. That's where you're working with the engineers,

right, to maybe create an EDR-specific rule or rule set to trigger on a particular action. Feature requests. The list goes on and on. I'm not going to dive into it, but Sigma rules, Suricata rules, Snort rules, YARA rules, I'm sure you've heard of them all. That is where we create those rules to help the engineers create the actual logic in the tool. I say share here because you should be sharing internally, and you should hopefully be sharing publicly as well. I mean, the more detailed rules organizations have, hopefully the better engineering capabilities they have, and they can detect threats. And why do we do that? Well, this is going to ultimately help us create a

scorecard for each TTP (tactic, technique, or procedure) based on a threat model. So that goes right back to MITRE. And I mention here you can map to MITRE ATT&CK. You can even map that to NIST 800-53 controls as well, something we've dabbled in and tried at times. It can be inefficient, but it's nice from a leadership perspective, specifically. So a good example: okay, can I create custom detection logic within Carbon Black to detect PsExec usage, right? That's part of Sysinternals, or whatever tool it might be. And then you can map that to the kill chain, map that to NIST 800-53, give that a score, and you can determine how important that is

to you based on your threat model. And I think we'll have an example of that here in a moment. So detection engineering kind of dives into these two fundamental concepts. I did not come up with them by any means, but I love talking about them. So you have capability abstraction and you have the Pyramid of Pain. I will start with the Pyramid of Pain. We basically want to force adversaries to the top of the pyramid, right? That is where they have to change those behaviors in the MITRE ATT&CK framework. So if they have to change the behavior to achieve lateral movement, that's gonna be very annoying to them. That's gonna be a win for us. Again, I mentioned the hash values

earlier, IP addresses. I'm not gonna necessarily test anything on that, because that can be changed in the blink of an eye, and it often is, like with a C2 or something that an adversary is using. It's virtually worthless from a proactive testing perspective. You could automate it and get some quick wins, sure, but the larger the organization, the worse that idea is going to be, in my experience. So this capability abstraction piece is really just reverse engineering of a particular behavior, a particular procedure within the MITRE ATT&CK framework. Here is a Kerberoasting example. So you kind of look at the different tools that can perform Kerberoasting. Obviously not an all-exhaustive list. You have the managed code. What Windows

APIs is it using, what remote calls is it leveraging, and what protocol does it implement as well? So just kind of logically breaking that down in a map to determine where you might want to test or what tools you might want to use to test that particular behavior. Obviously, again, though, it depends. If your threat doesn't necessarily use Rubeus, you might shy away from using that particular tool. Or if they do use Rubeus, and they've been seen using the kerberoast action and not the asktgs action, then you might pivot to that specifically, right? That's a very simple example. All right. So the DE process, really quick. There's a lot to this. I think there needs to be a lot to it,

but if you can move quickly through it, that's going to benefit your organization and the team the most. Obviously, identifying the gaps: this is after you've executed those actions, this is after you've analyzed the results. You've got to see what gaps you have. Nice little spreadsheet, nice little chart, so the leaders can understand it. You've got to create the logic for those gaps. You've got to then validate that the logic works, because the worst thing is the SOC analysts are going to go crazy with the false positives, right? You don't really want that. You've got to tune it if it doesn't work. You've got to revalidate after you tune it, and then you can throw

it into production, hopefully. So it is a long process, especially if you're working with multiple teams, multiple tools. So you might want to start at your SIEM level and work down into your specific tools. Or if you have a large enough threat that bypasses your specific EDR, for whatever reason, you can approach it that way as well. Some EDRs, you know, they're their own worst enemies. I won't say any more, but you guys get that. So this is where we talk about quantifying the risks as well. How can we quantify the risk to an organization via adversary emulation? It's something we've racked our brains a lot about. There are so many different ways you can go about this. This is

an overly simplified example, but this is kind of how my team started, with an outline, right? We kind of said, okay, what exactly do we want to have in our formula? How can we keep it simple and not overcomplicate it, and build up and automate it from there, right? And using GitHub Actions and using Python, this is very trivially done. It's just a matter of coming up with how it impacts and what your leadership wants to see, quite truthfully. So I say the ATT&CK weights. Again, we talked about those 14 different objectives on a target from the MITRE ATT&CK framework, right? So you could say, okay, resource development, we don't

like that adversaries are going to do that, but we can't really see that in our environment unless they're in the environment scripting things and the like, and you've got a whole other problem on your hands if that's happening. So we're going to give that a weight of one. It's not really important. On the other hand, you have impact. That basically means your entire organization potentially has had ransomware run amok. That's going to be a problem. So that's going to have a heavier weight, right? The fidelity scoring band, this is for, let's say, an EDR rule: how often does that thing alert? How loud of an alert is it going to make, basically,

and is an analyst going to have five different alerts of the same thing? Are they going to just see one alert? Oh, I haven't seen that type of detection in 37 days, I should maybe pay attention to this, versus something that's just triggering nonstop. That's going to hold a lower score in that fidelity band. And then, of course, the frequency band as well. I guess I kind of explained both of them at the same time. Basically, that tells me how well the rule's performing. With those last two bits there, again, we want to minimize the work and the fatigue of the SOC analysts and the operation as a whole. So hopefully our rules are high in

fidelity, meaning they're not going to be triggering every day. When they do trigger, it should be kind of a, not all hands on deck, but hey, I want to stop what I'm doing and focus on this particular alert, because it points back to wizard monkey, or whatever threat I am particularly looking at in my organization. So that's the idea behind that there. All right. This is really interesting. So I guess we have two different case studies. One I put in here because there's a whole article about it, and I actually got to work with this individual. His name is Aaron. The link goes to, I think, his blog that kind of outlined what he found. I'm not gonna read all

this, because I know you guys can read, but essentially, this was actually a penetration test that was designed to assess the monitoring and detection capabilities of Okta's logs. And, you know, can I log in, what is it, impossible traveler? Can I log in from Japan and also log in from Charleston at the same time using VPNs or something? Or maybe, if your team travels a lot, that's a possibility too. And can I trigger alerts based off this? Right? That's a very simplified example, but you get the idea. You don't necessarily want to have multiple sessions going on, that concurrency going on, in a web application, especially if it's an administrator session. So this

was a case study where Aaron, the individual, basically found this bug. Found a gap, I should say, since specifically we're talking about the emulation piece. Found a gap in Okta's logs. Said, hey, you might want to fix this. At the very least, and I'm pretty sure that's in his blog as well, at the very least you should create an alert, create a SIEM detection to tell you when that's occurring. That way, when somebody does find it and it becomes a problem, you can kind of sift through those very easily, and you're not scrambling to create something brand new. And they didn't, and that is where the attacker stole customer-uploaded session tokens, and 134 organizations

were affected. So it's a really fascinating real-world study showing that finding a gap beforehand and proactively working with people to close that gap can avoid situations like this. And again, you can extrapolate this, right, and say, okay, instead of just alerting if maybe a big enough distance between the sessions occurred, let's just kill one of them, or kill both of them. You could extrapolate that and engineer it however your team needs to, I guess is my point here. And again, I didn't put this one down, but from my team specifically, this was pretty early on in the emulation process, but essentially, we did have a rule that triggered, right? And found some potentially nasty

behavior. I think it ended up being an insider just doing, you know, administrative things they shouldn't be doing, right? We know the engineers and the programmers, they like all their fancy tools and like to automate things and things like this. So that was good, a sigh of relief. But the fact that it led us directly to an individual and to an action, so that we could kind of perk up our ears and say, what's going on here, that was pretty fascinating. And again, we could have taken that further and said, okay, let's create a SIEM rule, sure, but integrate the EDR with that and just kill that process immediately, if we would

have liked to. And I think that is going to be it for now. Just, in summary, obviously the creation of an intelligence-driven adversary emulation program is going to help any organization improve their security posture. And I think you really can take a systematic approach to reducing risk in an organization by doing this. So I appreciate you guys coming to the talk. Please, questions, comments, concerns. If we have them right now,

we have people scratching, I think they're about to ask a question. All right. Well, hey, I appreciate everybody coming. Again, if you have a question, I'll be floating around today in and out of talks. Anything to do with purple teaming, adversary emulation, penetration testing, please come up and ask me. I'll be happy to address it, and we can discuss privately. Thanks, guys. Bye.
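The risk-quantification outline from the talk (a weight per ATT&CK tactic, a fidelity band, and a frequency band for each detection rule) could be prototyped in Python along these lines. Everything specific here is an assumption made for illustration: the weights other than "resource development = 1", the 0 to 3 band scale, the convention that a higher frequency band means the rule fires rarely, and the formula itself. The talk does not give the team's actual formula.

```python
# Hypothetical tactic weights: low for resource development, heavier for impact,
# as described in the talk. The exact numbers are invented.
TACTIC_WEIGHTS = {
    "resource-development": 1,
    "initial-access": 3,
    "lateral-movement": 4,
    "impact": 5,
}

MAX_BAND = 3  # assumed band scale: 0 (no detection) through 3 (best)


def residual_risk(tactic: str, fidelity: int, frequency: int) -> float:
    """Weight of the tactic scaled by how poorly the rule covers it.

    fidelity:  0..MAX_BAND, how trustworthy an alert from the rule is.
    frequency: 0..MAX_BAND, higher means the rule fires rarely (less noise).
    """
    for band in (fidelity, frequency):
        if not 0 <= band <= MAX_BAND:
            raise ValueError(f"band must be between 0 and {MAX_BAND}")
    coverage = (fidelity * frequency) / (MAX_BAND ** 2)
    return TACTIC_WEIGHTS[tactic] * (1 - coverage)


# Made-up rules: (tactic, behavior, fidelity band, frequency band).
rules = [
    ("resource-development", "acquire infrastructure", 0, 0),
    ("lateral-movement", "PsExec usage", 2, 3),
    ("impact", "data encrypted for impact", 3, 3),
]

# Highest residual risk first, so the top rows are the gaps to work on next.
scorecard = sorted(
    ((residual_risk(tactic, fid, freq), name) for tactic, name, fid, freq in rules),
    reverse=True,
)
for score, name in scorecard:
    print(f"{score:.2f}  {name}")
```

A script like this is small enough to run from a scheduled CI job, which fits the speaker's remark about automating the scoring with GitHub Actions and Python.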