Your Security Dashboard is Lying to You: The Science of Metrics

Hello everybody. Welcome to the fifth edition of BSides Tallinn. Oh, we have slides. Nice. This is it. This is the biggest, baddest BSides Tallinn ever. You made it. We've made it. So let's start with a round of applause because this is

Okay, next slide please. We usually start with thank you, because who has time for thank yous at the end of the event? Nobody, right? BSides is taking place here today because of all these people, everybody here. We have wonderful people who have been selected to present: the speakers. Thank you to the speakers. We have workshops, if you want to dive in and get your hands on something. We have people giving workshops, and you're attending workshops. We have the village people today. A lovely village is up and running. You must go and check it out throughout the day because you might

learn something and you might have fun. Two very important parts. Of course, the sponsors and attendees. Attendees, if you were not here, BSides in this form would not exist. We are here because of you, thanks to you, and I hope you're here because of us. So we need each other. The sponsors are an integral part of the community. Huge thanks to the sponsors who have been making everything happen, who have lent their shoulder so that we could execute this idea. Team, volunteers, staff: we have of course people who take care that you can hear me, that the slides are up, and that everybody got

breakfast and lunch. We have a lot of volunteers. The team, everybody you're seeing in blue or, what's that color? People say it's orange. Maybe it's pink. They're doing volunteer work just to make it happen. So this is the thank you slide. Let's give a thank you to all of us. [Applause] Next, the important stuff is on the next slide. What is BSides? We are growing. We have never been so large at BSides Tallinn. So, because the community is growing, we've all got to be on the same page about what BSides means. BSides is a global event and a local event at the same time. Two opposite things. So, how it

works? All infosec topics are welcome here. It could be difficult technical topics or soft topics. You will see throughout the day how much variety there is. Everybody in the local community can propose what is important and relevant to share. This is the call for papers (CFP), and of course we have invited a bunch of different people with different backgrounds to be the independent committee, the CFP board, who choose which of the proposed talks are the most relevant. Those ideas become the actual talks you're hearing and seeing today. Thanks to everybody who helped organize the event. This is the global format of BSides. Today we

should actually be giving warm greetings to BSides Hanoi in Vietnam. Yes, it was supposed to be the first BSides Hanoi today, on the same day, just showing that there's this global part going on, but they actually postponed the event to, I don't know, the 3rd of October. But the point is, whatever city in the world you go to and look for BSides, it is the same format: the talks on the stage are vetted by an independent committee. You cannot buy a talk on the stage, and that kind of guarantees that the event is going to be interesting and relevant and not sales

right? Everything else around that format is up for us to decide, us as the community. What do we want to do here? Do we want to have a village? Do we want a workshop, a beer corner? That's what we decided, but everybody has the right and privilege to pitch in and say what BSides Tallinn is. So it's not a closed-off thing where somebody else tells us what to do, besides these rules. Everything else is for us to decide: what our community looks like. Okay, this is the BSides slide. I hope we all know now what BSides is, if you didn't before. Next slide is the most important housekeeping. Type in agenda.bsides.

If you want to get a shortcut to the agenda, take a look. We pushed the first part back just 10 minutes to let everybody in, but I think most of the other parts of the agenda should be on time. We use headsets today because, of all the alternatives, we believe this is the best way to have two tracks. I hope you already got a bit of instruction. If not, the headsets have a switch underneath that turns them on, lighting up blue, and on the side there's a yellow switch with three positions. Do you want to correct me? Am I wrong? >> Yes, >> that is correct. But just saying that if you don't find a seat on the first

stage, go to the second stage. There are 200 seats there; go sit down. We are broadcasting the same thing that's happening here, and the first keynote will also be broadcast on both of the LED screens. So if you don't find a seat, please go to the other side. So there are two stages, and that's the point of the headsets. The switch here: the lower position means you hear stage one. The middle, where it turns red, means you hear stage two. Except for the keynote, which is all coming from the same speaker. I think it's on both channels, right? Both channels are broadcasting the keynote. There's also a third option. It turns green if you want

to, you know, zone out and not listen to anything. Up to you. But if you're green, then we see that you're not interested. Okay. We have workshops: first come, first served, limited capacity. We have parking and smoking. If you're that kind of person, if you need to park and smoke, P3 is the closest place to do that. We have the code of conduct. Please follow it. It's on our website and it's printed outside. This color is the correct color, yeah: orange, pink, whatever, blue shirts. Ask for help if you need it, or write us an email or on Slack. We're monitoring it constantly. That's it. Do

we have one more slide? We do. Let's go. Before I hand it over to our keynote speaker, I also want to introduce the MC for this stage. This is our legendary MC, whom you already know if you're a BSides Tallinn veteran: SC Alexander Papuff. There he is.

>> Let's see. Oh, cool. Hi. Welcome. I'm so happy to be back at my number two favorite shopping center, this time for a good reason. I've never seen so many people at Tavan in one day. Wow. You know, I came in and thought they were opening another Lidl, then I remembered: nope, it's BSides. How's it going, ladies and gentlemen? You feel good? By the way, they actually do have chairs behind this lovely curtain. I know it looks a bit weird, but I'm not going to tell you to go and sit down. If you want to stand, go ahead. I'm going to stand with you, just like I stand with Jimmy Kimmel. Okay, I

said it. Welcome to BSides. While the people are trying to figure out how to get slides on the screen, I know the feeling. I'm a humanities guy. For some awkward reason, for the third year they keep calling me back. I think it's my mom. There's some kind of secret agenda. She hopes I'm going to learn math by hanging around with you. So, thank you very much. We'll see what's going to happen. Though I have a dark side as well. I tried to study IT 25 years ago. Did a lot of Counter-Strike. Didn't learn much IT. Those were the good old times, when my parents kept telling me to stop playing computer

games and go to college. Well, now I'm unemployed. Everybody else is doing esports tournaments. Thank you, mom. As Hans already said, this time BSides is a bit different. We're going to start all together as a family and then, as is customary in the post-Soviet space, we're going to split you up. Some are going to stay here, some are going to move to the other side of the curtain. It's going to be what we call kaka in this country, and some people are going to go to the village, play computer games, you know, do all the cool stuff many people nowadays do at work. This is why I love fintech center.

I recently found out that there is a job called senior fraud specialist. When I went to law school, they told me it's a felony. Now it turns out people are hiring for the position. I'm a bit confused. I mean, I hope the guys in Tartu prison know that they have a future once they get out, >> which is great. >> We're good. >> No, >> here we go. >> I forgot one thing. >> All right. I guess you're all wondering what that weird thingy is, that tracking device around your necks. >> It's tracking device. Sorry, well, I should not have said that. >> This is the badge. >> It has a switch in case your badge is

not blinking or has no lights. There's a switch at, let's say, 4:00, if you look at it like a watch. If you switch it on, it should start blinking. And then there are some goodies hidden around it, and you may find and discover them. If you need help, there's a special area in the village where this thing gets even more features. So turn it on and, in between talks, discover what it is or stop by the village. That's it. >> Amazing. Oh, they're not tracking me. That's the first time in my life history, but no worries. Coupon knows where I live. So ladies and gentlemen, without further ado, I think

it's time for me to get off the stage because plenty of people are already wondering who let the garden know. Yeah, I dress like this on purpose. It's to confuse all the straight guys I meet on the street. Yeah, I know. It's funny because I buy my clothes in H&M and I don't have any choice. I buy what I can put on myself. Thank you for the t-shirt, by the way. Besides, I needed it. So now we are ready. I see the slides hopefully are working. Yeah. Well, we're going to find out in a nanosecond because, ladies and gentlemen, we're about to find out how your security dashboard is lying to you.

Oh, I like my ex. The sciences of metrics. So, please give it up for

[Music]

All right. Hey, this is working. For the first time, I'm at a conference where the organizers are more stressed than me because of some yellowish issue. But now it's solved. So, awesome. All right. Imagine you're trying to get fitter and you get one of these, or whatever brand you like. You stand up from the couch, you take five steps, and it says, "Hey, you did good today. You did 10,000 steps." And then you go back to sit on the couch and watch TV all day long. Now, that is what many corporate security dashboards and metrics look like if you zoom in with the lens of a scientist, using the scientific approach, and that's

what my talk is going to be about. I am a little bit worried that there are people on the other side of the wall. I want to break this wall because this talk is going to be very interactive. So, people over there, I cannot see you, but when I'm asking questions, raise your hand and maybe shout. I know it's weird: I'm going to ask you questions here, not you me. So my first question to the audience: this is a vulnerability dashboard, and I'm wondering if this is a good dashboard. We have a total number of vulnerabilities and some risk score, which is calculated based on, let's call it magic for now. Who thinks this is a

good dashboard and good metrics? Nobody. Who thinks this is a bad dashboard and bad metrics? All right, good. Who didn't raise their hand? Why? Why didn't you? Who thinks it depends? Okay, good. Now, from this point on, I'm not going to give you the option "it depends". So, it's a yes or no. I have another one: training dashboards. By the way, these are things that I've generated using AI, but they are based on real stuff that I've seen in the field. Who thinks this is a good dashboard, with training completion: what percentage of a team or a business unit has completed the mandatory training? And then some total awareness training hours. Who thinks

this is a good dashboard and good metrics? Okay, a couple of hands. I don't know what's going on over there; I would assume that, statistically speaking, it's also a couple of hands. Who thinks this is a bad dashboard and bad metrics? And still the majority of people don't want to raise their hands. Don't worry, I'm not going to say it's good or bad. But I will give you, from this point on, a scientific way to measure and report completely useless things, design bad metrics, make sure your metric math makes no sense, whatever, and then dress up all these metrics in a super nice dashboard and make bad decisions. Obviously, I'm going to do the absolute opposite of that.

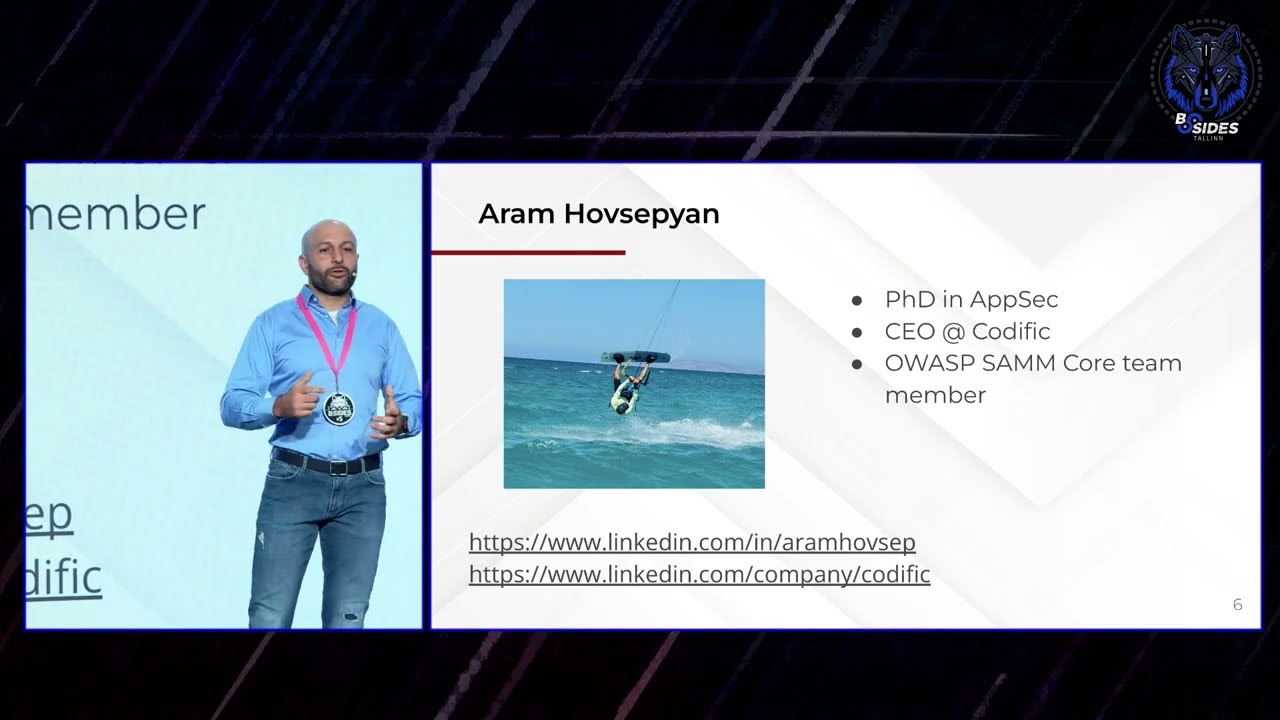

But before that, let me introduce myself. My name is Adam. My last name is pretty difficult; that's why it says H. I have sort of three hats. I used to be a PhD researcher, and I did research on AppSec, partially on metrics as well. Then I was, and still am, the CEO of a Belgian product firm, and we are the builders of the SAMMY tool, but this is not a product pitch, so that's it. And then lately, and more excitingly, I've joined the OWASP SAMM project as a core team member, where I fight cyber crime at night, or that's what I like to think of myself. Ping me on LinkedIn, connect with me. I

am, by the way, also a semi-professional kitesurfer and I like to show off, and that's the picture here. Let's do this. So again, back to you guys. Are these good metrics? Number of exploitable vulnerabilities in production. A second metric: the impact score of each of those exploitable vulnerabilities, and the likelihood score of each exploitable vulnerability. If we combine these together, who thinks these are good metrics? Oh man. Well, okay, more hands going up. Who thinks these are bad metrics? One hand. I'm not going to ask you why you think that, but you're close, actually. I made these metrics with the idea of coming up with good metrics; everybody should raise their hand here. But here's the

catch: what are we trying to do? This is the first tool I'm going to give you for looking at metrics and dashboards systematically, with a scientific lens, to see if these are good or bad metrics. It is called the Goal Question Metric (GQM) framework. The idea here is ridiculously simple, yet organizations out there are doing the complete reverse of this. I gave you some metrics on the previous slide. These are maybe good metrics, but we don't know, because we don't know what the goal is. Maybe your goal has nothing to do with vulnerabilities. Maybe the goal is completely different. So you should first start with a goal as an

organization. Your goal should be specific, related to a process or an application, with a certain quality in mind, and you should try to improve something. For instance, in this case: improve the time to fix high-risk vulnerabilities in production. Let's say this is a good goal. I'm not going to focus on how you make up a good goal. Obviously, it's a bit nuanced and not very straightforward, because saying "hey, we want to reduce risk" is, I think, not a very good goal. But you always start with the goal before you go to metrics. The second level is, you cannot really jump from

goal to metrics. This is also the second problem that is often overlooked. You first have to formulate questions to measure progress towards your goal. Here are some questions for this goal. How many high-risk vulnerabilities are there in production? Because if we want to improve that, we need to figure out what the problem is and how big it is. What is the current time to fix them? And are those times improving? Now we have the questions, and now you can go and pick some metrics. Many of these metrics are the ones I presented on the previous slide. And now these metrics make sense. They're good

metrics. I claim they're definitely not bad. And then there is also the time to deploy each vulnerability fix. So this is Goal Question Metric. By the way, this is not my idea; it comes from scientific publications, and what is shocking is that this is something from 30 years ago and we still haven't figured it out. It is a framework that was created to be used for defect management, and vulnerabilities are a type of defect. You could see them as overlapping, or vulnerabilities could be a subset of defects or security issues, but I'm not going to go further into that. There is one exception, though, to the Goal Question Metric approach, because sometimes you cannot really

easily come up with goals, because you first want to figure out what's going on. That is more of a qualitative analysis. In the case of qualitative analysis, it's okay to look into metrics directly and then iterate a couple of times: create an initial goal, go around, and then refine your goal, and basically figure out what the problem is. In this setting you're going to actually generate goals and refine them until you switch to the full GQM, or Goal Question Metric. All right, this is the first tool I gave you to look more critically at dashboards when you see one. Now once you have selected your goals and

questions, which I'm going to say is more straightforward than the actual metric science, the next question is: what makes up a good metric? What is a good metric and a bad metric? What are the key qualities and properties of metrics? Let's look at this goal. By the way, I've simplified some of the goals. If you are paying attention now, you should say this goal is not that great. I know, but stay with me. So our goal is: improve security awareness of developers. Then our question is: what is the current security awareness of developers? And then we came up with two metrics. We're going to test them. Again, this is an example. Don't go too far. I know

you want to go too far. I know him, by the way, so I'm not pointing fingers at random people here. Two metrics. We have a test: pass or fail. And the second metric is a zero to 10 score. Who thinks metric one is better? Okay. Who thinks metric two is better? Who doesn't like either? Okay. Now, put this in your RAM. I'm going to come back to this a bit later. Oh sorry, there is a third metric. By the way, who thinks metric three is better, that the best metric is metric three? Okay. So stay with me. Keep

this in your RAM. I'm going to come back to this later. Another example. The goal is to reduce the risk of getting breached. The question that you formulate is: what is the likelihood of getting exploited due to a known vulnerability in production? In this case we have two metrics, not three. I didn't skip anything on the slide. The first metric is a likelihood score which is a yes or no, using KEV. KEV stands for Known Exploited Vulnerabilities. The second metric is a likelihood score from 0 to 1 based on EPSS. And by the way, for people who are not familiar with these two: KEV is a list that says

this vulnerability has been exploited, yes or no. It has actually been exploited or it hasn't. EPSS is a prediction model. It says there is, say, a 20% chance this vulnerability is going to be exploited. It doesn't say whether it really was exploited or not. By the way, you are probably going to combine these metrics in real life, but now I'm telling you that you have to pick one. Who thinks metric one is better? Who thinks metric two is better? Okay. Now we're going to go back with some, I'm going to say, science. It's not really science; it's an introduction to science. By the way, the material I'm using here is super, super deep.
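The difference in shape between the two likelihood metrics can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the `Vulnerability` class, the identifiers, and the values are made up, and nothing here queries the real KEV list or the EPSS model. The combined ordering at the end is one possible way to use both, not a method from the talk.

```python
from dataclasses import dataclass


@dataclass
class Vulnerability:
    name: str      # made-up identifier, not a real CVE
    in_kev: bool   # metric one: on the known-exploited list, yes or no
    epss: float    # metric two: predicted chance of exploitation, 0.0 to 1.0


# Hypothetical findings in production (all values are invented).
findings = [
    Vulnerability("vuln-a", in_kev=True, epss=0.20),
    Vulnerability("vuln-b", in_kev=False, epss=0.90),
    Vulnerability("vuln-c", in_kev=False, epss=0.05),
]

# Metric one only: a yes/no answer per finding.
exploited = [v.name for v in findings if v.in_kev]

# Metric two only: a ranking by predicted likelihood.
by_epss = [v.name for v in sorted(findings, key=lambda v: v.epss, reverse=True)]

# Assumed combination: known-exploited first, then by predicted likelihood.
combined = [
    v.name
    for v in sorted(findings, key=lambda v: (v.in_kev, v.epss), reverse=True)
]

print(exploited)
print(by_epss)
print(combined)
```

The binary metric singles out one finding, the probability ranks all three, and the two disagree about which finding comes first, which is why picking only one of them loses information.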

Obviously, I don't have time; I would need a couple of days to go in depth everywhere. So I'm going to stay rather shallow, and then I'll give you resources to read more. But let's start with that. There are two qualities that make up a metric, two properties. One of them is called precision, and the first example was related to that. Precision is basically the smallest unit of measurement. If you have two rulers, ruler one has less precision than ruler two because ruler two is more fine-grained: it has millimeters versus centimeters. And in the case of our test results, metric one has less precision than metric two. And intuitively

it does seem that more precision is better. But as you've seen with metric three a couple of slides ago, having zero to, I don't know, one billion billion is not really giving us much more value. So there is some sort of cutoff or margin of error that you want to take into account. But then clearly a binary score doesn't give you that much. If you go a step further with these test results, and you get a score from 0 to 10, a four is going to tell you much more than a no or a fail. It will tell you how far this person is from being a

champion? A nine versus four is going to make a difference. So, this is why we need precision. The second property of a of a metric, I'm not still saying a good metric or bad metric. The second property is reliability. And reliability is easiest way to explain you this. You step on a scales and it gives you one value. You step on it 5 seconds later it gives you another value. You step on it again and it let's say it's your weight. You're going to toss it out. You want metrics to be metric values to be reliable which is every time you measure you get the same value within certain of threshold or margin of error. Right?

Coming back to our example with the likelihood scores; again, I'm simplifying things. You want to take these metrics together, but metric two, which is the EPSS score, has worse reliability than metric one, because KEV is going to tell you whether this has been exploited, yes or no. If you measure it 10 times across 100 days, it might go from no to yes because new information came up that it's been exploited, but it's very, very reliable. EPSS is by definition not as reliable, whoever tells you the opposite. It's a prediction over time, basically. It pulls in some data and does some magic. Honestly, I have no idea how it

actually works, but it's less reliable. But you want to take them both, because KEV is going to tell you this has been exploited, and EPSS is going to tell you what the chance is that you are actually going to get exploited. Again, I have no idea how it calculates that, but it does add some information. And then, combining these two together, there is a trade-off. It's not that you just take the best precision and the best reliability. Here's an example to illustrate that point. Again, we're looking at secure code training test results. You do a quiz, and then we have our three metrics, right? This time it's 0 to 1,000,

not 10 million million. And then I tell you we did a test with five developers with a similar level of knowledge, and these are the values that they get. Metric one: everyone passes. Metric two: two developers score 4 out of 10 and three developers score 9 out of 10. Metric three: everyone scores 300 out of 1,000. Which is the best metric? Who thinks metric one? Who thinks metric two? Who thinks metric three? Okay. By the way, it's a similar level of knowledge, so metric two is a bit shaky here. Precision-wise it's okay, 0 to 10, but it's not reliable, because two of the developers score 4 out of 10

and three score 9 out of 10. Similar level of knowledge, I meant security-wise. So from that perspective, metric two has a reliability issue. You do a test and it comes out that three of them pass and two of them technically fail. But of course at this point, if you know the science already, or even if you don't, you should tell me: hey, what if these developers had a bad day? What if they didn't understand your test? What if your test was not that great? We'll come back to that later. So we have precision and reliability. Here is a metric which is super precise and

super reliable: lines of code. Reliable because you have a tool, and it's going to give you the exact same number every time. Precise because, well, you cannot count lines of code by any other means than just counting the lines of code. Who thinks this is a good metric to measure the security risk of, let's say, getting breached? There are cases; I know it's a little bit controversial. But here's the thing: it has bad validity, and I'm going to talk about metric validity now. So we had two properties, precision and reliability; we still need validity. Even if a metric is very precise and reliable, it might have bad validity when we go back to the

questions, right? We have the metrics; we need to go back to the questions and then back to the goals. This is where validity comes in. There are three types of validity, in progressive complexity of explaining them, although only the last one is a bit tricky to explain. The first one is content validity: how much of the outcome does the metric cover? Here is an example to illustrate that. Again my infamous example with awareness of employees. Metric one is how many hours of training they completed. It doesn't tell you anything about the content. Maybe they did a training on something completely irrelevant, not even relevant for security. Maybe they

did a training on something very specific and niche. Metric two tells you how many of the top security risks they have seen throughout the training. So in this case, metric two has much better content validity. That's the claim in this case. I know the examples are a little bit superficial, but that's on purpose; otherwise I would lose most of you. The second type of validity is criterion validity, and you probably know this one. It's the correlation with the outcome: how well does my metric correlate with the outcome? Take again the same awareness trainings. Metric one tells you the percentage of

top security risks that people have seen in the training. But metric two goes deeper. Maybe people were just clicking Next, or they were not really paying attention. So we do a test right after the training. That has a much better correlation with the outcome. Metric three: we do that test, but a year after the training, so we know whether they have really retained that material. Have they really gotten it? This is criterion validity. Okay, let's go back to the example where we had the three metrics and you had to pick one. Which is the best metric? If I tell you

that these five developers with a similar level of security knowledge are also great, in the sense that they have very good knowledge of security. Who thinks metric three is the best? These people have good security knowledge, and metric three tells you that technically all of them failed. Who thinks metric two is the best? Some hands. Who thinks metric one is the best? Okay, the right answer in this case is metric one. Although it has very low precision, since it's a yes or no, given this information it has the best correlation with the outcome. So in terms of validity, it's the most valid metric. Metric two might also be interesting

although, given the sample, it would be hard to claim, statistically speaking, that metric two is the best. But say we had 100 developers and only three would fail, three would have 4 out of 10, and the rest would have nine-ish. Then you could claim that metric two seems to make more sense. But metric three is the worst, because it tells you everyone fails while they're actually good developers security-wise. And then the third type of validity, construct validity, which is the hardest to explain, is how well the metric correlates with a concept. This is also the easiest one to miss in real life. And then here is an example to

help you out a little bit. I have two examples. How well are we prepared for an actual cyber attack? Metric one is your awareness test score a year after a training. It correlates very well with the outcome. But does it correlate with the concept? The concept is actual attacks. So if we have attack simulation exercise scores a year after training, where we try to create a setting that feels like an actual attack, and we see how people react to that, that has a better correlation. And the actual concept is real attacks. It's not awareness trainings. It's real attacks, where people might also face something they haven't really seen in

the training. They might have retained all the knowledge from the training, but you might have a new type or variant of the attack, and if they don't know what's going on, then the metric might have a perfect correlation with the outcome but not with the concept. Another example to solidify this is IQ tests in the early 20th century. They were designed to measure the intelligence quotient of people. I think they were designed in the US, and local people, especially white middle-class people, would score very well on IQ tests, but all the migrants would score horribly. So some psychologists jumped to the conclusion: ah yeah, so white people are smarter than

all these migrants coming in. But the tests were essentially flawed. These IQ tests did not really measure actual intelligence; when they were created, they were measuring how much, let's call it common sense, people had. The people who grew up in America and went to the schools actually knew the stuff that was in the test. Those IQ tests had huge construct validity issues. Okay. So I've now given you a way to look at metrics and wonder: are these good metrics? Are they going to give us good validity when we go back to answering the questions and

going back to the goals? The next topic is the mathematics of these metrics. Something I haven't told you is that metrics don't have to be numeric values. They can also be qualitative. I don't have time to go into this further, but apparently qualitative, subjective metrics have been shown to be pretty good. For instance, in physical sciences or in sports: apparently, if you ask someone what their perceived level of effort was after a training, whatever they perceive subjectively is better than, or at least as good as, whatever their tracking technology is saying. That is an example of a qualitative metric, although it does end

up with a score. How hard was it, between zero and five? You still have a number, but it is a subjective thing; it's not a number that is pulled by the tooling. You can also use those to do quantitative analysis, but again, that goes too far, so I'm going to stop here, and let's look at measurement scales. Okay, which of the following statements is true? SQL injection is worse than XSS. Anyone? You don't have to take this too literally. I know you might think, oh my god, I can do a lot worse stuff with SQL injection than XSS. But strictly speaking, it would be strange. These are just binning categories. Or let's say, not SQL

injection; some other one, CSRF, is better or worse than XSS. Okay. One high vulnerability is better or worse than 10 mediums. Who thinks this is true?

One person. A CVSS score of 10 is twice as severe as a CVSS score of five. Who thinks this is true? I was expecting more hands here. And then finally... by the way, the CVSS score, just to tell you what it is: it's a vector that represents values for parameters like attack complexity, which is a value like low, medium, high; privileges required, which, I don't know it by heart, I think is none and administrative, there are probably two values; user interaction, yes or no; and impact on confidentiality, integrity, and availability. So you get these values, which are ordinal. I'll tell you in a sec what an

ordinal value is. These are just attributes. And then CVSS came up with this heuristic to translate that into a score, because that was going to be so much easier, and it is. But then it leads to other horrible things. Final statement: an EPSS score of 1 is twice as likely as an EPSS score of 0.5. Who thinks this is true? One person. By the way, only one of these statements is true, and it is the last one, because EPSS measures likelihood, which is expressed as a probability. And here is an explanation of why only the last one is true. We have measurement scales. So once we have the

metrics, once we're pulling in the values, they fall into one of four measurement scales. I have three here because I skipped the fourth one. It sits between ordinal and ratio; I didn't want to make it too complicated, because the difference between interval and ratio is very small. So the interval scale is not here, but there are four. The first one is easy. It's nominal. You just have values in bins, but you don't have any ordering there. XSS, SQL injection, CSRF: these are vulnerability types. You cannot really say, at least by design, that one is better or worse than the other in terms of ordering. So the only operation you can do using these, and now we're talking

about mathematics, is the mode operation. You can pull in all vulnerabilities and say, okay, for this system the most common vulnerability is XSS. That's the only operation you can do. You cannot start combining them with weird magic and mathematics. The next scale, and every scale gives you more value, is a more advanced scale. But what is essential here is that you cannot assume a higher scale than you actually have, because your analytics might give you completely wrong results, and you might conclude the wrong thing and say, okay, we met this goal, when actually it's not true because you used the wrong statistics, the wrong analytical methods underneath. And I'll give you an example of that. So

if you have no idea what I'm talking about, I'll show you on the next slide what I mean by that. Ordinal: we have ordering, but the intervals between the values are unknown. So we don't have any intervals. The perfect example is vulnerability impact with low, medium, high, or anything that you rate as low, medium, high. You know this thing is low, this other thing is medium, this other thing is high. But what you cannot really claim is that medium is 10 times worse than low. There is no interval there, by definition. That's how you bin them. And by the way, CVSS scores fall under the ordinal scale, although we have this weird number. Well, it's not weird.

It's a heuristic. And it's a good thing to have a number. You don't want to communicate CVSS with a ten-value vector saying H, L, M. You want to have a value which gives an idea, an approximation, but it still falls on the ordinal scale. And you have a few more operations here that you can do. You can pull a median and say the median vulnerability severity level in this data set is 6.5. But you cannot average. You cannot start combining CVSS scores and saying the average CVSS value is 5.5. That doesn't make any sense from a scientific perspective. And then we have ratio, which is the

ratio scale, which supports basically all the statistical and mathematical operations or analyses that you can do on top of it. You can pull an average as well, and EPSS scores fall in this category. If you try to express risk in dollars, you can also do that. There's actually a book, How to Measure Anything in Cybersecurity Risk, where they try to translate risk into a dollar value, and then they can do this sort of analysis on top of that. But there are very few metrics I've seen that fall in this last category. Why am I saying this? Remember the first slide with a dashboard and a risk score?
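As a rough illustration of the scales described above (this is not something shown in the talk), the sketch below picks the summary statistic each scale permits: the mode for nominal data, the median for ordinal data, and the mean for ratio data. The sample values, the `SEVERITY_ORDER` list, and the `summarize` helper are all made up for this example.

```python
from statistics import mean, median_low, mode

# Assumed ordering for an ordinal low/medium/high rating (illustrative only).
SEVERITY_ORDER = ["low", "medium", "high"]


def summarize(values, scale):
    """Return the summary statistic that the given measurement scale supports."""
    if scale == "nominal":
        # Categories without ordering: only the most common value is meaningful.
        return mode(values)
    if scale == "ordinal":
        # Ordered categories with unknown intervals: rank them, take the median.
        ranks = [SEVERITY_ORDER.index(v) for v in values]
        return SEVERITY_ORDER[median_low(ranks)]
    if scale == "ratio":
        # True zero and equal intervals: averaging is meaningful.
        return mean(values)
    raise ValueError(f"unknown scale: {scale}")


# Made-up sample data.
vuln_types = ["XSS", "SQLi", "XSS", "CSRF", "XSS"]          # nominal
severities = ["low", "high", "medium", "medium", "high"]    # ordinal
epss_scores = [0.02, 0.10, 0.30]                            # ratio (probabilities)

most_common_type = summarize(vuln_types, "nominal")
median_severity = summarize(severities, "ordinal")
average_epss = summarize(epss_scores, "ratio")

print(most_common_type, median_severity, round(average_epss, 2))
```

The point of routing everything through one helper is that asking for a mean of the nominal or ordinal lists is simply not offered, which mirrors the rule of not assuming a higher scale than the data has.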

Many tools nowadays give you that risk score. And here is what a sample calculation looks like. This is generated by AI, but I'm pretty sure it's close, because I know one tool where I know the actual formula, and it is almost there. So we calculate risk as a function of a CVSS score, an EPSS score, and then two values, prod and cloud. And here is what we have for those values. The risk is the risk of one vulnerability: for every vulnerability, we calculate the risk using this formula. And again, this is a fake formula, but the actual formula is close to this.
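The slide's formula is not reproduced in this transcript, so the sketch below uses a deliberately simple, hypothetical stand-in (`cvss * epss * prod_weight * cloud_weight`) with made-up values; it is not the slide's formula and not any vendor's. It illustrates one reason to be careful with arithmetic on ordinal inputs: relabeling CVSS with an order-preserving mapping (squaring, here) keeps every "higher than" relationship intact, yet it can flip which vulnerability comes out as riskier.

```python
def risk(cvss, epss, prod_weight=1.0, cloud_weight=1.0):
    """Hypothetical per-vulnerability risk; NOT the formula from the slide or any tool."""
    return cvss * epss * prod_weight * cloud_weight


def rank_by_risk(vulns, relabel=lambda score: score):
    """Return vulnerability names, riskiest first, after relabeling the CVSS score."""
    scored = {
        name: risk(relabel(v["cvss"]), v["epss"])
        for name, v in vulns.items()
    }
    return sorted(scored, key=scored.get, reverse=True)


# Made-up sample data: A is more severe, B is more likely to be exploited.
vulns = {
    "A": {"cvss": 9.0, "epss": 0.02},
    "B": {"cvss": 5.0, "epss": 0.04},
}

# Ranking with the CVSS numbers as published.
original = rank_by_risk(vulns)

# Ranking after an order-preserving relabeling of CVSS (9 is still above 5).
squared = rank_by_risk(vulns, relabel=lambda score: score ** 2)

print(original)  # B ranks above A
print(squared)   # A ranks above B
```

If the conclusion changes under a relabeling that preserves all the information an ordinal scale carries, the conclusion comes from the arbitrary numbers rather than from the data.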

And then that dashboard number, remember the risk widget that's at 700-something? It's going to be the average of the risk scores of your top 5% of vulnerabilities. That's your asset risk. That's the risk of your application. You get a nice shiny number, and the board is happy. They want to have green scores for most applications and maybe orange scores for others, because these numbers are too complicated. It's not like the executive board is stupid or anything; that's absolutely not what I'm trying to say. They want to see a quick snapshot. They want to see, okay, is it green, orange, or red? Do we have to invest

more time and effort? Do we have to help them more? Unfortunately, that thing makes absolutely no sense mathematically. With these risk scores, the only thing I can question is: are they useful? I don't know, maybe. But they make no mathematical sense. This is completely flawed maths. There is no such thing as doing these calculations with CVSS numbers, mainly because they come from the ordinal scale. Same for prod and cloud. I have no idea why you would want to pull non-production stuff into your risk calculation, but I'm not a tool vendor. Well, not for this conference. Okay, I have a couple of meaningful goal-question-metric examples, and I'm going to show you only one of them

because people typically ask me after this presentation: okay, so which metric shall we use, if you are saying these ASPM risk scores are bad and CVSS scores are questionable? Here's an example. We want to reduce the risk of getting breached due to an OWASP Top 10 risk in our application. Again, I picked a pretty specific goal. The question you might ask is: how many OWASP Top 10 risks have we actually mitigated in our applications? And then there is a great standard which gives you a little... so, OWASP Top 10, by the way, is a great project, but unfortunately people think that OWASP is the OWASP Top 10. That couldn't be further from

the truth. OWASP Top 10 is just a marketing tool. There are, I want to say thousands, but there are close to 300 projects in OWASP, and ASVS is one of the flagship projects, which you can actually use to create very interesting metrics. I know tools are not going to give you this, and you hate it because as an organization you want to pull in the metrics in an easy way. I'm not here to blame. But this is a metric that makes sense, because you're going to look at the ASVS controls related to the OWASP Top 10 that you have actually implemented. So you're pulling in a subset. You

don't want to make your life complicated by pulling in the whole ASVS, because then it's going to take forever. And then we go back to the qualities and the validity criteria I've mentioned. Unfortunately, we have imperfect precision and reliability. Why? Because of "implemented": if you look at a piece of code against the ASVS controls, and my friend here, who is the ASVS guru, looks at the same thing, he might say, no, you didn't do a good job, your implementation is barely done. Someone else might say, oh yeah, you did a good job. So they might end up with different counts. And that is the case for both reliability and precision, because

precision is also about what it means to have fully implemented versus partially implemented. But on the other hand, it has very strong content, criterion, and construct validity. That's what I claim for this metric. If you want more metrics, come and talk to me after the presentation. I'm going to share the slides, so you're going to get this. And now I'm going to go into the last part of my presentation. Is this seven minutes with the questions or seven minutes for me? Okay, let's say I'll leave some time for questions. Once you have your metrics, the final part is data analysis techniques. And I've sort of hinted at

that with the measurement scales, but it goes further. It's not just the measurement scales. We have four types of data analysis techniques, in progressive complexity and value. The first one is descriptive analytics. You just look at the values, pull in averages, maybe do some charts. The second one is diagnostic analytics. And actually, in security, I've almost never seen this; it almost never gets to shine. Most of the dashboards and metrics I've seen use descriptive statistics. And I have a nice example to show why we need the second one. Then we have predictive and prescriptive analytics, which go even further. They fall into the AI domain as well, where we are going to try to build a

predictive model based on certain metrics to predict the outcome of another metric. Let's say you pull in a lot of values and you build a model that will tell you what your risk is, but using the proper math, not the ASPM risk scores. A couple of examples of these. Here are security awareness test scores, where you could look at the values themselves, but it is interesting to also visualize them, and the visualizations show an interesting thing. We have completely different pictures here: here we have a single peak in the middle, and here we have two peaks. They tell you different stories. So here everyone falls around the

average and everyone needs some help. Here we have two groups of people. One of them is doing a pretty good job. The other one probably needs more help. You might ask, why are you showing this? If you just look at the numbers, the average of the two is the same. The standard deviation is also the same. So it is interesting to also visualize your data when you're doing descriptive statistics. And again, by the way, this topic is a whole course on its own, so I'm just going to give you some things that I thought were interesting, and then we'll finish. And then diagnostic analysis. I have a very interesting example. My example is

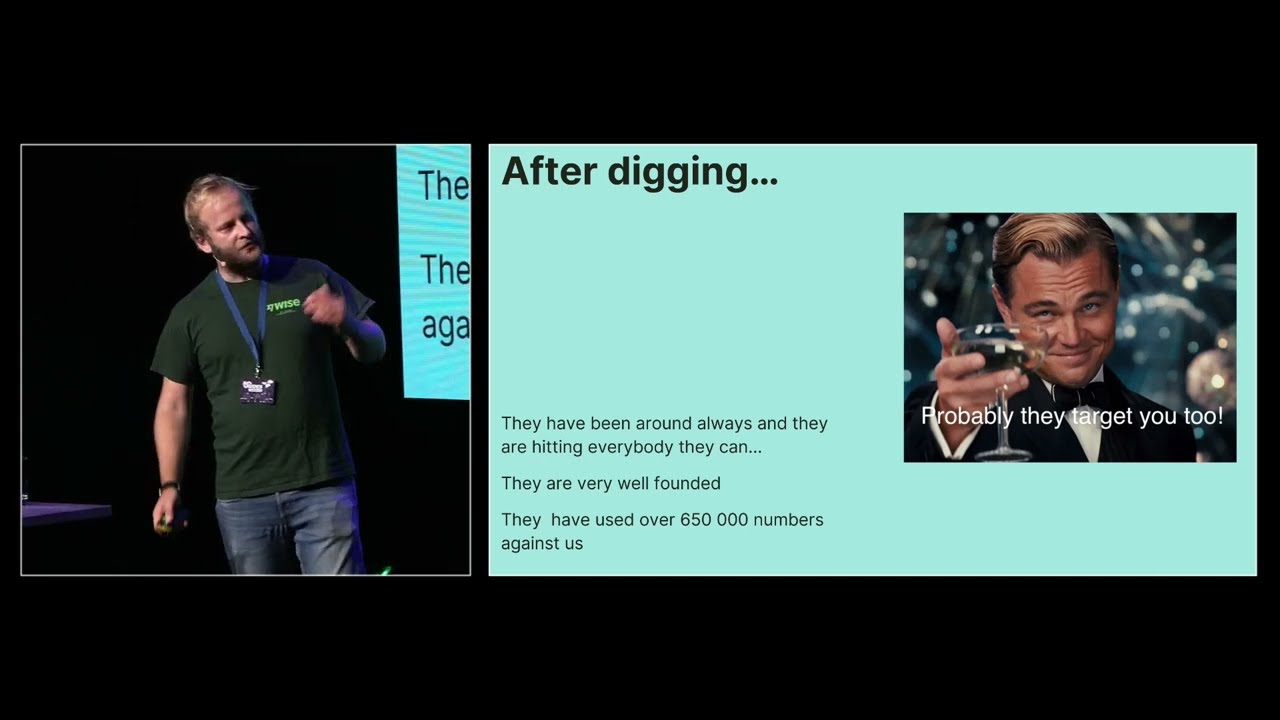

completely cooked up. I used ChatGPT to give me this, but this could also happen, and it does happen, in real life. So, we have two teams, team A and team B, and we want to see which team is faster to respond to incidents, or let's say vulnerability fix times. We have team A with an average of 38.2 hours and team B with an average of 45.5 hours. These are the detailed response times, by the way. Maybe you want to eyeball them as well. And my question is: who thinks team A is faster to respond? Who thinks team B is faster to respond? Okay, I see some hands. I don't

understand. If you look at it with descriptive statistics, the previous type of analysis, team A is much faster. Well, not much faster, but it is faster. Team A is faster; team B is not. But this is where diagnostic analysis comes in. You can't really claim anything based on this data alone, because you have to go into actual statistics and prove it. And in statistics, we cannot prove something directly. We have to disprove the opposite. That's called hypothesis testing. So if our hypothesis, based on descriptive statistics, is that team A is faster than team B, then the opposite, which is called the null hypothesis, is that both teams have the

same response times, and we're trying to reject H0 in favor of H1, our actual hypothesis. Then we pull in a statistical test called the t-test, and actually, based on the data I showed you on the previous slide, we cannot reject the null hypothesis. That means we cannot really claim team A is faster than team B. This illustrates an interesting issue, because if you just pull that data and show it to your executive board, they're going to say, ah yeah, team B needs help, they are much slower. But if you look at the actual data, you will also see that there are some outliers,

and this is the reason. Again, I cooked up this data on purpose. There are a couple of outliers, and you could also do descriptive statistics leaving these out, or look more qualitatively into it and check what happened with these issues that took so many hours and are skewing the average value. Intuitively, these couple of issues were probably a little bit too complicated, or maybe the measurement was wrong. Maybe something went wrong there and they skewed the average. If you leave out the last three data points, you're going to end up with more or less the same average. All right. Predictive analytics, the final thing. You could use this to go a step further,

where you try to predict things, and here is how it would work. I don't think anybody has ever done this. I've also heard about some privacy issues with this, and the whole idea sounded weird, but I'm still going to give it to you. So instead of measuring the awareness score of employees, where we have to make them do the test and then figure out whether it is a good test or a bad test, we can actually use some predictors which are readily available and predict that score. The predictors could be how much this person is engaged in trainings, however you're measuring that, what their role is, their tenure, meaning how many years they have been in the company, how many phishing

attempts they've reported. And then we build a predictive model that just pulls in this data and says, okay, these people are good; these people might need some more training, because we figured out they don't have good awareness based on this black magic. Again, I'm not here to say it's a good idea or a bad idea, but this is where this analysis takes us. The final one is prescriptive, but I don't have time to go into that, and the only thing I do have time for is to conclude. So, good metrics should answer meaningful questions, not convenient ones, in the sense that if you are just pulling in metrics from tools and trying to come up

with some numbers, that is perhaps not the best approach. Good metrics should be reliable, valid, and precise, and unfortunately they are pretty hard to find, so you will have to work a little bit harder to find good metrics. Your averages might tell very nice lies to your board. And your dashboard should reveal the truth and not dress up vanity metrics, which unfortunately happens too much. Thank you very much. These are some resources you can take with you, and I'm happy to hear some questions. [Applause] Amazing stuff. I always knew that privacy is overrated, but you know, statistics are with me on this one. Sadly, not European Union law, but we're not going to get into details. Uh,

any questions? Definitely now I know that I'm lying to my cardiologist every time I send stuff from this watch, >> which is good to know. I would like to ask if there are any questions from behind the curtain, because I cannot see you. I'm having a seance moment here. Somebody somewhere is going to ask a question. Wow, it has happened. Here we go. Please. >> Hello, Yanek here. Why are you speaking about averages? Why not take your worst score instead? Because your worst is how you are really performing. Your worst is your worst. >> What do you mean by worst? >> You're measuring something. This is your score.

Do you mean the ASPM risk scores? >> Because that's what the tools are doing. They pull in the average from what you have. I mean, below that risk score they're also going to show you a list of the things where you score the worst and tell you that you have to go and fix these. But organizations, especially the executive leadership, often don't want to see 100 vulnerabilities that have high impact. They want to see the average: I have 10 departments, how well is each department doing? And then, of course, the average also reflects the worst of these departments.

Does that answer your question? >> Okay. I'm happy to hear ideas on how to do the worst, the worst average. I don't know. >> Oh, that's basically every other math class in this country. Any other hypothetical? I mean... Yeah, there's a question right there. A gentleman raised his hand. >> No. Oh, >> he bailed. >> Now, this is how you learn. It's kind of like being on a Tinder date. If you raise your hand too early, she is actually going to come over. >> Statistics, not me. Statistics. Oh. Don't be afraid. I mean, we're not going to tell people if you ask questions. This is very Estonian. We don't want to show

interest because this is being recorded. >> I doubt it. At least not officially, but you know, the drones and stuff. >> You can talk to me on a coffee break, by the way. Just grab me. I know some people don't like to ask questions in front of a big audience. >> We know some people who know some people who have questions. >> Excellent. >> Things can happen. Okay, three. Going once, going twice. Okie dokie. Apparently, you know, this is what happens when you talk about statistics. People are like, "Yes, yes." Going up, going down. Amazing. Let's see what's going to happen. Give it up for a rample everybody. Thank you very much. Enjoy.