Committing CSS Crimes for fun and profit


Welcome back, ladies and gentlemen. Did you enjoy the lunch? Yes. >> Okay, now I know who ate my meat. Good. Very nice. Go have some ice cream later. Now that everybody's happy and you've been fed: as a former schoolteacher, I know how important it is to give people a reason to battle sleep before important conversations. Give them some good food. Let's see what's going to happen. Before I announce our next speaker, I just want to remind you that a workshop is about to begin on privacy by design in the age of artificial intelligence, the new battle in Estonian schools, for all the teachers. Behind the curtain, there's going to be a talk about

unleashing the crowd: lessons from building a human firewall. I remember something from history class about peoples and humans and firewalls, but maybe not the same thing. But here on the main stage, we're about to hear about committing CSS crimes. It seems that the main topic of this year's BSides is crime, organized and otherwise. Very good. It was time to make organized crime profitable. Committing CSS Crimes for Fun and Profit. Please welcome on stage Lyra.

[Music]


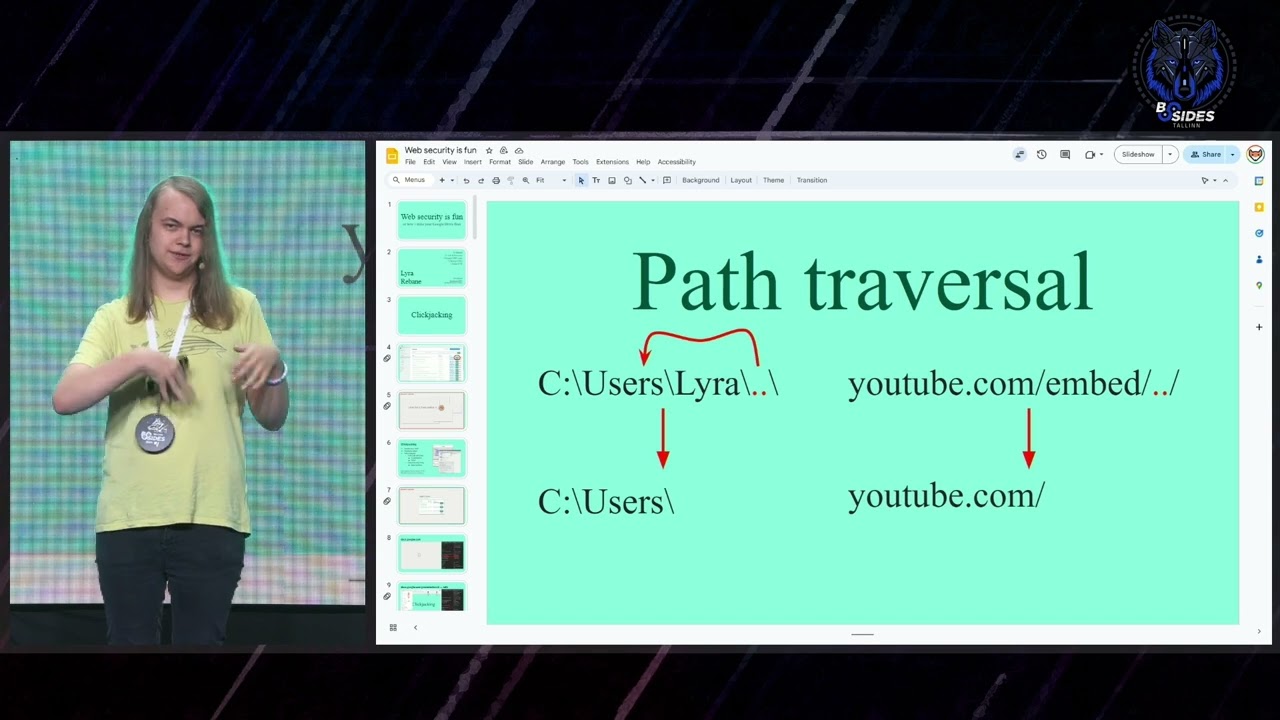

So, hello everyone. I'm going to start this off... whoa... by turning my computer off, apparently. Okay. I'm going to start off by asking a very controversial question. Show of hands: who of you thinks that CSS is a programming language? Okay, not that many. So, I am Lyra. You might also know me as Rebane. I like to play around with the web and web browsers, sometimes I find bugs, and recently I've been having a lot of fun with CSS. So let's start off with the fun. In this talk I'm going to be focusing more on the security side of things, but

I think a bit of fun is important for everyone. So let's start off with that. The story starts off on a website called Cohost, a Tumblr-like social media site which, as you can see, is no longer around. The cool thing about the site was that you could put HTML inside of your posts. Of course, this was limited: the HTML was sanitized, so you couldn't, for example, put style tags and other such tags in, but you could put styles on the elements that were allowed. What this led to was that people started making really cool-looking text posts with cool animations, colors, styles,

and effects. In the Cohost community, these became known as CSS crimes. This is something you may all be a bit familiar with. So yeah, this is also a CSS crime; you can see it's made with HTML and CSS. Pretty cool. But people started making cooler and cooler things with those CSS crimes. For example, this one here is actually interactive. It's a Windows parody where you can open the different programs and do stuff inside of them. Okay, I've shown you a lot of stuff, but how does this kind of stuff actually work? Let's try to make something like that. We start off with the details

element, which just gives you a summary that you can click on to reveal its contents. This is a very important building block for interactivity in CSS crimes. We can turn it into something more like what we saw earlier by making the content of the details element look like a window. We can make the summary look like an icon. We can move the window around so it's not stuck to the icon. And we can also clean up the little arrow there. That's how easy it is to use the details element to make something interactive, and this concept can be taken very far.

For example, this here is a point-and-click adventure game on Cohost made with just inline CSS and details elements stacked upon each other. You can see you can do a lot of stuff in there; there are multiple parts. Here's a graph of everything you can do. A lot of stuff. There are items. It's really cool. And this concept can be taken even further to create something like that, which unfortunately I won't have time to fully explain today, so I'm just going to link to a write-up on it. But this is an example of how, with one trick, you can do cool stuff like that with no JavaScript, I should add.

Now, this here is also a CSS crime, but this one is a little different. This is a story about a website called One World Story that became defunct. The story goes that even though this website no longer exists, sometimes, when you visit other social media sites, the site's logo changes to say One World Story. And the plot twist in this post, of course, is that if you were to read it on the Cohost website, you would see that the Cohost logo says One World Story. Pretty cool. How does that work? It works by using the position: fixed property, as you can see there, which pretty much lets you put an element anywhere on the page. But it's different from

absolute and relative positioning, because Cohost does have some code to make sure everything stays within the post. It's this CSS right here: you can see it sets position to relative and overflow to clip. What this means is that if you try to move elements outside of this box, they don't appear. But that doesn't apply to fixed positioning. Cohost fixed this by using the modern contain: paint property in CSS. But it's a really cool example of how a bug like that can be turned into something really fun. Okay, enough of the fun. Let's get to the profit. This is Zone webmail. I'm going to open up an email and

suddenly it's no longer Zone's webmail. So, how does that work? Well, it uses the very same position: fixed trick you just saw. It's just an example of how something like that can be used in the real world. Of course, here I needed a little sanitization bypass. The way you can make a profit with that is by seeing if there's anybody from baby in the audience who would like to pay for this. No? Okay. An alternative approach would be to do some social engineering. For example, we can make a popup up here, or we can subtly change some details around in the email, as you can see. And we can also make it interactive.

This uses the same tricks from before: as we saw on Cohost, it uses backdrop-filter for the hover effects and details and summary for the interactivity. So, I reported this vulnerability to Zone in June, and they fixed it within six business days. I got a bounty of 1.5K, though it should be noted that this was for multiple vulnerabilities, not just this one. Okay, let's take a look at another mail provider, Proton Mail. They are a company focused on privacy, so they even show on the page that there's some tracking protection or something like that. So

let's see if we can maybe bypass that. The way email trackers work is that you include a link to an image in your email, and if the user loads the image, you get some data on them. If we try this on Proton, we can see the image does not load; we have to click this little button to load it. But even if we do click this button and load the image, the IP in the request belongs to Proton. So the image is being proxied to hide user data. Okay. Can we bypass this with CSS? This is how you do a background image

in CSS. So let's try to send that as an email. And it does not work. If you look at the code, it seems that's because there's this Proton URL prefix in front, so there's some sanitization going on. Okay, let's take a look at the sanitizer. This is Proton's sanitizer, and we can see here the regex that's used to match the URLs. We can verify that by changing our URL around and seeing that it still matches. So let's try something that's not covered by the regex. I'm going to try a CSS escape, as you can see there. Now, a CSS escape like that

is still parsed as a URL, but it looks different. So it bypasses some sanitization, but we can see that Proton still manages to catch our attack there. Why is that? Well, let's take a look at the code. We can see that here's where the regex is used, and it runs on this unescaped-encoding variable, which comes from here, which comes from this function, which comes from this function, and there we go: there's the regex to detect an escape, and there's the code to unescape it. This regex looked a bit suspicious to me, so I wanted to take a closer look. You can see

that this is the regex. How do we figure out if the regex is correct? We look at the CSS spec. We can see that an escape does in fact begin with a backslash; that is correct. It has one to six hex digits, which is correct, and the regex for them also seems correct. But then we get to this space at the end, which in the spec is marked as whitespace. What's whitespace? Whitespace is a newline, a tab, or a space. That's not what the regex has: over there is just a space. What if we try a CSS escape but with a newline instead? Let's try it. So, I put a new

line in our payload, and it works. We get the URL in the sanitized output. The reason that works is that when Proton does its regex check and nothing gets changed, it just returns the original style. So let's try it out. That's the attack; I send it to myself. We can see the image loads instantly without us clicking through anything. And if you look at the requests, we can see that this request is coming from my own IP, and we also get all the browser headers, which include stuff like your operating system and your browser version. I reported this to Proton in July. It took them three business days to fix it, which is pretty cool.
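The whitespace mismatch described above can be reproduced in a few lines. The Python sketch below is a hypothetical model, not Proton's code: the two escape patterns, the naive `url(` check and the `tracker.example` URL are assumptions. One pattern follows the CSS Syntax rule that a hex escape may absorb one following space, tab or newline; the other only absorbs a literal space.

```python
import re

# CSS Syntax Level 3: backslash + 1-6 hex digits, then at most ONE whitespace
# character (space, tab, newline; CR, FF and CRLF count as newline after
# input preprocessing).
SPEC_HEX_ESCAPE = re.compile(r"\\([0-9a-fA-F]{1,6})(?:\r\n|[ \t\n\r\f])?")

# Hypothetical buggy variant: only a literal space is consumed after the digits.
SPACE_ONLY_HEX_ESCAPE = re.compile(r"\\([0-9a-fA-F]{1,6}) ?")

# Naive check applied to the unescaped text (an assumption for illustration).
URL_FUNCTION = re.compile(r"url\(", re.IGNORECASE)


def _decode(match: re.Match) -> str:
    code_point = int(match.group(1), 16)
    # The spec maps NUL, surrogates and out-of-range values to U+FFFD.
    if code_point == 0 or 0xD800 <= code_point <= 0xDFFF or code_point > 0x10FFFF:
        return "\ufffd"
    return chr(code_point)


def unescape_hex(css: str, pattern: re.Pattern) -> str:
    """Decode hex escapes only; other escapes are left untouched in this sketch."""
    return pattern.sub(_decode, css)


def sanitizer_sees_url(css: str, pattern: re.Pattern) -> bool:
    return URL_FUNCTION.search(unescape_hex(css, pattern)) is not None


# "\75" is the letter "u", so for a browser both payloads spell url(...).
space_payload = "background:\\75 rl(https://tracker.example/p.png)"
newline_payload = "background:\\75\nrl(https://tracker.example/p.png)"

if __name__ == "__main__":
    print(sanitizer_sees_url(space_payload, SPACE_ONLY_HEX_ESCAPE))    # True
    print(sanitizer_sees_url(newline_payload, SPACE_ONLY_HEX_ESCAPE))  # False: missed
    print(sanitizer_sees_url(newline_payload, SPEC_HEX_ESCAPE))        # True
```

The general point: a string-based sanitizer has to decode escapes exactly the way the browser does, because any disagreement between the two turns into a bypass.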

And because Proton's source code is public, I can also see what they did to fix it. For this, they gave me a bounty of €200, which seemed kind of low for a company like Proton. Okay, let's take a look at another privacy-focused company: Apple, with their iCloud Mail. I don't think I need to introduce it; let's just get to the CSS. We try the very same CSS as we tried before, and we can see it does get sanitized once again. The way it gets sanitized is that it just removes the URL. But now the question is: if it removes the URL like that, what would happen if

we were to have two URLs inside of each other? Would it just remove the middle one and leave the outer one intact? Yeah, that's exactly what happens. You can see the URL is still there. So we get the very same impact: we send the email, and we get the headers and IPs and so on. I reported this to Apple in July, and they took seven weeks to fix it, so quite a bit longer than Proton. But their bounty was also quite a bit higher than Proton's. Okay, let's take a look at another mail provider, Fastmail. Now, Fastmail does things a bit differently from the last two mail providers we looked at.
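The iCloud Mail behaviour boils down to deleting matches once without re-checking the result. This Python sketch assumes a simple regex that deletes `url(...)` calls (hypothetical, not Apple's implementation) and shows how an outer URL split around an inner one is reassembled by a single pass, while repeating the deletion until the output stops changing removes it.

```python
import re

# Hypothetical filter: delete every url(...) call (no nested parentheses).
URL_CALL = re.compile(r"url\([^)]*\)", re.IGNORECASE)


def strip_urls_once(css: str) -> str:
    """One pass: deleting the inner match can glue an outer url( back together."""
    return URL_CALL.sub("", css)


def strip_urls_until_stable(css: str) -> str:
    """Repeat the removal until the output stops changing (a fixed point)."""
    while True:
        stripped = URL_CALL.sub("", css)
        if stripped == css:
            return stripped
        css = stripped


# An outer "url(" split in two around an inner, throwaway url(x).
payload = "background:uurl(x)rl(https://tracker.example/p.png)"

if __name__ == "__main__":
    print(strip_urls_once(payload))          # background:url(https://tracker.example/p.png)
    print(strip_urls_until_stable(payload))  # background:
```

Looping to a fixed point closes this particular trick, but rejecting the whole declaration as soon as a URL is found is arguably simpler, since deleting text can always glue new tokens together.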

So, what do they do differently? Well, they display their emails differently. When you display your emails, you can do it inside of an iframe, which is what Proton Mail and iCloud Mail do. This is very easy to sandbox, and the CSS threats are very minimal: the only CSS threat is making outbound requests and leaking some information about the user, as you saw. Then you can put your emails inside of a shadow DOM, which is what Zone webmail does. In this case a lot of the sandboxing still happens, but you have additional threats, such as the UI spoofing you saw earlier. And then you can put the email inside of the main DOM of the page, which is what

Gmail, Yahoo and Fastmail do. This is really hard to sandbox, and it comes with a lot of different threats. To mitigate those threats, we need to sanitize a lot more in the CSS. Of course, we need to sanitize the URLs as before. But we also need to sanitize some at-rules that can make network connections, and we need to strip out some unsafe properties. And most importantly, we need to limit the scope of selectors, because if you can put CSS in an email and it can affect the rest of the page, well, that's not good. And because Fastmail has so many

different things it needs to deal with, they needed a different approach to sanitization. iCloud and Proton Mail do sanitization like this (simplified, of course): string-based sanitization, where they just run regexes and that's it. But this gets very, very difficult to get right if you want to do all the sanitization we saw before. So what Fastmail does is parser-based sanitization. The difference is that instead of looking at the text of the CSS, we let the browser parse the CSS first, so that we can use its own parser to walk through the rules. And this is similar to

DOMPurify, which is the industry standard for sanitizing HTML. And what's actually really cool is that DOMPurify has a bug bounty, and this bug bounty is sponsored by Fastmail. So that's pretty cool, I think. Let's take a look at Fastmail's sanitizer. We can see here that it gets the CSS rules. It then goes through every rule and checks the rule type, to make sure we only allow the at-rules that we can deal with. Then it sanitizes the style rules, which are the normal type of rule you see in CSS. First we need to get the selectors split up, then add prefixes to them to make

sure they can't affect any other part of the page. Then we want to get rid of all the dangerous properties. And then we just append it to the output string. Of course, this sanitizer also parses some other at-rules and works recursively where required. But okay, let's take a look at that property sanitization. We can see here that it sanitizes properties by getting their name and value and checking them against a list of allowed properties, so that only allowed properties can be used. Then the sanitizer does a position: fixed check. And this is, I

think, done in a really good way; this is how you should be doing it. And then it gets rid of external URLs. But this URL step here is very dangerous: because we're using the browser's parser to sanitize the CSS, if we mess up this regex right here, we could break everything. Of course, we then add the result to the output and return it. Let's take a look at this regex, because, well, it has the little warning triangle icon, so you know there's going to be something up with it. This is the regex that Fastmail used to use. And, well, you can see it removes a URL

from CSS as expected. But what if we try the same trick we tried on iCloud Mail and put a URL inside of a URL? Well, this doesn't work, but the reason is interesting: because Fastmail uses your browser's CSS parser, and this CSS is not valid, it never gets parsed by the browser in the first place. So this kind of attack cannot be done like that. You can work around it using tricks like that, but even then it's not very useful, because Fastmail has a very strict content security policy that doesn't allow outside images. Okay. So is there anything else we could do here? Well, if you look at this regex,

you'll notice that it doesn't differentiate between the two types of quotes. So if you make a fake URL like that, which starts with a double quote and ends with a single quote, Fastmail removes it, the rest of it just becomes CSS, and you can inject whatever CSS you want. So that's profit. Okay, so they updated their regex after I reported that vulnerability. But this regex still has one crucial issue: it doesn't understand context. What this means is that we can do something like that. You can see there that the URL is inside of a comment, yet Fastmail thinks that this URL should be removed and

thus allows us to inject our own CSS. So that's more profit. Okay, I've shown you that you can inject CSS, but what's the actual impact of doing that? I prepared this little demo for you. Don't worry, I'll show you a video. Pretty much, I just send an email that uses the trick we just saw. When I open this email, I get this little popup where I have to click through "Next, Next, Next". In reality, the Next button is bound to other buttons on the site. And you can see that just like that, just by clicking a button a few times, we have stolen a file from the

target's personal cloud storage on Fastmail and emailed it to the attacker. So you can see how powerful CSS-based attacks can be. Okay, let's take a look at another part of the sanitizer. The sanitizer must split up the selectors and prefix them, to make sure we can't affect anything outside the scope of the email. So how does that work? Well, this is the split-selectors function, and it splits the selectors on commas. But it's not that easy, because if we have a selector like that, we do not want to split it like that, because that's wrong. We need to make sure the part in the quotes does not get

split. So for that, we do this out-of-quote check to make sure it gets split correctly. Okay, there is a bug in this part of the code, and I don't know if any of you can spot it. It's very hard to spot if you don't know what you're looking for. The bug is in the backslash-escape handling, on this line here. This line should be saying index + 2, because with a regex match index like that, index 0 is before the backslash, index 1 is after the backslash, and only index 2 is after the backslash plus the escaped character. So the

goal of this backslash handling is to make sure the next character over isn't interpreted by the sanitizer. But we can see it's not actually working, because of that simple mistake. Just a side note: JavaScript's regex behavior is very goofy. For example, what do you think the output of this code would be? True, false, true. So yeah. But anyway, what this backslash bug lets us do is make the sanitizer think that something is a string, like that, when in reality we are injecting a character, such as that asterisk over there, which is a selector

that is not scoped. So we can affect the rest of the page. But we can make this attack even better: Fastmail gets rid of selectors that have curly brackets in them, so if we put a curly bracket in the selectors and trick Fastmail into thinking there are two of them, we can do attacks like that, where we break out of the rule and inject CSS once again. So that's more profit. But what's even cooler is that the location of this attack allows us to inject code like that, and code like that lets us put @import statements in the CSS. Now, usually in CSS, the @import statement has

to be the very first thing in the file, or else it will not work. But by messing with the CSS syntax like that, we can make the browser interpret that bit of CSS as being just the import. So why is it so good to have @import in our email attack? Well, having @import in your CSS injection allows you to steal data, as you can see from this research here. It's really cool research; I recommend checking it out. You can steal DOM data, you can steal text data. Now, on Fastmail it wouldn't actually work like that, because Fastmail has that CSP that blocks some of it. So, in Fastmail,

what you can do is exfiltrate single selectors at a time through the Fastmail proxy, but you can't do a crazy recursive attack like that. Okay. So I reported the first vulnerability I found to Fastmail in June, and they fixed it in 36 hours, which is amazing. Then I reported an additional vulnerability to them, and they fixed that in 35 hours as well. So that's really good, and they paid me 2.5K for it. Okay. Do you guys want another email service to look at? No? You do? Too bad. I don't have another email service to look at. I

have one more thing to show you. This is a bit of original research I've done on a completely new type of attack on the web. It all starts off with this: the liquid glass effect that Apple is forcing onto everyone, which is maybe not so nice to use sometimes. But I remember the day it came out, I thought to myself, could I recreate this in CSS? So I sat down, worked on it for an hour, and came up with a proof of concept, which actually went kind of viral, to the point that it was put in a news article. The

reason I'm showing you this news article is that it has probably one of the coolest lines I've ever seen about myself: "Samsung and others have nothing on her." But that aside, there is a real reason I'm showing you this cool effect I made. I was playing around with iframes later, and I thought to myself, what would happen if I were to apply this effect to an iframe? And to my surprise, it actually worked. The reason this is so surprising to me is that the top-level page is never supposed to be able to touch a cross-origin iframe. But somehow this effect here is able to

take pixels and move them around in content I'm not supposed to access. How does this work? The core part of the liquid glass effect is the feDisplacementMap filter from SVG. What this filter does is displace the pixels of an element based on another image. You can already see how this can do some interesting stuff, and there's the code for it, but I don't want to look at that. There are a lot of other SVG filters out there, and I want to look at this specific one: the feTile filter.
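Returning to the Fastmail URL regex from earlier in the talk: its two flaws, quotes that do not have to pair up and no awareness of comments or strings, can be illustrated with a hypothetical Python sketch. The patterns and the `url_calls_in_code` scanner are our own illustration, not Fastmail's code.

```python
from __future__ import annotations

import re

# Hypothetical pattern that accepts ANY opening quote and ANY closing quote.
ANY_QUOTES = re.compile(r"""url\(\s*["'][^)]*?["']\s*\)""", re.IGNORECASE)

# The backreference \1 forces the closing quote to be the one that opened.
PAIRED_QUOTES = re.compile(
    r"""url\(\s*(["'])(?:\\.|(?!\1).)*\1\s*\)""", re.IGNORECASE | re.DOTALL
)


def url_calls_in_code(css: str) -> list[int]:
    """Offsets of "url(" that appear outside /* comments */ and quoted strings."""
    hits: list[int] = []
    i, quote = 0, None
    while i < len(css):
        ch = css[i]
        if quote:  # inside a string: skip escapes, look for the closing quote
            if ch == "\\":
                i += 2
                continue
            if ch == quote:
                quote = None
            i += 1
        elif css.startswith("/*", i):  # skip the whole comment
            end = css.find("*/", i + 2)
            i = len(css) if end == -1 else end + 2
        elif ch in "\"'":
            quote = ch
            i += 1
        else:
            if css[i:i + 4].lower() == "url(":
                hits.append(i)
            i += 1
    return hits


sample = '/* url(x) */ a "url(y)" url(z)'

if __name__ == "__main__":
    print(bool(ANY_QUOTES.search("url(\"a')")))     # True: mismatched quotes accepted
    print(bool(PAIRED_QUOTES.search("url(\"a')")))  # False
    print([m.start() for m in re.finditer(r"url\(", sample)])  # [3, 16, 24]
    print(url_calls_in_code(sample))                # [24]
```

The scanner only shows why context matters; it ignores escapes such as `u\72l(`, so a real sanitizer would be better served by the browser's own tokenization than by yet another regex.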
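The Fastmail selector-splitting bug described earlier is an off-by-one in escape handling. This Python sketch is a hypothetical character-by-character model of such a splitter, not Fastmail's code (the `#email-body` scope and the function names are assumptions): skipping only one position after a backslash lets the escaped quote open a fake string that swallows the following commas, so the `*` selector never gets its prefix.

```python
from __future__ import annotations

SCOPE = "#email-body"  # hypothetical id of the container the email is rendered in


def split_selectors(selector_text: str, escape_step: int = 2) -> list[str]:
    """Split a selector list on top-level commas, ignoring commas inside quotes.

    A backslash escapes the next character, so the scanner must jump over
    BOTH of them (escape_step=2). escape_step=1 models the off-by-one bug:
    it skips the backslash but still interprets the escaped character.
    """
    parts: list[str] = []
    start, i, quote = 0, 0, None
    while i < len(selector_text):
        ch = selector_text[i]
        if ch == "\\":
            i += escape_step
            continue
        if quote:
            if ch == quote:
                quote = None
        elif ch in "\"'":
            quote = ch
        elif ch == ",":
            parts.append(selector_text[start:i].strip())
            start = i + 1
        i += 1
    parts.append(selector_text[start:].strip())
    return parts


def scope_selectors(selector_text: str, escape_step: int = 2) -> str:
    """Prefix every selector in the list so it only matches inside SCOPE."""
    return ", ".join(f"{SCOPE} {part}" for part in split_selectors(selector_text, escape_step))


# Browser's view: three selectors, .a\" (class a"), *, and .b[title='"'].
payload = ".a\\\", *, .b[title='\"']"

if __name__ == "__main__":
    print(scope_selectors(payload, escape_step=1))  # bug: "*" is left unscoped
    print(scope_selectors(payload))                 # every selector gets the prefix
```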
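SVG filter primitives do arithmetic on pixel values, which is what makes the "logic gates" mentioned later in the talk possible. The Filter Effects specification defines `<feComposite operator="arithmetic">` as `result = k1*i1*i2 + k2*i1 + k3*i2 + k4`, clamped to [0, 1]. The Python model below is our own idealisation, not the speaker's filter chain: it treats masks whose alpha is exactly 0 or 1 and ignores the premultiplied, limited-precision details of real browsers.

```python
from __future__ import annotations


def fe_composite_arithmetic(i1: float, i2: float,
                            k1: float = 0.0, k2: float = 0.0,
                            k3: float = 0.0, k4: float = 0.0) -> float:
    """Per-channel result of <feComposite operator="arithmetic">, clamped to [0, 1]."""
    result = k1 * i1 * i2 + k2 * i1 + k3 * i2 + k4
    return min(1.0, max(0.0, result))


# k1..k4 settings that turn 0/1 alpha masks into logic gates (our own choices).
GATES = {
    "AND": {"k1": 1.0},
    "OR": {"k2": 1.0, "k3": 1.0},            # 1 + 1 = 2 is clamped back to 1
    "XOR": {"k1": -2.0, "k2": 1.0, "k3": 1.0},
    "NOT in": {"k2": -1.0, "k4": 1.0},       # ignores in2
}


def truth_table(gate: str) -> list[tuple[int, int, float]]:
    """Evaluate a gate for every combination of 0/1 inputs."""
    return [(a, b, fe_composite_arithmetic(a, b, **GATES[gate]))
            for a in (0, 1) for b in (0, 1)]


if __name__ == "__main__":
    for name in GATES:
        print(name, truth_table(name))
```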

This feTile filter lets you tile things. So if I use it twice like that, I can use the first filter to crop out a region and the second filter to repeat it over the page. Let's say, for example, we want to detect someone hovering over this File button. When we hover over it, it goes darker. It should go darker, but I don't think you can see it on the screen very well. So yeah, if you hover over the button, it goes darker. Now let's take our tiling trick and make the original crop so small that it's a

single pixel. Now when we hover over it, it goes darker, which you can't see very well on the screen, but just imagine it goes darker when we hover over it. Okay. So we can get data on whether someone is hovering over the File button like that. But can we use this data somehow? The problem with SVG filters is that there is no way to get the data out of them. So this data about what the user is doing is stuck inside this one filter here. And filters are protected against timing attacks and so on too, so that wouldn't work either. Now, if you're one of the people who

in the beginning didn't raise their hand and don't think CSS is a programming language, you might get stuck here. But if you know how to think like a hacker, the obvious thing to do is to just run the attack logic inside the SVG. How do we do that? Well, we want to detect hovering over the File button, right? So what we can do is take our tiled color from earlier and convert it into transparency. Here you can see there's nothing, but if we hover over it with the mouse, it becomes white, and if we stop hovering, it goes transparent again. We can use this transparency as a mask for our

attack images. For example, we can have something like this, where you can see the win button is just white, but if we hover over it, it gets this color effect. We can now take a lot of these masks and combine them to make a more complex attack, because with all the color filters you can build logic gates in SVG. So the next stage here would be, for example, that once the user clicks there, we open another menu, and then the user has to click the next thing. This is incredibly useful for certain kinds of clickjacking attacks, because usually, if there's a clickjacking attack where a user has to

click seven buttons and type their password, it's very hard to trick them into doing that, because there's no interactivity and they feel like something's off. But using this trick, you can do attacks that are more complex while still feeling responsive to the user. I'm not going to go too in depth into the SVG filters because we don't have that much time, but I am going to show you a demo of a real attack I did on a real application some of you may be familiar with. So this is the demo. I have this little AI generator thing where I click a button. You can see a text box opens up.

It's a working text box, with focus and everything. I need to type in this CAPTCHA. I then press Enter or click the submit button. Looks good. But in reality, we just stole your Google Docs file. So that's pretty cool. How does it work? Let's take a look at this demo again, but this time let's see what's actually going on underneath. You can see here we are actually using the AI document generation button, and then we are typing in the prompt text. In the prompt text for the AI, we are tagging a document of our own, as you can see there. We are then tricking the user into selecting that,

and then we are making them generate the document, and this goes into the attacker's document, as you can see there. So this is an example of an attack that would pretty much not be possible with traditional clickjacking, because there is just so much going on, so many different things to look out for, that a user would see right through it. But by using SVG filters and running the attack logic within them like that, you can do it. You just saw how natural it felt. So yeah, Google gave me 3K for this bug. Okay. So, about the SVG technique: I only covered the basics here,

but within a month or so I'll be posting a full blog post about it with more detail. If you're interested in that, keep an eye on the blog. Also, if you want the slides, they are available on my website. These slides were made with my own editor; this is the first time I've used it for slides, so I hope they looked cool. But yeah, that's it. Questions? [Applause] Okay, my fellow criminals, this is your chance to get better, right here, right now. Okay, I can ask it for you: if there are any representatives of law enforcement, raise your hand.

Nobody. Okay, let's go with the crime. Any questions? Here we go. >> Thanks for the cool presentation. The first one is a comment: I am actually surprised that only Fastmail is properly using CSP headers and not the others. Do you have any idea about this? >> No, I'm not sure. I feel like more email providers that proxy images should use CSP like that. I'm not sure why they don't. It's weird. >> Okay. My main question is: do you think using CSS preprocessors, or even a framework like Tailwind, would prevent this kind of issue? >> So, okay, would using those things prevent issues? >> Yeah, as far as I've seen, the examples mostly

are pure vanilla CSS. >> The attacks I'm showing are ones where I write the code, so I don't think the choice of framework matters much. It's just that if you're parsing something like an email, you need to support vanilla CSS. And if you get an injection on a website where CSP blocks JavaScript but not styles, you can also inject your own vanilla CSS. So I don't think what you use to build your product really matters. You can use whatever is best for you.

>> Right there. >> Very cool presentation, thank you for that. How much luck have you had getting JavaScript running in webmail clients using the same techniques? >> The luck with that is very bad, because there are industry-standard tools like DOMPurify, which I showed, and it's very hard to bypass those. But with CSS there isn't a single sanitizer that everyone can use, and people don't really focus on CSS that much, so that's where you can find bugs like these. >> One, and then right there. >> Thanks, Lyra, for the presentation. This is really cool. My question is: you have reported quite a number of

incidents to the providers. How many reckless providers have you met where you report something and they don't care, they don't fix it, and it stays open in the wild and gets exploited? >> Usually I try to tell based on the vibes before I do the research, so I try to avoid those kinds of providers. I did have one email provider tell me that my report was invalid, so I don't know what's up with that. >> Over there.

>> So, first and foremost, thank you. Now I'm absolutely terrified of web pages. But the more important question is about the SVG attack vector you've just discussed. Whose job would it be to mitigate it? Would it be on the web page owner's side to ensure this attack is mitigated, or should something be done in the standard so that this weird behavior is not allowed? >> This behavior has been around for a while, and there have been a lot of attacks on it, more so on the timing-attack side of things. So I assume that it's supposed to be a part of the

standard. The web platform doesn't really want to remove things, because that breaks compatibility with old websites. I also think that this is still abusing an underlying clickjacking vulnerability, so website developers should make sure these kinds of attacks can't be done against their services. I would also like to add that there is a JavaScript API, IntersectionObserver v2, that lets you detect when an SVG filter is covering your iframe, so you can make sure the user can't do anything sensitive at that moment. >> Thank you.

>> Any more questions? At the back there. >> Hello. Thank you for your presentation. You mentioned the SVG filter for the liquid glass effect, and that it shouldn't be able to touch the underlying pixels, as you put it. Would it be possible to read the pixels and rebuild the underlying image to steal the data? Just hypothetically asking. >> It's not possible if the implementation is correct. Historically, many browsers have had timing attacks on those, so people have been able to read pixels one by one just by measuring timing. But SVG filters are supposed to be implemented in a way

where timing attacks do not work on them. So in that case you wouldn't really be able to extract the data. >> Okay, thank you. >> Anybody else?

Always good to see such a recognized group of well-established criminals all together in one place. That's cool. Okay, if there are no more questions: going once, going twice, sold. Thank you very much, Lyra. [Applause]