End-to-end Supply Chain Integrity – Stian Kristoffersen

So thank you for the introduction. Happy to be back at BSides. I work as a lead security engineer at Telenor, where I focus on software supply chain security, which this talk is a part of. We're going to focus on the integrity part today. So shout out to the people at Telenor and Hackeria that have contributed to this. Everything I'm going to show is open source at the supply chain tools organization on GitHub, so you can check it out there. It's still early days and mostly experimental, but it's also a good time to get involved if you would like to do that. So, just an introduction to try to motivate why we care about securing the software supply chain. So here we have a

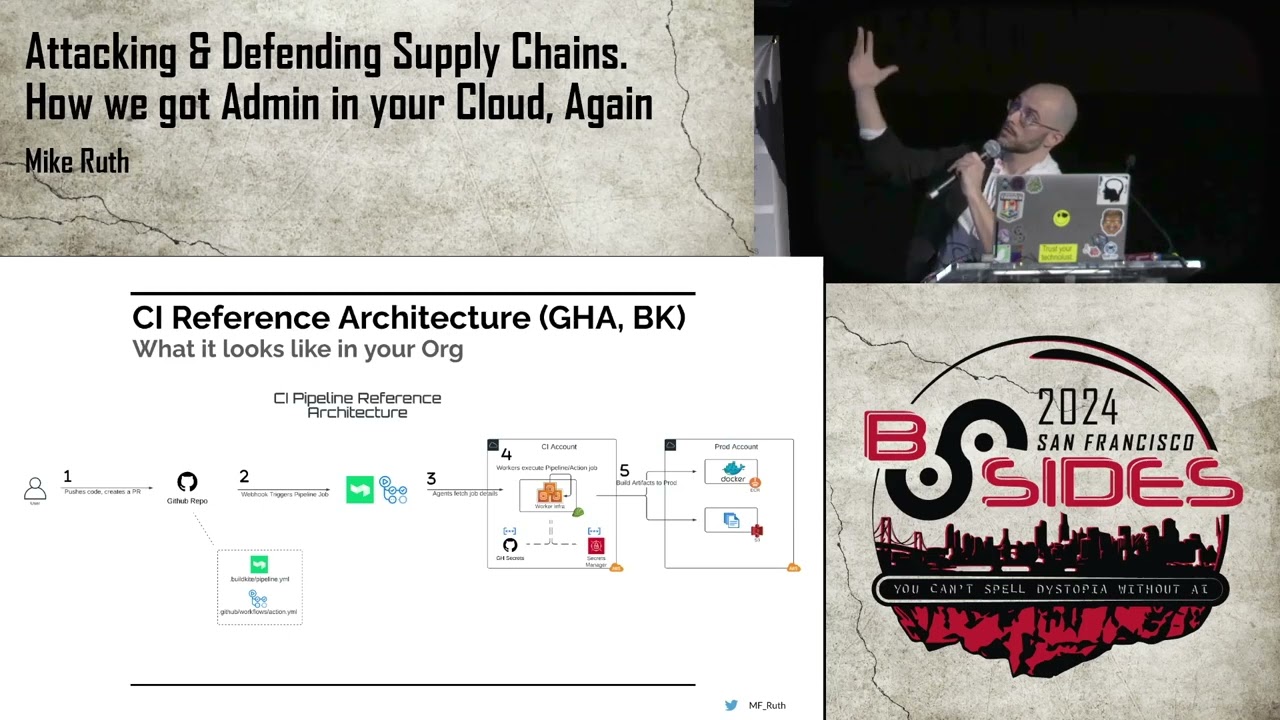

typical supply chain. You have the producer that produces some source code and uploads it to, say, a forge like GitHub. Maybe there's a pull request review before it's merged in. And then you're building the package, maybe on somebody's laptop, maybe in a GitHub Action or something like that. And then you upload it to a package registry like PyPI or npm before it's consumed by the consumer. Each package usually depends on other packages as well, so that's also part of it. SLSA has this great overview of everything that can go wrong, which is basically everywhere. On their website, they have a good list of actual real-life attacks on all of these eight places. We can divide this into three main categories. So you have your build threats, the source threats, and

dependency threats. I don't have time to give examples of all of these, so I'm just going to highlight two actual attacks. Back in 2011, kernel.org was hacked. That's the home of the Linux kernel source code. Not great, and they did some mitigations afterwards. I think most people don't think about the fact that when you upload code to GitHub or something like that, in most scenarios you rely on GitHub not getting compromised for your security. Next up, we have a build example. That was the SolarWinds hack back in 2020. It was part of a bigger attack against the US government, where the attackers put a backdoor, through the build process, into a legitimate product by SolarWinds that was used by the US government downstream. So,

these are actual attacks, and this is just two of the many things that can go wrong. And of course, you might be using different solutions for each of these steps. So hopefully that's some motivation for why we care about trying to secure all of this, which is what the rest of the talk is going to be about. And these are the parts. We're going to look at how to secure the build, the source threats, and the dependencies. We are going to use signatures to establish trust in both source code and dependencies, so we need to establish trust in the keys of the producer. We're going to look at that. And then we're going to conclude and bring everything together. So it's

worth noting that we are not covering the case where the source code that the producer creates is of poor quality or malicious. We're only looking at making sure that what the producer intended to create is what the consumer actually consumes in the end, and that it hasn't been tampered with along the way.

So first up, build integrity. We're going to start at the end there because it's more mature: there are more specifications in this area, and there's also more open source code you can use to improve the security here. First up we have the SLSA specification. Not only do they have the great overview of the threats, they also have some levels you can use to get more maturity in the build process. At build level one, you're basically saying that, okay, I have some attestation. Maybe you say that, okay, I used this script to produce this binary that has this hash. At level two, it's the same, only you sign that attestation. It should also run on a

hosted platform rather than on somebody's laptop. At level three, there are more requirements to harden the build platform. Typically that means that you're running each build in a separate VM. And also

that the signing keys should not be directly available to the build process. So even if the build process is fully compromised, it can't sign whatever artifacts it wants. We can do better than this by using transparency logs. Transparency logs can keep track of when a signing key is used. So basically, when you do a release, you record it to this immutable log. And then you can make sure that there was only one version one release, and that there's not another malicious version that exists on the side. So that's the main use case: you might be able to detect something before it happens. Or, after a compromise, you can use this for forensics to see when this thing was introduced.
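Transparency logs of this kind are typically built on Merkle trees, where a short "audit path" proves that one entry is included under a published root hash. The following is a minimal sketch of that idea, assuming the leaf/node hashing convention from RFC 6962 (Certificate Transparency). It is not Rekor's actual implementation, and the function names are made up for illustration.

```python
import hashlib


def leaf_hash(data: bytes) -> bytes:
    # A 0x00 prefix separates leaf hashes from interior node hashes.
    return hashlib.sha256(b"\x00" + data).digest()


def node_hash(left: bytes, right: bytes) -> bytes:
    return hashlib.sha256(b"\x01" + left + right).digest()


def _split(n: int) -> int:
    # Largest power of two strictly smaller than n (n >= 2).
    k = 1
    while k * 2 < n:
        k *= 2
    return k


def merkle_root(leaves: list) -> bytes:
    if len(leaves) == 1:
        return leaf_hash(leaves[0])
    k = _split(len(leaves))
    return node_hash(merkle_root(leaves[:k]), merkle_root(leaves[k:]))


def audit_path(index: int, leaves: list) -> list:
    # Sibling hashes from the leaf up to the root (top sibling last).
    if len(leaves) == 1:
        return []
    k = _split(len(leaves))
    if index < k:
        return audit_path(index, leaves[:k]) + [merkle_root(leaves[k:])]
    return audit_path(index - k, leaves[k:]) + [merkle_root(leaves[:k])]


def root_from_path(index: int, size: int, leaf: bytes, path: list) -> bytes:
    # Recompute the root from one leaf and its audit path only.
    if size == 1:
        if path:
            raise ValueError("audit path too long")
        return leaf_hash(leaf)
    if not path:
        raise ValueError("audit path too short")
    k = _split(size)
    if index < k:
        return node_hash(root_from_path(index, k, leaf, path[:-1]), path[-1])
    return node_hash(path[-1], root_from_path(index - k, size - k, leaf, path[:-1]))
```

A verifier who only knows the root hash and the log size can check an entry by comparing `root_from_path(...)` with that root; a different entry at the same position yields a different root.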

Yeah, so this is based on Merkle trees, and if you're interested, you can read up on the specifications for this stuff. We're going to use the Sigstore transparency log. Sigstore mainly consists of two projects. There's Rekor, which is the actual transparency log. And then you have Fulcio, which is a way to sign stuff on the transparency log using OIDC tokens, which is exactly what npm did when they introduced this in 2023. So basically you have your GitHub Action that is using the OIDC token of the action to be able to sign attestations that are included in the Rekor transparency log. And then you upload both the attestation and the binary to the npm

registry. And when you do that, on the npmjs.com page, if you scroll to the bottom, you'll get a provenance box with the relevant information to be able to verify that, yes, it's indeed built and released correctly. This is exactly what Homebrew did as well this year, back in May. It's still opt-in to actually verify the integrity of each Homebrew package you install, but hopefully before too long it will be on by default to verify this attestation. Both npm and Homebrew use in-toto as the attestation format. So I talked about how they both produce provenance, and basically the file format of that provenance is in-toto, which is just a structured, standardized way of

storing that data. It can also be used to further describe how each build step should work and be able to verify it. We're not going to use that here. We can do even better with reproducible builds. Google's definition: running the same build commands on the same inputs is guaranteed to produce bit-by-bit identical outputs, which means that we can always get the same binary given the same source code, basically. So that takes us to the release process we have in the example repo, which we're going to look at shortly. You push a tag to GitHub, and that triggers the SLSA workflow, which runs in a separate VM that we don't control. And the SLSA builder

is created by the SLSA people, not us. Meaning that when they produce the bits, if you trust them, you can trust that the transformation was done correctly. So it's signed with Fulcio, like for npm and Homebrew, and then included in Rekor in a similar way. Then the binary and the attestation data are uploaded as a release to GitHub, which we can then download, and we can reproduce the binary locally to verify that it's the same bits. And then we can countersign it to show that yes, we also got the same bits as the SLSA builder. So overall this gives very high assurance that what the consumer gets in the end is what was intended. So that takes us to the first demo.

So here I'm in the release prototype repository. I'm just going to quickly look at the workflow. If I scroll down to the bottom, you can see that I used the SLSA builder. This is a Go project, and it takes the source code and produces the binary. So let's look at what was produced.

So here we have release 0.0.4, and we have the binary itself, and we have the in-toto attestation. If I pop over to the terminal, I can verify that the SLSA release worked in the correct way. They have a verifier, and we're going to verify the artifact, which is this file, and we have the provenance here. We're expecting it to originate from the release prototype repository, and we expect it to be version 0.0.4. So if I run this, it passes, it's happy, meaning that the SLSA part of this passed. Then I talked about how we can reproduce the binary locally, so I'm going to do that. I have a reproducible build script which produces the

binary. We can do a SHA-256 of that, and we can then see that that's the same hash that we got in the attestation. So far I've only verified the attestation file; I didn't show it to you. So let's do that.

So DSSE stands for Dead Simple Signing Envelope, and it's the wrapper around the attestation. We just made a CLI to make it easy to extract the in-toto data. Here there's going to be a bunch of extra data from the build. If we scroll to the top here, we should be able to see that the most important part is the file name and the hash, which is the same hash as we got when we reproduced this locally. What we can see next is that there are two signatures in the in-toto attestation, not just one. So if I just get the file here, we see that there's actually a list of signatures. The first one is from

the SLSA builder and the second one is the one I countersigned with after verifying the build. So we can extract the signature into a file just using jq, and then we can verify that signature using SSH.
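The unwrapping step in the demo can be sketched roughly as follows: decode the DSSE envelope, read the in-toto statement inside it, and compare the recorded subject digest with the SHA-256 of a locally reproduced binary. The envelope and statement field names follow the public DSSE and in-toto formats; the function names are hypothetical, and this sketch does not verify any signature, it only inspects the payload.

```python
import base64
import hashlib
import json

IN_TOTO_PAYLOAD_TYPE = "application/vnd.in-toto+json"


def decode_envelope(envelope_json: str):
    """Return (statement, signatures) from a DSSE envelope.

    This only unwraps the payload; it does NOT check the signatures.
    """
    envelope = json.loads(envelope_json)
    if envelope.get("payloadType") != IN_TOTO_PAYLOAD_TYPE:
        raise ValueError("not an in-toto DSSE envelope")
    statement = json.loads(base64.b64decode(envelope["payload"]))
    return statement, envelope.get("signatures", [])


def subject_matches(statement: dict, name: str, artifact: bytes) -> bool:
    """True if the statement lists `name` with the SHA-256 of `artifact`."""
    local_digest = hashlib.sha256(artifact).hexdigest()
    return any(
        subject.get("name") == name
        and subject.get("digest", {}).get("sha256") == local_digest
        for subject in statement.get("subject", [])
    )
```

With two entries in `signatures`, as in the demo, each signature would still have to be verified separately with the matching tool and key before the statement is trusted.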

So it's a good signature. In the future, this should be smoother, but it was what I had time for in this demo. The end result is that we get very high assurance, in that it's verified reproducible. It's also level 3: it's built in an isolated VM. And it's also added to this transparency log so that we can make sure that this is the only version 0.0.4 build, and there's no other malicious version.

So that was the build part. Let's move on to source integrity. To warm up: in GitHub, if you don't sign your commits, it's possible to spoof the identity. The committer email and the author email are just metadata that you can tamper with. So you can pretend to be some other person that might be more trusted in the project you're trying to attack. If you sign the commits, there will be this verified box. If it's signed with a key that GitHub doesn't recognize, it's marked as unverified rather than wrong or malicious. And if you don't sign your commits, it's just going to be blank. So what you should do is sign your commits, and you should turn on vigilant mode, which

always includes the verification box, whether it's correct or not. And then you should enforce signed commits in the branch rulesets. Moving on to slightly more advanced attacks. First, we need to have a high-level understanding of how the Git metadata works. You have your branches, say main, or a tag, that point to a commit. And then each commit points to its parent, which can be one or more commits. Both the commits themselves and the annotated tags can be signed, but the pointers themselves are not signed. So that's where the attack comes in.
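To make the metadata model concrete, here is a small sketch of how Git derives object IDs (SHA-1 over a type/length header plus the object body). It builds simplified, unsigned commit objects by hand; the identity strings and the `refs` dictionary are illustrative assumptions, not output from a real repository.

```python
import hashlib

EMPTY_TREE = "4b825dc642cb6eb9a060e54bf8d69288fbee4904"  # ID of Git's empty tree


def git_object_id(kind: str, body: bytes) -> str:
    # Git hashes "<type> <size>\0" followed by the object body.
    header = f"{kind} {len(body)}".encode() + b"\x00"
    return hashlib.sha1(header + body).hexdigest()


def commit_body(tree: str, parents: list, identity: str, message: str) -> bytes:
    # The author/committer identity is free-form text supplied by the client.
    lines = [f"tree {tree}"]
    lines += [f"parent {p}" for p in parents]
    lines += [f"author {identity}", f"committer {identity}", "", message]
    return ("\n".join(lines) + "\n").encode()


alice = "Alice <alice@example.com> 0 +0000"
root = git_object_id("commit", commit_body(EMPTY_TREE, [], alice, "init"))
child = git_object_id("commit", commit_body(EMPTY_TREE, [root], alice, "change"))

# A branch is only a name pointing at a commit ID. Nothing in any commit
# hash or commit signature covers this mapping.
refs = {"refs/heads/main": child}
```

Because each commit body names its parents, a signature over a commit indirectly covers its history, while the `refs` mapping can be changed without touching any signed object.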

There is some mitigation for this, called signed pushes, that kernel.org introduced after the hack, but it's not supported by GitHub, so we can't use it there. So let's look at some attacks. This is from a paper from 2016 by Santiago et al., and these are the three main attack types. You have rollback, where you point, say, the main branch to an older commit rather than the most recent commit, and then you just hide that the more recent commit existed. So you're rolling the history back. Next we have teleportation, where instead of pointing to the right commit, you're pointing to some other commit in the repository. And at least if you're signing the commits, it needs to

point to a commit signed by the correct key, but it might still be possible to do an attack. And of course, for both rollback and teleportation, as an attacker, what you want is that there might be a known vulnerability or some other functionality that you get by doing this attack. So you're getting the source code you're looking for. Then there's deletion, where you basically just delete the branch and all the commits, which is more of a denial of service or something.
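The three attack types can be illustrated with a toy model in which a client remembers the last commit it saw for a branch and classifies the next value of that branch pointer. This is only a sketch of the attack taxonomy, assuming a plain dictionary from commit ID to parent IDs; it is not how any particular tool implements detection.

```python
from typing import Optional


def ancestors(commit: str, parents: dict) -> set:
    """All commits reachable from `commit` via parent pointers (excluding itself)."""
    seen = set()
    stack = list(parents.get(commit, []))
    while stack:
        current = stack.pop()
        if current not in seen:
            seen.add(current)
            stack.extend(parents.get(current, []))
    return seen


def classify_ref_update(old: str, new: Optional[str], parents: dict) -> str:
    if new is None:
        return "deletion"
    if new == old:
        return "unchanged"
    if old in ancestors(new, parents):
        return "fast-forward"   # normal progress: history was only extended
    if new in ancestors(old, parents):
        return "rollback"       # branch now points at an older commit
    return "teleportation"      # branch points at an unrelated commit


# Toy history: A <- B <- C on main, and F branching off A.
history = {"A": [], "B": ["A"], "C": ["B"], "F": ["A"]}
```

In this model every commit could carry a valid signature and the rollback and teleportation cases would still occur, since only the unsigned pointer changes.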

So some of this is made harder just by how Git works in general. But to get even better integrity protection, we've created a new tool called Git Release where we're verifying more of this stuff. One of the main insights in how we can verify the integrity is by using merge commits. Using merge commits in Git is optional, but if you enforce it, you get some extra properties. Basically, in a merge commit, the left parent is the previous commit on the same protected branch, and the right parent is the feature branch you're merging in. So we can use this geometry to enforce some invariants. And we can anchor this by saying that, okay, we expect this commit to exist

on this protected branch, which reduces the rollback window to these commits, because we can't know if there's a newer commit. But then each time we see a new commit, we can add that and make the window smaller. Also, this type of merge commit will not naturally occur in normal Git use, so we also prevent the teleportation attacks. I don't have time to talk more about this, but there are more details in the repository, so you can look at the threat model there. So, we have the same release process as earlier, but before we push the tag, we're going to use this Git Verify tool, and we're also going to use the Git Release tool,

which I'm now going to demo. So if I jump over to

the repository, I can run git verify, and it's validating as okay. That's great. So then it has run a bunch of rules, and the rules are listed here. We have a bunch of identities with their signing keys. We have what kind of roles the different identities have, so here we have three maintainers. We have a bunch of rules that are applied. For instance, require merge commit is true. We can also require up to date, and that tags have to be signed. We're using SSH signatures, so that's turned on. And we're also using hardware keys to sign the commits, so we can also enforce that the user actually touched the hardware key when doing

the commit. There's also support for requiring entering a PIN or something on the security key. You can optionally allow the forge itself to do commits, either merging pull requests or doing actual content changes. Then we have a list of protected branches, which is main here, and the list of repositories. We have the URI for the repository, and we have both SHA-1 and SHA-2 in case of SHA-1 collisions. And this is how we anchor it, as I mentioned. So that's verifying the source integrity. Then when you do a release, you can release using Git Release, which will create both a Git tag and also a tag link, which is an attestation like I

showed before with the binary. So let's look at how the tag looks. Here we include some extra metadata as the tag metadata field in the tag. If you look at that, we can see that there's some extra information included. We have the repository URI in here. That's to prevent a teleportation attack to a different repository. Say, doing forks in GitHub is very common, and if you do a release on a fork, you don't want that release to be mistaken for a release from the upstream repository. So that's why we include that. We have the SHA-1 and SHA-2 of the commit itself. We also have the previous tag. What this

does is that you get an ordering of all the releases. So when you send a release to the build server, typically from a forge, the forge can't omit a release or reorder the releases without the build server being able to detect that.
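The ordering property can be sketched as a simple chain check on the receiving side: each release names its predecessor, and the build server compares that with the last release it accepted. The `Release` fields and the function name here are hypothetical, and the sketch assumes the tag signatures covering these fields have already been verified elsewhere.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Release:
    tag: str
    commit: str
    previous_tag: Optional[str]  # None for the very first release


def check_release_order(last_seen: Optional[str], incoming: list) -> Optional[str]:
    """Accept releases only if they chain from the last release we saw.

    Returns the newest accepted tag, or raises ValueError on a gap or reorder.
    """
    expected = last_seen
    for release in incoming:
        if release.previous_tag != expected:
            raise ValueError(
                f"{release.tag}: expected previous tag {expected!r}, "
                f"got {release.previous_tag!r}"
            )
        expected = release.tag
    return expected
```

A check like this cannot notice that the very newest release is being withheld; it only detects gaps and reordering among the releases that are delivered.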

We also have some extra protected branches metadata, which is useful to detect more of these teleportation and rollback attacks.
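The merge-commit invariant and the anchor commit described earlier can be sketched as a walk along first parents from the branch tip back to the anchor. This is an illustrative model over a dictionary of parent lists (first parent first), under the assumption that every commit on the protected branch after the anchor must be a two-parent merge; the real tool checks considerably more than this.

```python
def verify_protected_branch(tip: str, anchor: str, parents: dict) -> int:
    """Walk first parents from `tip` until `anchor` is reached.

    Returns the number of merge commits between anchor and tip.
    Raises ValueError if the anchor is not on the first-parent chain or if a
    commit on the chain is not a two-parent merge commit.
    """
    merges = 0
    current = tip
    while current != anchor:
        commit_parents = parents.get(current, [])
        if not commit_parents:
            raise ValueError("reached the root without finding the anchor")
        if len(commit_parents) != 2:
            raise ValueError(f"{current} is not a two-parent merge commit")
        merges += 1
        current = commit_parents[0]  # left parent: previous commit on the branch
    return merges


# Toy history: anchor A, merges M1 and M2 on main, feature commits F1 and F2.
graph = {
    "A": [],
    "F1": ["A"],
    "M1": ["A", "F1"],
    "F2": ["M1"],
    "M2": ["M1", "F2"],
}
```

In this model a branch pointer moved to a feature commit such as `F2` fails because `F2` has a single parent, and a pointer moved to something older than the anchor fails because the walk never reaches the anchor.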

So that concludes this demo. Basically, we can have higher assurance in the source code and in the release itself before we continue with the same build steps as before. Tying those better together is work for the future. Next, we have dependency integrity. We're using Go, so we already get a lot of functionality from the Go language itself. It has a transparency log for the releases, with all the same benefits as I talked about already. But maybe you don't trust the hashes you get from Go itself, and you want to verify that they are consistent with the hashes from the upstream source code. So going back to the release prototype repository, I can

look at the dependencies for this project, and it's depending on the Go Sandbox project in the same org, at a given version. Then I can go upstream to that repository and see that, okay, git verify verifies that the source code integrity is correct. And then I can run go hash to recreate the checksums and see that yes, they are the same as I'm getting from Go itself. Basically, the checksums are stored in go.sum, and it's the same here as in the one we recreated. So then again, you have very high assurance that it's exactly what you expected to download as a dependency.
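As a rough illustration of what recreating a checksum involves, the sketch below follows the scheme used for Go's `h1:` hashes as implemented in `golang.org/x/mod/sumdb/dirhash` (`Hash1`): hash each file, write one `digest  name` line per file in sorted order, hash that listing, and base64-encode the result. It is a Python approximation for explanation only, not the tool from the demo, and the file names in any example input are made up.

```python
import base64
import hashlib


def h1_hash(files: dict) -> str:
    """Compute an `h1:`-style hash over a mapping of file name -> content bytes."""
    summary = hashlib.sha256()
    for name in sorted(files):
        if "\n" in name:
            raise ValueError("file names with newlines are not supported")
        file_digest = hashlib.sha256(files[name]).hexdigest()
        # One line per file: hex digest, two spaces, file name.
        summary.update(f"{file_digest}  {name}\n".encode())
    return "h1:" + base64.b64encode(summary.digest()).decode()


def parse_go_sum(text: str) -> dict:
    """Parse go.sum lines of the form `<module> <version> <hash>`."""
    entries = {}
    for line in text.splitlines():
        fields = line.split()
        if len(fields) == 3:
            module, version, digest = fields
            entries[(module, version)] = digest
    return entries
```

In Go's scheme the file names are prefixed with `module@version/`, so a recomputed hash only matches the `go.sum` entry when the same naming and the same file set are used.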

So apart from the Sigstore stuff, where we don't have to store the keys of the maintainers because you're using the OIDC token to get those keys, for the other stuff, where the maintainer is signing the commits and countersigning the binary release and stuff like that, we need to establish trust in the developer keys. This is a hard problem which many have tried to solve before. Here we're going to look at a variant based on The Update Framework. The Update Framework is a specification to establish trust in a set of keys that are then used to update the next set of trusted keys. It's what's being used in Sigstore to establish the root of trust there. So I think TUF is

pretty good, but for smaller projects it can be a bit much to set up. So I'm sort of suggesting a more lightweight version, but also with some extra metadata to protect against key reasons and stuff like that. It only lives on a branch right now, a TUF-ish branch in the root of trust repository. The main idea is that you either already trust a developer, maybe you met physically at a conference; or maybe you already trust one project, and then you can use that to establish trust in another; or simply that you're downloading source code or binaries for the first time, and then you use trust on first use to establish trust in a set of keys, and then

those keys can update themselves over time. That's the point of root.json, which is part of the TUF spec. It delegates trust to targets.json, which is also a part of TUF, which can delegate trust further to, say, the git verify JSON config I showed you. So that's sort of the idea. This is at the draft stage, but I think it could be useful. One use case could be that distros, when they package stuff, could establish trust in a project like this and then over time make sure that they're getting the right source code to package in their distros.
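The "keys update themselves" idea can be sketched as the TUF-style root rotation rule: a new root document is accepted only if its version is exactly one higher and it is signed by a threshold of keys from both the currently trusted root and the new root. To keep the sketch runnable with only the standard library, HMAC with shared secrets stands in for real public-key signatures; that substitution, the document layout, and all names are assumptions for illustration, not the TUF metadata format or the draft described in the talk.

```python
import hashlib
import hmac
import json


def canonical(doc: dict) -> bytes:
    # Deterministic encoding so signer and verifier hash the same bytes.
    return json.dumps(doc, sort_keys=True, separators=(",", ":")).encode()


def toy_sign(secret_hex: str, data: bytes) -> str:
    # Stand-in for a real signature (for example Ed25519 or an SSH signature).
    return hmac.new(bytes.fromhex(secret_hex), data, hashlib.sha256).hexdigest()


def valid_signers(root: dict, signed: dict, signatures: dict) -> set:
    """Key IDs from `root` that produced a valid signature over `signed`."""
    data = canonical(signed)
    return {
        key_id
        for key_id, signature in signatures.items()
        if key_id in root["keys"]
        and hmac.compare_digest(toy_sign(root["keys"][key_id], data), signature)
    }


def accept_new_root(trusted: dict, candidate: dict, signatures: dict) -> dict:
    if candidate["version"] != trusted["version"] + 1:
        raise ValueError("new root must be exactly one version ahead")
    for label, root in (("current", trusted), ("new", candidate)):
        if len(valid_signers(root, candidate, signatures)) < root["threshold"]:
            raise ValueError(f"not enough signatures from the {label} root keys")
    return candidate
```

Starting from a root obtained by trust on first use, a client would apply `accept_new_root` once per version step, so that each key change is authorized by the keys it replaces.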

Okay, so, to sort of sum it up.

There are a lot of things, a lot of moving parts, to harden the supply chain. For the verifier, hopefully you can reduce it to three steps: you establish trust in some keys, then you download the package, and you verify the attestation, and then you're good to go. And if you want further assurance, you can verify the integrity of the source code, and you can reproduce the build and verify all of the dependencies recursively.

And ideally, there would be more people verifying the builds and transparency logs and all of that. I think the main challenge going forward is to get something that scales, something that's easy enough for a lot of people to implement, so that it's not just the big flagship projects that use this, but it can also be used by smaller open source projects that are still important for a lot of people. So we, in Telenor, are looking for people to help secure our cloud native platform. So feel free to visit our stand or come talk with me. We also have a CTF that's live now at this address. I think it's open until 6, but you have to be physically here to be eligible for prizes.

And with that, thank you for your attention.

Thank you, Stian. Does anybody have any questions for Stian about end-to-end supply chain security? How some of this might apply to the SDLCs that you're working in? Yes. Mr. Ingebretson, over. You always sit as far away from me as you can. You mentioned that GitHub didn't have some of these features, and you sort of wanted it. How about the other vendors of Git out there, like GitLab? Is there anybody better that has implemented more of this? Also, in terms of SHA-2 support, GitLab has experimental support that they added in August. So I guess that's a bit better. In terms of signed pushes, I don't think anybody has that. GitHub, one thing they do have is that they have a private version

of Sigstore. So they basically run one Sigstore for all customers of GitHub, and then you can use that for attestations that are not public instead. The downside is that you can't do a full audit of the log when you use that.

Anybody else? I have a question. What do you think will be the main drivers to get this stuff implemented in SDLCs? Is it companies trying to manage their own risk, or do we have standards coming, or audits of existing standards, that will look closer into the vendors' SDLC? Sure. So there's some EU regulation coming. Maybe that will help. But I think for the smaller open source projects, it's making it as easy as possible to do it. So that's what npm and Homebrew and PyPI and others are working on as well, to make it as easy as possible to implement this. I think that's key. I think for companies, they're mostly going to do it internally,

right? So it's not going to be visible in most cases. All right. Anybody else? No? You're here. Yep. And you're also at the hardware hacking village. So you can find Stian there and ask him about hardware hacking or supply chain and any number of other topics, potentially. Thank you very much once again, Stian. Pleasure. Thank you.