BSidesSF 2025 - Scalably Securing Third-party Dependencies in... (Ziyad Edher, Chris Norman)

All right. Good afternoon, BSidesSF 2025. Woo! Right. Yeah. Give us some love. I'm here to introduce our next two headliners. But before I do so, one of them flew all the way from London just to be here to speak for you. Guess which one? This one or this one? Oh, you know them, don't you? No. Fair. Cheater. Cheater, cheater, pumpkin eater. All right. So, we have Ziyad and then we have Mr. Chris, the London man, right? Yeah. Thank you so much both for being here. I think you flew the furthest. I'm going to give you a special prize after. I'm going to ask for one. All right. So, guess what they're talking about? This

is a tongue twister for me. Scalably securing third-party dependencies in heterogeneous... I didn't even say that right. Environments. All right. Now, please take it away. All right. Hello. Hey, thanks for being here. This is amazing. And the screen is really big. So, that big QR code over there: as you're listening to this talk, I would love to have some questions and to just interact with you all. All right. So my name is Ziyad. This is Chris. We both work at Anthropic, where we're building and securing really large AI clusters for both training and inference. Anthropic's infrastructure is pretty unique. We have an intense threat model and we approach

supply chain security a little bit differently than you might usually see. So I'm hoping this talk is going to be an overview of how we're thinking about our threats and maybe how you can learn from that. All right. So, a hypothetical attacker here wants to compromise your infrastructure using a supply chain attack. There you are in your beautiful infrastructure, your beautiful castle. You're building things and you're getting a lot of attention, and Moneybags rolls up asking to be let into your systems. This isn't particularly unusual for Moneybags. He's well known for going around to castles and infrastructure and asking to be let in. Usually people say no, but sometimes they say yes, and

that's good for him. Obviously, you say no, because you're really responsible. You ask, "Who are you? Why are you trying to touch my stuff?" You care. You care about your infrastructure. You care about your castle. You care about what you're building and what you're using and all sorts of stuff. So, you get pretty spooked, right? Maybe he won't be so nice next time around. So, you need to build and buy a bunch of stuff that keeps him out. So, you grab a wall. You hire a guard. You maybe dig a moat or something. Whatever makes you feel good about the security of that castle over there that you reside in. So, Moneybags rolls around again and

this time he sees the wall and he's like, "Well, there's no getting past that." He's not really interested in being that aggressive. Moneybags is mostly this rich pacifist that just really wants to get into your systems. So instead of trying to confront the guard or something, he just goes and does a bunch of homework and realizes that you depend on a lot of stuff. Walls, guards, bricks, water, food, electricity, whatever it is, you need it to make your castle go, to make your infrastructure tick, to just do whatever work it is that you do. So, Moneybags thinks to himself: I don't really want to bother with these walls that kept me out. Is

there something I can do to just make the walls ineffective? And so, Moneybags finds out where you buy your walls from, goes to the owners of the wall factory, and says, "Hey, can I buy the wall factory?" And they say no, because everyone knows Moneybags is a bit of a slimebag, so they don't want to sell the factory. But he really wants to get into your systems. It's worth a lot to Moneybags to get into your castle. So, he offers a huge bribe, this exorbitant amount of money. And the factory agrees. It's not worth that much money to them. So, whatever, you know, give me a million dollars. I'll let you

in. They kind of know that folks depend on the walls that they're producing, but whatever, they'll deal with it. They'll figure out a new wall supplier if Moneybags ends up actually being a scumbag. And so now Moneybags is in control of your walls. The factory produces some new walls, some time rolls around, and you need to replace your walls. So you consume some new walls from this factory, and again, Moneybags is in control of those walls and maybe sneaks some bad components into those walls that give him control. And this is a classic supply chain attack. You want to get control over the defense mechanisms so that down the line, when

you want to conduct an attack or an operation against your target, those defense mechanisms aren't so useful anymore. And this feels a little bit too simple to be true, and maybe it is, but not really, because we've seen this happen a lot in the industry with things like libxz, PyTorch, event-stream, Codecov, and some other high-profile incidents where really it was open-source community vigilance that prevented a huge security breach, or maybe one still happened in those cases. It's as if someone noticed that Moneybags bought this factory and then started screaming at everyone, "You have to stop using the walls that this factory is producing." So this isn't super

far-fetched, and it's becoming really cheap to use a supply chain attack to get control over specific targets. Anyway, Moneybags rolls up again, this time to your castle, and he just lets himself in, right? He has control over your walls. It doesn't matter how much you've propped them up with other security controls. They're still a fundamental part of your supply chain. He's compromised a core part that you really depend on, and now you've been betrayed by your walls. And there are so many different ways to get betrayed by your walls and by other components of your critical security supply chain. Force is a really effective tactic, for example a vulnerability in the supply chain. So, compromise a

public build system and take over the packages that that build system is producing. This happened not too long ago to PyTorch nightly. There was some kind of dependency confusion attack against a core component of their build system, and attackers got control over all the packages that PyTorch nightly's builds were producing. So they had control over the packages that ended up on people's machines when they tried to pull those things. Loads of folks trust PyTorch. They trusted PyTorch nightly, and they were upgrading their dependencies over and over, and so now they're implicitly trusting Moneybags. Whether they like it or not, his code is in their hands. Another great tactic is

deception. So, trick the factory into producing whatever you want it to produce. The libxz example is a great one here, where there was some maintainer, a contributor to this libxz package, which is a critical compression library that's used in things like OpenSSH. OpenSSH is the most popular SSH client and server. And someone opened a PR against libxz, and it contained a backdoor to SSH auth. This was a contributor to a bunch of different open source repos, including libxz. Yet they opened this PR that had this very subtle backdoor. The PR was reviewed. It was approved. It was merged into

mainline and it was slated for release. The only reason that we actually ended up finding this, as far as we can tell, is because someone was just really curious why SSH was taking a few hundred milliseconds extra to conduct its auth checks. So we got pretty lucky there. Another example is event-stream, where a maintainer transferred the event-stream package to some other set of owners, and the owners decided, "Oh, event-stream is used by, I don't know, 4 million people. This would be a great way to get my malware into the hands of 4 million people." And so that's what they did. They just put a bunch of malware into the next release, sent it out, and that

was that. So again, Moneybags's code gets into your hands whether you like it or not. Another fun way to do this is by just doing these same attacks somewhere else in the chain. Folks depend on a lot of things nowadays, even for the most basic applications. If you use Create React App or whatever, you end up pulling in 100, 150 dependencies, and then try to make anything more complicated and you have a lot more. So all Moneybags needs is just one weak link in this entire thing. Just one factory that'll sell, or one corrupt person trying to commit code to

one of those repositories. So, hot take: supply chain attacks are the most effective way to infiltrate specific, highly secured environments nowadays. And I'm not saying this because I think supply chain is getting particularly worse. I don't think so. I think it's actually getting kind of better, but everything else is getting better so much more quickly than supply chain. So here's a basic comparison against some other attack vectors: software vulnerabilities, social engineering, and supply chain. We end up seeing that supply chain looks very highly leveraged, with one very important caveat, and that's that it's not scalable. So, let's say you get an RCE in some popular web framework. You're probably

going to be able to use that over and over and over, maybe for weeks, months, years, however long it takes for people to patch it. And you'll have a huge target surface area. You just develop a payload and then you toss it out and you get a bunch of free exploits. But if you try to backdoor libxz, that's going to be kind of harder, and you're probably going to get caught. We had those near misses, but you're probably going to get caught if you're trying to do something super widespread. So recon and specific targets are super useful to actually being able to execute some kind of

supply chain attack, and that isn't very scalable. I mean, there's probably a bunch of people in this room that maintain open source software. If someone came up to you and was like, "Hey, you know this 50-star GitHub repo that you own? I want to buy it for a quarter of a million dollars." I mean, if someone came up to me and said that, I would be like, "Oh, that's... wow." And then they'd be like, "Oh, I'm starting a new company and we're building something similar to that. So when I monetize, I don't want to run into issues with licensing or whatever." I'm like, "Oh, okay. That's fair.

Fine. I'll sell it. I don't care about that repo that much. I'll sell it to you." That's a lot of money. So, that might actually be Moneybags, right? That might be Moneybags going and trying to buy up real estate wherever he thinks might be useful in the future. Or it might be Moneybags realizing that you are a weak link in some long supply chain of some specific target way down the line. So it's turning out that it's a lot cheaper to buy up that random repo than it is to develop a novel RCE nowadays. So supply chain security, it's kind of brittle by design, by its

distributed design, and it's becoming a bigger and bigger target as other parts of our security maturity get better but supply chain kind of stays the way that it is. So the security industry as a whole ends up with this natural immune reaction to problems like this, which is to come up with acronyms, and SLSA, Supply-chain Levels for Software Artifacts, is one of them. You can think of it kind of like factory seals on dependencies. So SLSA tells you that an artifact, like the wall, is what you think it is and contains what you think it contains. So again, let's say the wall is, I

don't know, OpenSSL here. You want to know that OpenSSL, which is something you really, really depend on, came from the OpenSSL foundation. You want to know what went into it, when they built it, how they built it, all sorts of stuff like that. SLSA gives you a way of reasoning about that in real time and very accurately. So SLSA gives you two primary things. One is a framework by which to generate and verify dependency provenance, which is a fancy way of saying: where did this dependency come from, what makes it up, how was it built, all sorts of stuff like that. It gives you some standardized language to speak about that with other

components of your systems. And then it also gives you a core set of principles to measure your supply chain security against. So the core tenets here are things like: understand all of your dependencies and what they are, and figure out where these dependencies were made, how they were made, what they were made out of, all sorts of stuff like that. And you also want to verify that that stuff is actually true: that the dependency is what it says it is and it was made in the place that it says it was made. And you want to be able to ask yourself if that's good enough. So let's say you

wanted to, say, ensure that every dependency you pull in is signed by Google. You can describe that in the language of the SLSA framework. And this is a huge, huge step in the right direction, right? You're minimizing and you're rooting trust in very specific things, and that ultimately reduces your risk surface area and the number of places that things could go wrong. So, Moneybags again in this example: Moneybags rolls up and he's expecting to be let in by your completely compromised supply chain. The wall just isn't behaving the way that you expected it to. But in this case, SLSA kind of

gives you a heads up when the wall arrives. SLSA's like, "Oh, this isn't signed by the thing I expected it to be signed by. It didn't come from the place that I expected it to come from." So Moneybags's plans are kind of foiled. Except not always. SLSA gives you these factory seals, but it doesn't really tell you anything about the product itself or the logic of the product that you're getting. It also requires you to trust the thing that is doing the factory sealing. So for example, OpenSSL: you have OpenSSL version X. SLSA will tell you that it came from the foundation, was signed by

them. It has dependency A and it has dependency B in it, but it won't tell you anything about whether version X has any vulnerabilities or whether the OpenSSL foundation went rogue or something. And that's not the point of SLSA. The point of SLSA is to give you confidence that you're getting what you think you're getting, and it's up to you to figure out what you think you're getting. So usually this is totally fine, right? Risk surface area is already minimized here, or at least made much, much smaller. But I mentioned Anthropic's infrastructure is pretty weird. We care about our supply chain security specifically for a number of reasons. And so we want to think about

how we can go a little bit beyond the standard model of just verifying dependency provenance. The super basic way of thinking about Anthropic's infrastructure, which is kind of wrong, is as a traditional SaaS company. You maybe don't trust your development environment, but you do trust your production environment, and you promote between them and run a bunch of checks and balances in between those things. But Anthropic is really more like a shared university supercomputer. Loads of folks are running on the same set of machines, and those machines are critical pieces of infrastructure. Also, we're in a very fast-moving research environment, so folks have a lot of software that they want to deploy to these machines

constantly. We also have very powerful technology, and lots of folks are after that technology, and we have an imperative to protect it. So there's a huge target on our backs, and so just following SLSA for us might not be good enough. I'm going to hand it over to Chris, who can talk a little bit more about why. Thank you, Ziyad. So, as Ziyad mentioned, if you're a regular SaaS company with development and production, maybe you have some branch protection on your repositories, you're kind of implementing SLSA, and maybe your road map and your maturity model look like what I've got up on the screen here. So you're

understanding the dependencies that you use. Maybe you're putting on things like GitHub Dependabot, and you're using pull-request-based workflows around controlling your software supply chain. Why does this not work for Anthropic? As Ziyad alluded to, we care deeply about protecting the workflows and the security of our research environments, in addition to production and our inference environments. So pre-building, checking, vendoring, everything works well when your environments are well known. So if you have development, staging, production, canary, these well-known, homogeneous environments, the previous workflows and the off-the-shelf, out of the

box tooling will work well. But Anthropic needs the opposite of this. We have researchers that are using dynamic dependencies, often pulling in new patches or particular versions for the individual experiments that they run. And they work across many different platforms. At Anthropic we use multiple cloud providers and multiple types of accelerator chips, and so the environments themselves end up being very heterogeneous. It's worth modeling the loop as well, as we try to understand the end users of the security controls that we're trying to build: what do our researchers actually do? I've put up a very simple diagram describing the core research

loop here, and it looks like: write, run, monitor, and then analyze results. Now, our researchers spend a lot of time in the terminal. They're operating on really large compute clusters and they want to iterate really, really quickly. And so we ended up building some tooling and security controls to help protect and govern the third-party dependencies that we use. The system that we built is called Dependant. It's flexible and researcher-friendly admission control for the packages that we use. The guiding principle behind our tooling was that we wanted to focus intensely on the user experience. So a big motivating factor for us was meeting our end users

where they were and not trying to change behaviors too much. We wanted to provide clear and actionable steps. We didn't expect our users to have to read lots of long documentation to be able to adopt our security controls. We also entrust users with a big escape hatch. As I mentioned, the research environment within Anthropic is very fast-paced, and so users often needed to break the glass to be able to move forward and bypass controls in exceptional circumstances. And then finally, we wanted to define a consistent threat model, because it's frustrating for end users if they need to jump through security hoops without knowing

why. And so what does Dependant do? It manages our third-party dependencies. Specifically, it sits between the internet and our internal artifact registries. It runs admission control checks to ensure that packages that we pull into our environments and our clusters meet our security criteria. In building a system like this, you get a natural inventory of the dependencies that you actually use. And so we have interesting metadata around usage and other kinds of metrics around how packages are actually being used by our researchers. And then finally, we add thoughtful friction on adding new dependencies. And the interesting thing I think about this is that it actually contributes to a

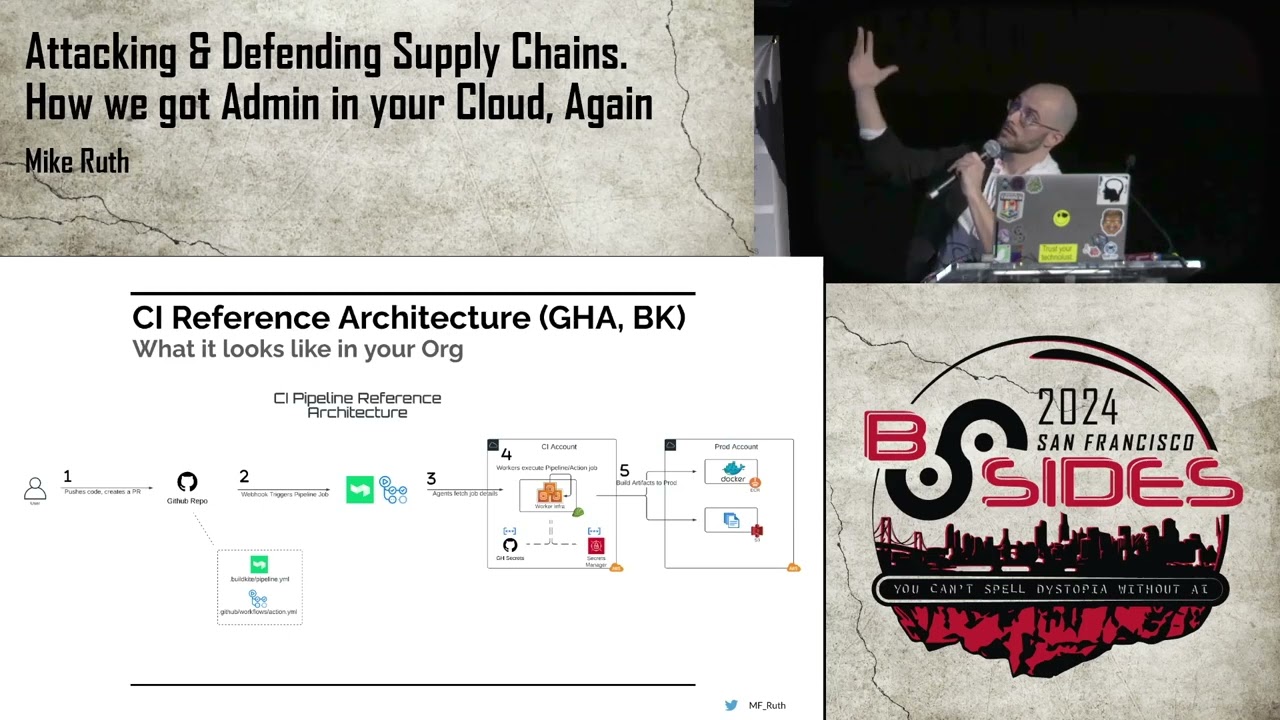

cultural shift within the company around trying to minimize third-party dependency usage, by adding a small amount of friction to bringing them into our environments. I've got a bit of an architecture diagram that I've written up here. Specifically, Dependant sits, as I mentioned before, between the upstream ecosystems and our internal package repositories. The users run a CLI command, which I'll show you in a moment, where they request dependencies. The admission control checks are run, and when they pass, the dependencies are pulled from upstream through into the internal package repositories. The workloads then communicate with the internal package repositories to actually load the dependencies at runtime. And I've got a very quick demo

to show you of some of the tooling in action. So we've got here a couple of requests and some admission checks that are being run on the dependencies. As part of this, the checks are also being run on the transitive dependencies that the packages themselves depend on. You can see here as well that we've got a search and a list of various versions that are able to be requested, and then the end users are able to go and make requests. As part of this process, our admission control checks run on the packages. Here we require some justification from users, and this is what I

mentioned around the thoughtful friction. We're trying to encourage a culture of minimizing third-party dependency usage, and these small checkpoints and touch points allow us to say, "Okay, have you considered something that we already have in our ecosystem?" I wanted to run through some of the features as well. We've got Moneybags, and he's going to get quite angry at the various ways that we're meeting users where they are. Our admission control checks in the tooling that we built are written in Python, so they're expressed as code. This is really beneficial because it allows us to apply

the same software development life cycle controls that we already have to the admission control policies. So things like testing, validating, verifying, and mandatory code review when we're changing these policies have been very beneficial with the system. We're meeting users where they are as well. And you can see Moneybags is getting a little bit angrier, because users can request new packages without actually having to leave the terminal. This was a critical component in the design of the system, because our researchers are really focused on working day-to-day in terminal environments. And then finally, Moneybags is getting really upset because it works natively with our

package managers. So when a package or a dependency is approved and transferred, it can be installed natively using tools like pip and npm. If you're building a system like this, and you want to introduce checks and balances before you pull packages in for internal use, some of the admission criteria that I'd like you to consider are things like package maturity checks, so maybe considering a time delay before bringing in brand new packages. Open source threat intelligence APIs: platforms like socket.dev are really helpful in providing additional intelligence around packages. And additionally, checking for things like vulnerabilities, and not permitting packages in if they have

critical CVEs, as well as ensuring that the license of the package is compliant with your organization. Now, it wasn't smooth sailing rolling this out, and we did face some challenges as we pushed adoption of this system. Some of the things that I wanted to highlight: for new packages we required a human review, and initially the review was centralized. This is really difficult, and it really just didn't scale, because the volume of packages was really large. This led to the second point here, around tension with engineers. So the researchers, you

know, to actually evaluate a brand new dependency, often need to build something with that dependency. So it ends up with a little bit of back-and-forth tension around "I actually need to try something out before I can request it to be approved for use on clusters." And then the final thing is that, as I mentioned in the demo, we have resolution of transitive dependencies. So often you're not just pulling in one package: to use that package, you need to know everything that the package depends on and have that approved as well. And it's really challenging to build that logic that exists in package

managers. And so finally, I'm going to hand over for questions in a moment. I just wanted to touch on our road map to protect against Moneybags in the future and open it up here for discussion. We would love to chat to you about some of the points that I've got here, particularly things like betting on Nix, the network controls that we're looking at, and also things that we're really excited about with sandboxing and isolation of third-party dependencies. Thank you very much. Wasn't that fantastic? My goodness, great job. I didn't even have to ask you to

clap. Okay, we have a few questions for these gentlemen. How might we build some shared assurance and detection systems for compromised changes coming into the OSS supply chains? This could range from typosquatting to XZ-style attacks. Yeah, this is really interesting, and I was actually just having a discussion with somebody out in one of the corridors around a similar topic. My personal take is that it would be really interesting to extend concepts like SLSA, so that maybe there are verified, trusted parties that run scans on packages. So I mentioned some of the vendors that

we work with here. I personally think that it could be interesting as an industry if we had these scanners scanning packages in the ecosystem and applying some sort of stamp or additional information and metadata saying, "Hey, we think that this package is safe." And then as consumers of the package, maybe we could look at those additional stamps to say, "Okay, we've checked it, and, say, Socket, for example, thinks that this package is safe, therefore we can pull it in." That may be an easier way to scale adoption of this. We built a lot of tooling ourselves for this,

but we have kind of had to develop a lot of this ourselves. Okay, there are numerous ways for engineers to install packages. What controls were put in place to block these and force usage through Dependant? Yeah, that's a great question. The primary thing that we want to go for here is just network-level controls. You shouldn't be able to talk to any dependency registries other than the ones that we are allowing and controlling using Dependant. So, maybe closer to the object level, realistically this means making sure that we're configuring all of our package managers to be pulling by default from the registries that we're controlling and that we're managing with

Dependant. But longer term this means no talking to pypi.org whatsoever. You don't need it anymore. All you need is our artifact registry. Okay. Final question. There's a plethora of questions. So, doesn't this approach of friction slow down the pace of development and hinder innovation, when an engineer is forced to explain their usage of a third-party package, reviewed manually by a security engineer? Yeah, another great question, and in a way, yes, sometimes this does hurt. We tried to be really thoughtful about where we introduce that friction, like Chris said, so folks can provide that reasoning directly in the flow of pulling in a dependency. And also, again, like I mentioned, we're running on

these supercomputer environments. You should be really thoughtful about the dependencies you're pulling in, because maybe they're not actually going to run well in those environments, or maybe they're going to slow things down for everyone else. So this does add some friction. It does annoy some people. But one of the core things that we try to do a lot of here is education. Why are we doing this? Why are we subjecting you to this? Here's how we could actually be compromised, and here's what you can do tangibly to prevent these types of things from happening, like running in sandboxes and whatnot. Thank you so much, gentlemen. Really appreciate that. Also, they're willing

to go to their booth on the fourth floor to answer the questions that unfortunately we didn't get to. But we are closing that level at 5:00 sharp because we have our closing ceremony in Theater 13 at 5:15. Okay. So, we really appreciate you taking the extra time. Thank you so much for coming, especially Mr. London. Not that we don't love you, Ziyad. But we do have something a little special for you. You said you were going to give Chris a special gift. Yeah, I know. This one's for you. His is different. That one looks empty. Why is it empty? [Laughter] Oh, no. It's something extra special. Fancy. All right. Thank you all so much

for coming. We appreciate it. We hope to see you next year. Let's see. Just a couple of quick announcements. Remember, 5:15 in Theater 13 will be the closing ceremony. Also, if you haven't gotten your free headshot for your LinkedIn or your portfolio, that's also closing at 5, so you might want to rush over and do that real quick. As a reminder, you can also support charities (EFF, Internet Archive, California Community Foundation) by buying a t-shirt at the coat check on the fourth floor. And they actually are the thing that closes last, at 5:30. All right. We look forward to seeing you next year. Thank you so much for coming. We appreciate you. Yes.
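
Editor's sketch: the talk describes admission checks that are written in Python and expressed as code, and suggests criteria such as a maturity delay for brand new packages, rejecting packages with critical CVEs, and license compliance. The block below is a minimal illustration of that idea only, not the speakers' implementation. Every name in it (`PackageInfo`, `admit`, the individual check functions), the 14-day delay, and the license allowlist are assumptions made up for the example. A threat intelligence lookup is left out because it would depend on a specific vendor API.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import Callable, List, Optional, Tuple


@dataclass(frozen=True)
class PackageInfo:
    """Hypothetical metadata record for one requested package version."""
    name: str
    version: str
    published_at: datetime               # upstream release time (timezone-aware)
    license: str                         # SPDX-style identifier
    critical_cves: Tuple[str, ...] = ()  # IDs of known critical CVEs, if any


# Assumed policy values, chosen only for illustration.
MIN_AGE = timedelta(days=14)
ALLOWED_LICENSES = frozenset({"MIT", "BSD-3-Clause", "Apache-2.0"})

# A check returns None when it passes, or a human-readable rejection reason.
Check = Callable[[PackageInfo, datetime], Optional[str]]


def maturity_check(pkg: PackageInfo, now: datetime) -> Optional[str]:
    """Reject releases that are younger than the required time delay."""
    age = now - pkg.published_at
    if age < MIN_AGE:
        return f"{pkg.name}=={pkg.version} is only {age.days} days old"
    return None


def vulnerability_check(pkg: PackageInfo, now: datetime) -> Optional[str]:
    """Reject packages that carry any known critical CVE."""
    if pkg.critical_cves:
        return f"{pkg.name}=={pkg.version} has critical CVEs: {', '.join(pkg.critical_cves)}"
    return None


def license_check(pkg: PackageInfo, now: datetime) -> Optional[str]:
    """Reject packages whose license is not on the allowlist."""
    if pkg.license not in ALLOWED_LICENSES:
        return f"{pkg.name}=={pkg.version} uses unapproved license {pkg.license}"
    return None


CHECKS: Tuple[Check, ...] = (maturity_check, vulnerability_check, license_check)


def admit(pkg: PackageInfo, now: Optional[datetime] = None) -> List[str]:
    """Run every check; an empty list means the package is admitted."""
    if now is None:
        now = datetime.now(timezone.utc)
    reasons = []
    for check in CHECKS:
        reason = check(pkg, now)
        if reason is not None:
            reasons.append(reason)
    return reasons
```

Because each policy is a plain function, it can be unit tested and put through mandatory code review like any other change, which is the benefit the talk attributes to policy as code. Returning every rejection reason, rather than stopping at the first, is one way to give a requester the clear and actionable feedback the speakers describe.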