StreamHash4

Show original YouTube description

Show transcript [en]

Good morning everyone. I will be talking about cryptography. I'm very happy that so many... that so many cryptographic topics have been beautifully spread at the conference this year. I think I managed to encourage people or show that it's not that scary. Today I will try to be a little bit more scary, a little bit deeper into the subject. Maybe someone met me with my program. Did anyone meet with ZetTunnel? Yes, a few people met, nice. I don't know if anyone met with libp11 or with PKCS11 engine. No one? Oh, one person met. With PKCS11 standard in general? Does anyone know what PKCS11 is? It is an API interface, through which you can communicate with cryptographic cards. For more

or less two years I have been the guardian of the interface between PKCS11 and OpenSSL. This is my new project. And here I will talk about the evolution of something I started a few years ago. The first version of this shortcut function was launched in the competition for SHA-3. It collected a very interesting cryptoanalysis. I think that was the point. The second version served me well for the doctorate. And here is another iteration, the fourth and the fifth. I will tell you what the shortcuts are, such an introduction, such a baseline, so that everyone knows what we are talking about, what we are talking about and what are the requirements for the shortcut function. We use this concept somehow plus minus

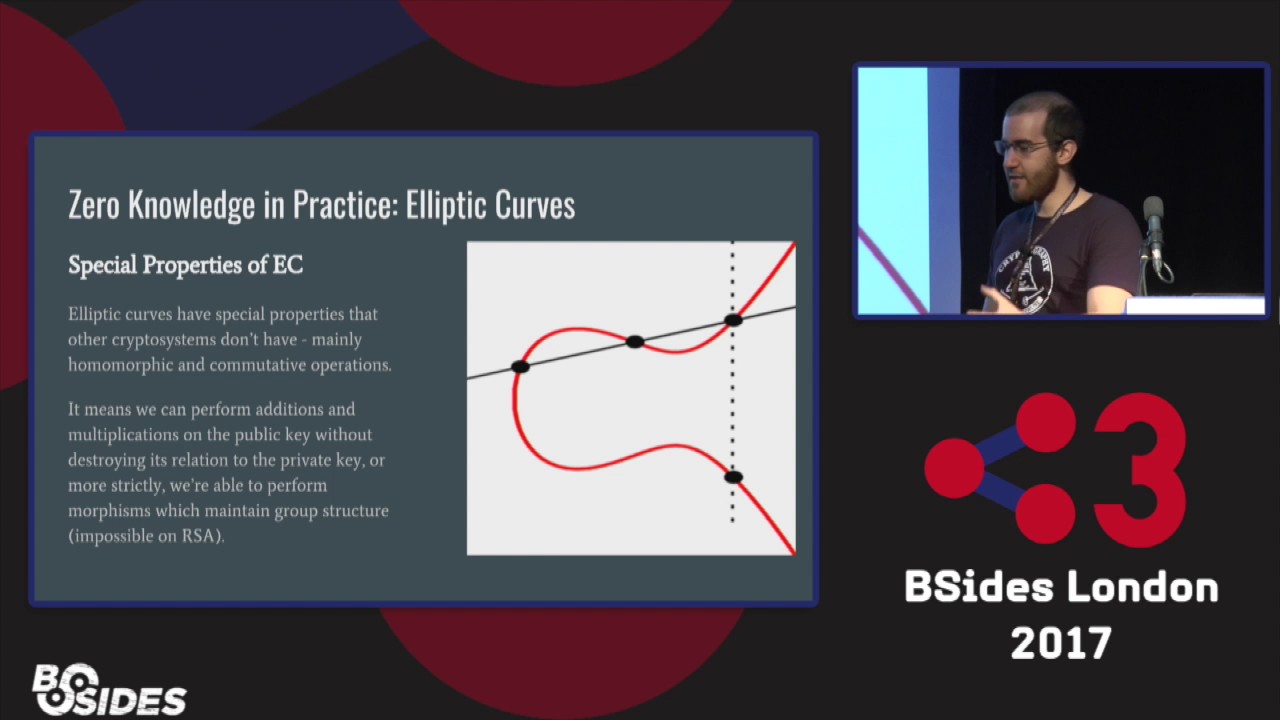

intuitively. It would be nice to systematize it. Later I will talk about these classic shortcut functions, which for many, many years, up to the function, up to the actual of the competition for SHA-3, all the shortcut functions we met were the functions based on the Merkle-Degmar-Damgard construction and I will talk about it. And then I will tell you about my idea of improving it. So, the shortcut functions. Short function is a function that has an input of any length, some string, bytes or bits, and an output of a constant set length for a given variant of the shortcut function. It would be nice if this layout, if this dependence on input to output was fairly even. These are

all the requirements we have for the shortcut function. Does anyone know this shortcut function? What is it for? For control sum and what? PESEL? Who said PESEL? I have a notebook. Congratulations. The second function is CRC32. Has anyone heard it? Does anyone know what it is for? For transmission errors. There were a few things that used floppy disks to detect errors. The third one? Some Zlibs use this kind of stuff.

Cryptographic function of a shortcut. Here is a confusion. If we have a shortcut function and a cryptographic function of a shortcut, what is the difference? The difference is that the usual shortcut function is used to detect data modifications. But not to detect target data modifications, but to detect random data modifications. Like a transmission error. For example, the first one is made to detect when someone changes two digits in reverse order. So that the control digit always changes if someone makes this kind of mistake. Or one digit changes to change. So it detects this kind of things. But of course it is done in a trivial way. generate the right code knowing the algorithm, because there are not many possibilities for this reason. In

the case of cryptographic functions, we are looking for a bit more complex properties. The first thing is unidirectionality. We would like the shortcut functions to be unidirectional. This term will come back a few times. in my presentation, so that on the basis of the output, nothing could be said about the input, about the value that is, that is shortened. Yes, the second thing, cryptologists, smarter than me, claim that it is impossible to bring it to the first or the other side, that it is a separate property, that on the basis of some one, one value of the shortcut, so that you can't find another output that gives the same function, that gives the same value, The third thing

is resistance to collision. What are collisions and what is the difference between them and finding the second counter-image? The second counter-image is the second input data which gives the same value of the shortcut as the first one. So we have one set and the second one is different and gives the same shortcut. In the case of collision, however, we are looking for two values, we have full freedom of choice of these values, two random values that give the same shortcut. Is it simpler? Yes. We'll see why in a moment. To abstract from the function, from cryptography, from the shortcomings of the function, which can be hidden somewhere inside, there is something called the birthday paradox.

As it is always explained, when we have a class, there are about 23 people in the class, we have a 50% chance that two people in the class have birthdays on the same day. And this probability then increases. with 365 days. It is translated into large numbers in such a way that if we want to ensure security at the level that is currently considered acceptable for the 2 to 128 type, for symmetric algorithms, that it is necessary to do something with 2 to 128 iterations, to make the attack effective, or the size control, then we need as many as two times as many attempts. To ensure safety, we need as much as 2 times the

length of the shortcut function. That's why the shortcuts are not shorter than 256 bits. To be more precise, the function 2 to 256, to have a 50% probability of collision, these functions must be generated in 1.2 times 2 to 128. a little more than the element of this value. So that's where it comes from. What do we use cryptographic shortcut functions for? To detect target modifications. And here are the shortcut functions. We know MD5, SH1, SH2, RIPEND was for some time such a competitor for SH1. All of these functions, except for SH3, are based on the Merkle-Damgard scheme, which I will talk about. How do we calculate the shortcut function? It's a bit like what it looks like from the

API side. We have initialization, where we set the initial values of this state, which we will update. Then we update this state many times for subsequent data portions, because we can calculate shortcuts of larger data blocks than would fit in memory. Therefore, this function can be called many times. And finalization. This is the perception. When it comes to collisions, to protect against collisions, the update must ensure them. When it comes to other properties, it is enough to ensure them finalization. And to the promised construction of the Merkel Damgard. Here things may start that not everyone will know. The construction looks like this. We have a function called compression function, which is a one-way function. It is a holy grail of cryptologists. One-way,

something is happening on one side and on the other side it is impossible. We have a mathematical construct, which I will tell you more about in a moment, which is supposed to ensure this one-way. We divide the input data stream into blocks. in case of mentioned functions, 512 bits or 1024 bits, for example SHA 512. We divide it into blocks and introduce them to the compression function input, along with the value of the previous block or at the beginning with some vector initiating value. And now, if we are able to find some two input blocks that give us the same value in this place, two blocks that give us the same value in this place, then we have

a collision. How does it look for example for SH1, such a one-way compression function? Here we have some bit rotations, here we have some simple function of the type XOR and OR, such operations of four words. Here we have five 5 32-bit words at the input, the same at the output. Here we have the module adding operation. Here we introduce a constant, which also changes. There are 4 different constants and 4 different functions f. And here we introduce a piece of these 512 bits that enter, there, processed.

And we do it all together 80 times and we believe that it is one-way, that it cannot be moved to the other side. The disadvantage of doing it 80 times is that it takes a little time, even if it is simple. It is worth noting that when we add these four things here, they reach the first fragment, the first 32-bit number, which in the next iteration, in turn, reaches the second one. So, to a certain extent, these operations can be a little parallelized, that we are able to count two rounds more or less at the same time, because we do not use the result of one round immediately. So there is a very limited possibility of parallelism.

But we are limited anyway. These 40 rounds are performed one after another and every second round depends on the previous results. These non-trivial results that we have to calculate the function f, add and so on. This is a simple rotation operation, which is simply switching bits. And the finalization. Here we come to the attack we heard about the first day, the length extension. The finalization is that at the end we write the number of bits converted, the total number of bits converted, we supplement the block to the end with zeros and we convert it normally as if it were an output block. That's why this attack is so trivial, that you just need to add this block

and then you can add anything to it and make another finalization and get another correct shortcut starting from the same number. So if the secret was at the beginning, then in the new shortcut, the secret will also be counted at the beginning. The security of this solution, generally, There are some more or less theoretical points to the structure of Merkle-Damgard itself. It can be nicely proven that if the compression function is a one-way function, behaves like random oracle, another holy grail of cryptography, then the structure is safe. However, in practice, cryptologists show that if this function is not entirely ideal, then these properties allow, for example, to replace blocks with places, if only some fragments, blocks' lines are found, which have the same

input and output, then they can be replaced with places, because each block is identical, each compression function is exactly identical.

and length extension, which I've mentioned before. Efficiency. If we have to ensure one-way performance in every block, in every iteration, then unfortunately there is no chance to work super fast. Also the idea of multiple iterations, the function of the round to obtain compression function,

it is not very easy to be parallelized. For the simple reason that each next operation must use the results of the previous iteration. In this case, the SH1, which we talked about, every other. But there is a lot of it. So here I saw a possibility of improving this solution. And we come to my idea. The idea is that if it really is, as it seems to me, that one-way is required only in finalization, then let's not do it at every step. Let's not do it at every step. Let's not convert every block of data so that it cannot be reversed, because there is no such need. Because it is wasting time that we can use for

something else. The idea is that instead of having a block of data that we iterate, that we process with subsequent batches of input data, we divide the input data into fragments of 128 bits, not 512, so a little shorter, and we process them, we XOR them from with 128-bit state vector. It is a 4-element state vector. We process these 4 elements of the state vector completely independently, using 4 different non-linear functions. This is a common method for the whole StreamHash family, with accuracy to the number of blocks. One difference concerns the last state vector, where apart from In addition to the previous value and the input value, we also doxorize the number of bits converted so far.

What is the use of these two things? Firstly, it makes it impossible to do all these attacks related to block transitions, because each operation is different. and the second one is the finalization of the case in the Merkle-Damgard construction, which means writing down the number of bits converted so far. In the case of Merkle-Damgard, it is writing down the number of all the bits converted. I write down the number of bits converted so far every time, which means that in each block with exception of the last one, it will be the multiplicity of 128. In the last block it can be a number smaller than 128. This increase in the number of bits converted. And the finalization. The finalization is done

by remembering the state of the function. I remind you, the state of the function is these four 128-bit numbers. I will remember this value. I reset the state and perform 12 iterations of my non-linear function, similar to the actualization function, not identical to the actualization function. One difference is obvious, I do not provide input data, original, because they have already ended, I have already converted everything I had to be converted, and now I finalize it, so I give these four values. Here is the difference between the usual AES, I mean I skip the function of key expansion, which is in AES, which prepares the round keys on the basis of the main key. Here I simply enter these

four values in a circle three times. I perform these 12 rounds and I process the key. I process it and add 12 more rounds to the end and the result is the value of the shortcut. The value of the state function of this state vector at the end is the value of the shortcut. One question, please. Do these NLF functions have to be identically fast so that the parallel processing would not have to spend too much time on sync, that you can do it now and you can do it now? I don't know if I pronounced it right. Yes, yes. Could these functions be different? I mean, significantly different in terms of efficiency. These functions have the same structure, they are performed at the same time in all

versions of the shortcut function. In the original, maybe it's a good time to tell you what such an NLF looks like inside. In the first, second version of StreamHash, we are at the fourth, In the first and second versions of StreamHash it was just an S-box, a lookup in the table. A simple transformation that provides a sense of non-linearity. Here I used the function of the AES algorithm round. with a constant key. We have four different non-linear functions. They are implemented in such a way that AES is calculated with different constant keys. So there are four constant ones and they are used here. Okay, finalization. What is the efficiency effect? The efficiency effect is such that in comparison

with these SHA-256, SHA-512 functions,

Both scales are logarithmic, here is the number of bytes, so the less the better.

A more general question about efficiency, because it is possible to say that the faster the shortcut function, the better, but for example, to store passwords, you use bcrypt, which is cool because it is efficiently heavy and here it is nice that it is heavy and it is difficult to brute force passwords, so the faster the better or the longer the better, or does it depend? Good question. To the shortcut function, the faster the better. As in cryptography in general. Generally in cryptography, such a philosophical side note, it is not difficult to make a safe function, a safe shift or a safe shortcut function or a safe algorithm. It is difficult to make a safe algorithm that works in a

sensible time. So the faster the better. But if we want to use it for key derivation or for an application where it is good to work in a free way, Even the efficiency of such raw calculations is not necessarily sufficient, because if we talk about bcrypt, scrypt and this type of things, they are optimized not only in order to perform - The idea is that it should be done on general purpose software with the same efficiency as in optimized solutions for breaking, so that the use of dedicated hardware does not give the attacker an important advantage. Because at the same time, even if we use it for passwords, we don't want to stop the attacker, and we had to spend most

of our time on our application, which is not only used to check password logging, so that the application only checks passwords for logging. We don't want the user to wait a minute. Our application is the key. Another question for these ASICs. What are the typical tricks to slow down the hardware optimization? The typical trick is that we generate some table based on this password, and then we count the table as a shortcut. In a way, there are some mechanisms like that. We better count the abbreviations from the back. We fill out the table on one side and count the abbreviations on the other side, so that we can convert what we have calculated at the beginning, so that we can write it

down physically. So here are the two most popular SHA functions at the moment: 256-bit and 512-bit. Here is a curiosity that the 512-bit is faster, that is, it needs fewer byte cycles, although it is safer. Does anyone know why? Maybe the length of the word or something? Who was the first? I think here somewhere. That's good. Fortunately, I have a lot of messages. Thank you. Yes, the answer is good. SHA-512 has been designed to effectively count on 64-bit processors. Therefore, they can simply process twice as much information in a cycle. And so from the first day of today's conference. A mistake, right? From the perspective of always seems stupid. Well, single round of AS does not provide full diffusion

of bits. What is the diffusion of bits? Diffusion of bits is the property of cryptographic transformations, that all bits of the output depend on one bit of the input. The avalanche effect, and such terms are used in cryptography. And the result was a completely complex, not very manageable attack. I will let myself go a bit deeper. To provide diffusion of bits in AES there are two operations. The first is ShiftRows. Maybe one more step earlier in AES. AES processes 128-bit portions of information and processes them in such a way that it puts them in a table, at least for the sake of explaining this algorithm, in a 4x4 table. 16 bytes on one side, 4 bytes on the other side, 16 bytes, 8 bits,

128 bits. Thank you. Unfortunately, these are not my props for the author. So first we move it, so if, let's say, this bit, only this one byte has changed, we have Sbox before, which I omit here, so it only processes single bytes, but here is an important element, that if we have one byte here, it has only changed, then this byte remains unchanged. So this operation in the first throw gives us nothing, but in the second throw we have a mix columns operation, which is the most beautiful thing in AES, actually the only such innovation of this algorithm. This is a little magic performed as a multiplication of these things in columns by the mother, or left-sided

multiplication by the mother. The multiplication through the left-hand matrix. First there is a matrix, and then there is a vector through which we multiply. So we have these four vectors, we get new vector values, where all these bits mix quite nicely. The effect is very pleasant, that within such a column all the input bytes depend on all the output bytes. Very elegant thing. After the second step of AS, we have the effect that each column is nicely mixed. And this is how it looked in StreamHash 4. We got 4 nicely mixed columns instead of the whole 128-bit value. If we go back to the previous operation, what would happen if we repeated it again? It

moves. Each line has a piece from each column. If we did the "mix columns" operation again, we get full diffusion after two iterations. That means that each output byte depends on each input byte.

Hence the first idea for StreamHash 5, i.e. two AS rounds in each step instead of one, for the simple reason that they provide full bit diffusion. The second idea that came to my mind, which I liked less and less as I analyzed the issue, is to increase the size of the state vector.

That means instead of counting 4 128-bit numbers, you could count more of them, even up to 12 and only then shorten it at the end. I think that the implementation of StreamHash 5 will appear on GitHub before the end of the year. I would like to think about it a little more. Although I already think that I am strongly convinced that this attack can be fixed, that it is not an attack that fundamentally questions the very idea of the StreamHash family, and only the implementation error of this non-linear transformation. I have clearly exaggerated a little with simplification. But the idea is that it should be as simple as possible to achieve such an effect. If I go back to the issue of efficiency, here is the difference in

efficiency, let's say up to 128. Why does it look so strange? Because this finalization must be carried out every time, even if we count the shortcuts for one byte. for 1 byte, 2 bytes, 4 bytes, it's like this, one byte per 100 cycles, one byte per 1000 cycles, it takes to calculate it, only when we reach a few hundred bytes, 512, 128 bytes, the results stabilize on some level. What is the difference between this? Between one and the other is a row, between the faster SHA-512 and my StreamHash is more or less 12 times the difference. I have a question. There are Shadva functions on this chart. Shadva 3 won the Ketzak competition. What does it look like in the background of

the other functions, including StreamHash? That's why it was not accepted that it does not look better. The competition for our 3 was strongly modeled on the previous competition for AESA. When there was a competition for AESA, there was a triple DES. By the way, speaking further, the accuracy of the attack of this SWIT32, that the data block is too short, which is not a weakness, implementation of the functions, but only the foundations of this function, then the triple DES is still quite safe. There are no practical attacks, so that the triple DES could be attacked practically. However, it is terribly slow, because the triple DES was designed for hardware implementation. It did not predict implementation at all. It predicted the implementation of the software

and defended itself to be as slow as possible in terms of capabilities. The idea was that the breaking ones would be the American government, and those who would try to secure themselves would be some sort of weaker financial institutions. Therefore, DES was designed to make the hardware implementations fast and the software implementations to be as slow as possible. and the bit change in places that are very unpleasant to implement. So when the AS algorithm was created, the main motivation was not to make it safer, but to make it faster. And in fact, this effect was achieved. Much, much faster. Even in software implementations without a hardware installer, which currently has processors. At the moment, both Intel and ARM modern processors

are equipped with hardware-related AS instructions. And with Kecak, as I understand, this acceleration procedure did not work? With Kecak, this acceleration procedure simply did not work. Exactly. And that's why the problem with the adoption of this algorithm is that it is comparable, a bit slower even. I have a perception that the biggest advantage of KetSak is that it is not based on Merkle-Damgard and if there is more milk in it, we have something wrong. Yes, that was exactly the intention, to come up with something that will not be based on it. And with this thought, StreamHash was also announced first for this competition, as something that is not based on Merkle-Damgard. This is sponge, a sponge. This is also a very interesting

idea, not orthodox, another solution, another approach to building a shortcut function. Thanks. So, here we have a 12-fold acceleration. Returning to... Going back to my idea, if we could do two rounds of DAS in each step, we would get, in the worst case, only six times faster than the previous functions, which is still a bad result. So I'm of good opinion when it comes to performance perspectives. The issue of safety assessment, if someone could come up with an attack that would be applied in the improved version, it would be valuable.

As for implementation, at the moment the current implementation of StreamHash 4 and the next one, StreamHash 5, uses the ESP manuals. Maybe it would be worth it if someone would spend some time and implement some kind of a side-channel-resistant implementation on pure C. Or maybe also for ARM. That's what comes to my mind. If someone has any other ideas for other platforms, where it could be implemented, it would be valuable if someone wanted to join. There were two different solutions for hardware AS in ARM. At least as processors. Yes, I mean which ARM, because ARM is quite a wide range of processors. What calibre do you want? I was thinking about 64-bit HARMs, because that's what's included in

64 architecture. The general question is: where did you get the idea to play with it? If I remember correctly, you also have a encryption algorithm on your account. Is it a hobby or do you think that something will come out of it? I mean, I think you are probably the only person in Poland who is so serious, or I don't know, to be used for it. These ideas were not rejected at the application stage to various contests of yours, so I assume they are not bad. Only a question from the point of view of the idea for such a hobby or an idea for something more? - No, I was not dealing with the keywords. - I think I must have been wrong.

- I listened. - I must have been wrong. Or with another contest for the European hash? - No, no, I was only dealing with the short functions so far. Why the idea? Because it's interesting. Like with everything. In the first slide, when I was talking about myself, I didn't say where I was employed, unlike all my predecessors at this conference, or almost all of them. I have a lot of things to do outside of work to bore you. I don't need to tell you about work. Of course we are also looking for employees, everyone is looking for them. Thank you. I don't know, maybe... Yes, I told you about the functions of the sruta, about the construction

of the Merkel-Damgarde, about the function of the StreamHash. Does anyone else want to ask any questions? We have some time, we still have a few minutes. Okay, I think I'm seriously considering making some t-shirts for the next year. For now, I still have some notes. No, seriously, very nice. These are more compact than last year. If someone wanted, they are still to be taken, they are still there. Thank you very much. I made myself beautiful new business cards this year. If someone wanted, I would put them. Okay, for those who did not use the tunnels, it turns out that most of the room did not raise their hands, maybe it's just so hard. This tunnel

is a proxy that can receive connections via TCP and connect to the indicated address via TLS or vice versa. to receive connections via TLS, and connect to TCP. This is a situation when we have a legacy code that is running on mainframe, we don't have a source code, and people who wrote this source code are no longer alive, and we need to put it on the Internet, then adding to this encryption with cryptographic verification and so on, can be done in a minute. It ceases to be an impossible task. Normally, in the case when we would not have the implementation of the TLS in the application, it would be a classic solution. You have to take the source of the application and change all network commands to the

commands of our SSL library or TLS library. Here you can add it quite easily using proxy. Yes, that's right. So what we are saying is that having access to the certificate, you can do it in the middle of the domain, if needed. Yes, I sometimes use it in the middle of the domain. Maybe it would be worth it if someone would sponsor some automatic generation of certificates signed by the CA data. These things are available. The idea is that I do business based on this product, the product is free, but sometimes it happens that a company uses it in such a way that it would like to have technical support, which is no longer free, or would like

to have some features like, I don't know, HP, for example, ordered me to add OCSP verifications to this type of things, because they needed access to their LAN. And the programmer is still alive. So, the question was whether I added it to the a public repository or just for one company, private or separate. I try to encourage clients to make the functions they add public. For the community it is of course valuable, but for clients it is valuable in the way that they have updates. to their functionality, that they don't have their own private fork, which is also possible, of course, if they use it internally, or, as it happens, they buy a license that is not GPL. So

in this case I have succeeded. I almost always succeed. I had a few cases when clients asked me to fork it and to have something exclusively, a piece of code and I hope they regret it. Honestly, I had three such cases. Thank you once again.