Big Data Lake, Big Data Leak by Ben Caller

yes yeah uh thanks yes uh i'm ben cala and i'm gonna be talking about how i found some exposed data science clusters which used for big data analysis i'll describe how you can run code on these exposed clusters and what you might get if you do and uh how i can work out who owns the cluster i find in the wild so i can report it to the owner and then i'll finish with some recommendations so i started a new job and we got this email from aws abuse telling us that someone was using our ec2 instances our resources to perform ddos attacks which isn't great um so after taking a look i discovered they were part of an emr

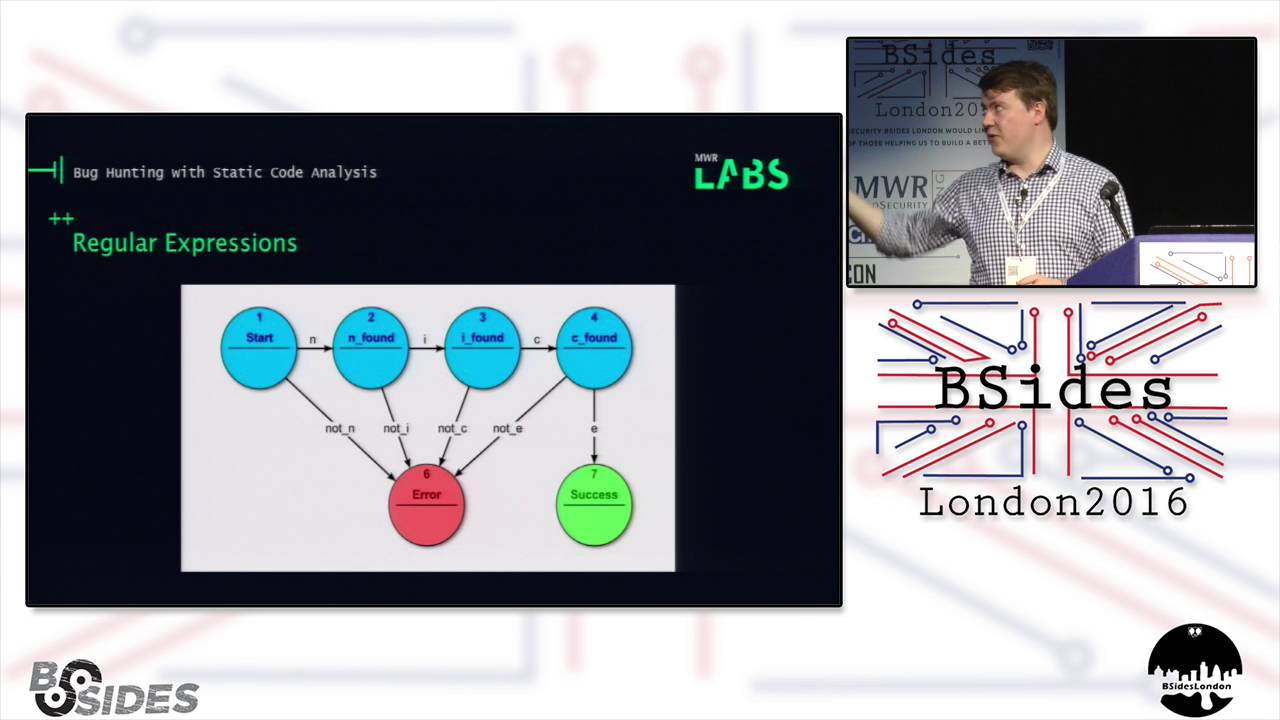

cluster um so emr or elastic map produce it's an aws service that lets you fairly painlessly spin up a cluster of tens of ec2 instances um they come with a collection of open source data science applications installed and it's all connected to your data lake in s3 which is where you dump your exabytes of raw data files that you want to be processed like for your machine learning or whatever um there are several useful tools running on the master node of the emr cluster that people might want to access remotely and if you follow some of the tutorials on the aws blog you'll end up with ports for some of these services exposed to the internet which is an

issue because the majority allow you to execute your own code on the cluster um so top right we've got zeppelin which is basically the same as jupyter notebooks you got interactive notebooks so obviously you can run code on it bottom right we've got the livy web interface which is when there are applications running it's just going to show the status of your running applications and it looks like there's no functionality so it's not too dangerous to expose it but it has a rest api that allows you to submit applications to the cluster on the left we've got the resource manager the resource manager has a queue of all the applications you want to run on the

cluster and it's where you submit your application you tell it what resources you need so how many how many containers how much how much memory and then it looks the space in the cluster to run your to run your job when it's available and again this this interface is non-interactive it looks like there's just logs and application status and but it also has a rest api which allows you to submit code to the cluster and um bottom left is the resource manager again but it's not an http api it speaks protocol buffers with a custom protocol um so this is kind of what it looks what the resource manager web interface looks like if you've left your

cluster open to the internet for a while um so you've got 10 000 applications in the queue which is the default maximum q length and there are lots of these jobs with name get shell so this tends to be the bash light malware which is what's performing the ddos attacks and it's running as user doctor who which is the the anonymous user in hadoop um but if you want to log in as a different user you can usually use the passwordless hadoop simple auth where you put user.name equals root in the query string and then you will have root permissions on the cluster um so normally if you want to run applications on the on the emr cluster

you'll use the aws emr apis but for that you need aws access keys however we just found an open port where we can talk directly to the cluster software so um in order to submit our application to that we can we can talk to the resource manager for instance and ask it for a new application it gives us an application id and then we submit the application specification you don't need to read that but it's just giving it some environment variables and telling it the location of the code and some data files that you might need and then the resource manager is going to launch the application master container somewhere on the cluster for your

particular application and your application master is then going to ask the resource manager for the resources it actually needs to run your application and then when the space on the cluster is going to schedule and run your job normally from the internet we're only going to be able to connect to the emr master which is where these interfaces are exposed we're not going to be able to usually talk to the machines inside the cluster where the application actually runs and also normal emr applications they write the output to s3 so we're not going to get the output from that because we can only talk to the resource manager so you'll need to get your jobs to ping back to

your own server if you want to if you want to get something out of it um so what code do we want to run if we find an exposed cluster um so the malware is doing something which makes use of the compute resources of the cluster so things like uh running ddos and that's gonna that's gonna cost you a lot of money in the network traffic mining monero they're probably not going to make that much but yeah you can do that and if possible you want to run this in parallel on the cluster you got to make sure you ask for enough memory because the cluster has some monitoring in it and lots of malware only gets to run for a

few seconds because it gobbles up too much ram and gets killed um but apart from compute resources uh there's very little of interest stored on the cluster machines what you do have is potentially from your foothold inside the cluster um you might have security groups which give you access to databases or other hosts but what i'm most interested in is just uh grabbing aws credentials because that's where that that's where the fun stuff is and grabbing credentials is actually quick and simple because you don't have to run your code distributed on the cluster um one way to do it is to specify a custom command that's run to launch the application master container because if

the container launch fails the resource manager receives 4 kilobytes of the error message so we can set our container launch command to print temporary credentials to standard error and then fail and then we're going to be able to grab the credentials from the resource manager log so i was interested in how common this misconfiguration or leaving leaving these ports exposed was so i did a bit of a port scan of the ec2 ip range uh try and get some credentials from clusters and then i can do a targeted enumeration of those accounts in order to give me clues as to who owns the accounts and then possibly i can report them to their owners so
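From the defender's side, the symptoms described in the talk (a queue full of jobs named "get shell", submitted as the anonymous user dr.who) can be checked for in your own cluster's application list. The following is a minimal sketch, not the speaker's tooling. It assumes the input has the shape returned by the YARN ResourceManager REST endpoint `/ws/v1/cluster/apps`; the function names and the exact spellings of the job name are hypothetical, and the match is a heuristic rather than a reliable malware signature.

```python
from collections import Counter

# Indicators taken from the talk: jobs named like "get shell", submitted by
# Hadoop's anonymous user "dr.who". The exact name spellings are assumptions.
SUSPICIOUS_USERS = {"dr.who"}
SUSPICIOUS_NAME_FRAGMENTS = ("get-shell", "getshell", "get shell")


def find_suspicious_apps(apps_response: dict) -> list[dict]:
    """Return the apps that match the indicators above.

    `apps_response` is assumed to look like the YARN REST response:
    {"apps": {"app": [{"id": ..., "user": ..., "name": ...}, ...]}}
    An empty cluster may report {"apps": null}, which is handled here.
    """
    apps = (apps_response.get("apps") or {}).get("app") or []
    flagged = []
    for app in apps:
        user = app.get("user", "")
        name = app.get("name", "").lower()
        name_hit = any(frag in name for frag in SUSPICIOUS_NAME_FRAGMENTS)
        if user in SUSPICIOUS_USERS or name_hit:
            flagged.append(app)
    return flagged


def summarize_by_user(apps: list[dict]) -> Counter:
    """Count flagged apps per submitting user."""
    return Counter(app.get("user", "") for app in apps)
```

Any hit is a reason to check whether the ResourceManager port is reachable from the internet, since legitimate jobs are not normally submitted as the anonymous user.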

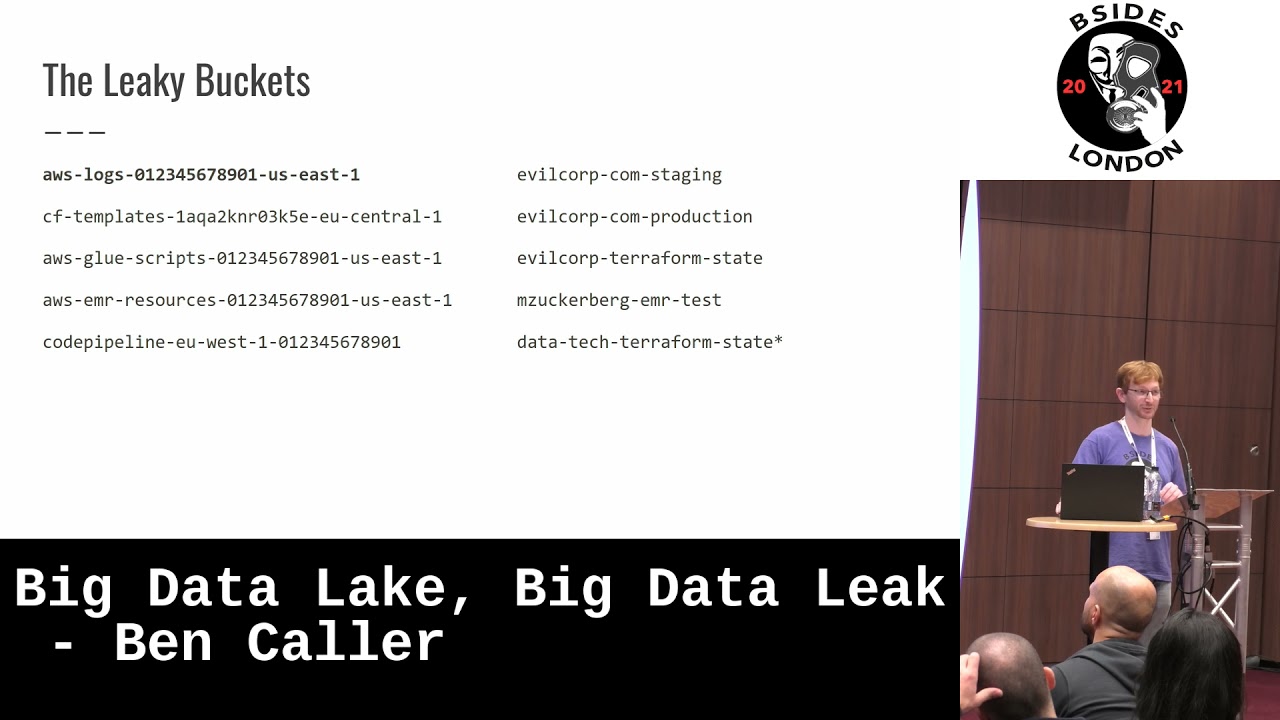

Once you receive AWS credentials, the first task is to get the associated account ID. It doesn't give you much information, but it lets you know if you've already enumerated this account and can ignore it, because you're going to get a lot of repeat customers.

Next, you want to list the S3 buckets and a handful of file names in them. This is where you get all the good information. You probably have access to all the buckets in the AWS account, so that's your data lake, backups, code, logs, you name it. The most buckets I found in one account was 913. You can almost always list them, but in the rare cases when you can't, I can guarantee that a bucket exists with the name aws-logs-<account ID>-<region>. So you can probably access it and plunder the contents to hunt for clues in the EMR cluster logs, which go in this bucket. Apart from that, the other buckets with just the account ID and region in the name don't give you much, except that you know what regions the resources are in for further enumeration.

What I'm really looking for is bucket names with a company name in them; that's probably the best clue I'm going to find. Occasionally, if you find someone's name, you can go on LinkedIn and search for data engineers. You do need to be a little careful, because sometimes you'll find Salesforce or Uber in a bucket name, but it's just a different company's Salesforce data, or their Uber ride receipts for expenses. A bucket I find quite often is something like Terraform state. You don't get anything from the name, but there are usually lots of juicy secrets in the Terraform state, so it's quite a useful bucket.

Apart from S3, there's SNS. You might have an event, like monitoring alerts, and on SNS there are subscribers to the events. That could be a Lambda, hitting a webhook, or sending SMS or email. If you list the email addresses and they're all from the same company email domain, that's a great clue as to who owns this cluster. It's very common to have permission to list all the EC2 resources of the account, so you can look in tags. Tags of instances might have an email address; security groups will probably name the office or the person's home IP address. You usually have access to DynamoDB, but it's not so useful for clues. Then there are a bunch of other services where you'll find secrets and all sorts of interesting things, but that's rarer; it's more when they've launched the cluster with basically administrator access.

On to regions. AWS instances are hosted in different regions, and over to the east you'll find the cn regions, which is China. It's a partition of AWS, which means it's like a parallel universe: you can use the credentials, but you have to contact the cn AWS endpoints. The creds don't work on the endpoints on this side of the Great Wall. Similarly, us-gov is the partition called GovCloud, and again you can only use those credentials against us-gov endpoints. I only had access to this once, and I found that I could use the credentials on the cluster, but when I took them onto a different machine they didn't work anymore. I don't know if that's normal or not, because I only had a short amount of time with that one.

So is this a common misconfiguration? In total, scanning intermittently over about a year, I found 900 AWS accounts. As far as figuring out who owned them, about half of them weren't very relevant: they were either test data and tutorials, or I just couldn't figure it out. A further eight percent were Amazon accounts doing things like proofs of concept. But I managed to actually report 11 of the clusters to get bounties, each between $250 and $17,000, but vastly skewed to the lower end of that. That was actually ten companies, because I got one double offender.

So, recommendations. If you want to access one of these web interfaces on your cluster, the AWS documentation now recommends SSH port forwarding. If you use a command like this, as long as you can SSH into your cluster, then when you go to localhost port 1234 in your web browser, your SSH client is actually going to route that onto the master node of your cluster on port 8088. This is a more secure way of accessing things without having to open lots of random ports.

Abuse of your infrastructure for DDoS is going to cost you money, and the credentials leak is going to be much more damaging. EMR big data tools are remote code execution as a service, so don't expose them. But really it's a dumb bug, and it's kind of an organizational issue, I think, because in our case the data scientists just opened the ports so they could work from home. I'd say love your data scientists, but apply the same controls to them as you do to developers, which could be training, or change control such as Terraform code review, because they're often a blind spot of the security team. So have DevSecOps look after them.

And not everything manages to get reported, because it's very difficult to find a security contact for a lot of places. So that's my talk: I found some exposed data science clusters.

[Applause]
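The SSH port forwarding recommended in the talk maps local port 1234 to port 8088 on the master node. The transcript does not capture the command shown on the slide, so the following is only a sketch of what such a command could look like. The helper name, the `hadoop` login user, the example host name, and the key path are assumptions for illustration. The `-L` (local forward), `-N` (no remote command), and `-i` (identity file) options are standard OpenSSH flags.

```python
import shlex
from typing import Optional


def build_tunnel_command(master_host: str,
                         ssh_user: str = "hadoop",
                         local_port: int = 1234,
                         remote_port: int = 8088,
                         key_file: Optional[str] = None) -> list[str]:
    """Build the argv for: ssh -N -L <local>:localhost:<remote> <user>@<host>.

    Traffic sent to localhost:<local_port> on your machine is carried over
    the SSH connection and delivered to localhost:<remote_port> as seen from
    the master node, so the web interface never needs an open inbound port.
    """
    for port in (local_port, remote_port):
        if not 1 <= port <= 65535:
            raise ValueError(f"invalid port: {port}")
    argv = ["ssh", "-N", "-L", f"{local_port}:localhost:{remote_port}"]
    if key_file:
        argv += ["-i", key_file]
    argv.append(f"{ssh_user}@{master_host}")
    return argv


if __name__ == "__main__":
    # Hypothetical host and key path; print the command instead of running it.
    cmd = build_tunnel_command("emr-master.example.com",
                               key_file="/path/to/key.pem")
    print(shlex.join(cmd))
```

With the tunnel running, browsing to `http://localhost:1234` reaches the resource manager interface, and only the SSH port has to be reachable on the master node.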

So the ones with bug bounty programs obviously paid out, so they weren't so upset. Some just didn't care. [Music] It's tough reporting. There were lots of different types of companies. Some I just couldn't figure out, or I wasn't too sure how they would take it, so I was a bit too scared to actually report it to them in the first place.

Audience: What about the other half of your data set? You don't know who the companies are?

Yeah, I don't know, I'm afraid. At some point maybe they're going to notice. They will get emails like the one I got, which they're probably ignoring, about their instances being abused. But I just couldn't work it out. They had locked down the permissions of their accounts so that I couldn't do the enumeration, even if I might have been able to access some buckets with lots of their data, because obviously they're processing data, which is why they've got the account.

I don't think they care. It's sort of the AWS shared security model, which is that they take care of the security of the cloud, but if you want to expose your stuff and give code execution to the world, it's not really their job. They will send you emails if it's affecting their services, like your AWS being used for DDoS, but other than that, they're going to have so much, and this is just one thing. [Music]

Yes, with a lot of them I can tell that they're just doing tutorials and they don't have anything else in the account. I can tell from the bucket names that they're common data sets. But sometimes they've launched these tutorials in an account with all their other tests, like the test account of the company, and they might think it's okay, it's a test account, but they've still got all that code and a bunch of staging instances and things.

I mean, sometimes I see a lot of bucket names talking about the NYC taxi data set, so there's a lot of stuff just working on that. And that's probably how they've ended up exposing it to the internet: because it's their first time playing with it, rather than running a production cluster.
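Since the exposure usually comes from a security group rule opened while following a tutorial, one way to catch it is to audit the rules on your own cluster's security groups. The sketch below is an illustration, not something from the talk. It assumes rules in the `IpPermissions` shape returned by boto3's `describe_security_groups`. The port list is an assumption based on common EMR defaults (only 8088 is named in the talk), and the function name is hypothetical.

```python
# Assumed default ports for the services mentioned in the talk. Only 8088 is
# stated there; check the others against your own cluster configuration.
EMR_UI_PORTS = {
    8088: "YARN ResourceManager web UI and REST API",
    8032: "YARN ResourceManager RPC",
    8890: "Zeppelin",
    8998: "Livy",
}
WORLD_CIDRS = {"0.0.0.0/0", "::/0"}


def world_open_emr_ports(ip_permissions: list[dict]) -> dict[int, str]:
    """Return the EMR service ports that the given rules open to the world.

    Each rule is assumed to look like an entry of `IpPermissions`, e.g.
    {"IpProtocol": "tcp", "FromPort": 8088, "ToPort": 8088,
     "IpRanges": [{"CidrIp": "0.0.0.0/0"}], "Ipv6Ranges": []}
    """
    exposed = {}
    for rule in ip_permissions:
        cidrs = {r.get("CidrIp") for r in rule.get("IpRanges", [])}
        cidrs |= {r.get("CidrIpv6") for r in rule.get("Ipv6Ranges", [])}
        if not cidrs & WORLD_CIDRS:
            continue  # rule is restricted to specific addresses
        protocol = rule.get("IpProtocol")
        if protocol == "-1":
            low, high = 0, 65535  # "-1" means all protocols and all ports
        elif protocol == "tcp":
            low = rule.get("FromPort", 0)
            high = rule.get("ToPort", 65535)
        else:
            continue  # the services listed above are TCP only
        for port, service in EMR_UI_PORTS.items():
            if low <= port <= high:
                exposed[port] = service
    return exposed
```

A non-empty result means at least one of these interfaces is reachable from anywhere on the internet, which is exactly the misconfiguration described in the talk.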