Malware Campaign Tracking Using Big Data Analytics And Machine Learning Clustering

Show transcript [en]

hi everyone have you ever wondered how threat actors can leverage your vulnerable web servers uh to spread malware and achieve their malicious goals through wde widespread campaigns have you ever wondered about the techniques that they use the vulnerabilities that they exploit and the samples that they deploy you might even have wondered how you can expose this activity on your own in your own data sets so over the past year uh imper threat research has been attempting to answer these questions and we want to share the framework that we've developed and some of the campaigns that we've uncovered using these techniques with you today so my name is Daniel Johnston I'm a security researcher um within the

threat research group Adam perva my domains of specialty are web application security bot detection and malware analysis with threat intelligence hello can you hear me uh is your mic working I'm not sure now it's working I'm a car I'm also a FR research I'm 16 years at in Pera and my interests are big data and data science and my domains are both application security and data based security so I'm going to talk a bit about the agenda um in the introduction I'm going to talk about the the data that we collect Adam Pera um along with some of the challenges that we have uh in that data set I'm also going to talk about how uh threat actors leverage web

application vulnerabilities um for malicious G and to the to deploy malware campaigns then I'm going to move on to talk about the framework that we've developed for automating malware handling uh I'm going to talk about how we identify U malicious samples and malicious URLs in our large data set um and then I'm going to talk about how we uh safely handle those samples then I'm going to hand over to AI who's going to talk about uh M campaign identification uh using machine learning clustering and uh using anomaly detection and then finally I'm going to move on to talk about some of the campaigns that we've identified uh using the Frameworks that we've developed okay so onto the

introduction um so one of the flagship products that we have uh at impera is our Cloud Web application firewall or Cloud WAFF and within the cloud WAFF we have the impera proxies and the security engines located in points of presence all across the globe um so the the point of the security engine is essentially to mitigate malicious traffic from malicious actors while simultaneously allowing uh legitimate traffic from uh benign actors to pass through to the origin web server so we see billions of of malicious requests every day and we store those uh events in in our threat research data Lake and as you can imagine it amounts to a huge amount of data that we can then leverage for

threat intelligence um so I want to talk a bit about how threat actors can leverage web vulnerabilities uh for uh uh their own malicious gain uh so at our work in impera we see uh many different threat actors leveraging many different types of web vulnerability for various different objectives in the pursuit of uh an ultimate goal and I don't have time to go through all of the different uh uh attacks with the different uh objectives but I want to focus on uh uh web web vulnerabilities RC web vulnerabil ities uh in the pursuit of malware deployment to achieve objectives like ransomware um uh uh deployment of Crypt miners uh and Bot Nets just for for an

example okay so in a report published by Verizon business their data breach investigation report they actually outline just how important over Target web applications are for threat actors um so about uh 40% of the top action vectors in data breaches can be attributed to web applications so furthermore in non-muse or uh non uh error breaches uh over 20% of the these breaches can be attributed to web V web vulnerability exploitation furthermore they go on in their report to outline some some web vulnerabilities that are commonly used or have been commonly used in the past year in data breaches and among those are vulnerabilities in atlassian in move it manage engine and Sugar CRM and and these are

vulnerabilities that we're very familiar with in our work at impera and I've been working to mitigate them uh over the past uh year or two so I want to uh talk about a specific example of a an RC vulnerability um I think we've lost the the screen here but um yeah I want to talk about a specific example of an RC vulnerability that we might observe in our data set ad Marva it's a exploit attempt of the vulnerability CV 2024 4577 and um some of you might already have recognized it as an exploit attempt of the um of the PHP CGI vulnerability that was disclosed uh earlier this year so um actually the the the guys who who found

this vulnerability were actually present at the black hat conference earlier this week and they actually talked about this vulnerability and some other vulnerabilities that that came about because of it uh so essentially the vulnerability allows uh a threat actor to to append a soft hyphen um denoted by the percentage ad um in the in the screenshot there uh surrounded in in red it allows the attacker to append additional parameters to the query string of the request which essentially instructs the web server uh to treat the post body as PHP code and execute it on the server so in this case the uh attacker is invoking PHP system which then invokes mshta to download and run a

Microsoft HTML application um so this is this is a great example of of thread actor activity it's quite interesting but there's some clear limitations here um we don't know what the file is that's being downloaded um and therefore we can't deduce any objectives of the attacker because we don't know what they're trying to do so that leads me on to talk about the limitations that we have in our impera data set we have no access to the end points um so we can't see any installation of the malare on the asset we see any communication between uh the command and control server and an infected uh uh server and therefore we can't deduce any actions on the

objectives we don't know what the the attacker's intent is really we can only see the first four links in the Cyber kill chain um so clearly some enrichment is required to be able to reveal the real intent behind uh the actions of the attackers so that leads me on to talk about uh the framework that we've developed for automating malware handling um so this is a very high level diagram of the framework that we developed and um I'm working from left to right here but as we know uh threat actors will send malicious requests to uh sites and assets protected by the impov cloud wff we will store all of those malicious events as logs um and we in our threat

research data L uh so on this huge uh quantity of data we will then apply some fairly sophisticated um etls and queries to be able to extract out the malicious URLs we'll send those URLs to a Sandbox environment where they're downloaded some basic processing is uh applied they're zipped they're hashed and they're stored in our isolated cloud storage and then those events are uh we we uh get the metadata from those from those uh samples and we reimport those back into our threat research research data l so then we can cross correlate between the firewall events and the the samples themselves the final piece to this is our enrichment from open source so we uh use apis like the virus total

API to then uh en enrich the information that we have about samples that gives us a very rich uh set of data that we can then apply further processing to um so we have some challenges when it comes to extracting URLs from HT like a large quantity of HTTP data like we have and one of the main challenges is the number of false positives that we observe if we try to extract the URLs um so lots of false positives can lead to a lot of a lot of time spent filtering and less time actually analyzing an interesting sample or investigating an interesting campaign um so the solution that we came up to this was to actually split the

URLs into three different categories um so firstly we have ipon URLs these are URLs that don't have a registered domain and what we noticed is these URLs are much more likely to be malicious than uh other types of URLs for example so we extract those directly and we apply some puristic to filter out the final false positives and we send them to our downloader environment secondly we have regular domains and or regular domain URLs which as you can imagine if you try and extract those directly from a large HTTP data set you're going to get a lot of false positives so what we do is we actually extract the command injection um from uh all of the malicious HTTP

requests that we see and then we ex we further extract the URL from that and this allows us to filter out a lot of the false positives um we then app a set of heuristics to those URLs to filter out some final false positives uh and uh other scanning domains that we're not that interested in and then we send those to the downloader as well uh and the final set of URLs that we handle are we uh refer to as Lots or living off trusted sites this is a project that's actually external to impera um but um it uh it basically lists a lot of um services and sites that are legitimate by nature but have been known to

um to host malicious files in the past so things like uh GitHub user content things like pay spin um and things like um uh one drive and Google Drive all legitimate services but they can host uh malicious files uh so we handle those in a separate way we apply a separate set of heuristics um and then we send those uh URLs to the downloader handling the URLs in this way allows us to strike a nice balance between being too permissive and having too many false positives and being too restrictive and potentially missing interesting samples um okay so now that we have our uh malicious URLs um we can uh send them to our downloader environment and for

that purpose we have a dockerized python service um which essentially will um download process and store um the each of the samples so each of the samples is stored as a a a zip file um a password protected zip file and we name it with a sha 256 hash of the sample itself this allows us to easily retrieve it and uh and do some manual analysis if required afterwards um so baked into our um our downloader service we have something that we call Second Stage URL detection and that's um so for web delivered uh samples especially we see a lot of uh textual samples things like bash scripts or par shell scripts um which are

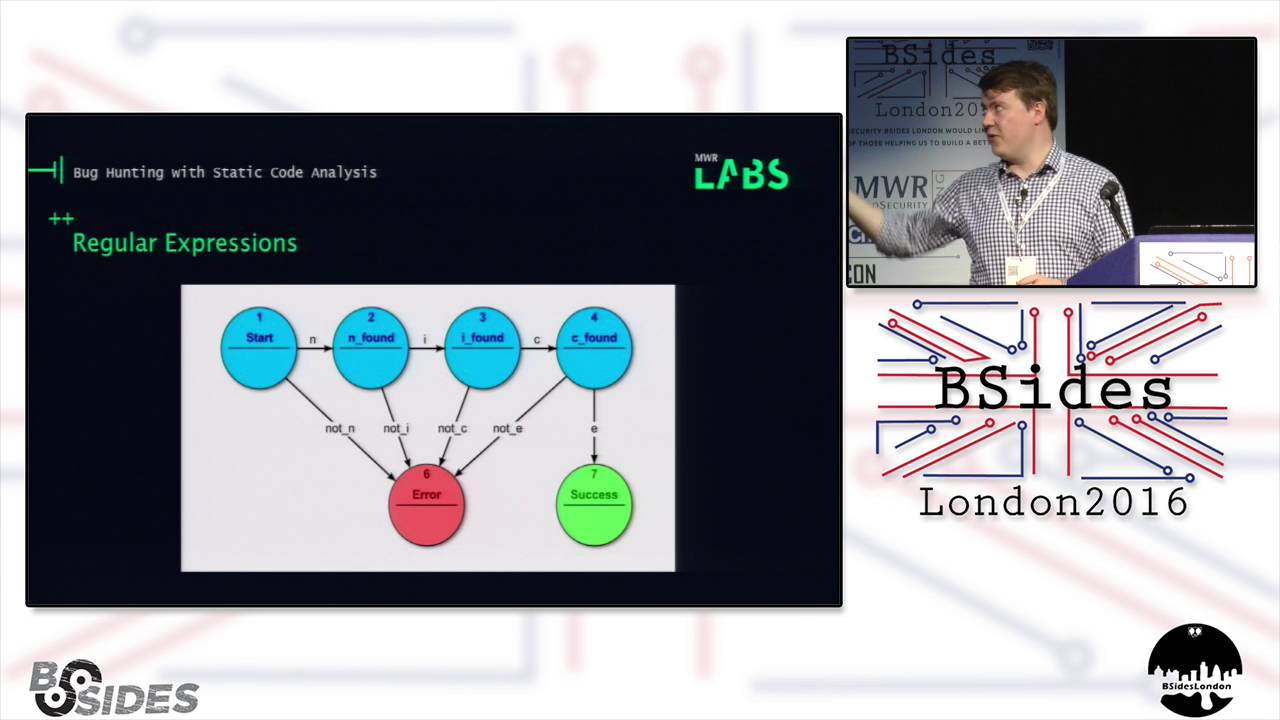

essentially downloaders for second stage binaries so so what we did was we would apply a regular expression to these textual samples uh to try and extract the uh Second Stage URLs um but what we soon found was that um for textual samples where um URLs are split between parameters for example a regular expression is just not going to cut it uh so that's why we came up with uh llm Second Stage Ural detection essentially we send the content of a textual sample along with a prompt to an llm and and this uh is generally pretty successful at reassembling the second stage URLs and then we can take those and send them to the the downloader okay so just to summarize uh

the how we uh get the enriched data set that we have um impera has a large volume of uh events um malicious events that we store in our through research data Lake from that uh vast volume of data we uh extract the malicious URLs we send them to an an isolated environment for downloading and processing and then we reimport that metadata back in so we can cross correlate between the um firewall events and the uh and the samples and then finally is our enrichment from open source uh so we use apis like virus total and uh sandboxes and honey Poots to then further enrich uh the data set so that gives us a a great platform to then do further

processing and uh and extract further value from the data uh so at this point I'm going to hand over to aie who's going to talk about the anomaly detection and the Mal clustering that we then apply to this uh enriched dat set thank you can you hear me yes okay so I will go to more technical part and I will tell how we uh detect interesting anomalies inside of our data uh to find interesting campaigns and later I will talk about about how we do ml clustering H cluster attacks into campaigns so first of all uh I will start with our anomaly detection system here you can see a chart where you can see the requests or attack requests over

time for a specific cve specific CV and a specific client what is a client our Cloud W classify all the requests that we get into client we have a a different client different attacking clients that can they can be browsers and they can be hacking tools and others and in this case you can see that the IDE of the client is 589 uh we have something like 1,000 clients so what you can see here is a counter for the number of the requests over time for this specific cve and this specific uh client and you can see that in the middle there is like a a peck for two days with something like 100k attacks so this is something that we

found using our anomal DET detection system and this is a point for a drill down for researcher we know how to correlate between a between an anomaly and a marel campaign so we can see the anomaly we can click on it and get to the campaign and then analyze the campaign so this is like an entry point we can now look at the data we can look at the pay s and understand what happened there so let's look at another example uh our anomal detection system has many many counters and one one other counter that I wanted to show is the number of requests again attack request for an injected URL or IP and in this

example you can see an IP injected to our data uh this time it took something like two weeks and you can see that in the final day we got to some like 800k or 600k request for a specific day and again we found this in our data again it is a point for a drill down we can now look at the campaign and understand what happens and the anal detection system helps us to find the interesting uh campaign interesting valuable insights inside our data not only for malware so let's talk on how we find such anomals so we have a solution which is completely serverless uh we used a managed query engine and managed Cloud

function to do collection aggregation and anomal detection on our data H the result of it is hundreds of counters like what we seen we we count the number of requests for a cve cve and client injected URL and much much more and we have at any time 20 million anomalies H that we can browse inside of our database so let's understand how it happens so this is the data flow for our normally detection system we have a data L like Daniel explained before the data leg has thousands of tables and terabytes of data added daily to the data L and uh we have counters I will explain very soon how we Define these counters and the idea is that we collect

the data by reading the data from The Source state table into dedicated counters table inside of our anomaly detection system we read the data from The Source table exactly once why we do we do it once because reading data from Source table is considered heavy we perform aggregation and filtering and it might take a lot of resources so we prefer to do it once and hold the data redundant data inside of our counters table once the data is our in our counters table we again use cloud function to do the aggregation H and to do anomaly detection I will explain how H but everything is done inside of dedicated tables which can be explored uh using our query engine so let's

understand how a researcher can Define counters so we have a Json configuration files we call them data sets h and a data set is H simply a a list of counters where each counter is like a SQL statement um for for getting data for example CV over time so I will get data for all of the CVS that we protect against H um over over time okay so it's it will be something like we will choose the CV and later we will go by the CV the CV will be the key and I will hold the numbers for all the cve daily hourly and so on So eventually a data set is like a group of counters for example if we have

a cve data set we will have a a cve counter or cve plus client counters and so on and we have hundreds of counters not only for malware but we use it also for malware detection H how we do the anomal detection again we are completely serverless we have managed qu engine managed PL function no servers so what we have is SQL we do it using SQL and we are using trino as a manage query engine H and I strongly recommend to all of you if you are using and I believe you are using a query engine H look at the functionality it provides look at the docs because today quer engines are very powerful and I will uh show you some

example of what we do so let's look at a fex example of how we do anomal detection using SQL the first example uh is pretty simple uh the first step is to collect the data from our data set the data is already collected and saved in our database so we query the data for a specific data set we query all the counters all the keys and hold them in memory for a certain period of time for example 90 days back H later we colle we we calculate statistics and in this case case we calculate the average data per counter H and the standard deviation the last step is to find anomalies and in this example I defined anomaly as a

value bigger than the average plus two times the standard deviation now it's very important to understand we Traverse billions of Records H and the data doesn't leave the database which means it's very efficient billions of Records in just minutes and it's also very cost efficient let's look at another example uh the second example I used the match recognized function which is supported by multiple databases the match recognized function gives us to query data according to patterns and I created like a pattern for example where there is a start value uh then I request that the value will go up at least four times and get to a final value and there are some restrictions for the final value

which has to be three times the start value and two times the average value so this is a pattern and it is like a a it's like a regular expression language that you can use to find patterns inside of your data again Traverse billions of Records in just minutes you can find um if you can find v-shapes in our in in your data U shapes and we use it to find like PS and regular PS in our data and again you can transverse billions of Records in just minutes so it's very effective and very cost effective so that's it about our normal detection system uh I will move to malware clustering so first of all why are we

clustering malw we have like millions of requests every day for only for the malware injection and we have to to somehow show it to our security analysts so we are not going to show them million of requests we want summary so what we do is to Cluster data and you can see some examples uh how thousands or tens of thousands of records are clustered into a single record where we have like start time injected URLs and a lot of other columns that we finally present and say this is the mar campaign you can decide if you want to analyze it or not so let's understand how it's done we are using the same technology stack that we like we have manage query

engine and we have Cloud function and in this case the manage query engines helps us to group the attacks the requests group the request into attacks and then calculate distances and I will explain in the next slide how we calculate distances between attack to understand how far attacks are from each other and so this is a a very heavy operation of calculating calculating distances H but again we are doing it using SQL data doesn't leave the database we don't have python processes and we don't need to load data frames and so on very effective and very cost- effective H once the distances that I will explain how we calculate H are calculated we save it into some Object Store and the

data is moved to the next step which is the clustering algorithm in the clustering algorithm uh it seems weird that we are using Cloud function for a male clustering because the M clustering is normally heavy processed which takes a lot of time a lot of resources but because the distance calculation was done by the query engine H it works for us and the 15 minutes of time and up to 10 GB of memory which which are our limitation for the cloud functions that we use are completely Ely fine and I believe that it can work for many other projects too so we take the distances we load them into memory run clustering algorithm and then dump the results back

to the object store once the results are in the object store we use again SQL uh first of all when we first created the pipeline we had to find tunit uh validate so we joined the data with the source data and decided how to change it how to decide which feature to use and so on uh and uh now that we have the PIP and up and running every day apart for several days that it failed I feel but uh normally it works um we do a data enrichment and we have the data ready for our security analysts okay so let's last thing for me is how we do the distance calculation so we do the distance calculation using SQL

it's a heavy operation it takes know 10 minutes 20 minutes but again it's in SQL manage F engine it doesn't cost us much very effective and very cost effective so let's look at an example we take the attack data attack data is the aggregated requests data H and we try to to look at every pair of attacks to decide on a distance uh okay so we self jooin the dat with itself so we will go over all the pairs and you can see how how we are doing that and what's important is that we now have to calculate the distance so we are using different features of the attacks for example we check if it is

the same client we have two attacks here we have left attack and right attack from the left joint left side of the joint and right side of the joint we check if it's the same client I explained before about the client classification we use the lein distance which is also called the edit distance to see if the injected URLs are are the same or close to each other and we also use the coant similarity function to look at the attack sites histograms to see if the two attacks were done on the same attacked sites and of course the real distance function is more complex we have more features but this is only the idea of how you can calculate

distances H between attacks using SQL that's it for me and back to Daniel okay so now that we know how we apply the machine learning clustering and no detection to identify the campaigns in our uh large enriched data set I want to talk about some of the real world campaigns that we've actually identified using this framework uh so I'm not going to get time to go through all of the campaigns that we've identified so if you're interested in that I would encourage you to check out the impera blog on the impera website um and you'll be able to to see more of of the uh campaigns that we've we've seen uh so the first one I want to talk

about is that of the cisv botnet uh so csrv is a well-known botonet malware written in go um and it spread using well-known web vulnerabilities so uh we saw like widespread exploitation of atlassian Confluence and Apache struts um uh basically propagating this this campaign so the idea behind the campaign is to deploy uh the exm rig Crypt Miner to as many vulnerable servers as possible to use the uh CPU resources of the uh web servers to uh mine cryptocurrency um so using the uh framework we were able to aggregate together all of the events of the campaign along with all of the uh samples and all of the the metadata use the clustering algorithm to bring all of

those uh those records together uh for uh easy analysis for for our analysts um so uh using the the workk we were able to uncover some brand new uh ttps and ioc's that hadn't been seen in the while before including the use of a compromised uh uh domain or com compromised website of a well-known uh Malaysian academic institution we also saw the use of a Google sites Doman to host the second stage binary uh so uh as you can see in the screenshot what looks like a legitimate uh Google uh 44 error page is actually uh uh hiding the the bites of of a goang binary so you can see a big blob of text in the in the

page source so we were able to extract this this binary and do some analysis we were able to extract the configuration and we were also able to extract the Monero wallet that was used for the campaign we were able to see that uh the uh thread actors were netting around 57 Monero per year which equates to around 7,000 GBP which doesn't sound like a lot but if you think that they might have multiple of these campaigns ongoing at at once it can amount to a considerable amount um the second campaign that I want to talk about is that of the tell you the pass um ransomware campaign that we saw propagated using the PHP CGI

vulnerability that I um talked to you about earlier in in the uh in the talk um so this is a ransomware that's uh targeting uh PHP web servers uh and uh they use the PHP CGI vulnerability to deploy a Microsoft HTML application which was VB script enabled um So within the BB script was a an encoded um uh string which as you can see in the screenshot is in the S parameter so you can see that um that's the encoded uh bytes or the encoded bytes of a a net binary we were able to uh reconstruct this decode it and uh uh load the net binary into DN spy for some analysis and we were able to determine the um the

actions of of the of the binary what it actually did so upon execution it would reach out to a uh C2 server um with a HTTP request disguised as a u request for a CSS resource to blend into Network traffic to other network traffic uh it also enumerated directories encrypted files and then put a ransom note on on the web directory of the PHP server so interestingly enough it's uh it's a ransomware that's that's actually targeting uh web server specifically again using the the framework that we developed we were able to aggregate all of the events of the Campaign together along with the samples apply the clustering uh and uh get it all together in one convenient place for

analysis uh so the final campaign that I want to talk about and probably the most interesting one that we saw um is one that we believe we can attribute to uh apt29 um or Koozie bear so Koozie bear is a well-known threat actor uh that's thought to be a part of the Russian svr or foreign intelligence service so they've been um a associated with many different um uh high-profile attacks and campaigns over the past couple of years including the um the the supply chain attack the uh solar wind supply chain attack and the mass exploitation of Team City servers uh last year the activity that we actually observed uh we observed some very targeted and coordinated

attacks against polish government websites back in March and uh what they were trying to do is they were leveraging vulnerability in uh F5 five big IP their load balancer solution to deploy a sliver implant for those of you who are unfamiliar with what sliver is it's uh essentially an open source alternative to Cobalt strike um it allows for a lot of uh different post exploitation activities on an infected host including information gathering and uh command execution so as you can imagine in the hands of apt29 it would be a very useful tool given the the right target we also saw some follow-up activity uh last month in November um where the same uh activity was happening

on UK the sites of of Ukrainian financial institutions um so how is it that we think that we can attribute this to apt29 so for three reasons sliver is a known uh tool of um of used by AP apt29 uh according to miter and cyber reason so it makes sense that if they used it in a previous campaign they would use again in this one so secondly and and probably more compelling is that the 2 that we observed in this campaign was actually observed in a previous apt29 campaign namely the the Jep and team City campaign that I mentioned and then thirdly only targets of interest to the Russian St state were observed in the

clusters of this campaign um so uh it makes sense that not only can we attribute this to a Russian threat actor we can attribute it to apt29 okay so on to the uh takeaways from from the talk so firstly automation big data analytics and anomaly detection provide excellent tools for threat hunting and scale so if you find yourself in the position that you have a data set like ours we would encourage you to use the tools that that or is outlined um to be able to extract the value uh from from uh your data sets so secondly threat actors such as the ones that we've demonstrated are regularly leveraging web vulnerabilities for malware deployment and this is something

that we're seeing day in and day out at our work in impera and it's something that's backed up uh by the likes of the the DB report from Verizon that we mentioned uh so finally identification correlation and tracking of mare campaign activity has real Community value so being able to expose the ioc's and the ttps of threat groups like the ones that we've we've seen um is of real value to stakeholders in the security Community which allows them to do their own detection and attribution based on those ioc's um and that is all that we have for this talk I want to thank you for for listening and uh we'd like to open for any questions that you might have

thank

you thank you so much for insightful talk H I have two question the first question is what type of clustering technique have you been used have you compared to any hierarchical and density based algorithm as well and the second question is um regarding the different type of the AP group how many group did you identify Apple Earth from the apt29 thank you so I I think the first one's for so so the qu can you hear me the question was about the loss function so what type h because clustering we know different type of algorithm so in your pipeline what type of clustering have you been used is it h and then the algorithm have you been used

have it been compared with the density based algorithm because I'm doing quite similar research with the APT in terms of ips so I'm just that's why I'm curious um and the second question is regarding with the different type of AP group okay so thank you so regarding the clustering because the clustering is unsupervised it's hard to find to use like a common metrix uh and we haven't used any known metric H instead what we did is to take the Clusters that we found we took the Clusters and then we joined them with the source data now uh joining the the Clusters with the source data allowed us to uh to Define our own custom metrics and and then to uh to

improve our data over time uh I can try to explain it better uh for example we can uh use the we can cluster the data and then take the data the future data the data that happens after the clustering um to see if the the results were clustered together but it's what all our validation was done using SQL with custom metrics and we found it like the the best way to validate such data because in unsupervised we don't have like good metrics to to do the job for us like in supervised thank you yeah and your second question about the I guess it's about the other threat actors that we've observed um so yeah it's an interesting question I don't

think that we can give a like direct answer to how many APS we we've observed we're still actually working on the attribut part as you know attribution is is a difficult a difficult thing to do um but certainly it's it's in the thousands of threat actors that that we've seen

so thank you great talk um clearly sql's doing a lot of heavy lifting uh what flavor SQL survey using is is that on Prem or is that cloud or something in between uh we we use the trino as our trino is our manage qu qu engine and if you really asking we are working on AWS and atina has behind the scene a trino

engine thank you for a great talk um you mentioned earlier during some of the malware sandboxing that you were using llms to ahead and grab more data out of it what boundaries or guardrails did you put in um to prevent things like prop injection or other kind of like data poisoning techniques that you might see Mal are trying to do to avoid these kind of detections yeah so that's um when we apply the llm to try and extract the second stage URLs so yeah we we use the llm and then we actually like um we we don't take just the output of the prompt we we then parse the the the output of the llm to to further protect is against

any

injections thank you for the talk this is purely on statistics and raw technology so when you distill from The Source data down and you index and aggregate for multiple times what's the final aggregate data reduction rate that you get up get get it down to across all your aggregations what's the data so you aggregate from Source data multiple times multiple ways what's the final net aggregate data reduction rate for the VY data sets from The Source uh what's the final aggregation so so we have several tables it's like a very generic uh framework so we have for example we can collect daily data so we have daily table with a partition it's all stored eventually in a data lake so

we have partition data sets and some kind of partition per counter so it will be efficient to query the data per counter uh so we have a daily table weekly table monthly table and that data is keep rolling and we have like jobs running all the time for doing the aggregation doing the normal detection eventually we're using a data L and so the data is stored in a data l in an efficient manner so we will be able to to query it and the best way to query data most efficient way to query data is using partitions yeah so on that one can you give numbers as what's the from Source data volume X your not final net

volume of what you should use it's going to be less than x but by how much in aggregate roughly so so you're so the source data can be huge for example attacks and eventually what we have in I don't think that I ever try to to understand how much data we have daily from the data but it's it's it's because it's only counter data when we lose everything for example if we are saving requests so we have post body and and the query string and so on when we are saving the number of requests number of hourly requests number it's only a number and it's together with a key the key can be a URL can be a cve but it's

not even I don't know it's not I'm sure it's it's very very small comparing to the data and we save the data for years H because it's so small so it's really nice because you can see Trends over time for years like we can show customer uh Trends over time for the for example for SQL injection xss and so on and of course for malw because we have this kind of aggregation thank you two thank you for the talk two small questions first is is it everything is based on just HTTP traffic or you have a way to handle https as well encrypted traffic and the second question is whatever you use only content or also metadata about requests

for example F kinds of fingerprints Etc so um yeah it's it's mostly https so like modern day applications almost ubiquitous you ously use https so yes um and what was the second question again do you use only the content of of the request or Al or also meta data about the request and just to clarify the question of about htps how do you handle shs how you how do you have visibility into the content of the request when it's encrypted okay so do you so first we uh the the sites that we protect are our customer sites and they provide us with their certificates and that's why we are able to pass it and we see all the

traffic and we also see metadata where for example can we will see HTP adders we will see we have all the TLs fingerprints and everything and that's why we can H create counters for headers for TLS we have like I haven't said that but for example we have TLS fingerprint and we classify TLS so we know about malicious TLS that that that we have and so on and I think we have time for maybe one more question if there are any no well in that case uh thank you very much to Daniel Johnson and Johnston and Ari NAA [Applause]