BSidesCharm 2025 - Cyber Deception in GCP with Generative Traps - Matt Maisel


[Music]

Yeah, by my watch it's 11:30. So again, welcome to BSidesCharm 2025. Thanks to the organizers, volunteers, and staff for putting this on this year. My name is Matt Maisel. I've been in cybersecurity for over 14 years, mostly doing applied ML and software engineering at product startups, but my roots are in security operations. Back then, in 2011, I remember experimenting with tools like Honeyd, but never quite having the resources or the buy-in to deploy it widely internally for deception use cases. So today I'm excited to share some work that aims to change

that: cyber deception in Google Cloud powered by generative traps. Back in April 2015, John Lambert wrote the famous words, "Defenders think in lists. Attackers think in graphs. As long as this is true, attackers win." Well, it's 2025, and what a year to be alive. We, the security operations teams, think in graphs now. Mostly we care about graphs of our assets: we inventory them, and we monitor the relationships and the activity between those assets across our environments. We also have help from our tools and our vendors, and they build things in graphs: attack graphs, exposure graphs, supply chain graphs, and

so forth. But attackers are still gaining an initial foothold, still getting credentials, still escalating privileges, traversing our environments, and achieving their objectives. So if we're a security operations team and we have constituents that have infrastructure and services in Google Cloud or other cloud providers, what can we do today to try to detect and deter our adversaries? I'm saying we should lie to them. We lie to them about the state of our graphs with things like cyber deception. We sprinkle in fake service accounts, buckets, cloud services like Cloud Run or Cloud Functions, or other GCP resources, and then we get the attackers to fall into our

traps. Organizations with an assume-breach mentality accept that adversaries will get in, and deception is another layer of defense in depth that we can use in our toolset. Deception introduces uncertainty about the target environment. It increases the probability of detecting adversaries. It acts as sludge to slow them down. And for security operations teams, deception can reinforce the seams in our detection surfaces by placing traps at key choke points along the attacker's attack path. Traps, or what we've called decoys, canaries, honey credentials, or honey tokens depending on the deployment scenario, are meant to detect adversary interaction by imitating an internal target asset, and when there are interactions we can alert

the SOC on that activity and then from there perform immediate investigation as a high-precision alert, do initial response, or potentially do further monitoring and generate threat intelligence that's custom and bespoke to our environment. But hold up a minute. Deception for cybersecurity isn't anything new, even for internal threat detection use cases with traps. Canaries, honey tokens, and honeypots have been around for a while. We've had deception tech, arguably publicly at least, for about 35 years, since the publication of The Cuckoo's Egg in 1989, where, although they weren't called honeypots, entire departments and entire fictitious systems were created to lure in the attacker and ultimately expose

them. In recent years we have companies like Tracebit and others like Rami McCarthy publishing security canary maturity models that give organizations a north star for taking their unmanaged canary infrastructure toward a mature and optimal state. And of course there's work by the MITRE Engage group around adversary engagement, which lays out processes for deception including scoping, crafting a narrative, deploying deceptive assets, monitoring them, and then doing teardown. And of course there are hundreds of tools out there on GitHub lists like awesome-honeypots and awesome-deception. There are cloud-specific tools like Honeytrail,

which is similar to the tool I'm releasing today and uses CloudTrail to detect and notify on interactions with S3, Lambda, or DynamoDB. With the rise of transformers, there's now an explosion in research on LLM-based honeypots and active defense frameworks like Galah or Jennipod or VelLMes. And then for application-layer decoys there are tools like Cloud Active Defense by SAP. And just in the past few weeks, the Dynatrace research team put out a tool called Koney. It's a Kubernetes operator that is used for deception policies in Kubernetes clusters and helps with the deployment of honey tokens and other fake API

endpoints to Kubernetes clusters. And finally, if you have the resources, there are around two dozen deception vendors, including startups as well as consolidations over the years, that offer everything from moving target defense to deception as a service. So why isn't deception more prevalent in security operations? Public examples of this, at least, are difficult to find. There is one recent example of using deception to find a corporate spy, an insider threat. The Rippling security team signaled to some executives at one of their competitors that, hey, here's this Slack channel with potentially interesting stuff in it. That Slack channel was never known to anyone. It was just

created, and it was actually used to expose a corporate spy that was acting in its environment. Now there's a $12 billion lawsuit. So again, it was a significant engagement by the team, but a minimal use of resources to ultimately find a corporate spy. But in general, maybe no one talks about it. There are actually several deception talks this weekend at BSidesCharm, so I think those are signs of change, and I encourage you to go check those out. But looking at reports from Gartner, at least right now they're saying that by 2030, 75% of organizations will have some form of deception capabilities, and

that's up from 10% today. This is the best number I could find. So if we're at 10% today, there are obviously challenges, and there's obviously a long way to go if we're trying to get more deception in our environments. I see that mostly attributed to three different buckets of pain points. Number one, there's the questionable effectiveness. Is cyber deception effective? Does it actually deter attackers? The data on this is sparse. Like I said, there have been limited examples publicly, but there are recent empirical studies by academic and other research groups that show promise. The Dynatrace research team released a questionnaire tool called Honeyquest in

2024, and they had 50 study participants who went through an experiment of labeling artifacts as either things they would exploit or things they would avoid. This was meant to determine what types of traps would be interesting to attackers. In that study they found that traps reduced attackers' ability to find true risks by 22% on average, and that the humans in the experiment fell for traps about 37% of the time. There was also a study back in 2021, the Tularosa study, where a group of 130 red teamers were recruited and basically went wild on a network. Interestingly, in

some cases some of the red teamers were told that deception was active in the environment, and others were not. What was interesting is that even when the red teamers were aware of deception in the environment, they actually triggered more decoy alerts, or more traps, than those who were uninformed about deception. Also interesting is that every participant ended up triggering a decoy or a trap prior to exploiting one of the targets in the environment. So this again shows that even when announcing deception, we can waste attackers' time, and we can have sludge, basically, to make them take longer routes to the crown jewels.

And to further reinforce that, at least in academic research, there are ongoing studies by a group that is trying to understand how the optimal number of traps, as a percentage of the network, may or may not increase the attack path length of attackers. And again, there were some interesting nuggets of more anecdotal evidence of red teamers who were super stressed out when they found out that deception was active in the environment and were concerned about it breaking all their tools and all their workflows. So the evidence right now, at

least from these studies, and again with the limitations of the experiments, simulations, and environments that they set up, is definitely pointing in a positive direction: deception can be effective whether you announce it or not. A second problem is making traps that are indistinguishable from targets. How difficult is it to detect the use of traps in an environment? We don't want them to be distinguishable from targets. Historically, honeypots have had this reputation of being easily identified with signatures. There are tools like honeydet, or plenty of Nuclei templates, that basically have scanners looking for these

honeypots out there on the public internet. And deception vendors are not immune from this either. There was a blog post by the TruffleHog group last year that found that Thinkst Canary's free AWS canary tokens ended up using the same AWS account ID. This was for the free service; I don't think it was affecting their paid stuff. But again, it's OPSEC stuff here: even if you're trying to use deception, if you don't do it right, it could be easily signatured. So I think this just highlights the difficulty of crafting realistic deception personas. And

just in general, it's manually time-intensive, and unless you're creating them pretty frequently and rotating them, they could be signatured given the tools that you're using. And finally, and I think this has the most impact, there's tedious and risky infrastructure that's required to deploy traps to your internal networks. Who does the bookkeeping? How do I know I'm not introducing vulnerabilities that the attacker ends up exploiting? What if it disrupts the production workloads? Traps require maintenance just like any other infrastructure to avoid unnecessary risk. And I think historically, as put by Lance Spitzner, the founder of the Honeynet

project, back in 2019, the problem wasn't the concept of deception; it's been the technology. And I think that's even more true today in cloud environments. Deception in cloud environments specifically can sidestep many of these issues. We have infrastructure tooling like Terraform, Cloud Development Kit, or Pulumi, which is what I use today. With these programmatic infrastructure-as-code tools, we can deploy resources to the cloud and make them fully provisioned and fully functional, making them harder to distinguish. We can also leverage serverless technology for serverless traps, things like Lambda or Cloud Functions, where we can deploy traps that scale to zero and

only incur costs whenever attackers are actually interacting with them. With these infrastructure tools, you can also set it up so that traps have the same infrastructure life cycle as real production resources, so the maintenance overhead of the trap is tied to the same life cycle as the production resources that they're trying to mimic. And finally, with the explosion of generative AI, there's absolutely the potential use of LLMs to help automate traps using production resource naming conventions, tags, and other metadata that's out there, and even potentially data, to help create more realistic traps for the

environment. So that's why this weekend I'm releasing a tool I'm calling Poltergeist. I'm releasing it to help security operations and security engineering teams experiment with cyber deception in their environments, specifically, as of today, GCP. Poltergeist is a cyber deception tool that helps with the generation, orchestration, and monitoring of cloud-native traps that lure and detect attackers. I've built it in Go and released it today as an open source tool under the MIT license. There are three things that it does. First, it helps generate tailored, believable traps. Trap personas are generated with large language models using in-context

learning, where samples are imported from your environment to create traps that have similar naming conventions, making them more believable and more enticing. It can use reasoning models or other techniques to help improve trap quality, with things like LLM-as-a-judge to assess trap enticingness or believability. The second thing Poltergeist does is help with orchestration. The Pulumi Automation API is used to plan and apply infrastructure against your cloud provider, against GCP. And to capture any interactions with any of the trap resources, it sets up logging with a

Cloud Logging router so that any emitted activity is sent to another GCP project for the security team to look at. And then finally, akin to MITRE Engage, it pulls from their playbook to help manage deception engagements. It does this by grouping traps that have similar targets, and this is done with stratagems. Stratagems are template playbooks for trap use cases in Poltergeist that describe the trap, where it's placed in the attack path, and what it's masquerading as to the attacker. As I said, right now the

coverage is limited to GCP, but the architecture of Poltergeist and the ideas here could clearly extend to AWS and Azure as well. So let's take a look at a few examples of these stratagems. The first one I'm calling Follow the Yellow Brick Road. Security engineers are making paved roads for our developers, so we should be building yellow brick roads for the adversaries. They don't know that the Wizard is a lie and that Oz is not what it seems. This is all about detecting lateral movement by baiting attackers with tempting IAM relationships: service accounts that can be impersonated by other principals in GCP, and it is

basically planted as credentials that lead to other traps in the environment. The second stratagem I'm calling Save Your Pets, Shoot Your Cattle, Watch Your Canaries. This is deploying decoy compute resources like VMs, Cloud Run, or Cloud Functions that mimic real services with tempting but potentially contained vulnerabilities, and it's meant to help detect exploitation or persistence attempts by adversaries. A third stratagem is called Crown Jewel Gravity Well, and this is using decoy storage, like GCS buckets, filled with enticing synthetic data that's meant to detect data discovery and exfiltration. And then finally, if the stratagems are sort of all combined

together, there's Enter My Cloud Labyrinth, which is basically an entire GCP project where everything in it is a trap resource. It's well suited to generating your own threat intelligence for your organization. So what does this look like? I'm just going to run a demo, because I did not want to tempt the demo gods today. The Poltergeist binary has two commands right now: one to deploy and one to destroy. Currently it requires you to have GCP credentials, plus the ability to import the stack state from Pulumi to load up the target resources, and

then access to a secondary project in GCP that's set up for logging interactions with the traps, as well as for using Gemini, which is the model family I use right now for trap generation. This is happening quite fast, but at one point two stratagems scrolled by that sampled target resources and then generated several service account traps as well as a bucket. The rest of this is basically just Pulumi automation refreshing the trap stack, provisioning the resources, and then configuring Cloud Logging

routers that have filters specifically on the trap resources by different identifiers. This is what sets up all the logging to go from the target projects to the Poltergeist project where the security team is looking at it. So now that all these trap resources are deployed to a project, let's pretend we're an attacker. Maybe we got onto some engineer's device and we have credentials now. We're going to list a particular bucket, get an object from that bucket, and then, since maybe we also found a service account, print the access token after

impersonating one of these service accounts. So that's the screen that was showing those commands, and right now we're just waiting. This is Cloud Logging, and there we see that one of the trap activities was logged through Cloud Logging. We have useful metadata around which actual resource was used, the IP address and other metadata about the requester, and who the actual principal is that made that request. And then finally we can also see, yeah, it already came in, the actual call to get the access token for the account.

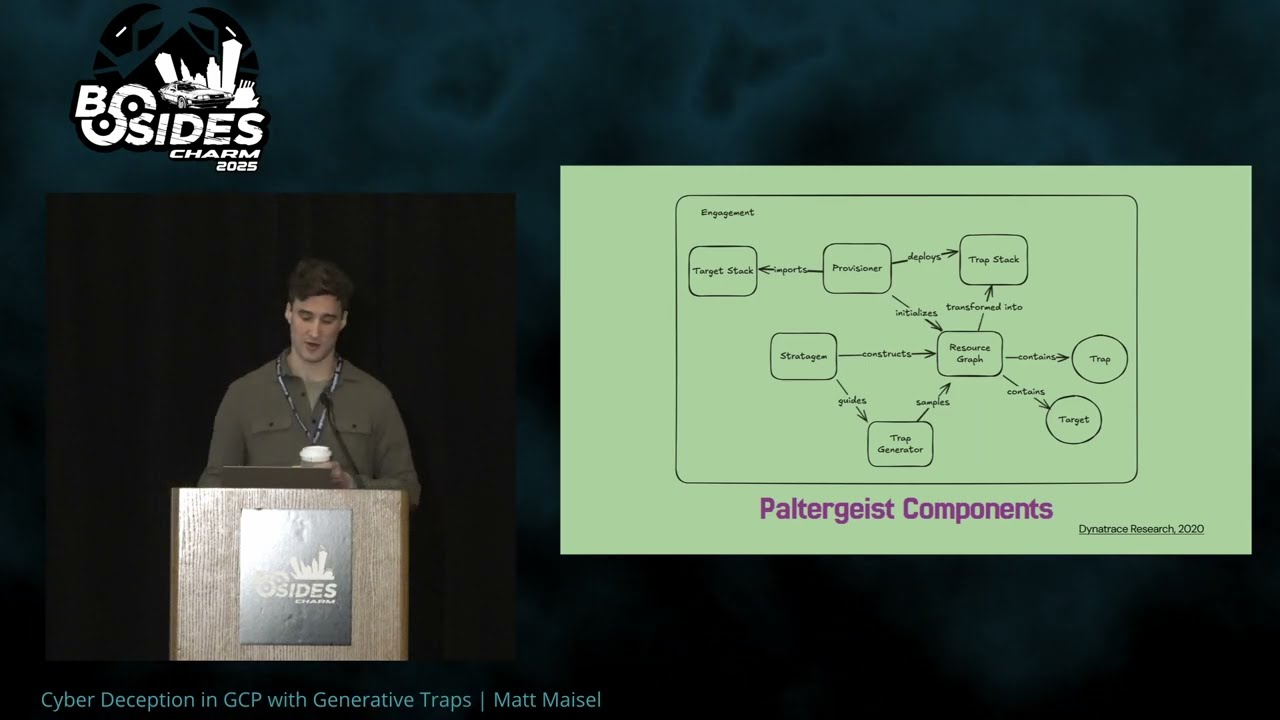
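A minimal sketch of the two logging pieces described in the demo, assuming the trap interactions arrive as Cloud Audit Logs entries: a filter that matches trap resources by identifier, and extraction of the principal, caller IP, method, and resource from one entry. The function names, trap identifiers, and sample entry are hypothetical illustrations and are not taken from Poltergeist's source.

```go
package main

import (
	"encoding/json"
	"fmt"
	"strings"
)

// trapFilter builds a Cloud Logging filter matching audit log entries whose
// resource name contains any of the given trap identifiers (hypothetical helper).
func trapFilter(trapIDs []string) string {
	if len(trapIDs) == 0 {
		return ""
	}
	clauses := make([]string, 0, len(trapIDs))
	for _, id := range trapIDs {
		// ":" is the substring ("has") operator in the Logging query language.
		clauses = append(clauses, fmt.Sprintf("protoPayload.resourceName:%q", id))
	}
	return `logName:"cloudaudit.googleapis.com" AND (` + strings.Join(clauses, " OR ") + `)`
}

// auditEntry keeps only the audit log fields called out in the demo.
type auditEntry struct {
	ProtoPayload struct {
		MethodName         string `json:"methodName"`
		ResourceName       string `json:"resourceName"`
		AuthenticationInfo struct {
			PrincipalEmail string `json:"principalEmail"`
		} `json:"authenticationInfo"`
		RequestMetadata struct {
			CallerIP string `json:"callerIp"`
		} `json:"requestMetadata"`
	} `json:"protoPayload"`
}

// trapAlert is a flattened view of one trap interaction.
type trapAlert struct {
	Principal, CallerIP, Method, Resource string
}

// parseTrapAlert extracts who touched which trap, how, and from where.
func parseTrapAlert(raw []byte) (trapAlert, error) {
	var e auditEntry
	if err := json.Unmarshal(raw, &e); err != nil {
		return trapAlert{}, err
	}
	p := e.ProtoPayload
	return trapAlert{
		Principal: p.AuthenticationInfo.PrincipalEmail,
		CallerIP:  p.RequestMetadata.CallerIP,
		Method:    p.MethodName,
		Resource:  p.ResourceName,
	}, nil
}

// sampleEntry is made-up illustrative data, not output from the talk's demo.
const sampleEntry = `{
  "protoPayload": {
    "methodName": "storage.objects.get",
    "resourceName": "projects/_/buckets/billing-exports-archive/objects/q3.csv",
    "authenticationInfo": {"principalEmail": "dev@example.com"},
    "requestMetadata": {"callerIp": "203.0.113.7"}
  }
}`

func main() {
	fmt.Println(trapFilter([]string{"billing-exports-archive", "svc-deploy-legacy"}))
	alert, err := parseTrapAlert([]byte(sampleEntry))
	if err != nil {
		panic(err)
	}
	fmt.Printf("%s called %s on %s from %s\n",
		alert.Principal, alert.Method, alert.Resource, alert.CallerIP)
}
```

Matching on a substring of the resource name keeps the filter short, but it is only one possible choice; the talk mentions filtering "by different identifiers", so a real deployment may need to match on other fields as well.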

That's just a demo showing how quickly interactions can generate alerts or other logs for use in investigation or response. So how does this all work? This is a diagram of the Poltergeist components. This is an architecture inspired, again, by Dynatrace research, who, as you can probably tell by now from this talk, have published some great papers and have some great work around deception. There's a framework they put out a couple of years back that formulates the use of deception modules in the cloud, specifically trying to inject them into different resources and build up these

graphs of traps that could alert on multi-step cyber attacks. What's happening with Poltergeist, at least with this implementation today, is that it creates a Pulumi provisioner that attaches to the target stack, which is just a standard Pulumi project and stack. The state file for those resources is exported and then imported to construct a resource graph. Then, given the stratagems taken as input, the stratagems guide trap generation, using Gemini right now, but it could be set up with any LLM provider or even Ollama. Those are set up in a way to create the traps

and then add those traps back to the resource graph alongside the other target resources, and then finally that is deployed by Pulumi back to GCP. So that's the architecture. So where do we go from here? There are multiple directions I think that could be worked on. One, there's an idea around where optimal trap placement is. It's pretty naive at the moment: there's just static logic that says, hey, go deploy this trap and set it up so that principals can impersonate it. But given the resource graph, we could be more intelligent. We could try to maximize

discoverability by looking at inbound edges or traversals to traps. And we obviously also want to minimize exposure to production resources, and right now that's just done by hard constraints. Thank you. So second, benchmark evaluations. Benchmark evaluations are needed to more empirically measure the effectiveness of generated traps versus manual traps. I don't have any numbers right now on whether the traps generated by Gemini or other models are better in terms of discoverability, indistinguishability, or deployability. So I think that's one area of research. And this is where the use of, again, potentially Honeyquest could come into play, where some of

the trap generation could be done without having to implement that in Pulumi. I think it would be pretty easy to hook up LLMs as a judge, or agents, to basically act like the humans in the study that they ran at the time. I think that would be an interesting research direction. And then finally, the last direction I see right now is implementing more stratagems. I only have a couple of these stratagems implemented in code today. And then of course getting more coverage for other platforms like Kubernetes, AWS, and Azure. So it's 2025, and now with deception tools like

Poltergeist, everyone thinks in graphs, defenders think in game theory, attackers win. Or do they? If you work in SecOps or security engineering and you have constituents and environments in the cloud that you're responsible for, I hope you'll check out Poltergeist. It's up on GitHub today. And if it helps build out your internal deception capabilities, let me know. Beyond that, thank you for your time today. Happy to take questions now, or please come find me. I'll be here the rest of the day. Thank you very much. [Applause] Yes, sir. So, when one of these is deployed, how would I

[inaudible] to know that there needs to be something? Yes, so, OPSEC around these traps. Sorry, thank you, sir. The question is, if you deploy these traps, how do you annotate them in a way that you can tell your DevOps team or other stakeholders that they're out there, so that they don't trigger false positives, or at least don't worry about what this thing is? I guess maybe I didn't demo it well enough with the scrolling, but this right now uses Pulumi Cloud, so that trap stack is added there. So if you have, obviously, ACLs

around your Pulumi, whoever can go and inspect which projects and stacks are deployed would have a way to clearly see, hey, here's the stack of traps that are deployed relevant to this project. I think that would be one way to get access to that without having to announce it, or have other things that could potentially be signatured, like tags or other things in GCP.
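A minimal sketch of that inventory idea, assuming the trap stack's state is available as the JSON produced by `pulumi stack export`: it lists the resources in the stack so a stakeholder with access to Pulumi can check whether something is a known trap, with no tags on the GCP side. The stack name, project name, and bucket are hypothetical, and the helper is an illustration rather than part of Poltergeist.

```go
package main

import (
	"encoding/json"
	"fmt"
	"strings"
)

// stackExport models only the parts of a Pulumi stack export used here.
type stackExport struct {
	Deployment struct {
		Resources []struct {
			URN  string `json:"urn"`
			Type string `json:"type"`
		} `json:"resources"`
	} `json:"deployment"`
}

// trapResource is one deployed trap as recorded in the trap stack.
type trapResource struct {
	Type string
	Name string // Pulumi logical name; may differ from the cloud resource name
}

// listTraps returns every non-internal resource found in the export.
func listTraps(export []byte) ([]trapResource, error) {
	var s stackExport
	if err := json.Unmarshal(export, &s); err != nil {
		return nil, err
	}
	var traps []trapResource
	for _, r := range s.Deployment.Resources {
		// Skip Pulumi's own bookkeeping entries (stack root, providers).
		if strings.HasPrefix(r.Type, "pulumi:") {
			continue
		}
		// A URN has the form urn:pulumi:<stack>::<project>::<type>::<name>.
		name := r.URN
		if i := strings.LastIndex(r.URN, "::"); i >= 0 {
			name = r.URN[i+2:]
		}
		traps = append(traps, trapResource{Type: r.Type, Name: name})
	}
	return traps, nil
}

// sampleExport is made-up illustrative data with hypothetical names.
const sampleExport = `{
  "version": 3,
  "deployment": {
    "resources": [
      {"urn": "urn:pulumi:traps::poltergeist-demo::pulumi:pulumi:Stack::poltergeist-demo-traps",
       "type": "pulumi:pulumi:Stack"},
      {"urn": "urn:pulumi:traps::poltergeist-demo::gcp:storage/bucket:Bucket::billing-exports-archive",
       "type": "gcp:storage/bucket:Bucket"}
    ]
  }
}`

func main() {
	traps, err := listTraps([]byte(sampleExport))
	if err != nil {
		panic(err)
	}
	for _, t := range traps {
		fmt.Printf("%s\t%s\n", t.Type, t.Name)
	}
}
```

Keeping the inventory in the stack state rather than in resource labels means access to the list is governed by whoever can read the Pulumi stack, which matches the point about not leaving a signature on the traps themselves.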

Question: are responsive actions built in, like being able to lock people out of accounts or artifacts, or offsite log activities? So the question is basically what sort of automated response activities would be in the tool, or potentially could be done, when there's an alert from one of the traps. I currently don't have that implemented in the tool whatsoever. But I think that's where it depends on your organization, what your policies are, and what your playbooks are. If you have a SOAR, or your own sort of playbook automation, I think it would be interesting to basically monitor more than do an immediate

response. There's an idea where, if you can have correlations of trap alerts that span more than one trap, that attack path is obviously indicating that someone is actively traversing and operating in your network. I feel like that provides a higher degree of fidelity that there's something really bad happening. So again, I think there are a couple of different ways you could take that. There's no automation built into the tool currently, but there are now tools like Tracecat and others out there that are pretty cool for automating playbooks, so that would be interesting to follow up on

that. Go ahead. We all know how analysts love adding a new dashboard that they have to learn. Does this provide capabilities to send logging and alerts directly to [inaudible], or do you have to spin this up and take a look at it? So the question is, yeah, we don't want another tool to log into as analysts. We don't want another single pane of glass for trap alerts. This all goes to Cloud Logging. I think it's a very simple step to go from there into whatever SIEM or security data lake or whatever tool you're using for looking at alerts. So I would expect that to be the place where all

the alerts coming from traps are correlated, so you can use them in your normal workflow for alert triage and so on. You could leverage that. So there's not a guide on there? You just basically have to set up your own? Correct. Yeah. Cool. Looks like there's no more time left, but please, if you're interested in deception, come find me. Again, thank you very much. [Applause]
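The correlation idea raised in the Q&A, where alerts from the same actor across more than one trap give higher fidelity than a single hit, can be sketched as follows. This is a minimal illustration assuming alerts have already been reduced to a principal and a trap name; the type names and the threshold of two distinct traps are assumptions, and the tool itself has no such automation today.

```go
package main

import (
	"fmt"
	"sort"
)

// trapHit is one trap interaction, already extracted from a log entry.
type trapHit struct {
	Principal string
	Trap      string
}

// finding summarizes the distinct traps a single principal has touched.
type finding struct {
	Principal string
	Traps     []string
	Escalate  bool // true when the principal touched at least `threshold` distinct traps
}

// correlate groups hits by principal and flags principals whose activity
// spans several distinct traps, which suggests traversal of an attack path.
func correlate(hits []trapHit, threshold int) []finding {
	seen := map[string]map[string]bool{}
	for _, h := range hits {
		if seen[h.Principal] == nil {
			seen[h.Principal] = map[string]bool{}
		}
		seen[h.Principal][h.Trap] = true
	}
	findings := make([]finding, 0, len(seen))
	for principal, traps := range seen {
		names := make([]string, 0, len(traps))
		for t := range traps {
			names = append(names, t)
		}
		sort.Strings(names)
		findings = append(findings, finding{
			Principal: principal,
			Traps:     names,
			Escalate:  len(names) >= threshold,
		})
	}
	// Sort for stable output, since map iteration order is random.
	sort.Slice(findings, func(i, j int) bool {
		return findings[i].Principal < findings[j].Principal
	})
	return findings
}

func main() {
	// Hypothetical hits: one principal touches a bucket trap and a service account trap.
	hits := []trapHit{
		{"a@example.com", "billing-exports-archive"},
		{"a@example.com", "billing-exports-archive"},
		{"a@example.com", "svc-deploy-legacy"},
		{"b@example.com", "billing-exports-archive"},
	}
	for _, f := range correlate(hits, 2) {
		fmt.Printf("%s traps=%v escalate=%t\n", f.Principal, f.Traps, f.Escalate)
	}
}
```

Grouping by principal is the simplest key; grouping by caller IP or by time window would be equally reasonable, and in practice this logic would more likely live in the SIEM or playbook tool that receives the Cloud Logging export.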