BSidesSF 2025 - Using AI to Discover Silently Patched Vulnerabilities in Open... (Mackenzie Jackson)

Now I'd just like to very briefly introduce Mackenzie, who will be giving the talk "Shadow Patching: Using AI to Discover Silently Patched Vulnerabilities in Open Source." Take it away. Thank you. Thanks, everyone. It's great to see a full house here, and I'm really looking forward to speaking. BSides is always one of my favorite conferences, so I want to thank the organizers for accepting my talk here today. A little bit about me: if you're trying to pick my accent, I'm from Aotearoa, or New Zealand. I'm the co-founder of Conpago, which is an Australian company that still exists somehow. But I live now in the Netherlands and I work

for a Belgian company called Aikido Security, just to keep everyone guessing where the heck I'm going to be in the world. You can follow me anywhere online at the handle advocatemack. All right. So this talk is about some of the research that we've been doing at Aikido Security, particularly around securing the open source supply chain and how AI is playing a role in that, and some of the results that we got have been really insightful. But before I get into the research and some of the technical details around it, I want to talk a little bit about the open source supply chain in and

of itself. I think some of you will probably be very familiar with the next couple of slides, but I just want to go through them to get everyone onto the same page. So, there's this stat that gets thrown around a lot, which is that 85% of your code doesn't come from what you write. It comes from open source components or dependencies. Now, this can change, obviously; maybe it's more, maybe it's less, but a significant portion of what makes your application run comes from these components. And it's really quite scary, because in some ways an attacker may know your application better than you do if there are vulnerabilities that are introduced via

some of these open source dependencies. So just to give you a visual representation, we have our application here. Our application is doing amazing things, and then we have some open source dependencies that help it do those amazing things. And this makes sense. We know what these open source dependencies are because we picked them. But what we don't understand is that these open source dependencies have dependencies, and those open source dependencies have dependencies, and we can keep going down about 30 layers. And this still looks somewhat manageable. But then we have to introduce a new component, and that's our third-party services. And our third-party services are also connected to our

application doing things. And at this point it still looks manageable. I can see what's open source in my project, I can see what's a third party, and I can follow the path down. Now, if I was to make this graphic more accurate and give a true representation of what it actually looks like, it would look more like this. And this is because open source dependencies can be dependent on each other. Our third-party services are dependent on open source dependencies. And it's all just a giant crumbling mess of spiderwebs. And why is this so significant? Well, it's very, very hard to understand this at all. And if one of these open source

components down here, where we see that little red dot, becomes malicious or a vulnerability is present in it (let's just call this little box here Log4j), then a whole bunch of things upstream can be turned malicious, which can turn our application malicious. And what's interesting is that if we don't know that we have this box called Log4j in our application, we may not even know that it exists at all. So we don't even know that we're vulnerable to it. So the open source supply chain is really important. It's also very convoluted, it's very confusing, and it's hard to understand. Now there's this meme here. I'm sure everyone's already seen it before. It was very

popular. It describes this problem in a nutshell, where everything's stacked on top of some open source project that someone's been maintaining. This meme became so popular that it sparked a term that I love called the Nebraska problem. And the Nebraska problem basically describes this: everything's so convoluted and linked together that we don't actually understand what's holding everything up. We can see examples of this in XZ Utils, where we were this close to the whole internet crashing or being vulnerable, and also in Log4j. This meme is so accurate that we even know exactly what these components

are. So let's have a look at one of them. UA Parser here is an open source dependency, and it does something very simple but very important: it lets you know what systems, what browsers and operating systems, your users are viewing your website or your application through. What tools are your visitors using to use your application? It passes that information along. It does something important, and this would pass everyone's smell check as to what we should use in our application. It has 10 million weekly downloads. It has 56 versions, so it's got a long version history. It's got thousands of

dependents on it. So this passes a smell check, and a lot of organizations, big organizations, use this, including Meta and other massive companies, because it was pretty solid. But then a while ago, this was posted on a Russian darknet forum, saying: hey, I have an npm account for sale. There's no two-factor authentication on this npm account, and you can do crypto mining with it, yada yada yada. So, guess what happened? This ended up being sold for $20,000. And UA Parser had this code put into it, which is very nasty, along with some other malicious code, but it's basically trying to steal credentials and steal

cryptocurrency. So, how do we know if we are using a vulnerable version of UA Parser in our application? How do we know if UA Parser is one of those? Well, there's something called a CVE report, and we have vulnerability databases, and we rely on these very deeply in security at the moment. So, here is the vulnerability report for UA Parser. It lets us know that there's a crypto mining backdoor. It lets us know what version it is, and I can check in my project to see whether I'm using this version of UA Parser; if I am, then I am vulnerable to this. And we can extrapolate that to all of those

components, including the downstream components if we can discover them, to find out what these vulnerabilities are. There are a few more. Here is another very famous one, which is a vulnerability in the Linux kernel called Dirty Pipe. Now, this is actually different, because UA Parser was malicious: an attacker took over the account and maliciously made an update. Dirty Pipe was just a vulnerability. Nothing malicious happened. But the result was the same: we all could have been vulnerable to this simply because there was a vulnerability in there that people could exploit if they knew about it. So how do we know if we have this vulnerable version in our applications? Well, we can check a CVE

report. And this here is probably the most famous one, Log4j. Of course we all know about this one, from 2021; it was a very bad Christmas. I think everyone remembers where they were the day that that happened. And we know about this because we have the CVE report. Here's a little fun fact: Veracode did a study in 2023, exactly two years after the Log4j drama, and found that 30% of applications were still running the vulnerable version of Log4j, which is kind of terrifying in a way. I don't know what it is today, but it's still probably going to be a significant, you know, a very

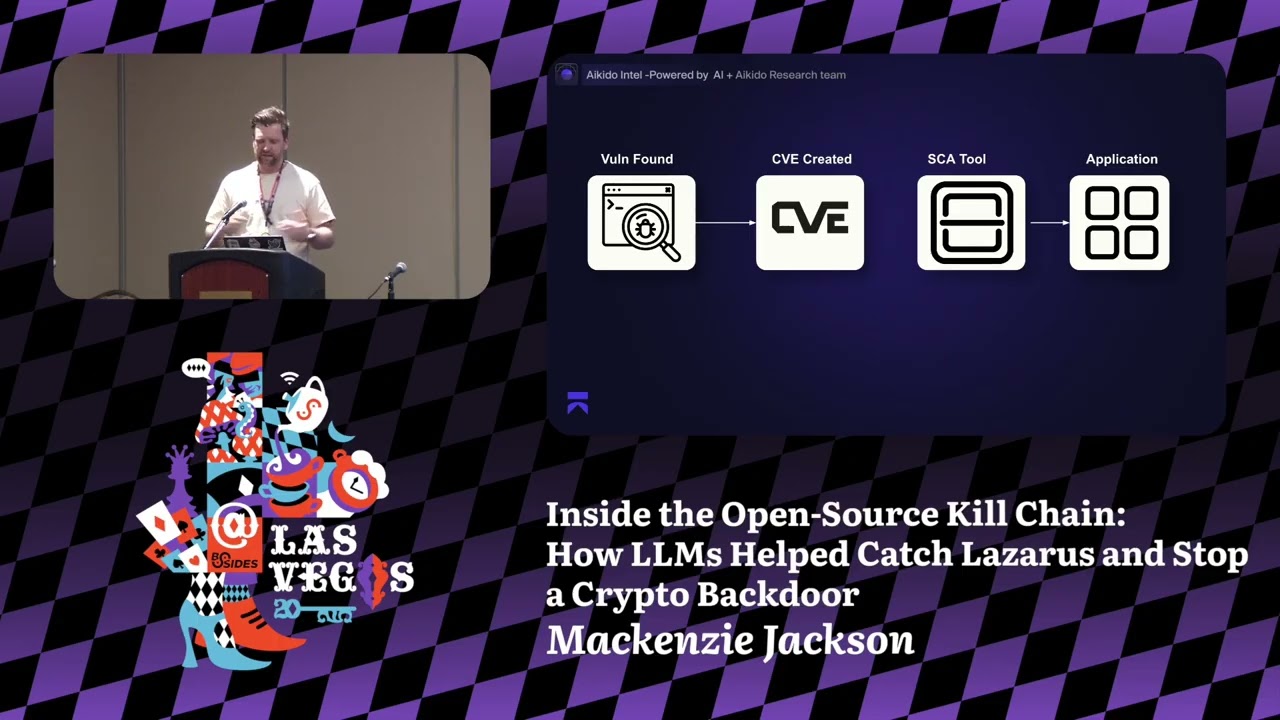

proportionate part of the internet that's still vulnerable to this. So, quite scary stuff here. Why is this all relevant, why am I talking about this, and how does it relate to the research in AI? I'm getting there, I promise. It comes back to how we actually detect this. When a vulnerability is found in an open source component, it's added to these national databases. A CVE is created, and then we usually use something called an SCA tool, software composition analysis. It looks at all our dependencies, hopefully including transitive dependencies, and it will look in our application and tell us what we're using. It may be able to create

something like an SBOM so that we can see it clearly. But most importantly, it checks with the CVE databases, with the vulnerability databases, so that we know if we're vulnerable or not. This is how we secure our open source supply chain now. But what if the vulnerabilities weren't disclosed? Because this has a very big flaw in it right now, and the flaw is that this only works when the vulnerabilities are known and reported. And why this is so important is that if we know that there's a vulnerability in our supply chain, we will take action. We will update our systems. But if we don't know, we may not. You know the saying: if it's not broke, don't fix it.
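
To make that flaw concrete, here is a minimal sketch of the lookup an SCA-style check performs. It is an illustration only, not any real tool's implementation, and every package name, version, and advisory ID in it is hypothetical. A dependency is flagged only when a published advisory lists its exact version.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Advisory:
    """A published advisory: which package and which versions are affected."""
    advisory_id: str
    package: str
    affected_versions: frozenset


def find_known_vulnerabilities(dependencies, advisories):
    """Return (package, version, advisory_id) for every dependency that
    matches a published advisory. Anything unpublished is invisible here."""
    findings = []
    for package, version in sorted(dependencies.items()):
        for advisory in advisories:
            if advisory.package == package and version in advisory.affected_versions:
                findings.append((package, version, advisory.advisory_id))
    return findings


# Hypothetical data, invented purely for illustration.
ADVISORIES = [
    Advisory("EXAMPLE-2024-0001", "example-parser", frozenset({"1.0.0", "1.0.1"})),
]
DEPENDENCIES = {"example-parser": "1.0.1", "example-http": "2.3.0"}

if __name__ == "__main__":
    for finding in find_known_vulnerabilities(DEPENDENCIES, ADVISORIES):
        print(finding)
```

In this sketch, if `example-http` 2.3.0 contained a flaw that its maintainers fixed without ever publishing an advisory, the check would still return nothing for it, which is exactly the gap described above.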

I'm sure everyone in software development can relate to that: you upgrade your dependency, everything crashes, and you spend the next week trying to figure out why this has happened. So we often don't update as regularly as we should, maybe because there are no known vulnerabilities. But what if a vulnerability was found, and what if it was not reported? That's what we wanted to try and figure out with our research: how many of these vulnerabilities exist, and how many of them are never reported? And it's a very hard problem to solve because we're talking about recall here. Precision is something that we can understand in our research, but recall

is essentially looking at what we missed, and it's very hard to find something that doesn't exist. But we decided that this is a perfect task to introduce LLMs into, and to use AI to try and uncover how many of these vulnerabilities are found but never reported. There's a name for this: officially it's called silent patching. I just don't like the name, so I'm on a one-man crusade to rebrand it to shadow patching, which is why I called this talk Shadow Patching. So far it's not taken off, but we're going to get there slowly. Okay. So that's that,

and why, then, are these vulnerabilities not reported? If they've found it, why doesn't it go through? There are a few reasons. There's fear of reputational damage: no one wants this vulnerability in the application that they've made. Maybe you lack the resources to be able to solve it correctly. Maybe there's a belief that, oh, this is just minor; anyone in bug bounty who has reported an issue that they know is serious, but has had to fight with people to get them to understand, will sympathize with this. Maybe your dog ate the CVE report. That's an excuse as relevant as any of the rest. Now, I

do want to say here that I do have some sympathy for people who are maintaining open source projects, because if you find a vulnerability yourself and you have to go through a long reporting process, and you're already just working on this as your side project, I can understand why you would not want to report it. However, the fact of the matter is that we're so reliant on this reporting system that it's really important that we all do. There's also another area that creates concern, and that's a bottleneck that we're seeing here: there's a huge bottleneck between when a report is made and when it's evaluated by various different people

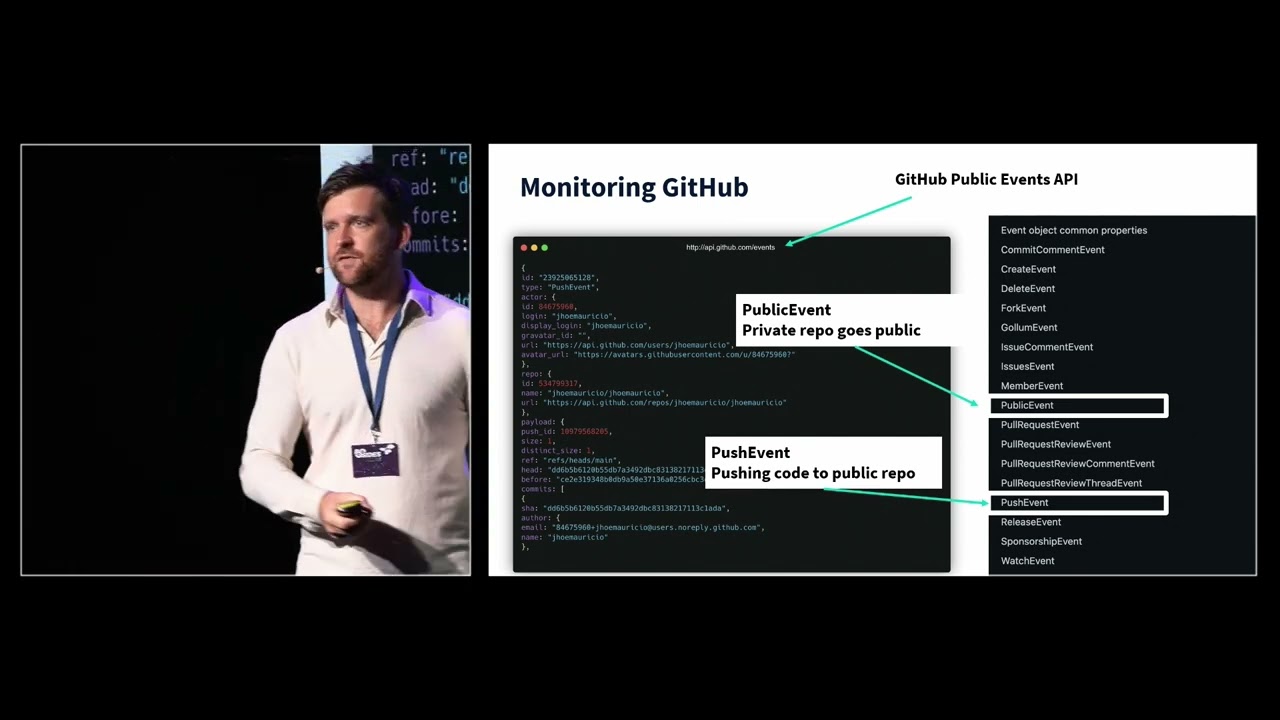

and added into the database. So this bottleneck is also affecting it, because maybe a vulnerability has been found, maybe it's been patched, but there's a long period before anyone actually knows about it. And this gives attackers opportunity. So we created something called Intel. This is our threat feed here. It's on GitHub; it's an open source threat feed. We also have a very nice, pretty graphical website, but I was told I'm not allowed to show it because it was too producty, but that's okay. It's just the GitHub thing, but pretty. And this was our initial concept: we're going to use LLMs to monitor public change logs. And then what we

wanted to do is identify when a change has been made to software that relates to security. We will then check that against vulnerability databases and see if they've been updated, and then we'll verify those results with a security engineer. A real person needs to look at this. So that's what we decided to do. And at the start, we thought this was going to be very, very simple, that this was going to be a very easy problem to solve. But it very, very quickly got complicated, and there are a few things that we quickly ran into. One is that change logs have no standard format and they're not even in

the same place. Sometimes they're on GitHub, sometimes they're on a website, sometimes they're somewhere else. So trying to identify where all these change logs were and verifying results is very, very time-consuming. So we needed to make sure that the model that we built was as accurate as it possibly could be. Just as an example, here are three different change logs of very popular open source products, and they're all in different places. So one is on the website, two are on kind of websites. There's no standard format. So trying to get this all to work was very difficult. So here's the architecture of how we actually did this. First, we

created a source, a list of the 5 million most popular open source packages. These are the ones that we really wanted to monitor. Next, we used scrapers to scrape all of the information from that, including the change logs. The next step, and this is the first part where we used an LLM, is that we used an LLM to reformat all the change logs into a standardized format so that we could then run scanning on them in that same format. We then used a different LLM to actually identify vulnerabilities from the language in them. Next, we built a system to cross-check everything that we found with the NVD, well, with the

vulnerability databases, multiple of them, including NVD and GitHub Advisory and others. And then the last step was the human step, where someone needed to confirm this and classify it. This human step was really important at the start because everything got fed back into the models and we were able to improve. So over time, we've really improved the efficiency and performance of these models, to the point where we've got to a very manageable level of false positives for the humans to be able to identify and look at. Now, one of the other considerations here is that at the start we were looking at using a custom AI model, and

it's very cool to stand up here and say we built our own AI model, but we very quickly decided against that and we just started using OpenAI's models. The reason for that is basically that by the time you build your own model and update it, it's already outdated as soon as the next update comes, whereas you get all this free performance if you're using OpenAI. So we started using the standard models for this, and we found that they were getting increasingly more performant for us. So that is one of the considerations that you might have when trying to choose this. And I put this

slide in purposely because I know a lot of people are right now looking at how they can implement AI, and I hear a lot of people talking about using their own models or models from Hugging Face. I'll just say that, from our experience, be careful in making that decision. All right. So what did we find? This is the juicy stuff. In 2024, we ran this for a full year. We found 550 undisclosed vulnerabilities, which is quite a lot. 61 of them were critical and 113 were high. Obviously low severity was the most that we found, but we were really concerned by the huge number of critical and high severity vulnerabilities that

we also found in here. This year, just in January and February, we found 126. So this is actually increasing. This is partly because our models' performance is getting better, and partly because, well, the world's kind of chaotic. But we've also found a lot more high severity and critical vulnerabilities. This is the part that really scared me. You can wonder, okay, is the problem that these were never reported or never going to be reported, or is it that they haven't been reported yet, that bottleneck that I was talking about? Well, it's still really important that we find them earlier. The earlier the better, and I'll explain

exactly why that's more relevant now than ever. But 67% of them were never disclosed. So the vast majority of them never got a CVE number against them. Once we find a vulnerability, we have a system that repeatedly checks whether a CVE has been created, and when one is, we note that. So the fact that 67% of them were never reported is really quite scary. And what's most scary is that this even applies to the critical vulnerabilities: 56% of the critical vulnerabilities were never reported. So that's very scary. There is a huge number of open source packages that are popular, that are being

used in production, that have critical vulnerabilities that someone knows about but you, as the person using them, don't. So let's have a look at some of the more interesting findings that we had. One of them was Axios. This is a promise-based HTTP client. I'm sure a lot of people are familiar with this; it's a very popular project. This had a 77 out of 100 high severity vulnerability. It was discovered in January 2024, and so far it has no CVE. And this is a project that has 56 million weekly downloads, that has a high severity, almost critical severity, vulnerability within it that was discovered by the maintainers and fixed

by the maintainers but never actually reported, and that still doesn't have a CVE. Here we have Apache ECharts. This had a cross-site scripting vulnerability in it that was medium, but almost high, severity. This is a very popular package with nearly a million weekly downloads. Again, this was discovered in March 2024, so over a year ago, and there's still no CVE for the affected version. This one is mostly interesting not so much because of the package itself, but because of the name behind it. You know, this isn't a company that you would expect to not be reporting vulnerabilities. And also,

another one here: this had a critical path traversal vulnerability in it. Again, millions of downloads for this one. This is the core lateral CMS Composer package, and this one doesn't have any CVE attached to it either. So there are a lot of very critical things in there. One of the points that I want to stress here is why it is so critical now that all of these vulnerabilities are reported, and reported quickly. Well, what we built was in essence a fairly simple use case of LLMs. We're looking at large public data sets and we're finding when a vulnerability has been fixed, and essentially that means that an attacker

can take this exact same approach, which means that we now have to assume that all of these have also been found by the bad guys. So they now have the opportunity to exploit them. This is why it's so important. Now, a question that you're probably all having, and that everyone has when I talk about this, is: why aren't we disclosing the CVEs ourselves? So we are making sure that we do responsible disclosure of this. We're making our threat feed publicly available, and available in various different ways like RSS feeds, and we're also trying to be registered as a CNA, a CVE Numbering Authority. This is a company that is able to report vulnerabilities.

This is a less than fun process, I will say, but we're going down that path. So hopefully in the future we will be able to report all of these as CVEs as we go. Now another question you might have is: okay, but why an LLM? If you're looking at public data, this sounds like a problem that could be solved by more traditional scanning techniques, using traditional tools, maybe writing some rules to try and identify certain things. The reason why an LLM was so useful and so important in this case is that the language used to describe a vulnerability fix is very ambiguous. Now, when someone's not trying to hide the fact that they've got

a security vulnerability, and maybe they're just not reporting it, they will probably use language like this easy-to-discover example here, where they've fixed, you know, "escape select text to avoid cross-site scripting exploit." I think that's about as easy as it could be, and you could definitely write a rule to find this in a change log. But then it starts getting harder, and it's particularly hard when someone is trying not to reveal the fact that they have vulnerabilities in there. For example, if we look at that last one there, you know, "increased default work factor," blah blah blah, to some blah blah blah iterations like

this; I don't even fully understand this one in this project, but the LLM was able to discover it and understand that this is actually describing a vulnerability fix in the project. So this is much more difficult to discover. And then you've also got to think about how you would even possibly write so many rule sets to find all these, especially when you're acting blind. This is why an LLM was so important to be able to do this. All right, what's next? Well, malware detection. We've just officially launched malware detection. When I made these slides, it wasn't officially launched, so I had the "don't tell anyone" on there, but you can tell

people now. You can tell everyone out there that we're doing malware detection using the same methods. We've gone with the same concept, but we're using a slightly different method to detect malware inside the open source supply chain. Basically, the LLM part in here is different. We're not using an LLM to actually identify malware specifically. What we're doing is using an LLM to act as a triage step and as an orchestrator. So we have these models watching npm and the other different sources. Mainly, npm is the

biggest source for us at the moment. We scan these for malware using traditional tools that we have, and then we get indicators from this. We have 30-plus indicators that we use to identify malware, and how strong the response is depends on how many indicators a package has. We then use LLM tools to look through that, triage the results, and make a determination on whether to give it to a human or not. And then we have human verification. What we can say about this approach is that we have a less than 5% false positive rate in this.
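
As a rough illustration of that flow, the sketch below counts indicators from a traditional scanner, grades the signal, and lets a reviewer function decide whether a human should look. It is a sketch under stated assumptions, not the actual system: the thresholds, the indicator names, and `stub_reviewer` (a placeholder standing in for the LLM triage step) are all hypothetical.

```python
from typing import Callable, Sequence


def signal_strength(indicators: Sequence[str]) -> str:
    """Grade a package by how many distinct indicators fired.
    The thresholds are hypothetical, chosen only for illustration."""
    count = len(set(indicators))
    if count == 0:
        return "none"
    if count <= 2:
        return "weak"
    return "strong"


def triage(
    package: str,
    indicators: Sequence[str],
    reviewer: Callable[[str, Sequence[str]], bool],
) -> str:
    """Decide whether a scanned package should go to a human.
    `reviewer` stands in for the LLM triage step described in the talk."""
    if signal_strength(indicators) == "none":
        return "dismiss"  # nothing fired, so there is nothing to triage
    if reviewer(package, indicators):
        return "human_review"
    return "dismiss"


def stub_reviewer(package: str, indicators: Sequence[str]) -> bool:
    """Placeholder for an LLM call: escalate only on a strong signal."""
    return signal_strength(indicators) == "strong"


if __name__ == "__main__":
    # Hypothetical indicator names.
    fired = ["obfuscated_code", "install_script", "unknown_domain"]
    print(triage("example-package", fired, stub_reviewer))
```

Keeping the reviewer as a plain callable means the triage logic can be exercised with a stub, and a real model call could be swapped in without touching the rest of the flow.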

And when we do have a false positive result, it comes down to something that is definitely a dangerous way of doing something in code, but maybe there's no malicious intent behind it. So we have a very high rate of being able to detect these. And here we found some really, really cool stuff, especially recently. Just in March, we found 611 malicious projects just on npm. And the average time to detect these with our LLM model was 5 minutes. If we compare that to a benchmark using the OpenSSF, they had an average time to detection of 10 days. We haven't missed

any that they've found, but there are quite a lot that we've been able to find that haven't been reported there. Now, to give you a bit of an example, this one's really fun. I love this one. This one is from the North Korean APT Lazarus. We were alerted to malware in this project here; the project was called React HTML to PDF. At first, when we looked at the package, we couldn't actually see that there was anything wrong. There was nothing obviously malware-y in here. This was the code, and this was the package that had

the malware on it. Can anyone here see an indication that there may be malware on this slide? Yeah. Yeah. It's the scroll bar. They had just obfuscated it using the most simple way, which is so stupid because it almost works, but the scroll bar was in the way, and this was actually what was there. It was going to this weird domain, making what looked like an IP check, but it wasn't. Basically, what that did is deliver a payload of malware that did a whole bunch of nasty things. Primarily, it was looking for cryptocurrency wallets from

browser wallet extensions. This is very typical for Lazarus Group at the moment. It was also trying to find credentials, and then the last step was to try and persist access by installing malware onto the machine that it was running on. So there were very, very nasty things there that we found. One thing that was really interesting about this is that we found it and we looked at it, and then we noticed that a new version came in 10 minutes later, and then another new version came in 10 minutes later, and we realized that we were watching the group debug their malware in real time.

So there's a fun story that we wrote about that, which is quite cool. And here is some of the payload that we looked at. In this case, these are the IDs of some browser extensions for browser wallets. It was looking for caches, looking for passwords. It was trying to get your macOS keychains if you're on a Mac. So, doing lots of nasty things, and then, as I said before, installing that backdoor. This one here is more recent. This one we actually discovered this week, which I'm very proud about. This one is from Ripple XRP, which is a

cryptocurrency. There was a package from the XRP foundation, which is the official foundation managing this, and they produced a package called xrpl. This was essentially the official SDK for developers to communicate with the Ripple ledger, and a lot of the major exchanges would have been using this. Think about Coinbase, Binance, Bybit: this is the official package, so there's no reason why you wouldn't be using it. We discovered this week that a backdoor was actually put into this. We discovered it a few minutes after it was introduced. We quickly realized that one of the developers' npm

tokens had been compromised, and that was how they did this. They bypassed the GitHub workflow that they had. The one thing I will say about this, which is a recommendation, is to sign your releases so that you can verify them. There was no signed release here, so they didn't need to go through the official CI/CD pipeline to be able to publish this. And here it's basically introducing this function, check validity of seed, which then goes to this dodgy domain, gets malware, and posts information. Essentially, what this was doing is trying to steal your private keys, not

just for Ripple, but all of your private keys. So the fact that they managed to do it on the official Ripple package was very scary, but it actually would have impacted a whole bunch more than just Ripple. So this is really scary. And our reporting on this one went fairly viral, or viral for us, this week, so you may have heard about it. But how that was actually discovered is our LLM malware scanning system that we've introduced here. So, that's all I have for you today. I'll be happy to take questions and have further discussion. Aikido Security does have a booth

there, so you can come by, and you can also connect with me anywhere on social media that you like. But thank you all for watching. I hope you found this presentation enjoyable, and I hope to hear about all the fun ways you're using LLMs to detect security issues in the future.

Thanks. I don't see anything on Slido. Any live questions? Happy to come bring the mic to you.

Can you talk a little bit about how intricate the prompts you use for the detection engineering here are? And then, are there any other use cases apart from malware that you have on your roadmap? Yeah, so the prompts that we have are developing over time, like all prompts with LLMs, and feeding back into the systems. So they are getting more and more complicated as we go. What we actually started with was fairly basic prompts. At the start we tried to cast a wide net and then use our results to fine-tune that going forward. So

whilst we do have a master prompt that's quite large now, this is more referring to the CVE part of it. With the malware, the prompt is less important, and it's more about the information that the traditional scanning tools are actually feeding to it. We've found that even simple, basic prompts have been very effective in dealing with that and understanding the findings that it has to triage. What's important in the prompt for the malware is what it does and how it makes a decision based on that. So most of the prompting in the malware detection is not really

about detection. It's more about how it then makes a decision from that. There are lots of ideas that we want to pursue from this and go beyond malware. We're doing lots of interesting stuff at Aikido with AI, including things around, you know, autofixing. We're definitely going to evolve our approach. I don't know how much I can say publicly about what we're doing with AI, but I'll be happy to talk to you at the booth if you really want to know some of the nitty-gritty of what we're doing. Just don't tell anyone I told you. We have one down the front. Yeah.

Do you have data on the false positive rates for the findings here? Yeah, we do have some data on the false positives. For the unreported vulnerabilities, we're looking at about 20% false positives at the moment. We started off with 40%, and within about 6 months we managed to reach that 20% level. What we have noticed is that even though we're still continuing to feed our results back in to try and improve it, we've been pretty consistent for the last 12 months or so at that 20%. I mean, it varies slightly, but not significantly. What that kind of indicates to

us is that with this method, this is probably the ceiling, because we're dealing with language. There's always going to be some variation in there, so we think 20% may be the ceiling of what we have. At the moment, that's manageable for our research team to then look at. So we're not really looking at investing more money into that, because this isn't a tool that we envision others using. This is a tool that we're doing the heavy lifting on with the research team, and we make the findings public. But if we did want to improve it, there would need

to be another layer on top of that, and that would involve going deeper into it. So we've discussed that, but I think with our method at the moment, we've reached a ceiling. When it comes to the malware detection, our false positive rate is almost zero. And, this sounds ridiculous to any data scientist that's listening, we do have 100% recall in that. That is, there are other malware databases that we monitor to make sure we don't miss anything, and we haven't found anything in them that we missed. Whenever anyone says you have

100% recall, I think everyone automatically, including me, goes to... Um, but that's only our measurable recall, measured against what we know is out there, right? There's an unmeasurable recall that we don't know, and we're obviously going to try and find that. But the false positive rate in the malware is very, very manageable for what we have. Yeah, very impressive work, man. Honestly, I just have one thing to understand in your Intel architecture that you showed, when you say that you identify vulnerabilities in the change logs. So do you just take the diff of the code which is changed, or do you build the

entire abstract syntax of the code which is there and then try to pass it to the LLM? It's actually much simpler than that. What we're literally doing is not looking at the diff at this point. We're actually just looking at the change log itself and the language in there. So I guess on my architecture slide the wording that I used, "identifying vulnerabilities", was probably not the best choice. What we're actually doing is identifying, in the language they've used, that they've made a change. So we're identifying when they've said that they've changed input validation in this area, and then that gets kind of

flagged. So it's actually just in the language of the change log. Then it's the role of the security researchers to go into it. What we are going to do in the future is add additional layers onto that which will then look at the diffs. What we ultimately would like to do is be able to scan everything for vulnerabilities. The computational power behind that is too vast for us to do it. You can scan public GitHub for things like secrets, which GitHub does and companies like GitGuardian do, but when you start scanning the entire thing for big vulnerability data sets, then the

computational power needed for that is extraordinary. So we're not there yet, but we are looking at how we could do that. And I think the next evolution of it will be, when we identify a vulnerability, to then actually look at the diff of what's been identified and go through it. And what that will also mean is we'll be able to cast a wider net: get more at the start and then narrow it down. So I guess that's the evolution. Hopefully that answers the question. Squeeze one more in here.
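The change log step described in the answer above can be sketched roughly as follows. This is a minimal illustration, not Aikido's pipeline: the talk describes an LLM reading the wording of each entry, whereas here a plain keyword matcher (`keyword_classifier`, a hypothetical stand-in) plays that role so the example runs on its own. The phrase list and all function names are assumptions.

```python
import re
from typing import Callable, List

# Hypothetical phrases that hint at a security-relevant change.
# Illustrative only; not the actual prompt or rules from the talk.
SECURITY_HINTS = [
    r"input validation",
    r"sanitiz",
    r"escap",
    r"path traversal",
    r"overflow",
    r"prototype pollution",
]


def keyword_classifier(entry: str) -> bool:
    """Stand-in for the LLM call: True if the wording hints at a security fix."""
    text = entry.lower()
    return any(re.search(pattern, text) for pattern in SECURITY_HINTS)


def flag_changelog_entries(
    entries: List[str],
    classify: Callable[[str], bool] = keyword_classifier,
) -> List[str]:
    """Return the change log entries to hand to a human researcher."""
    return [entry for entry in entries if classify(entry)]


changelog = [
    "Bump dev dependencies",
    "Improved input validation for the upload handler",
    "Fix typo in README",
    "Properly escape user-supplied HTML in templates",
]
flagged = flag_changelog_entries(changelog)
for entry in flagged:
    print("REVIEW:", entry)
```

Passing a different `classify` callable is where a real model call would go; only the language of the entry is inspected, never the diff, which matches the answer given.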

Are your slides online? My slides are not online. I will put them online; I'll post them to my social media. Really cool project. Thanks. I just had one question. Maybe I missed this. For the malware detection in open source software packages, did you say that you're following the same approach of first looking at the change log? No, sorry. So with the malware we took a different approach, but it was the same idea of how can we use LLMs in the open source supply chain. What we've decided to do for the malware detection is we started scanning there, using more

traditional scanning methods. In npm, it's a little bit more manageable to actually scan all of it for vulnerabilities. What we found when we first started doing that, and there are companies that have been doing that and doing a great job of it, is that you get a huge number of false positives from that, and so you need a huge amount of human resources to actually then sift that down. So what we did is we put in an LLM to make a decision on all the information, what we call indicators of malware, and how the indicators are weighted. So we put all this information in and then decide: do we give

this to a human or not. And so far what we found with that approach... like, we're lazy. We're not going to overcomplicate things. I shouldn't say we're lazy. We're not lazy, but you know what I mean. We're going to take the approach that works best from a computational point of view, and we haven't found a package that has been missed through that method. Thanks. All right. That's time, folks. Thanks a lot, Mackenzie. That was great. And thank you all for the great questions. Thanks.
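The "weighted indicators, then decide whether a human sees it" idea from the last answer might look something like the sketch below. It is an assumption-laden illustration: in the talk an LLM makes the decision, while this example swaps in a fixed weighted sum and threshold so it can run standalone. The indicator names, weights, and `ESCALATION_THRESHOLD` are all hypothetical.

```python
from typing import Dict, List

# Hypothetical indicators of malware and integer weights, for illustration only.
INDICATOR_WEIGHTS: Dict[str, int] = {
    "install_script": 3,
    "obfuscated_code": 4,
    "network_call_on_install": 5,
    "reads_env_vars": 2,
}

# Assumed cut-off: scores at or above this go to a human reviewer.
ESCALATION_THRESHOLD = 6


def triage_score(indicators: List[str]) -> int:
    """Sum the weights of the indicators a scanner reported (unknown ones count 0)."""
    return sum(INDICATOR_WEIGHTS.get(name, 0) for name in indicators)


def should_escalate(indicators: List[str]) -> bool:
    """Stand-in for the LLM decision: escalate when the weighted score is high enough."""
    return triage_score(indicators) >= ESCALATION_THRESHOLD


package_findings = {
    "pkg-a": ["reads_env_vars"],
    "pkg-b": ["install_script", "obfuscated_code"],
}
for name, indicators in package_findings.items():
    verdict = "send to human" if should_escalate(indicators) else "drop"
    print(name, triage_score(indicators), verdict)
```

The point of the design is the same as in the talk: the traditional scanners stay noisy and cheap, and the decision layer exists only to cut down what a person has to look at.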