BSidesCharm - 2018 - Devon Kerr - Quantify your hunt: not your parents’ red teaming


talk about the difference between effectiveness efficacy and efficiency those are different terms that kind of get thrown around as synonyms we'll talk about att&ck itself and how to apply it as well as how to measure yourselves against att&ck and we'll talk a little bit about some of the frameworks you can use that help facilitate this process and just be aware all these things are kind of like moving targets so as they evolve our understanding of how to measure these things will also evolve this is us uh myself my name is devon kerr i'm a principal uh researcher at endgame my focus is really on detection and response technology and before that i spent about seven

years doing incident response for mandiant and fireeye so i'm trying to bring as much of that knowledge into this presentation as possible and this is my colleague roberto all right thank you very much devon my name is roberto rodriguez i'm part of the adversary detection team at specterops and also i'm the author of a few open source projects that i recommend just to you know check

source security event metadata i was trying to come up with a you know clever name my wife didn't like it at the beginning she said i don't think that sounds good i was like all right all right so that's basically going to focus a lot into providing some structure to your data and i'm this is just a work in progress so what i'm doing is i'm trying to get as much information from the logs that i collect there is more than 380 i think like logs from windows event uh and windows event logs you know uh there's actually some new even like attributes to logs that are just you know got released last year and

things like that you know with windows 10 in 2016 so i'm trying just to gather as much as i can and try to just to give also some type of like standard like a schema that we can use to start using things on your siem um and then i'm just a former uh senior threat hunter for capital one actually you know happy to see some of the people from capital one in here um just talk about the next one so why this talk and i think that i've seen actually you know people talking about quantifying your hunt and this is great i've seen some great presentations recently a couple days ago as well and uh last year but then with today's

keynote actually aligns perfectly with what we're trying to do in here which is we have to understand exactly what we have and assess exactly what we pretty much can utilize to start actually um you know going against these different you know techniques and things like that and we can just go to the next one and that also brings me to start talking about what are the things that i'm still seeing hunt teams struggling with and i think that this comes down into try to provide this you know transparent way to show your weaknesses to show your strengths a lot of people are trying just to you know do a check and say yes i can detect this

uh so that means that i'm blue or or green and things like that i cannot detect this and this is red that's not in my opinion the way how you should be doing it because you have to back up that information you have to support actually why you want to give that scoring capability so that's huge for teams right now i see also att&ck of course becoming the standard and i'm seeing a couple of teams also struggling with actually implementing att&ck and i think devon is going to talk a little bit about what actually att&ck is and then at the end of course you know i've seen also other teams that they don't even know where to start and

there's nothing wrong with that because even though you know we've been hunting for years right the concept of having your own team your own methodology that's technically new so it's pretty good to start now approaching it also with a different you know way that we're going to show you today and then when we start talking about effective hunting actually that's a term that i liked actually you know when i started you know sharing a couple of blog posts and things like that but we move to the next one basically anything is effective threat hunting over there right if you put a name in there a product or just any technique that you use it sounds badass

but actually what does that actually mean right and then after talking a little bit with actually cyber panda that's actually my brother by the way those that know about it we started actually defining a couple terms and these terms like um efficiency and efficacy it started kind of like you know arising from that conversation and then i started realizing you know what it makes sense we all focus on the efficacy i want to detect it i want to go i want to just get to it but how are we actually doing it so we are not actually focusing too much on the efficiency part of what actually makes the word effectiveness and this is what we're

going to be focusing on today and then when we start kind of like moving towards talking about efficiency and efficacy kind of a map to a hunting program or a conversation about detection that's when you start actually um understanding that you have to be aligning your defenses with a threat model you have to be efficient in order to know exactly what you're going to be defending against you have to be focusing a lot on the data that you have right is this the right data is my tool just giving me the data and i'm just gonna find evil so you have to start actually understanding if you actually do have the right data and also if you have the right people

skills if you have also the right technology and things like that and when we come down to efficacy then that starts making more sense to me because then i can actually support what is it that i'm trying to do so we talked a lot about uh definitions but we really haven't talked about where do you begin this process and kind of begs the question when are we going to start it's going to be a little bit because before we talk about where we went with this approach we really have to look at some of the history that's already been established and roberto is responsible for some of this earlier last year he introduced a blog

post about how hot is your hunt team which quantified how teams detect and respond which is you know taking into account the human factor and attempting to quantify that in a realistic way as well as a more recent blog post on data quality and that data quality assessment is is extremely important and something that i was just trying to share because i've had a lot of conversations with people in the industry you know great talks actually but something i want to just emphasize with these two blog posts is that i also asked that question to myself you know where do i start and and the first one pretty much reflects my first kind of like answer to it and

say why don't we just visualize this in a way that would be easier to also share with others that are not probably as technical and the second one was kind of like i started moving beyond just talking about data availability which pretty much we do this tool gives me this data so i'm good right so we're trying to go beyond that try to assess the data that we get so then we can start mapping it in with analytics and things like that something we're going to cover uh you know definitely this presentation yeah i promise we're definitely going to get to that but we're not going to get to it quite yet so first we have to

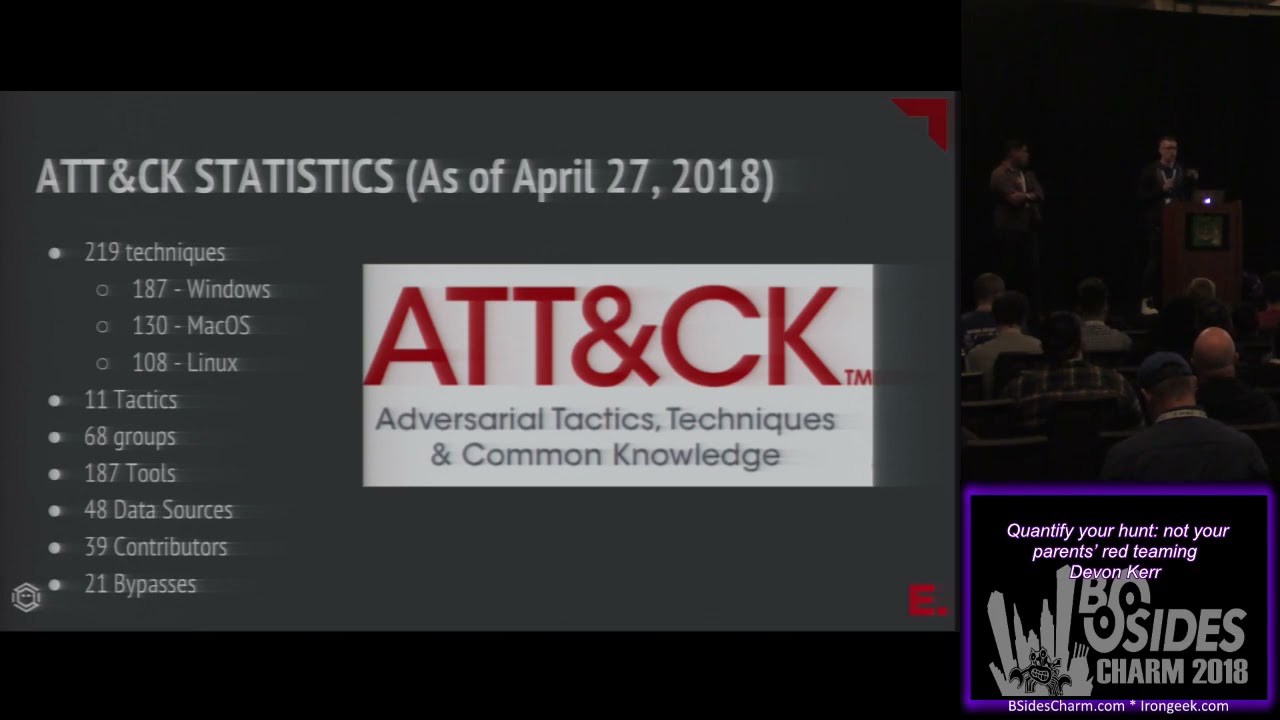

introduce enterprise att&ck and of course choosing an adversary model i like the way that mitre put this because a lot of folks approach att&ck and they think it's a process they think it's a thing that's just ready to be digested and it's not it's a knowledge base it's a really great knowledge base in fact i think it's the best available knowledge base of its kind and what it does it gives you resources so we thought we'd give you some notions of why we like it as a model well first it's threat agnostic and even though you can cross-reference some of this information by threat group it talks about these techniques discretely and when you look at

detections do you care if it's a nation-state using psexec or you know an insider who is abusing those controls well to a degree you do as a business but for purposes of developing analytics you probably don't care who the threat is it contains more than 200 techniques that are categorized into 11 different types of motivations and of course includes reference materials which means if you have analysts who don't understand these techniques it provides the material to educate them it also includes reference materials that are outside of mitre so you can follow those links read published reports some of those are going to describe specific applications of those techniques while others might give you a more generic methodology either from an

offensive or defensive perspective and again cross-referenced by threat groups if that's your thing i don't put a lot of stock in that but some folks really care and we don't we don't really differentiate between those use cases in this uh in this presentation to give you an idea of what a technique looks like here we've got an example of just one type of technique and you can see it's got some tactics which describe motivation tells you the os gives you uh the data sources these are the places where you need visibility to detect it not always every data source but there will be evidence in one or more of these uh for the technique and of course at

the bottom of this uh you will have links to various presentations which are helpful um by the numbers 219 techniques as of the april release and so they added uh several techniques uh they just had a tweet earlier today about where those changes were and of course this gives you just a little bit of information what's kind of interesting about this is 68 threat groups are associated with these techniques 39 contributors that includes external contributors so people in the community developing this material and sharing it with the rest of us so that's one of the reasons we think it's it's really valuable um now that we've talked about techniques you might think okay cool i can go and look at a technique we can

just get started i wish i wish we could um we really have to look at what pieces of this we can measure if we intend to measure against it so in the subsequent section we're going to really talk about our analysis of att&ck we analyzed att&ck to understand where to start the sources of evidence data objects and attributes moving towards a notion of like a common data model or a universal rosetta stone for these data objects timeliness of data data quality and consistency and of course we're finally going to get to guidelines for building analytics which i think is the part most people are very excited about all right and then when we started talking about understanding exactly what

you have right i don't know if you guys actually knew um you know raise your hand if you knew there was actually a mitre att&ck api site so you can actually go into and start getting more into the specific relationships between each part of each technique for example and that's how i started actually thinking about the whole data of mitre att&ck which is like what can i start now using and start mapping directly to something that i do have in my environment and i love graph modeling and i just started putting together a couple of connections based on also the attributes and the relationships of each specific data field in this information per technique so then we can

start actually mapping platforms to tactics techniques but something that i actually have not seen doing uh people doing much is actually then mapping it to data sources and i think that that's pretty much where we started actually thinking what is the smallest element that it's actually shared among all these other techniques and we started actually you know finding all these uh different data sources we actually uh started you know going through the numbers actually 48 that's what we said 48 data sources and you can actually go through the slides once we uh you know post it and you can see exactly what's going on but then the next step to me was actually now understanding

um how can i actually start mapping each data source and how many techniques i'm actually hitting and i think that that's pretty much where i started seeing the value when i've seen if you really want to talk about data sources and even tools the first step i want to know exactly what i'm covering i know that we want to jump into all these crazy nice and awesome analytics right that you want to build and you want to detect evil but then at the end i guess that this speaks for itself where you can actually start at least focusing now i understand that there are different names here for the data sources and some of them

actually are more related into just you know try to look into your alerts for example like if you go through all these different data sources you will find some words that in my opinion don't align directly to a tool but this is a good start and you can actually go through all of them and you can see once again those uh you know metrics so once again you know we don't just need data right but we need the right data and that's when i actually went back to the data sources and i started seeing some of them i said you know what i'm finding some relationships among these data sources once again mitre att&ck gives you this

information but they don't tell you exactly what you should be looking for exactly in your environment and i think that once again going back to the keynote today is that you do have a lot of data and you have to understand exactly what you're collecting in order for you to actually start using in my opinion the mitre att&ck framework so we go from those two and then um pretty much i just started thinking about what is it that i'm looking for when i talk about process monitoring and process command line that aligns perfectly with a process which we'll start calling a process object because as in object oriented programming you have an object and properties so in this

case would be a process with the attributes that i believe is what i need in order to start mapping things to these data sources and then after that it's pretty much where i started thinking more into all right i'm going to start creating my own data objects with my own attributes i need a data model and a data model basically it's going to allow you to um define the structure of your data and the relationships in your data mitre actually started a great project with the car analytics and they do have their own data model they actually started doing it from information from cybox which then migrated i think to be part of oasis i think that's how you say it in english

and in spanish it would be oasis but then you start actually now thinking that there is some type of relationships that i can find with this model and that's when i also started also building my own stuff based on the information that i had and without even looking into into the relationships i started looking at for example like in these three objects i do have a process attribute so without even like reading much about what could be related i can start then finding things that might be useful also not just for hunting but for incident response like what can i start using uh to start pivoting from my data and then i went to of course if you go to that link uh it's

actually the legacy website still from the stix project and that actually defines all the different object relationships and things like that it does have a lot of relationships so i encourage you to also build your own ones and make sure that makes sense to the data that you have and then um click on the next one so then what i did is i just went back again to my data sources and started to start testing this first idea and approach i pick process use of network and then i start actually now thinking what that actually looks like and of course makes sense a process right connecting probably to an ip connecting to to a url or a website so then if you

go back to the next one um then i started just aligning my process objects and then it started creating these connections once again i love to connect things so from a graph modeling perspective makes sense and then from there i say okay now i feel more comfortable saying exactly what i need from the tools that i have in my environment let's say sysmon if i use the sysmon event id 1 i can start mapping what is it that i need from a process object perspective and what i have so with that i started thinking about how complete is my data now and then if you go to the next one 4688 also gives me information about a

process but something that i started realizing then is that i do have these two tools right free stuff but then i realized that for example windows security 4688 doesn't give me a hash but sysmon does give me a hash so i start actually now defining what is it that i need what is it that i have what's complete and what actually might complement each other because information that 4688 provides might be information that i will need also probably when i start using sysmon as well so with this first i would say basic approach i start now thinking about what is it that i can measure with what i have in my environment from a data perspective
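
A minimal sketch of the comparison described here, assuming a hypothetical process data object. The attribute names and the per-event field lists below are simplified assumptions for illustration, not the speakers' actual data model or the full event schemas. It scores how much of the process object each event source fills in and what the two sources cover together.

```python
# Hypothetical "process" data object: the attribute names are illustrative
# assumptions, not the speakers' actual data model.
PROCESS_OBJECT = frozenset({
    "process_name", "process_id", "command_line",
    "parent_process_name", "user", "hash", "token_elevation_type",
})

# Simplified, assumed view of which attributes each event source populates.
SENSOR_FIELDS = {
    "sysmon_1": frozenset({
        "process_name", "process_id", "command_line",
        "parent_process_name", "user", "hash",
    }),
    "security_4688": frozenset({
        "process_name", "process_id", "command_line",
        "parent_process_name", "user", "token_elevation_type",
    }),
}


def completeness(required, provided):
    """Fraction of required attributes that a source provides (0.0 to 1.0)."""
    return len(required & provided) / len(required)


def missing(required, provided):
    """Sorted list of required attributes the source does not provide."""
    return sorted(required - provided)


def combined(sensor_names):
    """Union of the attributes provided by several sources."""
    fields = set()
    for name in sensor_names:
        fields |= SENSOR_FIELDS[name]
    return fields


if __name__ == "__main__":
    for name, fields in SENSOR_FIELDS.items():
        score = completeness(PROCESS_OBJECT, fields)
        print(f"{name}: {score:.0%} complete, missing {missing(PROCESS_OBJECT, fields)}")
    both = combined(SENSOR_FIELDS)
    print(f"combined: {completeness(PROCESS_OBJECT, both):.0%} complete")
```

Scoring each source against the same object makes it visible where the two complement each other, which is the point of defining the object before choosing tools.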

yeah so we've this has been really dense i know we covered a lot of stuff really quickly hopefully everybody feels like these are some commonly understood terms for data analysis what's kind of important about linking your sensors to data objects is not all sensors are created equal some are designed to be tamper-proof while others are easily hijacked and so you want to be aware of that because that availability is going to be something that becomes important later we're just talking about getting the the primitives defined so uh we talked about a lot of stuff uh what can i measure can i finally start writing techniques please uh we kind of have to talk about what type of data we have and what we

want to do with it um we're approaching this from the perspective of hunting hunting is a blanket term my perspective on hunting is that it is just one of many forms of detection it is not a special workflow we detect and then we deal with the things that we've detected so from a hunt perspective which is just one form of detection we could look at stuff like well i know i need some amount of data do i have the data that i need in general to begin this process based on those att&ck data sources then i could say break it down further if those apply to a specific part of my fleet do i have 100 percent of those data

sources on 100 percent of my windows endpoints or on 100 percent of the windows endpoint archetypes like servers workstations etc that i've defined yes or no this helps us to understand where we're going when we start developing analytics we can ask questions about whether we have visibility in the environment based on just things like retention if i have an sla an internal sla that i will provide detections within say a 30-day interval and i only have two days worth of data well i'm going to be completely unable to address that requirement and this gets a little bit into like the hazy area between authority and responsibility we want to make sure that we have the data because that's part of the way we

measure we don't want to measure based on can we just do it if we catch it within 24 hours because that's not the cycle we work on we have to know if that sla's 30 days we can cover 30 days we have to not give ourselves credit we haven't earned and similarly we have to look at things like talent and talent's a subject we're not really going to spend a lot of time on in this talk but we do promise eventually we will come back to it in a different presentation and of course data consistency which we'll define uh shortly data consistency from multiple sensors whether those are built into the operating system edr tools whatever we deploy

we have to understand the data that those sensors produce we have to use the data model to assess that so not all of these data sources are created equal we saw this kind of in the sysmon versus uh detailed process auditing example you are not going to be able to get these answers if you're using some commercial tools what i encourage you to do is work with your vendors your vendors know this they have schemas which define all of these things make sure you work with them to get those answers don't try and assess it independently that will be a very long and frustrating process now roberto borrowed this quote in his blog post on data

quality but i think it really establishes what data quality means in this category data are of high quality if they are fit for their intended uses in operations decision making and planning if you are unable to plan a hunt that is within that bounded period of time with the data quality that you expect then your data is is not sufficient to the task and then you have to work towards that goal so each one of these barriers that you encounter is a goal to work towards and it sets a pretty clear objective now to give you a standard for data quality the department of defense has this wonderful table that gives you uh the six properties for

assessing data quality now two things i like about this is i agree with all the quality uh descriptors all the attributes that are described here make sense from a hunt perspective the other thing i like is the example metrics are all provided in percentages because even though this is a quantitative methodology it allows us to give relative quantities for some of these values so it's not simply thumbs up thumbs down absolutes but it is sliding scales that we can work within and that's going to be really important once we start thinking about the bigger data quality paradigm and when you start talking about data quality also dimensions like the ones that you see in the table before

there are actually some of them that might be a little hard actually to start tackling first for example like accuracy like can you you know go to every single endpoint if you don't have an enterprise solution that would actually tell you hey by the way this data was tampered with and then you say oh my god there is actually something tampered with in my environment so certain things might be a little hard so pretty much what we started doing is actually starting focusing on things that are actually feasible right away actionable something you can go back and start doing in your organization so pretty much took those three data dimensions completeness timeliness and data consistency and then pretty much we started defining

how can that align now with our conversations about even starting a hunt engagement and the first one from a data completeness perspective that's where your coverage comes into place because now i do have all the data in my siem in my elk or anything or your splunk instance but now i want to know how complete that data is because now i want to start actually understanding the coverage in my environment from a platform perspective tool perspective and things like that second concept then it starts getting up you go back to the other one second concept is start actually now focusing still data completeness but with the example that i was showing you before with sysmon and also

uh security log 4688 where you can actually now start seeing how complete is that data that i'm working with based on these data structures that you define for your organization next one consistency and this is what i see in every single organization that i go you might have so much data you can tell me hey you're going to have so much fun once you get to my environment because you can hunt you can do this and that and when i get there i'm like i have to write a query that touches 20 different field names to just point and kind of like get information from one value so that to me is so inefficient because that is it's it's pretty much

you cannot just have somebody in your soc and your hunt team um trying to say and touch every single data source by the way nothing is consistent so you have to hit every single data field um you know naming convention that the company that you are using actually uses so i think that that's really inefficient and we're going to show you later how that actually impacts your hunt in the next one we talk about you can go to the next one as well we talk about data timeliness and this one um the way how is defined is you have to actually show how current your data is right but at the same time this aligns uh with the data retention piece

so important we're gonna show an example later but this is basically gonna focus into is my data getting to my uh to my elk or my splunk or anything is it getting in in a timely fashion that i could actually say yes i'm detecting it first in real time now that might not be as important for you but then from a data retention perspective then it comes into like if i'm gonna do a hunt how far back can i actually hunt for this critical especially when you have requests by either uh you know policies your company standards or even your legislation so that's also something you have to consider
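
A minimal sketch of the two timeliness questions raised here, assuming each event carries both the time it was generated and the time it was indexed. The function names and the sample values are hypothetical, chosen only to illustrate the 30-day requirement against two days of retained data mentioned earlier in the talk.

```python
from datetime import datetime, timedelta
from statistics import median


def ingest_lags(events):
    """events: iterable of (event_time, ingest_time) datetime pairs."""
    return [ingest - created for created, ingest in events]


def median_lag(events):
    """Median delay between an event occurring and reaching the data store."""
    seconds = median(lag.total_seconds() for lag in ingest_lags(events))
    return timedelta(seconds=seconds)


def retention_covers(oldest_event, now, required_lookback):
    """True if retained data reaches at least as far back as the hunt requires."""
    return now - oldest_event >= required_lookback


if __name__ == "__main__":
    now = datetime(2018, 4, 28, 12, 0, 0)  # arbitrary example timestamp
    sample = [
        (now - timedelta(seconds=90), now - timedelta(seconds=80)),
        (now - timedelta(seconds=60), now - timedelta(seconds=40)),
        (now - timedelta(seconds=70), now - timedelta(seconds=10)),
    ]
    print("median ingest lag:", median_lag(sample))
    # Two days of retained data cannot satisfy a 30-day lookback requirement.
    print("30-day hunt possible:",
          retention_covers(now - timedelta(days=2), now, timedelta(days=30)))
```

Keeping lag and retention as separate checks reflects the distinction drawn here: one tells you whether near real time detection is realistic, the other how far back a hunt can reach.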

this is a good reference basic page that you can actually then kind of like go through all these different points uh summarizing what we are trying to approach um you know the way we're trying to approach the measurement of a hunt with att&ck so if you look at data if you specifically look at the data in enterprise att&ck based on all these criteria you wind up with a heat map that's a little bit more complex than just green checks and red x's in fact what you're expressing here is both fleet coverage coverage within a fleet based on operating system data availability timeliness the quality of that data how well that data matches up to an

expected standard and we're not sharing the legend for this um this kaleidoscope of colors but be aware that this is the level of depth you want before you start planning to hunt for a specific technique because this informs your success don't start a hunt for a technique without any idea of where you're gonna go you might walk out the gate and find out oh well we've been uh required to go look for port monitors as a form of persistence and we have zero visibility into the registry of those endpoints we just don't have it so before you even begin you know you will fail you know that you will have to change and transform the

environment to be successful and that's why doing this assessment first is very very helpful now finally techniques are we going to talk about techniques this is att&ck it's 200 some techniques can we finally get to it well we want to really understand the data quality component first and understand how it influences success and failure so we've been talking about hunting and general detection and we wanted to define a couple of guidelines further for operationalizing this process one is things like keyword searches if you consider hunting to look for file names or ip addresses or hashes that should be automated that is a thing a machine will do error free which a human will probably

make mistakes with so automate that part still do it but use it as an input to normal alert based detection also understand that some techniques are better found in conjunction with other things mshta is a good example of this organizations use mshta a native windows file to pull down hta files maliciously these these might be full with uh you know script payloads but in a benign sense they are still used both internal assets as well as external assets so you might look at that and say oh well we see tons of mshta activity it's talking to some internal ip address it's pulling down a file doesn't look that strange and then you look at that endpoint and

other techniques are also being recorded within a relatively short time frame and all of a sudden you realize like oh yeah mshta was probably benign in the majority of those cases but now i can cluster it with other things related to this process like where mshta is the parent of windows script host executables all of a sudden you're realizing oh that is not right now i need to begin responding to this we also want to make sure that for folks who look at this as a quantifiable methodology probably don't want to rank techniques on some arbitrary scale of sophistication the way i'll summarize it is everyone uses psexec equally if that's the technique you look for within the

lateral movement category and that's all you know you don't know if that's a nation state an admin somebody who just downloaded sysinternals stuff and is just mucking about on the network we have to treat each one of these as if they are an even level of sophistication we also want to know which variations exist for a technique because you can't find a thing you don't know about now this leads me to powershell and powershell is my favorite example powershell is one of those cases where a tool is also a technique powershell abuse is incredibly detailed as most of us have experienced if you load up that system.management.automation dll with any other executable it is essentially powershell has all the

functionality of powershell if you look for powershell.exe as a basic process name you will probably find it but you will not be guaranteed to find all instances of powershell abuse and this does lead to another example where very recently there was a presentation about converting cmdlets from powershell into .net methods if you are looking for powershell abuse and you can't inspect .net methods you are probably not fully covering powershell abuse as a technique and that's one of those things where we can go back to att&ck and reference all those papers to understand every application of the technology this is a very detailed process and you probably won't squash a technique a hundred percent on the

first try but it gives you something to work towards um now we also wanted to share this this is a tweet from earlier uh last month uh or this month depending on what month it is uh and this this illustrates a really important point so if you're going out and looking for threat activity with something that's alert based it's gonna be tuned for low false positives it wants to give you true positives that's what it's designed for while special purpose hunting platforms are quite the inverse they need false positives because that's what the hunt methodology is for to look for both the good and to try and eliminate the stuff that is malicious so we can deal with those

threats and get them out of the environment if you're using the same technology to do both that will inform your success in this process a lot of organizations just buy one thing and then they stick with it but we have to be more sensitive to that as we try and appreciate the success and failure of this approach and something that i believe uh you know it's very important to remember i think that devon touched on it which is attack variations that actually is very important because every time i talk to somebody about measuring your hunt you know it comes down into hey but i can detect this so i'm good here i can detect this isn't

that and then it comes down into like okay that's one variation of the attack you cannot just give it a hundred percent if that's just one variation so measuring those variations is really hard because for example i think that you know that was mentioned in the keynote casey smith comes with something every day i mean at six a.m like he woke up and he just did something i'm like oh my god this is a new technique in here so now i have to kind of like readjust my probably metric if i'm saying i'm good if i can detect this specific technique so that's what i like to approach this from a data perspective give me

something that can support that like do i have at least the data that kind of started getting me more into uh start building data analytics actually so when we talk about data analytics it's pretty much now focusing more into first from a behavioral perspective mitre has again right attack based analytics which is going to actually categorize um how you can start building and you know categorizing how you want to uh you know just address this detection so we got all the way from behavioral situational awareness and then you can go back through because we didn't touch that for and then the anomaly outlier and of course forensics so that's gonna give you that room to start actually touching

different ways how you might detect also this specific technique and not just focusing on one query that will give me exactly that specific probably string that i'm looking for and then at the end um of course you know this slide is going to be uh you know in the slides we provide to you so you can go into more details in here but something that we touched today is basically the data quality piece as you can see like there is actually other pieces in there such as technology talent and we also touch a little bit of detection which we believe you have to be really careful and start focusing more into what is it that

i can actually see with my data okay so we've talked a whole lot about a lot of stuff uh we've gotten through about almost 70 percent of our content so i feel pretty good about that but we're going to talk about some specific examples because this doesn't become real until you actually start looking at techniques um so generally when we approach a single technique we want to build on that data analysis we've already done right if we know where those data sources are and our percentage of coverage and our percentage of the fleet some of these numbers fill themselves in and so we've already done the leg work now we can look at the technique and we

say well do we have all the data do we have the data from all the endpoints we care about do we have all the data from the endpoints we care about within that agreed upon interval whatever that is whether it's two years 90 days 27 minutes doesn't actually matter that's that's going to be unique to your organization we also want to know the timeliness if we ask to do a hunt we have to have the data we want to look at the consistency again each tool we use and we can pull this data from multiple data sources we want to make sure that those tools are formatting the data in a consistent way and then once we get through this we

have to ask questions like is our team actually capable of assessing this and if you're running a soc you kind of generally know what your analysts are strong with if one of these data sources involves say like packet analysis and you don't have a person who understands like the tcp packet structure that might be a barrier to success not necessarily a complete barrier but at least a hurdle and then you can start building analytics refining them over time and of course documenting this whole process documenting the technique the analysis steps uh false positives true positives everything that you can do to make sure that these workflows are more efficient and successful so what do we do to test well you're

going to need a couple things in your environment generally speaking you'll need a place that collects all this data so that we can begin writing analytics against it we'll probably need a couple of system archetypes which are going to be a little different from each other because every organization has different types of systems we'll need to know what those are they'll be part of a representative study we'll also want to know that we've got endpoint visibility enabled by something like maybe sysmon or osquery whatever you use just make sure it provides the data you need to address that technique we approached this presentation as a completely tech agnostic one we tried to reference uh open source and

free solutions but if you've got commercial stuff you still have to go through this process and again that's a great place to leverage your vendor you need to forward those events to that central location if you're going to do hunting and the data all resides on the endpoint that can be a problem those things are not always tamper proof and so for us the baseline is centralized data that also makes this a much more performant process and things like network metadata can be very helpful in fact some techniques require it and of course you'll need a testing framework or maybe a couple of testing frameworks because there are just so darn many of them now
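
A minimal sketch of checking that centralized-data baseline, assuming you can export a list of known endpoints and a list of hosts that have forwarded events to the central store. The function names, the 90 percent coverage floor and the 30 day retention floor are hypothetical and only illustrate the idea; pick the interval your own organization agreed on.

```python
from datetime import datetime


def coverage(fleet, reporting):
    """Fraction of known endpoints that have events in the central store."""
    fleet = set(fleet)
    if not fleet:
        return 0.0
    # Hosts that report but are not in the inventory are ignored here.
    return len(fleet & set(reporting)) / len(fleet)


def retention_days(oldest_event, now):
    """Whole days between the oldest stored event and now."""
    return (now - oldest_event).days


def meets_baseline(fleet, reporting, oldest_event, now,
                   min_coverage=0.9, min_days=30):
    """True when both hypothetical floors (coverage, retention) are met."""
    return (coverage(fleet, reporting) >= min_coverage
            and retention_days(oldest_event, now) >= min_days)


if __name__ == "__main__":
    fleet = ["ws-001", "ws-002", "ws-003", "ws-004"]   # asset inventory (made up)
    reporting = ["ws-001", "ws-002", "lab-999"]        # hosts seen centrally (made up)
    now = datetime(2018, 4, 28)
    oldest = datetime(2018, 4, 1)
    print(coverage(fleet, reporting))
    print(retention_days(oldest, now))
    print(meets_baseline(fleet, reporting, oldest, now))
```

Running a check like this before a hunt tells you whether a query that returns nothing means nothing happened or just that the data was never there.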

maybe you want to use multiple testing frameworks and even compare them against each other to see which ones more completely express a technique and this isn't about which is best that's what we're going to pick it's about making sure we can address the technique analyze the data and perform detection because that's the role so tools that help with this there are so many red canary's atomic red team is another great knowledge base with tons of resources mitre wrote caldera which does this exact thing uber has metta endgame released red team automation all of these things are a little bit different they're designed differently and they cover uh some of the same and different techniques but their goal is the same to provide

you with the material you need to detect adversary techniques and fortunately most of these don't do anything destructive they don't add anything to those system archetypes which are bad or cause consequences so using them is generally in your best interest now if we take one example i'll pick on install util because it's a simple one and it's one that's relatively easy to get our arms around let's just say that we've got a fictional organization with a thousand windows endpoints and nothing else flat network sysmon is going to give us hash information cert information process metadata command lines process use of network just in case we care about that and we can see that it only really

references process monitoring command line options as data sources so sysmon gives us that without any of these other configs but we might want to use that metadata in a couple other ways we also might have some netflow metadata at the switch level and some egress firewall visibility just again for completeness sake and we want to forward everything to something like helk which gives us one place that we can go and we can match against and of course once we start looking at the data we have some knowledge of what's available and what's not and that's when you start actually now then focusing a little bit more exactly into what is it that you have mapped to what

you have defined is the data that you need the attributes that you're looking for how complete is the data and then of course the consistency across probably other you know sources that you're using in order to start getting into the detection of that technique and of course also completeness comes down into i understand my environment i know that i'm getting pretty much every single endpoint in my environment and of course you know from a data retention we at least understand that what is it how far back we want to hunt in this space and once again this this looks like hey you know like this is easy and stuff like that but this this actually is a lot of work to start

defining what is it that you need what is it that you have and start actually doing some like access management asset management also on the left and inside that so i you know i'm one of the contributors to red team automation it's written by everyone in my research org but um install util is one of the scripts that we offer specifically for remote file copy so if we just look at what i did in this script which is the information on the right and the data sources that we mapped on the left i know i have a hundred percent of the stuff i need to write analytics for this technique and this is the exercise

we want to go through we want to have concrete information if any one of these was blank like maybe we have the process name but not the command line well then how am i supposed to know it's malicious because the command line metadata is essential for this particular data source and if you look at what this rta does just really quickly it sets up a local web server it's python based it takes about a second it determines the bitness because that determines which version of install util it will invoke and there are two for each bitness it will then run it with a connection to a local web server and pull down a benign executable and that executable is not

actually malware it's just meant to be representative so now i have a notion of what is happening behind the scenes that an adversary might also need to do and i have the data sources i need this is the place where we get a little bit closer to analytics now these are just examples here we have a behavioral example up front basically looking for a combination of install util as an original file name because install util could be trivially renamed so we'll look for its original file name as parsed from the pe metadata and we'll look at the command line containing a url like this might be a thing that's legit in your organization but you won't know that until you begin

developing these behavioral analytics and we might need to extend this to make it a little bit more tailored if we want to know the general use of install util from a situational perspective we'll want to know every use of install util and we might want to break that down based on common criteria like which account used it on which systems um what were the destinations that this that this thing was reaching out to this helps us to understand what's normal for our environment and even though these analytics answer different questions they're both analytics and you might want to have each of these analytics for every technique you can also develop outlier analytics basically it's the same as other analytics but

you're stacking those looking for least frequency of occurrence i would say based on something like the account or more appropriately the command line if that's the attribute you're looking for and then of course as a forensic analytic maybe you know that you've had an intrusion where this technique was used and you have some information about how it was used you can go look at other systems that had these same artifacts and now you're scoping an intrusion each one of these analytics serves a different purpose but they're all effective and we can measure them in similar ways and just before can you go back to the one that you're talking just before we move to now another example um it's

very important to understand how you know we talk about you know stacking techniques and things like that but so once again right coming down into if we have consistency then a stacking technique will make sense to me if i don't have consistency across my data sources i will have to do some crazy OR statements on my joins and try to make sure that it fits and i get one list only so those are actually you know real i would say examples and actually that has happened to me like i wanted to hunt and all i get is like this massive query with several different things because i cannot hit everything at once so then we start

you know you probably can start thinking yeah you know what i still am not convinced i see data quality but data detection and it doesn't it doesn't just click right there right so let me just give you one example i woke up actually that day like at 6:45 a.m i think and every time i wake up i just go to twitter and try to see exactly what's going on it's like my newspaper and then i saw this right here and this is exactly what happens in real world right you go into your organization for example uh yesterday actually at 3am uh because i was awake three years and a while that way but

um and casey actually released the other one uh using this specific technique uh wmi which is w me cats or something like that don't you mimikatz especially using this technique so have fun on monday because then somebody will actually ask you to start getting more into this area so let's say we pick this right here we get some information and then if we move to the next one then your team starts trying to create this crazy analytic if you have joe in your team man that'll be awesome having joe in your team so then with that pretty much he tells you you know what this is an analytic a behavioral analytic it's not a signature yet because this has not

been proved that actually can detect it so that's how we start thinking about let's think about the behavior first variation that to me is just one variation of the attack because this can be obfuscated you can change the name of the executable and things like that so just looking at this variation then i can start then go back to my environment and you go next and then you go up the other one and then you say oh all right couldn't find anything awesome that means that that didn't happen all right we're good and then you go back to the i'm sorry you go to the next one then you say all right i'm ready to

send my email and say hey man we're good don't worry about it all right nothing happened well good now i joined your team right and then i say hey wait a minute do we at least know how complete our data is like do we have coverage across everywhere you might be missing a couple things tell me please show me that you have completeness of your data and if you don't have that of course you know you won't be able to see exactly what happened if you go to the next then you say um can you go back to the other one sorry all right and in this one then you tell me because i didn't see the other red

lines in here but in this one i could now see you come back to me and say hey man i actually do have completeness from a windows security perspective so i actually should be able to look into that specific behavior that's what i'm mapping into something that might be compromised and boom and you still have nothing and you're like you see i prove it to you and i'm like hey wait a minute can you look just for wmic and you know 4688 i just want to see what you have and boom i just find wmic right there and this is like real examples actually if you go to the next one and then i can just pretty much

tell you okay this is weird because you were not able to show me anything with wmic before uh but if you look just for that into one specific log make sense and then you say hey man wait a minute all right i'm gonna show you that it was nothing the reason why the whole query failed is because i couldn't find the command line but i go i'm like okay dude come on the reason why your query or your analytic failed is because you did not have the complete data you might think that you were failing is because the analytic didn't actually match what you were looking for no simple you just didn't have the data so

you don't have complete data and if you go to the next one then you tell me you know what man i actually do have complete data so don't tell me that i don't i actually have almost 90 percent of my environment and then i actually have seven days of data cover you know retention in there and then i say all right let's just go for it again let's just look for it and boom nothing and then you say i prove it to you that is nothing seven days we're good and then we go back and say let's pull some archives and then let's go for you know 30 days and then you go to the next one and the next one

and then i can tell you that okay these actually happen in your environment it's just that you just for not paying attention to the basics i think that um you know other talks have actually expressed it enough as well where is that you have to understand what you have and in order for you to understand what you have you have to go through every single piece of data that you have and you have to actually start assessing this completeness this consistency and here we didn't talk about even consistency i just proved you that with timeliness and data completeness i can actually prove that analytic that probably you're executing your environment as a hunting engagement is not being

efficient you're trying to focus on efficacy but you're not actually thinking about the efficiency that is going to make your hunt campaign or engagement an effective one closing thoughts all right closing thoughts by jack handey um so here's the thing um as much as we love this topic and as much as we like dug into it we aren't really done um there are a couple of other ways that you could do this that you could approach it that are maybe um get you to the same place in a different time frame so some folks care about adversaries and so they might go to an adversary group uh as cross-referenced by mitre and then say okay well i wanna i care

about this group maybe you're a financial org you care about fin7 and you say show me all those techniques and i wanted to illustrate why this might not be as certain a bet as you think it is so looking at a group like winnti this is a chinese-based nation state threat group as an incident responder i responded to this group dozens of times so i know that the three techniques that are cross-referenced against this group are incomplete i know they do dozens of things and i know that they evolved over time to include tradecraft which blurs the lines between them and a couple of other threat groups so if you take this route how quickly

you cover the entire threat matrix of attack is going to be impacted but it also might not give you the certainty you think it does and that's an important caveat to give to leadership not necessarily among yourselves but you want to make sure that your leadership who is directing you down this path understands that these things are only as good as the information behind them so yeah you could build out analytics for each one of these techniques understand which os's they apply to and apply a data quality rubric to them like we've suggested and you will eventually get to a place where you have coverage all of these techniques are cross-referenced by one or more threat

groups so if you take the adversary focused approach you will eventually get there but we think it'll take a lot longer a method that we liked a little bit less than that is technique focused instead of doing the data quality assessment the data availability piece instead some folks feel like i just want to pick techniques and then just chip away at them and i got to tell you i've dealt with organizations doing this and i know that it leads to incomplete coverage it's an inefficient process but the things to be aware of is when you choose a technique then you go through the data quality piece and you will repeat the data quality assessment for every technique for every new data

source you're gaining ground but most orgs are not building out some baseline understanding of what data is available and what the quality is so it takes a longer time and i think it is one of the reasons why people have not developed broad coverage of this particular threat model and of course you know beginning the process of developing analytics for a single technique does not in any way protect you from other things which you've chosen to to pass down the road for some later point um so a couple of things that we wanted to revisit go ahead you want to talk about that uh so yeah we want to we wanted to do another presentation which picked up a couple of

the pieces we didn't have time for like each of those four types of analytics behavioral outlier etc it seemed to us like there's probably some evidence of bias between those analytics and data sources now every one of these threat models be it attack or something else has a bias for a certain type of data attack as we've shown has a bias for process-based eventing that's just a thing to be important if you choose a different threat model you have to understand the data bias it has because that's going to inform the sensors the data objects etc we thought there was probably a correlation there and we wanted to explore that data retention as a function of quality

and availability we wanted to figure out for some of these data sources is there a sweet spot and across all of these data sources is there an ideal we can point to which can also be like operationalized by a team of hunters we thought that would be an important thing to dig into um and of course uh talent the uh the great mystery how do we assess our human factors and that's something that we thought that we were in a position to assess is both having a lot of experience in that realm and then uh you know something also would like to to add to this you know once we start talking about also what are the different oh man all right

what are the different also data no data i'm sorry hunting models that you could use like the adversary one or the you know per technique i think that actually that should be called data driven model actually because that actually will define exactly what you're going to be hunting for at the end so i think that's also something to consider if you have a program where you have different names for the different type of hunting engagements that you have i would challenge you to probably start also looking into the data-driven model where you actually understand your data and then you start mapping that now with something that we were going to be talking soon is with you know technology

and you know people and things like that because all those correlate not just focusing on i can detect this and that's it so and with that at the end if somebody just wants to go back actionable items and you say you know what this is too much man this is going to get a lot because i now have to look into every single event that i have create my own data objects well i give you top 10 actually of techniques being used actually uh just pulling statistics from attack and please i'm going to repeat that again this is attack's knowledge base right the database that they have those are the techniques that they actually define as the most used techniques from

a data source perspective those are also the top ten some of them of course you will have to do a one-to-one mapping what i mean with that is that packet capture you can't just say i have completely all these data fields that i need so that's something you will have to also probably decide how you want to approach that but most of them especially with process monitoring file monitoring process command line right right there at top three you can actually start uh providing impact to your organization that means that process monitoring touched pretty much 149 uh techniques out of the 280 something i guess yeah anyone have a wild guess about how many techniques in attack require privilege

like sysadmin or greater ballpark so jared you want to guess require uh non-system privilege 116 techniques do not require admin at all which means if you are a least privileged organization this is still really relevant um and then just to wrap up um we did want to make sure that you guys had a one-stop shop for all the links that we talked about we will make the slides available so you will get all these links and we also want to thank you we know we didn't save a lot of time for questions because this was really this this slide we're going to give these out so we don't need pictures but also if you want to chat with us

we'll be hanging around and we'd love to engage with people who maybe approach this problem whether they've succeeded or failed yeah and just one last thing i think the project is going to be ossem it's going to be released tonight after a couple drinks so i i hope that i you know pull uh push the right uh pr i think to myself i guess uh and then that project is gonna have some basic information because that's a work in progress so i'm really happy to hear your feedback if that actually makes sense or not thank you very much everybody