Building a Security Data Infrastructure

Okay, good morning, everyone. I'm gonna go ahead and get this party started. Hi, I am Stephen Mitchell. I am an engineer here in the Western New York area, and happily so. I've been doing this for about 13 years, but I got my start in tech in the fourth grade, checking out the old Commodore 64 and Oregon Trail 2, I think it was. I was like, hey man, these computers are going somewhere. So I followed my dream right into pulling all of the token ring wire out of my school, and I'm talking the old 16-gauge, four-wire setup. That was fun, and it just

kept going. It's been an uphill journey ever since, and it's been absolutely wonderful. I really am pleased with the community that we're starting to build here, and I want to give another big shout-out and thanks to Mr. Matthew Gracie in the back. I'm a little disappointed he didn't bring the cape that we got him last year to wear around, because he is our superhero. I really do enjoy sharing my experiences, which is the primary driver for this talk today. With that said, welcome to my talk on security data infrastructure, or: how I learned to stop worrying and love my data.

Nah, this talk's for everybody, that's fine. So what do I mean by security data infrastructure? This quote really isn't hard-hitting or all that unique, but it means adding a layer into your security data pipeline, the way you pull data into your security tools, to fulfill all the use cases you have for your data. By security data I mean your firewall logs, your Active Directory logs, all of the items that you need to get to your tools and possibly keep for a long time. And by event stream processing tools I mean, effectively, ETL tools. These are your

extract, transform, and load tools: they pull data in and let you play with it before you send it out to your destinations, no matter what format it's coming in as and no matter what format it's going out as. Generally speaking, in my experience, which is the story I'm telling today, I had my producers of data and I had where the data needed to go, and I just connected the two together, and that was it. The problem was that there became more and more destinations, more places I needed to send my data, and I had a need for historical

data and the ability to put that into other tools, because we were testing some new hotness. This story is about my journey to find a tool that could really do that for me, and how excited I got about it over the last six months, so I figured I'd make a talk and share it with everybody. So how am I going to convey this without completely losing you? These last six months have been a drug-fueled fever dream for me, so I'm gonna try to keep this as organized as possible. First, I'm gonna tell you how I got here.

Then we're going to recap the problem statements, because I'd like to be clear and concise; otherwise I'll just meander off into nowhere. I'm going to talk about the tool I found and how it would have solved my problem, I'm going to show what else this thing can do, and hopefully I'll plant the seed in your head: hey, maybe I have this problem, and I can use tools like this to help me out. Then we'll talk briefly about some of the other tools that can help you on your journey, because there's not just one solution. Story time.

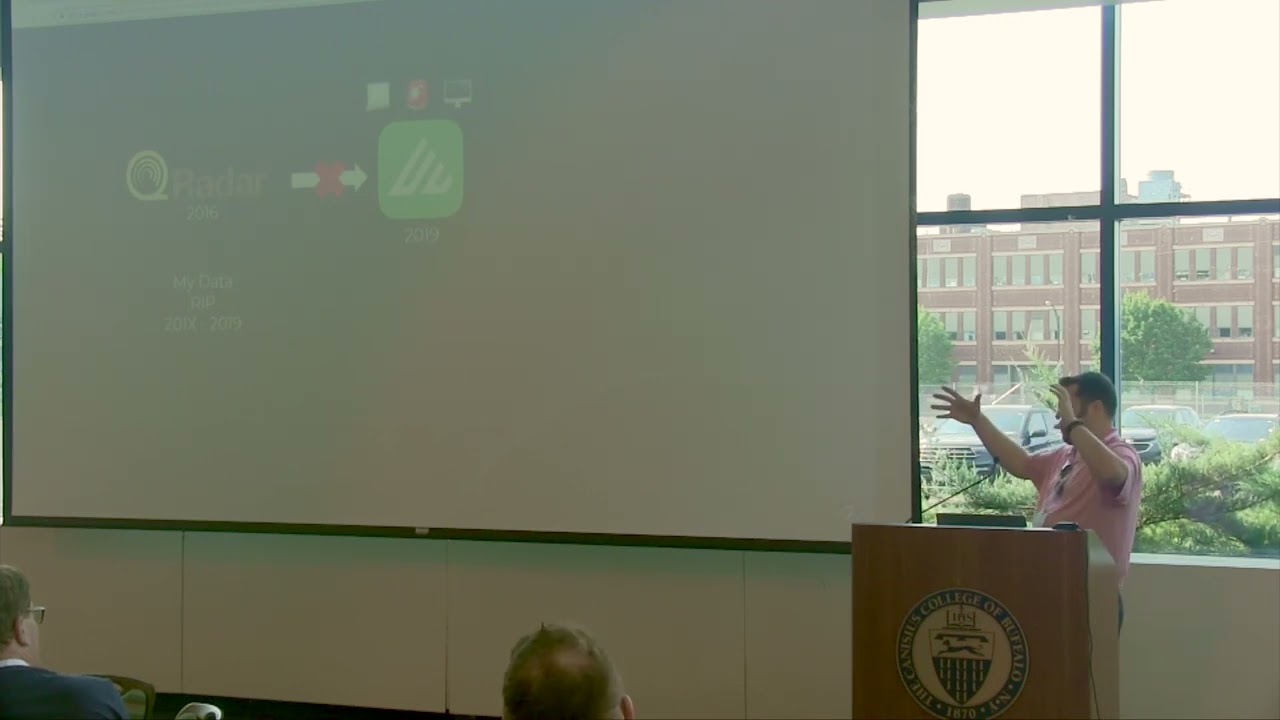

Some people in the room may be familiar with this story. Back in 2016 I got hired at Blue Cross Blue Shield of Western New York, lovely people, very handsome, and we were using IBM QRadar for our SIEM. I didn't get a chance to work with it very much, but we had all the bells and whistles: the alerts, logs, network traces, everything was going to this wonderful massive tool. Even though we had it all in there, all ready to go, it just wasn't great. It didn't satisfy our needs properly. It was slow, it had too much junk data in it, it

wasn't a cohesive product, because you'd reach out for a network trace, okay, let's get the network trace for this particular event, and it didn't quite work. Or maybe we just didn't know how to use it; I don't know what our problem was, and I don't want to remember, because we're moving on. Here's what did matter: we decided to stop paying the bill, so it was time to move out. We had to think about what was next, so we all sat down in a "what are we going to do now" meeting, and some really hard questions got asked. How are

we going to maintain the data that we have right now? We have regulatory needs to keep data for a set amount of time: we have to keep our identity and access management logs for X number of years, maybe not so much the network traces, and maybe our SIEM data too, just in case we have to look back. Say two months after we put in the new tool, somebody says, oh, we found out that Joe in accounting got a phishing email, and it got a lot worse than we thought. We would have to look back.

So we needed some of that stuff. And we have an audit to satisfy: if we move to a new tool and shut down the old one, how are we going to pull these logs for the next audit? Can we pull the data out of this tool, stick it in S3, and get it into another format so we can actually use it later? So we asked IBM, hey, can we do these things? Nope, your data's stuck in this tool. What do you mean, no?

I mean, we really need this data. Okay, so now we have to live with this tool, with our data inside of it. Do we keep this thing running for a year? How does that work with licensing? What are we going to do? It turned out we were locked into the system; we had to get a special license to ensure this thing would keep going. We set it aside and looked forward to the future, to our new tool, and we marched towards our new SIEM, which was Exabeam. Yes, security analytics, all right, but we were going to start from zero: all the machine learning, all the wonderfulness in this new wonderful tool

was going to take months to build up, and we were gonna have to shift all of our data providers to point into this tool and just wait and hope that everything was going to be wonderful. This particular setup was very similar to QRadar: we had a head unit, a big giant cluster of database systems, another machine to do some analytics, and another tool that acted as an ingest point to get all the data into the tool. So a whole bunch of boxes standing up in Amazon; let's just pay all this money for

this virtual iron. Here we go. Now we get a data lake, we get UEBA, we get data ingestion made easy. This is going to be fantastic, we're excited about it. So we get everything set up, we point our logs at it, and we wait. After about a year and a half we had UEBA. It turns out the data lake was fantastic for exploring data, but it really wasn't good at building SIEM rules, because it wasn't a SIEM; there was another product for that. So after a year and a half we're like, this stinks. Now what? Okay, we learned our lessons last time, so we hold

another "what are we going to do now" meeting. We're gonna make this happen. We sit down: we've got standards to keep, and we're going to keep all our data. Hey, Exabeam, we need to pull this data out and get it into a format we can keep for our audits later. And they said no. Oh, okay. So we can't migrate to the next system; we can't take any of the data that we put in there and move it to the next box. RIP. Oh boy. So I got put on the next iteration of this, and we were going to move out to a

new tool. Here we go again: we moved into Azure Sentinel, and we were going to start again from zero. Now we had to build actual detections this time, and repoint all of our data into this SIEM, get it all set up, and bingo, we're in business. And now we paid Microsoft for ingestion, which was a new concept for me at the time. I had not had the pleasure of working with Splunk or products like it, and this is very much in that vein. I didn't even know we had 200

gigabytes' worth of logs coming in. Thankfully the Microsoft 365 logs were free, so that's super cool, but we set it up and we were seeing 200 gigs a day, and I thought, wow, that's a sizable amount of money. The good news: we had retention now, the ability to keep data long term, separate from the tool. I was able to set up an export to Azure Blob Storage, so after 90 days of living in the tool the data would just gracefully pass on to the next life in storage, and we would be able to

create our own data lake in object storage. So if we wanted to go back in time, conceivably we could pull that data back out, put it into the tool, and work our query magic on it. Sweet. But there's a whole bunch of logs that probably aren't necessary for SIEM purposes, like all those Palo Alto allow logs that are super chatty, especially with all the internal communications. I probably should take a look at that and see if we can tweak the tool and turn off those logs. How do I do that? I don't know; never found out. I got put on another project

and left the company, happier there. RIP. That's a lame story, I said to myself. Has anybody else experienced something like this, switching tools? I love you guys; you absolutely count. It was frustrating to me, and I would sit up in bed on long nights thinking, how could I have made this better? All my data was trapped in vendor tools. We were taking in more data than we needed to. Come to find out, SIEM solutions are expensive. And we had to fiddle with our tools every time we

had to change. That's okay, that's what I'm here for. Another thing: we couldn't query our data long term. We couldn't take a snapshot over a matter of years and ask ourselves, what is normal for us? What happened on July 16th, 2017? I couldn't tell you. We were putting our data into proprietary tools, and the data was locked there, so essentially our data wasn't ours anymore. I was giving it away to somebody else, just like I do with my cell phone and my entire personal life to Google. Thank you, Google. So what could I have done? How would I make this better? I would have these feverish

nightmares and see charts in my head, because I had a very wonderful architect who made really great charts, so I tried to emulate them. How would I have done this? How do I make this happen? Ideally, I'd be able to take my data in from all of my sources (bonus points for anybody who gets the movie references buried in the text here; that might be hard to say). How would I take the data in, parse it, get rid of the stuff I don't want, and reduce the size if I can? For example, the Windows event logs, the XML logs, are doubled up; they're doing double duty. So

how can I shrink that up so just the vital data gets to my SIEM? Again, the Palo Alto logs I was using had a whole bunch of superfluous nonsense in them that nobody's ever going to use in a SIEM. How do I get rid of that stuff before it gets to my SIEM, so I'm not paying for it in ingest costs? And I have to be able to take data in from everywhere: I've got stuff in Johannesburg, South Africa; I've got stuff in Black Mesa, New Mexico; and all my cloud

and SaaS tools. How do I hit those APIs, especially for the SaaS tools, on a consistent basis, pull that data in, and have a tool manage that for me? Okay, thankfully Lambda can do that. But how do I then work on that data as it goes through, and how do I send it out to my SIEM and also out to cloud storage? Because I want to keep an extended period of data in object storage and build my own data lake that I can query with Amazon Athena or some other tool that can rip right through all

the files, pull the data out, and present it to me. And how do I then get that data out of cloud storage and stick it into that new hot tool we're testing? Even in my time at Blue Cross Blue Shield we tested five or six new tools, and it would have been absolutely amazing to take all the data we had for certain things, endpoint data, firewall data, take a year's worth, say here you go, and dump it all right back in. Then the tool is immediately effective: you let the reactor come online and the

heat sink glow red, so it does all the machine learning magic, but it goes from zero to effective in no time at all. And it has to satisfy the requirements I have today: it's got to be able to receive and ship data, and I've got data from everywhere. Also, very importantly, keep costs as low as possible, particularly with ingestion to the SIEM but also going to cloud storage. If I can compress the data before shipping it off to cloud storage, yo, winning. How do I make all of this work, and also make sure the system stays running, is fault tolerant, and is hooked up to the monitoring team? Because I don't want to

be waking up at 3 a.m.; I just want it to reboot and heal itself, because I'm lazy. How do I make all this happen? I started trying to find solutions and talk this thing out, and by the time I had gotten to the end of it I had no answers, and I looked like Charlie from Sunny, and everybody was looking at me like, are you okay, Steve? I know you're not okay normally, but are you okay? No. I don't have a whole dev team at my command, and I know the problems that I'm experiencing

aren't unique, so there has to be a solution out there to take care of this. But by the time I was able to look at it, I had moved on to the next life, with all of this unfinished business, and I was waiting for Jennifer Love Hewitt to come save my soul and set me free. I waited, and about a year and a half later the solution appeared to me and said, yo, check this out. Where? At the Rochester Security Summit. I see this tool, and I'm like, oh, this is

terribly interesting. What's going on here? I'll tell you what's going on here. It's a tool that you stick in front of your destinations, and you send all your data to it, no matter what format it's in. It streams the data and chews it up: it filters it, modifies the event data as it comes through, and has the ability to dynamically route all of your data to where it needs to go. It can also create metrics out of that data as it comes through, all at wire speed, sending it right through to any destination they support, and that's a lot.
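To make the routing idea concrete, here is a minimal Python sketch of an ordered route table; it is a hypothetical illustration, not the product's code or API. Each route has a filter, a pipeline, and a destination; a matching route consumes the event unless its `final` flag is off, in which case a copy keeps flowing to the routes below it, which is one way the raw-to-S3 plus slimmed-to-SIEM split described in this talk could work. The route names, `sourcetype` values, and destination labels are all assumptions.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional, Tuple

Event = dict  # one event, e.g. {"sourcetype": ..., "_raw": ..., "host": ...}


@dataclass
class Route:
    name: str
    matches: Callable[[Event], bool]              # the route's filter expression
    pipeline: Callable[[Event], Optional[Event]]  # returns None to drop the event
    destination: str
    final: bool = True  # True: stop at this route; False: a copy continues downward


def passthrough(event: Event) -> Event:
    """Pass-through pipeline: don't touch it, just ship it."""
    return event


def slim_for_siem(event: Event) -> Event:
    """Hypothetical reducer: keep only the fields the SIEM actually uses."""
    return {k: v for k, v in event.items() if k in ("_raw", "host", "sourcetype")}


def route_event(event: Event, routes: List[Route]) -> List[Tuple[str, Event]]:
    """Walk the ordered route table; return (destination, processed event) pairs.

    Events that match no route produce no output in this sketch.
    """
    outputs = []
    for route in routes:
        if not route.matches(event):
            continue
        processed = route.pipeline(dict(event))  # each route works on its own shallow copy
        if processed is not None:
            outputs.append((route.destination, processed))
        if route.final:
            break
    return outputs


def is_pan(e: Event) -> bool:
    return e.get("sourcetype") == "pan:log"  # hypothetical sourcetype value


ROUTES = [
    # Raw copy to the data lake; final=False so the event also reaches the next route.
    Route("pan_raw_to_s3", is_pan, passthrough, "s3_datalake", final=False),
    Route("pan_to_siem", is_pan, slim_for_siem, "siem_syslog"),
    Route("audit_to_s3", lambda e: e.get("sourcetype") == "audit", passthrough, "s3_datalake"),
]
```

With these routes, a Palo Alto event lands twice (full copy in the lake, slimmed copy in the SIEM), an audit event lands only in S3, and anything else falls through.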

More specifically, the tool takes in data and uses the concept of a security data pipeline, or data pipeline, or observability pipeline; it is technically an observability tool, coming out of that space. You've got your sources, and you can pre-parse them, maybe normalize them, before putting them into the tools. The tool has a concept of routes that says: hey, I've got some data coming in, and if it matches this particular JavaScript expression or filter (hey, it's firewall logs), do something with it. If it's Windows logs, send it to this pipeline over here; if it's Azure AD logs, send it to that pipeline over there. It evaluates an ordered list of routes and sends that

data through, chews it all up as you prescribe, and sends it out to your destinations. Truly, all your data infrastructure is a series of tubes; thank you, Senator Ted Stevens, you nailed it. So how would this tool have looked had I found it and stood it up? In my case back at Blue Cross Blue Shield, I would have said, hey, listen, I've got a whole bunch of stuff that I want to reduce and slim down before I send it to my SIEM, so I have some routes at the top there. I then have some matching routes to say, hey, listen, I want the raw logs that I

can use later; I want to send them to my data lake up on S3. Okay, sweet. I've got an audit log that I really don't need in my SIEM; maybe I don't have a use case for it there at this time, but I kind of want to keep it for later just in case I do, so I'm going to send that just to S3. Later I'll show a replay, where I can take data out of S3 by time and send it to a new tool. In my case I don't have a route for sending Office 365 logs to Sentinel

because that was free. Winning. So you don't have to use it for everything. Let's take a look at what this looks like more technically. Here's our list of routes, and today we're going to focus on the two in the middle; I have a little bit of zooming action here, if I can find the mouse, to help out. We're going to talk about sending data raw to S3 and then sending it to the SIEM as well, and we're going to stick with the Palo Alto model to focus in. This is what the routes look like; it's a little low

contrast, I apologize. But again, it's an ordered list: you set filters, you say hey, I want data that looks like this, send it to these pipelines, and these pipelines then send it off to a particular destination. Here we have my SIEM syslog, and here we have an S3 bucket, and we're gonna send all that stuff through. What you see here at the top is a pass-through pipeline, which means do nothing, don't touch it, just pack it up and ship it. And I have pipelines, which are ordered lists of functions that masticate the data, do what they need to do, and then ship it off to

a destination. And then I have a collection of pipelines called a pack. Palo Altos produce complex data; there are a lot of different data types within Palo Alto logs, so I need to get a little more fiddly with it and have a set of routes for Palo Alto data that then sends the data off to my syslog destination. What does an S3 destination look like? Well, it's actually quite lovely. You give the name of the bucket, and you give a key prefix, which will be the first step in the object key, so when you want to go pull it back out you know where to

find it in your S3 bucket. And you set what I thought was really neat, this partitioning expression, which was new for me: you set a dynamic path. Here I have it set based on time. I just said, listen, give me the year, month, day, hour, and minute that these logs were pulled in, make a folder path out of that, and send the object there. You can get really fiddly with this. Let's say you have multiple firewalls: you can say, depending on what the source is, create another folder. You can do this if you have a small environment

and don't have any cardinality issues. I learned the term cardinality, and I won't explain it here, because it seems to me like very complex math and everybody's noses are going to bleed. You can partition by hostname: maybe it's endpoints, you want to know what happened on a specific host, and you can pull that data out of your S3 bucket just for those hosts. You want to get very specific when you're querying data back out, and here I'm setting up that data lake for later, where I can pull data back out specifically with a query. And then the most important part, the one that's going to save us

money: compress with gzip before you send it. Awesome, I'm excited. We also have backpressure behavior: just in case the destination gets a little wonky, the tool can hang on to the data for you before it gets sent. Very nice, so you're not losing any data on the fly. Let's take a quick look at the Palo Alto stuff. As I said, this is all ordered lists, so if a route matches, it sends the data out and stops; that's it, it's over, unless I turn off that route's Final flag so I can work on the data more than once, which is very convenient. So what we're going to do is pass

Palo Alto log data through this route here, and it's going to flow to the next route right below it, where we finish working with the data and drop those events as they come through. Again, it's just one of my use cases. Let's check this out. This is a pack, which is another set of routes, each with its own pipeline, because I want to handle each type of Palo Alto log differently: the traffic logs, the threat logs, the system logs. I want to treat all of these differently, so I have different pipelines for each,

because it's different data and it's formatted differently, so I need separate pipelines for those. I'm going to take you through one of these pipelines, the traffic log, to give you an idea of what's possible. A pipeline is an ordered list of functions: step by step, you work with the JSON object of the event data that you have. Everything behind it is JavaScript, so if you know JavaScript, great; if not, that's fine too. You're able to work with each of these fields, and there is a dazzling plethora of functions to do stuff with.
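A pipeline in this sense is just an ordered list of functions over a JSON event. The Python sketch below is a hypothetical stand-in, not the product's function library: events are dicts, a step that returns `None` drops the event, and `eval_host_from_raw` mimics the Eval step the talk describes next by re-reading the hostname from the original syslog header instead of trusting a relay. It assumes an RFC 3164-style header (`<PRI>Mmm dd hh:mm:ss HOSTNAME message`), which real devices may not send; the function names are illustrative.

```python
import re
from typing import Callable, Iterable, Optional

Event = dict
Function = Callable[[Event], Optional[Event]]


def run_pipeline(event: Event, functions: Iterable[Function]) -> Optional[Event]:
    """Apply each function in order; a function returning None drops the event."""
    for fn in functions:
        event = fn(event)
        if event is None:
            return None
    return event


# Assumes an RFC 3164-style header: <PRI>Mmm dd hh:mm:ss HOSTNAME message
SYSLOG_HEADER = re.compile(
    r"^<\d+>(\w{3}\s+\d{1,2}\s\d{2}:\d{2}:\d{2})\s(\S+)\s(.*)$", re.S
)


def eval_host_from_raw(event: Event) -> Event:
    """Eval-style step: take the host from the original header, not from a relay."""
    m = SYSLOG_HEADER.match(event.get("_raw", ""))
    if m:
        event["host"] = m.group(2)
        event["message"] = m.group(3)  # body without the syslog date/time and host
    return event


def drop_when(predicate: Callable[[Event], bool]) -> Function:
    """Build a step that drops events matching `predicate`."""
    return lambda event: None if predicate(event) else event


traffic_pipeline = [
    eval_host_from_raw,
    drop_when(lambda e: "message" not in e),  # hypothetical: discard lines we couldn't parse
]
```

Keeping each step a plain function makes the ordering explicit and lets you test steps one at a time, which mirrors how the talk walks through the pipeline function by function.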

So many Legos to play with; it's very exciting. The first one here is an Eval function that pulls out a hostname. Let me zoom in here and give you a little bit better look at it. This use case also assumes that we're working with Splunk, because this tool and Splunk have always kind of gone together, maybe a little too close. So we're going to want to build a Splunk-formatted message, and I'm going to pull the hostname out of the raw field. These were pulled in via syslog, so at first, in the JSON

object, the entire message is in _raw, and then Cribl automatically pulls all of the syslog items out into fields. Since my syslog data may be forwarded from something like syslog-ng, the hostname may not be correct, so I have to correct that: here I pull the proper host out of the raw data and stick it in the host field. I also take the date and time out of the syslog message, because I'm going to be sending it again and I don't want that in the syslog message later. And then I set some other fields

conditionally to get it set up for Splunk. Next, I take what's in the _raw field and parse it out: I pull all of the data out of the _raw field and put it in the root as JSON fields, and now they're all nice and easily worked with. I can do that because I know what a PAN log looks like, so I'm able to list the fields in the order they appear in this CSV. I give the order of the fields, and it pulls them all out, named for me, ready to rock. And it pulls the data out no matter

whether the field has a value or not; there might be a couple of spots here where there isn't any data at all, and it will still pull those out and make the field null for me to play with. Wonderful. We do some other stuff with Eval and other functions here that's too boring to talk about, but then we get to Auto Timestamp, which is super neat. I can say, hey, look in the _raw field, or in the generated time field, which is down here. Look at this date, which is in a kind of

full date format. I want it in epoch format, which is Unix time. So with a little strptime-style format string (the same directives you'd use with Python's strptime), I give the format the time is in, and it produces epoch time for me, automagically. I like automagic; it gets me home on time for dinner. Now we enter a little bit of a danger zone, because I start sampling logs. I have limited experience here, and what experience I do have is questionable. Sampling is the ability to collect one out of X number of

events. So instead of ten of the same two-kilobyte log, I can just collect one, and it'll keep doing that as they come through. Let's say I've got a really chatty rule: I've got a CSO who's always hooked up to his personal Access database off on a network share somewhere, using it all day, and I just don't want to collect all those logs. So we're going to sample those. Based on a filter, I can say, for this particular function, if the rule is Splunk, look for where bytes in is zero and action is allowed, and I want

you to sample that at a rate of five, giving me twenty percent, shrinking all that down and reducing my cost: only one out of five gets sent to the SIEM. And you get a cool little sampled field that says, hey, I sampled this at a rate of five, so when your network folks ask, oh man, how hard did the CSO hit that Access database today, we can still count that. Then we do some other boring stuff, and then some cleanup: now we're going to prepare this message to transmit, so I say, hey, I've done all this work on these fields.
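The CSV parsing, auto-timestamp, and sampling steps described above can be sketched in Python as follows. This is an illustration under stated assumptions, not the product's implementation: `PAN_TRAFFIC_FIELDS` is a hypothetical, heavily truncated field list (the real PAN-OS traffic log has many more columns in a vendor-documented order), the time format `%Y/%m/%d %H:%M:%S` and the UTC timezone are assumed, and the `Sampler` keeps every Nth matching event deterministically, whereas a real sampler might pick randomly.

```python
import csv
from datetime import datetime, timezone
from typing import List, Optional

# Hypothetical, heavily truncated field list; the real PAN-OS traffic log
# has many more columns in a vendor-documented order.
PAN_TRAFFIC_FIELDS = ["future_use1", "receive_time", "serial", "type", "subtype",
                      "future_use2", "generated_time", "src_ip", "dest_ip",
                      "action", "bytes_in"]


def parse_csv_fields(event: dict, fields: List[str], source: str = "message") -> dict:
    """Name each CSV column by position; empty or missing columns become None."""
    values = next(csv.reader([event.get(source, "")]), [])
    values += [""] * (len(fields) - len(values))  # pad short lines so every field exists
    for name, value in zip(fields, values):
        event[name] = value if value != "" else None
    return event


def auto_timestamp(event: dict, field: str = "generated_time",
                   fmt: str = "%Y/%m/%d %H:%M:%S") -> dict:
    """Parse a strptime-formatted field into epoch seconds (assumes UTC)."""
    if event.get(field):
        dt = datetime.strptime(event[field], fmt).replace(tzinfo=timezone.utc)
        event["_time"] = int(dt.timestamp())
    return event


class Sampler:
    """Keep 1 of every `rate` events matching `predicate`; others pass untouched."""

    def __init__(self, predicate, rate: int):
        self.predicate = predicate
        self.rate = rate
        self._matched = 0

    def __call__(self, event: dict) -> Optional[dict]:
        if not self.predicate(event):
            return event
        self._matched += 1
        if (self._matched - 1) % self.rate:
            return None                # sampled away
        event["sampled"] = self.rate   # lets counts be scaled back up later
        return event


# CSV values are strings, so compare against "0" rather than 0.
chatty_allow = Sampler(lambda e: e.get("bytes_in") == "0" and e.get("action") == "allow", rate=5)
```

Stamping the kept event with its rate is what lets someone still estimate the original volume: multiply each kept event's `sampled` value back out.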

I've cleaned up, and now I want to clean up everything I don't need, so I say, remove all of these fields I'm not going to use; just get rid of them, bye, I want them out. And then serialize all that data back into _raw. What I have here is all the data that I need, and a whole bunch of stuff I don't need is gone. This is the data that gets sent to the SIEM: nice, clean, efficient. How much space did we save with this very rapid, hour-long exercise? We took a one-and-three-quarter-kilobyte log and made it less than 1 KB,

reducing it by 55 percent for my Palo Alto logs. Of course, that's a small sample size; you really want a large sample size to get better data, and they have a monitoring tab that shows you how much you're saving over time. But just for traffic logs, I'm shrinking them down by 50 percent, and that's pretty dope. If I'm kicking out 100 gigs a day, I'm shaking it down to 50. That's awesome. And that isn't even counting anything I sample, which, again, check with your legal counsel and make sure you do that sparingly and with very well-defined use cases. You don't want to lose fidelity, but

then I'm just not sending logs that I don't need. Anybody have a SIEM that they pay per ingestion for? All right, one, two, okay, three, yes, four, five. Does anybody want to reduce the volume of logs they're paying for by 50 percent? Yeah, obviously. There are some edge cases where you're only going to get 10 or 20 percent, and that's fine; some are super huge, depending on the log that you're kicking out, so your mileage may vary. But being able to reduce ingestion costs is super dope. Now we're gonna do a quick scene change, since we're running low on time.
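The cleanup-and-serialize step and the savings arithmetic discussed above can be sketched together in Python. This is a hypothetical illustration: `serialize_to_raw` writes the kept fields back into `_raw` as a single CSV line (the product can serialize to other formats too), and the helper names are assumptions, not the product's API.

```python
import csv
import io
from typing import List


def serialize_to_raw(event: dict, keep: List[str]) -> dict:
    """Drop every field not in `keep` and write the survivors back into _raw as one CSV line."""
    buf = io.StringIO()
    csv.writer(buf).writerow(["" if event.get(f) is None else event[f] for f in keep])
    return {"_raw": buf.getvalue().rstrip("\r\n"), "host": event.get("host")}


def reduction_pct(before: str, after: str) -> float:
    """Percent of bytes saved going from `before` to `after` (UTF-8 lengths)."""
    b, a = len(before.encode("utf-8")), len(after.encode("utf-8"))
    return 100.0 * (b - a) / b


def volume_after(volume: float, pct_saved: float) -> float:
    """Volume left after saving `pct_saved` percent, in whatever unit `volume` uses."""
    return volume * (1 - pct_saved / 100.0)
```

With the talk's numbers, `volume_after(1.75, 55)` is about 0.79 KB per log, and `volume_after(100, 50)` is 50 GB per day, before any sampling. As the talk notes, measure `reduction_pct` over a large sample rather than a single log.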

So we talked about the everyday; now we're going to talk about that new hot POC we're doing. Let's say we've got a whole bunch of Winlogbeat data that we want to pull in. I set up my collector: a collector job that's going to hit the S3 API and pull back all the data I want based on a time frame. I give it a little bit of JSON, a little bit of JavaScript magic, to declare how the folder path looks, and then say, based on the time that I give, here are the years, here are the months, here are the days, and such.
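Both halves of the S3 story, the time-partitioned, gzip-compressed write described earlier and the time-range replay here, can be sketched in Python without any AWS calls. Everything here is an assumption for illustration: the `prefix/YYYY/MM/DD/HH/` layout (the talk's partitioning expression went down to the minute), newline-delimited JSON as the object format, and the function names. Each prefix from `hourly_prefixes` is what you would pass as the `Prefix` of an S3 list call (for example boto3's `list_objects_v2`) before downloading and unpacking the matching objects.

```python
import gzip
import json
from datetime import datetime, timedelta
from typing import Iterator, List


def object_key(prefix: str, ts: datetime, batch_id: str) -> str:
    """Time-partitioned key like 'winlogbeat/2023/07/16/22/batch-0001.ndjson.gz'."""
    return f"{prefix}/{ts:%Y/%m/%d/%H}/{batch_id}.ndjson.gz"


def pack_batch(events: List[dict]) -> bytes:
    """Serialize events as newline-delimited JSON and gzip them before upload."""
    body = "\n".join(json.dumps(e, separators=(",", ":")) for e in events)
    return gzip.compress(body.encode("utf-8"))


def unpack_batch(blob: bytes) -> List[dict]:
    """Reverse of pack_batch: decompress and parse one event per line."""
    text = gzip.decompress(blob).decode("utf-8")
    return [json.loads(line) for line in text.splitlines() if line]


def hourly_prefixes(prefix: str, start: datetime, end: datetime) -> Iterator[str]:
    """Yield one key prefix per hour in [start, end) for a time-based replay."""
    t = start.replace(minute=0, second=0, microsecond=0)
    while t < end:
        yield f"{prefix}/{t:%Y/%m/%d/%H}/"
        t += timedelta(hours=1)
```

Because the partition path is derived from time, a replay of "the last year" reduces to listing a predictable set of prefixes instead of scanning the whole bucket; adding a host or region segment narrows it further, at the cost of more prefixes.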

Then you would also include anything else you partitioned on: your machine names, your hosts, your regions, whatever else you used to carve that up. And you can also do a little recursion, because things get messy. Then we get a cute little screen where I can run a preview and give it a time range: give me the last 12 minutes of this data, give me the last year. For a preview I'd want the last 15 minutes or so, so I can make sure the format comes in correctly, and it gives you a nice little preview

screen. Then when you're ready to go, you just say, give me the last year, and play it into the new tool. You do have to create a route in your routing table that matches this and says, hey, listen, if you see Steve collect his personal Winlogbeat stuff, capture that and send it to the new tool, which for my test purposes is S3. It'll just pull that data out and ship it. You can also transform it as it goes through, just like any other route, in case you need to prepare it a

certain way for that new tool. The process takes like 15 minutes; super awesome. I did this at home: I grabbed all my Winlogbeat data, because I wanted to play with Winlogbeat, and I shipped it up to S3. So I said, okay, now I have this data lake of stuff, how do I query it? I also played with Cribl's new tool, Search. It's the same idea, except it pulls in the data and is then able to visualize it for you based on the KQL that you write here. So I said, all right, let me take a look. I'm seeing all this

data come through. I want to know how much of this data is duplicating: is there something that's super chatty, giving me all these Windows logs? So I said, hey, give me a query. Let me check Sysmon; what's Sysmon saying? I want the Winlog data, I want the image, the file that's generating the most logs, where the count is greater than 50 for the time period I'm querying. Let me go back here. And then give me an ordered

set. So I got this data back, and it says, hey, listen, you've got 391 logs, and you have this massive one, 349 logs, for the very small period of time that you're looking at. Okay, well, what's doing that? I took a look at the technique: what technique is it doing? I made a query, and it says, hey, it's doing service creation over and over and over again: out of the thousand logs I pulled out for you, Steve, 940 of them are for service creation. What the hell is doing that? Windows Image Acquisition. So the Windows scanner service is repeatedly creating a service. Weird. But I was able to find that in

just a few minutes, just like working with any other SIEM. I was able to figure it right out, and the queries take seconds, six or seven seconds for this one, to pull all the data in and present it to you. I was like, "That's weird and silly, and I probably should try to fix that." Maybe I'll Google it sometime when I have time, when I'm not paying attention to my wife and kids. But it was just magic; I loved it. Had I had this earlier, I would have been so happy. But now I'm able to share this with you and be happy and wonderful. What else can

this thing do? Output routers do dynamic destinations. So if you've got regions, let's say for your S3, and you want to keep data local to each region while using the same rule set, you can say, "Hey, as the log comes in, what region am I in? Where is this log coming from?" and send it to that local region. You can do lookups, just like you can up in your SIEM, and enrich the data as it flows through, should you have the need. One of the really neat things that I liked, which I know can be kind of a holdup for people not wanting to give up their logs, is data

masking. I can go in and make sure any sensitive data in a log is redacted before I ship it, so I can ship it anywhere. So yeah, now I can just make the auditors go away. Oh, we can! The collectors part is really neat, but, you know, it is very, very necessary. It can do webhooks, so this can kind of become a pre-alerting system if you don't have one; you can send events to stuff. And then it can do metrics, if you're into that sort of thing: building metrics in real time. Let's say you're doing application traces; you can start building metrics out of that if your

apps aren't sending that. It does a lot of really neat things, but it's not the only thing that can do these things. Even though this seems like a sales pitch, this was just my torrid love affair over the last six months, so I thank you for following me through this. One of the neat little tools that I noticed had come up was LogSlash. This is a series of scripts this dude wrote; he's got a pack for Vector, which is another observability pipeline tool, and this does time-window sampling.
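Time-window reduction of this kind can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not LogSlash's actual implementation: it assumes hypothetical firewall events with `src_ip`, `dst_ip`, `dst_port`, and `action` fields, buckets them into fixed 10-second windows, and collapses events that share the same key within a window into one record carrying a `count`.

```python
from dataclasses import dataclass


# Hypothetical firewall "allow" event; the field names are assumptions for illustration.
@dataclass(frozen=True)
class FirewallEvent:
    timestamp: float  # seconds since the Unix epoch
    src_ip: str
    dst_ip: str
    dst_port: int
    action: str


def reduce_by_window(events, window_seconds=10):
    """Collapse events sharing the same key within each fixed time window.

    Returns one summary dict per (window, key) pair, in first-seen order,
    with a 'count' of how many raw events it replaces.
    """
    summaries = {}  # (window_start, key) -> summary dict; dicts preserve insertion order
    for ev in events:
        # Align the timestamp to the start of its fixed-size window.
        window_start = int(ev.timestamp // window_seconds) * window_seconds
        key = (ev.src_ip, ev.dst_ip, ev.dst_port, ev.action)
        summary = summaries.get((window_start, key))
        if summary is None:
            summaries[(window_start, key)] = {
                "window_start": window_start,
                "src_ip": ev.src_ip,
                "dst_ip": ev.dst_ip,
                "dst_port": ev.dst_port,
                "action": ev.action,
                "count": 1,
            }
        else:
            summary["count"] += 1
    return list(summaries.values())


if __name__ == "__main__":
    events = [
        FirewallEvent(100.0, "10.0.0.5", "8.8.8.8", 53, "allow"),
        FirewallEvent(101.5, "10.0.0.5", "8.8.8.8", 53, "allow"),
        FirewallEvent(108.9, "10.0.0.5", "8.8.8.8", 53, "allow"),
        FirewallEvent(112.0, "10.0.0.5", "8.8.8.8", 53, "allow"),  # falls in the next window
        FirewallEvent(103.0, "10.0.0.7", "1.1.1.1", 443, "allow"),
    ]
    for row in reduce_by_window(events):
        print(row)
```

With the sample input, five raw events come out as three summary records: the three DNS allows from `10.0.0.5` in the window starting at 100 collapse into one record with `count` 3. The trade-off is that per-event timestamps inside a window are lost, which is why it can make sense to reduce only what goes to the SIEM while the raw stream lands in cheaper storage.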

So I can say, for each minute or each 10 seconds, sample the logs and reduce them. So for each 10-second period, for this one network, say firewall logs again, because those are usually very chatty with the allow logs, you reduce those, usually placing that right at the source or in your Vector or in Cribl. There's also Logstash and Apache NiFi, very tried-and-true tools that do this as well in some capacities, not all. But I mean, Fluent Bit seems to be the OG, so I imagine they

do it quite well. And then, hey, if you've got that kind of affliction, go ahead and bring your own elbow grease and do it all in Python. Go right ahead. And my soul is now at rest. If I ever come up against a situation again where I've got to send my logs to S3 and my SIEM and reduce the cost, I can get rid of all the superfluous stuff that maybe I can't configure out of my tools, reduce the logs I even ship, and pull the data out of my data lake and play it back into a new tool to test it out.

And again, what do I do now? I'm kind of standing out here on the tip of the iceberg in the first quarter of 2023, and I have everything still to learn about observability. I just wanted to share this journey I've been on for the last six months; I just had to share it. And anybody who doesn't have this problem, I will see you at the bar, because we'll let the people who do have the problem go, "Oh, I can finally do this," and then they'll come to the bar and we can all party and hang

out and have a soda. And that is that. Let's check.

We've got two minutes left. Questions, comments, concerns, regrets? Yes? [Audience question, partly inaudible:] ...some more of our threat intelligence feeds as well, so what other items we had is if we got, like, doing the nine hours

So, Paul, pulling it right in and tagging it as soon as it comes in, right on its way to the SIEM, so you know, "Hey, it's cool, chill," and you've got your rules tuned to ignore those things. I love it; that's super, man. I've also seen this used at managed security providers, when they're taking in a lot of customer data and they've got to work with a lot of it, so it is a larger-scale data problem. But hey, anybody else? All right, and with that, I thank you so very much for this opportunity. Take it easy. Thank you, thank you.