AI's House Of Cards: Building Intelligent Systems On Vulnerable Foundations


A house of cards takes only a few seconds to collapse, and the digital foundation that we are building and betting our AI future on is, I think, already trembling. Good morning. My name is Andre. Thank you for joining me today. I'm here to talk about the thing that should really concern us all: the very basis underpinning our digital future. I've spent the last three decades wrestling with some of these foundational issues firsthand, and today I'll show you why I believe we are nearing a critical tipping point. This audience, I'm sure, knows perfectly well that cybersecurity threats have evolved from isolated incidents to full-blown digital crises, with attacks growing at

unprecedented speed in both sophistication and scale. Probably the best number to evidence that is that cybercrime damages are projected to reach 10 trillion dollars this year, which to me is probably the biggest transfer of economic value in the history of mankind. But here's the thing. I would argue that all this mess isn't only because the attackers are getting better. It's also because we have some very serious fundamental issues in the very fabric of the core systems that power our digital society. And especially now, as we are opening this new exciting chapter and starting to build incredible things with AI, addressing some of these issues is becoming hugely

important. Because what if this brilliant AI-powered future is built on something far less stable? What if I told you that it's all resting on a house of cards? This new AI era isn't just about faster computers or smarter algorithms. Very likely, it's going to cause a fundamental shift in how we live, how we work, and how we interact with the world around us. Just think about it. AI is poised to revolutionize healthcare, transportation, education, manufacturing, finance, you name it. Every industry that we know, every vertical, similar to what computers and the internet did some time ago, but now with more vigor, more intensity, and I would say also more political support. We're talking about personalized

medicine, self-driving cars, smart drones, smart cities, fully automated dark factories with no humans involved, and AI-powered assistants so personal that they basically become our closest soulmates. Is it scary? Maybe. But the potential is breathtaking, as is the amount of dollars being poured into these things. This is a truly transformative force, and it is coming. But there is also a dark side to all of this: AI abused and weaponized for offensive purposes. Late last year, Google Project Zero made a splash by using an LLM to discover, perhaps for the first time, a new zero-day in SQLite. Then last month, a group of Google researchers published a detailed paper where they analyzed 12,000 real-world AI

based cyberattacks, clearly showing AI's growing offensive capabilities. Also worth noting is the general trend of guardrails built into LLMs becoming less stringent, not more, as evidenced, for example, by the latest image generation model from OpenAI: it only took a few days for the bad guys to realize that this is a super handy tool for generating things like fake IDs, fake invoices, and other malicious items, breaking many commercial IDV and anti-fraud solutions. And to crown it, just two weeks ago TechCrunch reported on North Korea launching a new cyber unit with a focus solely on AI-powered attacks. So Kim is at it again. So I would say things are

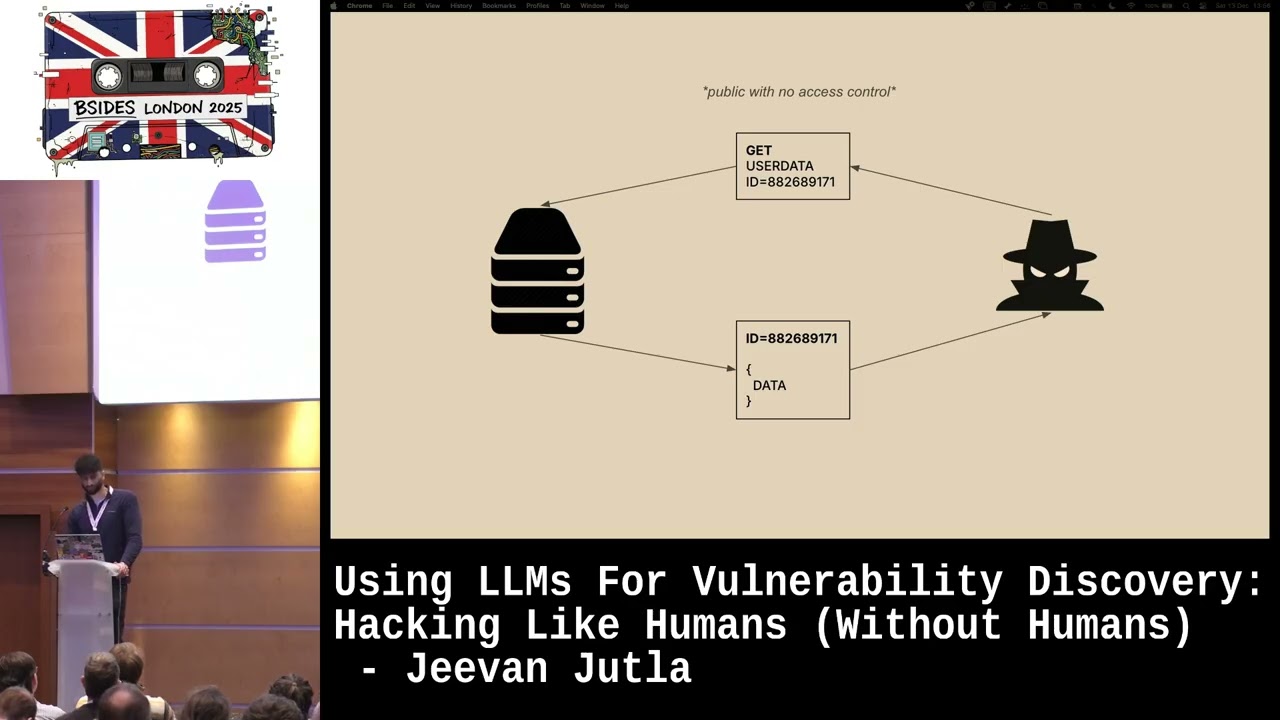

starting to get pretty wild. And so, with this rapid acceleration towards an AI-driven future, there is a critical question that we have to ask ourselves: are we really ready for it? Let's take a closer look at what we are building on. Our digital infrastructure today is based on complex interconnected systems that are riddled with vulnerabilities. This is not a new thing, of course. It's an old, murky problem that we have known about but ignored, and that we always knew was going to backfire on us unless we did something about it. The numbers don't lie. According to the CVE database, over 40,000 new vulnerabilities were reported in 2024 alone. That's a 43% increase year on

year. And the average time it took to discover a vulnerability was 215 days. These are just the ones that we know about, of course, and they only cover third-party systems. Vulnerabilities in our own code would be on top of that. And as the complexity of these systems grows, this is no longer a linear problem. It is actually an exponential problem. Most of these vulnerabilities fall into critical categories like cross-site scripting, improper input validation, out-of-bounds reads and writes, etc., making them highly exploitable. And unsurprisingly, the software that is most targeted also happens to be the one that is most commonly used. A house of cards only takes a few seconds to collapse. And today,

sophisticated attackers can weaponize critical vulnerabilities in 5 days, sometimes even much faster. But with AI now starting to also be used for offensive purposes, that window can realistically shrink to minutes or even seconds. So, how can any security team, no matter how skilled or funded, possibly keep up with such an avalanche? The sheer volume combined with the ever-increasing speed of exploitation makes traditional approaches totally unsustainable. But there's more to this. It's not just the number of vulnerabilities. It's also about where they reside. Much of our critical infrastructure, the systems that underpin our power grids, our hospitals, our financial institutions, our food and drug supply chains, runs on legacy systems. These are often systems that were built decades ago, are difficult to

patch, are frequently lacking modern security features, and are riddled with known and unknown weaknesses. They were built to work reliably and maybe even slightly refactored over the years, but they were typically not designed with security in mind. And to make fixing even more challenging, the attack surface has become really complex and incredibly heterogeneous. So we are not talking just about workstations and servers anymore. Of course, it's the cloud, now used by over 95% of all enterprises. It's IoT, where the number of devices is in the tens of billions. It's all the mobile stuff, and it's the APIs connecting it all together. Every new connection, every new device, every new application is a potential entry point for

attackers. Try to visualize the attack surface of a modern global enterprise. It's vast. It's dynamic, as in it's changing all the time. And frankly, it's almost impossible to fully understand. This has led to a renaissance of vulnerability scanners and discovery tools of all sorts, from code to infrastructure to cloud. Now, let's have a closer look at this ecosystem. For application security, we have SAST tools like Checkmarx, Veracode, and SonarQube that analyze source code without actually executing it. We have DAST tools like Acunetix and OWASP ZAP that test running applications. We have SCA tools, software composition analysis tools, like WhiteSource, or Mend.io, and Snyk that scan dependencies and third-party components. For infrastructure, we of

course have network scanners like Tenable Nessus, Qualys, Rapid7, etc. And we also have container scanners like Trivy, Clair, and Anchore. And then we of course have cloud security posture management tools like Wiz, which Google just decided was worth $32 billion in their biggest-ever acquisition. I guess if your house of cards is built on cloud, you are willing to pay wizard-level prices. The whole scanning market is valued at over $5 billion annually now and growing at almost 20% CAGR according to Gartner. The bottom line here is that people today are doing a lot of scanning, and these tools have become remarkably effective. So one could say that all is good, no? We've solved the

problem. Well, not quite. This has led to a situation where CISOs and their teams are frustrated and burning out, pretty much drowning in a sea of vulnerabilities. In most companies that I talk with, the number of items in their vulnerability backlog is in the hundreds of thousands or even millions, sitting there and waiting to be investigated. Security teams are overloaded by vulnerability counts that far exceed their ability to triage and fix them in a timely manner. And you don't have to take my word for it. A recent study by the Ponemon Institute quantified it even better. The average today is 1.1 million vulnerabilities in backlog per organization, and not even half of them ever get remediated. It's just

terrible. Here are some actual quotes to get an even better sense of the situation. So, this is from Chris Krebs, former director of CISA, I think some of you actually know this person, saying, "It's like playing whack-a-mole with a sledgehammer. By the time you address one set of vulnerabilities, three more critical ones have popped up." A Forrester analyst, Erik Nost, said the tech industry has now become overwhelmed by vulnerabilities to the point where it can't keep up. Then we have Window Snyder, former CISO of Intel and Square, saying there is a systemic problem in how we approach vulnerabilities. Organizations keep investing in better detection capabilities without equal investment in their remediation capabilities.

And lastly, Jeff Moss, the famous founder of Black Hat and DEF CON, would say, "Every CISO I know is facing the same impossible math problem. Vulnerability discovery is outpacing remediation capacity by orders of magnitude." All right, folks. Now, let's play a little bit. I'm really curious about this. Please take out your phones and scan this QR code to answer this simple question: how many vulnerabilities are in your organization right now? And the options would be less than 10K, 10K to 100K, 100K to a million. Please do this. Okay, it's coming. Two votes. That's not too many.

Complete 14 16. Amazing.

[Music]

Okay, let's give it a few more seconds. I think we are getting some picture here. It's good that "over 1 million" is actually only 7% here, but "we have stopped counting" is a bit concerning, I would say. So I'm sending my condolences. Anyway, let's continue. I would say here is the real kicker. If you have ever worn the CISO hat, you know that it's not always just about technology or just about doing the thing that you believe is the right thing. The harsh reality is that once you start reporting a metric, you are held accountable for managing it. But when it comes to vulnerability counts, how on earth are we supposed to do that? Which

brings me to a story that should send chills down our spines. Anyone here recognize this face? Would you know who that is? This is Joe Sullivan. I would like to tell his story a little bit. Sullivan was the chief security officer at Uber, a pretty respected figure in the cybersecurity world. He had previously built security teams at Facebook and eBay as well. The guy definitely knew what he was doing. But in 2016, Uber suffered a massive data breach, and Sullivan made a decision that would change his life forever. So what happened? Hackers broke into Uber's network. And as usual, they did it in a pretty embarrassingly simple way. An Uber employee's GitHub account was

hacked by a simple credential stuffing attack. And in that private account, the bad guys found the keys to the kingdom: AWS access keys for the entire Uber, hardcoded into the source code of a private application. The data breach was big, really big: PII of about 57 million riders and 600,000 drivers. Their names, emails, phone numbers, and even driver's license details and payment details, all exposed. The immediate response from Sullivan was to try to contain the situation. A $100,000 payment was arranged through Uber's bug bounty program, framed as a reward for finding a vulnerability, but essentially it was hush money. The problem was exacerbated by the fact that the FTC was already investigating Uber for a previous

data breach that happened in 2014. And unfortunately, Sullivan decided not to disclose this 2016 breach to the FTC. Now, let me fast forward to September 2022. The trial begins, and in October Sullivan is convicted. He was found guilty of obstructing proceedings before the FTC and of so-called misprision of a felony, which basically means that he knew about a crime and failed to report it. Now, I'm not here to condone what Joe Sullivan did, but I'm here to say that this story is actually not an anomaly. It's a symptom. A symptom of an impossible situation. CISOs are being held accountable for vulnerabilities they can't possibly remediate in a timely manner. They're constantly firefighting, constantly behind the

curve, constantly under-resourced and understaffed. But CISOs are also humans. There is no way for them to prevent all attacks, and they definitely shouldn't be criminally liable for it. Let's see what he himself had to say about this in an interview just a few months ago. It's not... Can we have the sound from the presentation?

Okay. Won't

work.

See? All right. Okay. I guess we will do without it. He basically said that there's only so much that you can do within the budget, and people always expect the security leader to be the wizard that can save it all. But unfortunately the reality is somewhat different. Okay. We have talked about the explosion of vulnerabilities. We've talked about the challenges of legacy systems and the expanding attack surface. Now let's talk a bit about the traditional solution: patching. Patching is the cornerstone of vulnerability management, of course: applying fixes to remediate known security flaws, which sounds pretty simple, huh? But in today's environment, patching, especially manual patching, is becoming more and more unsustainable. It's like trying to fight a wildfire with a garden

hose. And here is why. First of all, the average time to patch a critical vulnerability, what the industry calls MTTR, is far too long. A 2023 report from Splunk states that the mean time to patch today is 115 days. Think about that. 115 days. That's like leaving your front door unlocked for four straight months, hoping no one notices. But we already know from the previous discussion that many critical vulnerabilities are exploited within days or even hours of disclosure, especially if the exploit code is publicly available. This gap is a critical window of opportunity for attackers, and unfortunately it's only getting worse, not better. Patching isn't just slow, it's also disruptive. A 2022 survey found that 58% of IT professionals reported

experiencing significant disruptions to business operations due to patching. This is because organizations often have to take systems offline to apply patches, which can impact productivity, revenue, and critical services. And even putting reboots aside, because of the sheer complexity and inherent fragility of today's systems, things tend to break quite often because of patching, requiring additional interventions and unwanted downtime. And then, of course, patching can't protect you from the unknown. Of the 40,000 vulnerabilities that were discovered last year, 97 were zero-days actively exploited in the wild before any patch existed. And lastly, patching doesn't address misconfigurations either: servers left open to the internet, weak passwords, excessive permissions, etc. The Verizon

DBIR consistently shows that human error, including misconfigurations, is a major factor in a significant percentage of breaches. So, patching is slow, it's disruptive, it doesn't address all vulnerabilities, and it's inherently prone to human error. I would just say we suck at it. We need a better approach, and that means coming closer to the root cause of the problem. What I mean by that is being more proactive at fixing things at the source code level. This is a powerful concept, but anyone who has ever worked in this area will probably agree that the challenges here are even larger, and it's by no means just the technical aspects of it. Surprisingly, the main problem actually tends to be cultural.

One could say that AppSec is where career ambitions and friendships go to die. The average enterprise today maintains over 300 custom applications, with many more open-source and other components that they depend on. That's 300 opportunities for your security team to be the most hated people in the company. I'm talking about the silos and divides between security, development, and IT operations. The problem isn't that these teams don't want to work together per se, but it's like they are working off of completely different objectives. It's like three people trying to drive one car to three different destinations at the same time. I'm sure you know very well what I'm talking about. Security wants fixes to be rolled out very fast

while developers want to focus on forward-looking stuff, on innovation, and the ops people with their risk-averse attitude only add to the problem. If you have ever worked in AppSec, you know that developers and security teams usually agree on exactly one thing: it's always ops' fault. And while we can laugh at these dysfunctional dynamics, the consequences are anything but funny. These organizational disconnects are creating massive risk exposure that no amount of tooling can ever solve. Which brings us to perhaps the most concerning aspect of the current situation. We're overwhelmed by vulnerabilities, challenged by legacy systems and technical debt, struggling with expanding attack surfaces, and divided by organizational silos. And yet in the midst of all of

this, we are rushing headlong into the AI revolution, or even accelerating towards AGI. Before I get into what we could do about this, I would first like to challenge some deeply entrenched assumptions that the security industry has embraced, because these may sound compelling but, in my mind, actually make the problem even worse. First, the myth of "just focus on the most critical vulnerabilities." While some prioritization is necessary, the reality is that attackers today chain multiple lower-severity vulnerabilities to create critical attack paths. A 2023 IBM study found that 52% of successful breaches involve chains of medium-severity vulnerabilities that bypass defenses focused only on critical issues. So focusing solely on the top 3% or only

top 5% creates a false sense of security while leaving exposed flanks. Second, the shift-left fallacy. We have spent years pushing security onto developers who frankly aren't security experts and probably never will be. What's more, the nature of software engineering is fundamentally changing, with AI coding assistants like GitHub Copilot, Cursor, and Augment becoming pretty much ubiquitous now. And I'm not talking just about some vibe coding. AI coding assistants are now used by over 80% of enterprise development teams, meaning that developers across the board are increasingly assembling rather than writing code. They are integrating chunks of AI-generated code with limited understanding of its security implications. And guess what? A recent study from Stanford found that code

generated by these AI assistants contains security flaws at roughly twice the rate of human-written code. We are exponentially increasing complexity while simultaneously reducing comprehension and quality. And third, the Band-Aid approach of compensating controls: runtime protection, microsegmentation, zero trust, various deception techniques. These are all valuable tactics, but they are treating symptoms rather than addressing the root cause. What's worse, modern infrastructure changes at unprecedented speeds. The average enterprise makes over 30,000 configuration changes per month across its IT environment according to a 2024 Gartner report, and each such change potentially invalidates the protection offered by compensating controls, which creates a perpetual game of catch-up. In short, compensating controls are prone to human error. They add fragility and

they don't address the fundamental problem at hand. Despite everything I have just said, I would like to end today on a somewhat optimistic note. I actually think there is a way out of this mess. The path forward isn't doing more of what we have been doing. It requires a paradigm shift in how we think about security: from endless patching to architectural integrity, from manual remediation to systematic, scalable approaches, from prevention alone to resilience by design. Of course, paradigm shifts don't happen overnight. They always begin with practical steps. So when you return to your organization tomorrow or on Monday, number one, put more emphasis on your own code. All of us are first and foremost

responsible for our own stuff. If everyone focused on cleaning up their backyard first, we would be in a very different situation today. The excuse-making approach that software is provided "as is" just must end. Number two, elevate this to the board and the CEO. Present this not just as a technical problem but as a business continuity and liability issue. You should ask directly what happens when vulnerabilities outpace the capacity to address them. You should ask for bigger budgets to specifically support vulnerability remediation programs. Number three, challenge the current process. Research shows that security teams spend over 80% of remediation time on repetitive tasks. So, please document these patterns, calculate the actual cost of human-driven remediation, and explore how automation

and perhaps AI could help to improve that. And lastly, focus on the outcome metric. Shift from measuring vulnerability counts to measuring your time to safety: how quickly your organization returns to a secure state. I've spent my career in security watching the problem grow exponentially. I've seen brilliant security teams crushed under the weight of their vulnerability backlogs. I've watched CISOs burn out from trying to solve an effectively unsolvable problem with the resources and tools they have been given, and we have just heard from Joe Sullivan, or rather we have not, but I have relayed what he said, how bad this thing can become. Still, I have to say I remain optimistic, because despite these challenges I see a

growing recognition that we need a fundamental change, not incremental improvement. I see organizations beginning to question the status quo. I'm optimistic because a room like this is filled with people who can drive the paradigm shift. People who understand that both the stakes and the opportunity are massive. So the house of cards, in the end, doesn't have to fall. But preventing the collapse requires bold action: reinventing how we approach remediation at a fundamental level. So whether you are writing code or defending networks or fighting vulnerability backlogs, I think it's your fight too. Let's shift this paradigm together, starting now. Thank you so much and have a great day.