Half-Life: Lambda Security


Hi everyone, thanks for coming to hear this talk. My name is Artem Switkov, I'm a senior security engineer at Skyscanner, and we're all about making awesome products for the traveler. We are app first, so we provide our app on all major platforms, and we operate our services in the Amazon cloud. As a security engineer I get to spend a lot of my time playing around in that, and it's pretty awesome and interesting. So today I want to talk about AWS serverless security and present Half-Life, which is a little auditing tool that I made for Lambdas. So what's serverless? Well, it's called serverless, right, but there's a server really.

The thing is just that you don't have to manage any of it. It's all taken care of by Amazon, and all you have to do is upload your code, set up some event sources to trigger that code, set up some permissions, and then you're good to go. And what's a Lambda? Well, Lambda is an event-driven serverless computing platform by Amazon, and the way it works is that it runs code in response to an event that you set up. It automatically scales with high availability, so you don't have to worry about denial of service or anything like this. It runs in an isolated environment with a read-only file system. It's one execution per request

and typically runs for a few seconds, but there's a 15-minute runtime limit. The way Lambdas work is that they have versions and aliases. Basically this means that you can publish multiple versions of your Lambda and create these human-readable tags, called aliases, that point to individual versions. That way you can have one version in production and one version in development, and seamlessly switch them once they're ready to be taken to production. Lambdas also have layers, and layers are basically a way to avoid code duplication across multiple functions. For example, you can publish a layer with common logic like metrics tracking or authentication or whatever, and then you simply reference this layer from

your function, and that way you can share code. This is also a way to introduce third-party code to your Lambda functions. Lambdas run with an execution role, which basically identifies the function and controls its access to AWS resources. Apart from this identity-based execution role, resources, including a Lambda, can also have a resource-based policy, which specifies what the event sources for this function are and what you can do with it. If we take a look at an example here: on that side we have an S3 bucket, and on this side we have the Lambda function. What happens is that the user uploads a

file to the S3 bucket, and there's a notification set up to notify the Lambda function and trigger it. When the Lambda function runs, it uses an execution role that tells it what it can and can't do, and who can trigger it and stuff, and then executes some code that you uploaded there, and this code processes the file. All of this happens without you ever configuring any servers or anything else. You just go into the management console, or you upload a CloudFormation template or whatever, and all of this happens automatically. A more interesting example is a microservice. So you've got your users going in through Route 53 at the top there,

they resolve the domain name, and then they go to an S3 bucket which has your static website. Below Route 53 right there you also have your API Gateway, which specifies the different endpoints that you can access, and each of these endpoints maps to a separate Lambda function. Because all of these probably share some common functionality, you can probably put that into a layer. Then these functions do stuff and maybe access your database and so on. Again, all of this is without you ever touching any server configuration, any software, any hardware. You just configure it through a management console panel or whatever. And so this is great, right? You don't

have to worry about patching your software, you don't have to worry about changing hardware, and everything is already fault tolerant and highly available by default. There's virtually unlimited scaling capacity in execution and in storage, and you have an isolated network perimeter inside your VPC. Okay, but this is really different from the typical setup with your servers and stuff. So how can we check what's going on in there? How can we audit the things that are happening? Again, the AWS shared responsibility model states that AWS is going to take care of all the software and hardware stuff, and you have to take care of

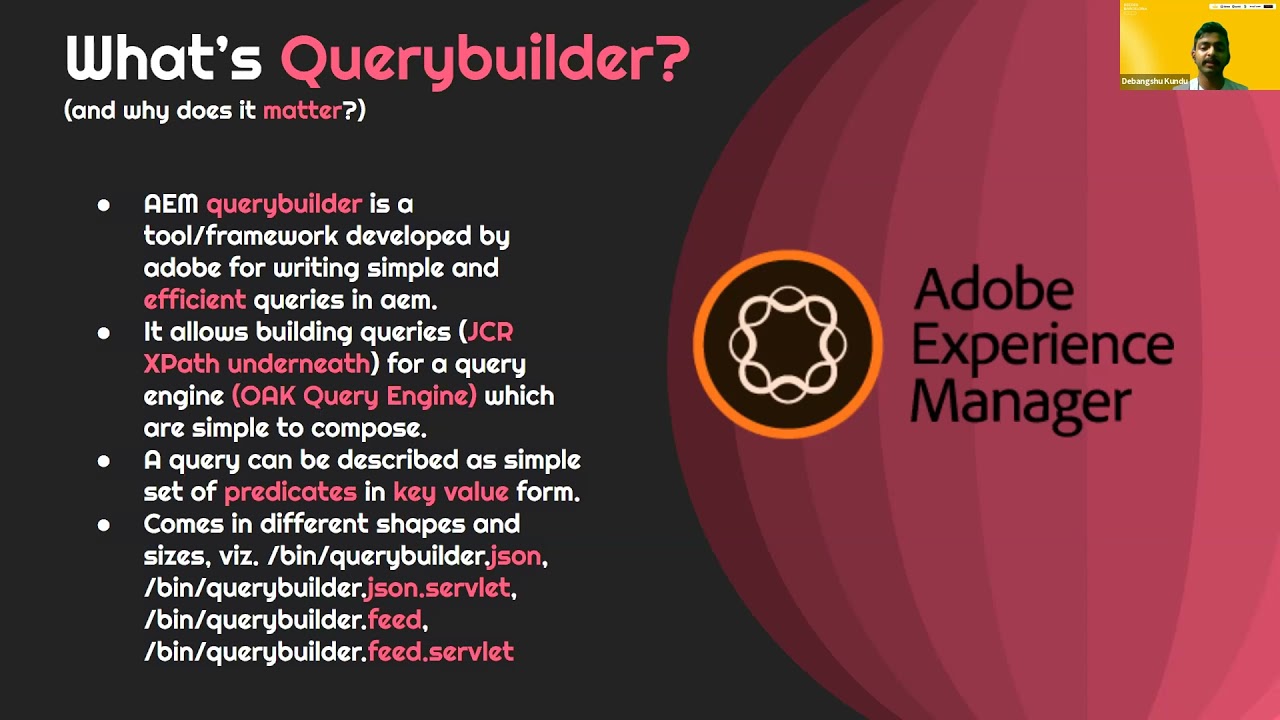

configuring your service. So a huge part of securing your Lambdas and serverless architecture is about configuration, and this configuration is hard. You need to set up permissions, you need to set up access controls, and there's a lot of stuff at play: users, roles, trusted entities, access control lists, resource-based policies, managed policies, inline policies. The first time you touch this you're like, okay, that's too much stuff going on, and you really need to figure it out. So what really happens is that developers who want to go fast and really need to get things done don't normally take the time, or

they're not aware of how to configure this well. So what they do is, for example, you have an SQS queue, right, and when you create it, there at the bottom it tells you: okay, you have this SQS queue, but it doesn't have a policy, so you're the only person who can use this queue. That's probably not what you want, right? You want your Lambda functions or your services to access this queue and use it in some way, so you need to set up a policy. The easiest way to set it up is basically in two clicks: everybody, all actions, add permission. This allows everyone to do everything

on your queue, and this will work, of course, but it's not really secure, and we'll see why in a bit. Policy configuration in itself is really a unique serverless challenge, because now you don't have to worry about software and hardware, but you do have to worry about setting up all these permissions and connecting your services in the right way. Giving all permissions to everybody is maybe okay in a sandbox, when you want to go fast and develop your product and test it, but once you push it into production, you really shouldn't push it like this. You need to configure all

your permissions following the least-privilege principle. Otherwise you might get public exposure of your S3 buckets, with information about your users and so on, as we have recently seen in the news. Just to briefly take a look at what's at play when configuring a policy: a policy has an effect, which is Allow or Deny, so it's telling you whether you are allowing or denying stuff. It has a principal, which specifies exactly who is affected by this policy; it could be a user, a role, a trusted entity or whatever. The actions specify the actual permissions that you're allowing or denying. Resource specifies what other AWS services you can or cannot access or use

and finally there's a condition, which is optional, and it specifies when the policy is in effect. The common pitfalls with that: first, unrestricted actions, where we specify the permissions. At the top you see an example of specifying a specific action for downloading a file from an S3 bucket, and at the bottom you see a wildcard being used with these actions. The wildcard basically says all of them, allow everything. If your service is really meant to allow users to download stuff, well, that's really the only thing you should allow, because otherwise they will also be able to upload stuff to your S3 bucket,

maybe abuse it to distribute malicious content, or they could maybe delete some stuff from your bucket and so on. Another example is the unrestricted principal. At the top again you see an example of specifying a concrete principal, saying: okay, this user account, my policy is about giving this user access or restricting this user in some way. At the bottom you're basically saying: everyone, doesn't matter who, public or not public, AWS user or not, it applies here. And then conditions: they're optional, but when you're allowing actions and you're specifying a wildcard principal, that really makes your code or your function or your

content public and available to everyone, and unless you specify an additional condition it's going to stay like that. This is an example of specifying a condition where you're restricting access by source IP. Maybe, for example, you have a lot of services and there's no way for you to specify all the principals one by one, so you say: okay, I allow all principals, but only from this private subnet that I have. That would work. There are tons of ways to really screw this up. There's allowing NotPrincipal, which is like allowing everyone; allowing NotAction, which is like allowing everything. There are a lot of ways to do

this badly: there are S3 access control lists, you can use wildcards everywhere, and there's really a lot of stuff at play here. So when we were looking at this at Skyscanner, we had serverless architectures running in production, and we said: okay, we want to see what's going on inside, we want to see what we have, and maybe do a security audit and see how we really have it set up. So Half-Life is basically this Lambda auditing tool that's made to create asset visibility and help you improve security with actionable results. It has been released to the public, it was open sourced just yesterday, and you can install it

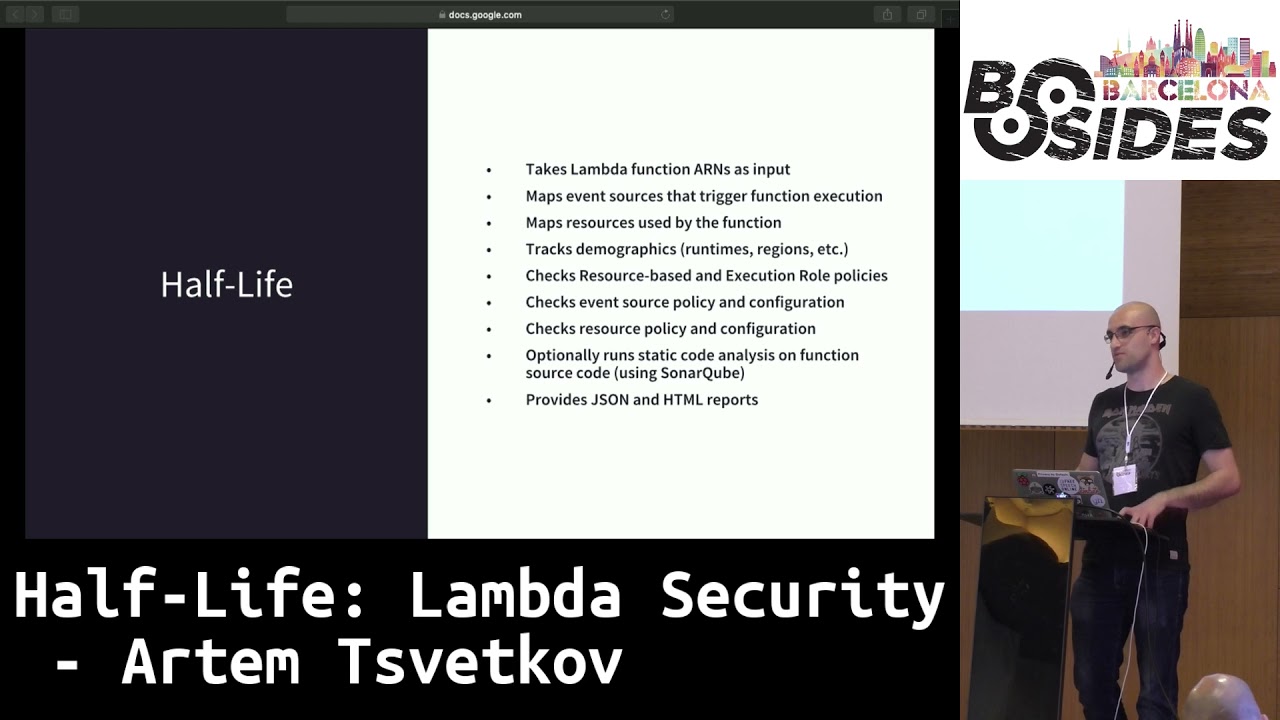

through pip, or you can download the source code from GitHub. So check it out; PRs are welcome and so on. The way Half-Life works is basically that it takes in a list of function ARNs, so you're saying: okay, audit all of them, or maybe there's one specific one that I want. Then, for each function, it's going to find all the event sources for this function, it's going to find all the resources it uses, and then check how the policies are configured for the function as well as for all the resources and event sources. It's also going to check some configurations, like for example is a

storage encrypted or not. As you may or may not know, in Amazon encryption pretty much comes for free; you can always enable it for free. Once it does that, you can also optionally enable static code analysis, so it's going to download your function code and run it through SonarQube in this case, because that's what we're using at Skyscanner, so I made it compatible with SonarQube. On GitHub you actually have a Dockerfile to build a SonarQube server for yourself if you don't have one. On the output you get these JSON and HTML reports that you can use either in some automatic system that you

might have, or you might want to take a look at them yourself. This is what you get when you run it from your terminal with verbose mode on. It's made to be run from a terminal as well as, say, a cron job, if you want to set it up so it runs every day or whatever, so you can turn off all the verbose output, of course. There are different switches you can specify; you can play around with it and see what it does. These are some examples of the JSON output. There's a statistics file, which is basically tracking what you have going on with your Lambda functions, so

it's for example telling you the runtimes: how many functions you have and what the different runtimes are. So you can see, okay, I have maybe 17 old Python images that I might want to update or whatever, or you might see some weird language popping up, some custom runtime that you might want to address. It's also telling you security stuff, like how many high detections and medium detections there are that you might want to take care of right away, and so on. This one's taken from the security JSON file, and here, for each item that it finds, it's basically telling you the function that

this belongs to; the "where" tells you exactly which event source or resource is affected by the vulnerability; and then of course the level and the text. In this case it's telling you: okay, you have a public S3 bucket that is affecting your Lambda function. The theory here is that if I have a public S3 bucket and somebody uploads some content that's not expected by my Lambda function, what's going to happen? Half-Life also generates a per-function report, and this is where you see all the details for each individual function, including the description, the runtime, the region

it's in. It's going to give you all the policies, including resource-based policies, your execution role and so on. And this is basically the HTML version of the JSON reports. These are the stats, sort of nice to get an overview of what's going on inside your serverless network. This is the security part, so you get a list of all the vulnerabilities it finds, all in one place. The middle column here will tell you where, and the last column will give you a link to the Lambda function. When you click it, it's going to lead you to this individual Lambda screen, where again you have all the details as well as the

vulnerabilities that belong only to this function. That's pretty much it for the way it functions. There are a lot of improvements to be done, especially with the HTML report. I want to integrate Lambda layer analysis, because there you can introduce, for example, third-party code, which is important to keep track of and see what it does. More rules, extending static code analysis to maybe other tools and not just SonarQube, tons of bugs, lots of stuff. Again, PRs are welcome. That's pretty much it. Thank you for your time, and if you have any questions... [Applause]
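The policy pitfalls described in the talk (wildcard actions, a wildcard principal with no condition, Allow combined with NotAction or NotPrincipal) can be sketched as a small check over a policy document. This is only an illustration under assumptions: the function names, the finding strings, and the example ARNs are hypothetical and are not taken from Half-Life's actual rules.

```python
# Hypothetical sketch of a policy check; not Half-Life's real rule set.

def as_list(value):
    """IAM policy fields may be a single value or a list; normalise to a list."""
    if value is None:
        return []
    return value if isinstance(value, list) else [value]


def is_wildcard_principal(principal):
    """True for "Principal": "*" or for a mapping such as {"AWS": "*"}."""
    if principal == "*":
        return True
    if isinstance(principal, dict):
        return any("*" in as_list(v) for v in principal.values())
    return False


def audit_statement(statement):
    """Return a list of findings for one policy statement."""
    findings = []
    if statement.get("Effect") != "Allow":
        return findings  # only Allow statements grant access
    actions = as_list(statement.get("Action"))
    if any(a == "*" or a.endswith(":*") for a in actions):
        findings.append("wildcard action")
    if "NotAction" in statement:
        findings.append("Allow with NotAction")
    if "NotPrincipal" in statement:
        findings.append("Allow with NotPrincipal")
    if is_wildcard_principal(statement.get("Principal")) and not statement.get("Condition"):
        findings.append("wildcard principal without condition")
    return findings


def audit_policy(policy):
    """Collect findings across every statement in a policy document."""
    findings = []
    for statement in as_list(policy.get("Statement")):
        findings.extend(audit_statement(statement))
    return findings


# The "two clicks" queue policy from the talk: everybody, all actions.
# The account ID and queue name are made-up placeholders.
open_queue_policy = {
    "Version": "2012-10-17",
    "Statement": [{
        "Effect": "Allow",
        "Principal": "*",
        "Action": "SQS:*",
        "Resource": "arn:aws:sqs:eu-west-1:123456789012:example-queue",
    }],
}

# Wildcard principal, but restricted by source IP as in the talk's example.
ip_restricted_policy = {
    "Version": "2012-10-17",
    "Statement": [{
        "Effect": "Allow",
        "Principal": "*",
        "Action": "s3:GetObject",
        "Resource": "arn:aws:s3:::example-bucket/*",
        "Condition": {"IpAddress": {"aws:SourceIp": "10.0.0.0/24"}},
    }],
}

if __name__ == "__main__":
    print(audit_policy(open_queue_policy))
    print(audit_policy(ip_restricted_policy))
```

A real auditor would also need to judge whether a condition is actually restrictive; this sketch only checks that one is present.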

A question over there. If you identify a problem with a function and you release a new version to fix it, how can you make sure that everyone starts to use the new version and doesn't stick with the old one? Well, when you use a function, it has a latest tag, so by default it's always the latest tag that's referenced. Unless you're specifically calling some version, like version one or version two or whatever, you're always going to use the latest.

So you can... Like revoking, in what sense? So if someone is deliberately calling version two, for example, and you have version three, can you say: okay, version two is no longer accessible? I guess you could delete it, but normally you'd either be calling the latest or you would make aliases, so you'd always be calling the prod alias, and for that alias you change the mapping.
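Changing the mapping of an alias, as described in the answer above, might look roughly like this with boto3. It is a sketch, not something shown in the talk: the helper name `point_alias_at_version` is hypothetical, and the client is passed in so the function can be exercised without AWS credentials. The `update_alias` call and its `FunctionName`, `Name` and `FunctionVersion` parameters are part of the boto3 Lambda client.

```python
# Hypothetical helper; the caller supplies a boto3 Lambda client.

def point_alias_at_version(lambda_client, function_name, alias, version):
    """Repoint an existing alias (for example "prod") at a published version.

    Callers that invoke the function through the alias pick up the new
    version without changing how they call it.
    """
    response = lambda_client.update_alias(
        FunctionName=function_name,
        Name=alias,
        FunctionVersion=str(version),  # version numbers are passed as strings
    )
    return response["FunctionVersion"]
```

With real credentials this would be called as `point_alias_at_version(boto3.client("lambda"), "my-function", "prod", 3)`, where the function and alias names are placeholders.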

Any other questions? Answers?

Hi, how are you extracting the source code of the functions? Are you using boto3, or using the console? Through the AWS API: when you get the function details, it provides you with a URL for download that you can use. Okay, thank you.
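The download URL mentioned in this answer corresponds to the `Code.Location` field in the response of the boto3 Lambda client's `get_function` call. A minimal sketch follows, assuming the caller passes in the client; the helper name `function_code_url` is hypothetical, and this is not necessarily how Half-Life itself does it.

```python
# Hypothetical helper; the caller supplies a boto3 Lambda client.

def function_code_url(lambda_client, function_name, qualifier=None):
    """Return the download URL for a function's deployment package.

    `qualifier` optionally selects a specific version or alias.
    """
    kwargs = {"FunctionName": function_name}
    if qualifier is not None:
        kwargs["Qualifier"] = qualifier
    details = lambda_client.get_function(**kwargs)
    # The response carries configuration plus a "Code" section with the URL.
    return details["Code"]["Location"]
```

The returned URL is a short-lived presigned link, so the package should be fetched right after the call, for instance with `urllib.request.urlretrieve`.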

Thank you. It communicates through the AWS API, right? Do you have plans on including, like, Terraform files, so not just communicating with the AWS API but scanning for vulnerabilities from Terraform files, for example? To be honest, I haven't thought about Terraform at all, but yeah, not sure, maybe in the future. Okay, thank you.

thank you

so

that's it yeah yeah okay thank you