Intro to Privacy-Enhancing Technologies (PETs)


Hi everyone, please give a round of applause for our next speaker, Harshel Sha, senior software engineer. If you have any questions, we'll answer them at the end. You can scan the QR code and put your questions in Slido. Awesome. Thanks for the introduction. Before starting the presentation, just a legal disclaimer: the views, opinions, and content shared in this presentation are all my own and don't represent any views, opinions, or positions of my employer. Good afternoon, everyone. I'm Hershel. I'm going to talk about PETs: not the ones you love to cuddle at home, but privacy-enhancing technologies. And thank you so much for

coming today. On a Sunday afternoon you could have chosen to be somewhere else doing something better, and you chose to be here, so thank you for that. As you all know, data is the new oil. Everyone is using it in some way, and we are already moving into a reality where applications use data in all sorts of ways, increasingly by collaborating over that data. What if we are trying to collaborate over data with parties we don't trust? There are a few concerns about those collaborations, and

a few of them are listed in the slides. First, parties can cheat, which basically means trying to learn more information through the interaction than they were intended to learn. Parties can also behave in a completely arbitrary manner: instead of sending the actual value during the interaction, they can just send some garbage value. It's even possible that a subset of the parties colludes and tries to take over the interaction. In general, these concerns have become more and more real, and that's why we had an executive order passed back in, I think,

2023, and this order called for technologies known as privacy-enhancing technologies. Some of them are listed in the slide: secure multi-party computation, homomorphic encryption, zero-knowledge proofs, and the list is pretty long. Okay, give me a cheer if you have heard of at least one of those technologies. Okay, I'm happy that people are still awake and not asleep by now. In some sense, these privacy-enhancing technologies are not new. Their genesis goes back to the 1980s, so we are around 30 to 35 years past their initial conception. One common

misconception about these technologies is that they are purely academic, have never seen the light of day, and aren't used by the general public or by companies. This is in fact not true. Privacy-enhancing technologies have made their debut in the past five years, and some examples are mentioned in the slide. I think back in 2018, ZenGo introduced their MPC-based wallets. Then Microsoft, and I believe Google, are using FHE, which is fully homomorphic encryption, for their password use case, where they check whether a customer's password is part of a data breach or

not. Companies are also actively working on custom libraries that are highly tailored for large-scale applications. There is one very recent example I forgot to mention in the slides, from Apple. As everyone knows, Apple is big on privacy. I think last year they introduced Live Caller ID Lookup, where they again use homomorphic encryption to provide privacy. So we are already in a reality where PETs are out there, and they are getting more and more serious. Okay, so I would like to demonstrate how a privacy workflow might

look. This is an example of an election, and in this election we have two candidates, 0 and 1. I know the names are pretty dumb, but just for simplicity I'm calling the two candidates 0 and 1. We have 18 different voters whose objective is to cast a vote, and their votes are displayed on the screen below their icons. So how can we run this election? A popular way is to have a trusted election officer, whose job is to collect all the votes from all the voters,

add them up, and if the sum is greater than half, which is nine, candidate 1 wins; if it's less than half, candidate 0 wins. In this scenario the sum of the votes is 10, which is greater than nine, so candidate 1 wins. What if we don't have a trusted election officer? Can we still do this? Yes, we can. We're going to use a technology that everyone is using right now: encryption, just basic encryption. Here, the red box represents the encryption of a particular vote, so the encryption of zero is represented by

a red box with 0, and the encryption of one is represented by a red box with 1. In general, an encryption looks totally random; you can't see what it is trying to conceal. I'm only showing the value here for pictorial reasons. In some sense, you can think of encryption as adding a layer that conceals a secret, and whenever you want to reveal the secret, you remove that layer to see it. Okay. I'm going to use an encryption scheme with a special property: when you add the encrypted values, the underlying secrets also get added. So it has a slightly

fancier property: you can add the secrets even though you are in the encrypted domain. For this scenario, there is a centralized location where we collect all the votes and add them up in a secure way. The secure way is: take the first vote, an encryption of zero, plus an encryption of one, which results in an encryption of one. Go on: an encryption of one plus an encryption of zero results in an encryption of one, and an encryption of one plus an encryption of one is an encryption of two. And with that we

have successfully created an encryption of 10 without ever using a trusted third party, or a trusted election officer, to collect the votes for us. It's possible that voters cheat in this vote. But since we are using this special kind of encryption that adds up the values, if anyone had cheated, the result might fall outside the range 0 to 18, which is one way to detect it. Fully proving that nobody cheated is a little bit out of scope for this slide. Okay. So this could be one of the workflows where you can use this kind of encryption to solve one

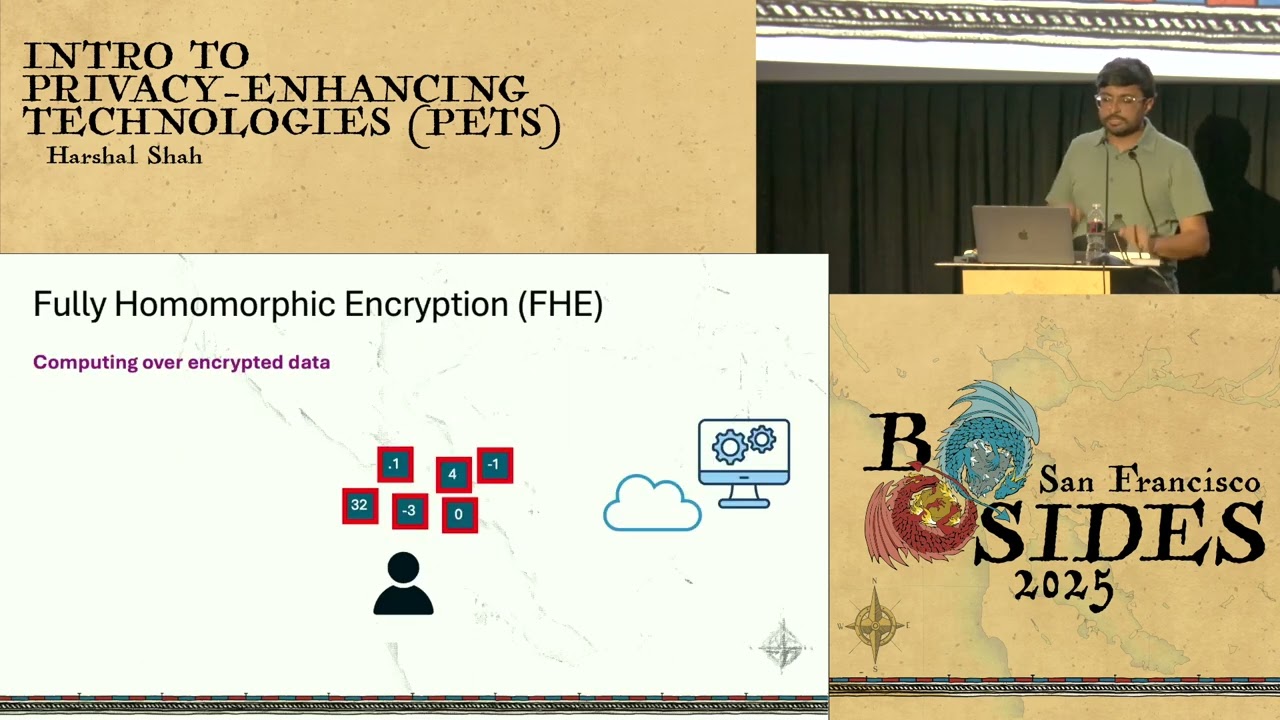

problem. Now let's see what fully homomorphic encryption is. Fully homomorphic encryption is just the encryption I mentioned on the previous slide, with more fancy properties. You can add not only two positive numbers but also a positive and a negative one. You can also go beyond additions to subtractions, multiplications, and, with some extra work, operations like division. One thing to note is that not all operations are created equal. Additions and subtractions can be cheap, but multiplications can be a bit expensive, and by expensive I mean computationally expensive. The genesis of homomorphic encryption

came about because there were clients who had some input data but not enough compute power to process it, so they wanted to offload their computation to a third-party server. The trivial way to do this is to share your plaintext input with the server and let it do the computation. The problem is that you lose privacy, and that's where homomorphic encryption started. You might be thinking: why is this guy only talking about very basic operations like additions and multiplications? Real-world applications like neural networks and large models are really

big; they are not just additions and subtractions. But almost any application can be broken down, or transformed, into a lot of trivial operations, namely additions, subtractions, and multiplications. There are some caveats, but in the majority of cases you can break it down. It's also true that these transformations can be somewhat expensive, but people are actively researching how to make not just the transformations but FHE in general faster. I have seen companies working on

hardware acceleration, designing chipsets that are highly optimized for their use cases. One thing to notice: it's true that training a large language model in the encrypted domain might not be feasible right now with the computational power we have. But over the past couple of years we have seen at least a 2x or 3x performance boost in the underlying operations, so it's possible that in the next couple of years we could be in a reality where large language models use FHE for

training. Okay. So let me give you a real-world example of where we can use FHE. There are two parties in the slide: an advertiser and a retailer. The advertiser wants to know how much the customer with ID MNOP3456 spent at that retailer on a particular day, which, as you can see, is 16. There are two trivial ways to do this lookup. One is that the advertiser sends this customer ID in plaintext to the retailer, and the retailer does a quick lookup in its database to find that this customer has

spent 16 bucks or so, and sends that answer back to the advertiser. The problem is that in this scenario the retailer learns what the advertiser is querying for. The other trivial approach, which is again really bad, is for the retailer to send its whole database to the advertiser and let the advertiser do a local lookup. The problem there is that the retailer is sharing a lot more information about its customers than it is supposed to or intends to. Okay. How can we use homomorphic encryption to solve this

problem? The advertiser can encrypt its query, which is the customer ID in this scenario, and send it to the retailer. For each row, the retailer starts from the difference between that row's customer ID and the encrypted queried ID and turns it into an encrypted match indicator (1 on a match, 0 otherwise). It multiplies that indicator by the amount spent and adds everything up. The result is an encryption of 16, which goes back to the advertiser. The advertiser can then use its decryption key to decrypt the result and, voila, we get 16 back. Now we have solved both privacy problems. First, the retailer doesn't need to share all its data with the

advertiser, and second, the retailer never learns what the advertiser queried

for. Okay. The second privacy-enhancing technology I'm going to talk about is secure multi-party computation. It's a very generic term for collaborative computing, and whenever someone says MPC, they generally mean secret-sharing-based MPC. So what is secret sharing? Secret sharing is, as the name suggests, a way to share a secret. In this picture we have the number five, which is our secret, and we want to divide it into two parts. I split it along the diagonal, so we have two shares. In general, shares are pretty random: you cannot derive

anything about the number five from an individual share. I divided the five this way just for pictorial reasons. Yes, I know it reveals a little bit of information about five, but it's just for example purposes. Okay, so I was just talking about splitting a secret into two shares, but this extends to any number of shares; this is how you can do it for three parties. In general, the number of shares depends on how many parties you're trying to collaborate

with. So suppose five different companies are trying to collaborate on something; then the secret is split into five shares. It's also not necessary for the shares to be mutually disjoint: each share can contain more than its own slice of the secret. On this slide, each share represents two-thirds of the original secret, so we don't even need all the parties to collaborate to compute something. Any two out of three parties can regenerate the secret, for example the first two, or some other pair.

In general, this is called t-out-of-n secret sharing, where t is the threshold number of shares, out of n, needed to reconstruct the secret, and obviously t is at most n. Okay. What would a secure multi-party computation workflow look like? The objective here is to securely compute an addition. There are two parties: one party has input X and the second party has input 3. The trivial way to do the addition is to share your inputs with each other and just add them. But there can be scenarios where parties cannot share that information with each

other. What we can use instead is secret sharing: both parties secret-share their inputs, splitting them up and sending shares to each other. Addition is usually very cheap in MPC: you do some local computation and, voila, you have a secret sharing of eight. Again, just for pictorial reasons, the answer is visible in the shares, but in general the shares look pretty random. Now we have the resulting shares. What can we do with them? One option is for both parties to exchange their shares and reconstruct the secret, so both learn the number eight. Or you can design the workflow in such a way that

only one party learns the secret, or even so that neither party learns anything; that is useful for an intermediate step whose output feeds into further computation. Similarly, what if we want to do multiplication? Oopsie, sorry. If you want to do multiplication, first you secret-share your inputs with each party. Multiplication is not trivial; you can't just do it locally. You need to introduce some communication, which requires sending one or two extra messages to compute a secure multiplication. But after that communication you can generate

15, which is the answer in this scenario. So apart from basic operations like addition and multiplication, what else can we do? Let's talk about synthetic data generation. It's not directly related to PETs, but synthetic data generation is an artificial way to generate data that mimics real-world data and is meant not to reveal anything about the underlying data itself. So you're generating fake data out of the data you already have. In this scenario, continuing with the advertiser and the retailer, we have two parties with data sets A and B, and what they want to generate is

synthetic data that mimics the combination of A and B. Again, the trivial way is for both parties to share their data with each other and generate the synthetic data, but imagine a scenario where rules or regulations like GDPR prohibit companies from sharing this information. You can use MPC here: first secret-share the data, then use a specialized MPC engine to compute the synthetic data, and you end up with a secret sharing of D, which, again, you can use in further workflows. Similarly, you can also do

secure inference. I didn't include an example of secure training because, again, it's pretty expensive, but we currently have algorithms that can do secure inference pretty efficiently. In this scenario there is one client with input X and a company with model M. They secret-share the input X and the model M, and the objective is to compute M(X), the model M evaluated on input X. Again, they use MPC with some communication to generate M(X). I'm not going to go into too much detail, but there are many different scenarios for secure model inference, where the input X itself is

secret-shared among multiple parties, or the model itself is secret-shared among multiple parties or servers, and there are many use cases combining those. One concrete use case I'm going to talk about, where people are actually using MPC, is the custodial wallet setting. In cryptocurrency, we have a client with a wallet that contains a secret key. Generally, that wallet is hosted by a wallet provider, which is again a third party. There are scenarios where the client has to prove ownership of

the wallet, which basically means generating a signature using the secret key stored inside the wallet. The problem is that this wallet is a single point of failure. I don't know how many people have seen Silicon Valley, or maybe it wasn't Silicon Valley, but there was some guy who lost his Bitcoin key and was going through dumpsters trying to find it. But the point is, this is a real problem: having a single point of

failure. So what we can do is secret-share the secret key. In this example, the secret key is shared among three different parties. It could be a single wallet provider with three different servers, or three different wallet providers each hosting one of the shares. Each share is again random and doesn't reveal any information about the secret key, so even if one of the servers gets hacked, you don't lose your key. I have also seen cases where the user itself

can keep custody of the key, with one of the shares held on the client's mobile phone or personal wallet. The servers then run an MPC-based interaction, not to generate the secret key, sorry, but to generate a signature, and respond to the client with the signature sigma on message M. During the animation it might look like the key and the message are combined in one place to generate the signature, but in a real-world scenario the key shares never leave their servers or locations; I'm showing it that way just for

pictorial reasons. The shares are stored safely in hardware. One important thing to think about with MPC is availability. Even if one of the servers, or one of the companies, is down, you want the client to still be able to generate a signature. Since this is two-out-of-three secret sharing, you only need two of the servers to generate the signature; here I'm using the first and the third one

to generate the signature, but you could also use the second and the third one. So in general, when you think about MPC workflows, there can be cases where you want 100% availability, and MPC can help you get that availability. Yeah, I'm done. I'm happy to answer any questions you have.

And I think for now we have one question. Please feel free to add more if you have any. When should we use MPC over FHE? Oh yeah, that's a good question. As I showed in the slides, when you're okay with offloading the computation to a server because your client doesn't have enough compute power, you can use FHE. When you want to be part of the interaction and you're okay with some latency, in the sense that it might take a couple of milliseconds extra to generate something, you should go for MPC. So for compute, think about FHE, and for

latency, think about MPC. That's my way of thinking. We have another question: which PETs do you think companies should start with if they're not using any yet? What I have seen in general industry adoption is that people are starting with FHE, fully homomorphic encryption: Apple, Google, Microsoft, and even IBM have specific use cases where they use FHE. I think MPC adoption is also taking place, but a little more slowly. And the last question: can you speak to the utility-versus-compute trade-offs of using a PET instead of non-PET solutions? I think it's not

exactly an apples-to-apples comparison when we talk about PET and non-PET scenarios. If there is an option to do things in plaintext, without any of these privacy technologies, go for it; doing a lot of these operations with PETs would definitely be something like 10 times slower, or more computationally expensive. But the real comparison is: what if you can't do any of those things? What if there are geographical restrictions or regulations that mean you can't get that data? In that scenario, the choice is between getting nothing and using these PET solutions to do something. So that would be one

way to think about this problem. And we have, yeah, the last question, I think, given the time we have, since someone asked for it to be answered: what about federated learning? Federated learning can also be thought of under the generic umbrella of secure multi-party computation, and it depends on how you're using federated learning. In general, when people talk about federated learning, they use differential privacy on top of it. But I don't know exactly what the question is getting at, so I'm happy to answer it offline. But yeah, that's... But actually, you were first, so, I'm not sure if you have time to answer that,

but what are your thoughts on privacy washing, by companies using PETs to do things that are against users' interests? I mean, again, this comes down to scenarios where consent plays a really important role. Yes, you can use privacy-enhancing technologies to break a user's privacy, but we should have a level of consent that obviously doesn't let anyone bypass these restrictions. Well, thank you. Please give a last round of applause for Hashall. Good.
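The encrypted election tally described in the talk can be sketched with the Paillier cryptosystem, a well-known additively homomorphic scheme. The talk does not name its scheme, so Paillier is our assumption. The toy primes, the random seed, and the individual ballots are also hypothetical; only the total of 10 votes for candidate 1 out of 18 comes from the talk. The parameters are far too small to be secure. In this sketch a single key holder could also decrypt individual ballots, which real voting systems would typically avoid by sharing the decryption key too.

```python
import math
import random

# Toy Paillier parameters (hypothetical, insecure): tiny primes so the demo runs instantly.
P, Q = 1009, 1013
N = P * Q
N2 = N * N
LAM = math.lcm(P - 1, Q - 1)   # Carmichael function lambda(N)
MU = pow(LAM, -1, N)           # valid because we use the generator g = N + 1

def encrypt(m: int, rng: random.Random) -> int:
    """Encrypt m as (N+1)^m * r^N mod N^2 with a fresh random r coprime to N."""
    while True:
        r = rng.randrange(1, N)
        if math.gcd(r, N) == 1:
            break
    return (pow(N + 1, m, N2) * pow(r, N, N2)) % N2

def decrypt(c: int) -> int:
    """Recover m = L(c^lambda mod N^2) * mu mod N, where L(x) = (x - 1) // N."""
    return ((pow(c, LAM, N2) - 1) // N * MU) % N

def add_encrypted(c1: int, c2: int) -> int:
    """Multiplying Paillier ciphertexts adds the underlying plaintexts."""
    return (c1 * c2) % N2

# Hypothetical ballots: 18 voters, 10 of them for candidate 1 (the talk's total).
votes = [0, 1, 0, 1, 1, 0, 1, 1, 0, 1, 1, 0, 1, 0, 1, 1, 0, 0]
rng = random.Random(2023)
ballots = [encrypt(v, rng) for v in votes]

encrypted_total = encrypt(0, rng)  # start the running sum from an encryption of 0
for ballot in ballots:
    encrypted_total = add_encrypted(encrypted_total, ballot)

tally = decrypt(encrypted_total)
winner = 1 if tally > len(votes) // 2 else 0  # a tie at 9 goes to candidate 0 here
print(f"tally={tally}, winner=candidate {winner}")  # prints tally=10, winner=candidate 1
```

Nobody ever adds plaintext votes: the aggregator only multiplies ciphertexts modulo N², and only the final total is decrypted. The tie rule is our own choice, since the talk only covers sums above and below nine.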
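The retailer's side of the private lookup in the advertiser/retailer example can be illustrated by evaluating the same arithmetic circuit that an FHE scheme would evaluate on ciphertexts, but in the clear. This is a plaintext simulation only: nothing is encrypted here. The field modulus, the ID encoding, and every table row except MNOP3456 spending 16 are hypothetical. The match indicator uses Fermat's little theorem: (a - b)^(p-1) is 1 mod p unless a = b. That is one standard way to build an equality test out of additions and multiplications alone.

```python
# Plaintext simulation of the circuit a retailer would evaluate under FHE.
# In a real deployment, `query` and every intermediate value would be ciphertexts
# that only the advertiser can decrypt.
PRIME = 2**127 - 1  # a Mersenne prime; hypothetical choice, large enough that short IDs never wrap

def encode_id(customer_id: str) -> int:
    """Map a short ASCII customer ID (at most 15 bytes) to a field element."""
    raw = customer_id.encode("ascii")
    assert len(raw) < 16, "IDs must stay below the modulus"
    return int.from_bytes(raw, "big")

def equality_indicator(a: int, b: int) -> int:
    """Return 1 if a == b and 0 otherwise, using only + and * (Fermat's little theorem)."""
    diff = (a - b) % PRIME
    return (1 - pow(diff, PRIME - 1, PRIME)) % PRIME

def private_lookup(query: int, table: list[tuple[str, int]]) -> int:
    """Sum over rows of match(row_id, query) * amount; only the matching row survives."""
    total = 0
    for customer_id, amount in table:
        total = (total + equality_indicator(encode_id(customer_id), query) * amount) % PRIME
    return total

# Hypothetical retailer table; only MNOP3456 -> 16 comes from the talk.
retailer_table = [("ABCD1234", 25), ("MNOP3456", 16), ("WXYZ7890", 40)]

query = encode_id("MNOP3456")  # under FHE, the advertiser would send Enc(query) instead
print(private_lookup(query, retailer_table))  # prints 16
```

Under FHE the exponentiation is the expensive part: it amounts to roughly 127 sequential squarings of a ciphertext. That is one reason practical systems tend to use much smaller plaintext moduli and different encodings of the query.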
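A practical version of the picture that splits the secret five into shares is additive secret sharing. All but one share are uniformly random, and the last share corrects the sum. This is one common scheme, assumed here because the talk does not name one; the modulus 2**32 and the helper names are our own. Unlike the diagonal split on the slide, any n - 1 of the n shares are uniformly random and reveal nothing about the secret.

```python
import secrets

MODULUS = 2**32  # hypothetical choice: shares are uniformly random integers modulo this value

def share(secret: int, n_parties: int) -> list[int]:
    """Split a secret into n additive shares: n-1 random values plus one correcting share."""
    shares = [secrets.randbelow(MODULUS) for _ in range(n_parties - 1)]
    shares.append((secret - sum(shares)) % MODULUS)
    return shares

def reconstruct(shares: list[int]) -> int:
    """All shares together sum back to the secret."""
    return sum(shares) % MODULUS

two_shares = share(5, 2)      # the two-party split of five
three_shares = share(5, 3)    # the same idea extended to three parties
print(two_shares, reconstruct(two_shares))
print(three_shares, reconstruct(three_shares))
```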
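In the three-party picture, each share holds two-thirds of the secret and any two parties can recover it. That matches what is usually called replicated secret sharing, and this sketch assumes that reading of the slide. The secret is split into three additive pieces, and party i receives every piece except piece i. Shamir's polynomial-based scheme is the other common way to get t-out-of-n sharing. The helper names and modulus are hypothetical.

```python
import secrets

MODULUS = 2**32  # hypothetical modulus for the additive pieces

def replicated_share(secret: int) -> dict[int, dict[int, int]]:
    """2-out-of-3 replicated sharing: split the secret into three additive pieces
    and give each party the two pieces whose index differs from its own."""
    p1, p2 = secrets.randbelow(MODULUS), secrets.randbelow(MODULUS)
    pieces = {1: p1, 2: p2, 3: (secret - p1 - p2) % MODULUS}
    # Party i holds every piece except piece i, i.e. two-thirds of the pieces.
    return {i: {j: v for j, v in pieces.items() if j != i} for i in (1, 2, 3)}

def reconstruct(*party_shares: dict[int, int]) -> int:
    """Any two parties together hold all three pieces, so they can add them up."""
    pieces: dict[int, int] = {}
    for held in party_shares:
        pieces.update(held)
    if set(pieces) != {1, 2, 3}:
        raise ValueError("not enough parties to reconstruct")
    return sum(pieces.values()) % MODULUS

parties = replicated_share(5)
print(reconstruct(parties[1], parties[2]), reconstruct(parties[1], parties[3]))  # prints 5 5
```

A single party is missing one piece, and that piece is uniformly random from its point of view, so one party alone learns nothing. This is the threshold t = 2 out of n = 3.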
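The secure addition workflow can be sketched with two-party additive sharing. The talk's result of 8 implies X = 5. The modulus and variable names are our own hypothetical choices. The key point is that each party adds only the shares it holds, so the addition itself needs no communication.

```python
import secrets

MODULUS = 2**32  # hypothetical modulus for the shares

def share(value: int) -> tuple[int, int]:
    """Two-party additive sharing: (r, value - r) mod MODULUS with r uniformly random."""
    r = secrets.randbelow(MODULUS)
    return r, (value - r) % MODULUS

# Party A holds X = 5 (implied by the talk's result of 8); party B holds 3.
x_a, x_b = share(5)   # A keeps x_a and sends x_b to B
y_a, y_b = share(3)   # B keeps y_b and sends y_a to A

# Each party adds the shares it holds locally; no communication is needed.
z_a = (x_a + y_a) % MODULUS   # computed by A
z_b = (x_b + y_b) % MODULUS   # computed by B

# Opening step: if both parties exchange their result shares, both learn the sum.
print((z_a + z_b) % MODULUS)  # prints 8
```

Skipping the final exchange, or sending z_b to only one side, gives the "only one party learns" and "nobody learns yet" variants mentioned in the talk.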
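For the multiplication example (5 times 3 equals 15), one standard way to supply the extra communication the talk mentions is Beaver multiplication triples. The talk does not say which technique it uses, so Beaver triples are our assumption. The trusted dealer that hands them out here is also hypothetical; real systems often generate triples in an offline phase without any trusted party.

```python
import secrets

MODULUS = 2**32  # hypothetical ring for the shares

def share(value: int) -> tuple[int, int]:
    """Two-party additive sharing modulo MODULUS."""
    r = secrets.randbelow(MODULUS)
    return r, (value - r) % MODULUS

def open_value(s0: int, s1: int) -> int:
    """Both parties send their share to each other and add them up."""
    return (s0 + s1) % MODULUS

def beaver_triple() -> tuple[tuple[int, int], tuple[int, int], tuple[int, int]]:
    """Hypothetical trusted dealer: random a and b plus c = a*b, all secret-shared."""
    a, b = secrets.randbelow(MODULUS), secrets.randbelow(MODULUS)
    return share(a), share(b), share(a * b % MODULUS)

def secure_multiply(x: tuple[int, int], y: tuple[int, int]) -> tuple[int, int]:
    (a0, a1), (b0, b1), (c0, c1) = beaver_triple()
    # Communication round: open d = x - a and e = y - b. Both are masked by the
    # random a and b, so they reveal nothing about x or y.
    d = open_value((x[0] - a0) % MODULUS, (x[1] - a1) % MODULUS)
    e = open_value((y[0] - b0) % MODULUS, (y[1] - b1) % MODULUS)
    # Local step: x*y = c + d*b + e*a + d*e; only party 0 adds the public d*e term.
    z0 = (c0 + d * b0 + e * a0 + d * e) % MODULUS
    z1 = (c1 + d * b1 + e * a1) % MODULUS
    return z0, z1

x_shares = share(5)   # party A's input
y_shares = share(3)   # party B's input
z0, z1 = secure_multiply(x_shares, y_shares)
print(open_value(z0, z1))  # prints 15
```

The single opening round, in which each party sends its shares of d and e, corresponds to the "one or two extra messages" in the talk. Everything else is local arithmetic.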
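A simple instance of secure inference is a linear layer applied to a client's input that is secret-shared between two non-colluding servers. In this sketch the servers know the model weights in plaintext and only the input is hidden. Keeping the model secret as well, as in the talk's setting, requires interactive multiplication between shares, and nonlinear activations need further protocols. The weights, bias, and input are hypothetical integers; real systems encode real-valued weights in fixed point.

```python
import secrets

MODULUS = 2**32  # hypothetical modulus for the shares

def share_vector(vec: list[int]) -> tuple[list[int], list[int]]:
    """Split each entry additively between two servers."""
    s0 = [secrets.randbelow(MODULUS) for _ in vec]
    s1 = [(v - r) % MODULUS for v, r in zip(vec, s0)]
    return s0, s1

def linear_layer_on_share(weights: list[list[int]], bias: list[int],
                          x_share: list[int], add_bias: bool) -> list[int]:
    """Apply the public layer W x + b to one share. Matrix-vector products are
    linear, so no communication is needed; only one server adds the bias."""
    out = []
    for row, b in zip(weights, bias):
        acc = sum(w * xi for w, xi in zip(row, x_share))
        out.append((acc + (b if add_bias else 0)) % MODULUS)
    return out

# Hypothetical integer model and client input.
W = [[2, -1, 0], [1, 1, 3]]
b = [5, -2]
x = [4, 7, 1]                     # the client's private input

x0, x1 = share_vector(x)          # client sends x0 to server 0 and x1 to server 1
y0 = linear_layer_on_share(W, b, x0, add_bias=True)
y1 = linear_layer_on_share(W, b, x1, add_bias=False)

# The client recombines the output shares; negative results wrap modulo 2**32.
y = [(u + v) % MODULUS for u, v in zip(y0, y1)]
signed = [v - MODULUS if v >= MODULUS // 2 else v for v in y]
print(signed)  # prints [6, 12]
```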
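The MPC wallet flow, where any two of three servers produce a signature without reassembling the key, can be sketched with a toy threshold Schnorr signature over Shamir-shared key material. MPC wallets in practice often use threshold ECDSA or EdDSA protocols instead; Schnorr is swapped in here because its algebra is short. The group parameters are tiny, and the sketch omits the nonce-commitment round that real threshold Schnorr schemes need. A dealer also creates the key for brevity, whereas production systems use distributed key generation so that no single machine ever holds the key. All names and values are hypothetical.

```python
import hashlib
import secrets

# Toy Schnorr group (insecure): P = 2*Q + 1 with P and Q prime; G = 4 generates the order-Q subgroup.
P, Q, G = 2039, 1019, 4

def challenge(R: int, Y: int, message: str) -> int:
    data = f"{R}|{Y}|{message}".encode()
    return int.from_bytes(hashlib.sha256(data).digest(), "big") % Q

def shamir_share(secret: int, n: int = 3) -> dict[int, int]:
    """Degree-1 polynomial f(z) = secret + a1*z mod Q, so any 2 of the n points recover f(0)."""
    a1 = secrets.randbelow(Q)
    return {i: (secret + a1 * i) % Q for i in range(1, n + 1)}

def lagrange_at_zero(i: int, signers: list[int]) -> int:
    """Coefficient lambda_i such that sum(lambda_i * f(i)) = f(0) over the chosen signers."""
    num, den = 1, 1
    for j in signers:
        if j != i:
            num = num * j % Q
            den = den * (j - i) % Q
    return num * pow(den, -1, Q) % Q

def threshold_sign(key_shares: dict[int, int], signers: list[int],
                   Y: int, message: str) -> tuple[int, int]:
    # Round 1: each signer picks a secret nonce and publishes G^k_i.
    nonces = {i: secrets.randbelow(Q - 1) + 1 for i in signers}
    R = 1
    for i in signers:
        R = R * pow(G, nonces[i], P) % P
    c = challenge(R, Y, message)
    # Round 2: each signer publishes a partial signature; nobody reassembles the key.
    partials = [(nonces[i] + c * lagrange_at_zero(i, signers) * key_shares[i]) % Q
                for i in signers]
    return R, sum(partials) % Q

def verify(Y: int, message: str, signature: tuple[int, int]) -> bool:
    R, s = signature
    return pow(G, s, P) == R * pow(Y, challenge(R, Y, message), P) % P

# Hypothetical setup: a dealer creates and splits the key here, for brevity only.
secret_key = secrets.randbelow(Q - 1) + 1
public_key = pow(G, secret_key, P)
shares = shamir_share(secret_key)  # one share per server

sig = threshold_sign(shares, [1, 3], public_key, "transfer 1 coin")  # server 2 is down
print(verify(public_key, "transfer 1 coin", sig))  # prints True
```

Calling `threshold_sign` with signers `[2, 3]` works the same way, mirroring the talk's point that any two of the three servers suffice.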