Alexander Andersson - Demystifying Cloud Infrastructure Attacks (BSidesFrankfurt 2024)


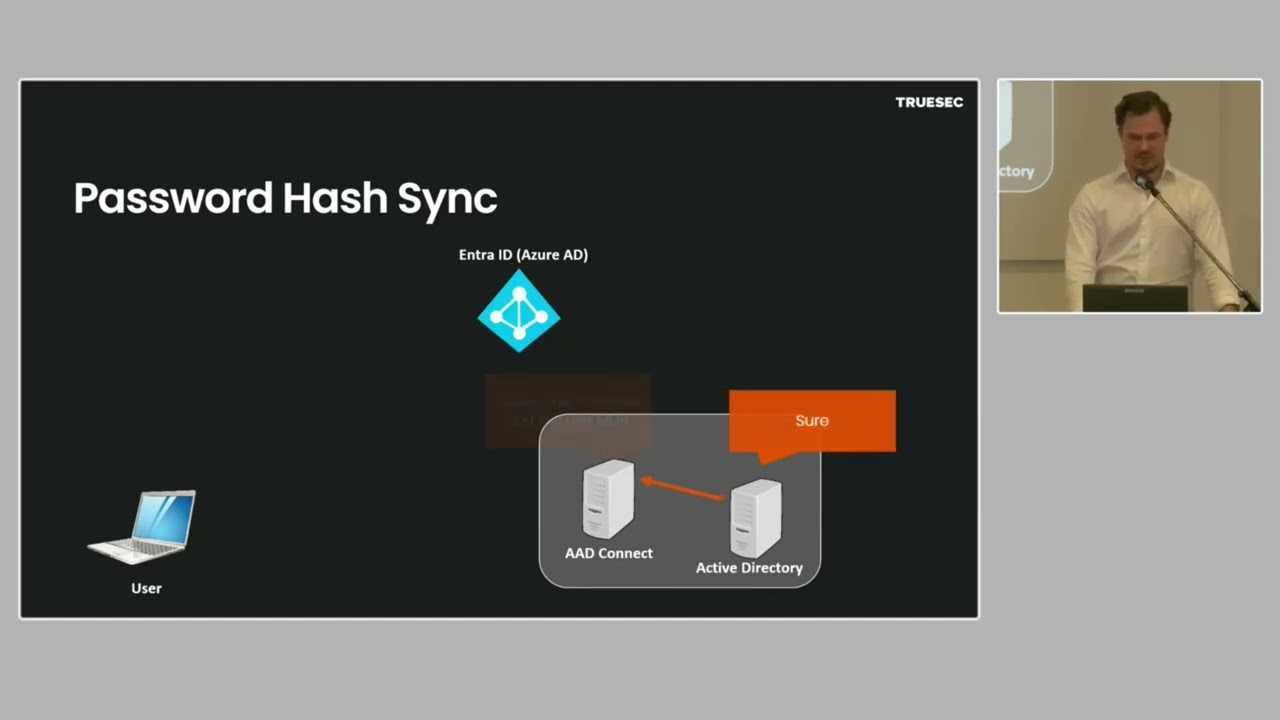

Okay guys, so our last talk for today is Alexander Andersson from Sweden. He's a principal forensic consultant at Truesec and he has a background in red teaming and software development. [Applause] All right. Uh, thanks everyone for coming. Um, I'm going to talk about, well, I'm going to try to demystify cloud infrastructure attacks. So let's just start with trying to figure out what we're going to talk about. And this is a classic on-premises attack. This is actually from an old red team exercise we did, I don't know, 10 years ago, something like that. And you know, what you have here is something typical: they compromise the DMZ and

somehow jump into some kind of internal segment where they compromise something that's joined to an Active Directory domain, find some dependencies, jump to the internal Active Directory, and eventually maybe they hit the mainframes or whatever high-value targets the threat actor wants to reach. So this is a typical kind of attack, right? Um, what I am going to try to answer is what a full-scale attack like this looks like when we are in the cloud. So to start with, we have some bad news: there is no cloud. But jokes aside, of course there is a cloud. I think it makes sense to still talk

about it in terms of a shared platform with APIs and a web UI and services that are standardized and so on, right? So that's sort of what we're going to try to talk about. And this investigation that we're going to follow now is based on real investigations, so all of these are real screenshots from real incidents. But of course I can't talk about one single incident from start to finish, so it's several different incidents combined into each other, so no one feels identified, so to speak. Um, so this all starts with a single-factor authentication. Uh, it's not good not to have MFA, of course. Um, but I mean that's, um, yeah,

that's sort of the start, what the customer reacted to. Uh, we see that the device that they logged on from, that of that user, is registered, and it's even in the EDR platform. So I mean, that's good. We don't really see any alerts aside from one alert which doesn't seem relevant, and so on. Uh, we see that the customer has changed the password for the user. So I guess case closed, right? They enforce MFA and then they reinstall the PC just to be thorough. I guess everything is good, right? Of course not. Uh, the thing that we forgot to add here: I work as a forensic consultant, so I do

forensic investigations. What I like the most is timestamps. So if we add timestamps to those events that we saw, it's another story, because we see that it starts with a password reset, then a device is enrolled, and after that you have a single-factor logon. So that's a completely different story, right? So obviously it's not the customer resetting that password. And we also see security info here, which we didn't really cover with a screenshot, but that is, I think, an underappreciated persistence technique in the cloud. You know, you have this self-service password reset functionality, and usually you have to have different factors to do that, and it can be like an

alternative email a phone number so on um for persistence the threat actor can update that and when they want to get back into the account. Maybe the investigator forgot to see that those are updated. So, they still have control over the account, right? Because they can just reset the password. So, let's look into that password reset because that's the first event that we know of right now, right? And we see that the password is changed by an account called sync something. And that points us to a hybrid setup. And there are three types of hybrid setups. And I'm going to go through them now because we kind of need to understand how they work to be able to figure out what

happened here. So we have the first one, which is federation. Uh, usually it's federation with ADFS, but there are many other solutions that you can use as well, and it works something like this. So the user goes to a federated app and says, "I want access," and it says, "No, you need to go to Azure Active Directory," which I guess is called Entra ID nowadays. They change names for things all the time. Uh, and I guess while it still says Azure AD in documentation, and some, like, EXE files and stuff are still named that, I'm also going to mix it up if they can't keep to one name, but

anyways, um, yes, they need to go to Entra ID, and Entra ID says, "No, you need to go to ADFS." So you go to ADFS, and ADFS checks with Active Directory, and if the credential is valid then the ADFS server will sign it with their secret, and then you have the package which is signed. So you can go to, uh, sorry, to Entra ID with that thing from ADFS, and they will then give you an application-specific token which you can finally present to the federated app, and finally you're in. So that's the flow, and what we saw there was this

chain of trust, right? So the federated app trusts Azure AD, Azure AD trusts ADFS, and ADFS trusts Active Directory. So what could possibly go wrong here if you think of a compromised company? Well, one way, which we saw in the SolarWinds attack for example, was that if the ADFS server is compromised, you don't need to be domain admin. You just need to be local admin on the ADFS server. Then you can dump the token-signing certificate and the related private keys and secrets, and with that you can just sign your own tokens, which means that, yeah, it's not a good situation, right? Because then you sort of just fly your way into whatever

you want to fly into, because, yeah, you sign your own tokens as a threat actor. But this is not what we saw in this specific attack, right? We saw a password reset. That's what we're looking for. This would not be a password reset; it would just be someone who's magically authenticated. So let's talk about the second method for hybrid, which is pass-through authentication. In that case the user goes directly to Entra ID, Entra ID asks who they are, and they provide their username and password, and that is then sent to a queue, which is then pulled by an AD Connect server which lies on-prem, and when they get the new thing they will

just validate that, and eventually, if it's, um, yeah, successful, it will send a boolean, basically, which is yes or no. So what could possibly go wrong in this setup if someone compromises that AD Connect server? Well, AADInternals has an implementation for this, and there are multiple ways to do it, but essentially you can patch the LogonUserW function and say something like, you know, "I don't care about the username, but if the password is banana, then say that the login is successful," and then you have a backdoor password and can log into whatever account you want. Then again, this was not a login, right? This was a password reset.

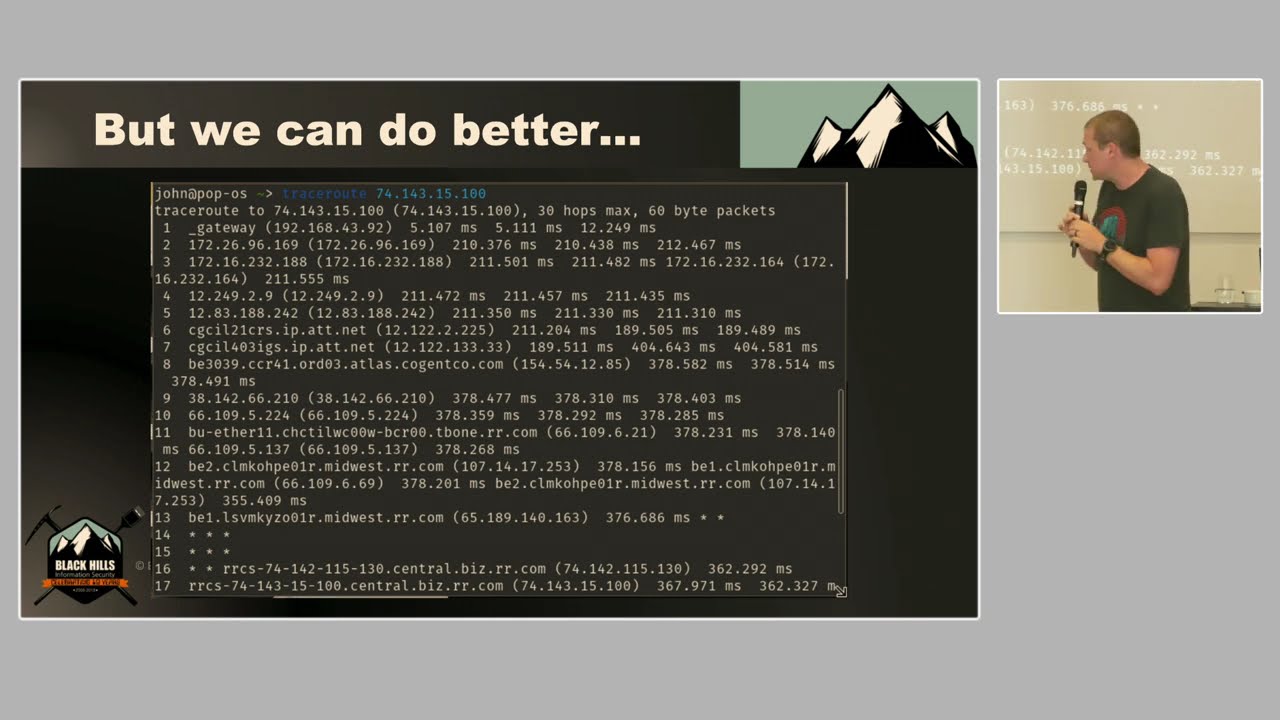

So let's move on to the third and final method of hybrid cloud, which is password hash sync. That starts with the AD Connect server, which takes all the hashes from Active Directory, basically a DCSync. Maybe you've seen that; if you're a red teamer, maybe you've done it. This is the legitimate way of using that. And so it says sure and gives all the passwords, and they are of course hashed with MD4, which apparently isn't good enough for the cloud. So it actually hashes it with SHA-256 and a salt. So if you're dumping hashes in Entra ID, you're not going to get the classic NTLM hashes. You're going to get something

else. Um, but still, if you crack that, then you can get that original hash, blah blah blah. So at this point Entra ID has all usernames and hashes, right? So it can authenticate stuff on its own. It doesn't need contact with the on-premises environment at this point. Um, so what could go wrong in this third variant? Well, to be able to do this you need to have a few accounts and a few secrets, right? So you need one account which is able to do that DCSync. You need some kind of service account, which is going to be relevant soon, that is the actual account running the service, and

you need an account in Azure which is able to write the hash for users, right? It needs to be able to create users. It needs to be able to do quite a lot of interesting stuff, because if it doesn't have those permissions then it's not able to keep it in sync all the time, right? So what happens if someone steals that sync secret? That's not a great situation, right? So it's obviously stored on the AD Connect server. It's encrypted, but it's encrypted with DPAPI, so if you're local admin, then you can just run as that service account and you can just use unprotect to get that in clear text. You

can of course also dump the actual secret and so on and then decrypt it offline. But this is actually what we saw in this attack. So we see that someone is using unprotect with that specific account to get to that sync account, and after that is done we see that that account is used from a location where it's not supposed to be used, because it's supposed to run on that specific AD Connect server, right? So, um, yeah, what is that account? We talked about it a bit, right? Um, it is a built-in account which you don't really create yourself. It has this privileged role which is called

Directory Synchronization Accounts, and quite recently they actually changed this. So the specific method that I'm talking about now, uh, I haven't tried it, but I'm fairly sure that this specific technique of jumping from on-prem to cloud doesn't work anymore since, like, a week ago. But I think it's still very interesting, in that we can use this as an example to understand how privilege escalation and some other stuff like that works in Azure AD. And this account has, as part of the role it has, some specific permissions, and one of them is to update the owners of applications. So in Azure

you have enterprise applications, right? Uh they are used to do a bunch of different stuff. This is one example of a uh application which is like a backup application. That application is able to do quite a lot like read all the sharepoints and read a bunch I mean it needs to do backups, right? So it needs to be able to access a lot of things. Um in particular one interesting permission that it has is that it's able to read and write directory data. So let's think about this. The account that they stole on that server is able to take ownership of applications. Those applications are able to do a bunch of very interesting stuff which the

original account didn't have. So basically they use that update-owner permission to escalate their privileges and then act as the application. So abusing applications like this is a very interesting way that we see threat actors escalate privileges in Azure, basically. So they take over the app, they generate an app secret, and then they use that to create their own user, which they can with the original permissions, and then they just make themselves global admin because they're able to read and write the directory. So the service principal credentials are interesting in themselves. I mean, what it does is that you generate these credentials and then you act as the enterprise application in Azure.
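As a defender-side illustration of the point about secrets generated for existing applications, here is a minimal sketch that flags recently added credentials. It assumes the application objects have already been exported as JSON from the Microsoft Graph `applications` endpoint, where each object carries `passwordCredentials` and `keyCredentials` entries with `keyId` and `startDateTime`. The sample data, the 30-day window, and the function names are hypothetical and only for illustration.

```python
import re
from datetime import datetime, timedelta, timezone


def parse_graph_timestamp(value):
    """Parse an ISO 8601 UTC timestamp such as '2024-05-01T12:00:00Z'."""
    # Drop fractional seconds so older Python versions can parse the value.
    value = re.sub(r"\.\d+", "", value)
    return datetime.fromisoformat(value.replace("Z", "+00:00"))


def recent_app_credentials(applications, now, max_age_days=30):
    """Return (app name, key id, start time) for credentials newer than the cutoff."""
    cutoff = now - timedelta(days=max_age_days)
    findings = []
    for app in applications:
        creds = app.get("passwordCredentials", []) + app.get("keyCredentials", [])
        for cred in creds:
            start = cred.get("startDateTime")
            if start and parse_graph_timestamp(start) >= cutoff:
                findings.append((app.get("displayName"), cred.get("keyId"), start))
    return findings


# Hypothetical export: an old backup application that recently got a new secret.
sample_apps = [
    {
        "displayName": "Backup App",
        "passwordCredentials": [
            {"keyId": "old-key", "startDateTime": "2022-01-10T08:00:00Z"},
            {"keyId": "new-key", "startDateTime": "2024-05-20T03:14:00.1234567Z"},
        ],
        "keyCredentials": [],
    },
    {"displayName": "HR Portal", "passwordCredentials": [], "keyCredentials": []},
]

now = datetime(2024, 6, 1, tzinfo=timezone.utc)
findings = recent_app_credentials(sample_apps, now)
print(findings)
```

A new credential on a long-lived application is not proof of compromise, but it is a cheap thing to review alongside the list of newly registered applications.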

That's interesting for privilege escalation, but it's also interesting for persistence, right? Because many companies that investigate breaches will look for newly registered applications, but if someone takes over an existing application, or generates credentials for an existing application, that won't really show up anywhere. You will have this entry in the audit log, but after 90 days that's gone, and there's no way in the UI to list all the application secrets. So I mean, I think there is some way, like you can do it with the APIs and so on, to get out, like, the hashes or whatever for those, but it's a really sneaky persistence technique, right? Uh, it's very common

that threat actors register new applications, but abusing existing applications I think is, um, clever. Uh, so that's logged, of course. Then we also get a log for when that user that they just created is now in the global admins group, and I guess that explains some of it. But still, the device that they logged in from was enrolled in the EDR, right? That's a bit weird. And it actually turns out that the threat actor thought it would be stealthy to log on from a compliant device, so they enrolled it in Intune. What they didn't know was that the customer would then automatically push out an EDR client to the threat actor's machine. So what we see

here is actually the threat actor's machine. Uh, and my colleague Hassan has a complete talk where he just talks about everything we can see on this threat actor's machine, because it's sent to the EDR, right? And of course they use that machine to attack multiple different customers. Um, but yeah, we'll get back to that. Uh, so let's start drawing that kind of graph that we saw in the beginning, right, of the classic on-premises attack, now that we're in the cloud. So the first thing we know is that they dumped secrets on the AD Connect server. They then used that to take over an enterprise application. Um, they then generated credentials to be able to act

as that enterprise application, made themselves global admin, enrolled their device, logged in, added some persistence, and so on. And then finally the seventh step here, which we haven't really gone through, but that would be, you know, syncing data and so on to exfiltrate high-value target data. Uh, by the way, enterprise apps are another good way to exfiltrate data, right? Because the app has those permissions, and you can ask the enterprise app to sync data and so on. Um, but there are multiple ways to do that. So I guess the question that we have now as forensic investigators is how did they get to that AD Connect server? Because they can't just show up

there automatically, right, or magically. So we see that there is a connection from an internal Jenkins server. Does anyone know about Jenkins? I guess, um, we do a bunch of different stuff at Truesec, but when I talk to the people doing DevSecOps and so on, they say that, yeah, there aren't that many that use Jenkins anymore. It's usually GitHub Actions or whatever is trendy that everyone wants to use right now. But from my end, working with companies that are affected by breaches, we actually see quite a lot of companies that have that really old, very important Jenkins server. They're completely built into it because of, like, the

developed plugins and whatever, and it's just this massive Jenkins server that is responsible for building everything, and it's really hard to maintain. So what's not to love about Jenkins? Um, it has this kind of awesome feature that if you're logged in as admin, it has built-in code execution. It says so right there in the manual. So it's a Groovy console, and you can interact and run OS commands. You can also run a simple script to dump all the secrets, and guess how many secrets you have in Jenkins. If you have one big Jenkins that is responsible for, you know, pulling code from repos and pushing it to

where it's supposed to run, then all those secrets that you need to run your entire production are going to be in Jenkins, right? And that's what we saw: we saw that they did that in this case. So by carving the file system we found this deleted file which contains a complete dump, and actually it has this weird output format. You see it has, like, class, space, colon, space, something. So we're fairly certain exactly what script they used, which was from an online tutorial for how to do it. Um, so yeah, that would be the first step, right? So we have the secrets dump on

a Jenkins server. Um, still, I mean, we get the same question, right? How did they get to the Jenkins server then? And when we look at the Jenkins server we see that there is a RAT installed on it, and we see that it goes through Gmail. Um, this is nothing new, but I think it's cool just because we're talking about cloud security. Threat actors can also use the cloud, and they can get a C2 as a service, as I usually call it. Uh, we talk about Gmail here, but the same principle is going to apply elsewhere. I mean, there are open-source C2 channels for Slack and Dropbox and pretty

much any service that has the features needed to do this. But basically what they're doing is that they are querying from the RAT. We have the RAT, uh, the server where the RAT is, and then "evil" is the threat actor's C2. So it asks, "Do you have any new draft for me?" and it says no, and it keeps polling like that, checking for a new draft. And when the threat actor wants to communicate with the RAT, they create a new draft. I mean, this is a bit simplified, but just so you understand the concept. So they create a new draft with, like, an exec and a command they want to run, and then the

RAT gets that command. It executes the command and then it adds an attachment to that draft, which is the output of the command. And then the other side of things obviously checks for attachments, and it gets the draft, and when it reads it, it deletes it, and so on. So then you have a channel where you tunnel everything through Gmail. And if you're just looking at network traffic, it's going to be kind of hard to see this, right? Especially if you're this company that we're talking about, who's actually using Google Cloud, which we will get into. There are a lot of services that are talking to Google Cloud all over the place, right?
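To make the draft-based message flow concrete, here is a toy in-memory model of the exchange described above. It is only a sketch for understanding the protocol shape: `DraftMailbox`, `implant_poll`, `operator_collect`, and `fake_handler` are hypothetical names, nothing talks to a real mail API, and no command is actually executed.

```python
class DraftMailbox:
    """In-memory stand-in for a shared mailbox; not a real mail API."""

    def __init__(self):
        self.drafts = {}
        self._next_id = 1

    def create_draft(self, body):
        """Operator side: leave a message as a new draft."""
        draft_id = self._next_id
        self._next_id += 1
        self.drafts[draft_id] = {"body": body, "attachment": None}
        return draft_id


def implant_poll(mailbox, handler):
    """Polling side: answer every draft that has no attachment yet."""
    handled = 0
    for draft in mailbox.drafts.values():
        if draft["attachment"] is None:
            draft["attachment"] = handler(draft["body"])
            handled += 1
    return handled


def operator_collect(mailbox, draft_id):
    """Operator side: read the attached answer, then delete the draft."""
    draft = mailbox.drafts.get(draft_id)
    if draft is None or draft["attachment"] is None:
        return None
    del mailbox.drafts[draft_id]
    return draft["attachment"]


def fake_handler(command):
    # Placeholder: a real implant would run the command; this only echoes it.
    return f"simulated output for: {command}"


mailbox = DraftMailbox()
idle_polls = implant_poll(mailbox, fake_handler)   # nothing to do yet
draft_id = mailbox.create_draft("exec example-command")
handled = implant_poll(mailbox, fake_handler)      # picks up the new draft
output = operator_collect(mailbox, draft_id)       # reads answer, deletes draft
print(idle_polls, handled, output, mailbox.drafts)
```

The point for an investigator is that both sides only ever talk to the mail provider, so on the wire this is ordinary HTTPS traffic to a service the company may already use heavily.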

I think that's when we see this being used by a sophisticated actor: they look at what services this company is using. If they're using Slack and they have Slack integrations for pretty much everything, then why not use Slack as a C2? It's going to be really, really hard to find that in the midst of all that traffic. So it's really hard to see it network-wise. Um, on the other side, we're obviously completely blind. We cannot see anything, because it's just the threat actor accessing Gmail on their end, right? So is this the perfect C2, like the one C2 to rule them all? Well, it's got one big problem, right? To be able to do this,

you need to persistently store the credentials to the Gmail account on the compromised server. So before we turn ourselves into cowboys and log in to that Gmail account, because of course we can at least do the things that that API secret is able to do, uh, we actually found that by collaborating with these vendors, like Slack and Google and so on, if you just talk to them and say that someone is abusing your infrastructure as a C2 and you give them evidence, they're actually really helpful in my experience. So they will provide a lot of data, as much as their lawyers allow them to, I guess, but you will get way more data that way. Plus, I don't

know about German law, but under Swedish law it wouldn't be legal for me to log into that account even if I know it's a threat actor using it. And keep in mind that they're probably not using their own account, right? They have probably hacked someone else's account, and all of a sudden, by using those secrets, you're hacking into someone else's account. Um, so yeah, that is the C2. So it still doesn't really answer how they got to the Jenkins server, because now we're talking about code execution on the actual underlying Jenkins server. Um, if we look at what runs there, we see that they use Docker to run jobs. And we see that some time ago someone

figured that those Docker containers need network access, because they need to be able to talk to a bunch of different stuff during the build. They need to pull dependencies and whatever. So someone put them on the default Docker network, which means that from the Docker container you're able to access localhost, where you have the Jenkins server running. So that's just the simplest Docker breakout ever, right? They're just able to access localhost and then get access, assuming they have the credentials of course, which we'll get to later. So the next question would be, how did they get to this Docker container? It's not like these are just internet-exposed, right? They can't just find a

vulnerability and just land there. Uh, these are something that's spun up, and they're fairly isolated. I mean, they can talk out, but there's no listener reachable from the outside. So we're going to go through a few different techniques that I've seen to compromise that kind of container. And for one of them, we saw this weird log with an error in the pip file. So basically, you know, in Python you use pip to sync dependencies; it's a dependency manager. So we see that it downloaded version 99999999, which is a bit weird, because this was an internal package for the customer. So it's like company-name-internal-something in version 99999. And

when we looked in the official PyPI repos, we saw that someone had registered the same name and put up version 9999. So apparently this is a won't-fix vulnerability in pip, because they say that the extra index URL option, which you can use to point to an internal PyPI repo, is insecure by design. It will check the official repo and it will check the internal repo, and it will just get the newest version. So if you want to be safe, you're going to need to use index URL, but then of course you need to sync the entire repo, right? Which is going to be, yeah, quite annoying to keep up to date. So no one uses that.
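The "just get the newest version" behaviour is the whole trick, and it can be sketched in a few lines. This is a simplified model, not pip's actual resolver: it assumes plain dotted numeric versions, and the package name `acme-internal-utils` and both index contents are hypothetical.

```python
def parse_version(text):
    """Turn '1.4.2' into (1, 4, 2); assumes plain dotted numeric versions."""
    return tuple(int(part) for part in text.split("."))


def resolve(package, indexes):
    """Pool candidates from every index and return the highest version found."""
    candidates = []
    for index_name, packages in indexes.items():
        for version in packages.get(package, []):
            candidates.append((parse_version(version), version, index_name))
    if not candidates:
        return None
    _, version, index_name = max(candidates)
    return version, index_name


# Hypothetical index contents: the internal package, and a public impostor.
internal_index = {"acme-internal-utils": ["1.4.1", "1.4.2"]}
public_index = {"acme-internal-utils": ["9999.0.0"], "requests": ["2.31.0"]}

# Both indexes consulted: the attacker's higher version wins.
both = resolve(
    "acme-internal-utils",
    {"internal": internal_index, "public": public_index},
)

# Only the internal index consulted: the legitimate package wins.
internal_only = resolve("acme-internal-utils", {"internal": internal_index})

print(both, internal_only)
```

Because the candidates are pooled before comparison, which index a package "belongs" to never enters the decision. That is why a single trusted index is the safe configuration in this model.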

Everyone uses extra index URL, I guess. Or actually, I only see companies that suffer from attacks, right? So what do I know? I have a weird view of everything. But that's not all there is to this extra index URL. There was actually also a bug in this a while back which is quite interesting. Um, this doesn't have any CVE because they just silently fixed it as a bug. But we actually saw a case of it. The problem is that if you have an extra index URL and it checks the official repos, it will start by doing a plain-text request, and then it will get, like, "No, no, you can't talk non-SSL with me, upgrade," and then it will

upgrade to HTTPS. problem is that we saw was that someone had compromised some infrastructure in the middle of everything which means that it checked the first package that it wanted to download and it says no you need to upgrade no you need to upgrade the next one no you need to upgrade and then all of a sudden it said yeah here's your package so basically what they're doing is doing a man in the middle of that first HTTP request and you basically have a remote code execution vulnerability in pip um this is a very old version this was fixed in to 17. But since there's no CVE for it and no one, you know, updates their Docker

containers, if you look at Docker images, they are full of vulnerabilities, especially for things that don't really have a CVE. So it's not that uncommon that you still use this old pip version, right? Um, so that's way number two. Uh, it's not what we saw in this case, though. What we saw in this case is this third variant, which we're going to go into. Um, and I mean, this sort of starts with the fact that source code repositories have somehow become our management plane for pretty much everything at some point. So you have these CI/CD scripts and so on where you specify what you want to build, how you want to

build it and so on. And this is either done outside, but it could also in some cases be done inside the repo, right? So if you're able to, uh, what's the word, if you're able to commit and push to the GitHub repo, you're able to change how it builds and so on, right? And then you can edit that to say, "Can you please print all the environment secrets in this job?" and it's just going to censor them. So you say, "Can you please print all those secrets, but encode them first?" and then all of a sudden you get them. So I mean, there's a lot of interesting things when it comes to

this. One of them is shared runners. Uh, so maybe you are actually doing your job and you're making sure that no one is able to just push to every repo, and you need approval and blah blah blah. Um, then you hire this weird person that wants to hack you, and they are able to create and push to a repo, and that repo that they have control over, even if they don't have control over your sensitive repo, is going to build on the same CI/CD server. Which means that they can push their malicious stuff and then just sit around and wait on the CI/CD shared runner, and eventually they're going to

get some secrets. So there's a great write-up about this, if you want to read it, from this Dennis guy. Um, what we saw in this case that we're going through is suspicious activity in the commit logs. So we see that someone has made a push to the requirements.txt file, and instead of the package that they had there originally, it's a link to GitHub, same package name. And we looked at that package, and it's exactly the same as the original package aside from one file, which is setup.py. Uh, setup.py is something that's going to run during pip install, and that's the only time it's going to run, which is exactly when the threat

actor wants this to run, right? Because they want to run this as part of that Jenkins Docker job. So what that does is basically create that same C2 tunnel, so they get a shell inside that Docker container. So then we have that sort of first step, right, into the Docker container. Um, before we go into how they got into GitHub, GitLab then, uh, we're going to ask another question which we sort of skipped over, which is: even if they had access network-wise from the Docker container to the Jenkins interface, they still need to have those credentials, right? Um, so how they did that is a longer story, uh, and it starts

in Google Cloud and we're going to talk about lateral movement in Google Cloud um and assume that the threat actor has compromised one machine in Google cloud and what could it do? So one thing which is kind of interesting is that if you just you know spin up a new VM in uh GCP and you just do next next next next then you're going to get like this default service account that's going to run uh because every machine needs to have this service account and that one actually has quite generous permissions including like reading your uh buckets. So in some cases actually you can like if you're live on the machine you can query this internal uh metadata right

you have sort of the same thing for all cloud providers. Um, it used to be really interesting when you found, like, an SSRF, but now they've added a requirement that you need to provide some kind of header, so it's not that often that you're able to do that in an SSRF context, right? But anyways, if someone has compromised the machine, they can query this and read out what permissions this service account has. So that's one thing which is very interesting that you can find on a compromised cloud machine. Another one is those CLI utilities that everyone is using. So assume that you have a fairly locked-down network. It's only able to

access APIs from this specific server. What's going to happen is that someone is going to remote into that server. Then they want to use, like, gcloud, the CLI tool, or, you know, you have the AWS CLI, you have az, and so on for the different vendors. But what happens is that they log in, they are then on that specific server, they do, like, a gcloud init or whatever command, and they need to enter and authenticate the token, and that's going to be saved in their user profile. So if a threat actor then comes along and compromises that machine, then they can of course just dump whatever is in their user profile, and all of a sudden they are inside that, um,

yeah, that same context that that CLI is in, and on Linux it would be, yeah, under your .config or whatever, right? Um, sometimes they're encrypted, sometimes they're plain text, and so on, but a good rule of thumb is: do you need to enter a password every single time you're using this? No? Then it's probably reversible encryption, right? So you can probably dump this in a really fancy, cool way, but the easiest way is probably to just copy everything that is in that config folder, paste it on your own machine, and then all of a sudden it just works. So it's fairly easy to dump this.
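From the defender's side, the same observation suggests a simple hygiene check: which user profiles on a shared server have cached cloud CLI credentials lying around? The sketch below assumes the common default locations on Linux (`~/.config/gcloud`, `~/.aws/credentials`, `~/.azure`); installations can be configured to use other paths, so treat the table as an assumption, and `find_cli_credentials` as a hypothetical helper name.

```python
from pathlib import Path

# Default locations on Linux (assumption; each CLI can be configured otherwise).
CLI_CREDENTIAL_PATHS = {
    "gcloud": ".config/gcloud",
    "aws": ".aws/credentials",
    "azure": ".azure",
}


def find_cli_credentials(home):
    """Return {tool: path} for every known credential location present under home."""
    home = Path(home)
    found = {}
    for tool, relative in CLI_CREDENTIAL_PATHS.items():
        candidate = home / relative
        if candidate.exists():
            found[tool] = str(candidate)
    return found


if __name__ == "__main__":
    # Only reports that a location exists; it never reads the credential files.
    for tool, path in find_cli_credentials(Path.home()).items():
        print(f"{tool}: cached credentials location present at {path}")
```

Running something like this across jump hosts gives a quick list of where a single compromise would hand over someone's cloud session.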

I mean, that's two techniques that I think are interesting, and coming from these on-premises Windows Active Directory environments, I think this is very, very interesting, because what we see is that, you know, they compromise one machine, and from there they're able to extract secrets. Maybe eventually they find something that is able to list instances. They can add SSH keys, allow their own IP to log in, uh, yeah, this is what that would look like, and then they're able to log into the next machine, which is what we're talking about here, right? Lateral movement. And when they're on that machine they're going to start doing the same thing, and eventually they

find another credential, and then they jump to the next one. And this is what we're seeing. Coming back to on-premises and Windows Active Directory and so on, this is very different from that, right? In Google Cloud we're using Linux and so on, but we're seeing the same fundamental problems. Because in Windows you have this where they compromise one machine, they dump LSASS, they dump, yeah, whatever they want to dump, like the SAM hives and so on, right? They use those credentials to pass the hash to jump to the next machine, they repeat the process, and they move laterally in the environment. But here we see that it's not a problem, it's

not a vulnerability that we can patch, because the problem here is how we as IT people use our systems. So I think it's quite interesting that, you know, you're not going to solve all your problems by moving to the cloud if you're using your environment improperly. You're going to have the same problems, probably just in a slightly different form. Um, so in this specific case that we're talking about, they did this: they got to one machine, and eventually they started dumping credentials, and they jumped to the next machine and the next machine. Eventually they found access to some buckets where the customer wrote backups from the on-premises environment to

these buckets, and they were able to download those backups, and that's how they found those secrets to be able to log into the Jenkins console. So with that said, I guess we have one problem left, which is how did they get access to GitLab then? And the customer said that's not possible because we have MFA. And yeah, I mean, there are many ways to bypass MFA that we see today. MFA is great, and it's something that everyone should use for obvious reasons, but, I mean, threat actors have adapted, right? So adversary-in-the-middle is really common. So if you phish the username and password, why not

just relay that to the backend service, get the prompt, relay the prompt back to the user, and make them enter the, um, the MFA prompt as well? So they can just, you know, do the entire flow. They just need to add one extra step in their phishing sites, and there are of course tools like Evilginx that do it. Um, I think also, speaking of MFA, in a cloud context this becomes important, right? Because a lot of cloud security is about the identities that we're trying to protect. And, uh, I used to work as a red teamer, and when I phished an account, it was usually for that person at the company who isn't that great with security, who's

not really aware about security. So if I then phish the username and password, then I call the person and say, "Hi, I'm from internal whatever, and there's a problem with your salary, but according to GDPR you need to show me the code in that authenticator app just so I know that you are you." And of course they're going to just read it out to me, because they don't care about security; otherwise they wouldn't have been phished in the first place, right? So I just call them up and ask for the MFA code. I mean, it doesn't really matter what method they use, because if I need to trigger a

prompt or something, where it's like you need to accept this login, then I can do that login from my end and say, "Yeah, you need to click OK to solve this problem with your salary," or whatever story you come up with. So I think that it's not a perfect solution, because we're trying to protect people that don't want to be protected, in a sense, and if they can't protect their password, why would they be able to protect their MFA token? So there are better solutions for this, and we're going to get into that. One way we see that MFA is being

bypassed, which is very common, is infostealers. There are a few of them that are quite big, and they have these advertisements and so on. They're basically operated as malware-as-a-service, which is a common term, which basically means that they run this thing and you kind of subscribe to credentials. Russian Market is a good example of that. So what they do is that they infect machines at scale and they steal cookies, they steal saved passwords, they steal autofill data, which would usually contain credit cards and that kind of information. A lot of them are also targeting Bitcoin wallets and that kind of thing

as well. And then they have this website, which is just updated all the time, where threat actors that do ransomware or something else can just go and get credentials. So when it comes to browser secrets, it's interesting, because if you have the cookie, you're inside the session, right? So you don't have to authenticate. It doesn't matter if they have MFA or whatever they have, right? Because you're already inside an authenticated session. And stealing secrets from browsers is fairly easy. We talked about it before, actually: DPAPI is what protects them on a Windows system in, for example, Chrome. So either you can do some cool stuff

and try to unprotect them, like you can even dump LSASS and get the secrets needed to do it offline, or if you're domain admin you can use a backup secret, but if you're domain admin you probably don't do this, so yeah, who cares. But the easiest way is that Chrome needs those cookies, right? Chrome must have the cookies, because otherwise you sort of lose the entire point of cookies, right? So what you can do is start Chrome in debug mode. Then you just send a command which is like "give me all cookies," and then it's going to give you all the cookies. So it's pretty much a one-liner to just dump all those

secrets. And in a sense it doesn't really matter how you protect these secrets, because Chrome needs to be able to decrypt them, right? So if you're local admin and you have control over the system, you're going to be able to get this. Another interesting technique is, when you're on your corporate computer, you're probably logged in to a bunch of Office apps, and you might think, "How does it know that I'm Alexander when I open Outlook or PowerPoint or whatever?" There's actually a kind of easy way to check that, and it was someone called mr.d0x that showed this. I think he was kind of the first

to write about it. But basically, you just dump the process and then you grep for "eyJ", which is the Base64-encoded start of JWT tokens, and of course they need to be in memory, because otherwise you can't authenticate. That's how you authenticate, right? So basically just do a process dump of the Outlook application, read out those JWTs, and you're in. So that's one of many techniques that we see being used to bypass MFA. However, I think we are getting slightly better at this. If we go back to Azure, they have some detections for this kind of thing. You might see an alert that says

"anomalous token." For example, my IT department might have gotten one, because I was in Stockholm and then a few hours later my token was all of a sudden in Germany. So that's a weird movement. Then again, there was probably a gap in between there. So it's sort of the same as impossible travel in many senses, right? If a token moves very quickly between two locations, faster than you can really travel, then it's going to trigger this kind of alert. It's kind of good. I've seen a few false positives of this where people are just traveling with their computer. But I think that's a good way where we start to

become better at detecting cookie theft and so on. Another good way is if we're talking about enrolled devices. So in Windows you have different types of device management, right? You can be, like, the classic, joined to an Active Directory domain, but you can also be enterprise joined or you can be Azure AD joined, and for that you need to have some kind of super secret inside your machine, right, which is the refresh token. So in Azure, you have this stored locally on your machine, and if someone steals that, then it's kind of bad, right? Because they can refresh that token, because all those other tokens are usually fairly

short-lived. So they can get into your account and probably sync your Outlook and download all the mails within that time. But yeah, it's going to be a short-lived token. This is more of a long-lived token. It's not infinite, but you see the expiry time of that specific one there. But the thing that is good here is that Windows 11 requires TPM, and this is one of those secrets that's stored in the TPM. And to my knowledge, and I guess to public knowledge, there is no way to dump secrets from the TPM. So it's actually a very good thing, where we protect our secrets on a hardware level. There are also some other interesting

projects going on, I think, where Google, or Chrome, is also looking into storing cookies in the TPM. I'm not sure if that's going to work out well, because as I said, Chrome needs to have the secrets, right? But at least maybe you can have secrets that are very short-lived, and then you need to be on the actual machine to be able to keep a token that works for a reasonable amount of time. But anyways, so that's the entire flow of the attack pretty much, right? So we see that the user called Devon in this example got an infostealer on the machine.

The secrets that they dumped on his machine, including browser cookies for GitLab, were uploaded to an initial access broker website. The threat actor acquired or bought the credentials from there and was able to log into GitLab. On GitLab, they were able to find a way to push or run code inside Docker containers, which run on an internal Jenkins machine as part of build jobs. They broke out of that Docker container over the network and got root access through the Jenkins console. Then they jumped to the internal Azure Active Directory sync server, and there they found the secrets needed to sync and create accounts and so on, which they abused to create that, or sorry,

take over that enterprise application, add credentials, and then enroll their own device and log in, and eventually they reached their target, which would be, yeah, some interesting persons at the company. The other thing which is kind of interesting is: how do we know? I mean, it's always hard to know how it works with these infostealers and the threat actor. Are they the same person? Are they different persons? How do we know that they didn't steal the credentials some other way? Well, in this case they were very friendly and enrolled their device, right? So while we can't go back in time before they enrolled it and see that they bought

these specific credentials, we can see them browsing Russian Market, which is, you know, an initial access web shop. So basically they did that, you know, Russian Market, and then you see these .edu addresses which they visited shortly after. So basically they're buying credentials and then they're jumping into different victims. So yeah, I mean, it's not perfect evidence for it, but I think it's fairly strong evidence that they got access through an initial access broker. So I guess that's the attack. One final thing: this RedLine Stealer, which it was in this specific case, we found that the malware was downloaded from this

link. That doesn't look good, right? Their competitors were really quick to point out that Microsoft's GitHub repo was compromised. They were very happy about it. But then someone at BleepingComputer did some digging and figured out that no, it's not compromised. This is an interesting feature in GitHub, which is that you can start a comment on an issue, attach a file, and then just discard that comment, and the file is still going to be there, and that file is going to be uploaded under the repo where you're, yeah, creating the issue. So I mean, this isn't great, right? I would download that Microsoft file and

think that it's legitimate. GitHub did not think this is a vulnerability. They thought this was a feature. And with that said, I want to thank GitHub for hosting my documentation in their official documentation thingy. And yeah, with that, thank you. [Applause]
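The "anomalous token" and impossible-travel idea from the talk (a token showing up in two places faster than anyone could travel between them) can be sketched roughly as below. This is only an illustration of the general idea, not how Azure actually implements its detection: the `SignIn` type, the 900 km/h threshold, and the Stockholm and Frankfurt coordinates are all assumptions made for the example.

```python
import math
from dataclasses import dataclass
from datetime import datetime

EARTH_RADIUS_KM = 6371.0
# Assumption: anything faster than a typical airliner is treated as implausible.
MAX_PLAUSIBLE_KMH = 900.0


@dataclass
class SignIn:
    """Hypothetical record of where and when a token was used."""
    when: datetime
    lat: float
    lon: float


def haversine_km(a: SignIn, b: SignIn) -> float:
    """Great-circle distance between two sign-in locations, in kilometres."""
    lat1, lon1, lat2, lon2 = map(math.radians, (a.lat, a.lon, b.lat, b.lon))
    h = (math.sin((lat2 - lat1) / 2) ** 2
         + math.cos(lat1) * math.cos(lat2) * math.sin((lon2 - lon1) / 2) ** 2)
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(h))


def is_impossible_travel(a: SignIn, b: SignIn,
                         max_kmh: float = MAX_PLAUSIBLE_KMH) -> bool:
    """True if moving between the two sign-ins would exceed max_kmh."""
    hours = abs((b.when - a.when).total_seconds()) / 3600
    distance = haversine_km(a, b)
    if hours == 0:
        # Same instant in two different places is always implausible.
        return distance > 0
    return distance / hours > max_kmh


# Assumed example locations: Stockholm, then Frankfurt.
stockholm = SignIn(datetime(2024, 7, 13, 9, 0), 59.3293, 18.0686)
frankfurt_soon = SignIn(datetime(2024, 7, 13, 9, 30), 50.1109, 8.6821)
frankfurt_later = SignIn(datetime(2024, 7, 13, 12, 0), 50.1109, 8.6821)

print(is_impossible_travel(stockholm, frankfurt_soon))   # 30 minutes apart
print(is_impossible_travel(stockholm, frankfurt_later))  # 3 hours apart
```

A gap of a few hours, like the speaker's own trip, stays under the threshold, while the same jump within half an hour does not. A fixed speed threshold is also why people travelling with their laptops or using VPNs can produce false positives.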

Thanks a lot for showing the incident. I wanted to ask, was there any monitoring in place? Because from the attack complexity, or from the two ministers, it seems like a big company. So were there any detections in place? Uh, first the question. Yeah, absolutely. But keep in mind this is a combination of attacks. I think there are like three different attacks of kind of this scale that are combined into this, so it's kind of hard to answer specifically, because it would depend on which one I'm answering about. But one that comes to mind is an attack where, uh,

that's, yeah, very similar to this all in all, in complexity and so on. The threat actor, because they had compromised network infrastructure, could sit there for quite some time and figure out which systems talk to the EDR, and then they picked the specific systems that did not use EDR and jumped on those. So I mean, that would be one way. Then it depends on what kind of detection capabilities you have, right? EDRs would see a lot of what happens on the specific systems. If we take that Gmail RAT, it was based on, I can't remember, but an open-source, uh,

kind of Sliver, you know, that kind of open-source framework. But the EDR platforms aren't that great on Linux systems in general, and it was just undetected, basically. And network-wise, I don't know what kind of rule you would write to detect something talking to, you know, Slack. If everyone is using Slack, it's really hard. Maybe you can analyze that it's a weird pattern, but then again, if you have build jobs that are frequently talking to integrations, then yeah, it's going to look weird. I mean, it's not only chat that you have there, right? So yeah, in short, yes, they definitely had a SOC, had a bunch of the

interesting capabilities in that sense, but I only see where the security operations fail, right? Yeah. Continuing on this: were any of those incidents triggered by alerts from the SOC, or were all of them triggered by the ransomware or whatever? Yeah, none of these were ransomware attacks; it was more of a sophisticated actor. It's different. In some cases, it can be that the company is doing some hardening, and then they start hitting that hardening and they're like, "Oh, that's weird. This EPC shouldn't block a bunch of whatever." Right? In other cases, it's the national intelligence services that reach out to the customer

and say that you need to check on this specific host name, and they're like, "How did they know the host name?" So that's kind of common as well. I guess you have similar stuff in Germany, where they can reach out if they have been tipped and so on. So yeah, and more.

Thanks also from my side for this very interesting talk. I actually have three questions. First of all, I wondered how the infostealer malware got on the system in the first place. Then I wondered how long the whole analysis actually took you, from a time perspective. And third, did the threat actor then also disconnect his device, or do you still have access or get telemetry data even today? Mhm. So the first question there would be that it starts with the code execution in these Docker containers that have network access, and then there's some kind of tunnel from that on-premises server up to Google Cloud. So that's basically

how they got there. So they had sufficient credentials for that, basically, in the environment secrets and so on. So they were able to, yeah, connect up to that machine. But I mean, it can be a lot of different variants. The other question was how long? Again, keep in mind it's different cases, but one of them is like two years or something from the start to the end, and we were the third company that investigated it. So the first team that investigated it, they are a really, really good team, but I think that the customer gave them too narrow a scope,

where it was like, "You need to look at how this specific thing happened," and they just did that, kind of, which means that they missed the RATs and so on. So we see that after the investigation they reset a bunch of credentials, and then two days later the threat actor dumped the entire Jenkins machine again, and they had all the credentials just as if nothing happened. So for that reason, and because the threat actor didn't really stress, they were more interested in keeping access, so installing a bunch of sneaky persistence and all of that, and focusing on keeping the access more than "we need this access now," kind of.

So yeah, I mean, the investigation is usually quite fast. The attack is usually very long, I guess, is the short answer. What was the third question? I forgot.

I don't know, actually. I think they disconnected it. I mean, it's one of our customers, right? So I sort of just got a, I don't know, export CSV from... By the way, guys, you probably want to see this. Yeah. So, I don't know, man. I'm pretty sure that they disconnected it by now. Yeah.

So, yeah, I mean, I sort of touched upon it, but it's three different investigations, right? It can be anything, but I would say around a month, maybe, from the first start of the investigation until the report is done, kind of. But then it varies quite a lot between the different ones. From the customer's perspective, we were, as I said, the third company in that case. So from their perspective, they had to sort of battle this for the last two years, kind of, right, since the first thing started. But it varies a lot. In some cases, like in ransomware cases, it can

be like, I don't know, a week or something, where it's pretty fast because they aren't that sophisticated. I mean, they're literally installing AnyDesk for C2, because, you know, it's actually pretty clever, right? Because no AV detects a legitimate remote access tool. But it's kind of easy when you do a forensic investigation, because there's literally a log of all the connections. So yeah, it varies a lot in the length and the complexity of the investigation. One of the things that makes it vary is also if we want to do a stealthy investigation, because maybe we don't want to alert the threat actor that

we're on to them. And then you use more, yeah, stealthy methods, whereas if it's ransomware then everything is just encrypted, right? And the threat actor knows that they know. So we don't need to hide. We just need to hurry up to make sure that they get back up again and know what backdoors to remove and so on. So yeah.

Uh yeah yeah yeah absolutely. Uh but do you mean to share or

Ah, you mean like that? Yeah, absolutely. So that is a major challenge, of course. I mean, imagine doing this investigation when patient zero was two years ago. In many cases, some server that they compromised has just been reinstalled for whatever reason and doesn't exist anymore. And log retention is really short, right? But there are a lot of things that stay over a longer time as well, right? So deleted files might still be there. Some kinds of logs are usually also in the backups. So if you restore backups from that date, maybe you're lucky and they still

have the artifacts that were there two years ago. And yeah, it obviously delays the investigation quite a lot, because you need to work really hard just to get a simple log. But usually we try, and succeed, to figure out where to get the evidence that we need. But yeah, I would prefer if everyone started detecting the attack the day after. That would make my life easier. Do we have any more questions? All right. Well, thank you. Thank you so much, Alexander. [Applause] So, we are now at the last part of the talks. We'd like to thank everybody: our sponsors, our vendors, our speakers, of course, the

Goethe University, their lighting guys, sound and everybody, and all of you, our beloved attendees, for taking time from your hectic schedule to come here and enjoy this conference with us. Thank you all so much. And also our team, the BSides Frankfurt team, my fellow colleagues. Thank you so much. And of course, this wouldn't be possible without our two very grooviest founders and organizers, Alex and Histine. Thank you. Thank you guys. [Applause] Yeah. So, Alex, no, you're first. You're first. Yeah, I wanted to thank you all for being here. Again, thank you to our sponsors, our volunteers, all the speakers, and also we want to thank Hacker School for providing the

devices for the kids track. My kids were here, and they really, really enjoyed it. If you got your kids here, I hope they also enjoyed it. And yeah, I think we should have another round of applause for Alex, because he did most of the work. So, thanks again. [Applause] Yeah, unneeded. Thank you. I want to thank all of you. You came here, as I said, on a Saturday, most of you privately. It's 30°. You stayed here. I don't know, it feels warm for me, maybe because of stress, but thank you for staying. Thanks again to the sponsors, the speakers who came from Sweden, the United States, all the way, and did it

volunteered, and, I don't know, the kids track was fine too. Thanks to all the helpers, you as well, Patrick, so all of them. Oh, Alex. I would encourage you, it ends, but it's not over yet, to network. Grab someone. I will go and have a drink and dinner. Grab someone if you need to connect to someone. Meet down at the lobby. Rita says follow Rita, or someone with the t-shirts maybe. I need to join later, but if you need help, ask. And yeah, I encourage you: go network, talk, and exchange. And thank you so much for joining. Thank you guys. [Applause]