Aaron Jewitt - Zero Trust - Attack and Defend (BSidesFrankfurt 2024)

Show transcript [en]

Give him a big hand. Thank you and thanks for coming out on a Saturday. Um, once again, I'm Aaron Jwitt. I'm currently with Elastic. I'm a detection engineer and apparently I'm the only two-time speaker at Besides Frankfurt. So, uh yeah, LA last year I got to keynote, but this year we got a real keynote, so you know, I didn't have to do it again. And so I'm here to talk about zero trust and talking about from a real world point of view, how do people attack it? If you're a red teamer, you can take notes on that. And if you're a blue team, you're defending it, you get to take notes because I'm going to talk about how do

you prevent the attacks as well as how do you detect the attacks? Because you can never have 100% prevention. Um, here's a quick agenda. And starting out with definition, what is zero trust? And really what is zero trust depends on which marketing team you ask. Um, the key here is the key word in this definition. It is an evolving set of paradigms. Um really because it is all about marketing and who you ask it's like AI. Um so but what is it really from a real practical point of view? Zero trust is when your workstations don't trust anybody around them and your accesses to your work resources are based on your identity. Um, if you can

take a user and hand them a Chromebook and sit them in Bonhoffer tool on Wi-Fi and they have the same level of protections as they do in the headquarters office, then you might be zero trust. And so that's kind of my ballpark explanation that I tell. And like what's so cool? Like why do people like zero trust? Well, it gets rid of this old stuff. The uh the the idea that somebody breaks through your firewall and they get inside the LAN and they run Net View and they pivot pivot pivot and now they're on the server, they've got your goods and they deploy ransomware and they break your whole network down because everybody on the inside trusts

everybody around them based on location instead of based on identity. Uh with zero trust, there is kind of no LAN. There's no real local area network. So, at our offices, if you go to an elastic office, the it's the exact same access as you get at a Starbucks as far as corporate networks go. Um, except maybe a printer or two, that's about it. And so, very few organizations are 100% zero trust because you have to have servers, you you have to have custom applications, and unless you're 100% cloud-based, then you're not 100% zero trust. And so, how do you do this from an implementation point of view? Now, it's easy. You got users. The users talk

to an identity provider and the identity provider grants you access to your applications. Uh your workstations treat all networks as untrusted as I talked about. And then that's it. Your identity provider gives you an identity token. When you log in, you use that token. You present it to your applications to log in and you get sessions. It's that easy, right? No, it's not. Here's more what it re really looks like because it's not just users. You're your SSO and applications. You've got, you know, you've got somebody else's identity provider. Um, your company has a trust or a contract with some other identity provider and they have to synchronize and trust each other. Um, your cloud service provider has their

own identity provider that may not be what you're using. Um, you've got external contractors, you got service accounts that don't use MFA, you've got legacy applications that don't work with your SSO, and then there's always the some random application that somebody stood up and started using that you have no idea even exists and is and those are always fun. The other part of uh zero trust is how do you manage your workstations? If your trust if your workstations don't trust anybody around them, how do you manage them? You can't remote access them directly. You can't just run PowerShell on the uh remote workstation. So, how do you do it? Well, you got to have an agent. And anybody who's dealt

with this knows that there's not there's never just one agent. You've got an agent for your Intune. you got an, you know, if you're using Microsoft agent for your EDR, agent for your vulnerability management, for your asset management, your IT help desk has a remote access web agent so that they can uh, you know, whether it's team viewer or something like that, so they can gain remote access to help somebody. How do you manage all of these agents? There's always agents that you don't know about that some application software is running trusted code on your system that you don't even know is doing it. And so this is always the problem with zero trust. And it's it's kind of

like a bad cult. Zero trust. You tell your workstations, "Don't trust anything except me." But then whatever the uh whatever the EDR manager says to do, it does. As we all learned with the uh recent Crowd Strike incident, and it they drank the Kool-Aid, and it was bad. And there went your zero trust because you had to trust somebody. And then what's going on in the back end with your applications? You know, you connect to these applications with SSO. You see the front end. It's really nice and clean. You have access to your data, right? Now, what's going on behind the hood? You've got who has access to your data from the back end. Your cloud

security pro or your cloud service providers. Um the code is hosted in GitHub, GitLab, some other code repository. There's a CI/CD pipeline that's continuous integration, continuous deployment. So everybody likes to use Kubernetes, deploy as code, all this other stuff. All this stuff is going on in the background and thousands and thousands of open source libraries, log for shell that you don't know about, that you're not managing. All of this stuff is going on behind the hood. And in in a zero trust environment, how do you determine access your your identity provider whether it's Octa, Azure AD, Duo, one of these generally they have users, groups and applications and you you have a user and then based

on what groups they're a member of they can access applications. Well, a user can be a member of more than one group. A lot of times a group can be a member of a group and so and then applications are determining these accesses and it's never as clear-cut as you'd like it to be. And so sometimes people will come to you and ask a question to say, "Hey, this account was compromised. I need a list of every single application this user could access." That's not always as easy of a question to answer as it really should be. Or, you know, list me everybody who is an admin of this application. Same thing. this group is

an admin, but this group contains this group which contains this other group. And you just go down these rabbit holes to answer these ve what should be very simple questions. And so this is kind of lays the groundwork for, you know, zero zero trust attacks. So if you're a red teamer, how do you attack zero trust? Well, you steal an identity. It's identity theft is the core of zero trust attacks. And most of the time, an identity is just a cookie. It's a session token stored in your browser or stored in your Slack application or GitHub desktop application or whatever you're using. It's it's going to be a session cookie or a JIT token or JWT or

some form of string of text really. And these strings of text are generally not stored in a secure manner. And so you're going to try to attack it by fishing attacks. Uh you hunt for API tokens, access tokens, accounts, and you RTFM. You read the freaking manual when you get access in and you find out how everything works, how everything's talking to each other. And that's that's the overall view of how you attack zero trust. Going through this through the different phases of the kill chain. How do you attack initial access? Stealing cookies. Number one, if you're not using PH 502 mandatory everywhere for your MFA, you're vulnerable. Uh, trust me, we just migrated about a year ago because

we had a very exposing red team event where they showed us how push notifications with the numbers and all this other cool stuff wasn't enough. Um, 502 means that they do certificate pinning so that they can't proxy the connection. Um, that little icon with the uh the dude with the red eyes, that's Evil EngineX. Uh, you can go out there. They got loads of training courses. You can uh hire it as a service and that will proxy your login connections. It will look exactly like you're pro logging into your corporate website with the green lock at the top and the SSL connection and everything except they steal your username, your password, and your session token. Um,

and then you get logged in and they get logged in. Unless you're using PHTO2. So, if you're using 502, it does certificate pinning. It says, "Nope, I'm not logging in through this because that's not the right website." Um, most IDPs also support device certification. So, if you want to take it even further, you can say only 502 from an authorized device. And so, if you're using octa, they have octa verify that you can have on your workstations. Um, Windows Hello for Business is a very common one if you're using Microsoft. And so, then they can they can say this is a certified device. the device is registered on the network and the user is using 502. At that point, you can you

got a pretty good trust assessment of that identity. Um, if you don't have that, you shouldn't really trust them. Um, the other thing is is once again, these are just strings of text, these identities. So, info stealer malware will often just scrape all these and start uh selling them on the dark web. And so, what happens if one of your employees says, "You know what? I want to log into Slack from my personal computer so I can keep up to date with chat when I go home, you know, that way I can, you know, talk to people about stuff and then their kid comes along playing Roblox or something and installs some like Bitcoin mining info stealing

cheat code that they found on the internet. We've had this happen. And then they steal that Slack token and now that Slack token is available on the internet. Do you know how long a Slack token session lifetime is? It's forever. How often do you have to relog into Slack on your application on your desktop? Almost never because that'd be annoying. Same thing with mobile like your mobile applications like your uh like how often you all log into relog in on your phone if you have that uh your email all that stuff. Those have really long lifetimes. And so those sessions they may not get access to everything but the attackers can get access to that

application and from there start pulling the threads and gaining more and more access. Once they get those access, then they do recon and they start hunting for secrets inside of your environment. Um, you if you're not hunting for secrets actively in your environment, they are out there. And I, trust me, they're everywhere. They're in docs, crash dumps, screenshots, all over the place. Um, I really highly recommend if you get out, try Truffle Hog. Um, Intro is a corporate version of that. These are these are uh applications that just scan your environment for secrets. And I'll get into that a little later when we're talking about the like the real attack scenarios. Um the attackers will spend

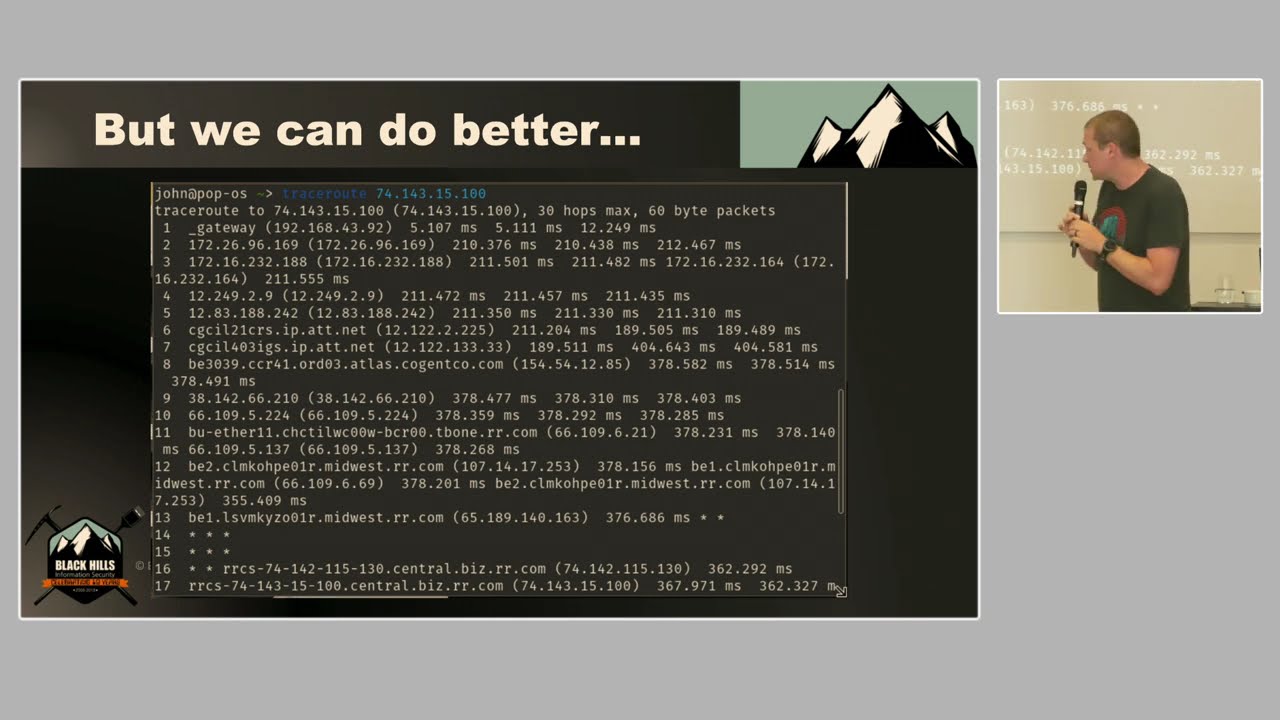

more time reading your internal documents than your employees do. Trust me, they will learn everything about how the environment works, how everything's synchronized, how you onboard a new employee, how you offboard an old employee. um if you purchase a new SAS uh application, how do you join that to your IDP? They're going to read all of those documents and they're going to look for all these these gaps and these ways to abuse it. And so, yeah, the days of getting access to a network, firing up net view, firing up end mapap, trying to, you know, scrape the network, those days are pretty much gone in a zero trust environment. And I this I put this up there. This was

earlier this week. This tweet came up about secrets that were and it it was shared in a Jira comment which got synchronized to Slack. Um, anybody out there using Jira and Slack, they've got lovely Slack bots so that anytime somebody comments on an issue, that will get synchronized over to Slack to let you know. So, you can see it right there. Well, maybe your Jira instance is really locked down and you have, you know, only internal people can access it. But then all the comments get synchronized right over to an open Slack channel that anybody in the company can read or search through and then bad things happen. So what does privilege escalation and pivoting look like in a

zero trust environment? It's just stealing new identities. That's all it is. And so they get in, they keep hunting, they find these secrets, they get more access, they rerun all the stuff they did with the lowle access with the high level access. They find even more access. and they keep repeating and that's that's all it is is just stealing more identities and maybe you have maybe they get in with a low permission session token that expires in six hours but then they find a secret that doesn't expire or expires in a week and then with that they have more access and they just keep going from there and I'll get into that with the real world

examples and so with this type of thing like once again they read the documents where are your secrets stored do you have vault do you have last pass all of these these things and then you've got multiple IDPs. You might think you only have one identity provider. Like we use Octa at Elastic. That's our identity provider, right? Well, we also use Windows for our workstations, which means you have to use Azure. You have for in tune. There's a second identity provider because Microsoft has their own. We use Gmail for Google Workspace. That means we have three. We have AWS for resources. There's four. We use GitHub for development. There's five. So there are five completely separate

identity providers that we have to manage. And then when you add a new employee to the company, how do they get synchron synchronized across all five? How do they get removed from all five? If somebody's removed from the company, this is in every time you add a new IDP. I put that little formula there just that's my ballpark. It's not five times the comp complexity. It's to the fifth power. It insanely insanely increases the complexity. And just just an example of the complexity, this was from a recent red team thing that we did. This one we handed the red team guy a laptop and gave him an account in our environment and said see what you can do. And they

found that there was an octa admin group called I'm this has been redacted. There wasn't actually one called cloud admin but they was there was an admin group called let's call it cloud admin. And there didn't exist a Google group with the same exact name. So they created a Google group called cloud admin which creates an email address with that with the company name all that automatically that synchronizes all the settings over to octa. Octa says oh there's a new group created I'm going to create an octa group so everything stays synchronized with the exact same name as the admin group because octa's cool kind of and they let you have multiple groups with the exact same name. I don't know

why, but you can have multiple groups that are called the same thing, but they have a different ID. And so now they go and log, they add their user to that group, and there are certain applications in the environment where instead of using the group ID, they use the group name. Great. Now they're in. And so I put that in there. The octa logs here, the event.action. Um, if you send the octa logs to elastic, that's what it looks like. So you can alert on new groups being synchronized and created. And then with zero trust, you got your doomsday scenarios. Your IDP gets compromised. Octa, um, if you didn't read about that, Octa support personnel

were compromised and then the attacker had access to a share where the customers were sharing H files which had session tokens. Um, and that's how they were able to gain access the the attackers were able to gain access to crowdstrike or not crowdstrike crow the other one cloudflare. That's the one. Yeah, they got access to Cloudflare. And so, yeah, if your IDP gets compromised, how would you ever know? Um, we actually do have some detections for that at Elastic where every time somebody from Octa accesses your environment, it creates an event. And so, we have alerts for that. And so, we we reach out and call them every single time. We're like, are you sure

this is this is cool? Um but then the other doomsday scenarios are the agent managers such as crowdstrike um any of your edrs your qualies that you're doing your vulnerability management patch management any of these things they can push out updates to all of your workstations because your workstations trust them absolutely and so these are the these are the doomsday scenarios that you have to threat model against to say okay what would how what would this happen how do I prevent it and you really have to take the time to do the threat modeling in your environment to make sure that these things are critical, so lock them down. High priv high high privilege API

tokens. Once again, these are just secrets that give you access to loads and loads of things, usually like AWS secret keys, things of that nature. And so now I'm going to walk through a real world attack scenario step by step to kind of show you like what this really what what a an attack looks like. And then I'm going to go through how do you prevent it along at each stage of the way and how do you detect it at each stage. And so I know this is real world because like I said we have red teams every six months we hire a red team to come in and attack our environment. And back before we went

to 502 everywhere this is this is kind of the story of what they did. Um so they attacked the first identity that they stole was a sales employee through SMS fishing. Um, so you would, we had nice, uh, email appliances to scan all inbound emails. That's nice. They sent them an SMS directly to their phone. Not much I can do about that. There, this was a sales and marketing employee. They were doing their job. Like, don't be mad at sales. That's what their job is. They put their phone number out there. They It's It's anywhere for anybody to see, so they're going to get contacted like this. Um, they got a link that just said, "Hey, your your login's about to

expire." And it said login- elalastic.co co instead of log instead of log elastic.co. It looked almost the same. They click on it. It come brings up our corporate website. They log in everything looks cool and then they get logged in and it says thanks for refreshing your token and then they close and go about their day. Meanwhile, the attackers have and now they have an identity token. They have access through this to Slack, One Drive, all the email, uh Salesforce, Confluence, everything that user can access they can access. And so now they have their foothold and this gives them a session token that's usually about 24-hour lifetime. And so from there they're really starting out

by hunting the environment. So Truffle Hog, if you've never played around with it, I highly recommend it. It's it's really it's just a collection of Python scripts and you can take a session token for Slack. You plug it into Truffle Hog and it will scan the entire Slack environment for known strings of secrets. And just to kind of full disclosure here, I work for Elastic. I'm a detection engineer. We have full audit logs of all of our Slack events going to Elastic Stack. I plugged in Truffle Hog, pointed at our instance, and hit go as aggressive as you can, and I couldn't find those events in our elastic stack. Like, Slack doesn't audit those. Like,

they're just not there. So, it's very, very hard to detect if at all. And so something else that's great about Slack, the API will let you search for text in screenshots. And so some developers had squared a screen shared a screenshot. The screenshot had a GitHub access token in the screenshot somewhere. And so that boom, now they've got access to GitHub as a developer. They've stolen this second identity. U there was a legacy access token as well in GitHub which don't expire and have the full privileges of that user. So now they go in, they clone all the private repos, they keep reading the freaking manual, and they learn more and more about the environment. Oh, by the way, now that

24-hour time that's they don't have to worry about that. They've got access. Now, after they read the manual, they learn that that access token can access the development environment of the HashiP vault. Anybody not familiar with vault, uh it's basically secrets management for developers and for cloud environments. Um it's kind of like LastPass from a development point of view. And so you got a vault instance hosted inside of your environment. It's got access to all your secrets. That's what it does. Um, and then this access token, they find that they can access the vault path uh without MFA of an application account. And now they have now this one's red because this is a this is a super user for this

application account. Never expires and they can do anything they want to that application. But wait, there's more. while they were doing their uh their tri their recon with the fishing attack or the the the fishing this the credentials stolen through fishing they find that that there's a a Google group that's open to anybody in the company and the they can join that is it these this is a Google group for a service account that has admin privileges so they just join their compromised employee account to this Google group and request a password reset and as soon as you join a Google group you get all the emails from that Google group so they say Hey, I forgot

my password. Reset it. Email comes in, they reset the password. Now they've got access to a service account that doesn't have MFA. Um, when they did this, three days later, one of the people that was on that Google group said, "Hey, I got this email. Is that okay?" Because it came they did it on like a Friday evening. So, they had all weekend long with no detections, no anybody realizing that that password had been reset and the account had been compromised. And so while they've got that access and it's the weekend, they find that there's a production vault instance and they can access production vault. They go into production vault. They find an AWS key

that belongs to an autoscaling account. Autoscaling if you use cloud is great because you know you have it's it's Christmas. You're a shopping website. You can scale up resources as needed. Christmas is done. You can scale down the resources. You can make your backups. You can delete things. You can create things. Well, now with this AWS key, they find that they could delete our entire data center effectively. Game over at this point. They delete all the backups, delete every everything you've got in the cloud. It's done. Go work on your resume because that's pretty much all you can do at that point. And so now, let's go back to the start. How do we prot how do we prevent

each step of this along the way? As I said, PHO2 is key. Um, if you take anything away from this, if you have limited resources, that is the most important thing because if you can stop them from ever getting in, then you don't have all of that other heartbreak at the end afterwards. Um, 502 for 100% people, it's impossible. Trust me, we've tried. You're going to have exceptions, but it doesn't mean you shouldn't try. If you can get all your salespeople on ph2, that's worth it. All of your engineers, everybody who's not, you know, who has a who doesn't have a very very valid reason, get them on it. Um, max session lifetime. We found out that

the uh we our max session lifetime was too high and as long as you kept refreshing it, it would stay alive for 7 days. And there was a Firefox plugin that would refresh your timeline, your lifetime. So go through, audit your lifetimes, audit your conditional accesses. If your IDP supports it, requires step-up authentication for your critical devices. And what I mean by step up is that let's say I log in, I have to do my fingerprint. I go to log into our seam to look at alerts. That's a critical asset. I have to redo my fingerprint every time I log in. And so it's not that hard to just touch a little sensor on my fingerprint sensor

or touch the UB key. So that is 100% worth it because then if my Slack co if if my token gets stolen from malware or something, they still can't access that critical resource. How do you detect it? Centrally collect all of your IDP logs. Um my preference is in putting it all on the elastic stack because that's what we do. Um install and configure all of your built-in seam detection rules. I've got a link there and then the QR code. Trust me, it goes to that website. Um it's okay. Uh these we've we publish all of our detection rules. Um it's all public. You can see the exact logic behind it. You can plagiarize them if you're not using

Elastic and put them in your environment. Um just, you know, don't go out and resell them, but you know, you can feel free to plagiarize them in your own environment. That's okay. Uh behavior-based detections for initial access are hard, trust me, because users move around a lot. People use VPNs, things like that. It can be very challenging. So, how do you detect the secret leaks? Once again, you have to find the secrets before they do. Truffle Hog, Entro, GitG Guardian, all of these secret scanners. Go use them. If you don't have any budget, go use Truffle Hog. Download it. Run it on your laptop and see what you find. And you'll be scared if you've

never done it before. Um, you're going to find AWS secrets, GitHub, SSH keys, everything all over the place. It's ugly. And the key is that you have to have a policy and you have to have leadership buyin to treat these internally leaked secrets as exposed and compromised because this is going to be the challenging part when you say hey there's a GitHub access token but it was leaked in a private Slack channel. We have to we have to go through all the work to rotate that token and the engineers and everybody's like man it wasn't really leaked. No yes it was. And if you have all the audit logs, you can go in and prove like, okay, this was

leaked on this date. We see zero evidence that it was ever abused. Okay, fine. It's not a critical emergency, but it's got to be rotated in the next two weeks. Um, but if you find evidence that it's been abused, you need to rotate it now. And that takes leadership buyin. So that's where the red team stuff comes in because then if you could get your butt kicked by the red team a couple times, you can point to that and be like, "Look, see, we need to do this." Um, detections once again collect all your audit logs. Uh, behavior-based alerts, I'll get into that a little later. Canary tokens, as John was talking about, those are great. Um, but it can

be a bit challenging once you get mature with this. So, like we're talking we internally we're talking about ways to distribute Canary tokens without getting getting caught by intro and truffle hog. And so like how do you make it available for the attackers to find without you also finding it and cleaning it up and quarantining it? That gets a little tricky, but I'll get into that a little later about some of the things you can do. Oh, little later is in this next slide. Vault access prevention, you know, don't use static tokens. Um that third line there, put your server behind a VPN, that's not very zero trust, but it is what it is. you shouldn't be putting that on the

network and you should use identity and location with something like that like you know maybe don't put those access accesses out available to the whole company things of that nature separate from your CI from your CD if possible continuous integration and your continuous development have two separate environments that is much harder to do than it is to say um but it's worthwhile if you can and as As far as detections go, canary paths, canary tokens, those are actually fairly easy because you don't have to put anything in them. If you're conducting all your audit logs, uh we have detection rules in our in our stack where we say if anybody accesses this this path, fire an alert because nobody

should be accessing it's infosc secrets, you know, and then somebody goes and look swoops around to see what's in there. Boom, fire an alert. And then maybe you put a token in there that's uh an AWS token that has no privileges, but it can, you know, do a get identity or get caller ID. It can do a who is. Then if somebody uses that token, now you file a critical alert. So things like that are great for detecting like vault secrets, s people trying to dig around inside your secret environment. for your IDP sync attack. Um, audit your groups, reserve any groups that are existing that belong to the admins, things of that nature. Uh, if possible, configure your so to

use the group ID instead of the group name. That really really should. That's the core like SAML is designed like you should be using the ID, but they don't. There are applications out there internally developed and externally developed where they base it on the name. So you kind of have to go through that manually and fix those. Uh detection, I brought that up originally. The system import group create event in Octa. Um there are similar groups in uh Microsoft and in Google and the other IDPs. Uh watch for new groups being created via an import. And then for us, we have a it's a very basic alert where I have a list of our hundred, you know, critical groups. And

if anybody creates a group that's named that, it fires an alert. And it's that's all it is. It was I thought about ways to do it high-tech, but it was a lot easier to just make a big or statement. Um it works. Um, and then how do you prevent this from, you know, how do you prevent somebody stealing an AWS secret and burning down your entire data center? Well, don't have one account to rule them all. Uh, your accounts should be separated. Your account that does your autoscaling up and down shouldn't also be able to delete all of your backups. Your account that does your backups, maybe it can do backups, but it can't delete the resources. You know, things

like that. Separate these out. Keep them, you know, keep these identities separate. And don't store all your secrets in one script either. Like it might be tempting to make all these identities and then you have one script that just calls all of them. Well, that that didn't do anything. So now I'm going to get into the section about generic like how do you detect a stolen identity in general. So this is one of the hardest things to detect reliably because API tokens can be used anywhere in the world. Once you got a token, you're accessing the application directly and you're not coming from inside your environment. Uh behavior-based rules are filled with false positives because humans are they

do strange things. Um, ne in a couple weeks I've got a work trip. I'm going to be logging in from a completely different country that I've never logged in from before. Um, do you want me to, you know, is that going to fire off alerts when everybody from my team is there firing off? No, you can't do that. And so things like that, it gets it gets hard. And the other problem is that a compromised identity looks exactly like an insider attack. And so you find out like okay is this a compromised account or is this a malicious in insider these are the challenges and so what we've come up with elastic we we have these this three things that we

really use to to do pretty good identity detections so we UEIBA user entity behavior analytics um our asset inventory and then our soar uh secure what's soar stand for again it's like security orchestration automation something I don't know. It's basically all your automation stuff. It's a bunch of scripts or tines. We use tines because I don't like writing scripts. Um, and so let me get into like how that works. And so each one of the things as I walk through the UEIBA I've I've written blog posts about all three of these. So if you want to take more time looking into them um there's a link at the end, another QR code that everybody should scan um takes you to the blogs.

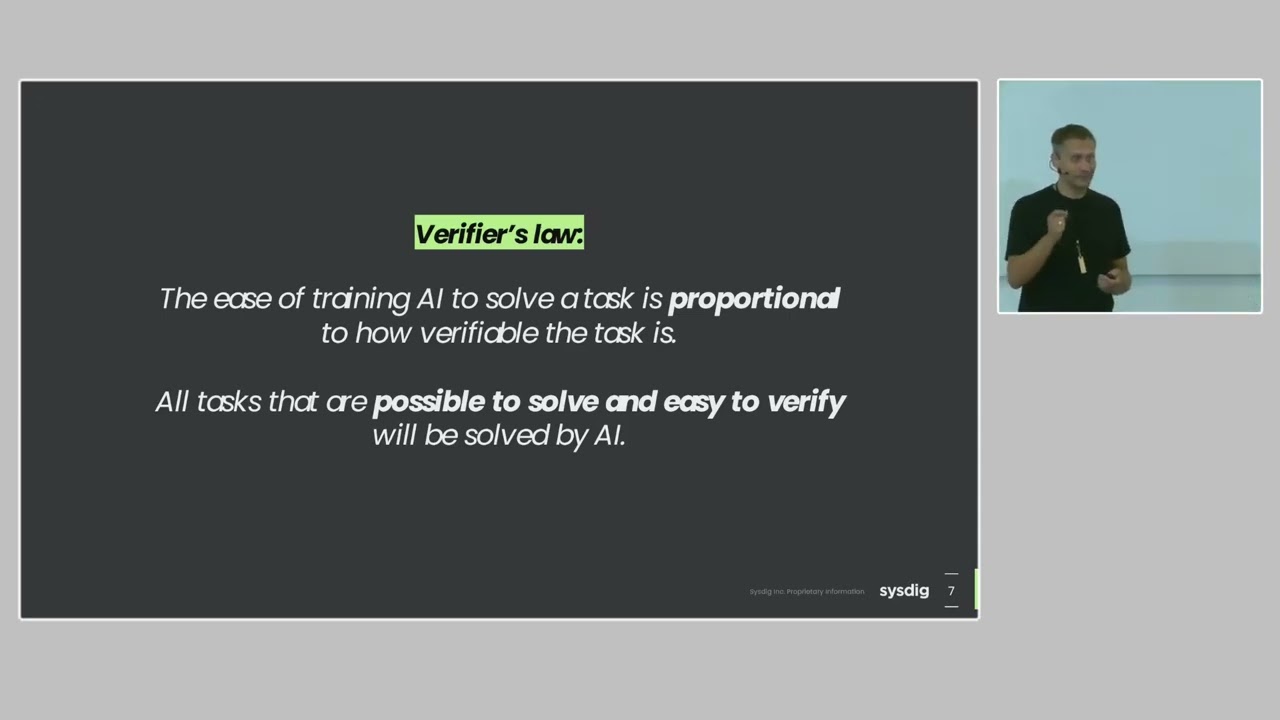

So UIBA packages the way we do this and so like some people they do UEIBA with complex machine learning AI algorithms or whatever marketing crap they tell you I don't know I didn't trust it because of that whole um non-deterministic nature of AI and with security I need absolutely deterministic behavior I need to know for certain what this is and so what I did is created these packages so I have a bunch of new terms rules and a new terms rule in elastic seam as you say, this this term was seen for the first time in X number of hours, minutes, days. And so almost all of these we do 14-day new term rules. If

you do longer than 14 days, your models get to be pretty big and your resources can, you know, your bill starts to go up. And so we say like the first it's a new user agent for an AWS key, a new geo region, a new cloud region, a new like new behavior that's never been seen in the last 14 days. Um, and so when we see three or more different new behaviors, we fire an alert. We have a a final threshold alert that says three or more alerts have been triggered. Fire a new alert. But then the problem is with that is that those alerts fire every time you've got a backup job that runs once a

month or you've got, you know, like I said, a user logging in from a new country and they've just traveled there and the whole team's there. You know, these types of things, these go off. Even this, you get a lot of false positives. So, what do we do? Well, we've got an asset inventory. And this one, this is this blog post we just uh released last week, uh, where we talk about like how we build the asset inventory. And really, it's a bunch of Python scripts and, uh, integration scraping our assets and putting them in the elastic stack right next to our alerts. And so, what is an asset or an asset? Is any really, it's a noun. It's

any person, place, or thing that belongs to your company. It's a a GitHub repository. It's a user. It's a user account in GitHub or a user account in AWS. And what we've done is we've each one of these kind of we normalize it. So we say, okay, the user email is user.e. And then we have a com we have a script that runs every 24 hours and joins them all together and say okay every object where the user email is Aaron Jwitt build one identity for this and so now we have one event one object and it's got my GitHub information it's got we have workday information for HR stuff um we have JF information in tune all this

entity information in addition to that for our cloud resources we get all the AWS GCP Azure information So I know all my VPCs are there. And so what we do with this is now I can say, hey, this alert that triggered for the first time ever came from this source IP. But if that source IP belongs to me, then close the alert. And so then we do that with our automation. And so we automate the triage using tines. Um you can do this with anything. So with elastic you have the the the detection rules. you can send them out to a web hook and you send the full contents of the as a JSON out

to your web hook and you can do anything you want and so from there we send the alerts to tines we say okay this behavior-based alert triggered and here's the source IP for it and then we go back and we run queries to the elastic stack and we say that source IP does that belong to our cloud VPC yes close the alert does that belong to has somebody logged into octa using 502 MFA in the last hour yes close the alert And so now we automatically triage this. And so we've in reality that story has about a hundred different checks um where we say okay if it matches any of these 100 checks close the alert. But if it

doesn't match any of these then this means that we have no information about the source IP that was using that. Now escalate that up to the incident response team. And so kind of seeing that in action. So you see three building blocks for a new AWS key activity. Then we get a so those three alerts come in. A lot of times one action will trigger three different alerts because it was somebody did a get caller identity which is basically a who is in AWS terms but it was from a new cloud region from a new source IP and from uh from a new user agent that we'd never seen before. So that one action triggers

three different alerts and so that meets our threshold. So we fire this the red box as a new alert. that alert goes to Tines and so we start triaging everything through our asset database through our octa logs through our uh intoune logs things like that and we say okay have we seen this IP address in any of those events and if yes we close the alert if no we escalate the alert in Slack we send it to the IR team um if it's certain alerts we send to pager duty because if it happens on a weekend we don't want to wait two three days before somebody looks at it and So that is that is our solution so far as to how

we deal with detecting a compromised identity. Um because like I said when an attacker steals the identity nine times out of 10 they're not going to operate from a compromised workstation unless they're an insider threat. That's one downside of this is it really bad at detecting insider threats because we triage all that stuff. Um, but if they take those AWS key that they found and they go use it from their attacker infrastructure, this will absolutely fire all the alerts. Um, and then the insider threat part, like I said, we had a red team recently. We handed them a laptop and we gave them a a password that showed us our weaknesses. And after that, we're deploying loads of Canary

tokens everywhere so that we catch them that way. And uh, I'm not going to talk about that because John just spent an hour talking about it. So, if there are any questions, I think we got another 20 minutes. Wow, I was moving quick on that one. So, any questions or we can go get coffee? Yeah, there's one back there.

So, question was if we detect something, do we follow up? Yes. So we um once again in elastic we shove everything into the elastic stack. And so I I'm lucky and that I've never once been told no, you can't put more data into your elastic stack um because it's kind of what we do. And so nine times out of 10 nine times out of 100, we've already got the alerts in our stack ready to go. And so we go there and we can do most of the forensics like almost real time and see exactly what happened. If not, um, as I said, we we escalate the alerts to our incident response team. Um, and then if

we need to, then we go and we, uh, you know, we can isolate hosts. We can we pull in the teams that we need to as far as, uh, um, SR AWS, like if we need to like move something to a new VPC, all that stuff, maybe then log in and start doing forensics. Um, we do that at that point. Um, but yes, that's absolutely this feeds into it. Something else, I didn't have it on my slides, but something else we've started doing with the uh uh the automation because we have the asset inventory and we have all the information, we can see exactly who owns a workstation. So, for example, if you own a Mac workstation and you have

elastic agent on it, the host ID is the serial number from the Mac. And so, we have Jam for managing our Macs. And so, we can see this serial number belongs to this person. So now when we have an alert, I know exactly who the owner of that workstation is, even if it's called MacBook Pro. Um, which trust me, we've got about 500 workstations in our environment called MacBook Pro. Um, because nobody wants to change the name of them. And so when we see an alert for MacBook Pro, we see that we can use this. We now know the owner of that and we can use our automation to send an alert directly out to the owner and say,

"Hey, do you recognize this activity?" And so we somebody starts investigating it and then they can respond in Slack and that comes right back to us and gets added to our case notes to say, "Hey, we sent a message to the user." The user the user responded and said, "Yeah, that was me. Don't worry about it." That gets added to the case notes and then we can say, "Okay, well that still looks fishy. Let's let's investigate this without talking to them." Um or they say, "I have no idea what you're talking about. Um what's going on?" And so then you really escalate the Yeah. Do you actually get updat from Amazon or Google when they test fabric

where your cloud is running on? So you know when the test and then you see kind of things happening in your environment. So as far as the logging it goes at elastic. So we're a like I took these slides out because they were a little marketing style but uh at elastic we're a cl you know we're a software provider. We have elastic cloud so you can spin up your elastic clusters in our cloud. Um we run on all three service providers uh plus IBM. Um and so we collect almost everything. We collect 150 pabytes a day of logs between our 60 different cloud regions and we use crosscluster search. So shipping all that data centrally is

really expensive. And so within each cloud region we have a logging cluster and so we collect all our data. We store it in that logging cluster so we don't have to pay to ship it all to one place. Um, but yeah, in that we have Auditbe on all of our cloud resources. So no matter what Amazon tells us, we've got Auditbe running on the clust on the containers and on the devices. The newest version of auditbe supports ebpf. So we've got really cool visibility with that. Um, in addition to that, we've got proxy logs at each gateway or at each cloud region. um centrally centrally uh collected we've got audit trail logs so like cloud

trail uh Google cloud audit I can't remember what they call it the Azure logs those are ugly but they're necessary and then we just started collecting the uh the Microsoft graph logs which are even uglier but they have even more data um they're I'm they kind of frustrate me because I don't know why but Microsoft didn't include the display name in the graph logs so you can see exactly what somebody did except there's no name. It just has an ID and it says this ID applicate they accessed this application ID and they did this you know they moved to this group ID. I'm like okay so you've got to build all this pipeline enrichment in to

dreference all these names to IDs. Yep. Do we have any more questions? Yeah. Thanks. Since we have 20 minutes, I'll waste everybody's time with another question. Um, you mentioned initially actually a really interesting point about the uh token timeouts. I I think you said 24 hours or something. Do you find that in zero trust environments that red teams and adversaries need to move a lot quicker than they otherwise would need to initially at least? So far, we've seen that they haven't had to. As I said, they get access and then they immediately take those tokens and plug it into Truffle Hog or whatever they're using and just start scanning your environment. And usually they'll find something or they won't within the

first 20 minutes. Um, and so we've we've updated our timeouts for a lot of things with uh with Octa. We've worked with them a lot complaining about the like the the Octa identity engine and like how we can customize a lot of these things. And so now we set it out so like you can they have conditionalbased loginins. So Octa has their own threat uh engine. So when you log in if there's certain things it triggers it'll say this is a high-risk login because it's from a new country from a new device from new locations they have certain things that they say this is a high-risk login. And now you can say in Octa, okay, high-risk login are not allowed to

access all of these other applications and they have a maximum lifetime of 30 minutes. And so if you have a high-risisk login, it's the first time ever being seen from this device. Really, that should only be seen when somebody's registering a new device. Um, and then 30 minutes later when they go relog in because we just kicked them out. Now they've they've been enrolled in our in our EDR. They've got our uh they've got Octa Verify. Octa Verify can see that it's got our endpoint agent on it that's managed by us and it says, "Hey, this isn't this is no longer a high-risisk login." Um, but as far as like a red team point of view, as I

said, they haven't had to yet. They've been able to kind of go through it at their leisure. They spend a lot of time reading the documentation. Um, I mean, we kind of told them to be an AP. Um, we hired Trusted SE for that a couple years ago and we were like, pretend you're an AP. you've got I think we gave them like three months. It wasn't like some 10day engagement where like smash and grab. We're like you got three months. Take your time. Show us what you can do. And that's what they did. That didn't you know that was a couple years ago. Since then we've we've required 502 everywhere and they came back and they spent a

month trying to get in and they failed and then after after a month they were like can you mail us a laptop? Like yeah. So that was a win. Cool. And so thank you. Yep. Oh, right next to you. Other way. Hey, uh, thanks for the talk first. Um, so a quick question. So you say, uh, the first step of the the attacker's path was compromising a Slack account and then you're saying, okay, the, uh, triage you're doing, you're also sending out Slack messages to the users. We've had this discussion a lot. Um, and so when they when they compromise it, yes, there's the issue that like the attacker may have compromised the Slack token and

they can just click respond like no, yeah, yeah, that was me, you know, before the user does. Um, that's why once again that goes to enrich the case information, but as not, you know, that's like that AI boundary. That's the red line like we don't, it's not the beall and endall. Um, so if they say, "Yeah, that was me and it was coming from a workstation and there was a 502 login from that same IP address," then we're like, "All right, that was probably them." Okay, thanks. Yep.

Um you also mentioned um the uh the best way to detect all these is um what use ueIBA um plus asset inventory plus saw but then with a person like of your skills and experience how long does it take typically to build these sort of super duper detections? It takes a while and a lot of resources and a lot of buyin from leadership telling you yes, you can keep spending money. So yeah, that's why you know what John was talking about with the Canary tokens. If you don't have the the resources for all of that, absolutely start sprinkling those tokens everywhere. Um, anytime you have a secret like like a vault path, Google Docs, shared docs, places that

you don't want people snooping around, as I said, zero trust attacks look exactly like an insider attack. And so if you want to kind of red team this yourself, you can create a new account that says, "Okay, add this to the all employees group and nothing else and see what you can do, see what you can find." Um, and then as you're snooping around and you're reading through all these paths and you're trying to access this data, say, "Hey, that's a good spot to put a token, a canary." And so if you don't have the budget for all of that, you know, stuff that we've built out, yeah, canary tokens 100%. Or both. Any more questions?

Aron, thank you again for u being with us this year. Yeah. Um I've been part of um attempts at least to implement zero trust in huge organizations. Yeah. And the biggest issue is or one of the biggest issues is background compatibility and um legacy authentication systems. So how or what is your take on that? how we counter this because obviously you have this new fancy technologies UAB um octa and uh whatnot but then if you um take a look at underlying uh legacy systems they're still leaking secrets yeah like uh LDAP and whatnot. Yeah. So so I'm not even going to talk about trying to migrate to zero trust. I've never tried that and I

can imagine it's a horrible, horrible experience if you're talking about some of these gigantic legacy environments where you got 100,000 employees spread around the world. And so, yeah, I'm not even going to touch that one. Uh, but let's say you're building your detections and you have that type of environment, the the identity detections I talked about at the end may actually work a little better for you because I have like Elastic, we're spread around the world. Um, you know, you may tell from my voice I'm not from Germany, but I've been living in Germany for nine years now because Elastic said, "I don't care where you live. Live anywhere you want." And so I just, you know, decided

to stay right here. And so we've been, you know, we have people all over the world traveling constantly, connecting to us from all over the place. It's hard to control a legacy environment. you're gonna have buildings and maybe that LDAP environment leaks secrets like crazy, but you can say if this secret is used outside of this subnet or the source outside of this environment, fire an alert. And so you you have some controls that we don't with that like I can't control where people log in from like that versus like certain environments we say you have to use the VPN and then if it doesn't come from a VPN fire an alert. Do we have any more

questions? All right. Thank you so much. Thank you. Thank you for the invite.

So the