Smart Phone to Medical Device in five not so easy steps


Hi, I'm Josephine Winslows, and today I'm going to teach you how you can save lives with a smartphone. Pretty ambitious, but if you stick with me I'll walk you through it step by step. My background is in building medical devices and medical device software, and in my free time I'm trying to teach myself cyber security. One of the very first things that I learned, and something that you guys are probably more familiar with than I am, is that the first step in finding a solution is understanding the threat. So what's the threat here? What are we saving lives from? Neonatal jaundice. This is a medical term with two main parts: neonatal, meaning affecting infants or newborn

babies, and jaundice. Jaundice is a term used to describe a yellowing of the skin and the sclera, that's the whites of the eyes. It's caused by a buildup of bilirubin, which is a yellow-orange bile pigment produced when your body gets rid of red blood cells. Now, usually bilirubin is not a big deal; it's filtered out from your body like any other waste product. It's when this delicate balance is disrupted that issues start to arise. There are a couple of different ways this can happen, but the important thing is that as bilirubin builds up in your bloodstream it causes abdominal pain, fever, weakness and fatigue, and at really high levels it can pass through the blood-brain barrier

causing permanent disability or death. This is a massive issue and it's a global health crisis. Neonatal jaundice affects up to eighty percent of premature births and up to sixty percent of full-term births. It can be fatal, and although it's difficult to prevent, it is quite easy to cure if it's diagnosed early enough. In less severe cases it can be treated with exposure to UV light, which is phototherapy, and oxygen, and in more severe cases an exchange transfusion can be used. If it can be treated, then why is it still causing 114,000 infant deaths every year? To understand this we have to look at where those deaths are happening. Three-quarters of them occur in

sub-Saharan Africa and South Asia, and there are a number of different reasons which contribute to this, including an increased likelihood of certain genetic risk factors like G6PD deficiency and a lack of access to healthcare. But the leading cause is the difficulty of diagnosing jaundice using traditional methods like visual inspection in infants with darker skin tones. It's just much more difficult to detect a yellowing of the skin in infants with a darker skin tone, and that's the current standard practice. The need for a reliable, low-cost screening solution which is accurate regardless of skin tone is clear. So what are our options? In the UK, an infant who is suspected of having neonatal jaundice can be given a blood test to determine

the exact levels of bilirubin in their bloodstream. This is the gold standard diagnostic technique, and although it's very accurate, it's simply not a feasible option for many infants in countries where there's difficulty in accessing the expensive and bulky medical equipment that's needed to perform this kind of testing. It is also an invasive procedure, which can be really difficult for parents of newborns as it involves drawing blood. The other method I've already talked about: looking at the skin tone and the sclera, that's marked on this image, the white of the eye. What we need is a solution that is non-invasive and cost-effective. A method that's being tried in the UK is colorimetry. That's using these big, expensive pieces

of equipment called colorimetry machines. They measure the color of a surface by looking at the rate of absorption of different wavelengths of light. I got to play with some of these in my second year of university, and I got involved in a project which was looking at recreating this effect using DSLR cameras. You take a picture with the camera, export it to a computer and then use a program in MATLAB to do the analysis. I was debating back and forth with my professor and I said, surely this could all be done on a smartphone, and he said no, that's impossible. So of course I did what anyone else would have done, and I spent the next three years of my life proving

him wrong. We designed an app, a smartphone app, which would allow us to complete this colorimetry procedure. Luckily this was possible thanks to the rapid advance in technology over the last few years, not only in the sensitivity of smartphone cameras but also in the processing power required to perform analysis like this. We created an app that uses two images of the whites of the eye to screen for neonatal jaundice. Two things to focus on here. Firstly, the whites of the eye: that's because this is a pretty consistent color across infants of all skin tones. And secondly, two images: we use a flash and non-flash pair. This is basically done to remove ambient

lighting from the equation. When you take an image with flash and then an image with no flash, you can subtract one from the other and you are left with a composite image which only takes into account the light that was emitted from the flash, excluding all ambient lighting. That is important because yellow light makes the image look more yellow and blue light does the same for blue, and we want a really accurate reading of what the actual color of the eye is. It's really important for an accurate diagnosis. Quick moment to shout out the organizations that supported me in this project. It was a collaborative effort across all of these organizations and these charities, who were really, really

fantastic in supplying financial support to the organizations that have assisted in the Saving Lives at Birth project. I started this app with a proof of concept that I built over two months in the summer of my second year of university. It was really ambitious: I built a very customizable app which was designed to be adaptable to the needs of the user. Once I'd proved it, I had to go back to the drawing board. I spoke to these wonderful, wonderful doctors, Dr Christophel and Dr Judith, who are both paediatric consultants, Dr Christophel at a hospital in Accra in Ghana and Dr Judith at University College London Hospital. We sat down and we talked not only to

them but also to midwives and the neonatal care teams. We asked them what they needed out of this app. This is called user-driven development: instead of me assuming their needs, I asked them directly. I gave them my prototype and they came back with a whole bunch of requests, which was so wonderful. They said: we love how customizable it is, but that's not what's important to us. We need it to be simple. We're already dealing with a baby, which can be difficult at the best of times. This app needs to be intuitive and not take up too much of our brain space. We need to be focused on the patient here. We also want you to

remove all of the sounds you've put in; they're really disruptive. It was tips like this that really helped make the app so much better. In the end we settled on a design with four stages, using the two main principles that they had prioritized: intuitive design and minimal use of language. They don't want to read lots of stuff, and this app will hopefully go out to a wider reach where we're crossing language barriers, and we want to make it accessible. The first stage is just a full-screen camera showing you as much of the image as possible, so that you can focus on getting a clear shot of the white of the eye. There is a camera capture button, a

little counter to show you how many pairs of images you've taken, and when you've finished taking enough images, we recommend five pairs, you just press the tick button to show that you're done. Here's what that looks like in real life on a prototype version. The next stage shows you a pair of images that you have already captured, one above the other, the flash and the non-flash images, which is a little bit difficult to see. You can see it better here, in this image of the app in use. And because it's very difficult to capture an image that is entirely taken up with the white of the eye, as I said, babies tend to move about quite a bit,

you can then zoom in and pan using quite intuitive pinch-to-zoom and scroll-to-pan methods. That allows you to get the sample area, that's this little yellow square in the middle of each image, over the area of the eye that is entirely covered by just the sclera, just the white, avoiding any veins or shadows or the eyelid and making sure you can get a good sample out of the image. This area is actually all we're going to be looking at in order to determine the colorimetry of that white of the eye. This allows you not only to check that the image quality is good and that you can get a good sample

if you can't, hit that delete button, but it also lets you control the image after you've taken it and focus on getting a good image without worrying about making sure there's no eyelid in it in the initial stages. This is just showing what that looks like; the little area you want to be focusing in on here is the sclera. Once you've done that, you just press next and we get some results. We average out a couple of different images, because this is science, this is diagnostics, and we need the most accurate reading we can get. It will show you the SCB, that is your projected bilirubin level based on the results, what we think that means, and then a

confidence rating, because we want the healthcare professionals to feel confident in what the app is telling them. If we think it's on the edge we'll tell you that and you can monitor the patient carefully, but if we think it's definitely jaundice then we'll recommend immediate action. After this, since this is a research project, we have the advanced results screen. That means that we can feed more detailed data straight back to the team for continual improvement. This app is based on the Camera2 API. We decided to do it in Android because it gives us much more control over the camera itself. An API is just a programming interface: it's a bunch of different modules, a

framework that allows you to build your own apps. The Camera2 API is one of the most advanced in terms of the level of control it gives you, but it wasn't advanced enough. We needed to build a custom camera capture session module, and to understand why, you need to understand the internal workings of a camera. This is a Bayer matrix. It's what the inside of your camera looks like; well, it's a scientific approximation, which is nearly as cool. It's a series of light sensors, red, green and blue, with more green because your eye is the most sensitive to that, and these light sensors come together to form pixels, four of them in a pixel.
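The Bayer layout just described, together with the flash / no-flash subtraction mentioned earlier, can be sketched in a few lines. This is only an illustration and not the project's actual code: it assumes an RGGB arrangement (real sensors vary), treats a raw frame as a plain list of rows, and the names `subtract_ambient` and `channel_means` are hypothetical.

```python
from statistics import mean

# Assumed RGGB layout: every 2x2 tile holds one red, two green and one blue
# light sensor. Other colour filter arrangements exist on real phones.
RGGB = (("R", "G"), ("G", "B"))


def subtract_ambient(flash, no_flash):
    """Flash frame minus no-flash frame, sensor by sensor, clamped at zero."""
    return [
        [max(f - n, 0) for f, n in zip(flash_row, ambient_row)]
        for flash_row, ambient_row in zip(flash, no_flash)
    ]


def channel_means(raw, top, left, size):
    """Average the raw readings per colour inside a square sample window.

    raw is a 2D list with one value per light sensor; top, left and size
    describe the sample window in sensor coordinates (illustrative only).
    """
    if size < 2:
        raise ValueError("window must cover at least one full 2x2 tile")
    samples = {"R": [], "G": [], "B": []}
    for y in range(top, top + size):
        for x in range(left, left + size):
            samples[RGGB[y % 2][x % 2]].append(raw[y][x])
    return {colour: mean(values) for colour, values in samples.items()}


# Made-up 4x4 frames, purely to show the calls.
flash = [
    [120, 200, 120, 200],
    [200, 90, 200, 90],
    [120, 200, 120, 200],
    [200, 90, 200, 90],
]
no_flash = [
    [20, 30, 20, 30],
    [30, 10, 30, 10],
    [20, 30, 20, 30],
    [30, 10, 30, 10],
]
flash_only = subtract_ambient(flash, no_flash)
print(channel_means(flash_only, 0, 0, 4))
```

Working on the individual sensor values, rather than on a processed image, is what keeps white balance and other camera corrections out of the measurement.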

The reason this is important is that you can't access individual light sensors using the Camera2 API, so we had to build a custom module which would allow us to get data directly from these sensors, rather than an averaged-out version which had already compensated for white balance and other factors. We needed the actual data to get the most accurate reading. We don't care about the image looking good; we just need to understand it from a scientific point of view. This involved getting the raw image data, something that is not standardized across different cameras or across different phone models, and transforming that into an image file that we could read. To do this we used a custom raw image to

DNG file conversion. This is something that's still under development. Currently it only works for a few specific brands of phone, because we have to hard-code certain values into the software to compensate for things like different color filter arrangements, orientation and masked pixels, as well as a lot of other characteristics. Once we've got this into a tagged image file format, TIFF or DNG, we can move on to sampling. This is a rough overview of how the app is laid out in terms of the modules used. As you can see, there is a large section dedicated to this raw analysis: that's the green and the blue sections. It's a custom microservices architecture, which is

just a fancy way of saying we built the app in a way that is highly maintainable and really testable. It uses a main activity and then hosts different fragments within that to perform the different features of the app. Once we'd built the app, we needed to calibrate it. As I mentioned, each camera is going to be slightly different, but the flash is also slightly different on each model of phone: some are yellower, some are more white, and they vary in brightness as well. So we needed to calibrate to a known color, and these swatches are what we use to do that. We take images of colors where we know the exact color value, under a range of different conditions,

and then we look at the output we're getting from the app and we calibrate it against the known color values of these swatches. This is another hold-up at the moment, because it means the app can't just be deployed. You can't just download it and go; it needs to be calibrated for the specific phone that you're using, and this really needs to be done in lab conditions. Again, this is something we're working on, and hopefully in future iterations of this project this can be avoided and we can find a way to calibrate it remotely for the phone, or using an easier procedure, because these color swatches are really difficult to get hold of and really difficult to

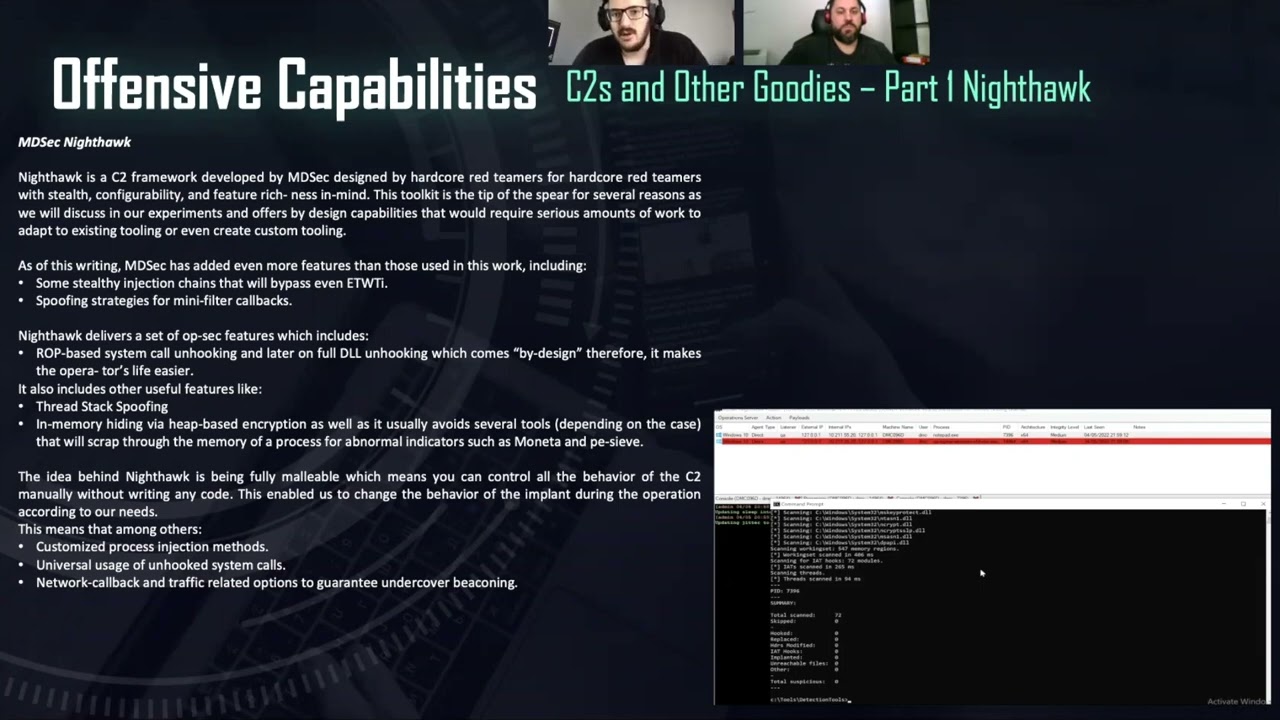

maintain; they're very, very sensitive. Something else to consider is security. Now, as you can probably tell from this slide coming quite so late in the presentation, security wasn't baked into this project from the beginning. Unfortunately, I'm a developer and the rest of the people who work on the project are scientists; we didn't have much experience with security. To give you some background, this app is designed to be used by healthcare workers working in really remote locations, who don't have access to reliable internet and may not be able to download the latest firmware updates for their phones, leaving them really vulnerable. In earlier iterations we were sending really sensitive data completely unencrypted over the internet. This is not ideal. It's not just a

theoretical problem. There have been several cases recently where mHealth apps have been targeted by malicious actors, because it's a really vulnerable situation you're in when you're dealing with health data. And it's not just because we want to protect people: this is a medical device, and there are legal requirements surrounding medical devices and cyber security. In order for the device to pass these regulations and be available on the market, we need to come up with a way of making the data secure. Currently our data transfer solution is just not doing it over the internet. We keep everything completely manual on the phones and keep them offline to try and secure them as best we can. This isn't ideal. Our other

solution is to ask for help. If you or someone you know is interested in security and phone applications, or ideally any health security, we would absolutely love to hear from you. Please get in touch; my details are going to be available at the end of the presentation. The next stage is field testing, and we're really, really excited. So far we have two field testing locations, one in the UK and one in Accra in Ghana. 504 babies have already been surveyed at the general hospital in Accra, in the hospital so that we can verify these results against that gold standard blood test. The next step is going to be expanding to different locations, getting more people involved in the

project and hopefully saving some lives. If you have any questions, feel free to reach out to me on Twitter, or you can find me on LinkedIn. I look forward to getting more involved in cyber security in the future, and I really hope you've enjoyed the presentation today. Thank you so much for listening.