AI in a Minefield: Itsik Mantin

Show transcript [en]

thank you for joining me in the talk about ai in a minefield learning from poison data i'm itzik mantin lead scientist at imperva in the last 21 years i've been innovating on security algorithm center intersection i love math i love algorithms i like the game of understanding threats and designing mitigations and you can see a couple of pictures of what i do in my spare time i will start with speaking about the ai and ai threatened landscape i will then zoom into the threat of data poisoning about when why and how this threat is uh uh is applicable and what can we do about it i will then speak about uh how the data poisoning threat uh is uh

uh realized or potentially be realized uh when building a web profile for the sake of web security uh and will end with the summary and conclusions uh no doubt we are in the ai era artificial intelligence systems are everywhere and are changing the way people do almost almost everything uh and the ai error is in fact also the data error because data is the fuel that uh that makes all these uh ai systems work and ai and data have huge contribution to many many domains however this contribution comes also with several caveats which we tend to uh to ignore or at least to uh to underestimate and we'll speak about some of these caveats uh in this session

uh most of you are probably familiar with the gartner hype cycle for new technologies so uh equivalent to this you can think of a security lifecycle for new technologies new technology begins with very little thinking about security or maybe no thinking about security uh people are focused on the technical obstacles and are excited with the applications and the opportunities at some point someone discovers that this system this technology behaves very odd when certain input is being fed to it and then you have another vulnerability and another one uh when the technology meets uh materiality uh and and it sometimes reaches a situation where people ask themselves whether this technology will ever be be usable in a safe manner but at some

point security researchers and uh and domain experts start to work together uh on um building methodology on uh modeling attacks and modeling mitigation techniques and uh then we get to a stage of a healthy development of the technology in a safe manner and it reaches at some point a plateau where all the the major threats are are already being uh understood and the threat and how to mitigate them and still new threats are being discovered all the time and new mitigations but but the situation the situation is essentially stable so we've seen this for a web in the 80s for mobile in the 90s and now we are seeing this for ai as well i think i

think in the last couple of years we're starting to see a really uh the discussion about ai threat landscape more seriously than before and what you see here is a typical machine learning system uh there is the training part where training data is being fed into a algorithm that builds a model this model can be a classification model it can be a regression model can be many other things um and then uh this model is used to classify uh or to predict or to process inputs data this is called the inference uh and the result is a prediction or classification in some cases in some systems the result of the model is being fed back uh to being evaluated

in order to continue and improve the algorithm or to make it work for a dynamic environment for example now when you're looking at the system from an attacker perspective uh then if the attacker is inside or if the attacker was outside but somehow find his way in by malware infection and fishing um whatever uh then he can do whatever he likes the sky's the limit he can tamper with the model he can steal the model he can change every particular decision the model makes i can replace the mother with his own uh you can of course steal the data however even if the attacker does not uh have a foothold inside and he's an outsider then still there

are plenty of things that this attacker can still do some of them are called uh evasion or deception or it's very serial examples most of you probably heard or so this comes very concerning the examples of how you add a couple of stickers to uh to a traffic uh a sign to a stop sign uh and then the ai engine that uh uh within the autonomous vehicle looks at it this uh this stop sign and identifies it as um a speed limit sign uh very concerning of course can have devastating uh consequences uh and from i think all the times where where someone tried to make um to thwart uh machine learning model usually it was not very hard it was

pretty easy to do that because machine learning models uh when you build something without thinking of an attacker and the attacker comes then probably you won't be safe we know that from many domains and ai is not different than that uh training data poisoning we'll speak in depth about this threat later training data leakage is slightly more esoteric a thread but still an important one in many cases when you build a model data from the training data leaks into the model and this data can sometimes be extracted later on and whenever you use sensitive data for the training and usually you use sensitive data for the training it can be either pii or health records depending

on what exactly the model that you're building uh but uh uh it will probably be sensitive and then the threat is is real and important to uh to understand uh and to uh to evaluate and to mitigate and so how does a data poisoning work on the left side you can see a linear classifier that is uh aiming to separate between the red triangles and the blue and the blue dots now if you only change the location of a single point then you get a completely different uh classifier it is still effective but it is different and really um it is very hard to to predict or to uh or or to uh to restrict

the uh the change in them in the model construction uh due to the change in the in in a small number of data points and on the right side you see even a more uh a deliberate attempt to uh to thwart the model construction uh again and the model here comes to separate between the blue and the red and the attacker now puts red dots in well in in places that uh it is clear it will make the model uh very different than what it was before and as you can see uh the new model now does pretty lousy job uh separating the the blue from uh from the red exactly and what the attacker wanted

to achieve uh this uh data poisoning methodology terminology discussion uh is pretty new it's i only met it in the last couple of years however the threat itself is not new it is all it's almost as old as the internet i think at any time like myself and you are looking at that tripadvisor hotel review then you are asking yourself is it a real review is it a fake review is it the hotel owner now that is uh trying to convince me that this hotel is very good although it is not or it's a competitor uh and the same thing the trip advisor also have the same concern uh so the threat of data poisoning is

there and people tweet it and treat it uh and it is there whenever you have um rating system it can be uh e-commerce like amazon or it can be travel like a booking or google or tripadvisor or it can be even movies like netflix or imdb whenever you have a rating system uh and the decision of this rating system is meaningful for someone uh then probably someone has motivation to to make this uh system making correct decisions and then you have the data posing through them usually people understand that and and treat it in one way or another uh one of them of the of the first more popular uh research fields for data poisoning threat and mitigation is

the area office of spam filters um maybe one of the reasons is that this is one of the the places where machine learning proved itself effective against a cyber security threat and on the left side you're seeing a model skewing attack on google on gmail spam filter the attackers created many many messages uh and classified and labeled them as benign and these messages were had some something in common with uh messages that they probably planned to send later uh as spam and so you can think of that as sort of a back door within the gmail spam filter the vector that they wanted to inject on the right side you see attackers that took actually this is a research not

actual attack but a different approach of availability attack here the victim is a spam based spam filter and what the attackers tried to do is they knew that the the the algorithm builds uh a dictionary of uh office spam hints and they wanted to pollute this dictionary with with a collection of probably very popular awards that are used in in many uh legitimate emails and uh the attack was was pretty successful uh with only control of one percent of the of the data used for the training uh the researchers were able to make the model uh classify eighty percent of the the benign email of legitimate emails as spam and ninety five percent of the legitimate emails as

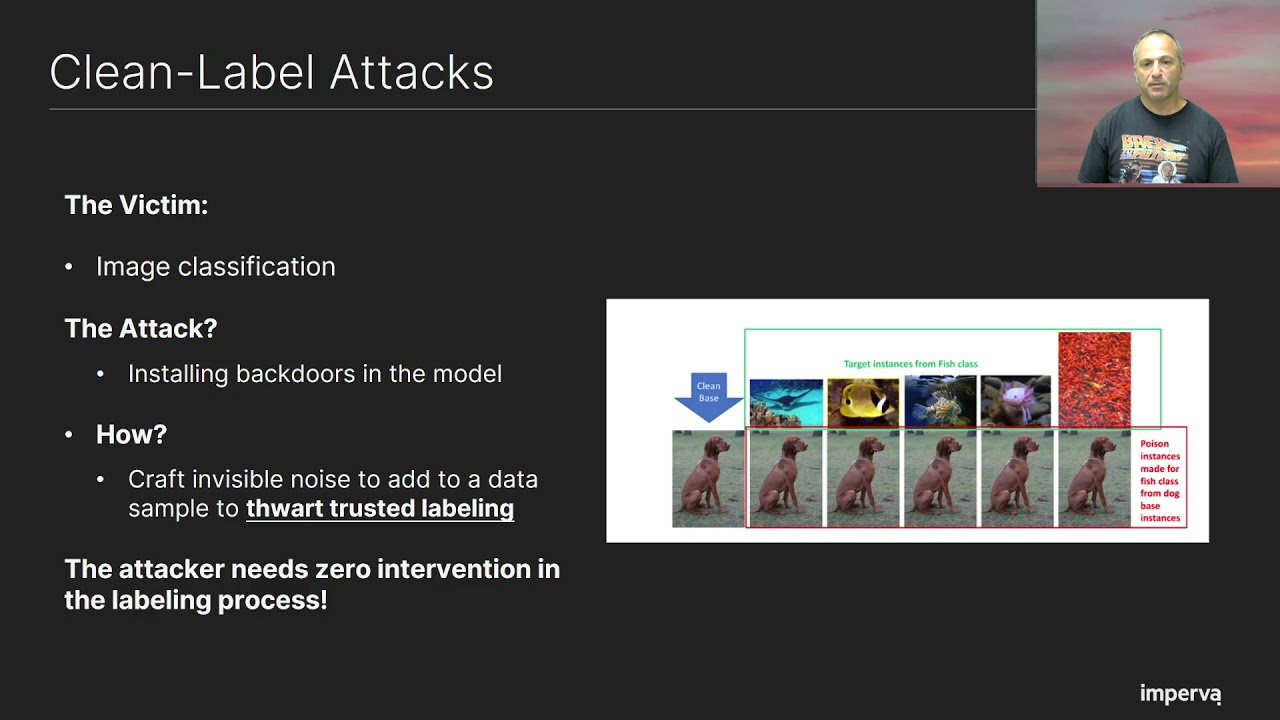

unsure so probably like myself you're you you see at the beginning uh you see this and you say okay so the process the problem is i give the attacker the possibility to do the labeling so i'll do the labeling and everything will be all right right wrong uh because in this variant of data poisoning a clean label attack uh the the attacker does not have any influence about the labeling process here the attacker wants to attack an image classification system he wants to convince this uh um this model that all these fish that you see in the top images are actually dogs and the way he obtains that he takes an image of a dog

and he adds an invisible noise that is somehow correlated with the image of images of the fish he adds these uh these noises to the image of the dog and now uh for us the image still looks like a dog for the trusted label labeling expert that is working on behalf of the image classification it also looks still like a dog and he labels it as a dog however the result of this labeling is that the model now we learned that images that have this hidden structure are dogs and the success of this attack is more than 95 percent uh confidence that the model says that the model um has when it claims that images of fish are actually dots

so uh the threat is uh is there and it's real uh how to prevent it uh there are two approaches that are again they were used even before uh we called data poisoning data poisoning i can filter suspicious data for example suspicious suspicious origins ip addresses or users or suspicious clients um for example in booking.com i think that you can only um you can only provide rating if you did actually a transaction so uh it gives some level of reliability to uh to all the the actors that that give ratings uh you can also take use the fault or one tolerant data sampling for example limits the impact of data points that are arriving arriving from a single entity

and what is exactly entity well that depends of course on the on the problem itself on the algorithm the model and and the situation um so these two are are are pretty effective but only to to some extent um there are also other mechanisms mostly for detection like diff tracking tracking the difference from the previous model a valid model and assume that you have data poisoning whenever you see too long too high distance or to use a reliable benchmark a golden data set that you use all the time to make sure that you don't have a model that is completely corrupted but again these two are detection and their effectiveness is very limited and of course you can also uh assume

that the attacker does not know what you are exactly doing or he knows what you're doing but he doesn't know the model and uh to have uh and that the attacker does will not know exactly how to attack you and he will not attack you uh but we uh we hearing besides we know that security by your obscurity very rarely proves itself as an effective mitigation technique about against any threat so not recommended not recommended so what we have so far and data poisoning is a significant threat on learning mechanism the threat is critical when using data from untrusted sources which is typical in almost every place it is effective like in cyber security uh

domains like firewalls spam filters and malware detection and um and rating systems like e-commerce or travel and it doesn't have a silver bullet mitigation it has a collection of mitigation techniques that together can throttle the uh the attacker next we go to the uh i'll explain about how this data poisoning a threat is uh realized or can be realized in the world of web and api uh security so what you see here is a waf a web application firewall between applications and outside your threads aka the internet um usually waff's work using a combination of negative security model and positive security model i will focus on the later one so positive security model is essentially the idea that everything is

bad uh except for what we know for sure that is good so it is sort of anomaly detection in order to uh to to use that we need to base to learn a baseline profile for the web or api traffic and to block or alert on the deviation but when i say that we learn we want to learn a baseline profile the the data that we will use is actually traffic uh that we that we've seen through this uh web api and if this traffic comes from outside there you go we have all the ingredients of data poisoning attack so it needs to be treated and mitigated uh so web or api traffic profile can

essentially look something like what you see here the red parts here are actually think of them of these as carriers of traffic can be cookies or query string parameters or body parameters each one of them is actually contains belongs to a host or url or method or a combination of these which we call containers and each one of them has actually a traffic profile the type of the value that can be for this parameter whether it can be com can come several times in the same request or only one time optional or mandatory a parameter size for numerical values parameter character set and length for uh for strings and stuff like that all of these are

things that we will not we will want later to enforce because they have some meaning some significance for um detection of web attacks uh so uh it makes a lot of sense to use both the mitigation techniques first a cleaning filter suspicious traffic for example suspicious events traffic that was identified as malicious by by some other mechanism maybe traffic from suspicious ips ips that are known to to generate malicious traffic uh also maybe sometimes it makes sense to filter all the traffic during an attack regardless from of whether it came because it makes sense that the possibility of a misclassification here uh is uh is and is not negligible uh or at uh traffic for bots it also

makes sense in some cases uh the next mitigation is a threshold learning um again limiting the impact of every entity uh entities that make sense can be ip addresses user agents go locations identified clients also if you want to uh to avoid um learning something that came in in a burst of of traffic then you want to learn things only when we you see them spread along several hours in a day or several days depending on your exact model and eventually once you complete the learning you can do the enforcement alert on deviations from this profile now the threshold learning here is pretty easy in batch processing however uh for doing batch processing you need to

consume a significant amount of memory uh which is not always a practical and thus there is a place for and there is a need for a string friendly algorithm for threshold learning and uh in order to uh to to present uh string friendly algorithm i will take you to a completely different problem and completely different world of a dog food tasting as a challenge and we want to know which of the two brands pedigree and teo is uh is good this tasty is favorable by by a dog by dogs and dog owners we run sort of off a poll and theo got 12 likes out of the 20 and pedigree got six like likes however we want to do threshold

learning here so we set threshold of at least three cities and at least three breeds meaning that we accept that something is tasty only if we've seen testimonies we've seen indications from people from three cities with three breeds of dogs and here only a pedigree passes and the reason for that is that you can see that for example um in the red part of the table you can see that san bernard owners and never said none of them said that theo is good and uh people from san francisco also never said that um teo is good but for pedigree we have a pretty uh balanced um uh voting spread between the different cities and the different

uh breeds uh and uh one of the reasons for that is that we had uh you can see many um many voters that have pomeranians how many voters from new york and all the six people that are new yorkers with pomeranians pomeranians all of them liked theo but we didn't want to to count them all uh as as new ones but you want to count them somehow together and this is exactly one thing we wanted to achieve uh so how to implement there these so we think of pedigree as an object and of the tastiness as a fact a boolean fact it can be tasty or not tasty and the treasure of learning is uh focused on two attributes city and

breed each of them have threshold of three and for each one of them we keep set a set of all the cities we've seen so far at the beginning we've seen no data so uh we know and nothing so everything is uh turned off uh for teal we have the same and we can have another factor whether the the dog food is nutritious or not data comes in and now uh we uh feed the data into the system and we see that now the sets for uh cities and breeds for pedigree tastiness are we have a set of three and that's we passed the two tests and we're good we believe that pedigree is tasty

however for teo we have only uh two cities and we have only two breeds and it didn't pass any of the tests and therefore teo is not accepted as tasty uh so looking at that um so what we did actually we learned the boolean fact that an object x has a property y uh looking at that from a memory consumption perspective then the memory consumption is proportional to the number of objects the number of properties facts the number of attributes and the thresholds however it is independent of the size of the data which is exactly what we wanted to to achieve how to use boolean facts for web api profile well it is pretty straightforward in the

during the training then for every request we see then we extract for every fact that is interesting for us we can uh extract a flag fact x scene and then we'll take all these fact x scene flags and we'll collect them together see which thresholds are passed and then we'll get into the profile effect x allowed in case we passed all effect x passed all the all the tests and if not we'll have fact x uh prohibited during the inference we have a profile and now for every new request we can extract all the factx scene from this request and if we have a fact x syn that is also prohibited then we have a violation and this is

what we wanted to achieve in the first place so we can learn boolean facts uh and we can use them very effectively in uh for the web profile and the next question is what can you do with boolean facts it seems like a pretty limited model and in the next couple of slides i hope to convince you that this model is is not limited at all actually that it can obtain quite a lot uh so let's start with what i call objects or containers we want to learn what are the hosts the methods and the question parameters and the relations to host and methods so actually this part is pretty much straightforward because uh all the things that we want to learn

are have actually have have a boolean nature uh url x at host y this is one boolean fact allowed could cookie x at urly allowed query string x at urly with methods that allowed all of them are boolean facts and again this is pretty easy it works very well no problem here however what about the parameter traffic profile we have later types we have ranges character set regular expressions can we also deal with with these guys uh so i i will dive into the uh area of um uh parameter type so suppose we have a parameter and we want to classify to include in the in the profile the type of this parameter now we will

have uh a num num is a numeric a numeric type allowed uh flag and uh which we'll try to learn and if we will not learn it then we will have a different flag in the profile numeric type prohibited we will also have a non-numeric type allowed and non-numeric type prohibited in the next slide i will show how exactly these are used they are very important and we have the same for string for boolean and also for none which represent the situation where we don't have a value for this uh for this parameter uh which is also um of course an important thing to learn so suppose that we want to include in the profile that the type is a string

and then we learn the flag str type aloud okay but we are not learning uh the flag num type allowed because we didn't see num type or maybe we've seen num type but we didn't see it in a way that passes all the threshold the test we also um we didn't see non-str type too much so we we don't allow uh non-str's within the profile only strings now if we see x equals abc or x equals x at gmail.com then these are strings we're good if we see x equal to 23 then actually we have num type scene and it contradicts the num type prohibited and there we go violation another example this time using regular expression we

want to enforce the fact that type is a mail address uh now the more i hope that you understand now that the more important is the prohibited part and not the learn part and uh we learned that non-male reg regeps is prohibited and the reason is that we didn't see many um indications of non-male values uh in the traffic for this parameter and and therefore it is not allowed and therefore it is prohibited and now whenever you see we see x gmail.com then this is okay because the non-male rejects uh scene is not there however if if we see abc then the non-male regex scene is there and it contradicts the non-male rejects prohibited and again we have a violation

when it comes to mandatory parameters or optional parameters then a missing prohibited and missing allowed are are the flags and the facts that we are using and it is essentially the very similar to what we've seen with the with the strings um when a parameter should we want to represent the fact that it shall have no value or that it has multiplicity then again we play with a non ta non non non non type and multi and multi multi-occurrences effects when we want to talk about the character sets then a non-b64 character set a fact uh when we want to play with particular uh special characters and we say that ascii 21 is allowed or prohibited ascii 7e is

allowed or prohibited etc and when we want to deal with ranges then here we need a little trick to do discretization of of all the uh of the of these ranges then we will not know exactly that um the parameter is between 34 and uh 345 but it will have to we will split the the domain into ranges for example we have greater than 5000 greater than 500 lower than 10 and we can play with these facts in order to represent these ranges in a way that makes sense for the web security domain and attacks so let me summarize data poisoning is a significant threat on learning mechanisms especially when they rely on data from

outside with or without labeling uh and outside um and it is applicable whenever this threat is there whenever you are doing something meaningful and that someone is cares about which i hope for you that this is always the case for you uh threshold based learning may provide an adequate robust learning solution uh the boolean facts framework provides a streaming friendly implementation for a threshold-based learning of a profile and although the boolean facts framework at the beginning looks uh restricted in fact many features can be expressed through this framework thank you very much and now we have time for a couple of questions