AI in a Minefield: Learning from Poisoned Data


Here we go. Thank you for joining the talk. I'm a lead scientist at Imperva. For the last 21 years I've been innovating on security and algorithms and their intersection. I enjoy the game of analyzing threats and designing mitigations. I love math, I love all sorts of algorithms, and I love building security technology. In my spare time I love hiking, biking, trail running, and traveling around the world, alone or with my family. This is another one of my hobbies, and I'm really disappointed that this time I don't have a chance to go physically to Texas. I really hope that

in the next year we'll meet face to face and I can enjoy a good Texas steak. I will start with a brief introduction to AI and the AI threat landscape. I will continue by talking about data poisoning, which is one of the main threats to artificial intelligence systems. Then I'll explain the challenge of data poisoning in the world of web security: how this threat is effective in this area and what the possible mitigations are. Then I will explain a solution for robust learning that is safe against data poisoning on streamed data,

meaning without keeping all the data and running it in batch processing, which is very important in many applications. I will end with a summary and conclusions. We are in the AI era, no doubt about that. Artificial intelligence is everywhere; it involves probably almost every aspect of our life. The AI era is also the data era, because data is like the new gold or the new fuel: the thing that fuels artificial intelligence systems and helps them work and do what they can do. Both the AI era and the data era have made an amazing contribution

on many different domains. However, this contribution comes with several caveats, which we usually ignore or at least underestimate to some extent, and this talk will speak about some of these. Most of you are probably familiar with the Gartner hype cycle for new technologies, and I think you can think about an equivalent security lifecycle for new technologies. When you have a new technology, and that was the case for the web, for mobile, and for IoT, then at the beginning people, experts, and pioneers develop the technology without too much thinking about security. They are excited about the

new opportunities that are unlocked. Then at some point someone discovers that this technology behaves in an odd way when a particular input is fed to it, and you have a vulnerability, and then another one, and another one. At some point there are so many vulnerabilities that people start asking themselves whether this technology can ever be used in a safe manner. Then we get to this valley, to the bottom, the point where domain experts and security researchers start to join forces to develop a terminology, to develop a methodology, to give names to attacks, and to start designing mitigations. And then

we get to a stage of healthy development, where the main threats to this technology are understood and are to some extent mitigated, and we get to a sort of plateau. This is not very static, because we know that in security we always have new vulnerabilities and new mitigations for them, but we get to a stable state where the technology can be used safely. AI is no different from other technologies in that sense; it is no exception. AI also has vulnerabilities, and we'll speak about some of these in the next couple of slides. What you see here is a typical machine learning system, a

very generic one. There is training data, so in the training phase we build a model from the training data, or training set. This model can be a classification model, a regression model, or it can be other things; it depends on the problem we want to solve. Then this model stabilizes and converges, and it sees input data in a phase that is called inference. This input data, an image, is now classified as the category dog; it's a sort of prediction. In some cases the outcome of the model goes into an evaluation, and then this evaluation, whether it was good or

bad, is fed in a feedback loop back to the model, either to enhance it or, if the model that we are building represents a dynamic environment, to keep the model aligned with this environment and continuing to evolve. When you look at this system from an attacker's perspective, then first, when you think of an internal attacker, or an external attacker that found his way in somehow, using an RCE or any other attack vector, then the sky's the

limit. He can do everything: he can tamper with the model, he can steal the model, he can extract it, and he can modify every particular decision the model has made. However, even when the attacker is not an insider, when he is working from outside, there are still plenty of things the attacker can do. One of them is a collection of attacks sometimes called evasion, adversarial examples, or AI deception, where the attacker creates data points that confuse the algorithm and make it make mistakes. Most of you are probably familiar with, or have seen at least once, this example of a stop sign: you add a couple of stickers to it, and then the AI engine of an autonomous

vehicle looks at this stop sign, and to it the sign looks like a speed limit sign, which of course can have devastating consequences. I think that in every situation where someone tried to create such data points that fool the machine learning model, they were successful with very little effort. Because we are at BSides, we know that whenever you build something without thinking about what will happen when the adversary comes, and then your adversary comes, the adversary's work is very easy. The second threat is training data poisoning. Machine learning models are based on data,

and this data, usually big data, usually comes from outside, from external sources that are not always trustworthy. If the attacker has some control over the data, then he can carry out something that is called a data poisoning attack, and we will speak in depth about this kind of attack later on. Another, slightly more esoteric threat is training data leakage. When you build the model from training data, some of the training data actually leaks into the model; it is there implicitly, and in some cases there are ways to extract this data. If you use sensitive data for

the training, which is fairly typical, because training sometimes uses PII or health records, depending on exactly what problem you're trying to solve, then this is a threat that you should take care of, and you should make sure that you are immune against it. But as I said, we will zoom into data poisoning. So what is data poisoning, and how does it work? On the left side you see a typical machine learning model. It is a linear classifier: it tries to find the best line that separates the red triangles from the blue circles. And the thing

is that if you take a single point and move it to a different location, then the optimal separator will be completely different. So even if you're changing very few data points, in some cases it can have a significant impact on the model that is going to be generated. On the right side you can see a more significant example, where the attacker tries to do something deliberately: he puts in a batch of red dots, which makes the model completely perpendicular to the original model and practically useless for separating

the red from the blue, which is exactly what the attacker was aiming to obtain. Now, the terminology of data poisoning is, I think, fairly new; I only heard about it a couple of years ago. However, this is not a new threat. The data poisoning threat is, I think, almost as old as the internet. I'm sure that when you enter TripAdvisor and you want to pick a hotel, and you see a positive or a five-star review, then probably, like myself, you ask yourself: okay, is this a real review or a fake review?

Because TripAdvisor, like many other travel sites, or actually any site that uses a rating system, counts on data that is coming from outside, and data that is coming from outside can be subject to malicious manipulation. This is the case for travel sites, it is the case for film rating sites, it is the case for e-commerce sites, and everything that has a rating system is subject to data poisoning. These sites are aware of that; they take at least some measures to try to mitigate the threat and to minimize or

to make life harder for the attackers trying to impact the actual rating. Here you see an example of data poisoning in the wild. The attack here is a model skewing attack, sometimes also called a backdoor. The attack occurred at the end of 2017 on the Gmail spam filter. Actually, it is not surprising that one of the battlefields for data poisoning is spam, because this is, I think, one of the first domains where machine learning models proved themselves very effective against adversaries; and for the sake of this discussion, let's call spam providers adversaries. What the attackers did: they wanted

to make the model think that their emails are legitimate, so they fed the model with massive amounts of spam-like emails and labeled them as benign. Their intention was, of course, that the model will learn all the keywords and structures that are within these messages, that it will learn that these structures and keywords actually represent legitimate emails, and that when they send the actual spam, it will go under the radar of the model. But the Google research team detected it and analyzed it, and this chart came from

Google research. Another example, again of spam filters, is an availability attack. You can think about the previous attack as a sort of backdoor, because the attackers wanted to have backdoors within the model that would allow them to do something later. This one is an availability attack. Actually, this is not an attack; this is research on a potential attack. The victim here is the SpamBayes spam filter, and the idea was to pollute the spam dictionary with legitimate words. Because this is an open-source spam filter, they knew how it works,

that it is based on keywords, on a Bayesian network that detects keywords that are correlated somehow with spam or with legitimate emails. They created a large batch of messages that were built from legitimate words, probably very popular words, and they classified these as spam. In this case they wanted to make the spam filter detect more legitimate messages as spam. And indeed, on the right side you can see the results: having control over one percent of the messages was sufficient to make the model classify 80 percent of

the total legitimate messages as spam, or to classify 95 percent of the total legitimate messages as unsure. Both of them make this model practically unusable for any purpose. So probably, like myself at the beginning, you see these examples and you say: okay, we gave the attacker significant power. We gave him the power to provide us data and also to tell us what the meaning of this data is, to do the labeling. So if only we could stop giving the attacker the power to label, then we'd be good, right? Wrong, because another variant of a data poisoning attack,

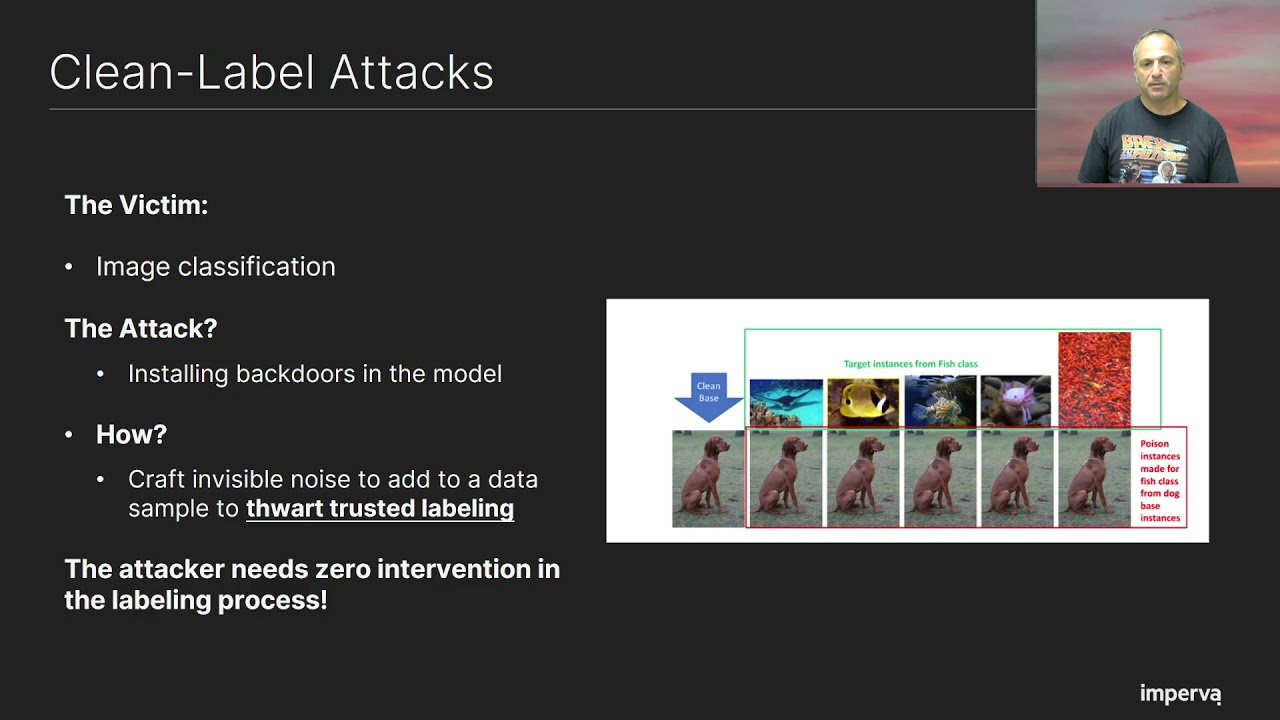

which is called a clean label attack, works when the attacker is giving you the data but doesn't have any influence on the labeling process. The way it works: the victim here is an image classification system, and the attacker wants this model to classify these images of fish that you see on the top as dogs. The way the attacker achieves that: he takes an image of a dog and adds to this image an invisible noise, invisible for me, invisible for you, invisible for the trusted person that is responsible for doing the trusted labeling. So he adds this invisible noise to the

image. This invisible noise includes patterns that are somehow correlated with the top image of the fish. What will happen next is that the trusted labeler will see a dog and will classify this image as a dog, and the model will get this as a dog. Then when this model sees this image of a fish, it will classify it as a dog. This attack works with a very high success rate. You see in this graph, on the blue side, that the confidence of the model when seeing images of fish and classifying them as a dog is more than 95 percent.

Same thing on the bottom, when the attacker wants to classify images of dogs as fish: this attack is extremely successful. The main thing here is that this attack works even when the service provider hires trusted people to do the labeling; still the attack is effective. So we talked about the threats; let's talk a little bit about the mitigation. There are two mitigation approaches which are straightforward. The first one is: let's filter suspicious data. We get a lot of data that we do the training with; now we can

filter data that looks suspicious. Maybe the data itself is suspicious, or maybe it comes from suspicious origins: IP addresses that we suspect for some reason, a suspicious user, a suspicious client; maybe we can filter out all the data that comes from bots, and so on. Another mitigation approach is to do the data sampling for the training data in a fault-tolerant way, to try to limit the impact of data points that arrive from a single entity. Again, an entity can be a user, it can be an IP address, it can be things like that.
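As a rough illustration of the second approach, limiting how much any single entity can contribute to the training set, here is a minimal Python sketch. The function name `cap_per_entity`, the cap of 5, and the sample IP addresses are assumptions made for the example; they are not taken from the talk.

```python
from collections import defaultdict

def cap_per_entity(events, max_per_entity=5):
    """Keep at most `max_per_entity` training events per entity.

    `events` is an iterable of (entity, value) pairs, where the entity
    could be a user, an IP address, or a similar identifier (hypothetical).
    """
    kept = []
    seen = defaultdict(int)  # entity -> number of events already kept
    for entity, value in events:
        if seen[entity] < max_per_entity:
            seen[entity] += 1
            kept.append((entity, value))
    return kept

# One noisy source pushes 3,000 five-star ratings; two others rate once each.
events = [("10.0.0.1", 5)] * 3000 + [("10.0.0.2", 2), ("10.0.0.3", 4)]
sample = cap_per_entity(events, max_per_entity=5)
print(len(sample))  # 7
```

The cap does not decide whether a source is malicious; it only bounds the weight any one source can have, which is why it still works when the attacker's data looks legitimate.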

And again, if you think about Booking.com, for example, I think that you can't rate without having committed an actual transaction. And I'm sure that if you go to, I don't know, Amazon, and you want to rate a particular product, and you push 3,000 five-star ratings within one minute from the same IP and the same user, then you will probably get blocked. So again, this is pretty straightforward, though not always very easy to carry out, because we know that even if we see messages from

different types, they can still come from the same source. But it is what it is. Other, less effective mitigation approaches are detection approaches. One is diff tracking: keep measuring the distance of a new model from the previous model, and if you see that the new model is very different from the previous model, you can conclude that you are under a data poisoning attack. Another is to use a reliable benchmark: build golden data sets for which you know for sure what the result of the model should be, and if the model makes different predictions, then you can conclude that you are under

attack. But again, when the model comes to represent a changing, dynamic environment, these detections are less effective, because you expect the model to change and you don't know what the right prediction is for a golden data set. And of course there is security by obscurity. You can assume that the attackers don't care about you, and you can assume that they don't know exactly how you work, what your model is doing, or what the type of the model is, and that even if they want to attack, they will not know exactly how to do that.

But we are at BSides, and we know that security by obscurity very rarely proves itself an effective mitigation against practically anything. So let me summarize what we got so far. Data poisoning is a significant threat to learning mechanisms. This threat is critical when you use data from untrusted sources. Another important condition is that the output of the model, the prediction of the model, is significant for someone, and I hope for you that every model that you are working with is making predictions that are significant for someone. There is no silver bullet mitigation; there is a collection of mitigations that can be used to

throttle the attackers, but not necessarily to stop them at once. Again, this threat is effective in any place where you have an adversary: travel sites, or any site that uses ratings. Cyber security services are definitely subject to it, because you have adversaries and the data. Next-generation antiviruses actually use data whose origin they do not always know, or which they cannot always trust. So the threat is effective in many cases. Next, I will look at the data poisoning threat when it comes to web security. What you see on the top

is a typical web security setup. There is a web application or API, and there is a WAF, a web application firewall, that protects the application from outside threats, a.k.a. the internet. Usually web application firewalls work by using a combination of two security approaches. Just a second.

There is the negative security model, which assumes that everything is good, that all the traffic we see is good, except for things that we know for sure are bad, usually when we have a pattern match with some rule. The other model is the positive security model, where the WAF assumes that everything is bad except for what it knows for sure is good. It is usually based on looking at the traffic for some time, building a baseline profile of how this traffic looks, and blocking or alerting on a deviation from this profile. This is practically what we call anomaly detection. Each of these models has

pros and cons. The negative security model is more accurate in terms of false positives, but it requires ongoing updates, because it is not effective against zero-days. But the most important thing for the sake of our discussion today is this: when I'm saying that the positive security model means that the WAF learns a baseline profile from traffic, you should now know that we have all the ingredients of a data poisoning threat being fulfilled, because data comes from outside, and of course attackers want to make the WAF make mistakes. So no doubt this situation is a good place for data poisoning.
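To make the contrast concrete, here is a toy Python sketch of the two models and of how a poisoned learning period widens a positive-model baseline. Everything in it is an assumption for illustration: the two signatures, the profile features (maximum length and character set), and the sample values do not describe any real WAF.

```python
import re

# Negative model: allow everything except known-bad patterns.
# The two signatures below are illustrative only.
SIGNATURES = [re.compile(r"<script", re.I), re.compile(r"union\s+select", re.I)]

def negative_model_blocks(value):
    return any(sig.search(value) for sig in SIGNATURES)

# Positive model: learn a baseline from observed traffic, block deviations.
def learn_profile(values):
    return {"max_len": max(len(v) for v in values),
            "chars": set("".join(values))}

def positive_model_blocks(profile, value):
    too_long = len(value) > profile["max_len"]
    new_chars = not set(value) <= profile["chars"]
    return too_long or new_chars

clean = ["alice", "bob42", "carol"]
attack = "bob'--"  # matches no signature, so the negative model misses it

clean_profile = learn_profile(clean)
# The attacker slips one crafted value into the learning period.
poisoned_profile = learn_profile(clean + ["x'-x'-"])

print(negative_model_blocks(attack))
print(positive_model_blocks(clean_profile, attack))
print(positive_model_blocks(poisoned_profile, attack))
```

With the clean baseline the positive model flags the value; after a single poisoned sample is learned, the same value falls inside the profile.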

So again, for the sake of this discussion, let's assume that the web or API traffic profile looks essentially like that. You have objects, which are the red items here. You can think of them as parameters: query string parameters, body parameters; you can also think of a cookie as a sort of parameter. Each parameter, each object, is contained in a container, which can be a URL, a URL and method, maybe a host and URL, or a global parameter; it can be different combinations of host, URL, and method. Each one of them has a traffic profile, which is the representation of all the values that are permissible to go through

this object, through this parameter. For the features of this profile, it usually makes sense to use features that have some web security meaning, because they are somehow correlated with the way we understand web attacks. So it makes sense to learn the type of the parameter; its multiplicity, whether it can come only once or multiple times within a single request; whether it is optional or mandatory; if it is a number, what the typical sizes are; if it is a string, what the potential length range of the value is; and what the permissible characters for this parameter are. All of

these are things that can be correlated with different web attacks, and it makes sense to learn them. In order to do the learning in a robust manner, this is a typical way to achieve that. In the first phase you can do cleaning, which is the first mitigation: filter all the suspicious traffic that you can think of. If you have events, requests that were classified by some engine as suspicious or malicious, then you can throw them away. If you have requests from IPs that you have some reason to think are suspicious or malicious, then again you can throw them away,

if you know that these IPs generate malicious traffic. Also, when you have an attack on a site, you can take the whole batch of requests during this attack and throw them away, because you can assume that some of the attack was missed. And in some cases it also makes sense to throw away data coming from bots. Then the second filtering is to do this threshold learning: to learn things only if you see them coming not from a single entity but from a collection of different IP addresses or other network domains, different user

agents, different geolocation countries, different identified clients. And if you want to avoid learning from a burst of requests coming at the same time, then you can require that you learn things only when you see them across several days, or at least several hours. Again, you can play with the different attributes and put a threshold that makes sense for your application on any of these. Now, this threshold learning is very easy to carry out in batch processing. However, if you want to do batch processing, you need to buffer all the data that you've seen along

the learning period, which can be a day, a week, or months, depending on how exactly you set the learning period. In many cases this will be impractical, or at least very expensive, and there is a need to find a way to do this in a more memory-efficient way. At least at Imperva, we try to do everything we can in a streaming-friendly fashion, in a way that we don't have to keep huge buffers that hold all the data, and we can use more efficient

structures. In order to present the streaming-friendly solution that we developed for robust learning, I will take you to a completely different world. This is, by the way, a completely fictitious story; it is not real research that we carried out. It is important to say that in fact I don't have a dog, I have a cat, so I'm not biased towards any dog food brand. We want to do some kind of competition between two dog food brands, Pedigree and Theo. So we do a sort of poll among

different users, and in the yellow text you can see the results: we got 12 likes for the Theo brand and six likes for the Pedigree brand. However, we are afraid of data poisoning. We don't want to give too much power to a single breed or a single city, so we set a threshold of at least three cities and at least three dog breeds in order to accept the fact that Theo is a tasty food. When we do this threshold learning, only Pedigree, which has the six likes,

passes. The reason for that, as you can see here, is that for Theo there is no Saint Bernard that gave it a positive tastiness indication, only Pomeranians and German Shepherds. For Pedigree, however, we have quite balanced voting: we have two votes from three cities and three dog breeds, and then we're good. The reason for this sort of anomaly, for this phenomenon, is in the data that we have: you can see that all the New Yorkers that have Pomeranians really like Theo, and there are quite many of these, because our data is biased

towards New Yorkers that have Pomeranians. This is exactly the thing that we wanted to avoid: we didn't want to let this bias in our data impact our decision. So how do we do the learning on this data? First, Pedigree is an object. For this object there is a fact that we want to learn: whether Pedigree is tasty or not. We define the threshold learning on two attributes, city and breed. For each one we put a threshold of three, and for each one we maintain a set, for example a set of all the cities from which we've seen a tastiness indication for Pedigree.
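The structure just described, one set of distinct values per attribute for each (object, fact) pair, can be sketched in Python roughly as follows. This is a minimal sketch and not the speaker's implementation: the class name `ThresholdLearner`, the method names, and the individual votes (including the cities other than New York) are hypothetical. The sets are dropped once every threshold has passed, following the memory argument made later in the talk.

```python
from collections import defaultdict

class ThresholdLearner:
    """Streaming threshold learning over Boolean facts (illustrative sketch)."""

    def __init__(self, thresholds):
        self.thresholds = thresholds  # e.g. {"city": 3, "breed": 3}
        # (object, fact) -> {attribute: set of distinct values seen so far}
        self.seen = defaultdict(lambda: {a: set() for a in thresholds})
        self.learned = set()          # (object, fact) pairs that passed

    def observe(self, obj, fact, **attrs):
        key = (obj, fact)
        if key in self.learned:
            return                    # already accepted, nothing to store
        sets = self.seen[key]
        for attr in self.thresholds:
            sets[attr].add(attrs[attr])
        if all(len(sets[a]) >= t for a, t in self.thresholds.items()):
            self.learned.add(key)
            del self.seen[key]        # the sets are no longer needed

    def is_learned(self, obj, fact):
        return (obj, fact) in self.learned

learner = ThresholdLearner({"city": 3, "breed": 3})

# Hypothetical votes: 6 balanced likes for Pedigree, 12 skewed likes for Theo.
pedigree = [("New York", "Pomeranian"), ("New York", "German Shepherd"),
            ("Austin", "Saint Bernard"), ("Austin", "Pomeranian"),
            ("Chicago", "German Shepherd"), ("Chicago", "Saint Bernard")]
theo = [("New York", "Pomeranian")] * 11 + [("Austin", "German Shepherd")]

for city, breed in pedigree:
    learner.observe("Pedigree", "tasty", city=city, breed=breed)
for city, breed in theo:
    learner.observe("Theo", "tasty", city=city, breed=breed)

print(learner.is_learned("Pedigree", "tasty"))  # True
print(learner.is_learned("Theo", "tasty"))      # False
```

Note that the eleven identical Theo votes occupy one entry in each set, so memory grows with the number of distinct attribute values (bounded by the thresholds), not with the number of votes.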

And we have the same structure for Theo, and the same for other facts, like whether this dog food brand is nutritious. At the beginning we see no data, so we are convinced of nothing; we don't believe anything. We wait for data to start being convinced. Data comes in, and now, looking at the data, we see that Pedigree tastiness has tastiness indications from three cities and from three dog breeds. Now we know that we're good: we passed the city threshold, we passed the breed threshold, and therefore we now accept that Pedigree is tasty. On the other hand, for Theo we have only two cities and only two dog breeds, and therefore it didn't pass

any of the tests, and we don't believe that Theo is tasty. So what we learned in this example is that we can learn Boolean facts: whether an object X, in this case a dog food brand, has a property Y, which is whether or not it is tasty. This is a Boolean fact. And you probably understood that the memory consumption here is proportional to several parameters: to the number of objects, of course; to the properties, or facts, that we want to learn about every object; to the number of attributes; and also to the thresholds, because we need to keep all the cities from which we've

seen these tastiness indications until we pass the test, and then we can throw them away; but until we pass the test we need to keep them. The thing that we wanted to achieve is that it is independent of the size of the data itself. This makes this implementation of threshold learning memory efficient, and it can be used in streaming situations and environments. So we have this model of Boolean facts. How can this model be used when we want to build the web profile or API profile and to enforce it later? Actually, it

is very straightforward. When we see a data point, a request, we can extract, for a given fact, whether fact X was seen within this request. We collect all these "fact X seen" indications, and then we try to learn whether this fact X passes all the threshold tests that we have. If it passes all the thresholds, then we can define it as allowed, and it can now be part of the profile. If it is not allowed, meaning that there was at least one threshold it hadn't passed, then we have a different flag within the profile, which is "fact X prohibited". So think of a web profile as a

collection of "fact X allowed" and "fact X prohibited" flags for different facts. During inference, when you see a new request, you extract all the "fact X seen" indications from this request, and you enforce by looking for a "fact X seen" in this request that corresponds to a "fact X prohibited". When you have a match, you have a contradiction to the profile, and you can declare a violation. So this is how the enforcement can work, and it is pretty straightforward. So the Boolean facts approach works well with web or API profiles. But is this enough? Meaning,

we can do Boolean facts, but what can you express with Boolean facts? Let's start with the objects and containers. We talked about URLs, hosts, parameters, and cookies. These go along pretty easily with Boolean facts, because if you want to learn that a particular query string parameter X belongs to URL Y with method GET or POST, then the nature of this piece of information is Boolean. So this is pretty straightforward, and it is the same for which URLs you have, which cookies belong to a URL, and which methods are available for a particular URL. It goes fairly easily. However,

you also want to answer questions like: what about things like data types, ranges, character sets, and so on? If we look now at the type of a parameter, then you need to do what in data science is called one-hot encoding, and you divide by each of the categories of parameter types: numeric, string, None if it represents a non-existent value, or Boolean. So you have a "num type allowed" flag and a "non-num type allowed" flag; they are different. And you also have the corresponding opposite ones. So I either have a "num type allowed" for this parameter in

the profile or a "num type prohibited" in the profile, and you have one from each of these pairs. So how do you use them? Suppose you want to express in the profile the fact that a particular parameter is a string. Then you learn that a string type is allowed for values of this parameter, but you fail to learn that a numeric type is allowed, and therefore you have that a numeric type is prohibited. And if you only see strings, then you cannot learn that non-str values are allowed, so you will learn that non-str types are prohibited. And then when we see x equal 23,

it is prohibited, and we have a violation. If the type is a mail address and you have a regular expression that represents a mail address, then again, I believe that from the first example you understand that the more important flags are not the green ones but the red ones. If you only see email addresses, then you have in the profile a "non-mail regular expression prohibited" flag. Even if you've seen, one or two times, things that are not compatible with the regular expression, they probably didn't pass the thresholds. And then when you see, for example, abc in the value of this parameter, which does not match the mail regular expression,

it contradicts this "non-mail regular expression prohibited" flag, and again you have a violation. Whether the parameter is mandatory or optional can be addressed with "missing prohibited" or "missing allowed" flags. When you want to express the fact that a parameter can or cannot have a None value, then you do the same with "None type allowed" or prohibited and "non-None type allowed" or prohibited. When you want to express the fact that multiplicity is allowed for a parameter, then again you can do it in the same way: you use "multiple occurrences allowed" or prohibited. When you want to handle a character set, then again you can build flags that are

corresponding to character sets like base64 or to particular characters for example if you want to express the fact that alphanumeric is okay and the colon and semicolon are okay but any other special character is forbidden then you will have str type allowed and alphanumeric type allowed but special characters prohibited for the different special characters except for the ones that we've seen when you want to learn numeric features then you need to do more of a trick a discretization of the range into several ranges so if you're

in the learning and you want to include in the profile the fact that the parameter length is between 34 and 345 characters then you have to define in advance thresholds like 50 and 500 and to learn boolean facts like whether the length can be greater than 500 or greater than 50 or greater than 5000 or lower than 10 and that can help you to express this thing i won't get deep into the details of how it is done but i want to convince you that it can be done so we talked about a profile and about the features of a profile let me

try to explain how the boolean facts framework can be used for more complicated models like decision trees on the right side you see a very simple decision tree this is one of the more popular machine learning models and you have there two features whether a particular animal breathes air and whether an animal lays eggs and each of them is a boolean and when you see an animal then the decision tree says that you go yes or no on breathes air and on lays eggs and in the leaves you have the classes the different potential decisions this is a very simple model and usually it is used in

something called an ensemble where you have a collection of models that work together one type of ensemble is bagging where you build many models independently and then you do some kind of voting or averaging of the predictions of the models another approach is called boosting where you don't build the different models independently but you build each model in a way that corrects the mistakes of the previous model and then you have a collection of models that together provide a good result so how can we use the boolean framework in this situation let me first explain

what you see here in this decision tree so if we look at this node petal width smaller or equal to 1.65 then 48 samples from the training data reached this node 47 of them had the green class and one had the purple class and the decision if we stop at this node is the one that has the most examples which is the green class versicolor so for building the tree the boolean framework cannot help you although there are ways to build trees in a streaming manner but the boolean framework can help you to do validation of the tree and the way to

do that is you can add sub-leaves to each of the leaves you can also add sub-leaves to the nodes and all these sub-leaves are per class if here in the leftmost leaf we had only the green class then we'll have one sub-leaf for the green class if we have a node that has two classes it will have two sub-leaves representing both of them and now we go and use the threshold learning and the boolean facts to validate these sub-leaves so you can see here for example for this node with the 47 and one

you have 47 data points that had the green class so let's assume that they passed all the thresholds however you had only one data point for the purple class so it probably didn't pass the thresholds so you learn only nodes and branches and leaves that pass all these tests and now the tree that you're getting is actually a subtree of the original tree there is a caveat here beforehand we had exactly one decision per sample and now we have situations where for example if we reach this point then we have nothing that is validated so there is no decision from the model and if we reach this point for example

where the tree was truncated at this level then this point has two decisions so we need to handle this situation but there are many ways to do that so this is a way to do the validation of the tree what can we do when it comes to ensembles when it comes to bagging this is actually straightforward once we have the models set we can validate the trees and in fact it is even easier because the problem that we had of no decision or multiple decisions goes away when you do averaging or voting you can say okay when i have no decision then i have no vote when i have two decisions

two permissible decisions then i give two votes one for the first class and one for the second class so it may actually make sense for boosting ensembles it is less straightforward to use because when i say tree validation this is not exactly validation it goes beyond that it is actually also changing the tree and since we have a path of optimizations here then if you change a tree you need to make sure that you will not impact the rest of the chain so it might be useful also for this area but it is probably more complicated

to obtain that this is a good direction for research i believe so let's sum up data poisoning is a significant threat for learning mechanisms it is there whenever you do something meaningful based on data from untrusted sources with or without trusted labeling and threshold-based learning may provide an adequate robust learning solution the boolean facts framework that i presented provides a streaming-friendly implementation for threshold-based learning and many features when you build a profile can be expressed with boolean facts even if it seems like a limited model it is not that limited it can work also for

numeric and for categorical features and traditional learning of trees and forests is partially possible with boolean facts i showed you that you cannot build a tree in this manner but you can do validation of the tree to make sure that your tree is fault tolerant and that's it thank you for listening and now we have a couple of minutes for

questions
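
The threshold-based learning of boolean facts described in the talk can be sketched roughly as follows. This is a minimal illustration, not the speaker's implementation: the class `BooleanFactLearner`, the helper names, the fact naming scheme, and the two thresholds (`min_count` occurrences from `min_sources` distinct sources) are all assumptions made for the example. The same learner is used twice, once for the one-hot type flags of a parameter and once for validating per-class sub-leaves of a decision tree node.

```python
import re
from collections import defaultdict

TYPES = ("num", "str", "null", "bool")


class BooleanFactLearner:
    """Streaming learner: a fact becomes 'allowed' only after it passes
    all thresholds; anything not learned is treated as prohibited.
    The thresholds below are hypothetical, chosen for illustration."""

    def __init__(self, min_count=10, min_sources=3):
        self.min_count = min_count
        self.min_sources = min_sources
        self.counts = defaultdict(int)
        self.sources = defaultdict(set)

    def observe(self, fact, source):
        self.counts[fact] += 1
        # Stop recording sources once the threshold is met,
        # so memory per fact stays bounded on a stream.
        if len(self.sources[fact]) < self.min_sources:
            self.sources[fact].add(source)

    def allowed(self, fact):
        return (self.counts.get(fact, 0) >= self.min_count
                and len(self.sources.get(fact, ())) >= self.min_sources)


def value_type(value):
    """Very rough type detection for a raw parameter value."""
    if value is None:
        return "null"
    if value in ("true", "false"):
        return "bool"
    if re.fullmatch(r"-?\d+(\.\d+)?", value):
        return "num"
    return "str"


def type_facts(value):
    """One-hot style facts: '<t>_type' for the value's own type and
    'non_<t>_type' for every other type."""
    kind = value_type(value)
    return {f"{kind}_type"} | {f"non_{t}_type" for t in TYPES if t != kind}


def violations(learner, value):
    """Facts of this value that the profile never learned as allowed."""
    return sorted(f for f in type_facts(value) if not learner.allowed(f))


# Profile for parameter x: many string values from several clients,
# plus a single numeric value from one (possibly malicious) client.
profile = BooleanFactLearner()
for i in range(50):
    for fact in type_facts(f"name{i}"):
        profile.observe(fact, source=f"client{i % 5}")
for fact in type_facts("23"):
    profile.observe(fact, source="attacker")

print(violations(profile, "23"))     # x=23 contradicts prohibited flags
print(violations(profile, "hello"))  # a string value is fine

# Tree validation: one sub-leaf (boolean fact) per class seen at a node.
node = "petal_width<=1.65"
tree = BooleanFactLearner()
for i in range(47):
    tree.observe((node, "versicolor"), source=f"client{i % 5}")
tree.observe((node, "purple"), source="attacker")
validated = [c for c in ("versicolor", "purple") if tree.allowed((node, c))]
print(validated)
```

In this sketch the single numeric observation and the single purple-class sample never pass the thresholds, so they leave no trace in the learned profile or in the validated tree, which is the robustness property the talk is after.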

i can't hear anyone

dropping out um video issues so i'm gonna go ahead and end this broadcast now um thank you for coming thank you for presenting um if you can make sure to stick around in the track one uh breakout session in discord to look for questions from our attendees but beyond that um we do have a question from ryan coming in which is interesting information more of a statement again again um so please go ahead and jump into the discord room and i'll go ahead and close this session out okay so i'm in a track one breakout yes okay awesome

hi there i'm melissa miller and i'm a hacker i'm also a security researcher and an advocate for hacking is not a crime and i'd like to share with you what being a hacker means to me because you see since i was a young young child i've always been a hacker i was the kid that liked to take my toys apart to figure out how they worked and to see if i could make them work better when i turned 12 years old i got a job i saved up enough money and i bought myself my own computer and on that computer i wiped it clean started from scratch figured out how to build it from the

ground up learned programming so that i could write my own software to run on my computer i learned modem communications and serial communications so that i could figure out how these online services that i like to use were working and so when i got into my career i took a lot of different twists and turns i started off as a penetration tester i moved into consulting i worked at high executive levels building massive application security programs across large enterprise organizations i work for product companies and resellers but through it all the one thing that stuck with me was this identity of being a hacker now we hear the word hacker thrown about in the media and

it's usually connected with some type of criminal activity but being a hacker does not mean being a criminal being a hacker is all about this innate curiosity this passion to understand how things work and to see how we can make them work differently better create new things i'm reminded of a quote from a keynote given by jason street one of my colleagues in which he said hackers are inventors and creators not criminals and freaks and that's the reality hackers are people who want to make technology better we want to make it do cool new things we want to understand how it works so that we can innovate we can make things better and we can make our lives all the more

exciting through technology so i hope you'll join with me and with hacking is not a crime to spread the message that hacking and being a hacker is not a crime we're not criminals we're artists and inventors thank you so much
