Using Machines to Exploit Machines — Harnessing AI to Accelerate Exploitation

Show original YouTube description

Show transcript [en]

good afternoon everyone and welcome to our talk and we hope that this slot will not get you too drowsy everybody had something to drink after lunch you're a bit more awake and we'll try to make this if not entertaining and least plausible in a sense before we begin there's a lengthy disclaimer that you all required to read and sign off on that's it no no in all seriousness I used to work for Intel not any longer which is why you can see me crossed out at the bottom when why I can make this joke and say you don't really have to read this anymore

someone has to my name is guy and this is Ezra we are both co-organizers of the tel-aviv besides which happens around June and you're all very welcome to join us in sunny Tel Aviv it's a pretty awesome besides just like here and we go and often talk about topics that cross both of our domains of expertise with this talk being one of them we do have some experience and we travel the world to some of the finest conference out there we both believe that besides service the most of our most lovable best conferences we love to attend loved to speak at because this is the one that's most homey most approachable most community driven and

this is something wanted to point out before we begin a bit of a history about how we came about to this topic and about the talk that we're going to do today because it's maybe a year ago about a year ago yeah and we're driving our car or a rental car and we're like wow this year we have some very good content and we were like we have we're talking about machine learning it was like so last year we did a talk here in this very same room about machine learning huh and how he would hack machine learning and we were trying to figure out okay this is a good topic this is something interesting which we

both like what can we do with it next what's the next step and that next step and that conversation led us here today which is how can you actually use machine learning to help us in our everyday life in our jobs of actually finding and exploiting issues in various systems that we explore so let me let me start with with our problem and and how it is related to our last talk last year our objective was hacked the hell out of machine learning and what I'm talking about that I'm not talking only about that were in mikkel part of the adversarial networks I'm also talking about finding benevolence the different frameworks that exist for different

machine learning purposes so we need what we researchers love to do the most and it's basically from a father and this father started to generate thousands of crashes to analyze and you can imagine that this is a good problem to have having gallop crashes good but take a lot of brushes brings us a small issue not of those cracks are exploitable and automation can do some good work but we made this some things I mean using heuristic approaches to find which power brushes with the gdb are exploitable boards but we make this a lot of interesting stuff on the other side doing it manually it's extremely expensive me as a researcher and math team of researchers can only go through

a small amount of explosive crashes like imagine for every single craft need to reproduce we need to open our GDP debugger and we need to take a look of the tire flow to know if it was exploitable or not and well this doesn't scale at all and when we when we saw that it doesn't scale we thought that our work would be mostly about building the model because we had the crashes and there and we thought well we are going to be working a lot on building this model and then so that the real problem was starting to gather the data because imagine now that we have thousands of crashes but we don't even know what's

their status we denote their good pressures bad crashes is same crashes we don't know anything about them so gathering the data became a big problem and then then we struck the real problem which is gathering good data because there's a big difference between data and good data like if I have 10 thousand crashes that all of them are going through the exact same path it's the same of having a single crash it's not relevant for me at all so this was a problem finding what is a good data finally how we can sift through different crushes and try to understand how we can leverage them so our problem statement became something like this can we as

researchers generate a machine learning model that can help us with the triage crushes and help us find exploitable ones and again remember that were problem born with thousands of fatal crash so to summarize that and kind of like put the focus that put the spotlight on our problem is we have a team of researchers it may in this case my team had about five researchers five researchers can go through I don't know maybe ten to twenty crashes a day in a very good day we have 10,000 crashes this does not scale this is not the solution and more than that in order to look at those 10,000 crashes usually you will see a lot of crashes that are

duplicates of each other and that means you are wasting a lot of time in finding out that they are duplicates of each other so the big question is can we use machines to help us sort out the exploitable paths or the exploitable crashes that we actually want to look at and invest the time and resources that we have into where we can actually drive impact so that was the big research question and the way we try to tackle it is to see if we can build a machine learning model that would outperform would actually be better than the best known alternative which is an open-source project which is called exploitable because the name is so

confusing I have underlined it and every time that I will refer to open source project and it says exploitable with an underline I mean the open source project and I say exploitable another way means it's just exploitable so this talk will not show you how to exploit the next zero day this talk will not be releasing new tools and methods in order to exploit something new that you haven't seen before but what we are going to show in this talk is how to utilize how to build such machine learning models see where they can help you and where they cannot and try to differentiate between the two cases and walk you through the research path that led us

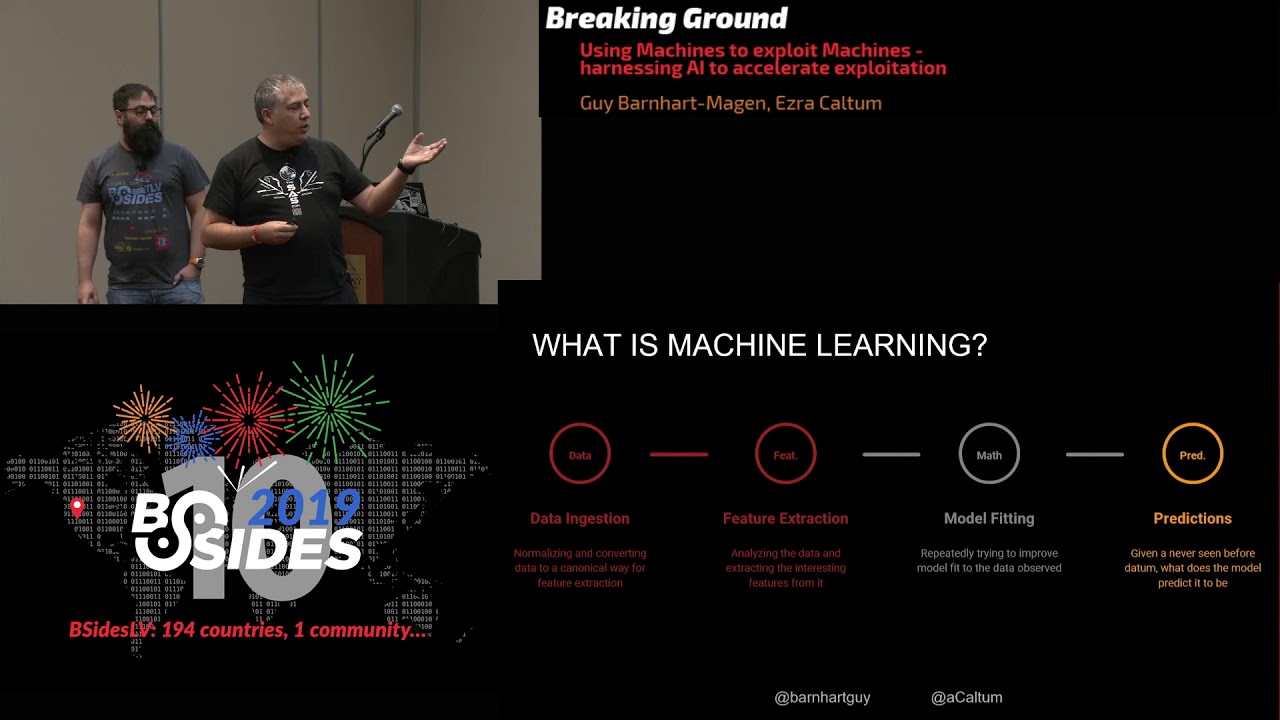

from an idea to something that actually works and the hiccups that we found along the way we do want to say beforehand we really can trust our results and we'll explain in the end why we can trust the results but you should always keep a very specific eye on the details because the details are what really really matters so we'll be happy to expand after the talk if someone has specific questions or issues so I'm not going to do this like 101 into machine learning what it is but I'm going to give you like a very high-level overview of how machine learning actually works so machine learnings has a couple of different paths when you building a

machine learning bundle or running a machine learning model the first step is that you have to give it the data and in order to give it the data you have to collect the data from whatever sources you get your data from and then you have to extract from that data the pieces of information that you want your machine learning model to look at for example if you're looking at the log file and log file is the large piece of data maybe I don't know 1 million log lines but the machine learning is not really reading 1 million log lines you have to extract from each line the specific items that you want to the machinery want to look

at the time step the host name the alert level the stuff that you wanted to actually look at this is called feature extraction so we have the data and we have to filter out the specific pieces of information that you want to look at and then we good we provide the model with that information and the model will go through an iterative phase where it will train on that pieces of information and will actually fit the model to the information that it saw and this is a very important distinction to make when a machine learning learns something it learns on basis of stuff that it had seen it does not learn on stuff that he has never seen before

therefore when you're hearing someone saying I have a machine learning model I trained it on data set a it's now capable to find out anything and everything where in the world it's probably not true okay keep an eye on the details the last point is that after the model has already learned the information in it internalized that information the next level is to make predictions so if I have a machine learning model that learns how to distinguish between cats and dogs so it learns about a lot of features of cats and dogs and now I can give it an image that it has never seen before and it will give me a prediction is it a cat or

is it a dog and the way that it works if we say okay I've never seen this picture before but it looks very similar to all of their pictures of cats that I've seen before though this is probably a cat and the prediction would be something like this is a cat 0.7 probability okay so this is how machine learning usually works machine learning is not magical I know some people think it is it's not it's not magical it's not difficult it's not complex at least when you starting out and then you make a lot of fine-tuning and nuance and stuff like that in the end you can think about it there used to be a show what was the

name of the incredible the Mystery Machine the Scooby Doo and there at the end of each show they would go up to the the villain and they will unmask him and they show odd it was you all along you meddling kids whenever someone who says AI a machine learning turn off the hood you see that it's linear regression okay so it's not difficult it's not complex but in the end it's a tool that fits some sort of problems and does not fit other types of problems and it's a very good buzzword just like other buzzer to be all like like blockchain and cyber and zero trust and stuff like that so it's very good to

keep it in your talks and the general rule of thumb is it's machine learning if you see code it's AI if you see presentation keep an eye on the details so we'll give you an example when you write a talk and you submit to a conference you don't say you're doing machine learning talk you are hacking AI you get much more acceptance rate that way but in the end everybody that says AI really means machine learning or statistics or statistics or linear regression or heuristics in the end it's all comes down to the same thing so it's not that we are not on board the hype train just a different we have a different look

from the inside so what is machine learning good for if I already struggle stop that it isn't it's very good at finding patterns and we as researchers trying to find patterns it's a good tool to have in our arsenal because if I'm if I need to scan 1 million log lines to find some patterns obviously I'm not going to do it manually having it to run on my screen trying to catch this something is miss formatted I'll give it to a tool will do grab and find it much better than myself the same way machine learning can find patterns where us as humans can't really see those patterns another thing that machine learning is very good at is looking at variable a

variable B then variable C and say these variables correlate together in some sort of way and if you can look at that information sometimes if you can find new links new opportunities that you haven't thought about before so if you fit at the right information it defines the right correlations you can learn something new about your data set that you didn't know beforehand and in the end something that you can do with machine learning it's a bit difficult to do with the better script you can abstract the problem throw that machine learning and see if something comes out the other end and if something does come out the other end it means that there's some sort of structured information

there that the machine learning was able to catch on to which means that you can catch on to which means that you found something new that you didn't know before and this is kind of what we were going for here we knew how to find exploitable patterns in code we didn't know how to write programs that will find exploitable patterns code so we just obstruct abstracted the problem way and called it machine learning and said look this some it this is something that is exploitable I know it's exploitable you find out why and tell me so this is what we try to achieve we wanted a system that we will give it some input and it will tell us

this is exploitable and why okay part of the problem is that machine learning is given predictions based on information that it has seen in the past so if I give it some sort of a new exploitable path that it has never seen before it will not be really able to tell me that this is exploitable and it's something that we kind of took into account okay there are 80 percent of the exploits out in the world come out of the same group causes so if you train the machine to find these root causes we will cover 80 percent of the world and the other 20 percent of the world is something that we've never seen before

and requires manual work and we might miss it with this system and you know what that's fine that's perfectly fine because if I can go over ten thousand crashes and throw away a thousand of them because they're all the same thing I saved a lot of time I saved a lot of resources and I'm very happy with that result so what we try to do is to create this data set test it validate it and to make sure that we actually actually have a working what would call it product but least a tool a tool that you can use in order to better facilitate your research activities in order to do so we first have to teach or understand what a crash

is to the machine what it needs to focus on and how we would go about describing those issues so before we start describe what the Machine does we'll start with what a human does and with that as well so I'm going to tell you a little bit about my life I love thinking about my life particularly I'm morning life or how like to call it when I arrive to the office but actually when I wrecked the only starts with the night before the night before I set up some of my passers to run on certain applications and started running in the background and I went home because I'm lazy and I need sleep the next morning I arrived to the

office extremely early from 11 o'clock and I drank my first cup of coffee because it's not like an opportunity afterwards I start seeing what were the results of that puzzle session I open the debugger I load into gdb the core logs from the crashes and I start to analyze them based on my experience based on what I had seen before based on the fact that I had seen before and the way I had analyzed crashes it passed I classified those crisis we either a potential to be exploitable or not and afterwards the real fun starts which is developing a proof of concept to for the crashing trying to actually exploit it this is the fun part of my day week here

whatever as we have seen until now with the dark until now it seems like a classical data problem because I have multiple brushes based on experience and I expect a result with a certain degree of foraging so if we are doing with machine learning a machine earnings do not need a slave nor coughing which is probably they will start a revolution eventually the prepper chief face is the same thing as me opening it with a debugger and extracting the different registers on the different data that I needs to perform I work and then the machine learning analyzes today dot is a experience I can have like its we train the model to learn from old self from

experience we managed to transfer this knowledge and amidst the same predictions and afterwards it will probably return to me to develop the concept we are still not on a place where the machine learning will be able to generate the payload that will generate a nice explode

so in order to train our model the first thing that we needed is something that will teach it experience or to give it experience and we looked about the internet we looked about our office we looked about our friends and we asked everyone do you have like a very good data set of a couple of exploitable crashes that you know what they exploit and what is the exploitable path in them so you know that this a program crashed and you know that if you will use this payload you will get a buffer overload in this address and the answer was there I have lots of fair crashes but no I don't really know if it's exploitable or

not so the peak problem was not that we were weren't able to get data we wanted good data we wanted data that we will know that it's either really exploitable or the summer took a look at it and it's not exploitable because all of the other pieces of data which is yeah it might be exploitable expert or it might not be exploitable doesn't really help us teach the Machine anything okay sorry so we search about we talk to people and finally we stumbled upon DARPA Grand cyber challenge and the reason that this really came as a huge bonus for us is because they were Ronnie already done all of that work for us for that

competition so I was not going to do this what this ARPA the Darbar cyber Grand Challenge was however what they do provide is they provide the Bahamas of six hundred and thirty two different exploitable crashes that come with a nice little wiki that says exactly for each and every binary what kind of exploitation technique you can use against that binary and they're already wrote the exploits so now we have a data set where we know for each and every binary in that data said that it is exploitable and we know how exactly it's exploitable so now we have something that we can use to teach the machine so we took that that data set and we ran it

through that open source exploitable and the open source told us that out of that 632 cases 607 are definitely exploitable twelve were probably exploitable and he didn't have an answer regarding the other thirteen so this is our baseline that means we know that all 632 are really really exploitable and the open-source package only found 607 to be exploitable so this is the baseline that we are going to measure ourselves against can we do better than that so in order to do that we looked at what kind of information we can use and we have tons of information we can look at the registers we can look at the addresses at the stack start at the spec

itself we can look at the heap we can look at the the control flow information we can look at a lot of different pieces of information and after we analyze a lot of that we said ah screw it we don't know we'll just take something simple see if it works and if it doesn't work we'll make it more complex but let's start small so we started with just a regular registers there EAX EBX pointers and segment registers stuff like that so our first step will take the crash dump then we extracted the registers from the crash down and we fed those registers for each crash we fed it to the machine learning to teach it

something and we'll talk a bit about how we taught the machine so we created a pipeline that just read the binary the binary crashed we captured the crash logs the crash down we analyzed the crash down and we took that crash dump and forked it into two different processes the first one took it and ran it against exploitable and the other against the machine learning under test ok so we can compare those results and then we hit a problem we hit a lot of problem this is just the first one I'm going to describe and maybe someone can see the problem right from this graph this graph is just showing the value histogram sorry not you some of the

value spread for the ECX register and what you can see here that if you look at the value of the ECX register over the 632 different runs of the program of the different program and see a lot of times it's a very low value sometimes it's a high value and very not often it's somewhere in between but that means is that most of the time when we are running this and we get a crash will either have very low value of a very high value so what does low value and high value mean here a register holds a piece of information the ECX register usually holds either one of two types of information either it's a value for computation like 1 plus

1 2 plus 3 usually it's a very low value or it's an address an address is usually something which is very large because the way of that the address is structured if you translate into what an integer value so either they say X is holding an address or if the new six is holding a value so that's kind of what you see in this graph and these two cases are dramatically not the same because if I have a crash and e6 was holding an address this is something interesting of type 1 and if we hide the crash and it held only a value like 5 it's interesting but of a wholly different degree so we need to we needed

a method a way to differentiate between these two cases because when we try to teach the machine learning model this it just got really really confused because they didn't know how to differentiate them so what did we do so there are a couple of different techniques of how to circumvent this problem the technique that we finally decided to go with something called pinning pinning is to take your value space and just chop it up into blocks you can do it uniformly or you can do it oniony formally but in the end we just took the easiest route we are lazy as as I mentioned and we took the entire range and split it into ten different blocks and then most of

our values either fell in the first block or in the last block and very uncommon ly summon the blocks in between but once we did that and we could teach the machine that says ok if you see something in the first block this is type a and this is something in the high block this is type B it made it much easier for the machine to understand and actually analyzing the value of the ECX register in each machine we thought because we don't really care about the value we just heard about the different cases so the first machine learning model that we tried to use maybe small introductory for that usually when people are discussing machine learning they mean a

very specific type of machine learning and that is the neural networks this is like the most common case neural networks is great but we obviously couldn't use it and I said obviously because it's really not obvious so one of the things that I said you really need to mind the details is the that in order to use any type of machine learning or more advanced models you have to tell it something about the good cases but also about the bad cases so you have to teach it like a crash that is exploitable but also to teach about a crash that is not exploitable and this is a big problem because we have a big data set well not not being

but pretty big data sub 632 crashes that we know that they are exploitable but we don't really have the other kind we don't have a crash that we analyze and we know for certain that it's not exploitable and this is also a very tough cookie because in order to validate that some crash is not exploitable you still need to invest time and effort in resources to analyze it to someone have to have someone sign off of it and say now this is not exploitable and usually it comes with like a mile grunty I don't think I can exploit this maybe it's exploitable by someone else so we can't really know if something is not exploitable we know

when it is exploitable because we know how to exploit it but we are not really sure if it's not exploitable by the mere fact that we didn't find a way to exploit it so it's not the same thing so what we chose to do is to go into a different branch of machine learning algorithms they take a single class a single class our algorithm just says that you only know one thing about the problem we don't have a lot of types of information about the problem so what we do here is we know all of our data set actually fits into the same class we know it's exploitable remember we have the wiki and now we will use these types

of machine learning algorithms in order to teach it something that we already know so the first type of algorithm is called one class SVM or one class support vector machines and very hand waving I will explain how it works so imagine we have this black spots and these white spots and we are trying to find a line that will separate the two groups from one another okay this is what the machine learning does it will try to find the the right equation for this line right parameters for the equation for this line that will give us the best separation between the white dots and the black dots so the green one here it's marked h1 is the very bad

separation we all see it's very bad separation because it doesn't really separate them so what the machine learning are going to do it will change the parameters and we'll skew it a bit now we have h to the blue line so this is a good separation because all the black points are on one side and all of the white points are in another side but mathematically speaking it's not the best separation that we can get because if it will keep changing it until we have h3 this is the red line the average distance of each spot from the line will be this the smallest for the red line versus the blue line so what I'm trying

to say here that even though the blue line is the good separation it's not the best we can do so usually what we do with machine learning algorithms we're trying to find the optimal separation between these two classes do groups of information so this is what we did with SVM and to just to reiterate what we know we know that the entire 632 points are all exportable but we took only 609 of them those that exploitable the open source found to be exploitable and we use this as our common base we taught the SVM that look this all of these are all of these points belonging to the same group now check out the rest of the

points and tell me do they belong to that group or not so you can imagine it like this we took those group we cluster them together and now for each new group that I'm adding I can calculate the distance repeat that new group between this new sample and the group that I had from before and close to the caster it's exploitable and if it's far away from the cluster it means that it's not exploitable okay so this is what we've done and we got pretty good results from those 23 records that exploitable wasn't sure or didn't know about 23 of them we found to be exploitable and two more were probably exploitable okay so we really

outperformed the open source package okay just by looking at the same pieces of data from a different perspective okay and that wasn't enough we wanted to test a couple of other algorithms to see at least the lay of the land ooh can we find a better algorithm ting more robust again we really wanted to increase our own trust in the model because we don't really trust it so another model is conch is called cosine similarity cosine similarity just means that we are going to measure the cosine of the distance between two points so here in this graph we have two points a burger and a sandwich obviously they are kind of the same class but not really the same thing

this burger sandwich I'm not sure if it translates well to English I don't think that work is a sandwich and then we calculate the cosine difference between them and if you're very very interested here's the formula you don't really need it and we looked at that model and we used used it to calculate the distance and we got other other results and here we got the 16 more samples to be exploitable we are still outperforming the open source package but we are not outperforming it by much but then we change the model from linear cosine similarity to centroid cosine similarity I know it sounds scary but all it means is that instead of looking at the

distance as a straight line we are looking at it as a sphere and it's very spiritual distance between points so when we change that algorithm suddenly we can cluster more points together just by looking it as how how far away are those points on a sphere versus how far away are they in a straight line again we outperformed the open-source package by a significant amount we also played with how many registers we are feeding into the machine learning to see do we really need all of those registers to get to get those results and we started with nine registers and we got 65% which is good and we upped it to fifteen registers now we got 87% so we fed it

more information that it had previously and we got better results not very surprising the last model is called XG boost energy boost is something that's probably the most similar to neural networks which people are very familiar with and that is we took eighty percent of the data we use it for training and we kept the other 20% for test and validation and excuse me and that is actually not very useful it's useful just in one parameter we found out that we have between 95 to 99 percent accuracy for this type of phone 4xg boost it means sorry yeah it was so we looked at that and said okay we have 99 percent success rate for

this it just doesn't doesn't make sense and then we looked at the data and we found out that we were pretty much lying to ourselves most of the time because if we looked at the data remember we had six thousand thirty two crashes out of them 609 where we all agreed that were exploitable and that means if we only guessed exploitable we will be correct 96 percent of the times which mainly means that there is no randomness involved as if there is nothing to learn if I ask my three-year-old son just keep saying no no no no he would be correct some of the times really so we didn't really learn anything useful however actually boost does give us something

else and this is called the decision tree and the decision tree what it does it takes each parameter that actually bushes they're basing its decision on it and puts it into a tree structure and that way you can you can visualize which parameters were more important to make decisions on XD boost and that gives you insight into the parameters that are have the most impact in your data set which registers are more important than others which data points are more interesting to look at than others so just to walk you through it if you're looking at EBP again is EBP in it being one assume that it is is assigned bin one similar to this is

Airfix and bin 1 yes it is then we have 16 records that fit this filtering criteria out of them 7 are exploitable for a probably exploitable and 5 are unknown so this gives you an insight into the way that the data data is structured so let's look at it at a different way EVP is not in bin 1 and ESP is not in bin - if that is true then 571 records are exploitable which means that now we have a very good rule of thumb we'll just look at the crash and if EVP is not in been 1 and ESP is not in bin 2 we now know it's exploitable success now we can profit as well so

eloquently put it before this means absolutely nothing because that's the way that the data is structured for those samples and we use the model that needs to have input about the the other completing the side of the information which is water is a non exploitable path look like would you'd never never saw I didn't know didn't train on any unexploited path because we don't have that data and that means that even though we have that decision tree it didn't really teach us something useful and this is something else that you have to really keep your eye on the details here when someone gives you information statistics out of those machine learning models statistics are great and they really describe the way

that that machine learning model was ran and trained on but it does not give you any kind of insight what was the data it was training on so if you don't know what the training data was what was tested statistical properties anything on it looking at the results out of context you just get that results out of context just like I got a 99% success rate it means absolutely nothing so to conclude this let's say experiment of this journey that we took with machine learning is that yes we can take the same kind of samples and compete against exploitable dopant source package and we can outperform the open source package but the open source package knows how to deal with instances

from the real world our machine learning model has no idea about the real world it just knows those 632 samples that it saw if we try to fit it new information new pieces of information never saw before will this whole true will this still find stuff to be exploitable when it isn't or when it is we actually don't know we don't have enough information to make those decisions and we believe we have at least a gut feeling that this is true which means that if you can think about the open source package as a set of heuristics that describe a set of rules that if you follow those rules you will know that something is it's

portable or not machine learning does the same thing it describes another set of rules that if you follow them something will be exploitable or not what we're trying to say here is that our model is probably not well trained enough to be useful in a real-world scenario but if someone wants to take it and train it or take it a different path and train it he will still probably get very good results probably better results in the open source package so in order to build this yourself you can do it you don't need a mathematical background you not need a data science background and which is a common misconception in order to build machine learning models you need

probably to know Python in order to write new machine learning algorithms you probably need a PhD it's not the same thing so don't be afraid go ahead pick out your favorite data science package talk your favorite data scientists and ask some advice and it's not that difficult it's not that hard it probably burns some CPU hours and that's it you'll have some results you'll need experience to interpret them but the way that we gain experience is by hacking things together trying them out and if you want our white paper the white paper describes everything that we've discussed but also gives the references and tools that we've used step aside okay so in conclusion machine learning

in the end is very good at finding patterns that humans are not very good at finding them it's very good at matching pieces there are different variables together and finding correlations between them and it's very good in misleading us into interpreting the wrong results so you have to be very careful when you do that but if you are careful when you do that you can get good results I'm not dissing machine learning I'm just saying use it with caution in the end we don't have enough non exploitable crashes in order to fully train our model which is as we've said in the beginning the biggest problem is getting good data and this is the biggest problem for anyone

who's playing in the data science field which is also the reason why the biggest players are people who have access to lots of data such as Facebook Google Amazon everyone else has access to huge amounts of data will have the best machine learning modules imagine that it actually worked and it was open source and you can use it and it was it would fit any kind of problem in the world what could you what you could you use this form so we thought about three main use cases out of which one was very interesting to us but we think that it might be interesting fathers so the first one would be imagine that you had

the system that would look at a crash and tell if it's exploitable or not with a high degree of confidence and that means if you hook it up into your favorite bug tracker you can very easily top your developers and saying we prioritize your bugs because these bugs are exploitable and these bugs are not and it's a new way to look at prioritization for bugs at least from a security perspective because if we could fix all of those exploitable bugs first our attack surface would be closed much faster the second area which was very interesting for us is fur for nobility hunters take those ten thousand crashes throw 99 thousand of them a sari nine thousand

nine hundred of them in the garbage remain 100 just look at 100 it's much better for vulnerability hunter to focuses attention on summer wear is a bigger expectation to actually have some yield and the last opportunity is actually to improve fuzzies the basic job of a fuzzer is to find some sort of input that will crash the problem the program but a smart fighter will not just crash the program it will crash the program and we'll give you those inputs that give you something that you can use those crashes for because if it just crashes the program and nothing happens and you can't use it for nothing it's not an interesting crash ok so to wrap this up

even though data science has the word science in its name it's more an art than a science talk to anyone with a pigeon in the field that he will agree with me and in the end we can learn a lot and we can use a lot from other disciplines so we had a data scientist in our team and that helped us look at those data problems from a new perspective from a new angle that we haven't considered before and it actually led us into this path to find out that we can improve the way that we look at things we can improve the way that we are doing our day-to-day jobs and save money and saves time and

resources so we would like to acknowledge dennis is the PhD on our team did on my previous team that did this work on the machine learning side and both Ezra and myself on the binary exploitation side of the matter the people behind the exploitable open-source package which is amazing and if you're in the field you probably already aware of it and the people behind the cyber Grand Challenge which obviously without that dataset we wouldn't be here today so thank to all of them and thank you and yeah you can and we have time for questions please don't be shy they don't have questions they are running away yeah

should are we at a point where a stock analysts or those aspiring to be a soccer analyst should actually become data scientist well the question was we probably all heard of them with microphone it's a tough question the data scientist is usually someone who has a very deep familiarity with the algorithmic side of machine learning when you're in the Sun you're not interested in the performance of the algorithm developing new algorithms you're actually interested in applying those algorithms so I would say someone your stock doesn't need to be a data scientist but it should be a data analyst at least and that should be at least know how to run queries against any kind of language SQL whatever you

can imagine it will make you a better analyst but also understanding the way the data structure it will help him improve his queries and that means he is already on the path to being a data analyst yeah more questions please no behind him thank you basically because most of the techniques you've used actually to keep the interpretability of the the features they found importance is that one of the things that you actually took away from that whole process to maybe develop further techniques of the technique of detecting exploitable crashes yeah so we looked at it and we try to find out well let me rephrase this and maybe answer that the same question I hope looking at

all of the crashes that we looked at in the machine learning maths that we developed it looked like the pieces of information features that we look at them will have a higher probability to know something is exploitable or not so this was part of the reason that we did this research because instead of looking at the entire data that we can look at the crash if it gives focus on a specific slice of data from that crash would make our job easier so the shorter answer is yes you can the larger answer no doesn't hold up in the real world and the reason for that is is that the machine learning models that we trained gave us information about the

information that they saw so they were very good at focusing your attention and with results that are relevant for these 632 test cases but once we took that same models and put them in something new that they've never seen before it would skew however if we had like 1 million test cases covering real-world use cases we could probably have better insight and we'll get a different focus and that focus would be probably more true for a broader sense of cases

learning we are not so concerned about miss accuracy misrepresentation because our objective is to fight and exploitable backs and we have the assumption there are multiple of them so it means one of them it's not that bad as in traditional of Defense machine learning just make sense do you have any ideas about how to expand your dataset oh maybe crowdsourcing something from people who are vulnerability researchers something like that so we have some ideas and we actually do ask every time we give a talk and like this people if they have information please share it with us the problem is that most of the people that have that information cannot share it with other people so if we talk

to like a large vendor I don't know sent in l1 silence carbon block however who have access to that those data points they can't share those data points with us both from liquor perspective business perspective and others so it's floating out there usually or you can't really touch it it's part of the problem of getting good data so if someone has like a very brilliant idea of how to go about that we would love to hear it that's really the reason why we're showing our makes apology

somebody yeah two-wheeled from these and make something way better yeah it looks like you were mainly doing memory register fuzzing what libraries and modules were you using to do that for the fuzzing which libraries and modules do you use for the memory fuzzing we didn't do specifically memory fuzzing what we've done is used AFL to find a crash when we found the crash we had we configured the linux back-end to save the crash dump and then we had a python script running analyzing the crash dump and extracting the information that we cared about at this point we cared about registers but we actually had a trove of information to look at which we didn't we just looked at the registers because

that's the decision that we've made it's not a necessity so my question is this so in the data science world they're always talking about how much data do you need to actually create a viable model and he talked about the and you talked about the limitations of the data set you can get how much data do you need to get a viable model it's actually a big an open a big open question the relationship between the amount of data that you need in versus the accuracy of the model that you're trying to build so there is a graph which I can't show because I don't have it here but it looks like something like this and it

means it's a nonlinear graph it means that in the beginning when you're building building your model you need more high accuracy data and less of it and as your accuracy increases when you're fine-tuning your model you have more accuracy you'll need more data and as you will increase the amount of data your accuracy will drop but the model will be more robust so there is a cutoff point where you give it more data you don't get much improvement in your accuracy and you're just waiting time and resources to do that but most of the problem is getting to that point and if someone already has more data than that point you he doesn't care about the question he

just has lots of data for everyone else if you can get into let's say a 60% accurate model with fixed amount of data and in order to get into 70 70% accuracy they have to double the amount of data you will usually stop because getting data is very expensive so this might be for the room as well there's a group I think they're called language theoretic does that sound familiar Lang SEC yeah so those guys if I'm remembering right they've done some research on classes of bugs basically I'll write an input parser for random input it'll be bad and that's where a large class of problems come from if you start out and build a

solid finite state machine that recognizes good input and bad input and all that so they may have some good information on non exploitable good parsers thank you first of all the second thing is that I agree but there's a caveat to that and that is that when we are discussing inputs to the machine we don't necessarily mean something that gets parsed by a parser inputs come in various forms and it kind of hides because it's not obvious when we say inputs inputs for example is the way that the stack is structured before you call a function it's an input to that function however it's not something that's user controllable in a way so it's not like

you entered your name in text field and then in a bug occurred and a crash happened sometimes it happens because you we ordered the parameters or you gave the wrong length of a parameter something like that which would cause the crash but not necessary something that a parser even gets to look at through that exploitation path so I fully agree that better sanitation will give better security that is of course however I'm not sure how how deep bugs this kind of approach will actually find or hard stop I agree I'm just saying that the probe larger than that yeah um so one of my questions was you had like that this three-stage validation thing where you

were checking contest as a pointer that index and then the contents of the ECX register I believe yeah now are you actually checking what's in the actual like region of memory to validate that it's the same data overflowing the same you know section of memory or is it that you're looking at the debug logs that you're talking about so the answer to that is yes and no we are looking at the specific values in the sense that we are analyzing them to read them but we don't care about them and we are pinning them meaning that if they are between a range a and B they're all belonging to pin 1 so we don't care about the value the

other thing is that we are not looking at the crash logs we are actually looking at the memory dump so we we get the the actual register of values so this is the information that we are based that the model is basing its decisions oh yeah yeah any more questions please just ask between you and happy hour so I don't remember the presentation I saw it in but I think someone hypothesized that AI is better at defending as opposed to adversarial types of tasks and you were looking at exploitable z-- do you agree with that hypothesis that yeah I'll have to look at the formulation of that hypothesis to either agree or disagree I would say

that we have some experience with the adversarial side for machine learning we actually gave a talk right in here in this room last year about that there are some stuff that machine learning is better at the some stuff that it's horrendously bad at adversarial analysis for example something the machine learning is very bad at just from the problem that the just one the reason that the problem space is so much larger than the solution space so there's so many other inputs that could lead into the same output for machine learning and that means that you easily trick machine learning to do to miss classify an input to make the wrong decisions etc however in that sense

machinery is much better defending because if as a defender you can create like a white listing set of rules and you can teach the machine learning those rules it would be much better than noone enforcing those rules on a specific data set however that's not the way that it's usually applied maybe something in the way that some security products do pattern matching trying to do anomaly detection stuff like that machine learning is much better than humans it's not very good it's not the same thing as experience it has and for defending it will only be able to defend what it has and you just to give you like an example give you the rule of thumb here someone

knows how often does Facebook update its machine learning models how often so the answer to that that there we analyzed their data and update the parameters for their models on a couple of hours cadence so they we learn we reteach the machine learning models that they have every couple of hours based on the new data that they received from the users and that means that they have like a moving target machine learning model because if they kept the same machine learning model it would drift away from other users are doing very very fast which is another problem with machine learning which we haven't discussed here at all a kind of answer is that from thank you all if you have any more

questions we're going to stay right here thank you